João Magalhães

ALBA: A European Portuguese Benchmark for Evaluating Language and Linguistic Dimensions in Generative LLMs

Mar 27, 2026Abstract:As Large Language Models (LLMs) expand across multilingual domains, evaluating their performance in under-represented languages becomes increasingly important. European Portuguese (pt-PT) is particularly affected, as existing training data and benchmarks are mainly in Brazilian Portuguese (pt-BR). To address this, we introduce ALBA, a linguistically grounded benchmark designed from the ground up to assess LLM proficiency in linguistic-related tasks in pt-PT across eight linguistic dimensions, including Language Variety, Culture-bound Semantics, Discourse Analysis, Word Plays, Syntax, Morphology, Lexicology, and Phonetics and Phonology. ALBA is manually constructed by language experts and paired with an LLM-as-a-judge framework for scalable evaluation of pt-PT generated language. Experiments on a diverse set of models reveal performance variability across linguistic dimensions, highlighting the need for comprehensive, variety-sensitive benchmarks that support further development of tools in pt-PT.

AMALIA Technical Report: A Fully Open Source Large Language Model for European Portuguese

Mar 27, 2026Abstract:Despite rapid progress in open large language models (LLMs), European Portuguese (pt-PT) remains underrepresented in both training data and native evaluation, with machine-translated benchmarks likely missing the variant's linguistic and cultural nuances. We introduce AMALIA, a fully open LLM that prioritizes pt-PT by using more high-quality pt-PT data during both the mid- and post-training stages. To evaluate pt-PT more faithfully, we release a suite of pt-PT benchmarks that includes translated standard tasks and four new datasets targeting pt-PT generation, linguistic competence, and pt-PT/pt-BR bias. Experiments show that AMALIA matches strong baselines on translated benchmarks while substantially improving performance on pt-PT-specific evaluations, supporting the case for targeted training and native benchmarking for European Portuguese.

Agentic Search in the Wild: Intents and Trajectory Dynamics from 14M+ Real Search Requests

Jan 24, 2026Abstract:LLM-powered search agents are increasingly being used for multi-step information seeking tasks, yet the IR community lacks empirical understanding of how agentic search sessions unfold and how retrieved evidence is used. This paper presents a large-scale log analysis of agentic search based on 14.44M search requests (3.97M sessions) collected from DeepResearchGym, i.e. an open-source search API accessed by external agentic clients. We sessionize the logs, assign session-level intents and step-wise query-reformulation labels using LLM-based annotation, and propose Context-driven Term Adoption Rate (CTAR) to quantify whether newly introduced query terms are traceable to previously retrieved evidence. Our analyses reveal distinctive behavioral patterns. First, over 90% of multi-turn sessions contain at most ten steps, and 89% of inter-step intervals fall under one minute. Second, behavior varies by intent. Fact-seeking sessions exhibit high repetition that increases over time, while sessions requiring reasoning sustain broader exploration. Third, agents reuse evidence across steps. On average, 54% of newly introduced query terms appear in the accumulated evidence context, with contributions from earlier steps beyond the most recent retrieval. The findings suggest that agentic search may benefit from repetition-aware early stopping, intent-adaptive retrieval budgets, and explicit cross-step context tracking. We plan to release the anonymized logs to support future research.

RedTWIZ: Diverse LLM Red Teaming via Adaptive Attack Planning

Oct 08, 2025Abstract:This paper presents the vision, scientific contributions, and technical details of RedTWIZ: an adaptive and diverse multi-turn red teaming framework, to audit the robustness of Large Language Models (LLMs) in AI-assisted software development. Our work is driven by three major research streams: (1) robust and systematic assessment of LLM conversational jailbreaks; (2) a diverse generative multi-turn attack suite, supporting compositional, realistic and goal-oriented jailbreak conversational strategies; and (3) a hierarchical attack planner, which adaptively plans, serializes, and triggers attacks tailored to specific LLM's vulnerabilities. Together, these contributions form a unified framework -- combining assessment, attack generation, and strategic planning -- to comprehensively evaluate and expose weaknesses in LLMs' robustness. Extensive evaluation is conducted to systematically assess and analyze the performance of the overall system and each component. Experimental results demonstrate that our multi-turn adversarial attack strategies can successfully lead state-of-the-art LLMs to produce unsafe generations, highlighting the pressing need for more research into enhancing LLM's robustness.

MoralCLIP: Contrastive Alignment of Vision-and-Language Representations with Moral Foundations Theory

Jun 06, 2025Abstract:Recent advances in vision-language models have enabled rich semantic understanding across modalities. However, these encoding methods lack the ability to interpret or reason about the moral dimensions of content-a crucial aspect of human cognition. In this paper, we address this gap by introducing MoralCLIP, a novel embedding representation method that extends multimodal learning with explicit moral grounding based on Moral Foundations Theory (MFT). Our approach integrates visual and textual moral cues into a unified embedding space, enabling cross-modal moral alignment. MoralCLIP is grounded on the multi-label dataset Social-Moral Image Database to identify co-occurring moral foundations in visual content. For MoralCLIP training, we design a moral data augmentation strategy to scale our annotated dataset to 15,000 image-text pairs labeled with MFT-aligned dimensions. Our results demonstrate that explicit moral supervision improves both unimodal and multimodal understanding of moral content, establishing a foundation for morally-aware AI systems capable of recognizing and aligning with human moral values.

Aligning Web Query Generation with Ranking Objectives via Direct Preference Optimization

May 25, 2025Abstract:Neural retrieval models excel in Web search, but their training requires substantial amounts of labeled query-document pairs, which are costly to obtain. With the widespread availability of Web document collections like ClueWeb22, synthetic queries generated by large language models offer a scalable alternative. Still, synthetic training queries often vary in quality, which leads to suboptimal downstream retrieval performance. Existing methods typically filter out noisy query-document pairs based on signals from an external re-ranker. In contrast, we propose a framework that leverages Direct Preference Optimization (DPO) to integrate ranking signals into the query generation process, aiming to directly optimize the model towards generating high-quality queries that maximize downstream retrieval effectiveness. Experiments show higher ranker-assessed relevance between query-document pairs after DPO, leading to stronger downstream performance on the MS~MARCO benchmark when compared to baseline models trained with synthetic data.

DeepResearchGym: A Free, Transparent, and Reproducible Evaluation Sandbox for Deep Research

May 25, 2025

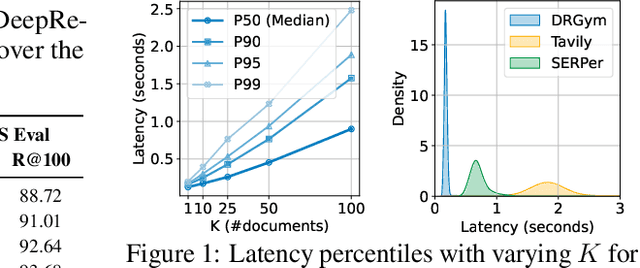

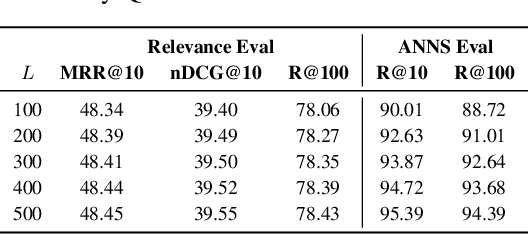

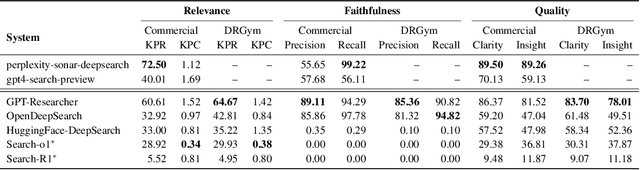

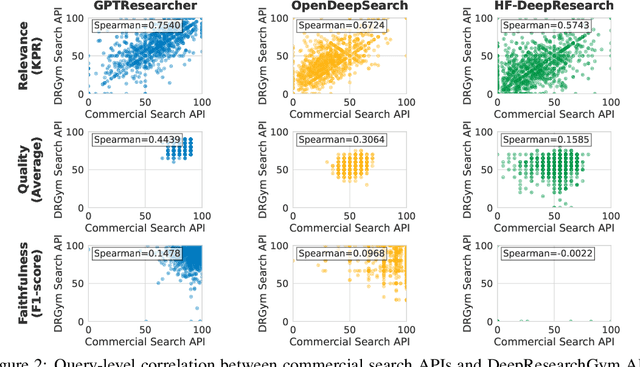

Abstract:Deep research systems represent an emerging class of agentic information retrieval methods that generate comprehensive and well-supported reports to complex queries. However, most existing frameworks rely on dynamic commercial search APIs, which pose reproducibility and transparency challenges in addition to their cost. To address these limitations, we introduce DeepResearchGym, an open-source sandbox that combines a reproducible search API with a rigorous evaluation protocol for benchmarking deep research systems. The API indexes large-scale public web corpora, namely ClueWeb22 and FineWeb, using a state-of-the-art dense retriever and approximate nearest neighbor search via DiskANN. It achieves lower latency than popular commercial APIs while ensuring stable document rankings across runs, and is freely available for research use. To evaluate deep research systems' outputs, we extend the Researchy Questions benchmark with automatic metrics through LLM-as-a-judge assessments to measure alignment with users' information needs, retrieval faithfulness, and report quality. Experimental results show that systems integrated with DeepResearchGym achieve performance comparable to those using commercial APIs, with performance rankings remaining consistent across evaluation metrics. A human evaluation study further confirms that our automatic protocol aligns with human preferences, validating the framework's ability to help support controlled assessment of deep research systems. Our code and API documentation are available at https://www.deepresearchgym.ai.

Latent Beam Diffusion Models for Decoding Image Sequences

Mar 26, 2025

Abstract:While diffusion models excel at generating high-quality images from text prompts, they struggle with visual consistency in image sequences. Existing methods generate each image independently, leading to disjointed narratives - a challenge further exacerbated in non-linear storytelling, where scenes must connect beyond adjacent frames. We introduce a novel beam search strategy for latent space exploration, enabling conditional generation of full image sequences with beam search decoding. Unlike prior approaches that use fixed latent priors, our method dynamically searches for an optimal sequence of latent representations, ensuring coherent visual transitions. To address beam search's quadratic complexity, we integrate a cross-attention mechanism that efficiently scores search paths and enables pruning, prioritizing alignment with both textual prompts and visual context. Human evaluations confirm that our approach outperforms baseline methods, producing full sequences with superior coherence, visual continuity, and textual alignment. By bridging advances in search optimization and latent space refinement, this work sets a new standard for structured image sequence generation.

Multi-trait User Simulation with Adaptive Decoding for Conversational Task Assistants

Oct 16, 2024

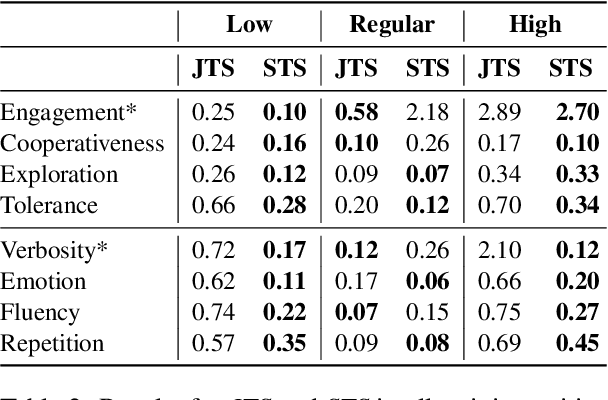

Abstract:Conversational systems must be robust to user interactions that naturally exhibit diverse conversational traits. Capturing and simulating these diverse traits coherently and efficiently presents a complex challenge. This paper introduces Multi-Trait Adaptive Decoding (mTAD), a method that generates diverse user profiles at decoding-time by sampling from various trait-specific Language Models (LMs). mTAD provides an adaptive and scalable approach to user simulation, enabling the creation of multiple user profiles without the need for additional fine-tuning. By analyzing real-world dialogues from the Conversational Task Assistant (CTA) domain, we identify key conversational traits and developed a framework to generate profile-aware dialogues that enhance conversational diversity. Experimental results validate the effectiveness of our approach in modeling single-traits using specialized LMs, which can capture less common patterns, even in out-of-domain tasks. Furthermore, the results demonstrate that mTAD is a robust and flexible framework for combining diverse user simulators.

Show and Guide: Instructional-Plan Grounded Vision and Language Model

Sep 27, 2024

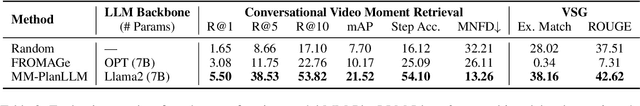

Abstract:Guiding users through complex procedural plans is an inherently multimodal task in which having visually illustrated plan steps is crucial to deliver an effective plan guidance. However, existing works on plan-following language models (LMs) often are not capable of multimodal input and output. In this work, we present MM-PlanLLM, the first multimodal LLM designed to assist users in executing instructional tasks by leveraging both textual plans and visual information. Specifically, we bring cross-modality through two key tasks: Conversational Video Moment Retrieval, where the model retrieves relevant step-video segments based on user queries, and Visually-Informed Step Generation, where the model generates the next step in a plan, conditioned on an image of the user's current progress. MM-PlanLLM is trained using a novel multitask-multistage approach, designed to gradually expose the model to multimodal instructional-plans semantic layers, achieving strong performance on both multimodal and textual dialogue in a plan-grounded setting. Furthermore, we show that the model delivers cross-modal temporal and plan-structure representations aligned between textual plan steps and instructional video moments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge