Jiho Choi

Weighted Knowledge Distillation for Semi-Supervised Segmentation of Maxillary Sinus in Panoramic X-ray Images

Apr 22, 2026

Abstract: Accurate segmentation of the maxillary sinus in panoramic X-ray images is essential for dental diagnosis and surgical planning; however, this task remains relatively underexplored in dental imaging research. Structural overlap and ambiguous anatomical boundaries inherent to two-dimensional panoramic projections, together with the limited availability of large-scale clinical datasets with reliable pixel-level annotations, make developing and evaluating segmentation models challenging. To address these challenges, we propose a semi-supervised segmentation framework that leverages both labeled and unlabeled panoramic radiographs and uses knowledge distillation to train a student model with reliable structural information distilled from a teacher model. Specifically, we introduce a weighted knowledge distillation loss that suppresses unreliable distillation signals caused by structural discrepancies between teacher and student predictions. To further improve the quality of the pseudo labels generated by the teacher network, we introduce SinusCycle-GAN, a refinement network based on unpaired image-to-image translation. This refinement improves boundary precision and reduces noise propagation when learning from unlabeled data during semi-supervised training. To evaluate the proposed method, we collected clinical panoramic X-ray images from 2,511 patients. Experimental results demonstrate that the proposed method outperforms state-of-the-art segmentation models, achieving a Dice score of 96.35% while reducing boundary error. These results indicate that the proposed semi-supervised framework provides robust and anatomically consistent segmentation under limited labeled data, highlighting its potential for broader dental image analysis applications.
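The abstract does not give the exact form of the weighted distillation loss. As a minimal sketch only, assuming per-pixel foreground probabilities from teacher and student, a binary KL distillation term, and a hypothetical reliability weight `w = exp(-|p_t - p_s| / tau)` that shrinks where the two predictions disagree, suppressing unreliable distillation signals could look like this. The function names and `tau` are illustrative assumptions, not the paper's; the Dice score used for evaluation is included for reference.

```python
import math

EPS = 1e-7


def _clamp(p):
    return min(max(p, EPS), 1.0 - EPS)


def binary_kl(p_t, p_s):
    """KL(teacher || student) for one pixel's foreground probability."""
    p_t, p_s = _clamp(p_t), _clamp(p_s)
    return p_t * math.log(p_t / p_s) + (1.0 - p_t) * math.log((1.0 - p_t) / (1.0 - p_s))


def weighted_kd_loss(teacher, student, tau=0.5):
    """Weighted mean of per-pixel KL terms.

    Hypothetical weighting: pixels where teacher and student disagree strongly
    (a proxy for structural discrepancy) get exponentially smaller weight.
    """
    num = den = 0.0
    for p_t, p_s in zip(teacher, student):
        w = math.exp(-abs(p_t - p_s) / tau)  # reliability weight in (0, 1]
        num += w * binary_kl(p_t, p_s)
        den += w
    return num / den if den > 0 else 0.0


def dice(pred, target):
    """Dice coefficient 2|A∩B| / (|A| + |B|) for binary (or soft) masks."""
    inter = sum(a * b for a, b in zip(pred, target))
    total = sum(pred) + sum(target)
    return 2.0 * inter / total if total else 1.0
```

In an autodiff framework the weight would typically be treated as a constant (stop-gradient), so that the student cannot lower the loss simply by increasing its disagreement on hard pixels.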

When Sinks Help or Hurt: Unified Framework for Attention Sink in Large Vision-Language Models

Apr 01, 2026

Abstract: Attention sinks are tokens that attract disproportionate attention. While they have been studied in single-modality transformers, their cross-modal impact in Large Vision-Language Models (LVLMs) remains largely unexplored: are they redundant artifacts or essential global priors? This paper first divides visual sinks into two categories: ViT-emerged sinks (V-sinks), which propagate from the vision encoder, and LLM-emerged sinks (L-sinks), which arise within deep LLM layers. Based on this categorization, our analysis reveals a fundamental performance trade-off: while sinks effectively encode global scene-level priors, their dominance can suppress the fine-grained visual evidence required for local perception. Furthermore, we identify specific functional layers where modulating these sinks most significantly affects downstream performance. To leverage these insights, we propose Layer-wise Sink Gating (LSG), a lightweight, plug-and-play module that dynamically scales the attention contributions of V-sinks and of the remaining visual tokens. LSG is trained via standard next-token prediction, requiring no task-specific supervision while keeping the LVLM backbone frozen. In most layers, LSG yields improvements on representative multimodal benchmarks, effectively balancing global reasoning and precise local evidence.
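One plausible reading of "scaling the attention contributions" of V-sinks and the remaining visual tokens is sketched below, under stated assumptions: two gates per layer derived from learnable logits (here mapped into (0, 2) with a sigmoid, a hypothetical choice), applied to one query's post-softmax attention weights, which are then renormalized. Text tokens are left unchanged. This is an illustration, not the LSG implementation.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def layer_gates(a_sink, a_rest):
    """Map two learnable per-layer logits to gates in (0, 2).
    Logits of 0 give gates of 1, i.e. the unmodified attention."""
    return 2.0 * sigmoid(a_sink), 2.0 * sigmoid(a_rest)


def gate_attention(weights, sink_idx, visual_idx, g_sink, g_rest):
    """Rescale one query's post-softmax attention weights.

    weights:    attention over all key tokens (sums to 1)
    sink_idx:   indices of V-sink tokens
    visual_idx: indices of all visual tokens (sinks included)
    Non-visual (e.g. text) tokens keep their weight; the result is renormalized.
    """
    scaled = []
    for i, w in enumerate(weights):
        if i in sink_idx:
            scaled.append(w * g_sink)
        elif i in visual_idx:
            scaled.append(w * g_rest)
        else:
            scaled.append(w)
    total = sum(scaled)
    return [s / total for s in scaled]
```

For example, `gate_attention([0.6, 0.1, 0.1, 0.2], {0}, {0, 1, 2}, 0.5, 1.5)` returns approximately `[0.375, 0.1875, 0.1875, 0.25]`: the sink's share drops from 60% to 37.5% and the other visual tokens gain weight. Only the gate logits would be trained, consistent with keeping the backbone frozen.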

Sparse Bayesian Message Passing under Structural Uncertainty

Jan 03, 2026

Abstract: Semi-supervised learning on real-world graphs is frequently challenged by heterophily, where the observed graph is unreliable or label-disassortative. Many existing graph neural networks either rely on a fixed adjacency structure or attempt to handle structural noise through regularization. In this work, we explicitly capture structural uncertainty by modeling a posterior distribution over signed adjacency matrices, allowing each edge to be positive, negative, or absent. We propose a sparse signed message passing network that admits a Bayesian interpretation and is naturally robust to edge noise and heterophily. By combining (i) posterior marginalization over signed graph structures with (ii) sparse signed message aggregation, our approach offers a principled way to handle both edge noise and heterophily. Experimental results demonstrate that our method outperforms strong baselines on heterophilic benchmarks under both synthetic and real-world structural noise.
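To make "posterior marginalization over signed structures" concrete, here is a minimal sketch under simplifying assumptions: each edge has a given posterior over the three states (positive, negative, absent), aggregation is linear and mean-normalized, and sparsity is a hypothetical hard threshold on the expected weight. For a linear aggregator, linearity of expectation reduces marginalization to using `E[w] = p_pos - p_neg`. The data layout and `threshold` are illustrative, not the paper's.

```python
def signed_aggregate(x, edges, threshold=0.2):
    """One sparse signed message-passing step.

    x:     dict node -> feature vector (list of floats)
    edges: dict (dst, src) -> (p_pos, p_neg, p_absent), the posterior over the
           sign of edge src -> dst (probabilities summing to 1)
    For a linear aggregator, marginalizing over the three edge states gives the
    expected weight E[w] = p_pos - p_neg (an absent edge contributes 0).
    Edges with |E[w]| < threshold are dropped (sparsification).
    """
    dim = len(next(iter(x.values())))
    out = {}
    for i, xi in x.items():
        msg = [0.0] * dim
        count = 0
        for (dst, src), (p_pos, p_neg, _p_absent) in edges.items():
            if dst != i:
                continue
            w = p_pos - p_neg  # expected signed weight
            if abs(w) < threshold:
                continue  # prune weak or highly uncertain edges
            msg = [m + w * v for m, v in zip(msg, x[src])]
            count += 1
        if count:
            msg = [m / count for m in msg]  # mean over kept neighbors
        out[i] = [a + b for a, b in zip(xi, msg)]  # residual update
    return out
```

A likely-negative edge flips the sign of its neighbor's message, so a heterophilic neighbor pushes the node's features away from its own rather than smoothing toward it.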

PosterForest: Hierarchical Multi-Agent Collaboration for Scientific Poster Generation

Aug 29, 2025

Abstract: We present PosterForest, a novel training-free framework for automated scientific poster generation. Unlike prior approaches, which largely neglect the hierarchical structure of scientific documents and the semantic integration of textual and visual elements, our method addresses both challenges directly. We introduce the Poster Tree, a hierarchical intermediate representation that jointly encodes document structure and visual-textual relationships at multiple levels. Our framework employs a multi-agent collaboration strategy in which agents specializing in content summarization and layout planning iteratively coordinate and provide mutual feedback. This approach enables joint optimization of logical consistency, content fidelity, and visual coherence. Extensive experiments across multiple academic domains show that our method outperforms existing baselines in both qualitative and quantitative evaluations. The resulting posters come closest in quality to expert-designed ground truth and deliver superior information preservation, structural clarity, and user preference.
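The abstract describes the Poster Tree only at a high level. As a hypothetical sketch of such a hierarchical intermediate representation, each node below is a section that carries its summarized text and the figures attached to it, and a depth-first walk yields an outline that a layout agent could consume. The class and field names are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class PosterNode:
    """One node of a hypothetical Poster Tree: a section, its text, its figures."""
    title: str
    summary: str = ""
    figures: list = field(default_factory=list)   # figure/table identifiers
    children: list = field(default_factory=list)  # sub-sections (PosterNode)

    def add(self, child):
        self.children.append(child)
        return child


def walk(node, depth=0):
    """Depth-first traversal yielding (depth, node) pairs."""
    yield depth, node
    for child in node.children:
        yield from walk(child, depth + 1)


def outline(root):
    """Indented outline listing each section and how many figures it holds."""
    return ["  " * d + f"{n.title} [figures: {len(n.figures)}]" for d, n in walk(root)]
```

Keeping figures on the node of the section that discusses them is one simple way to preserve the visual-textual link through summarization and layout.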

Sheaf Graph Neural Networks via PAC-Bayes Spectral Optimization

Aug 01, 2025

Abstract: Over-smoothing in Graph Neural Networks (GNNs) causes distinct node features to collapse, particularly on heterophilic graphs where adjacent nodes often have dissimilar labels. Although sheaf neural networks partially mitigate this problem, they typically rely on static or heavily parameterized sheaf structures that hinder generalization and scalability. Existing sheaf-based models either predefine restriction maps or introduce excessive complexity, yet fail to provide rigorous stability guarantees. In this paper, we introduce SGPC (Sheaf GNNs with PAC-Bayes Calibration), a unified architecture that combines cellular-sheaf message passing with several mechanisms, including optimal transport-based lifting, variance-reduced diffusion, and PAC-Bayes spectral regularization, for robust semi-supervised node classification. We establish theoretical performance bounds and show that the resulting bound-aware objective can be optimized by end-to-end training with linear computational complexity. Experiments on nine homophilic and heterophilic benchmarks show that SGPC outperforms state-of-the-art spectral and sheaf-based GNNs while providing certified confidence intervals on unseen nodes.
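The basic cellular-sheaf diffusion that SGPC builds on can be sketched as follows; the optimal-transport lifting, variance reduction, and PAC-Bayes regularization are not shown. For each edge with restriction maps `F_u`, `F_v`, the sheaf energy is ½‖F_u x_u − F_v x_v‖², and one diffusion step is `x ← x − α L_F x`, where `L_F` is the sheaf Laplacian (the gradient of that energy). The data layout and `alpha` are illustrative assumptions.

```python
def matvec(A, x):
    return [sum(a * b for a, b in zip(row, x)) for row in A]


def matTvec(A, y):  # A^T y
    return [sum(A[r][c] * y[r] for r in range(len(A))) for c in range(len(A[0]))]


def sheaf_laplacian(x, edges):
    """Apply the sheaf Laplacian L_F to node signals x.

    x:     dict node -> stalk vector
    edges: list of (u, v, F_u, F_v); F_u, F_v map node stalks to the edge stalk
    Uses L_F x at u += F_u^T (F_u x_u - F_v x_v), at v -= F_v^T (same difference).
    """
    out = {n: [0.0] * len(vec) for n, vec in x.items()}
    for u, v, F_u, F_v in edges:
        diff = [a - b for a, b in zip(matvec(F_u, x[u]), matvec(F_v, x[v]))]
        out[u] = [o + g for o, g in zip(out[u], matTvec(F_u, diff))]
        out[v] = [o - g for o, g in zip(out[v], matTvec(F_v, diff))]
    return out


def diffusion_step(x, edges, alpha=0.25):
    """One explicit Euler step of sheaf diffusion: x <- x - alpha * L_F x."""
    lap = sheaf_laplacian(x, edges)
    return {n: [a - alpha * b for a, b in zip(x[n], lap[n])] for n in x}
```

With identity restriction maps this reduces to ordinary graph diffusion, which drives neighbors toward the same value. With a sign-flipping map such as `[[-1, 0], [0, 1]]`, the signals `[1, 2]` and `[-1, 2]` are already consistent across the edge and are left unchanged, which is how learned restriction maps can keep neighboring features distinct on heterophilic graphs.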

3D-Aware Vision-Language Models Fine-Tuning with Geometric Distillation

Jun 11, 2025

Abstract: Vision-Language Models (VLMs) have shown remarkable performance on diverse visual and linguistic tasks, yet they remain fundamentally limited in their understanding of 3D spatial structure. We propose Geometric Distillation, a lightweight, annotation-free fine-tuning framework that injects human-inspired geometric cues into pretrained VLMs without modifying their architecture. By distilling (1) sparse correspondences, (2) relative depth relations, and (3) dense cost volumes from off-the-shelf 3D foundation models (e.g., MASt3R, VGGT), our method makes representations geometry-aware while remaining compatible with natural image-text inputs. In extensive evaluations on 3D vision-language reasoning and 3D perception benchmarks, our method consistently outperforms prior approaches, achieving improved 3D spatial reasoning at significantly lower computational cost. Our work demonstrates a scalable and efficient path for bridging 2D-trained VLMs and 3D understanding, opening up wider use in spatially grounded multimodal tasks.
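Of the three distilled cues, relative depth relations are the easiest to illustrate. A common way to distill ordinal depth is a pairwise logistic ranking loss; the sketch below assumes that choice, with scalar per-point depths from the student (hypothetically read out from VLM features) and from a teacher such as a 3D foundation model. The paper's exact formulation is not given in the abstract.

```python
import math


def softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def relative_depth_loss(student, teacher, pairs):
    """Pairwise logistic ranking loss on depth order.

    student, teacher: per-point depth values (smaller = closer)
    pairs:            (i, j) index pairs to compare; teacher ties are skipped
    The student is penalized when its ordering of i and j disagrees with the
    teacher's, with a smooth margin: loss = log(1 + exp(-gap)).
    """
    total, count = 0.0, 0
    for i, j in pairs:
        if teacher[i] == teacher[j]:
            continue
        s = 1.0 if teacher[i] < teacher[j] else -1.0  # +1 means i is closer
        gap = s * (student[j] - student[i])  # positive when the order agrees
        total += softplus(-gap)
        count += 1
    return total / count if count else 0.0
```

Only the ordering is supervised, so the student is not forced to match the teacher's absolute depth scale.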

AdaRank: Adaptive Rank Pruning for Enhanced Model Merging

Mar 28, 2025

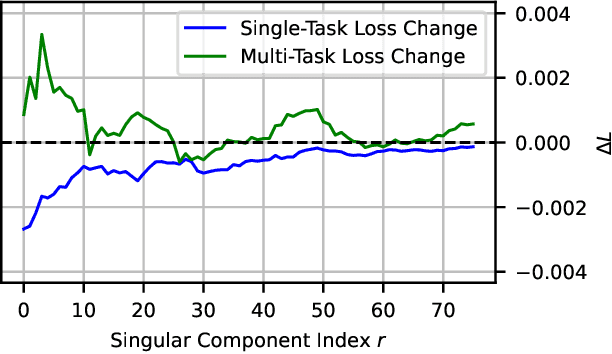

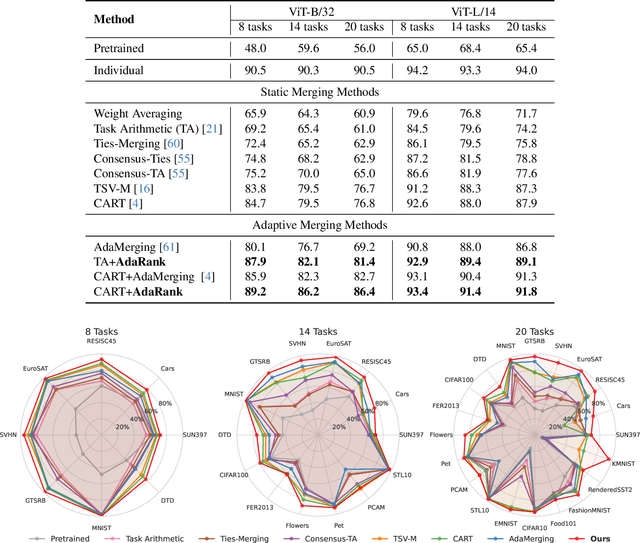

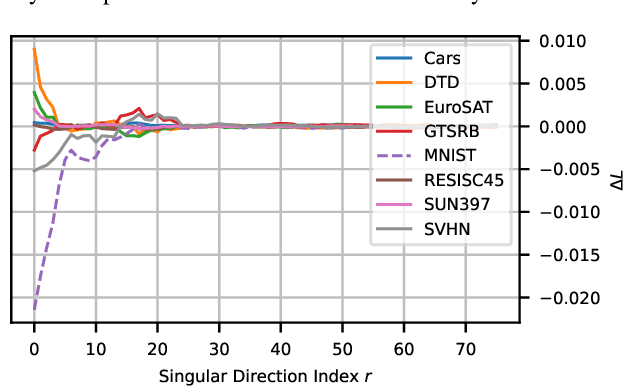

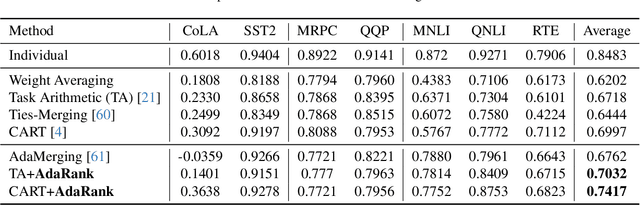

Abstract: Model merging has emerged as a promising approach for unifying independently fine-tuned models into a single model, significantly improving computational efficiency in multi-task learning. Recently, several SVD-based techniques have been introduced that exploit low-rank structure for better merging, but their reliance on manually designed rank selection often leads to cross-task interference and suboptimal performance. In this paper, we propose AdaRank, a novel model merging framework that adaptively selects the most beneficial singular directions of task vectors when merging multiple models. We empirically show that the dominant singular components of task vectors can cause critical interference with other tasks, and that naive truncation across tasks and layers degrades performance. In contrast, AdaRank dynamically prunes the singular components that cause interference and provides an optimal amount of information to each task vector by learning to prune ranks at test time via entropy minimization. Our analysis demonstrates that this method mitigates detrimental overlaps among tasks, while empirical results show that AdaRank consistently achieves state-of-the-art performance across various backbones and numbers of tasks, reducing the performance gap to individually fine-tuned models to nearly 1%.
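The merging step that rank pruning acts on can be sketched for a single layer, assuming each task vector has already been decomposed into singular triplets `(sigma, u, v)` and that a per-task 0/1 mask decides which triplets are kept. The mask-learning loop is not shown: AdaRank learns the masks at test time by entropy minimization, which would presumably need a differentiable relaxation of the hard masks used here. `mean_entropy` only illustrates that objective; all names are hypothetical.

```python
import math


def outer_add(W, scale, u, v):
    """In place: W += scale * u v^T."""
    for r in range(len(u)):
        for c in range(len(v)):
            W[r][c] += scale * u[r] * v[c]


def merge_with_masks(W0, task_components, masks, lam=1.0):
    """Merge one layer: W = W0 + lam * sum_t sum_k m_tk * sigma_tk * u_tk v_tk^T.

    task_components: per task, a list of (sigma, u, v) singular triplets of its
                     task vector (assumed precomputed by an SVD)
    masks:           per task, a 0/1 keep-flag for each triplet
    """
    W = [row[:] for row in W0]
    for comps, mask in zip(task_components, masks):
        for (sigma, u, v), keep in zip(comps, mask):
            if keep:
                outer_add(W, lam * sigma, u, v)
    return W


def mean_entropy(prob_rows):
    """Average Shannon entropy of the merged model's predicted class
    distributions on unlabeled test inputs (the quantity to be minimized)."""
    return sum(-sum(p * math.log(p) for p in row if p > 0) for row in prob_rows) / len(prob_rows)
```

Unlike a fixed top-k truncation, a mask can drop any component, including a dominant one, and can keep a different number of components for each task and layer.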

Fine-Grained Image-Text Correspondence with Cost Aggregation for Open-Vocabulary Part Segmentation

Jan 16, 2025

Abstract: Open-Vocabulary Part Segmentation (OVPS) is an emerging field concerned with recognizing fine-grained parts in unseen categories. We identify two primary challenges in OVPS: (1) the difficulty of aligning part-level image-text correspondence, and (2) the lack of structural understanding when segmenting object parts. To address these issues, we propose PartCATSeg, a novel framework that integrates object-aware part-level cost aggregation, a compositional loss, and structural guidance from DINO. Our approach employs a disentangled cost aggregation strategy that handles object-level and part-level costs separately, improving the precision of part-level segmentation. We also introduce a compositional loss to better capture part-object relationships, compensating for limited part annotations. Additionally, structural guidance from DINO features improves boundary delineation and inter-part understanding. Extensive experiments on the Pascal-Part-116, ADE20K-Part-234, and PartImageNet datasets demonstrate that our method significantly outperforms state-of-the-art approaches, setting a new baseline for robust generalization to unseen part categories.
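The starting point of cost aggregation is a cost volume of cosine similarities between every pixel embedding and every class-name text embedding. The sketch below computes object-level and part-level cost volumes separately and then, as a hypothetical stand-in for learned object-aware aggregation, restricts each pixel's part label to parts of its best-matching object. The learned aggregation layers, compositional loss, and DINO guidance are not shown; `part_to_obj` and the argmax rule are assumptions.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def cost_volume(pixel_emb, text_emb):
    """cost[p][k] = cosine similarity of pixel p with class-name embedding k."""
    return [[cosine(p, t) for t in text_emb] for p in pixel_emb]


def object_aware_part_labels(pixel_emb, obj_text, part_text, part_to_obj):
    """Assign each pixel the best part among parts of its best-matching object.

    part_to_obj[k] is the object index that part k belongs to.
    """
    obj_cost = cost_volume(pixel_emb, obj_text)    # object-level costs
    part_cost = cost_volume(pixel_emb, part_text)  # part-level costs, kept separate
    labels = []
    for oc, pc in zip(obj_cost, part_cost):
        obj = max(range(len(oc)), key=oc.__getitem__)
        allowed = [k for k in range(len(pc)) if part_to_obj[k] == obj]
        labels.append(max(allowed, key=pc.__getitem__) if allowed else None)
    return labels
```

Keeping the two cost volumes separate means a pixel that resembles a part name of the wrong object cannot be assigned that part, which is one intuition behind handling object and part costs separately.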

Length-Aware DETR for Robust Moment Retrieval

Dec 30, 2024

Abstract: Video Moment Retrieval (MR) aims to localize moments within a video that match a given natural language query. Given the prevalent use of platforms like YouTube for information retrieval, demand for MR techniques is growing significantly. Recent DETR-based models have made notable advances in performance but still struggle to accurately localize short moments. Through data analysis, we identified limited feature diversity in short moments, which motivated the development of MomentMix. MomentMix employs two augmentation strategies, ForegroundMix and BackgroundMix, which enhance the feature representations of the foreground and background, respectively. Additionally, our analysis of prediction bias revealed that predictions for short moments particularly struggle to locate the moment's center accurately. To address this, we propose a Length-Aware Decoder, which conditions on moment length through a novel bipartite matching process. Our extensive studies demonstrate the efficacy of our length-aware approach, especially in localizing short moments, leading to improved overall performance. Our method surpasses state-of-the-art DETR-based methods on benchmark datasets, achieving the highest R1 and mAP on QVHighlights and the highest R1@0.7 on TACoS and Charades-STA (e.g., a 2.46% gain in R1@0.7 and a 2.57% gain in average mAP on QVHighlights). The code is available at https://github.com/sjpark5800/LA-DETR.
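Why center errors hurt short moments more follows directly from the standard evaluation metric. R1@0.7 counts a query as correct when its top-1 predicted moment has temporal IoU of at least 0.7 with the ground truth. The sketch below implements that metric (not the LA-DETR code) and shows that the same 1-second shift fails the threshold for a 4-second moment but passes easily for a 40-second one.

```python
def temporal_iou(a, b):
    """IoU of two time intervals given as (start, end)."""
    (s1, e1), (s2, e2) = a, b
    inter = max(0.0, min(e1, e2) - max(s1, s2))
    union = (e1 - s1) + (e2 - s2) - inter
    return inter / union if union > 0 else 0.0


def recall_at_1(top1_preds, gts, threshold=0.7):
    """Fraction of queries whose top-1 prediction reaches the IoU threshold."""
    hits = sum(temporal_iou(p, g) >= threshold for p, g in zip(top1_preds, gts))
    return hits / len(gts)


short_iou = temporal_iou((10, 14), (11, 15))  # 4 s moment shifted by 1 s: 3/5 = 0.6
long_iou = temporal_iou((10, 50), (11, 51))   # 40 s moment shifted by 1 s: 39/41, about 0.95
```

With these two predictions, `recall_at_1` returns 0.5: only the long moment counts as a hit, even though both predictions are off by the same amount.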

Revisiting Weight Averaging for Model Merging

Dec 11, 2024

Abstract: Model merging aims to build a multi-task learner by combining the parameters of individually fine-tuned models without additional training. While a straightforward approach is to average model parameters across tasks, this often results in suboptimal performance due to interference among parameters across tasks. In this paper, we present the intriguing finding that weight averaging implicitly induces task vectors centered on the averaged weights themselves, and that applying a low-rank approximation to these centered task vectors significantly improves merging performance. Our analysis shows that centering the task vectors separates core task-specific knowledge and nuisance noise within the fine-tuned parameters into the top and lower singular vectors, respectively, allowing us to reduce inter-task interference through low-rank approximation. We evaluate our method on eight image classification tasks, demonstrating that it outperforms prior methods by a significant margin and narrows the performance gap with traditional multi-task learning to within 1-3%.
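A minimal sketch of centering and low-rank merging for one weight matrix, assuming the merged weights take the form `avg + lam * sum_t LowRank_k(theta_t - avg)` (the abstract does not state the combination rule or `lam`). The rank-k approximation uses power iteration with deflation so the example needs only the standard library; a real implementation would use a library SVD.

```python
import math
import random


def matvec(M, x):
    return [sum(a * b for a, b in zip(row, x)) for row in M]


def rmatvec(M, y):  # M^T y
    return [sum(M[r][c] * y[r] for r in range(len(M))) for c in range(len(M[0]))]


def norm(x):
    return math.sqrt(sum(a * a for a in x))


def top_singular_triplet(R, iters, rng):
    """Power iteration for the leading singular triplet (sigma, u, v) of R."""
    v = [rng.uniform(-1.0, 1.0) for _ in range(len(R[0]))]
    sigma, u = 0.0, [0.0] * len(R)
    for _ in range(iters):
        u = matvec(R, v)
        nu = norm(u)
        if nu < 1e-12:
            return 0.0, u, v
        u = [a / nu for a in u]
        v = rmatvec(R, u)
        sigma = norm(v)
        if sigma < 1e-12:
            return 0.0, u, v
        v = [a / sigma for a in v]
    return sigma, u, v


def low_rank(M, k, iters=200, seed=0):
    """Rank-k approximation of M by repeated power iteration plus deflation."""
    rng = random.Random(seed)
    R = [row[:] for row in M]
    approx = [[0.0] * len(M[0]) for _ in M]
    for _ in range(k):
        sigma, u, v = top_singular_triplet(R, iters, rng)
        if sigma == 0.0:
            break  # residual is (numerically) zero
        for r in range(len(M)):
            for c in range(len(M[0])):
                approx[r][c] += sigma * u[r] * v[c]
                R[r][c] -= sigma * u[r] * v[c]
    return approx


def centered_task_vectors(thetas):
    """Average the fine-tuned weights and center each task's weights on the average."""
    n, rows, cols = len(thetas), len(thetas[0]), len(thetas[0][0])
    avg = [[sum(t[r][c] for t in thetas) / n for c in range(cols)] for r in range(rows)]
    centered = [[[t[r][c] - avg[r][c] for c in range(cols)] for r in range(rows)] for t in thetas]
    return avg, centered


def merge_centered(thetas, k, lam=1.0):
    """merged = avg + lam * sum_t LowRank_k(theta_t - avg)  (assumed form)."""
    avg, centered = centered_task_vectors(thetas)
    merged = [row[:] for row in avg]
    for tau in centered:
        approx = low_rank(tau, k)
        for r in range(len(merged)):
            for c in range(len(merged[0])):
                merged[r][c] += lam * approx[r][c]
    return merged
```

Because the centered task vectors sum to zero by construction, a full-rank `k` makes `merge_centered` return the plain weight average; the low-rank truncation, which keeps the top singular directions and discards the rest, is what makes the result differ from simple averaging.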