Jiaqi Ding

GeoDynamics: A Geometric State-Space Neural Network for Understanding Brain Dynamics on Riemannian Manifolds

Jan 20, 2026Abstract:State-space models (SSMs) have become a cornerstone for unraveling brain dynamics, revealing how latent neural states evolve over time and give rise to observed signals. By combining the flexibility of deep learning with the principled dynamical structure of SSMs, recent studies have achieved powerful fits to functional neuroimaging data. However, most existing approaches still view the brain as a set of loosely connected regions or impose oversimplified network priors, falling short of a truly holistic and self-organized dynamical system perspective. Brain functional connectivity (FC) at each time point naturally forms a symmetric positive definite (SPD) matrix, which resides on a curved Riemannian manifold rather than in Euclidean space. Capturing the trajectories of these SPD matrices is key to understanding how coordinated networks support cognition and behavior. To this end, we introduce GeoDynamics, a geometric state-space neural network that tracks latent brain-state trajectories directly on the high-dimensional SPD manifold. GeoDynamics embeds each connectivity matrix into a manifold-aware recurrent framework, learning smooth and geometry-respecting transitions that reveal task-driven state changes and early markers of Alzheimer's disease, Parkinson's disease, and autism. Beyond neuroscience, we validate GeoDynamics on human action recognition benchmarks (UTKinect, Florence, HDM05), demonstrating its scalability and robustness in modeling complex spatiotemporal dynamics across diverse domains.

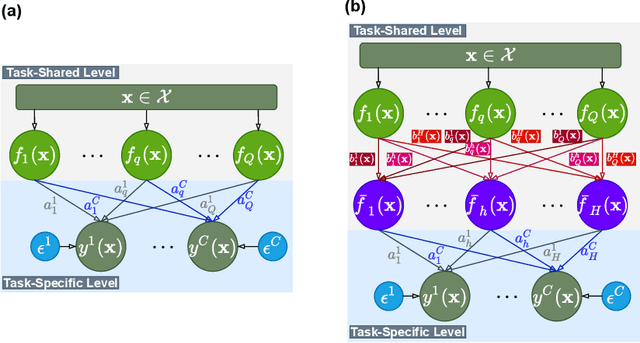

BrainMAP: Learning Multiple Activation Pathways in Brain Networks

Dec 23, 2024Abstract:Functional Magnetic Resonance Image (fMRI) is commonly employed to study human brain activity, since it offers insight into the relationship between functional fluctuations and human behavior. To enhance analysis and comprehension of brain activity, Graph Neural Networks (GNNs) have been widely applied to the analysis of functional connectivities (FC) derived from fMRI data, due to their ability to capture the synergistic interactions among brain regions. However, in the human brain, performing complex tasks typically involves the activation of certain pathways, which could be represented as paths across graphs. As such, conventional GNNs struggle to learn from these pathways due to the long-range dependencies of multiple pathways. To address these challenges, we introduce a novel framework BrainMAP to learn Multiple Activation Pathways in Brain networks. BrainMAP leverages sequential models to identify long-range correlations among sequentialized brain regions and incorporates an aggregation module based on Mixture of Experts (MoE) to learn from multiple pathways. Our comprehensive experiments highlight BrainMAP's superior performance. Furthermore, our framework enables explanatory analyses of crucial brain regions involved in tasks. Our code is provided at https://github.com/LzyFischer/Graph-Mamba.

NeuroPath: A Neural Pathway Transformer for Joining the Dots of Human Connectomes

Sep 26, 2024

Abstract:Although modern imaging technologies allow us to study connectivity between two distinct brain regions in-vivo, an in-depth understanding of how anatomical structure supports brain function and how spontaneous functional fluctuations emerge remarkable cognition is still elusive. Meanwhile, tremendous efforts have been made in the realm of machine learning to establish the nonlinear mapping between neuroimaging data and phenotypic traits. However, the absence of neuroscience insight in the current approaches poses significant challenges in understanding cognitive behavior from transient neural activities. To address this challenge, we put the spotlight on the coupling mechanism of structural connectivity (SC) and functional connectivity (FC) by formulating such network neuroscience question into an expressive graph representation learning problem for high-order topology. Specifically, we introduce the concept of topological detour to characterize how a ubiquitous instance of FC (direct link) is supported by neural pathways (detour) physically wired by SC, which forms a cyclic loop interacted by brain structure and function. In the clich\'e of machine learning, the multi-hop detour pathway underlying SC-FC coupling allows us to devise a novel multi-head self-attention mechanism within Transformer to capture multi-modal feature representation from paired graphs of SC and FC. Taken together, we propose a biological-inspired deep model, coined as NeuroPath, to find putative connectomic feature representations from the unprecedented amount of neuroimages, which can be plugged into various downstream applications such as task recognition and disease diagnosis. We have evaluated NeuroPath on large-scale public datasets including HCP and UK Biobank under supervised and zero-shot learning, where the state-of-the-art performance by our NeuroPath indicates great potential in network neuroscience.

Machine Learning on Dynamic Functional Connectivity: Promise, Pitfalls, and Interpretations

Sep 17, 2024

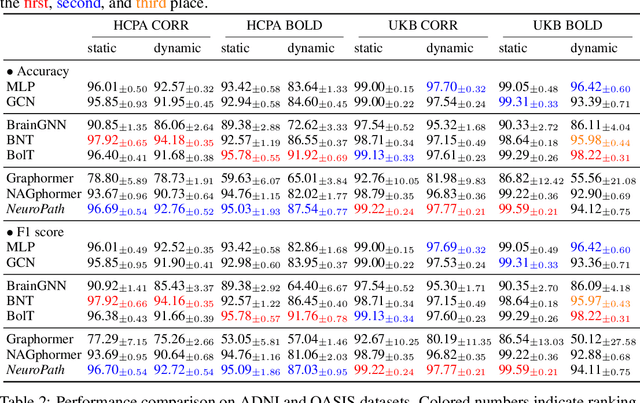

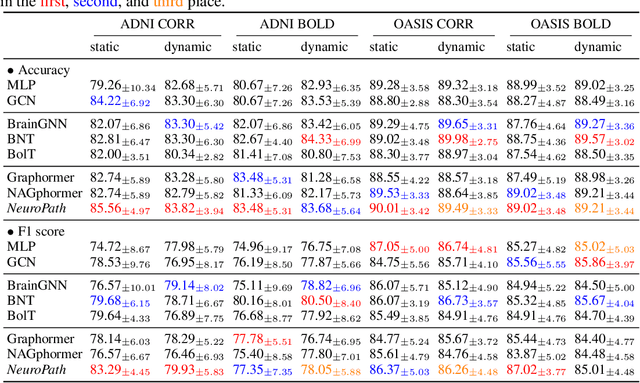

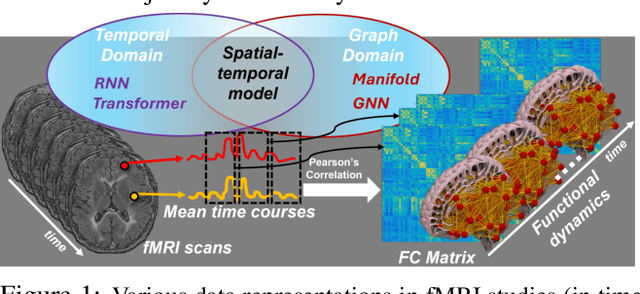

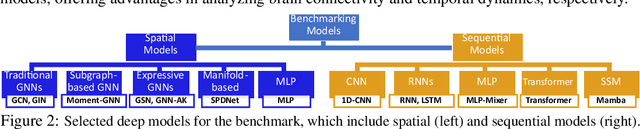

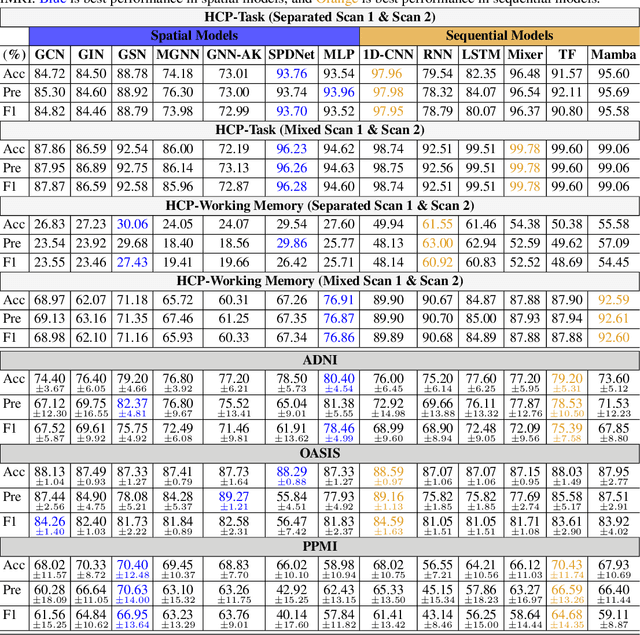

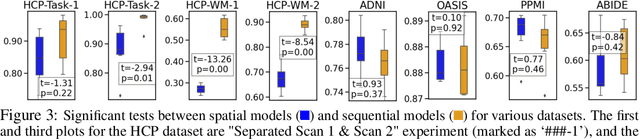

Abstract:An unprecedented amount of existing functional Magnetic Resonance Imaging (fMRI) data provides a new opportunity to understand the relationship between functional fluctuation and human cognition/behavior using a data-driven approach. To that end, tremendous efforts have been made in machine learning to predict cognitive states from evolving volumetric images of blood-oxygen-level-dependent (BOLD) signals. Due to the complex nature of brain function, however, the evaluation on learning performance and discoveries are not often consistent across current state-of-the-arts (SOTA). By capitalizing on large-scale existing neuroimaging data (34,887 data samples from six public databases), we seek to establish a well-founded empirical guideline for designing deep models for functional neuroimages by linking the methodology underpinning with knowledge from the neuroscience domain. Specifically, we put the spotlight on (1) What is the current SOTA performance in cognitive task recognition and disease diagnosis using fMRI? (2) What are the limitations of current deep models? and (3) What is the general guideline for selecting the suitable machine learning backbone for new neuroimaging applications? We have conducted a comprehensive evaluation and statistical analysis, in various settings, to answer the above outstanding questions.

Re-Think and Re-Design Graph Neural Networks in Spaces of Continuous Graph Diffusion Functionals

Jul 01, 2023

Abstract:Graph neural networks (GNNs) are widely used in domains like social networks and biological systems. However, the locality assumption of GNNs, which limits information exchange to neighboring nodes, hampers their ability to capture long-range dependencies and global patterns in graphs. To address this, we propose a new inductive bias based on variational analysis, drawing inspiration from the Brachistochrone problem. Our framework establishes a mapping between discrete GNN models and continuous diffusion functionals. This enables the design of application-specific objective functions in the continuous domain and the construction of discrete deep models with mathematical guarantees. To tackle over-smoothing in GNNs, we analyze the existing layer-by-layer graph embedding models and identify that they are equivalent to l2-norm integral functionals of graph gradients, which cause over-smoothing. Similar to edge-preserving filters in image denoising, we introduce total variation (TV) to align the graph diffusion pattern with global community topologies. Additionally, we devise a selective mechanism to address the trade-off between model depth and over-smoothing, which can be easily integrated into existing GNNs. Furthermore, we propose a novel generative adversarial network (GAN) that predicts spreading flows in graphs through a neural transport equation. To mitigate vanishing flows, we customize the objective function to minimize transportation within each community while maximizing inter-community flows. Our GNN models achieve state-of-the-art (SOTA) performance on popular graph learning benchmarks such as Cora, Citeseer, and Pubmed.

Scalable Multi-Task Gaussian Processes with Neural Embedding of Coregionalization

Sep 20, 2021

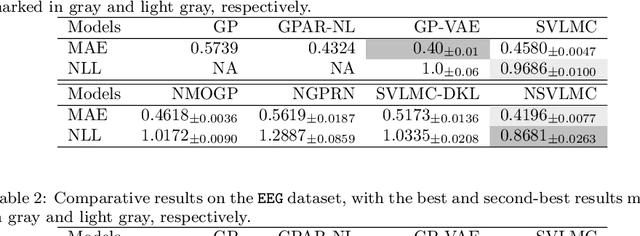

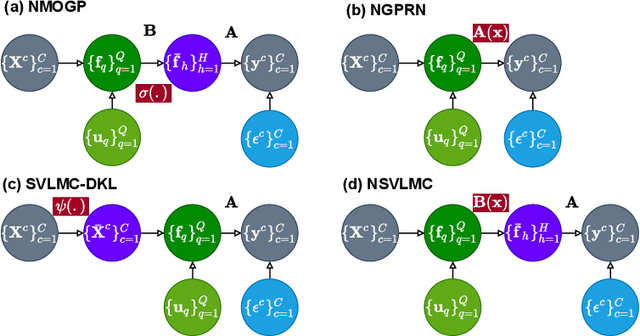

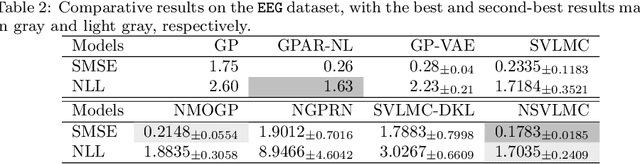

Abstract:Multi-task regression attempts to exploit the task similarity in order to achieve knowledge transfer across related tasks for performance improvement. The application of Gaussian process (GP) in this scenario yields the non-parametric yet informative Bayesian multi-task regression paradigm. Multi-task GP (MTGP) provides not only the prediction mean but also the associated prediction variance to quantify uncertainty, thus gaining popularity in various scenarios. The linear model of coregionalization (LMC) is a well-known MTGP paradigm which exploits the dependency of tasks through linear combination of several independent and diverse GPs. The LMC however suffers from high model complexity and limited model capability when handling complicated multi-task cases. To this end, we develop the neural embedding of coregionalization that transforms the latent GPs into a high-dimensional latent space to induce rich yet diverse behaviors. Furthermore, we use advanced variational inference as well as sparse approximation to devise a tight and compact evidence lower bound (ELBO) for higher quality of scalable model inference. Extensive numerical experiments have been conducted to verify the higher prediction quality and better generalization of our model, named NSVLMC, on various real-world multi-task datasets and the cross-fluid modeling of unsteady fluidized bed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge