Jennifer Prendki

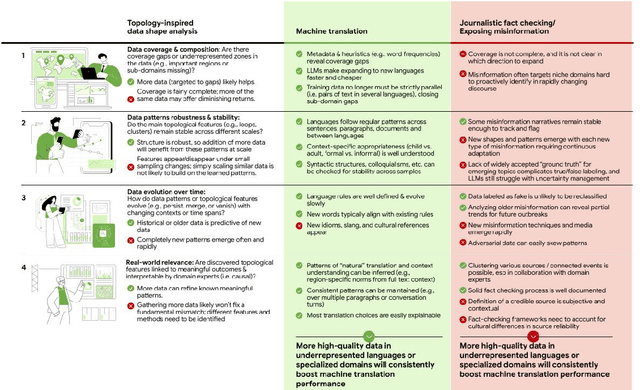

Not Every AI Problem is a Data Problem: We Should Be Intentional About Data Scaling

Jan 23, 2025

Abstract: While Large Language Models require more and more data to train and scale, rather than looking for any data to acquire, we should consider what types of tasks are more likely to benefit from data scaling. We should be intentional in our data acquisition. We argue that the topology of data itself informs which tasks to prioritize in data scaling, and shapes the development of the next generation of compute paradigms for tasks where data scaling is inefficient, or even insufficient.

Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context

Mar 08, 2024

Abstract: In this report, we present the latest model of the Gemini family, Gemini 1.5 Pro, a highly compute-efficient multimodal mixture-of-experts model capable of recalling and reasoning over fine-grained information from millions of tokens of context, including multiple long documents and hours of video and audio. Gemini 1.5 Pro achieves near-perfect recall on long-context retrieval tasks across modalities, improves the state-of-the-art in long-document QA, long-video QA and long-context ASR, and matches or surpasses Gemini 1.0 Ultra's state-of-the-art performance across a broad set of benchmarks. Studying the limits of Gemini 1.5 Pro's long-context ability, we find continued improvement in next-token prediction and near-perfect retrieval (>99%) up to at least 10M tokens, a generational leap over existing models such as Claude 2.1 (200k) and GPT-4 Turbo (128k). Finally, we highlight surprising new capabilities of large language models at the frontier; when given a grammar manual for Kalamang, a language with fewer than 200 speakers worldwide, the model learns to translate English to Kalamang at a similar level to a person who learned from the same content.

Gemini: A Family of Highly Capable Multimodal Models

Dec 19, 2023

Abstract: This report introduces a new family of multimodal models, Gemini, that exhibit remarkable capabilities across image, audio, video, and text understanding. The Gemini family consists of Ultra, Pro, and Nano sizes, suitable for applications ranging from complex reasoning tasks to on-device memory-constrained use-cases. Evaluation on a broad range of benchmarks shows that our most-capable Gemini Ultra model advances the state of the art in 30 of 32 of these benchmarks - notably being the first model to achieve human-expert performance on the well-studied exam benchmark MMLU, and improving the state of the art in every one of the 20 multimodal benchmarks we examined. We believe that the new capabilities of Gemini models in cross-modal reasoning and language understanding will enable a wide variety of use cases and we discuss our approach toward deploying them responsibly to users.

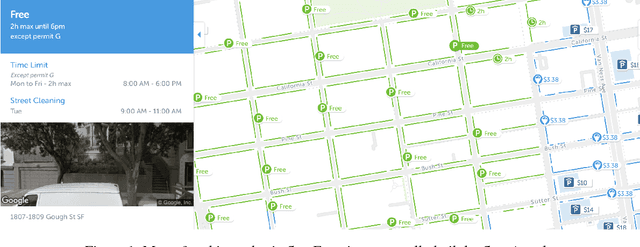

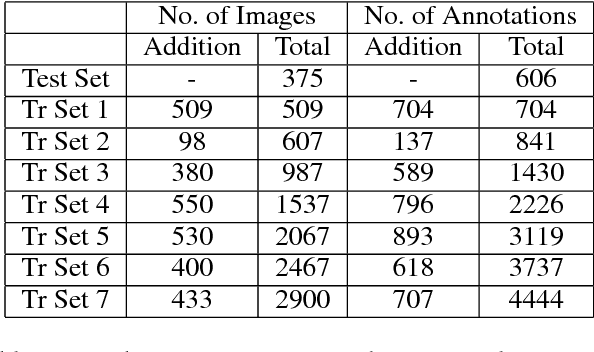

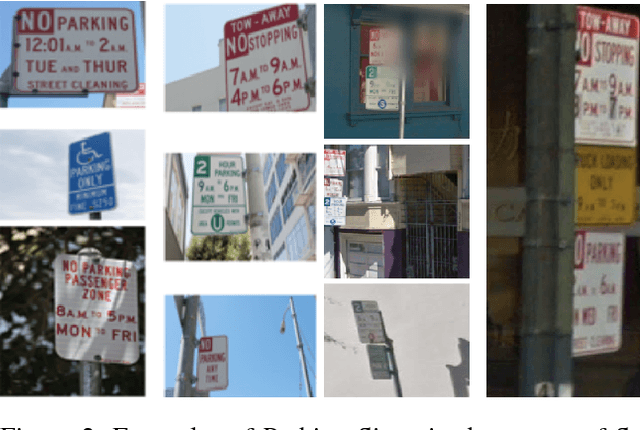

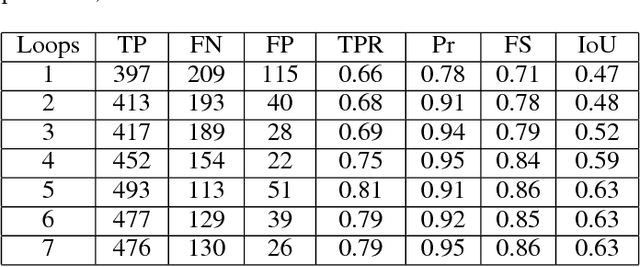

Crowd Sourcing based Active Learning Approach for Parking Sign Recognition

Dec 03, 2018

Abstract: Deep learning models have been used extensively to solve real-world problems in recent years. The performance of such models relies heavily on large amounts of labeled data for training. While the advances of data collection technology have enabled the acquisition of a massive volume of data, labeling the data remains an expensive and time-consuming task. Active learning techniques are being progressively adopted to accelerate the development of machine learning solutions by allowing the model to query the data they learn from. In this paper, we introduce a real-world problem, the recognition of parking signs, and present a framework that combines active learning techniques with a transfer learning approach and crowd-sourcing tools to create and train a machine learning solution to the problem. We discuss how such a framework contributes to building an accurate model in a cost-effective and fast way to solve the parking sign recognition problem in spite of the unevenness of the data associated with the fact that street-level images (such as parking signs) vary in shape, color, orientation and scale, and often appear on top of different types of background.
