Honghui Du

Multi-Label Transfer Learning in Non-Stationary Data Streams

Sep 09, 2025

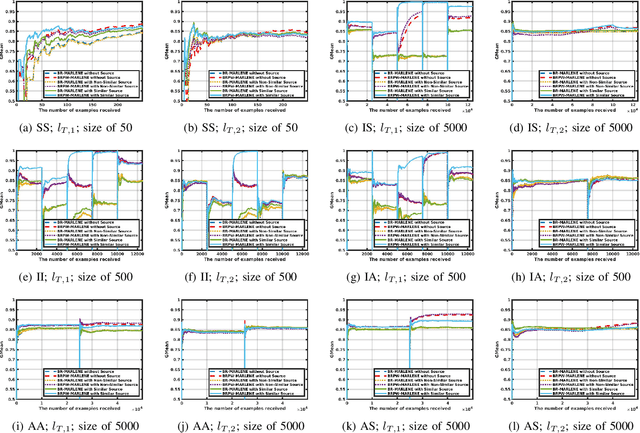

Abstract: Label concepts in multi-label data streams often drift in non-stationary environments, either independently or in relation to other labels. Transferring knowledge between related labels can accelerate adaptation, yet research on multi-label transfer learning for data streams remains limited. To address this gap, we propose two novel transfer learning methods: BR-MARLENE, which leverages knowledge from different labels in both source and target streams for multi-label classification, and BRPW-MARLENE, which builds on it by explicitly modelling and transferring pairwise label dependencies to further improve learning performance. Comprehensive experiments show that both methods outperform state-of-the-art multi-label stream approaches in non-stationary environments, demonstrating the effectiveness of inter-label knowledge transfer for improving predictive performance.
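The abstract does not expand the "BR" prefix. Assuming it refers to binary relevance, a standard decomposition that trains one independent binary classifier per label, the minimal Python sketch below shows that baseline on a stream. It is not BR-MARLENE itself: the online logistic-regression learner, the class names, and the learning rate are illustrative assumptions, and no knowledge transfer between labels or streams is modelled.

```python
import math
from typing import List


class OnlineLogistic:
    """Binary online logistic-regression learner (one SGD step per example)."""

    def __init__(self, n_features: int, lr: float = 0.5) -> None:
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr  # hypothetical learning rate

    def predict_proba(self, x: List[float]) -> float:
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        # Numerically stable logistic sigmoid.
        if z >= 0:
            return 1.0 / (1.0 + math.exp(-z))
        e = math.exp(z)
        return e / (1.0 + e)

    def learn_one(self, x: List[float], y: int) -> None:
        # For the log-loss, the gradient with respect to the logit is (p - y).
        g = self.predict_proba(x) - y
        self.w = [wi - self.lr * g * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * g


class BinaryRelevanceStream:
    """Binary relevance: one independent online binary learner per label."""

    def __init__(self, n_features: int, n_labels: int) -> None:
        self.models = [OnlineLogistic(n_features) for _ in range(n_labels)]

    def predict_one(self, x: List[float]) -> List[int]:
        # Threshold each label's probability independently.
        return [int(m.predict_proba(x) >= 0.5) for m in self.models]

    def learn_one(self, x: List[float], y: List[int]) -> None:
        # Each label's learner is updated only with its own bit of the label vector.
        for model, y_j in zip(self.models, y):
            model.learn_one(x, y_j)
```

Because every per-label learner sees only its own bit of the label vector, plain binary relevance ignores label dependencies; modelling pairwise dependencies, as BRPW-MARLENE is described as doing, would need additional structure on top of this baseline.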

MARLINE: Multi-Source Mapping Transfer Learning for Non-Stationary Environments

Sep 09, 2025

Abstract: Concept drift is a major problem in online learning due to its impact on the predictive performance of data stream mining systems. Recent studies have started exploring data streams from different sources as a strategy to tackle concept drift in a given target domain. These approaches assume that at least one of the source models represents a concept similar to the target concept, which may not hold in many real-world scenarios. In this paper, we propose a novel approach called Multi-source mApping with tRansfer LearnIng for Non-stationary Environments (MARLINE). MARLINE can benefit from knowledge from multiple data sources in non-stationary environments even when source and target concepts do not match. It achieves this by projecting the target concept into the space of each source concept, enabling multiple source sub-classifiers to contribute to the prediction of the target concept as part of an ensemble. Experiments on several synthetic and real-world datasets show that MARLINE was more accurate than several state-of-the-art data stream learning approaches.

Deep Learning Approaches for Medical Imaging Under Varying Degrees of Label Availability: A Comprehensive Survey

Apr 15, 2025

Abstract: Deep learning has achieved significant breakthroughs in medical imaging, but these advances often depend on large, well-annotated datasets. Obtaining such datasets is challenging, as it requires time-consuming and labor-intensive annotation by medical experts. Consequently, there is growing interest in learning paradigms such as incomplete, inexact, and absent supervision, which are designed to operate with limited, inexact, or missing labels. This survey categorizes and reviews the evolving research in these areas, analyzing around 600 notable contributions since 2018. It covers tasks such as image classification, segmentation, and detection across various medical application areas, including but not limited to brain, chest, and cardiac imaging. We attempt to establish the relationships among existing research studies in related areas. We provide formal definitions of the different learning paradigms and offer a comprehensive summary and interpretation of various learning mechanisms and strategies, helping readers better understand the current research landscape and ideas. We also discuss potential future research challenges.

Transformers4NewsRec: A Transformer-based News Recommendation Framework

Oct 17, 2024

Abstract: Pre-trained transformer models have shown great promise in various natural language processing tasks, including personalized news recommendation. To harness the power of these models, we introduce Transformers4NewsRec, a new Python framework built on the Transformers library. This framework is designed to unify and compare the performance of various news recommendation models, including deep neural networks and graph-based models. Transformers4NewsRec offers flexibility in model selection, data preprocessing, and evaluation, allowing both quantitative and qualitative analysis.

Differentiable Neural-Integrated Meshfree Method for Forward and Inverse Modeling of Finite Strain Hyperelasticity

Jul 15, 2024

Abstract: The present study aims to extend a novel physics-informed machine learning approach, the neural-integrated meshfree (NIM) method, to model finite-strain problems characterized by nonlinear elasticity and large deformations. To this end, hyperelastic material models are integrated into the loss function of the NIM method by employing a consistent local variational formulation. Thanks to its inherent differentiable programming capabilities, NIM avoids the need to derive the Newton-Raphson linearization of the variational form and the resulting tangent stiffness matrix, which are typically required in traditional numerical methods. Additionally, NIM utilizes a hybrid neural-numerical approximation encoded with partition-of-unity basis functions, coined NeuroPU, to effectively represent the displacement and streamline the training process. NeuroPU can also be used to approximate unknown material fields, making NIM a unified framework for both forward and inverse modeling. To impose displacement boundary conditions, this study introduces a new approach that incorporates singular kernel functions into the NeuroPU approximation, leveraging its ability to accommodate customized basis functions. Numerical experiments demonstrate the NIM method's capability in forward hyperelasticity modeling, achieving relative errors in the $L_2$ norm between $10^{-5}$ and $10^{-3}$, comparable to well-established finite element solvers. Furthermore, NIM is applied to the complex task of identifying heterogeneous mechanical properties of hyperelastic materials from strain data, validating its effectiveness in the inverse modeling of nonlinear materials. To leverage GPU acceleration, NIM is fully implemented in the JAX deep learning framework, utilizing the accelerator-oriented array computation capabilities offered by JAX.
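The accuracy figures for NIM are reported as relative errors in the $L_2$ norm, i.e. $\|u_h - u\|_{L_2} / \|u\|_{L_2}$. The abstract does not say how the norm is evaluated, so the Python sketch below assumes a discrete version over sample points with optional quadrature weights; the function name and the weighting scheme are illustrative assumptions.

```python
import math
from typing import Optional, Sequence


def relative_l2_error(u_pred: Sequence[float], u_ref: Sequence[float],
                      weights: Optional[Sequence[float]] = None) -> float:
    """Relative L2 error ||u_pred - u_ref|| / ||u_ref|| over sample points.

    `weights` are optional, hypothetical quadrature weights; equal weights
    are assumed when they are omitted.
    """
    if len(u_pred) != len(u_ref):
        raise ValueError("u_pred and u_ref must have the same length")
    if weights is None:
        weights = [1.0] * len(u_ref)
    elif len(weights) != len(u_ref):
        raise ValueError("weights must match the number of sample points")
    # Weighted squared norms of the error and of the reference field.
    num = sum(w * (p - r) ** 2 for w, p, r in zip(weights, u_pred, u_ref))
    den = sum(w * r ** 2 for w, r in zip(weights, u_ref))
    if den == 0.0:
        raise ValueError("reference field has zero norm")
    return math.sqrt(num / den)
```

For example, perturbing one component of the reference vector $(3, 4)$ by $0.005$ gives a relative error of $0.005 / 5 = 10^{-3}$, the upper end of the range quoted above.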

RecPrompt: A Prompt Tuning Framework for News Recommendation Using Large Language Models

Dec 16, 2023

Abstract: In the evolving field of personalized news recommendation, understanding the semantics of the underlying data is crucial. Large Language Models (LLMs) like GPT-4 have shown promising performance in understanding natural language. However, their applicability to news recommendation systems remains to be validated. This paper introduces RecPrompt, the first framework for news recommendation that leverages the capabilities of LLMs through prompt engineering. The system incorporates a prompt optimizer that applies an iterative bootstrapping process, enabling the LLM-based recommender to align news content with user preferences and interests more effectively. Moreover, this study offers insights into the effective use of LLMs in news recommendation, emphasizing both the advantages and the challenges of incorporating LLMs into recommendation systems.

Neural-Integrated Meshfree (NIM) Method: A differentiable programming-based hybrid solver for computational mechanics

Nov 21, 2023

Abstract: We present the neural-integrated meshfree (NIM) method, a differentiable programming-based hybrid meshfree approach within the field of computational mechanics. NIM seamlessly integrates traditional physics-based meshfree discretization techniques with deep learning architectures. It employs a hybrid approximation scheme, NeuroPU, to effectively represent the solution by combining continuous DNN representations with partition of unity (PU) basis functions associated with the underlying spatial discretization. This neural-numerical hybridization not only enhances the solution representation through functional space decomposition but also reduces both the size of the DNN model and the need for spatial gradient computations based on automatic differentiation, leading to a significant improvement in training efficiency. Under the NIM framework, we propose two truly meshfree solvers: the strong form-based NIM (S-NIM) and the local variational form-based NIM (V-NIM). In the S-NIM solver, the strong-form governing equation is directly considered in the loss function, while the V-NIM solver employs a local Petrov-Galerkin approach that allows variational residuals to be constructed on arbitrary overlapping subdomains. This ensures both that the underlying physics is satisfied and that the meshfree property is preserved. We perform extensive numerical experiments on both stationary and transient benchmark problems to assess the effectiveness of the proposed NIM methods in terms of accuracy, scalability, generalizability, and convergence properties. Moreover, comparative analysis with other physics-informed machine learning methods demonstrates that NIM, especially V-NIM, significantly enhances both accuracy and efficiency in end-to-end predictive capabilities.
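The idea of combining learned quantities with partition-of-unity (PU) basis functions can be illustrated in one dimension with the approximation $u_h(x) = \sum_I N_I(x)\, d_I$, where $\sum_I N_I(x) = 1$. The Python sketch below assumes piecewise-linear hat functions as the PU basis and uses an arbitrary Python callable as a hypothetical stand-in for the DNN that supplies nodal coefficients; both are simplifications, and the meshfree basis functions and network coupling used in NeuroPU may differ.

```python
from typing import Callable, List


def hat_basis(x: float, nodes: List[float]) -> List[float]:
    """Values N_I(x) of 1D piecewise-linear hat functions on sorted nodes."""
    if len(nodes) < 2:
        raise ValueError("at least two nodes are required")
    if not nodes[0] <= x <= nodes[-1]:
        raise ValueError("x lies outside the nodal domain")
    values = [0.0] * len(nodes)
    for i in range(len(nodes) - 1):
        a, b = nodes[i], nodes[i + 1]
        if x <= b:
            # Only the two hat functions of the containing interval are non-zero,
            # and their values sum to one (the partition-of-unity property).
            t = (x - a) / (b - a)
            values[i] = 1.0 - t
            values[i + 1] = t
            break
    return values


def pu_approximation(x: float, nodes: List[float],
                     coeff_fn: Callable[[float], float]) -> float:
    """u_h(x) = sum_I N_I(x) * d_I with nodal coefficients d_I = coeff_fn(x_I).

    coeff_fn is a hypothetical stand-in for a network that outputs nodal values.
    """
    coeffs = [coeff_fn(x_i) for x_i in nodes]
    return sum(n * d for n, d in zip(hat_basis(x, nodes), coeffs))
```

Because the hat functions sum to one and reproduce linear fields exactly, passing a linear coefficient function such as $2x + 1$ recovers that function at any point in the domain.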

Can We Transfer Noise Patterns? A Multi-environment Spectrum Analysis Model Using Generated Cases

Aug 14, 2023

Abstract: Spectrum analysis systems in online water quality testing are designed to detect the types and concentrations of pollutants and to enable regulatory agencies to respond promptly to pollution incidents. However, spectral data-based testing devices suffer from complex noise patterns when deployed in non-laboratory environments. To make the analysis model applicable to more environments, we propose a noise-pattern transfer model, which takes the spectra of standard water samples in different environments as cases and learns the differences in their noise patterns, thus enabling noise patterns to be transferred to unknown samples. Unfortunately, inevitable sample-level baseline noise prevents the model from obtaining paired data that differ only in dataset-level environmental noise. To address this problem, we generate a sample-to-sample case base to exclude the interference of sample-level noise on dataset-level noise learning, enhancing the system's learning performance. Experiments on spectral data with different background noises demonstrate the good noise-transferring ability of the proposed method compared with baseline systems ranging from wavelet denoising to deep neural networks and generative models. From this research, we posit that our method can enhance the performance of DL models by generating high-quality cases. The source code is made publicly available online at https://github.com/Magnomic/CNST.

Multi-Source Transfer Learning for Non-Stationary Environments

Jan 07, 2019

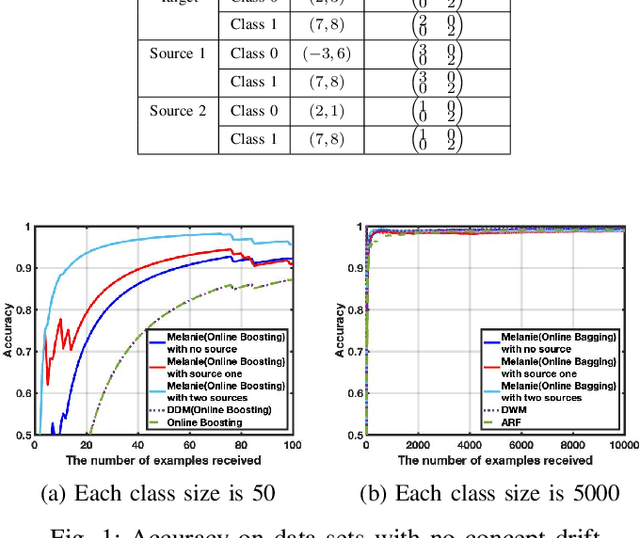

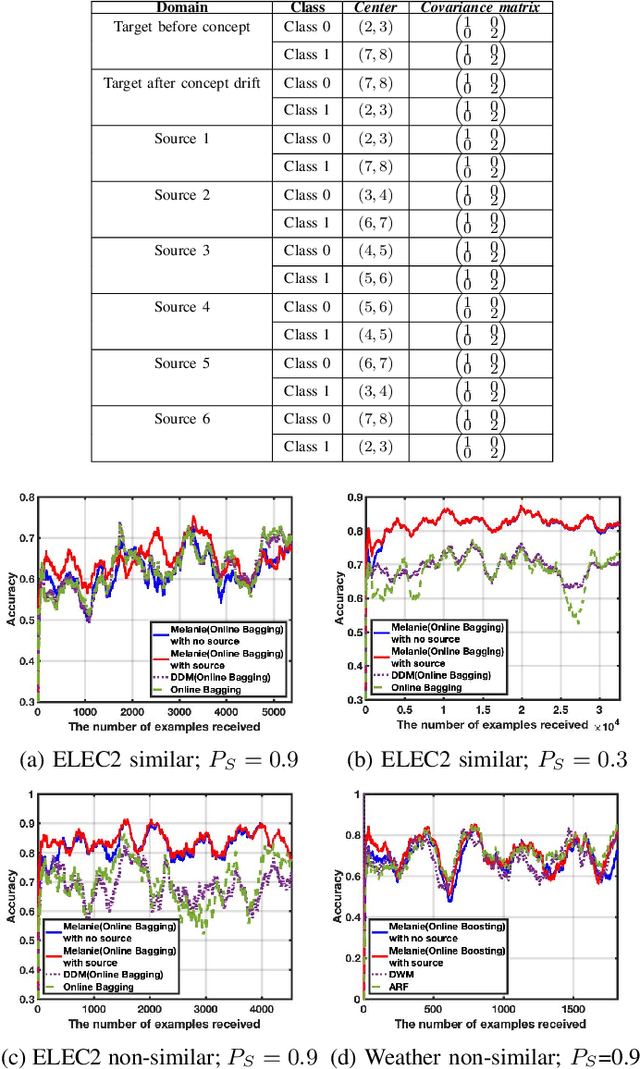

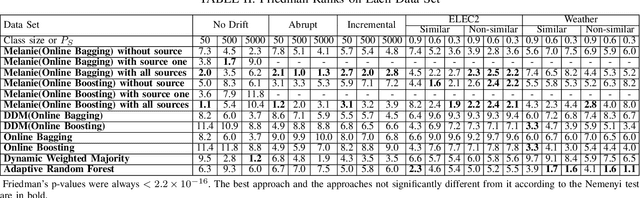

Abstract: In data stream mining, predictive models typically suffer drops in predictive performance due to concept drift. Because enough data representing the new concept must be collected before it can be learnt well, the predictive performance of existing models usually takes some time to recover from concept drift. To speed up recovery from concept drift and improve predictive performance in data stream mining, this work proposes a novel approach called Multi-sourcE onLine TrAnsfer learning for Non-statIonary Environments (Melanie). Melanie is the first approach able to transfer knowledge between multiple data streaming sources in non-stationary environments. It creates several sub-classifiers to learn different aspects of different source and target concepts over time. The sub-classifiers that match the current target concept well are identified and used to compose an ensemble for predicting examples from the target concept. We evaluate Melanie on several synthetic data streams containing different types of concept drift and on real-world data streams. The results indicate that Melanie can deal with a variety of drifts and improve predictive performance over existing data stream learning algorithms by making use of multiple sources.
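To make the idea of selecting sub-classifiers that match the current target concept concrete, the Python sketch below weights each sub-classifier by an exponentially decayed accuracy on recent labelled target examples and lets only those above a threshold vote. The decay factor, the threshold, the fallback rule, and all names are illustrative assumptions rather than Melanie's actual selection and weighting scheme.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Sequence

# A sub-classifier maps a feature vector to a class label.
Classifier = Callable[[Sequence[float]], int]


class WeightedSubclassifierEnsemble:
    """Illustrative ensemble weighting sub-classifiers by recent target accuracy."""

    def __init__(self, subclassifiers: List[Classifier],
                 decay: float = 0.9, threshold: float = 0.5) -> None:
        self.subs = subclassifiers
        self.decay = decay          # hypothetical forgetting factor
        self.threshold = threshold  # hypothetical selection cut-off
        self.scores = [0.5] * len(subclassifiers)  # neutral starting score

    def update(self, x: Sequence[float], y: int) -> None:
        # Exponentially decayed accuracy on the labelled target example (x, y).
        for i, clf in enumerate(self.subs):
            hit = 1.0 if clf(x) == y else 0.0
            self.scores[i] = self.decay * self.scores[i] + (1.0 - self.decay) * hit

    def predict(self, x: Sequence[float]) -> int:
        # Keep only sub-classifiers that currently match the target concept well.
        selected = [i for i, s in enumerate(self.scores) if s > self.threshold]
        if not selected:
            # Fall back to the single best-scoring sub-classifier.
            selected = [max(range(len(self.subs)), key=lambda i: self.scores[i])]
        votes: Dict[int, float] = defaultdict(float)
        for i in selected:
            votes[self.subs[i](x)] += self.scores[i]
        return max(votes, key=votes.__getitem__)
```

When the target concept drifts, the scores of sub-classifiers that no longer match decay toward zero, so the vote shifts toward whichever sub-classifiers fit the new concept.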
