Hiroshi Yamakawa

DEQ-MCL: Discrete-Event Queue-based Monte-Carlo Localization

Apr 22, 2024

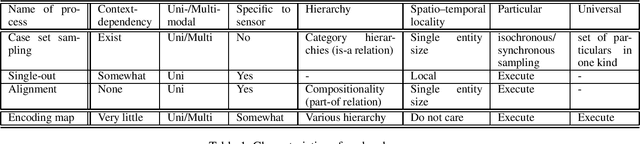

Abstract: Spatial cognition in hippocampal formation is posited to play a crucial role in the development of self-localization techniques for robots. In this paper, we propose a self-localization approach, DEQ-MCL, based on the discrete event queue hypothesis associated with phase precession within the hippocampal formation. Our method effectively estimates the posterior distribution of states, encompassing past, present, and future states that are organized as a queue. This approach enables the smoothing of the posterior distribution of past states using current observations and the weighting of the joint distribution by considering the feasibility of future states. Our findings indicate that the proposed method holds promise for augmenting self-localization performance in indoor environments.

Recognition of All Categories of Entities by AI

Aug 17, 2022

Abstract: Human-level AI will have significant impacts on human society. However, estimates for the realization time are debatable. To arrive at human-level AI, artificial general intelligence (AGI), as opposed to AI systems that are specialized for a specific task, was set as a technically meaningful long-term goal. But now, propelled by advances in deep learning, that achievement is getting much closer. Considering the recent technological developments, it would be meaningful to discuss the completion date of human-level AI through the "comprehensive technology map approach," wherein we map human-level capabilities at a reasonable granularity, identify the current range of technology, and discuss the technical challenges in traversing unexplored areas and predict when all of them will be overcome. This paper presents a new argumentative option to view the ontological sextet, which encompasses entities in a way that is consistent with our everyday intuition and scientific practice, as a comprehensive technological map. Because most of the modeling of the world, in terms of how to interpret it, by an intelligent subject is the recognition of distal entities and the prediction of their temporal evolution, being able to handle all distal entities is a reasonable goal. Based on the findings of philosophy and engineering cognitive technology, we predict that in the relatively near future, AI will be able to recognize various entities to the same degree as humans.

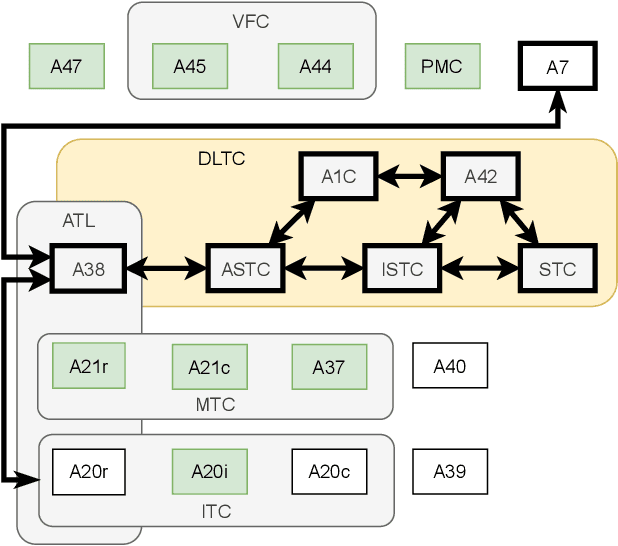

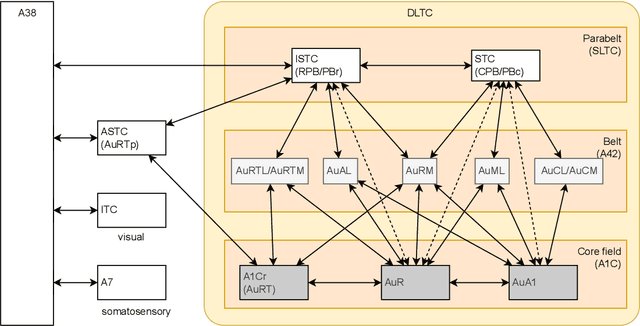

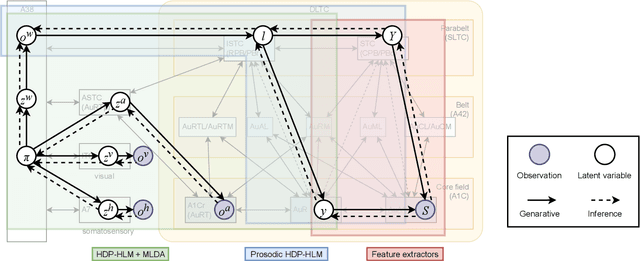

Brain-inspired probabilistic generative model for double articulation analysis of spoken language

Jul 06, 2022

Abstract: The human brain, among its several functions, analyzes the double articulation structure in spoken language, i.e., double articulation analysis (DAA). A hierarchical structure in which words are connected to form a sentence and words are composed of phonemes or syllables is called a double articulation structure. Where and how DAA is performed in the human brain has not been established, although some insights have been obtained. In addition, existing computational models based on a probabilistic generative model (PGM) do not incorporate neuroscientific findings, and their consistency with the brain has not been previously discussed. This study compared, mapped, and integrated these existing computational models with neuroscientific findings to bridge this gap, and the findings are relevant for future applications and further research. This study proposes a PGM for a DAA hypothesis that can be realized in the brain based on the outcomes of several neuroscientific surveys. The study involved (i) investigation and organization of anatomical structures related to spoken language processing, and (ii) design of a PGM that matches the anatomy and functions of the region of interest. Therefore, this study provides novel insights that will be foundational to further exploring DAA in the brain.

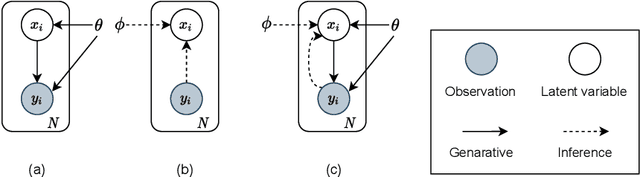

Whole brain Probabilistic Generative Model toward Realizing Cognitive Architecture for Developmental Robots

Mar 15, 2021

Abstract: Building a humanlike integrative artificial cognitive system, that is, an artificial general intelligence, is one of the goals in artificial intelligence and developmental robotics. Furthermore, a computational model that enables an artificial cognitive system to achieve cognitive development will be an excellent reference for brain and cognitive science. This paper describes the development of a cognitive architecture using probabilistic generative models (PGMs) to fully mirror the human cognitive system. The integrative model is called a whole-brain PGM (WB-PGM). It is both brain-inspired and PGM-based. In this paper, the process of building the WB-PGM and learning from the human brain to build cognitive architectures is described.

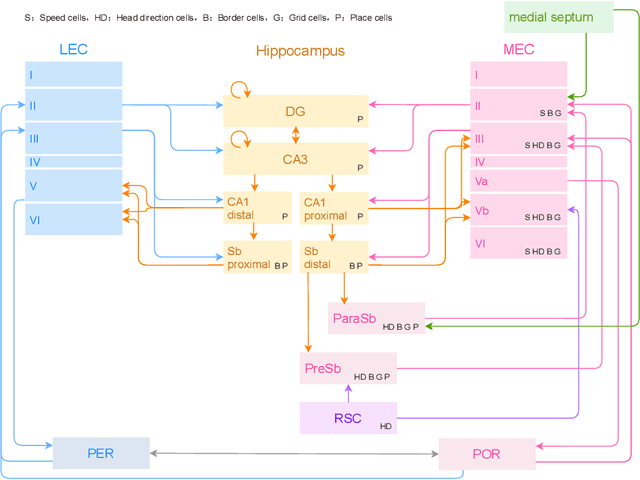

Hippocampal formation-inspired probabilistic generative model

Mar 12, 2021

Abstract: We constructed a hippocampal formation (HPF)-inspired probabilistic generative model (HPF-PGM) using the structure-constrained interface decomposition method. By modeling brain regions with PGMs, this model is positioned as a module that can be integrated as a whole-brain PGM. We discuss the relationship between simultaneous localization and mapping (SLAM) in robotics and the findings of HPF in neuroscience. Furthermore, we survey the modeling for HPF and various computational models, including brain-inspired SLAM, spatial concept formation, and deep generative models. The HPF-PGM is a computational model that is highly consistent with the anatomical structure and functions of the HPF, in contrast to typical conventional SLAM models. By referencing the brain, we suggest the importance of the integration of egocentric/allocentric information from the entorhinal cortex to the hippocampus and the use of discrete-event queues.

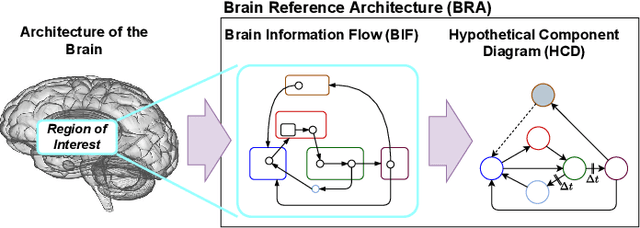

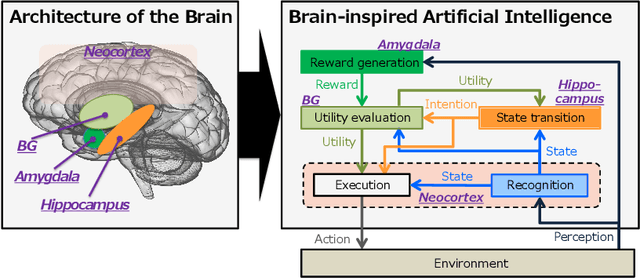

The whole brain architecture approach: Accelerating the development of artificial general intelligence by referring to the brain

Mar 06, 2021

Abstract: The vastness of the design space created by the combination of a large number of computational mechanisms, including machine learning, is an obstacle to creating an artificial general intelligence (AGI). Brain-inspired AGI development, in other words, cutting down the design space to look more like a biological brain, which is an existing model of a general intelligence, is a promising plan for solving this problem. However, it is difficult for an individual to design a software program that corresponds to the entire brain because the neuroscientific data required to understand the architecture of the brain are extensive and complicated. The whole-brain architecture approach divides the brain-inspired AGI development process into the task of designing the brain reference architecture (BRA) -- the flow of information and the diagram of corresponding components -- and the task of developing each component using the BRA. This is called BRA-driven development. Another difficulty lies in the extraction of the operating principles necessary for reproducing the cognitive-behavioral function of the brain from neuroscience data. Therefore, this study proposes the Structure-constrained Interface Decomposition (SCID) method, which is a hypothesis-building method for creating a hypothetical component diagram consistent with neuroscientific findings. The application of this approach has begun for building various regions of the brain. Moving forward, we will examine methods of evaluating the biological plausibility of brain-inspired software. This evaluation will also be used to prioritize different computational mechanisms, which should be merged, associated with the same regions of the brain.

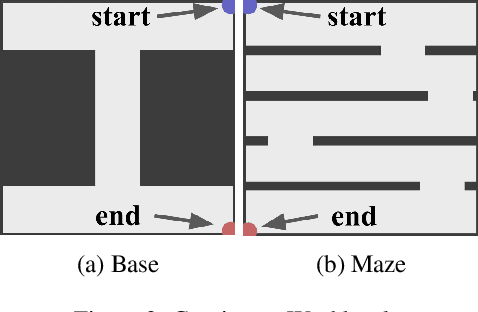

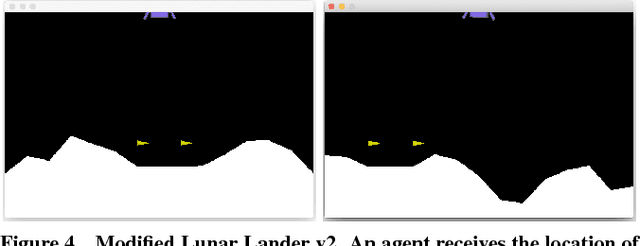

Macro Action Reinforcement Learning with Sequence Disentanglement using Variational Autoencoder

Mar 22, 2019

Abstract: One problem in the application of reinforcement learning to real-world problems is the curse of dimensionality on the action space. Macro actions, a sequence of primitive actions, have been studied to diminish the dimensionality of the action space with regard to the time axis. However, previous studies relied on humans defining macro actions or assumed macro actions as repetitions of the same primitive actions. We present Factorized Macro Action Reinforcement Learning (FaMARL) which autonomously learns disentangled factor representation of a sequence of actions to generate macro actions that can be directly applied to general reinforcement learning algorithms. FaMARL exhibits higher scores than other reinforcement learning algorithms on environments that require an extensive amount of search.

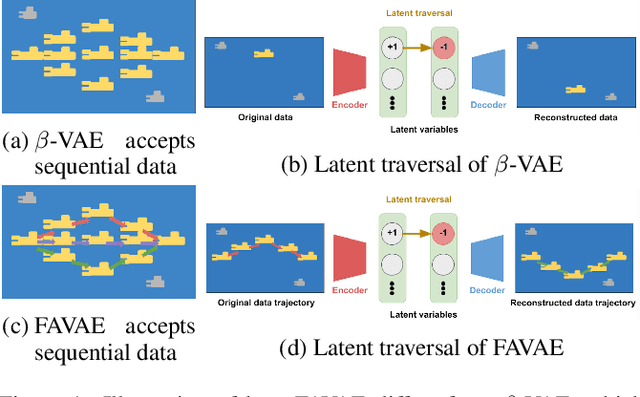

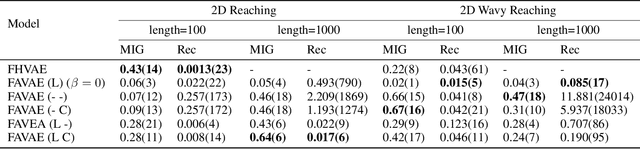

FAVAE: Sequence Disentanglement using Information Bottleneck Principle

Feb 22, 2019

Abstract: We propose the factorized action variational autoencoder (FAVAE), a state-of-the-art generative model for learning disentangled and interpretable representations from sequential data via the information bottleneck without supervision. The purpose of disentangled representation learning is to obtain interpretable and transferable representations from data. We focused on the disentangled representation of sequential data since there is a wide range of potential applications if disentangled representation is extended to sequential data such as video, speech, and stock market data. Sequential data are characterized by dynamic and static factors: dynamic factors are time dependent, and static factors are independent of time. Previous models disentangle static and dynamic factors by explicitly modeling the priors of latent variables to distinguish between these factors. However, these models cannot disentangle representations between dynamic factors, such as disentangling "picking up" and "throwing" in robotic tasks. FAVAE can disentangle multiple dynamic factors. Since it does not require modeling priors, it can disentangle "between" dynamic factors. We conducted experiments to show that FAVAE can extract disentangled dynamic factors.

Autonomous Self-Explanation of Behavior for Interactive Reinforcement Learning Agents

Oct 20, 2018

Abstract: In cooperation, the workers must know how co-workers behave. However, an agent's policy, which is embedded in a statistical machine learning model, is hard to understand, and requires much time and knowledge to comprehend. Therefore, it is difficult for people to predict the behavior of machine learning robots, which makes human-robot cooperation challenging. In this paper, we propose Instruction-based Behavior Explanation (IBE), a method to explain an autonomous agent's future behavior. In IBE, an agent can autonomously acquire the expressions to explain its own behavior by reusing the instructions given by a human expert to accelerate the learning of the agent's policy. IBE also enables a developmental agent, whose policy may change during the cooperation, to explain its own behavior with sufficient time granularity.

Bayesian Inference of Self-intention Attributed by Observer

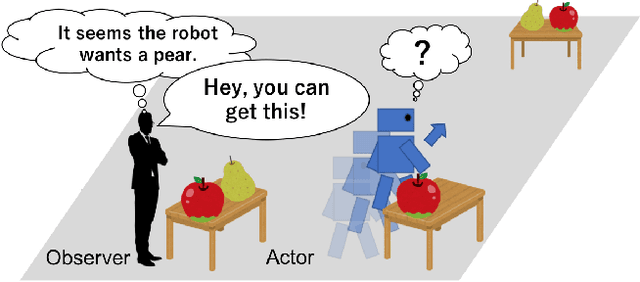

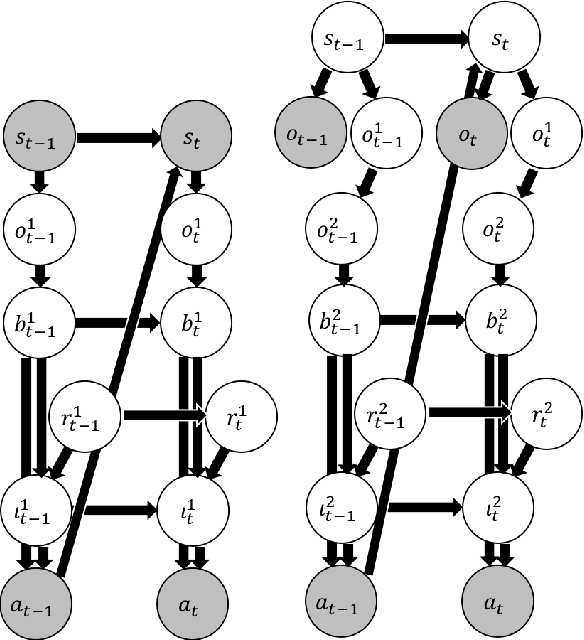

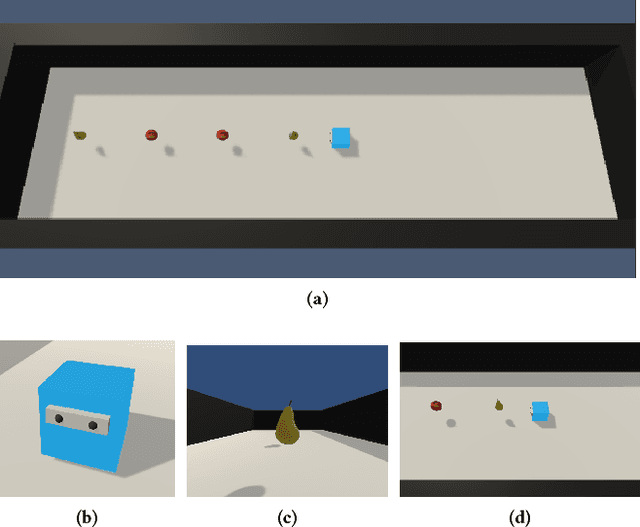

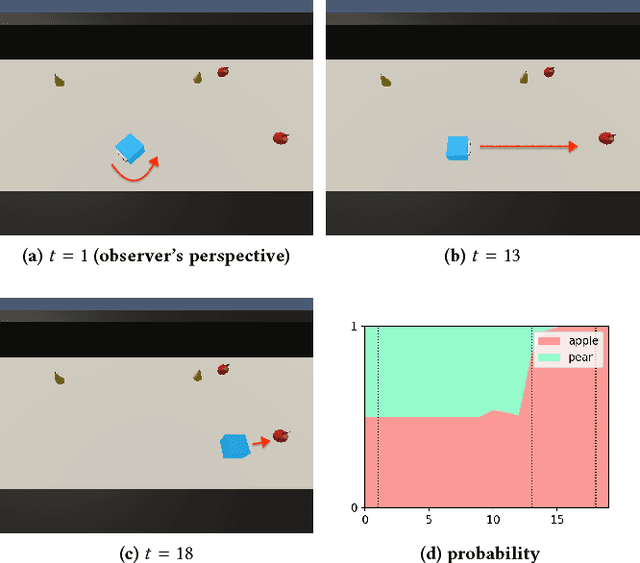

Oct 12, 2018

Abstract: Most agents that learn policies for tasks with reinforcement learning (RL) lack the ability to communicate with people, which makes human-agent collaboration challenging. We believe that, in order for RL agents to comprehend utterances from human colleagues, RL agents must infer the mental states that people attribute to them because people sometimes infer an interlocutor's mental states and communicate on the basis of this mental inference. This paper proposes the PublicSelf model, which is a model of a person who infers how the person's own behavior appears to their colleagues. We implemented the PublicSelf model for an RL agent in a simulated environment and examined the inference of the model by comparing it with people's judgment. The results showed that the agent's intention that people attributed to the agent's movement was correctly inferred by the model in scenes where people could find certain intentionality from the agent's behavior.
