Macro Action Reinforcement Learning with Sequence Disentanglement using Variational Autoencoder

Paper and Code

Mar 22, 2019

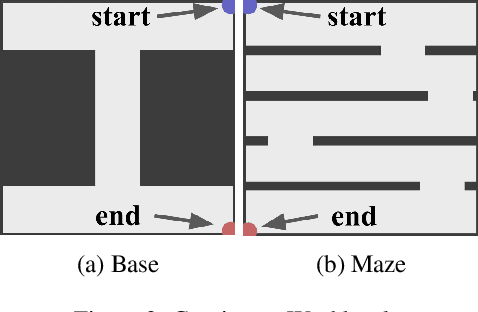

One problem in the application of reinforcement learning to real-world problems is the curse of dimensionality on the action space. Macro actions, a sequence of primitive actions, have been studied to diminish the dimensionality of the action space with regard to the time axis. However, previous studies relied on humans defining macro actions or assumed macro actions as repetitions of the same primitive actions. We present Factorized Macro Action Reinforcement Learning (FaMARL) which autonomously learns disentangled factor representation of a sequence of actions to generate macro actions that can be directly applied to general reinforcement learning algorithms. FaMARL exhibits higher scores than other reinforcement learning algorithms on environments that require an extensive amount of search.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge