He-Liang Huang

Sample-efficient quantum error mitigation via classical learning surrogates

Nov 10, 2025

Abstract:The pursuit of practical quantum utility on near-term quantum processors is critically challenged by their inherent noise. Quantum error mitigation (QEM) techniques are leading solutions to improve computation fidelity with relatively low qubit-overhead, while full-scale quantum error correction remains a distant goal. However, QEM techniques incur substantial measurement overheads, especially when applied to families of quantum circuits parameterized by classical inputs. Focusing on zero-noise extrapolation (ZNE), a widely adopted QEM technique, here we devise the surrogate-enabled ZNE (S-ZNE), which leverages classical learning surrogates to perform ZNE entirely on the classical side. Unlike conventional ZNE, whose measurement cost scales linearly with the number of circuits, S-ZNE requires only constant measurement overhead for an entire family of quantum circuits, offering superior scalability. Theoretical analysis indicates that S-ZNE achieves accuracy comparable to conventional ZNE in many practical scenarios, and numerical experiments on up to 100-qubit ground-state energy and quantum metrology tasks confirm its effectiveness. Our approach provides a template that can be effectively extended to other quantum error mitigation protocols, opening a promising path toward scalable error mitigation.
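For readers unfamiliar with conventional ZNE, the idea can be sketched in a few lines: estimate an expectation value at several amplified noise levels, fit a curve, and read off its value at zero noise. The snippet below is only an illustration of that textbook procedure, not the S-ZNE method from the paper. The exponential-decay noise model, the function names, and the chosen noise scales are all assumptions made for the example.

```python
import numpy as np

def noisy_expectation(scale, ideal=1.0, decay=0.1):
    """Toy noise model (assumption): the signal decays exponentially with the noise scale."""
    return ideal * np.exp(-decay * scale)

def zne_estimate(scales, values, degree):
    """Fit a polynomial in the noise scale and evaluate it at zero noise."""
    coeffs = np.polyfit(scales, values, degree)
    return float(np.polyval(coeffs, 0.0))

# Hypothetical noise-amplification factors; scale 1.0 is the unamplified circuit.
scales = np.array([1.0, 2.0, 3.0])
values = np.array([noisy_expectation(s) for s in scales])

mitigated = zne_estimate(scales, values, degree=2)
unmitigated = float(values[0])
```

In this toy setting the extrapolated value lands much closer to the ideal value of 1.0 than the unmitigated one. On hardware, every entry of `values` costs fresh measurements for every circuit, which is the per-circuit overhead the abstract refers to.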

Demonstration of Efficient Predictive Surrogates for Large-scale Quantum Processors

Jul 23, 2025

Abstract:The ongoing development of quantum processors is driving breakthroughs in scientific discovery. Despite this progress, the formidable cost of fabricating large-scale quantum processors means they will remain rare for the foreseeable future, limiting their widespread application. To address this bottleneck, we introduce the concept of predictive surrogates, which are classical learning models designed to emulate the mean-value behavior of a given quantum processor with provably computational efficiency. In particular, we propose two predictive surrogates that can substantially reduce the need for quantum processor access in diverse practical scenarios. To demonstrate their potential in advancing digital quantum simulation, we use these surrogates to emulate a quantum processor with up to 20 programmable superconducting qubits, enabling efficient pre-training of variational quantum eigensolvers for families of transverse-field Ising models and identification of non-equilibrium Floquet symmetry-protected topological phases. Experimental results reveal that the predictive surrogates not only reduce measurement overhead by orders of magnitude, but can also surpass the performance of conventional, quantum-resource-intensive approaches. Collectively, these findings establish predictive surrogates as a practical pathway to broadening the impact of advanced quantum processors.

AI-Powered Algorithm-Centric Quantum Processor Topology Design

Dec 18, 2024

Abstract:Quantum computing promises to revolutionize various fields, yet the execution of quantum programs necessitates an effective compilation process. This involves strategically mapping quantum circuits onto the physical qubits of a quantum processor. The qubits' arrangement, or topology, is pivotal to the circuit's performance, a factor that often defies traditional heuristic or manual optimization methods due to its complexity. In this study, we introduce a novel approach leveraging reinforcement learning to dynamically tailor qubit topologies to the unique specifications of individual quantum circuits, guiding algorithm-driven quantum processor topology design for reducing the depth of the mapped circuit, which is particularly critical for the output accuracy on noisy quantum processors. Our method marks a significant departure from previous methods that have been constrained to mapping circuits onto a fixed processor topology. Experiments demonstrate that we have achieved notable enhancements in circuit performance, with a minimum of 20% reduction in circuit depth in 60% of the cases examined, and a maximum enhancement of up to 46%. Furthermore, the pronounced benefits of our approach in reducing circuit depth become increasingly evident as the scale of the quantum circuits increases, exhibiting the scalability of our method in terms of problem size. This work advances the co-design of quantum processor architecture and algorithm mapping, offering a promising avenue for future research and development in the field.

Near-Term Quantum Computing Techniques: Variational Quantum Algorithms, Error Mitigation, Circuit Compilation, Benchmarking and Classical Simulation

Nov 17, 2022

Abstract:Quantum computing is a game-changing technology for global academia, research centers and industries including computational science, mathematics, finance, pharmaceuticals, materials science, chemistry and cryptography. Although it has seen a major boost in the last decade, we are still a long way from reaching the maturity of a full-fledged quantum computer. That said, we will be in the Noisy Intermediate-Scale Quantum (NISQ) era for a long time, working on quantum computing systems with dozens or even thousands of qubits. An outstanding challenge, then, is to come up with an application that can reliably carry out a nontrivial task of interest on near-term quantum devices with non-negligible quantum noise. To address this challenge, several near-term quantum computing techniques, including variational quantum algorithms, error mitigation, quantum circuit compilation and benchmarking protocols, have been proposed to characterize and mitigate errors, and to implement algorithms with a certain resistance to noise, so as to enhance the capabilities of near-term quantum devices and explore the boundaries of their ability to realize useful applications. Besides, the development of near-term quantum devices is inseparable from efficient classical simulation, which plays a vital role in quantum algorithm design and verification, error-tolerant verification and other applications. This review provides a thorough introduction to these near-term quantum computing techniques, reports on their progress, and finally discusses their future prospects, which we hope will motivate researchers to undertake additional studies in this field.

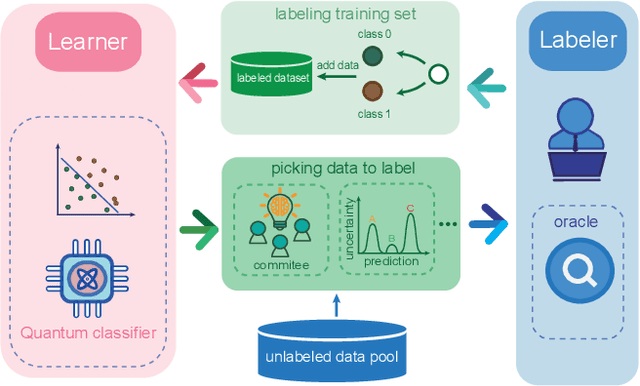

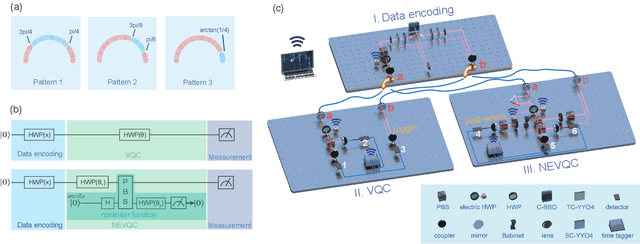

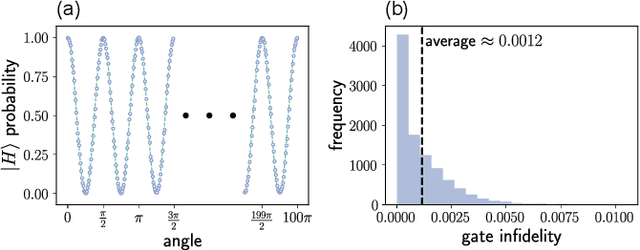

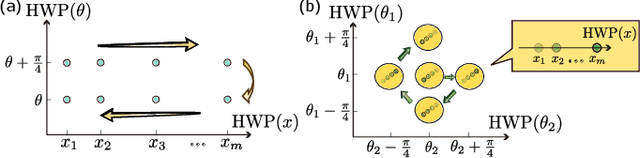

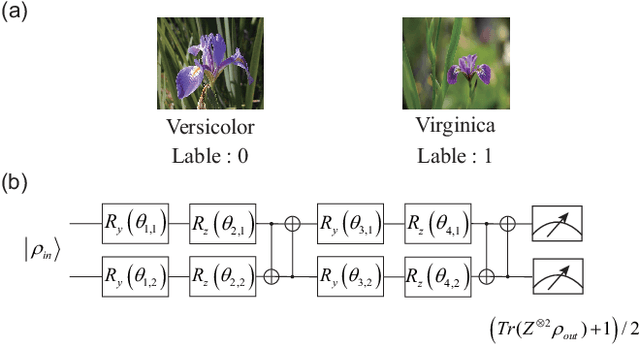

Active Learning on a Programmable Photonic Quantum Processor

Aug 03, 2022

Abstract:Training a quantum machine learning model generally requires a large labeled dataset, which incurs high labeling and computational costs. To reduce such costs, a selective training strategy, called active learning (AL), chooses only a subset of the original dataset to learn while maintaining the trained model's performance. Here, we design and implement two AL-empowered variational quantum classifiers, to investigate the potential applications and effectiveness of AL in quantum machine learning. Firstly, we build a programmable free-space photonic quantum processor, which enables the programmed implementation of various hybrid quantum-classical computing algorithms. Then, we code the designed variational quantum classifier with AL into the quantum processor, and execute comparative tests for the classifiers with and without the AL strategy. The results validate the great advantage of AL in quantum machine learning, as it saves up to 85% of the labeling effort and 91.6% of the computational effort compared to training without AL on a data classification task. Our results inspire AL's further applications in large-scale quantum machine learning to drastically reduce training data and speed up training, underpinning the exploration of practical quantum advantages in quantum physics or real-world applications.

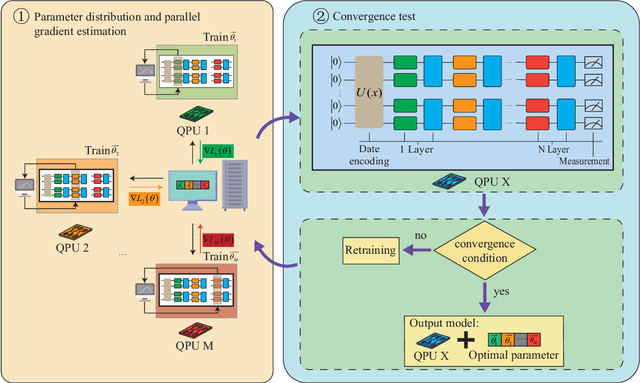

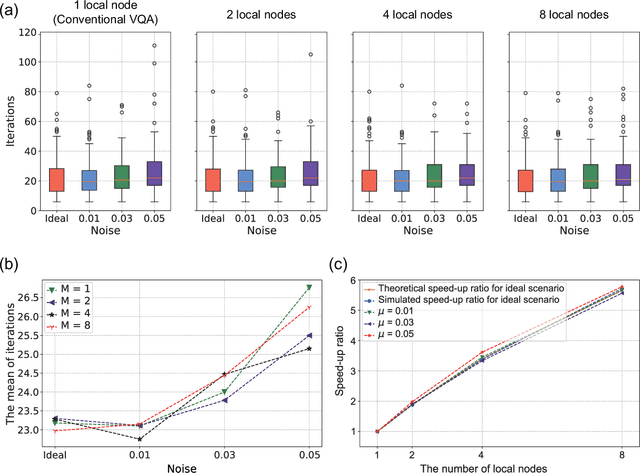

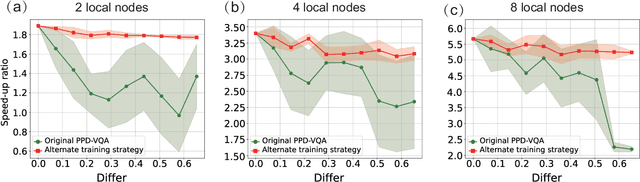

Parameter-Parallel Distributed Variational Quantum Algorithm

Jul 31, 2022

Abstract:Variational quantum algorithms (VQAs) have emerged as a promising near-term technique to explore practical quantum advantage on noisy intermediate-scale quantum (NISQ) devices. However, the inefficient parameter training process, caused by the incompatibility with backpropagation and the cost of a large number of measurements, poses a great challenge to the large-scale development of VQAs. Here, we propose a parameter-parallel distributed variational quantum algorithm (PPD-VQA), to accelerate the training process by parameter-parallel training with multiple quantum processors. To maintain the high performance of PPD-VQA in realistic noise scenarios, an alternate training strategy is proposed to alleviate the acceleration attenuation caused by noise differences among multiple quantum processors, which is an unavoidable common problem of distributed VQA. Besides, gradient compression is also employed to overcome potential communication bottlenecks. The achieved results suggest that the PPD-VQA could provide a practical solution for coordinating multiple quantum processors to handle large-scale real-world applications.
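The general idea of parameter-parallel training can be illustrated with a small classical sketch: the gradient components are partitioned across workers, each worker evaluates only its share, and the partial results are merged. The example below is not the PPD-VQA implementation. It assumes a toy cost function (a product of cosines standing in for a measured expectation value), uses the standard parameter-shift rule, and simulates the workers with a plain loop. It also omits the alternate training strategy and gradient compression mentioned in the abstract. All names are hypothetical.

```python
import numpy as np

def cost(theta):
    """Toy cost (assumption): product of cosines, standing in for a measured expectation value."""
    return float(np.prod(np.cos(theta)))

def shift_gradient(theta, indices):
    """Parameter-shift partial derivatives for the given parameter indices only."""
    grads = {}
    for i in indices:
        shift = np.zeros_like(theta)
        shift[i] = np.pi / 2
        grads[i] = 0.5 * (cost(theta + shift) - cost(theta - shift))
    return grads

def parallel_gradient(theta, n_workers):
    """Split parameter indices across hypothetical workers and merge their partial gradients."""
    chunks = np.array_split(np.arange(len(theta)), n_workers)
    grad = np.zeros_like(theta)
    for chunk in chunks:  # each chunk would run on its own quantum processor
        for i, g in shift_gradient(theta, chunk).items():
            grad[i] = g
    return grad

theta = np.array([0.3, 1.1, -0.7, 2.0])
grad = parallel_gradient(theta, n_workers=2)
```

Because each gradient component needs its own pair of cost evaluations, the work divides cleanly by parameter index. With noisy hardware the workers would no longer return identical-quality estimates, which is the issue the abstract's alternate training strategy targets.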

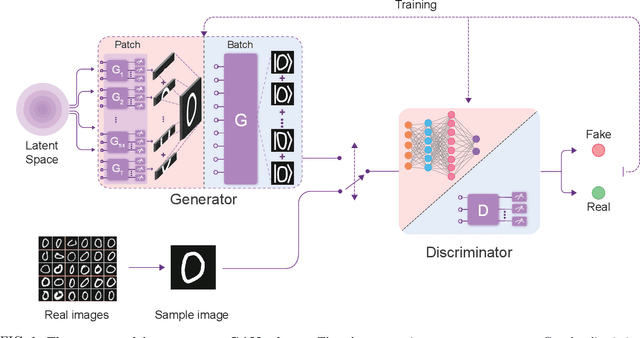

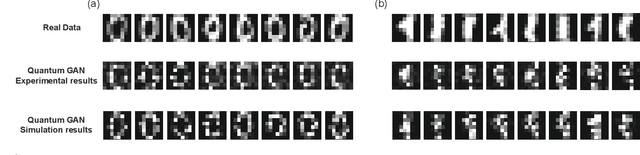

Experimental Quantum Generative Adversarial Networks for Image Generation

Oct 21, 2020

Abstract:Quantum machine learning is expected to be one of the first practical applications of near-term quantum devices. Pioneering theoretical works suggest that quantum generative adversarial networks (GANs) may exhibit a potential exponential advantage over classical GANs, thus attracting widespread attention. However, it remains elusive whether quantum GANs implemented on near-term quantum devices can actually solve real-world learning tasks. Here, we devise a flexible quantum GAN scheme to narrow this knowledge gap, which could accomplish image generation with arbitrarily high-dimensional features, and could also take advantage of quantum superposition to train multiple examples in parallel. For the first time, we experimentally achieve the learning and generation of real-world hand-written digit images on a superconducting quantum processor. Moreover, we utilize a gray-scale bar dataset to exhibit the competitive performance between quantum GANs and the classical GANs based on multilayer perceptron and convolutional neural network architectures, respectively, benchmarked by the Fréchet Distance score. Our work provides guidance for developing advanced quantum generative models on near-term quantum devices and opens up an avenue for exploring quantum advantages in various GAN-related learning tasks.

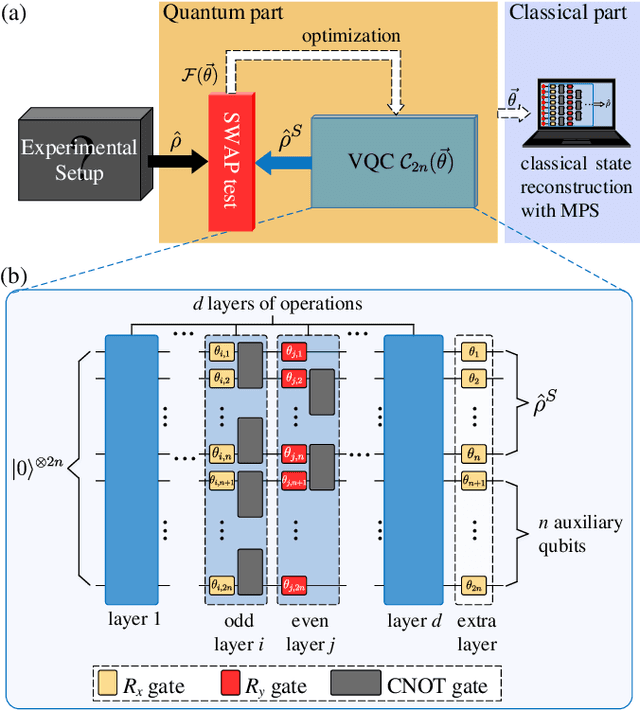

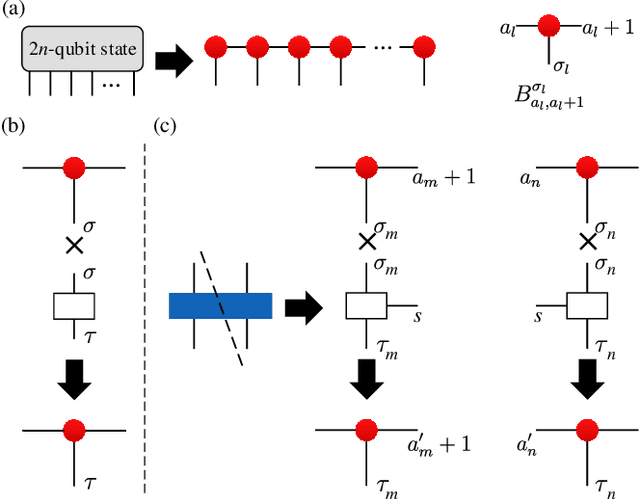

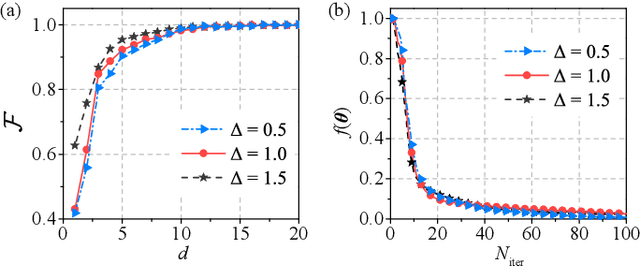

Variational Quantum Circuits for Quantum State Tomography

Dec 16, 2019

Abstract:We propose a hybrid quantum-classical algorithm for quantum state tomography. Given an unknown quantum state, a quantum machine learning algorithm is used to maximize the fidelity between the output of a variational quantum circuit and this state. The number of parameters of the variational quantum circuit grows linearly with the number of qubits and the circuit depth. After that, a subsequent classical algorithm is used to reconstruct the unknown quantum state. We demonstrate our method by performing numerical simulations to reconstruct the ground state of a one-dimensional quantum spin chain, using a variational quantum circuit simulator. Our method is suitable for near-term quantum computing platforms, and could be used for relatively large-scale quantum state tomography for experimentally relevant quantum states.

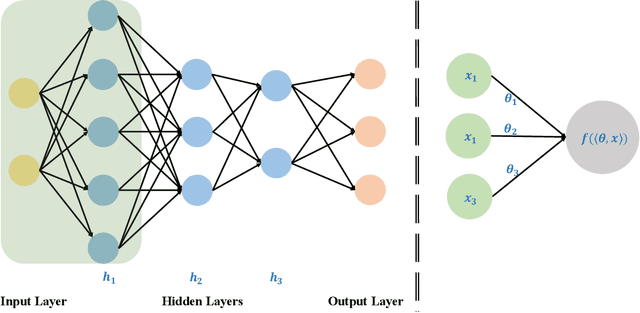

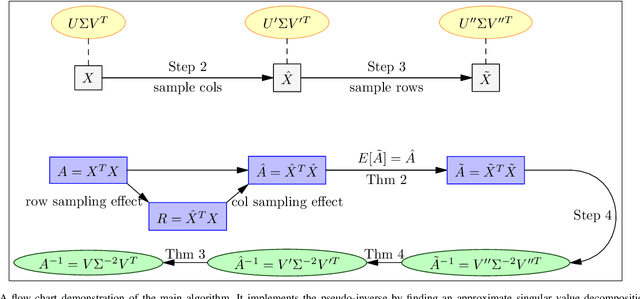

Quantum-Inspired Support Vector Machine

Jul 30, 2019

Abstract:Support vector machine (SVM) is a particularly powerful and flexible supervised learning model that analyzes data for both classification and regression, whose usual algorithm complexity scales polynomially with the dimension of data space and the number of data points. Inspired by quantum SVM, we present a quantum-inspired classical algorithm for SVM using fast sampling techniques. In our approach, we develop a method that samples the kernel matrix using the given information on the data points and performs classification by estimating the classification expression. Our approach can be applied to various types of SVM, such as linear SVM, non-linear SVM and soft SVM. Theoretical analysis shows one can make classification with arbitrary success probability in logarithmic runtime of both the dimension of data space and the number of data points, matching the runtime of the quantum SVM.

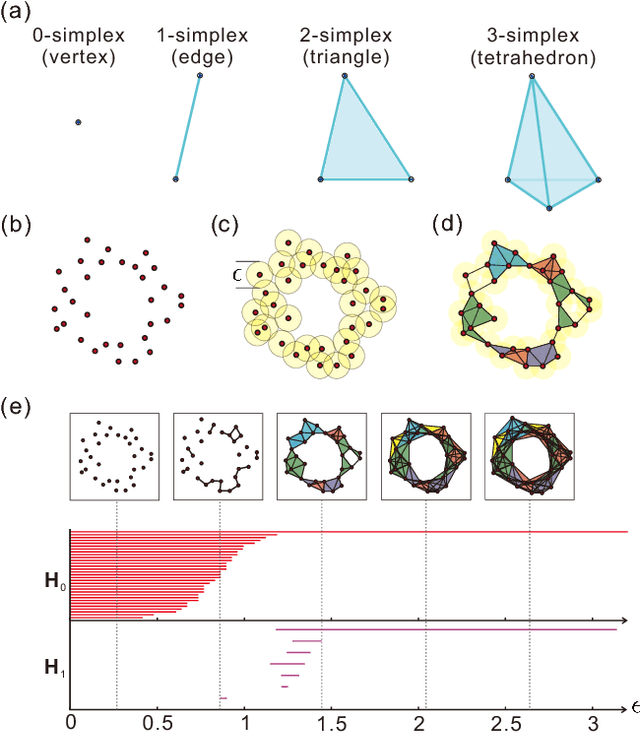

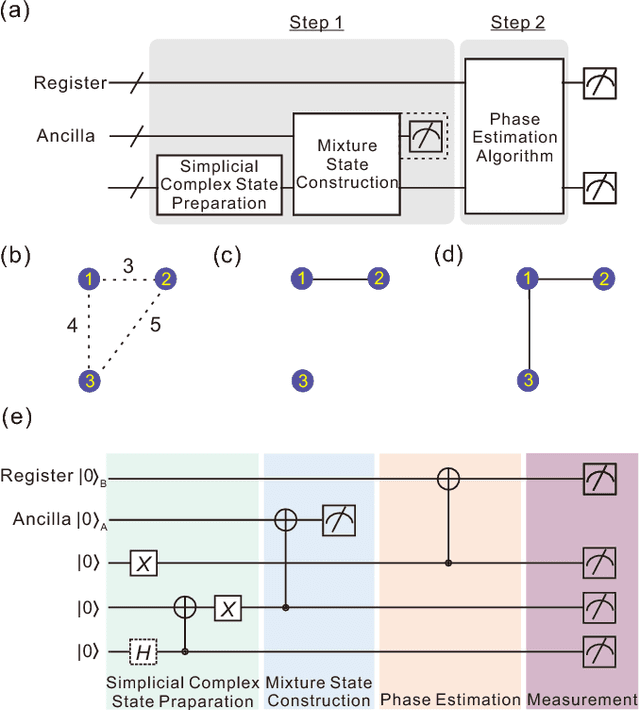

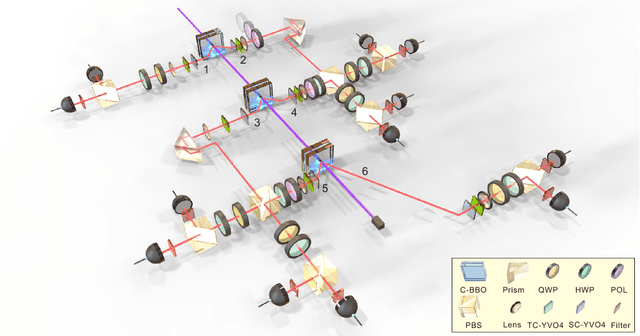

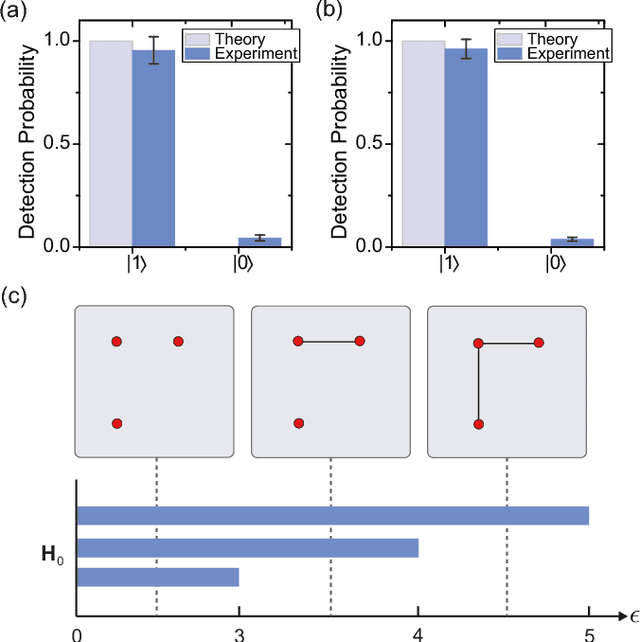

Demonstration of Topological Data Analysis on a Quantum Processor

Jan 19, 2018

Abstract:Topological data analysis offers a robust way to extract useful information from noisy, unstructured data by identifying its underlying structure. Recently, an efficient quantum algorithm was proposed [Lloyd, Garnerone, Zanardi, Nat. Commun. 7, 10138 (2016)] for calculating Betti numbers of data points -- topological features that count the number of topological holes of various dimensions in a scatterplot. Here, we implement a proof-of-principle demonstration of this quantum algorithm by employing a six-photon quantum processor to successfully analyze the topological features of Betti numbers of a network including three data points, providing new insights into data analysis in the era of quantum computing.
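To make the notion of Betti numbers concrete, here is a small classical calculation for a three-point network, using ranks of boundary matrices. It is an illustrative sketch only: it does not reproduce the quantum algorithm or the photonic experiment, and the choice of simplicial complex (which edges and triangles are present) is an assumption made for the example.

```python
import numpy as np

def boundary_matrix(k_simplices, km1_simplices):
    """Matrix of the boundary map from k-simplices to (k-1)-simplices."""
    index = {s: i for i, s in enumerate(km1_simplices)}
    mat = np.zeros((len(km1_simplices), len(k_simplices)))
    for j, simplex in enumerate(k_simplices):
        for i in range(len(simplex)):
            face = simplex[:i] + simplex[i + 1:]  # drop the i-th vertex
            mat[index[face], j] = (-1) ** i
    return mat

def rank(mat):
    """Rank over the reals; an empty matrix has rank 0."""
    return int(np.linalg.matrix_rank(mat)) if mat.size else 0

def betti_numbers(vertices, edges, triangles):
    """Return (b0, b1): connected components and one-dimensional holes."""
    r1 = rank(boundary_matrix(edges, vertices))
    r2 = rank(boundary_matrix(triangles, edges))
    b0 = len(vertices) - r1
    b1 = (len(edges) - r1) - r2
    return b0, b1

vertices = [(0,), (1,), (2,)]
edges = [(0, 1), (0, 2), (1, 2)]
hollow = betti_numbers(vertices, edges, [])           # triangle outline
filled = betti_numbers(vertices, edges, [(0, 1, 2)])  # solid triangle
```

The hollow triangle gives `(1, 1)`: one connected component and one loop. Filling in the triangle removes the loop and gives `(1, 0)`.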
