H. Peter Soyer

The iToBoS dataset: skin region images extracted from 3D total body photographs for lesion detection

Jan 30, 2025

Abstract: Artificial intelligence has significantly advanced skin cancer diagnosis by enabling rapid and accurate detection of malignant lesions. In this domain, most publicly available image datasets consist of single, isolated skin lesions positioned at the center of the image. While these lesion-centric datasets have been fundamental for developing diagnostic algorithms, they lack the context of the surrounding skin, which is critical for improving lesion detection. The iToBoS dataset was created to address this gap. It includes 16,954 images of skin regions from 100 participants, captured using 3D total body photography. Each image corresponds to a section of skin of roughly $7 \times 9$ cm, with all suspicious lesions annotated using bounding boxes. Additionally, the dataset provides metadata such as anatomical location, age group, and sun damage score for each image. This dataset aims to facilitate training and benchmarking of algorithms, with the goal of enabling early detection of skin cancer and deployment of this technology in non-clinical environments.

A General-Purpose Multimodal Foundation Model for Dermatology

Oct 19, 2024

Abstract: Diagnosing and treating skin diseases require advanced visual skills across multiple domains and the ability to synthesize information from various imaging modalities. Current deep learning models, while effective at specific tasks such as diagnosing skin cancer from dermoscopic images, fall short in addressing the complex, multimodal demands of clinical practice. Here, we introduce PanDerm, a multimodal dermatology foundation model pretrained through self-supervised learning on a dataset of over 2 million real-world images of skin diseases, sourced from 11 clinical institutions across 4 imaging modalities. We evaluated PanDerm on 28 diverse datasets covering a range of clinical tasks, including skin cancer screening, phenotype assessment and risk stratification, diagnosis of neoplastic and inflammatory skin diseases, skin lesion segmentation, change monitoring, and metastasis prediction and prognosis. PanDerm achieved state-of-the-art performance across all evaluated tasks, often outperforming existing models even when using only 5-10% of labeled data. PanDerm's clinical utility was demonstrated through reader studies in real-world clinical settings across multiple imaging modalities. It outperformed clinicians by 10.2% in early-stage melanoma detection accuracy and enhanced clinicians' multiclass skin cancer diagnostic accuracy by 11% in a collaborative human-AI setting. Additionally, PanDerm demonstrated robust performance across diverse demographic factors, including different body locations, age groups, genders, and skin tones. The strong results in benchmark evaluations and real-world clinical scenarios suggest that PanDerm could enhance the management of skin diseases and serve as a model for developing multimodal foundation models in other medical specialties, potentially accelerating the integration of AI support in healthcare.

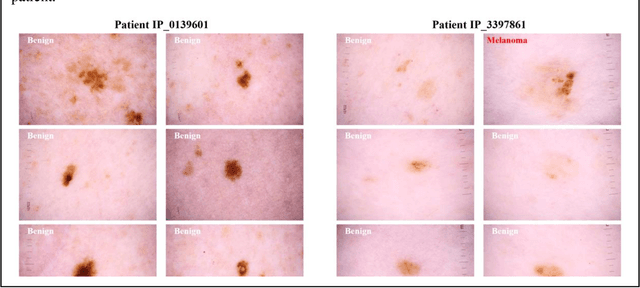

Ugly Ducklings or Swans: A Tiered Quadruplet Network with Patient-Specific Mining for Improved Skin Lesion Classification

Sep 18, 2023

Abstract: An ugly duckling is a skin lesion that is obviously different from the other lesions of the same individual. The ugly duckling sign is a criterion used to aid in the diagnosis of cutaneous melanoma by differentiating between highly suspicious and benign lesions. However, the appearance of pigmented lesions can change drastically from one patient to another, which makes ugly ducklings difficult to separate visually. Hence, we propose DMT-Quadruplet, a deep metric learning network that learns lesion features at two tiers: patient-level and lesion-level. We introduce a patient-specific quadruplet mining approach together with a tiered quadruplet network, to drive the network to learn more contextual information both globally and locally between the two tiers. We further incorporate a dynamic margin within the patient-specific mining to allow more useful quadruplets to be mined within individuals. Comprehensive experiments show that our proposed method outperforms traditional classifiers, achieving 54% higher sensitivity than a baseline ResNet18 CNN and 37% higher than a naive triplet network in classifying ugly duckling lesions. Visualisation of the data manifold in the metric space further illustrates that DMT-Quadruplet successfully classifies ugly duckling lesions in both patient-specific and patient-agnostic manners.
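
The quadruplet idea can be illustrated with a minimal sketch of the generic hinge-form quadruplet loss from the metric-learning literature. This is not the DMT-Quadruplet implementation. The two-tier network, the patient-specific mining and the dynamic margin are not reproduced. The fixed margins `margin1` and `margin2` and all function names are illustrative assumptions. The loss pulls an anchor toward a positive, pushes it away from a first negative, and also requires the anchor-positive distance to be smaller than the distance between two negatives.

```python
import math
from typing import Sequence


def euclidean(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two embedding vectors of equal length."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def quadruplet_loss(
    anchor: Sequence[float],
    positive: Sequence[float],
    negative1: Sequence[float],
    negative2: Sequence[float],
    margin1: float = 1.0,  # assumed fixed margin (the paper uses a dynamic margin)
    margin2: float = 0.5,  # assumed fixed, smaller margin for the negative-negative term
) -> float:
    """Generic hinge-form quadruplet loss for a single quadruplet (illustrative only)."""
    d_ap = euclidean(anchor, positive)      # anchor-positive distance
    d_an = euclidean(anchor, negative1)     # anchor-negative distance
    d_nn = euclidean(negative1, negative2)  # distance between two different negatives
    # Term 1: the positive must be closer to the anchor than the first negative, by margin1.
    term1 = max(0.0, d_ap - d_an + margin1)
    # Term 2: the anchor-positive distance must also be smaller than the distance
    # between the two negatives, by margin2.
    term2 = max(0.0, d_ap - d_nn + margin2)
    return term1 + term2
```

For a well-separated quadruplet such as `quadruplet_loss([0.0, 0.0], [0.0, 0.1], [3.0, 0.0], [0.0, -3.0])`, both hinge terms are zero and the loss is `0.0`. A quadruplet whose positive lies farther away than its negatives produces a positive loss.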

Application of Machine Learning in Melanoma Detection and the Identification of 'Ugly Duckling' and Suspicious Naevi: A Review

Sep 05, 2023

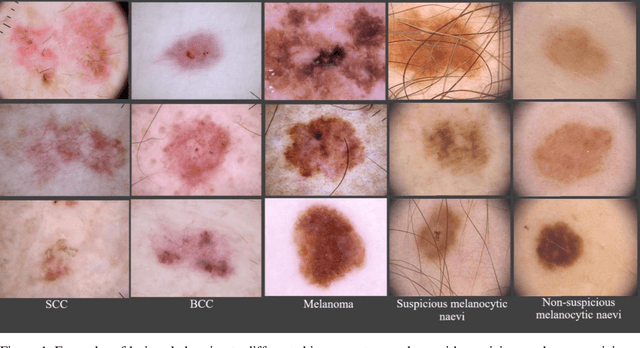

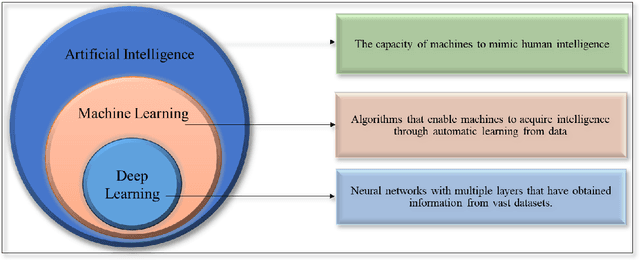

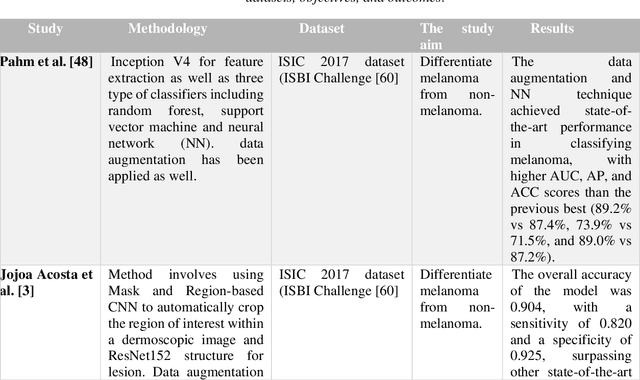

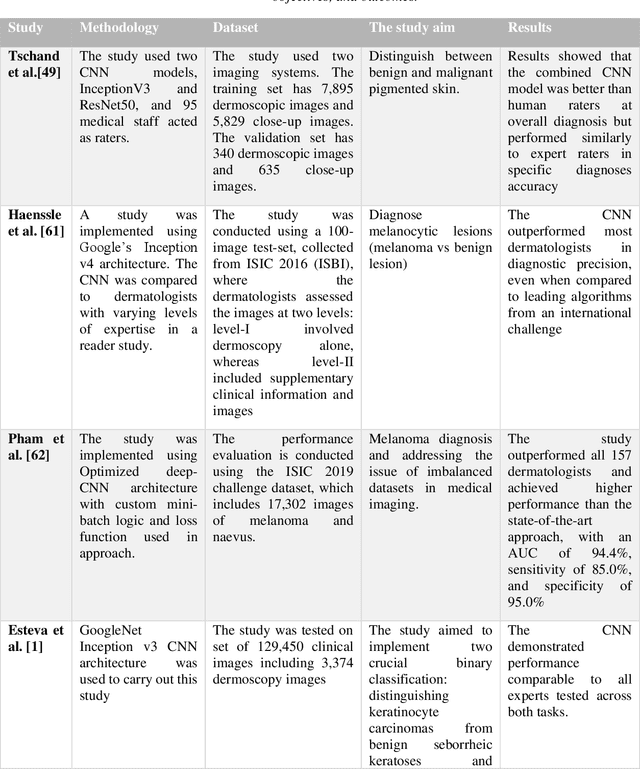

Abstract: Skin lesions known as naevi exhibit diverse characteristics such as size, shape, and colouration. The concept of an "Ugly Duckling Naevus" comes into play when monitoring for melanoma, referring to a lesion with distinctive features that set it apart from other lesions in the vicinity. As lesions within the same individual typically share similarities and follow a predictable pattern, an ugly duckling naevus stands out as unusual and may indicate the presence of a cancerous melanoma. Computer-aided diagnosis (CAD) has become a significant area of research and development, as it combines machine learning techniques with a variety of patient analysis methods. Its aim is to increase accuracy and simplify decision-making while responding to the shortage of specialized professionals. These automated systems are especially important in skin cancer diagnosis, where specialist availability is limited. As a result, their use could lead to life-saving benefits and cost reductions within healthcare. Given the drastic difference in survival between early-stage and late-stage melanoma, early detection is vital for effective treatment and patient outcomes. Machine learning (ML) and deep learning (DL) techniques have gained popularity in skin cancer classification, effectively addressing its challenges and providing results equivalent to those of specialists. This article extensively covers modern ML and DL algorithms for detecting melanoma and suspicious naevi. It begins with general information on skin cancer and different types of naevi, then introduces AI, ML, DL, and CAD. The article then discusses successful applications of ML techniques such as convolutional neural networks (CNNs) to melanoma detection, compared with dermatologists' performance. Lastly, it examines ML methods for ugly duckling (UD) naevus detection and the identification of suspicious naevi.
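
The ugly duckling reasoning above treats a lesion as suspicious when it departs from the pattern shared by the same patient's other lesions. The following outlier score is a hypothetical illustration of that idea and is not a method taken from the review. It assumes each lesion has already been mapped to a numeric feature vector. Each lesion is scored by its Euclidean distance to the mean of the same patient's other lesions. The function names are illustrative.

```python
import math
from typing import List, Sequence


def _distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two feature vectors of equal length."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def ugly_duckling_scores(lesions: Sequence[Sequence[float]]) -> List[float]:
    """Score each lesion of ONE patient by its distance to the mean of that
    patient's other lesions (hypothetical illustration of the UD sign)."""
    if len(lesions) < 2:
        raise ValueError("need at least two lesions from the same patient")
    dim = len(lesions[0])
    # Per-dimension sums over all lesions of this patient.
    totals = [sum(lesion[i] for lesion in lesions) for i in range(dim)]
    n_others = len(lesions) - 1
    scores = []
    for lesion in lesions:
        # Leave-one-out mean: remove this lesion from the sums so that an
        # outlier does not pull its own reference point toward itself.
        others_mean = [(totals[i] - lesion[i]) / n_others for i in range(dim)]
        scores.append(_distance(lesion, others_mean))
    return scores


def most_atypical(lesions: Sequence[Sequence[float]]) -> int:
    """Index of the lesion that stands out most from the patient's other lesions."""
    scores = ugly_duckling_scores(lesions)
    return max(range(len(scores)), key=scores.__getitem__)
```

For a patient with feature vectors `[[1.0, 1.0], [1.1, 0.9], [0.9, 1.0], [5.0, 5.0]]`, `most_atypical` returns `3`, the lesion that differs from the other three. The leave-one-out mean is a design choice. Including the candidate lesion in its own reference mean would shrink its score.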

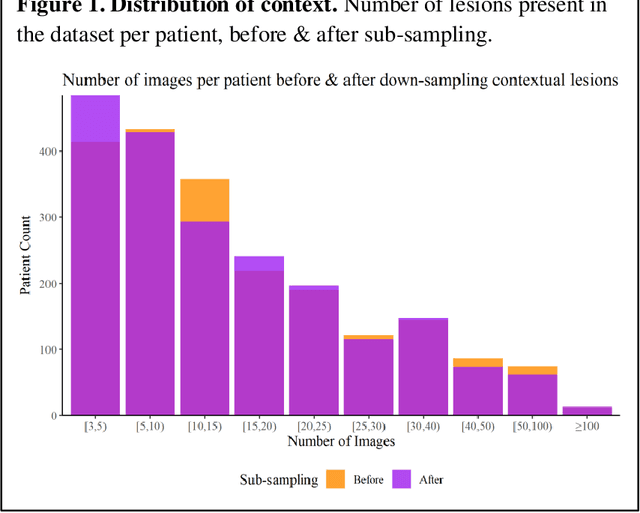

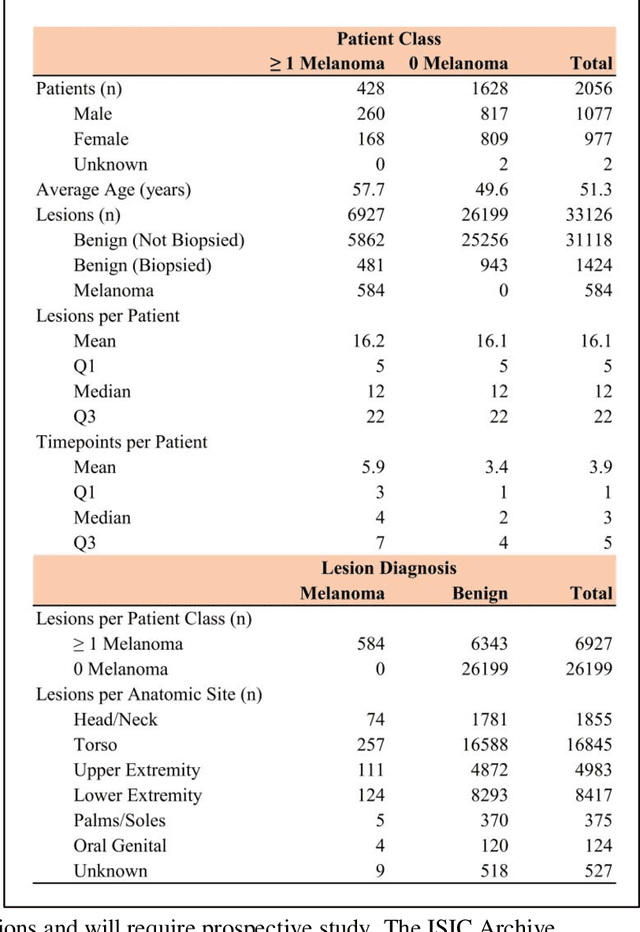

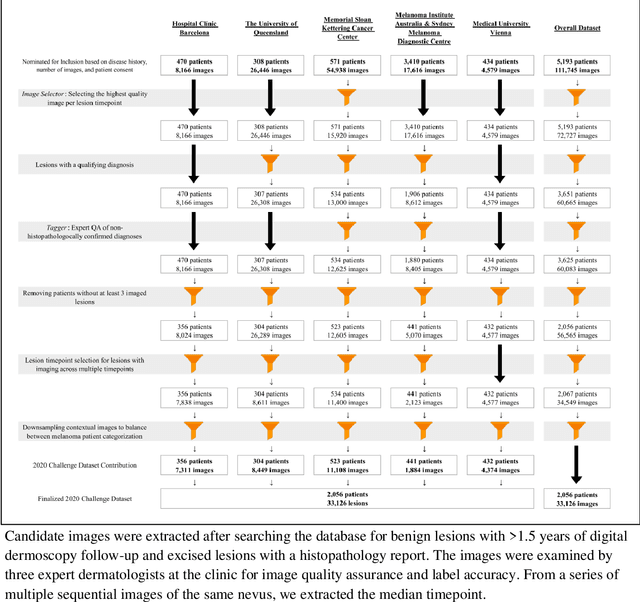

A Patient-Centric Dataset of Images and Metadata for Identifying Melanomas Using Clinical Context

Aug 07, 2020

Abstract: Prior skin image datasets have not addressed patient-level information obtained from multiple skin lesions from the same patient. Though artificial intelligence classification algorithms have achieved expert-level performance in controlled studies examining single images, in practice dermatologists base their judgment holistically on multiple lesions from the same patient. The 2020 SIIM-ISIC Melanoma Classification challenge dataset described herein was constructed to address this discrepancy between prior challenges and clinical practice. For each image in the dataset, it provides an identifier that allows lesions from the same patient to be mapped to one another. Clinicians frequently use this patient-level contextual information to diagnose melanoma, and it is especially useful in ruling out false positives in patients with many atypical nevi. The dataset represents 2,056 patients from three continents, with an average of 16 lesions per patient. It comprises 33,126 dermoscopic images, including 584 histopathologically confirmed melanomas alongside benign melanoma mimickers.
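
The per-image patient identifier is what lets lesions from the same patient be mapped to one another. Here is a minimal sketch of that grouping step. The record field names `image_name` and `patient_id`, and the toy IDs below, are assumptions for illustration. The actual column names should be checked against the released metadata.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Mapping


def group_by_patient(records: Iterable[Mapping[str, str]]) -> Dict[str, List[str]]:
    """Map each (assumed) patient_id to the list of its image names, in input order."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for rec in records:
        groups[rec["patient_id"]].append(rec["image_name"])
    return dict(groups)


def mean_images_per_patient(groups: Mapping[str, List[str]]) -> float:
    """Average number of images per patient across all groups."""
    return sum(len(images) for images in groups.values()) / len(groups)


# Hypothetical toy records, not taken from the dataset.
records = [
    {"image_name": "img_a", "patient_id": "p1"},
    {"image_name": "img_b", "patient_id": "p1"},
    {"image_name": "img_c", "patient_id": "p2"},
]
groups = group_by_patient(records)
```

Here `groups` is `{"p1": ["img_a", "img_b"], "p2": ["img_c"]}` and `mean_images_per_patient(groups)` is `1.5`. The reported totals also check out: 33,126 images / 2,056 patients ≈ 16.1, which agrees with the stated average of 16 lesions per patient.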

Automatic Detection of Blue-White Veil and Related Structures in Dermoscopy Images

Sep 06, 2010

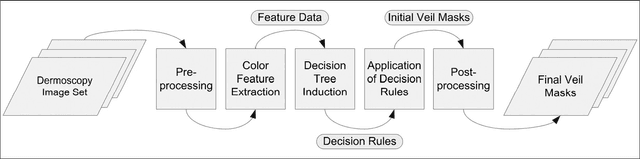

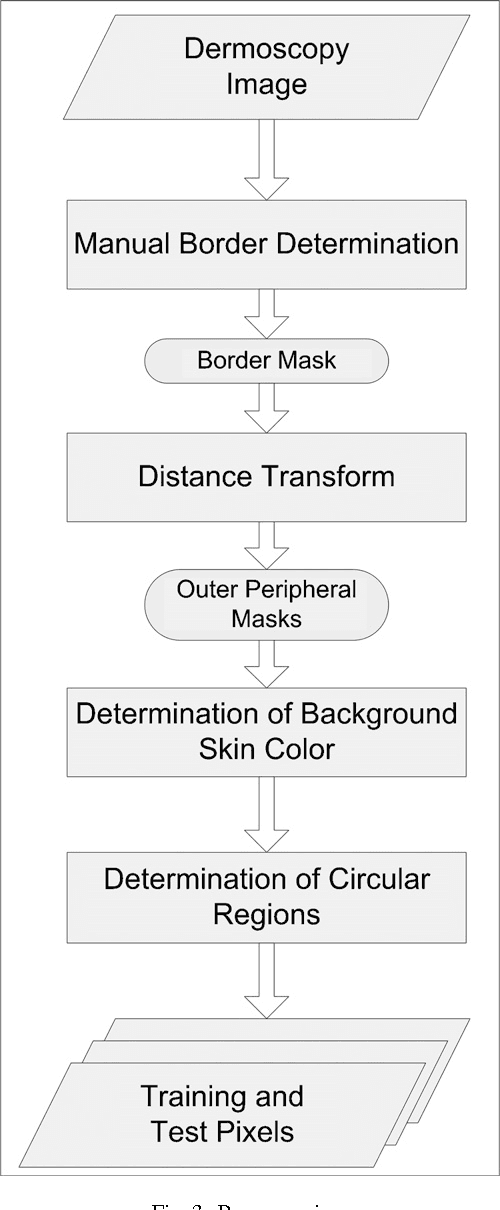

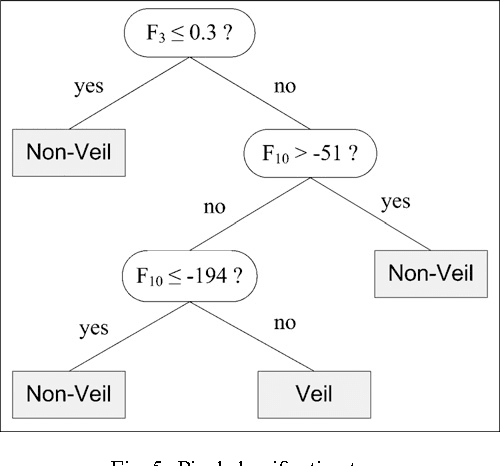

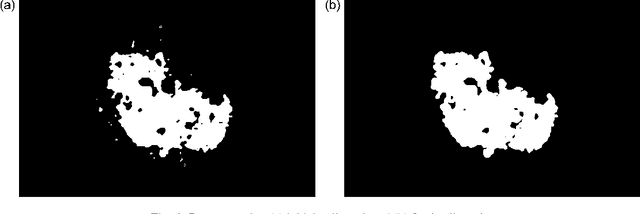

Abstract: Dermoscopy is a non-invasive skin imaging technique that permits visualization of features of pigmented melanocytic neoplasms that are not discernible by examination with the naked eye. One of the most important features for the diagnosis of melanoma in dermoscopy images is the blue-white veil (irregular, structureless areas of confluent blue pigmentation with an overlying white "ground-glass" film). In this article, we present a machine learning approach to the detection of blue-white veil and related structures in dermoscopy images. The method involves contextual pixel classification using a decision tree classifier. The percentage of blue-white areas detected in a lesion, combined with a simple shape descriptor, yielded a sensitivity of 69.35% and a specificity of 89.97% on a set of 545 dermoscopy images. The sensitivity rises to 78.20% for detection of blue veil in those cases where it is a primary feature for melanoma recognition.
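
The sensitivity and specificity figures above follow the standard definitions, sensitivity = TP / (TP + FN) and specificity = TN / (TN + FP). Below is a minimal sketch that computes both from binary labels (1 = positive). The function names and the toy labels in the usage note are hypothetical and unrelated to the 545-image evaluation set.

```python
from typing import Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (tp, fn, tn, fp) for binary labels where 1 marks a positive case."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fn = tn = fp = 0
    for t, p in zip(y_true, y_pred):
        if t == 1 and p == 1:
            tp += 1
        elif t == 1:
            fn += 1  # positive case missed by the detector
        elif p == 0:
            tn += 1
        else:
            fp += 1  # negative case flagged as positive
    return tp, fn, tn, fp


def sensitivity_specificity(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    tp, fn, tn, fp = confusion_counts(y_true, y_pred)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    return tp / (tp + fn), tn / (tn + fp)
```

For example, `sensitivity_specificity([1, 1, 0, 0, 0], [1, 0, 0, 0, 1])` returns `(0.5, 2/3)`. Half of the two positives are detected, and two of the three negatives are correctly rejected.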