Gholamali Aminian

$f$-FUM: Federated Unlearning via min--max and $f$-divergence

Feb 05, 2026Abstract:Federated Learning (FL) has emerged as a powerful paradigm for collaborative machine learning across decentralized data sources, preserving privacy by keeping data local. However, increasing legal and ethical demands, such as the "right to be forgotten", and the need to mitigate data poisoning attacks have underscored the urgent necessity for principled data unlearning in FL. Unlike centralized settings, the distributed nature of FL complicates the removal of individual data contributions. In this paper, we propose a novel federated unlearning framework formulated as a min-max optimization problem, where the objective is to maximize an $f$-divergence between the model trained with all data and the model retrained without specific data points, while minimizing the degradation on retained data. Our framework could act like a plugin and be added to almost any federated setup, unlike SOTA methods like (\cite{10269017} which requires model degradation in server, or \cite{khalil2025notfederatedunlearningweight} which requires to involve model architecture and model weights). This formulation allows for efficient approximation of data removal effects in a federated setting. We provide empirical evaluations to show that our method achieves significant speedups over naive retraining, with minimal impact on utility.

ReDiF: Reinforced Distillation for Few Step Diffusion

Dec 28, 2025Abstract:Distillation addresses the slow sampling problem in diffusion models by creating models with smaller size or fewer steps that approximate the behavior of high-step teachers. In this work, we propose a reinforcement learning based distillation framework for diffusion models. Instead of relying on fixed reconstruction or consistency losses, we treat the distillation process as a policy optimization problem, where the student is trained using a reward signal derived from alignment with the teacher's outputs. This RL driven approach dynamically guides the student to explore multiple denoising paths, allowing it to take longer, optimized steps toward high-probability regions of the data distribution, rather than relying on incremental refinements. Our framework utilizes the inherent ability of diffusion models to handle larger steps and effectively manage the generative process. Experimental results show that our method achieves superior performance with significantly fewer inference steps and computational resources compared to existing distillation techniques. Additionally, the framework is model agnostic, applicable to any type of diffusion models with suitable reward functions, providing a general optimization paradigm for efficient diffusion learning.

Log-Sum-Exponential Estimator for Off-Policy Evaluation and Learning

Jun 07, 2025Abstract:Off-policy learning and evaluation leverage logged bandit feedback datasets, which contain context, action, propensity score, and feedback for each data point. These scenarios face significant challenges due to high variance and poor performance with low-quality propensity scores and heavy-tailed reward distributions. We address these issues by introducing a novel estimator based on the log-sum-exponential (LSE) operator, which outperforms traditional inverse propensity score estimators. Our LSE estimator demonstrates variance reduction and robustness under heavy-tailed conditions. For off-policy evaluation, we derive upper bounds on the estimator's bias and variance. In the off-policy learning scenario, we establish bounds on the regret -- the performance gap between our LSE estimator and the optimal policy -- assuming bounded $(1+\epsilon)$-th moment of weighted reward. Notably, we achieve a convergence rate of $O(n^{-\epsilon/(1+ \epsilon)})$ for the regret bounds, where $\epsilon \in [0,1]$ and $n$ is the size of logged bandit feedback dataset. Theoretical analysis is complemented by comprehensive empirical evaluations in both off-policy learning and evaluation scenarios, confirming the practical advantages of our approach. The code for our estimator is available at the following link: https://github.com/armin-behnamnia/lse-offpolicy-learning.

Semi-pessimistic Reinforcement Learning

May 25, 2025Abstract:Offline reinforcement learning (RL) aims to learn an optimal policy from pre-collected data. However, it faces challenges of distributional shift, where the learned policy may encounter unseen scenarios not covered in the offline data. Additionally, numerous applications suffer from a scarcity of labeled reward data. Relying on labeled data alone often leads to a narrow state-action distribution, further amplifying the distributional shift, and resulting in suboptimal policy learning. To address these issues, we first recognize that the volume of unlabeled data is typically substantially larger than that of labeled data. We then propose a semi-pessimistic RL method to effectively leverage abundant unlabeled data. Our approach offers several advantages. It considerably simplifies the learning process, as it seeks a lower bound of the reward function, rather than that of the Q-function or state transition function. It is highly flexible, and can be integrated with a range of model-free and model-based RL algorithms. It enjoys the guaranteed improvement when utilizing vast unlabeled data, but requires much less restrictive conditions. We compare our method with a number of alternative solutions, both analytically and numerically, and demonstrate its clear competitiveness. We further illustrate with an application to adaptive deep brain stimulation for Parkinson's disease.

Generalization Error of $f$-Divergence Stabilized Algorithms via Duality

Feb 20, 2025Abstract:The solution to empirical risk minimization with $f$-divergence regularization (ERM-$f$DR) is extended to constrained optimization problems, establishing conditions for equivalence between the solution and constraints. A dual formulation of ERM-$f$DR is introduced, providing a computationally efficient method to derive the normalization function of the ERM-$f$DR solution. This dual approach leverages the Legendre-Fenchel transform and the implicit function theorem, enabling explicit characterizations of the generalization error for general algorithms under mild conditions, and another for ERM-$f$DR solutions.

Private Synthetic Graph Generation and Fused Gromov-Wasserstein Distance

Feb 17, 2025

Abstract:Networks are popular for representing complex data. In particular, differentially private synthetic networks are much in demand for method and algorithm development. The network generator should be easy to implement and should come with theoretical guarantees. Here we start with complex data as input and jointly provide a network representation as well as a synthetic network generator. Using a random connection model, we devise an effective algorithmic approach for generating attributed synthetic graphs which is $\epsilon$-differentially private at the vertex level, while preserving utility under an appropriate notion of distance which we develop. We provide theoretical guarantees for the accuracy of the private synthetic graphs using the fused Gromov-Wasserstein distance, which extends the Wasserstein metric to structured data. Our method draws inspiration from the PSMM method of \citet{he2023}.

Theoretical Analysis of KL-regularized RLHF with Multiple Reference Models

Feb 03, 2025

Abstract:Recent methods for aligning large language models (LLMs) with human feedback predominantly rely on a single reference model, which limits diversity, model overfitting, and underutilizes the wide range of available pre-trained models. Incorporating multiple reference models has the potential to address these limitations by broadening perspectives, reducing bias, and leveraging the strengths of diverse open-source LLMs. However, integrating multiple reference models into reinforcement learning with human feedback (RLHF) frameworks poses significant theoretical challenges, particularly in reverse KL-regularization, where achieving exact solutions has remained an open problem. This paper presents the first \emph{exact solution} to the multiple reference model problem in reverse KL-regularized RLHF. We introduce a comprehensive theoretical framework that includes rigorous statistical analysis and provides sample complexity guarantees. Additionally, we extend our analysis to forward KL-regularized RLHF, offering new insights into sample complexity requirements in multiple reference scenarios. Our contributions lay the foundation for more advanced and adaptable LLM alignment techniques, enabling the effective use of multiple reference models. This work paves the way for developing alignment frameworks that are both theoretically sound and better suited to the challenges of modern AI ecosystems.

UPL: Uncertainty-aware Pseudo-labeling for Imbalance Transductive Node Classification

Feb 02, 2025

Abstract:Graph-structured datasets often suffer from class imbalance, which complicates node classification tasks. In this work, we address this issue by first providing an upper bound on population risk for imbalanced transductive node classification. We then propose a simple and novel algorithm, Uncertainty-aware Pseudo-labeling (UPL). Our approach leverages pseudo-labels assigned to unlabeled nodes to mitigate the adverse effects of imbalance on classification accuracy. Furthermore, the UPL algorithm enhances the accuracy of pseudo-labeling by reducing training noise of pseudo-labels through a novel uncertainty-aware approach. We comprehensively evaluate the UPL algorithm across various benchmark datasets, demonstrating its superior performance compared to existing state-of-the-art methods.

Understanding Transfer Learning via Mean-field Analysis

Oct 23, 2024

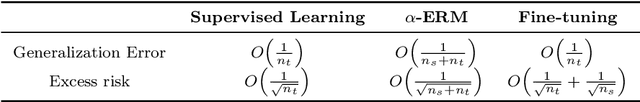

Abstract:We propose a novel framework for exploring generalization errors of transfer learning through the lens of differential calculus on the space of probability measures. In particular, we consider two main transfer learning scenarios, $\alpha$-ERM and fine-tuning with the KL-regularized empirical risk minimization and establish generic conditions under which the generalization error and the population risk convergence rates for these scenarios are studied. Based on our theoretical results, we show the benefits of transfer learning with a one-hidden-layer neural network in the mean-field regime under some suitable integrability and regularity assumptions on the loss and activation functions.

Generalization Error of the Tilted Empirical Risk

Sep 28, 2024Abstract:The generalization error (risk) of a supervised statistical learning algorithm quantifies its prediction ability on previously unseen data. Inspired by exponential tilting, Li et al. (2021) proposed the tilted empirical risk as a non-linear risk metric for machine learning applications such as classification and regression problems. In this work, we examine the generalization error of the tilted empirical risk. In particular, we provide uniform and information-theoretic bounds on the tilted generalization error, defined as the difference between the population risk and the tilted empirical risk, with a convergence rate of $O(1/\sqrt{n})$ where $n$ is the number of training samples. Furthermore, we study the solution to the KL-regularized expected tilted empirical risk minimization problem and derive an upper bound on the expected tilted generalization error with a convergence rate of $O(1/n)$.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge