Gabriele Facciolo

CB, IUF

Diachronic Stereo Matching for Multi-Date Satellite Imagery

Jan 30, 2026

Abstract:Recent advances in image-based satellite 3D reconstruction have progressed along two complementary directions. On one hand, multi-date approaches using NeRF or Gaussian-splatting jointly model appearance and geometry across many acquisitions, achieving accurate reconstructions on opportunistic imagery with numerous observations. On the other hand, classical stereoscopic reconstruction pipelines deliver robust and scalable results for simultaneous or quasi-simultaneous image pairs. However, when the two images are captured months apart, strong seasonal, illumination, and shadow changes violate standard stereoscopic assumptions, causing existing pipelines to fail. This work presents the first Diachronic Stereo Matching method for satellite imagery, enabling reliable 3D reconstruction from temporally distant pairs. Two advances make this possible: (1) fine-tuning a state-of-the-art deep stereo network that leverages monocular depth priors, and (2) exposing it to a dataset specifically curated to include a diverse set of diachronic image pairs. In particular, we start from a pretrained MonSter model, trained initially on a mix of synthetic and real datasets such as SceneFlow and KITTI, and fine-tune it on a set of stereo pairs derived from the DFC2019 remote sensing challenge. This dataset contains both synchronic and diachronic pairs under diverse seasonal and illumination conditions. Experiments on multi-date WorldView-3 imagery demonstrate that our approach consistently surpasses classical pipelines and unadapted deep stereo models on both synchronic and diachronic settings. Fine-tuning on temporally diverse images, together with monocular priors, proves essential for enabling 3D reconstruction from previously incompatible acquisition dates.

Figure 1. Output geometry for a winter-autumn image pair from Omaha (OMA 331 test scene). Panels: left image (winter), right image (autumn), and DSM geometry for ours (1.23 m), zero-shot (3.99 m), and LiDAR GT. Our method recovers accurate geometry despite the diachronic nature of the pair, exhibiting strong appearance changes, which cause existing zero-shot methods to fail. Missing values due to perspective shown in black. Mean altitude error in parentheses; lower is better.

Remote Sensing Change Detection via Weak Temporal Supervision

Jan 05, 2026

Abstract:Semantic change detection in remote sensing aims to identify land cover changes between bi-temporal image pairs. Progress in this area has been limited by the scarcity of annotated datasets, as pixel-level annotation is costly and time-consuming. To address this, recent methods leverage synthetic data or generate artificial change pairs, but out-of-domain generalization remains limited. In this work, we introduce a weak temporal supervision strategy that leverages additional temporal observations of existing single-temporal datasets, without requiring any new annotations. Specifically, we extend single-date remote sensing datasets with new observations acquired at different times and train a change detection model by assuming that real bi-temporal pairs mostly contain no change, while pairing images from different locations to generate change examples. To handle the inherent noise in these weak labels, we employ an object-aware change map generation and an iterative refinement process. We validate our approach on extended versions of the FLAIR and IAILD aerial datasets, achieving strong zero-shot and low-data regime performance across different benchmarks. Lastly, we showcase results over large areas in France, highlighting the scalability potential of our method.

EOGS: Gaussian Splatting for Earth Observation

Dec 17, 2024

Abstract:Recently, Gaussian splatting has emerged as a strong alternative to NeRF, demonstrating impressive 3D modeling capabilities while requiring only a fraction of the training and rendering time. In this paper, we show how the standard Gaussian splatting framework can be adapted for remote sensing, retaining its high efficiency. This enables us to achieve state-of-the-art performance in just a few minutes, compared to the day-long optimization required by the best-performing NeRF-based Earth observation methods. The proposed framework incorporates remote-sensing improvements from EO-NeRF, such as radiometric correction and shadow modeling, while introducing novel components, including sparsity, view consistency, and opacity regularizations.

Structure Tensor Representation for Robust Oriented Object Detection

Nov 15, 2024

Abstract:Oriented object detection predicts orientation in addition to object location and bounding box. Precisely predicting orientation remains challenging due to angular periodicity, which introduces boundary discontinuity issues and symmetry ambiguities. Inspired by classical works on edge and corner detection, this paper proposes to represent orientation in oriented bounding boxes as a structure tensor. This representation combines the strengths of Gaussian-based methods and angle-coder solutions, providing a simple yet efficient approach that is robust to angular periodicity issues without additional hyperparameters. Extensive evaluations across five datasets demonstrate that the proposed structure tensor representation outperforms previous methods in both fully-supervised and weakly supervised tasks, achieving high precision in angular prediction with minimal computational overhead. Thus, this work establishes structure tensors as a robust and modular alternative for encoding orientation in oriented object detection. We make our code publicly available, allowing for seamless integration into existing object detectors.
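To make the periodicity argument concrete, here is a minimal sketch of how an orientation can be stored in a symmetric 2x2 tensor and read back. It is an illustration under stated assumptions, not the paper's implementation: the function names are hypothetical, and using the box side lengths as the two eigenvalues is an assumed convention.

```python
import math

def angle_to_structure_tensor(theta, long_side, short_side):
    """Encode an orientation as the entries (txx, txy, tyy) of a symmetric 2x2 tensor.

    Assumption for illustration: the eigenvalues are the box side lengths,
    and the dominant eigenvector points along `theta` (radians).
    """
    c, s = math.cos(theta), math.sin(theta)
    txx = long_side * c * c + short_side * s * s
    tyy = long_side * s * s + short_side * c * c
    txy = (long_side - short_side) * c * s
    return txx, txy, tyy

def structure_tensor_to_angle(txx, txy, tyy):
    """Recover the dominant orientation, in (-pi/2, pi/2], from the tensor entries."""
    # txx - tyy is proportional to cos(2*theta) and 2*txy to sin(2*theta).
    return 0.5 * math.atan2(2.0 * txy, txx - tyy)

# theta and theta + pi describe the same box and give the same tensor,
# so the representation has no jump at the angular boundary.
tensor_a = angle_to_structure_tensor(0.3, 4.0, 1.0)
tensor_b = angle_to_structure_tensor(0.3 + math.pi, 4.0, 1.0)
recovered = structure_tensor_to_angle(*tensor_a)
```

When the two side lengths are equal, the off-diagonal term and the diagonal difference both vanish, so the tensor carries no preferred direction. In this sketch that is how a square box, whose orientation is ambiguous, ends up with a well-defined encoding.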

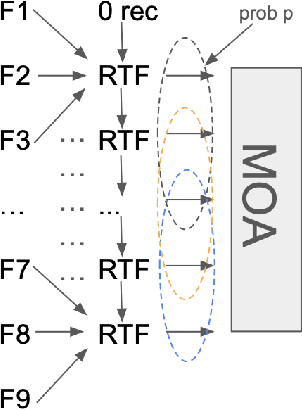

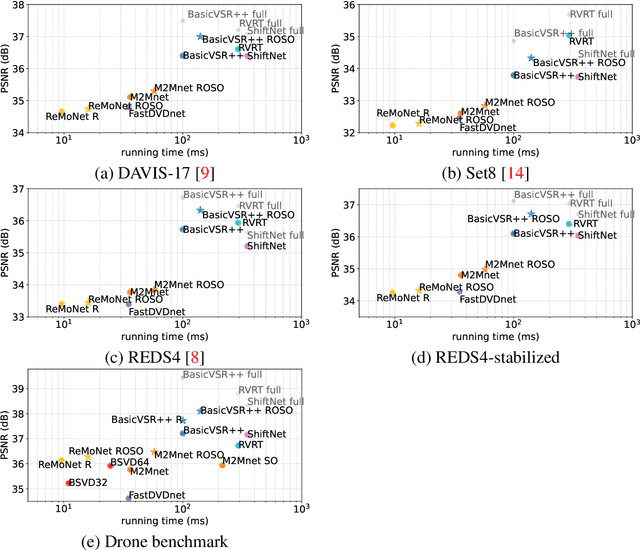

Adapting MIMO video restoration networks to low latency constraints

Aug 22, 2024

Abstract:MIMO (multiple input, multiple output) approaches are a recent trend in neural network architectures for video restoration problems, where each network evaluation produces multiple output frames. The video is split into non-overlapping stacks of frames that are processed independently, resulting in a very appealing trade-off between output quality and computational cost. In this work we focus on the low-latency setting by limiting the number of available future frames. We find that MIMO architectures suffer from problems that have received little attention so far, namely (1) the performance drops significantly due to the reduced temporal receptive field, particularly for frames at the borders of the stack, (2) there are strong temporal discontinuities at stack transitions which induce a step-wise motion artifact. We propose two simple solutions to alleviate these problems: recurrence across MIMO stacks to boost the output quality by implicitly increasing the temporal receptive field, and overlapping of the output stacks to smooth the temporal discontinuity at stack transitions. These modifications can be applied to any MIMO architecture. We test them on three state-of-the-art video denoising networks with different computational cost. The proposed contributions result in a new state-of-the-art for low-latency networks, both in terms of reconstruction error and temporal consistency. As an additional contribution, we introduce a new benchmark consisting of drone footage that highlights temporal consistency issues that are not apparent in the standard benchmarks.
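The stack splitting and the overlapping of output stacks described above can be sketched as follows. This is a toy illustration, not the authors' code: the function names are hypothetical, each frame is stood in for by a single number, and uniform averaging in the overlap is an assumption, since the abstract does not specify the blending rule.

```python
def split_into_stacks(num_frames, stack_size, overlap=0):
    """Return (start, end) frame ranges; consecutive stacks share `overlap` frames.

    overlap=0 gives the standard non-overlapping MIMO split.
    """
    if stack_size <= 0 or not 0 <= overlap < stack_size:
        raise ValueError("need stack_size > 0 and 0 <= overlap < stack_size")
    step = stack_size - overlap
    stacks = []
    start = 0
    while start < num_frames:
        end = min(start + stack_size, num_frames)
        stacks.append((start, end))
        if end == num_frames:
            break
        start += step
    return stacks

def blend_overlapping_outputs(num_frames, stacks, outputs):
    """Average the per-frame outputs wherever stacks overlap.

    outputs[i][k] is the network output for frame stacks[i][0] + k.
    Uniform averaging is an assumed blending rule for this sketch.
    """
    total = [0.0] * num_frames
    count = [0] * num_frames
    for (start, _end), stack_output in zip(stacks, outputs):
        for k, value in enumerate(stack_output):
            total[start + k] += value
            count[start + k] += 1
    return [t / c for t, c in zip(total, count)]

# Ten frames, stacks of four, one shared frame at each transition.
stacks = split_into_stacks(10, 4, overlap=1)
outputs = [[1.0] * 4, [3.0] * 4, [5.0] * 4]  # placeholder network outputs
blended = blend_overlapping_outputs(10, stacks, outputs)
```

With `overlap=0` every frame belongs to exactly one stack, which is the setting in which the step-wise artifact at stack transitions appears. With a positive overlap, the frames at each transition receive contributions from both neighbouring stacks.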

How to Best Combine Demosaicing and Denoising?

Aug 13, 2024

Abstract:Image demosaicing and denoising play a critical role in the raw imaging pipeline. These processes have often been treated as independent, without considering their interactions. Indeed, most classic denoising methods handle noisy RGB images, not raw images. Conversely, most demosaicing methods address the demosaicing of noise-free images. The real problem is to jointly denoise and demosaic noisy raw images, but the question of how to proceed has not yet been clarified. In this paper, we carry out extensive experiments and a mathematical analysis to tackle this problem with low-complexity algorithms. Indeed, the two problems have only been addressed jointly by end-to-end heavyweight convolutional neural networks (CNNs), which are currently incompatible with low-power portable imaging devices and remain by nature domain (or device) dependent. Our study leads us to conclude that, with moderate noise, demosaicing should be applied first, followed by denoising. This requires a simple adaptation of classic denoising algorithms to demosaiced noise, which we justify and specify. Although our main conclusion is "demosaic first, then denoise", we also discover that for high noise, there is a moderate PSNR gain from a more complex strategy: partial CFA denoising followed by demosaicing, and then a second denoising on the RGB image. These surprising results are obtained by a black-box optimization of the pipeline, which could be applied to any other pipeline. We validate our results on simulated and real noisy CFA images obtained from several benchmarks.

* This paper was accepted by Inverse Problems and Imaging in October 2023

Leveraging edge detection and neural networks for better UAV localization

Apr 09, 2024

Abstract:We propose a novel method for geolocalizing Unmanned Aerial Vehicles (UAVs) in environments lacking Global Navigation Satellite Systems (GNSS). Current state-of-the-art techniques employ an offline-trained encoder to generate a vector representation (embedding) of the UAV's current view, which is then compared with pre-computed embeddings of geo-referenced images to determine the UAV's position. Here, we demonstrate that the performance of these methods can be significantly enhanced by preprocessing the images to extract their edges, which exhibit robustness to seasonal and illumination variations. Furthermore, we establish that utilizing edges enhances resilience to orientation and altitude inaccuracies. Additionally, we introduce a confidence criterion for localization. Our findings are substantiated through synthetic experiments.

Exploring Robust Features for Few-Shot Object Detection in Satellite Imagery

Mar 08, 2024

Abstract:The goal of this paper is to perform object detection in satellite imagery with only a few examples, thus enabling users to specify any object class with minimal annotation. To this end, we explore recent methods and ideas from open-vocabulary detection for the remote sensing domain. We develop a few-shot object detector based on a traditional two-stage architecture, where the classification block is replaced by a prototype-based classifier. A large-scale pre-trained model is used to build class-reference embeddings or prototypes, which are compared to region proposal contents for label prediction. In addition, we propose to fine-tune prototypes on available training images to boost performance and learn differences between similar classes, such as aircraft types. We perform extensive evaluations on two remote sensing datasets containing challenging and rare objects. Moreover, we study the performance of both visual and image-text features, namely DINOv2 and CLIP, including two CLIP models specifically tailored for remote sensing applications. Results indicate that visual features are largely superior to vision-language models, as the latter lack the necessary domain-specific vocabulary. Lastly, the developed detector outperforms fully supervised and few-shot methods evaluated on the SIMD and DIOR datasets, despite minimal training parameters.
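A prototype-based classifier of the kind described above can be sketched in a few lines. The sketch makes several assumptions that the abstract does not confirm: prototypes are built as the mean of the few-shot example features, the comparison is cosine similarity, and the two-dimensional feature vectors are toy stand-ins for embeddings from a pre-trained model. All function names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def build_prototype(example_features):
    """Assumed construction: average the features of the few annotated examples."""
    n = len(example_features)
    dim = len(example_features[0])
    return [sum(f[i] for f in example_features) / n for i in range(dim)]

def classify_region(region_feature, prototypes):
    """Label a region proposal with the class of its most similar prototype."""
    scores = {
        label: cosine_similarity(region_feature, proto)
        for label, proto in prototypes.items()
    }
    best_label = max(scores, key=scores.get)
    return best_label, scores

# Toy 2-D features standing in for real embeddings.
prototypes = {
    "aircraft": build_prototype([[1.0, 0.0], [0.8, 0.2]]),
    "ship": build_prototype([[0.0, 1.0]]),
}
label, scores = classify_region([0.9, 0.1], prototypes)
```

Because adding a class only requires a new prototype, a design like this lets users specify new object classes from a handful of examples without retraining the detector backbone.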

Fast, nonlocal and neural: a lightweight high quality solution to image denoising

Mar 06, 2024

Abstract:With the widespread application of convolutional neural networks (CNNs), the traditional model-based denoising algorithms are now outperformed. However, CNNs face two problems. First, they are computationally demanding, which makes their deployment especially difficult for mobile terminals. Second, experimental evidence shows that CNNs often over-smooth regular textures present in images, in contrast to traditional non-local models. In this letter, we propose a solution to both issues by combining a nonlocal algorithm with a lightweight residual CNN. This solution gives full latitude to the advantages of both models. We apply this framework to two GPU implementations of classic nonlocal algorithms (NLM and BM3D) and observe a substantial gain in both cases, performing better than the state-of-the-art with low computational requirements. Our solution is between 10 and 20 times faster than CNNs with equivalent performance and attains higher PSNR. In addition, the final method shows a notable gain on images containing complex textures like the ones of the MIT Moire dataset.

* 5 pages. This paper was accepted by IEEE Signal Processing Letters on July 1, 2021

Radar Fields: An Extension of Radiance Fields to SAR

Dec 20, 2023

Abstract:Radiance fields have been a major breakthrough in the field of inverse rendering, novel view synthesis and 3D modeling of complex scenes from multi-view image collections. Since their introduction, it has been shown that they can be extended to other modalities such as LiDAR, radio frequencies, X-ray or ultrasound. In this paper, we show that, despite the important difference between optical and synthetic aperture radar (SAR) image formation models, it is possible to extend radiance fields to radar images, thus presenting the first "radar fields". This allows us to learn surface models using only collections of radar images, similar to how regular radiance fields are learned and with the same computational complexity on average. Thanks to similarities in how both fields are defined, this work also shows a potential for hybrid methods combining both optical and SAR images.
