Dawa Derksen

Densification and forecasting of Sentinel-2 time series from multimodal SAR and Optical satellite data using deep generative models

May 05, 2026

Abstract:Optical satellite image time series are extensively used in many Earth observation applications, including agriculture, climate monitoring, and land surface analysis. However, clouds and swath edges result in irregular sampling along the temporal dimension, limiting continuous monitoring. To address this issue, a growing body of work has focused on temporal densification and reconstruction of satellite image time series, with the objective of filling missing or cloud-contaminated observations within the temporal extent of the available data. While these approaches improve temporal continuity, they are inherently restricted to reconstructing gaps within the observed time period and do not address the prediction of future observations. This work proposes a probabilistic deep learning framework for the densification and forecasting of Sentinel-2 time series by generating optical images at arbitrary past or future dates. The approach leverages multimodal satellite data by jointly exploiting Sentinel-2 optical and Sentinel-1 SAR observations. Unlike most existing works, we focus on the uncertainty of the generated images. Experimental results demonstrate effective densification and forecasting on sparse and temporally misaligned time series.

Tile and Slide : A New Framework for Scaling NeRF from Local to Global 3D Earth Observation

Jul 02, 2025

Abstract:Neural Radiance Fields (NeRF) have recently emerged as a paradigm for 3D reconstruction from multiview satellite imagery. However, state-of-the-art NeRF methods are typically constrained to small scenes due to the memory footprint during training, which we study in this paper. Previous work on large-scale NeRFs mitigates this by dividing the scene into multiple NeRFs. This paper introduces Snake-NeRF, a framework that scales to large scenes. Our out-of-core method eliminates the need to load all images and networks simultaneously, and operates on a single device. We achieve this by dividing the region of interest into NeRFs that tile the 3D space without overlap. Importantly, we crop the images with overlap to ensure each NeRF is trained with all the necessary pixels. We introduce a novel $2\times 2$ 3D tile progression strategy and segmented sampler, which together prevent 3D reconstruction errors along the tile edges. Our experiments show that large satellite images can effectively be processed with linear time complexity, on a single GPU, and without compromise in quality.
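
The idea of non-overlapping tiles paired with overlapping image crops can be illustrated with a minimal 2D sketch. This is not the Snake-NeRF implementation: the function name `make_tiles`, the fixed pixel `margin`, and the flat rectangular region are all assumptions made for illustration, and the abstract's $2\times 2$ progression strategy and segmented sampler are not modeled here.

```python
def make_tiles(width, height, tile, margin):
    """Split a width x height region into non-overlapping tiles.

    For each tile, also compute a crop window enlarged by `margin` on every
    side and clamped to the region, so neighbouring crops overlap.
    All names and the fixed margin are hypothetical, for illustration only.
    """
    tiles = []
    for y0 in range(0, height, tile):
        for x0 in range(0, width, tile):
            x1 = min(x0 + tile, width)
            y1 = min(y0 + tile, height)
            crop = (
                max(x0 - margin, 0),
                max(y0 - margin, 0),
                min(x1 + margin, width),
                min(y1 + margin, height),
            )
            tiles.append({"tile": (x0, y0, x1, y1), "crop": crop})
    return tiles


def area(box):
    """Area of an (x0, y0, x1, y1) box."""
    x0, y0, x1, y1 = box
    return (x1 - x0) * (y1 - y0)


tiles = make_tiles(width=1000, height=600, tile=400, margin=50)
# Tiles partition the region exactly; crops are larger and overlap.
total_tile_area = sum(area(t["tile"]) for t in tiles)
total_crop_area = sum(area(t["crop"]) for t in tiles)
```

Because only one tile and its crop need to be resident at a time, a loop over `tiles` gives the kind of out-of-core processing the abstract describes. In a real satellite setting the crop would have to be derived by projecting the 3D tile into each image with its camera model rather than by a fixed pixel margin.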

Joint attitude estimation and 3D neural reconstruction of non-cooperative space objects

Jun 25, 2025

Abstract:Obtaining a better knowledge of the current state and behavior of objects orbiting Earth has proven to be essential for a range of applications such as active debris removal, in-orbit maintenance, or anomaly detection. 3D models represent a valuable source of information in the field of Space Situational Awareness (SSA). In this work, we leveraged Neural Radiance Fields (NeRF) to perform 3D reconstruction of non-cooperative space objects from simulated images. This scenario is challenging for NeRF models due to unusual camera characteristics and environmental conditions: monochromatic images, unknown object orientation, limited viewing angles, absence of diffuse lighting, etc. In this work we focus primarily on the joint optimization of camera poses alongside the NeRF. Our experimental results show that the most accurate 3D reconstruction is achieved when training with successive images one-by-one. We estimate camera poses by optimizing a uniform rotation and use regularization to prevent successive poses from being too far apart.

Parameter-Efficient Adaptation of Geospatial Foundation Models through Embedding Deflection

Mar 12, 2025

Abstract:As large-scale heterogeneous data sets become increasingly available, adapting foundation models at low cost has become a key issue. Seminal works in natural language processing, e.g., Low-Rank Adaptation (LoRA), leverage the low "intrinsic rank" of parameter updates during adaptation. In this paper, we argue that incorporating stronger inductive biases in both data and models can enhance the adaptation of Geospatial Foundation Models (GFMs), pretrained on RGB satellite images, to other types of optical satellite data. Specifically, the pretrained parameters of GFMs serve as a strong prior for the spatial structure of multispectral images. For this reason, we introduce DEFLECT (Deflecting Embeddings for Finetuning Latent representations for Earth and Climate Tasks), a novel strategy for adapting GFMs to multispectral satellite imagery with very few additional parameters. DEFLECT improves the representation capabilities of the extracted features, particularly enhancing spectral information, which is essential for geoscience and environment-related tasks. We demonstrate the effectiveness of our method across three different GFMs and five diverse datasets, ranging from forest monitoring to marine environment segmentation. Compared to competing methods, DEFLECT achieves on-par or higher accuracy with 5-10$\times$ fewer parameters for classification and segmentation tasks. The code will be made publicly available.
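
For context on the low-rank baseline the abstract mentions, the following is a minimal sketch of a LoRA-style layer, where a frozen weight matrix W is adapted by a trainable rank-r product B A. It illustrates LoRA only, not DEFLECT, whose mechanism the abstract does not detail. The helper names (`matvec`, `lora_forward`, `param_counts`) and the layer sizes are assumptions chosen for illustration.

```python
def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lora_forward(W, A, B, x, scale=1.0):
    """Compute W x + scale * B (A x).

    W (d_out x d_in) is the frozen pretrained weight;
    A (r x d_in) and B (d_out x r) are the only trainable matrices.
    """
    base = matvec(W, x)
    low_rank = matvec(B, matvec(A, x))
    return [b + scale * l for b, l in zip(base, low_rank)]


def param_counts(d_out, d_in, r):
    """Return (full fine-tuning parameters, LoRA parameters) for one layer."""
    return d_out * d_in, r * (d_in + d_out)


W = [[1.0, 2.0], [3.0, 4.0]]
A = [[1.0, 0.0]]          # rank r = 1
B_zero = [[0.0], [0.0]]   # zero-initialised B leaves the output unchanged
B_tuned = [[1.0], [2.0]]
x = [1.0, 1.0]

y_init = lora_forward(W, A, B_zero, x)
y_tuned = lora_forward(W, A, B_tuned, x)
full, lora = param_counts(d_out=768, d_in=768, r=8)
```

With B initialised to zero the adapted layer reproduces the pretrained output exactly, and for a hypothetical 768 x 768 layer at rank 8 the trainable parameter count drops from 589,824 to 12,288.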

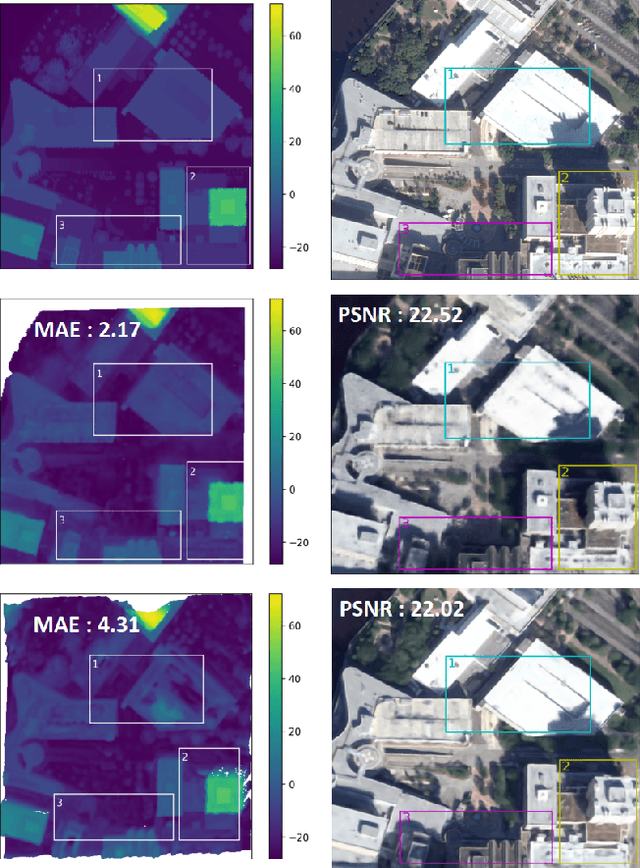

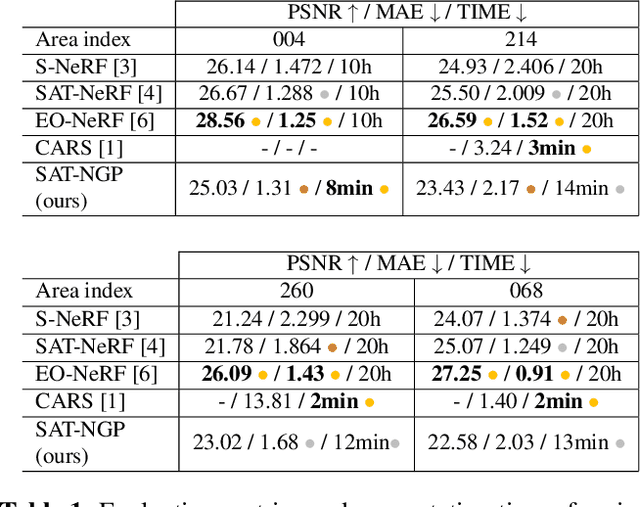

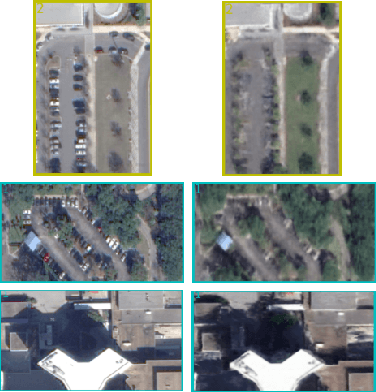

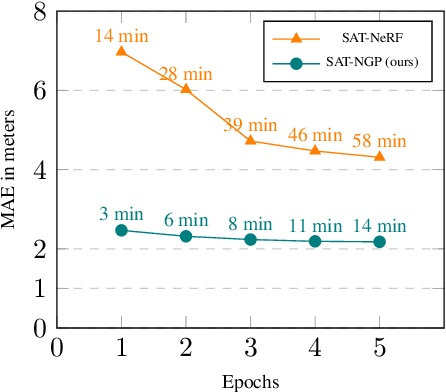

SAT-NGP : Unleashing Neural Graphics Primitives for Fast Relightable Transient-Free 3D reconstruction from Satellite Imagery

Mar 27, 2024

Abstract:Current stereo-vision pipelines produce high-accuracy 3D reconstruction when using multiple pairs or triplets of satellite images. However, these pipelines are sensitive to the changes between images that can occur as a result of multi-date acquisitions. Such variations are mainly due to variable shadows, reflections and transient objects (cars, vegetation). To take such changes into account, Neural Radiance Fields (NeRF) have recently been applied to multi-date satellite imagery. However, neural methods are very compute-intensive, taking dozens of hours to learn, compared with minutes for standard stereo-vision pipelines. Following the ideas of Instant Neural Graphics Primitives, we propose to use an efficient sampling strategy and multi-resolution hash encoding to accelerate the learning. Our model, Satellite Neural Graphics Primitives (SAT-NGP), decreases the learning time to 15 minutes while maintaining the quality of the 3D reconstruction.
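
The multi-resolution hash encoding mentioned above can be sketched in a few lines. This follows the general scheme of Instant Neural Graphics Primitives (geometrically spaced grid resolutions, and a spatial hash that XORs integer grid coordinates multiplied by large primes), not the SAT-NGP code itself. The function names, the example resolutions, and the table size are assumptions for illustration, and feature lookup and interpolation are omitted.

```python
import math

# Per-dimension multipliers used by the Instant-NGP style spatial hash.
PRIMES = (1, 2654435761, 805459861)


def level_resolutions(n_min, n_max, n_levels):
    """Grid resolution per level, growing geometrically from n_min to n_max."""
    growth = math.exp((math.log(n_max) - math.log(n_min)) / (n_levels - 1))
    return [int(math.floor(n_min * growth ** level)) for level in range(n_levels)]


def hash_index(ix, iy, iz, table_size):
    """Map non-negative integer grid coordinates to a slot in a hash table."""
    h = (ix * PRIMES[0]) ^ (iy * PRIMES[1]) ^ (iz * PRIMES[2])
    return h % table_size


def encode_indices(point, resolutions, table_size):
    """For a point in [0, 1)^3, return one table index per resolution level.

    Only the lower corner of the enclosing voxel is hashed here; a full
    encoder would hash all 8 corners and interpolate learned features.
    """
    indices = []
    for res in resolutions:
        ix, iy, iz = (int(c * res) for c in point)
        indices.append(hash_index(ix, iy, iz, table_size))
    return indices


resolutions = level_resolutions(n_min=16, n_max=512, n_levels=6)
slots = encode_indices((0.25, 0.5, 0.75), resolutions, table_size=2 ** 14)
```

Each level contributes a small learned feature vector fetched from its table, so most trainable parameters sit in lookup tables rather than in a large MLP, which is what makes training fast in this family of methods.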

Radar Fields: An Extension of Radiance Fields to SAR

Dec 20, 2023

Abstract:Radiance fields have been a major breakthrough in the field of inverse rendering, novel view synthesis and 3D modeling of complex scenes from multi-view image collections. Since their introduction, it was shown that they could be extended to other modalities such as LiDAR, radio frequencies, X-ray or ultrasound. In this paper, we show that, despite the important difference between optical and synthetic aperture radar (SAR) image formation models, it is possible to extend radiance fields to radar images thus presenting the first "radar fields". This allows us to learn surface models using only collections of radar images, similar to how regular radiance fields are learned and with the same computational complexity on average. Thanks to similarities in how both fields are defined, this work also shows a potential for hybrid methods combining both optical and SAR images.

Vision-based Neural Scene Representations for Spacecraft

May 11, 2021

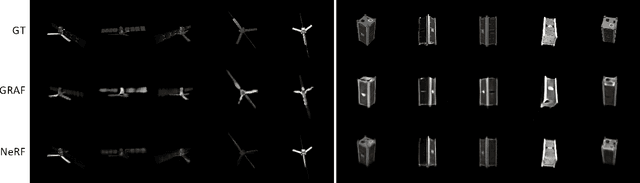

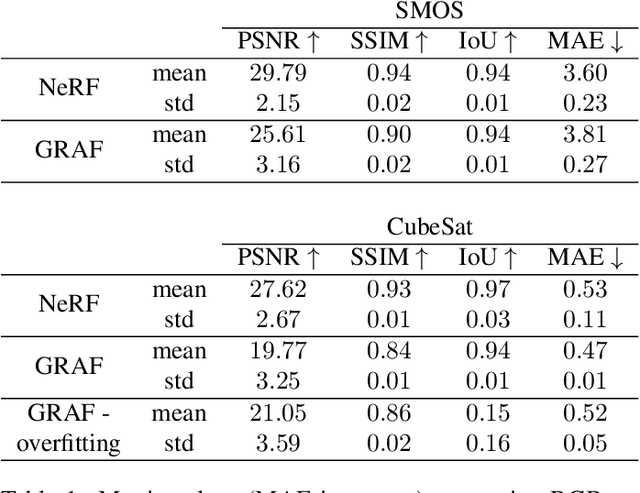

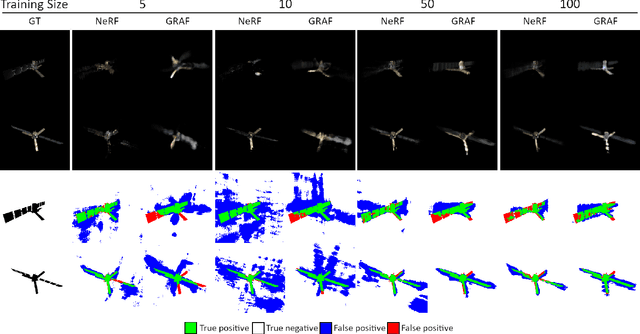

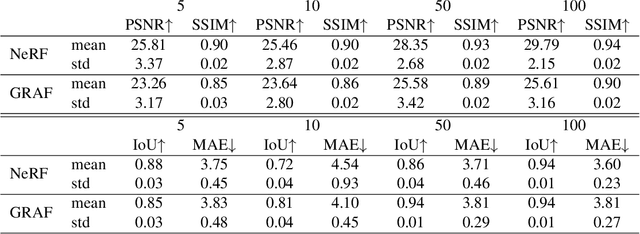

Abstract:In advanced mission concepts with high levels of autonomy, spacecraft need to internally model the pose and shape of nearby orbiting objects. Recent works in neural scene representations show promising results for inferring generic three-dimensional scenes from optical images. Neural Radiance Fields (NeRF) have shown success in rendering highly specular surfaces using a large number of images and their poses. More recently, Generative Radiance Fields (GRAF) achieved full volumetric reconstruction of a scene from unposed images only, thanks to the use of an adversarial framework to train a NeRF. In this paper, we compare and evaluate the potential of NeRF and GRAF to render novel views and extract the 3D shape of two different spacecraft, the Soil Moisture and Ocean Salinity satellite of ESA's Living Planet Programme and a generic CubeSat. Considering the best performances of both models, we observe that NeRF has the ability to render more accurate images regarding the material specularity of the spacecraft and its pose. For its part, GRAF generates precise novel views with accurate details even when parts of the satellites are shadowed while having the significant advantage of not needing any information about the relative pose.

Shadow Neural Radiance Fields for Multi-view Satellite Photogrammetry

Apr 20, 2021

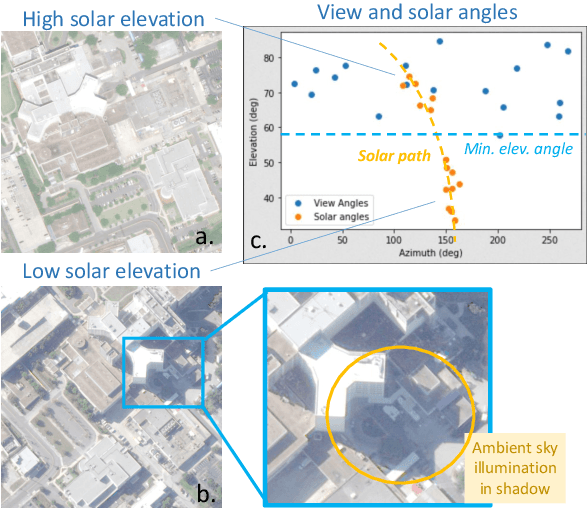

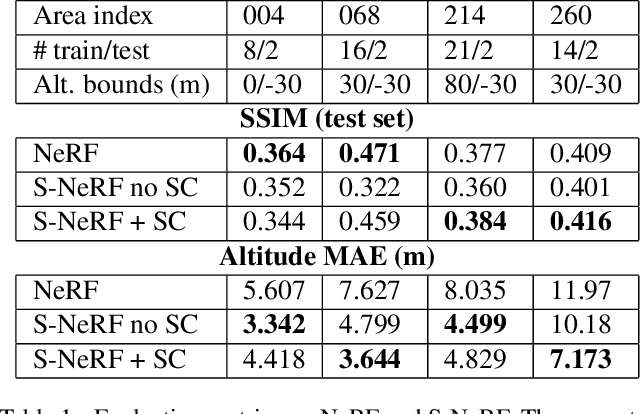

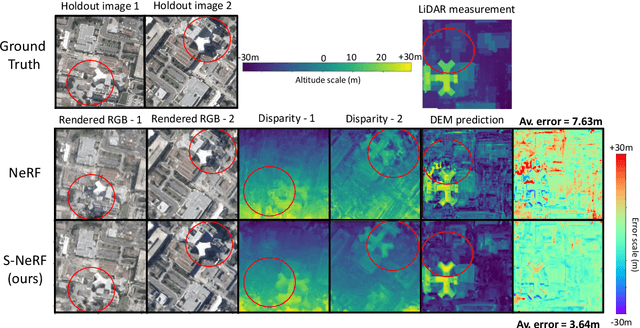

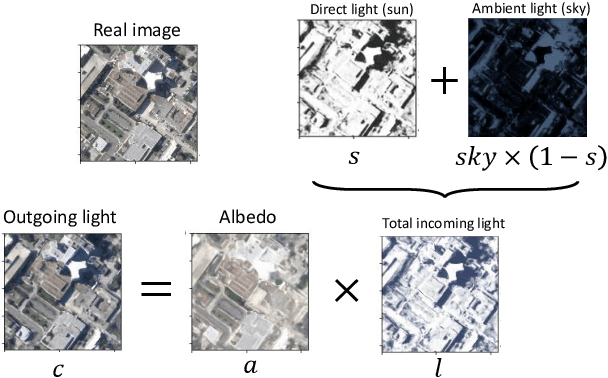

Abstract:We present a new generic method for shadow-aware multi-view satellite photogrammetry of Earth Observation scenes. Our proposed method, the Shadow Neural Radiance Field (S-NeRF), follows recent advances in implicit volumetric representation learning. For each scene, we train S-NeRF using very high spatial resolution optical images taken from known viewing angles. The learning requires no labels or shape priors: it is self-supervised by an image reconstruction loss. To accommodate changing light source conditions both from a directional light source (the Sun) and a diffuse light source (the sky), we extend the NeRF approach in two ways. First, direct illumination from the Sun is modeled via a local light source visibility field. Second, indirect illumination from a diffuse light source is learned as a non-local color field as a function of the position of the Sun. Quantitatively, the combination of these factors reduces the altitude and color errors in shaded areas, compared to NeRF. The S-NeRF methodology not only performs novel view synthesis and full 3D shape estimation, it also enables shadow detection, albedo synthesis, and transient object filtering, without any explicit shape supervision.
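
The two illumination terms described above can be combined in a simple shading rule. The sketch below is one plausible reading, not a formula stated in the abstract: it assumes white sunlight of unit intensity, a sun visibility value s in [0, 1], and a sky color that fills in whatever fraction of the sun is blocked. The function name `shade` and the example values are hypothetical.

```python
def shade(albedo, sun_visibility, sky_color):
    """Return the shaded RGB color of a surface point.

    albedo:         intrinsic RGB reflectance, each channel in [0, 1]
    sun_visibility: 1.0 if fully lit by the Sun, 0.0 if fully in shadow
    sky_color:      RGB diffuse light from the sky (in the paper's setting
                    this would depend on the Sun's position)

    Assumed model: irradiance = s * white + (1 - s) * sky, per channel.
    """
    irradiance = [
        sun_visibility + (1.0 - sun_visibility) * channel
        for channel in sky_color
    ]
    return [a * l for a, l in zip(albedo, irradiance)]


grey = [0.5, 0.5, 0.5]
bluish_sky = [0.2, 0.3, 0.4]

lit = shade(grey, sun_visibility=1.0, sky_color=bluish_sky)
shadowed = shade(grey, sun_visibility=0.0, sky_color=bluish_sky)
```

Under this assumed model a fully lit point shows its albedo unchanged, while a fully shadowed point is tinted by the sky color. Separating albedo from irradiance in this way is what would make shadow detection and albedo synthesis possible as by-products.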
