Chaoyi Lin

From Noise to Latent: Generating Gaussian Latents for INR-Based Image Compression

Nov 11, 2025Abstract:Recent implicit neural representation (INR)-based image compression methods have shown competitive performance by overfitting image-specific latent codes. However, they remain inferior to end-to-end (E2E) compression approaches due to the absence of expressive latent representations. On the other hand, E2E methods rely on transmitting latent codes and requiring complex entropy models, leading to increased decoding complexity. Inspired by the normalization strategy in E2E codecs where latents are transformed into Gaussian noise to demonstrate the removal of spatial redundancy, we explore the inverse direction: generating latents directly from Gaussian noise. In this paper, we propose a novel image compression paradigm that reconstructs image-specific latents from a multi-scale Gaussian noise tensor, deterministically generated using a shared random seed. A Gaussian Parameter Prediction (GPP) module estimates the distribution parameters, enabling one-shot latent generation via reparameterization trick. The predicted latent is then passed through a synthesis network to reconstruct the image. Our method eliminates the need to transmit latent codes while preserving latent-based benefits, achieving competitive rate-distortion performance on Kodak and CLIC dataset. To the best of our knowledge, this is the first work to explore Gaussian latent generation for learned image compression.

Neural Video Compression with In-Loop Contextual Filtering and Out-of-Loop Reconstruction Enhancement

Sep 04, 2025Abstract:This paper explores the application of enhancement filtering techniques in neural video compression. Specifically, we categorize these techniques into in-loop contextual filtering and out-of-loop reconstruction enhancement based on whether the enhanced representation affects the subsequent coding loop. In-loop contextual filtering refines the temporal context by mitigating error propagation during frame-by-frame encoding. However, its influence on both the current and subsequent frames poses challenges in adaptively applying filtering throughout the sequence. To address this, we introduce an adaptive coding decision strategy that dynamically determines filtering application during encoding. Additionally, out-of-loop reconstruction enhancement is employed to refine the quality of reconstructed frames, providing a simple yet effective improvement in coding efficiency. To the best of our knowledge, this work presents the first systematic study of enhancement filtering in the context of conditional-based neural video compression. Extensive experiments demonstrate a 7.71% reduction in bit rate compared to state-of-the-art neural video codecs, validating the effectiveness of the proposed approach.

EHVC: Efficient Hierarchical Reference and Quality Structure for Neural Video Coding

Sep 04, 2025Abstract:Neural video codecs (NVCs), leveraging the power of end-to-end learning, have demonstrated remarkable coding efficiency improvements over traditional video codecs. Recent research has begun to pay attention to the quality structures in NVCs, optimizing them by introducing explicit hierarchical designs. However, less attention has been paid to the reference structure design, which fundamentally should be aligned with the hierarchical quality structure. In addition, there is still significant room for further optimization of the hierarchical quality structure. To address these challenges in NVCs, we propose EHVC, an efficient hierarchical neural video codec featuring three key innovations: (1) a hierarchical multi-reference scheme that draws on traditional video codec design to align reference and quality structures, thereby addressing the reference-quality mismatch; (2) a lookahead strategy to utilize an encoder-side context from future frames to enhance the quality structure; (3) a layer-wise quality scale with random quality training strategy to stabilize quality structures during inference. With these improvements, EHVC achieves significantly superior performance to the state-of-the-art NVCs. Code will be released in: https://github.com/bytedance/NEVC.

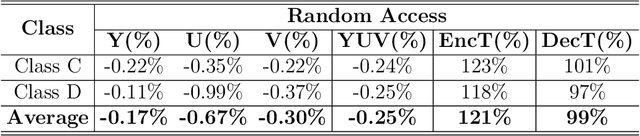

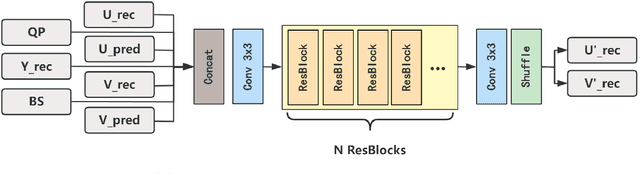

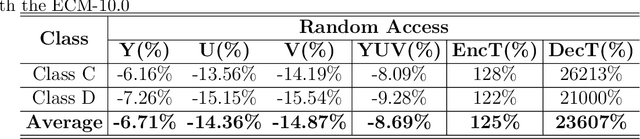

A Neural-network Enhanced Video Coding Framework beyond ECM

Feb 21, 2024

Abstract:In this paper, a hybrid video compression framework is proposed that serves as a demonstrative showcase of deep learning-based approaches extending beyond the confines of traditional coding methodologies. The proposed hybrid framework is founded upon the Enhanced Compression Model (ECM), which is a further enhancement of the Versatile Video Coding (VVC) standard. We have augmented the latest ECM reference software with well-designed coding techniques, including block partitioning, deep learning-based loop filter, and the activation of block importance mapping (BIM) which was integrated but previously inactive within ECM, further enhancing coding performance. Compared with ECM-10.0, our method achieves 6.26, 13.33, and 12.33 BD-rate savings for the Y, U, and V components under random access (RA) configuration, respectively.

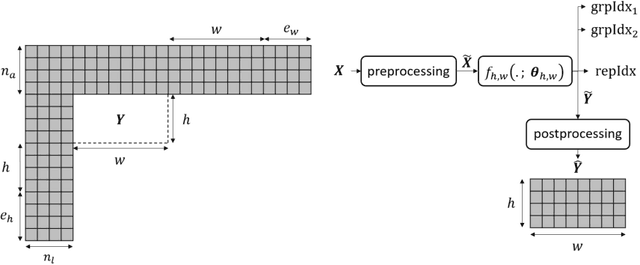

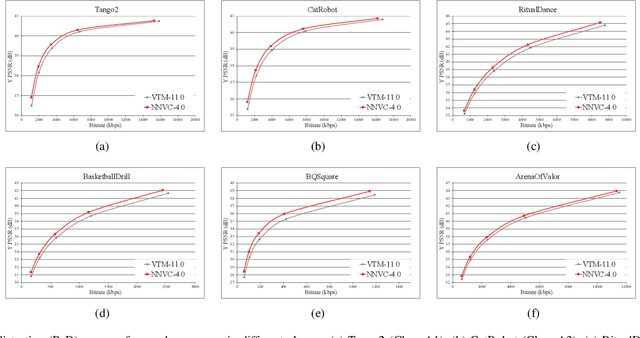

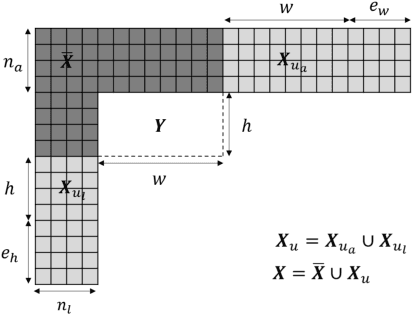

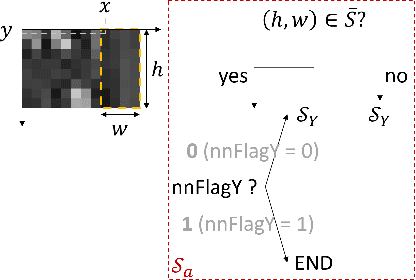

Designs and Implementations in Neural Network-based Video Coding

Sep 13, 2023

Abstract:The past decade has witnessed the huge success of deep learning in well-known artificial intelligence applications such as face recognition, autonomous driving, and large language model like ChatGPT. Recently, the application of deep learning has been extended to a much wider range, with neural network-based video coding being one of them. Neural network-based video coding can be performed at two different levels: embedding neural network-based (NN-based) coding tools into a classical video compression framework or building the entire compression framework upon neural networks. This paper elaborates some of the recent exploration efforts of JVET (Joint Video Experts Team of ITU-T SG 16 WP 3 and ISO/IEC JTC 1/SC29) in the name of neural network-based video coding (NNVC), falling in the former category. Specifically, this paper discusses two major NN-based video coding technologies, i.e. neural network-based intra prediction and neural network-based in-loop filtering, which have been investigated for several meeting cycles in JVET and finally adopted into the reference software of NNVC. Extensive experiments on top of the NNVC have been conducted to evaluate the effectiveness of the proposed techniques. Compared with VTM-11.0_nnvc, the proposed NN-based coding tools in NNVC-4.0 could achieve {11.94%, 21.86%, 22.59%}, {9.18%, 19.76%, 20.92%}, and {10.63%, 21.56%, 23.02%} BD-rate reductions on average for {Y, Cb, Cr} under random-access, low-delay, and all-intra configurations respectively.

Stereoscopic Omnidirectional Image Quality Assessment Based on Predictive Coding Theory

Jun 12, 2019

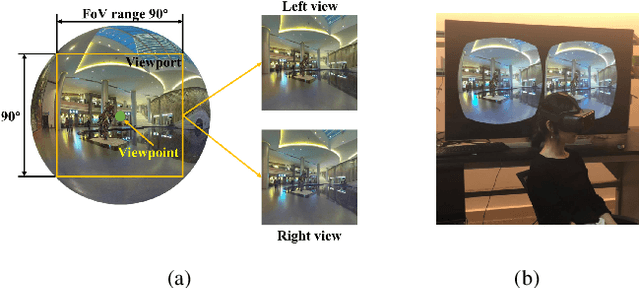

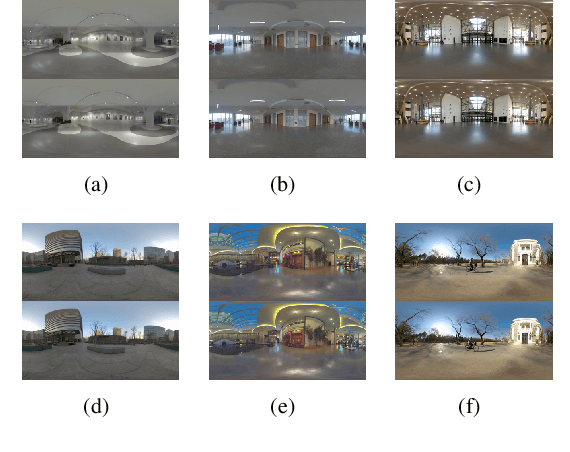

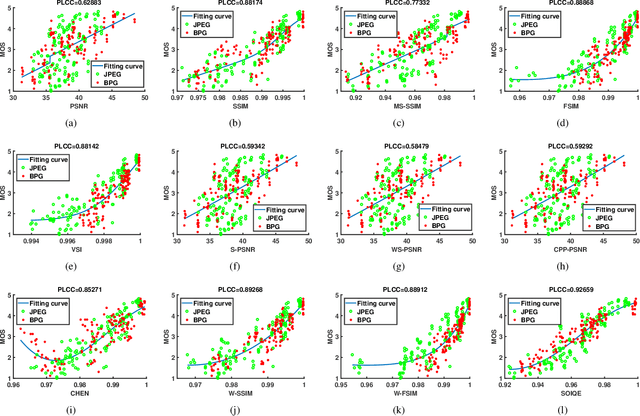

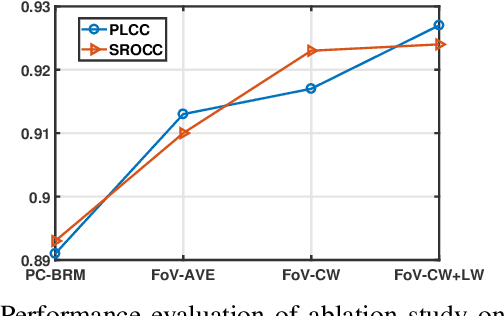

Abstract:Objective quality assessment of stereoscopic omnidirectional images is a challenging problem since it is influenced by multiple aspects such as projection deformation, field of view (FoV) range, binocular vision, visual comfort, etc. Existing studies show that classic 2D or 3D image quality assessment (IQA) metrics are not able to perform well for stereoscopic omnidirectional images. However, very few research works have focused on evaluating the perceptual visual quality of omnidirectional images, especially for stereoscopic omnidirectional images. In this paper, based on the predictive coding theory of the human vision system (HVS), we propose a stereoscopic omnidirectional image quality evaluator (SOIQE) to cope with the characteristics of 3D 360-degree images. Two modules are involved in SOIQE: predictive coding theory based binocular rivalry module and multi-view fusion module. In the binocular rivalry module, we introduce predictive coding theory to simulate the competition between high-level patterns and calculate the similarity and rivalry dominance to obtain the quality scores of viewport images. Moreover, we develop the multi-view fusion module to aggregate the quality scores of viewport images with the help of both content weight and location weight. The proposed SOIQE is a parametric model without necessary of regression learning, which ensures its interpretability and generalization performance. Experimental results on our published stereoscopic omnidirectional image quality assessment database (SOLID) demonstrate that our proposed SOIQE method outperforms state-of-the-art metrics. Furthermore, we also verify the effectiveness of each proposed module on both public stereoscopic image datasets and panoramic image datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge