Benedikt Mersch

KISS-SLAM: A Simple, Robust, and Accurate 3D LiDAR SLAM System With Enhanced Generalization Capabilities

Mar 16, 2025

Abstract: Robust and accurate localization and mapping of an environment using laser scanners, so-called LiDAR SLAM, is essential to many robotic applications. Early 3D LiDAR SLAM methods often exploited additional information from IMU or GNSS sensors to enhance localization accuracy and mitigate drift. Later, advanced systems further improved the estimation at the cost of higher runtime and complexity. This paper explores the limits of what can be achieved with a LiDAR-only SLAM approach while following the "Keep It Small and Simple" (KISS) principle. By leveraging this minimalistic design principle, our system, KISS-SLAM, achieves state-of-the-art pose accuracy while requiring little to no parameter tuning for deployment across diverse environments, sensors, and motion profiles. We follow best practices in graph-based SLAM and build upon LiDAR odometry to compute the relative motion between scans and construct local maps of the environment. To correct drift, we match local maps and optimize the trajectory in a pose graph optimization step. The experimental results demonstrate that this design achieves competitive performance while reducing complexity and reliance on additional sensor modalities. By prioritizing simplicity, this work provides a strong new baseline for LiDAR-only SLAM and a high-performing starting point for future research. Further, our pipeline builds consistent maps that can be used directly for downstream tasks like navigation. Our open-source system operates faster than the sensor frame rate in all presented datasets and is designed for real-world scenarios.
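
As a toy illustration of the pose graph step (a hypothetical sketch, not the KISS-SLAM implementation, which optimizes full 3D poses), the snippet below solves a 1D pose graph with linear least squares. Each edge (i, j, z, w) states that pose j lies z units past pose i with weight w; the function name `optimize_pose_graph_1d` and the edge format are assumptions made for this example.

```python
import numpy as np


def optimize_pose_graph_1d(num_poses, edges):
    """Toy 1D pose graph: edges are (i, j, z, w), meaning x_j - x_i ~ z with weight w."""
    # Anchor pose 0 at the origin; relative constraints alone leave a free offset.
    anchor = np.zeros(num_poses)
    anchor[0] = 1.0
    rows, rhs = [anchor], [0.0]
    for i, j, z, w in edges:
        row = np.zeros(num_poses)
        row[i], row[j] = -1.0, 1.0
        # Scaling a row by sqrt(w) weights its squared residual by w.
        rows.append(np.sqrt(w) * row)
        rhs.append(np.sqrt(w) * z)
    solution, *_ = np.linalg.lstsq(np.vstack(rows), np.array(rhs), rcond=None)
    return solution


# Odometry overestimates each step (1.1 instead of 1.0); an extra edge between
# poses 0 and 3, as a loop closure would provide, measures 3.0.
odometry = [(0, 1, 1.1, 1.0), (1, 2, 1.1, 1.0), (2, 3, 1.1, 1.0)]
loop = [(0, 3, 3.0, 1.0)]
poses = optimize_pose_graph_1d(4, odometry + loop)
print(poses)  # approximately [0, 1.025, 2.05, 3.075]
```

Dead reckoning alone would place the last pose at 3.3; with equal weights the least-squares solution spreads the discrepancy across all four constraints and ends at 3.075. Raising the weight of the loop edge pulls the last pose closer to 3.0.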

Efficiently Closing Loops in LiDAR-Based SLAM Using Point Cloud Density Maps

Jan 13, 2025

Abstract: Consistent maps are key for most autonomous mobile robots, which often build them with SLAM approaches. Loop closures via place recognition help maintain accurate pose estimates by mitigating global drift. This paper presents a robust loop closure detection pipeline for outdoor SLAM with LiDAR-equipped robots. The method handles various LiDAR sensors with different scanning patterns, fields of view, and resolutions. It generates local maps from LiDAR scans and aligns them using a ground alignment module to handle both planar and non-planar motion of the LiDAR, ensuring applicability across platforms. The method uses density-preserving bird's eye view projections of these local maps and extracts ORB feature descriptors from them for place recognition. It stores the feature descriptors in a binary search tree for efficient retrieval, and self-similarity pruning addresses perceptual aliasing in repetitive environments. Extensive experiments on public and self-recorded datasets demonstrate accurate loop closure detection, long-term localization, and cross-platform multi-map alignment, agnostic to the LiDAR scanning patterns, fields of view, and motion profiles.
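
The bird's eye view step can be pictured with a minimal sketch that counts points per cell of a 2D grid and rescales the counts to an 8-bit image, on which a feature detector such as ORB could then run. This is a stand-in under stated assumptions: the grid `resolution`, the square `extent`, the max-normalization, and the function name `bev_density_image` are illustrative choices, not the paper's exact projection. The input is assumed to be a ground-aligned local map in its own frame, and ORB extraction itself is not shown.

```python
import numpy as np


def bev_density_image(points, resolution=0.5, extent=50.0):
    """Count points per x-y cell and scale the counts to a uint8 image."""
    size = int(round(2 * extent / resolution))
    xy = np.asarray(points, dtype=np.float64)[:, :2]
    # Keep only points inside the square [-extent, extent) in x and y.
    inside = np.all((xy >= -extent) & (xy < extent), axis=1)
    cells = np.floor((xy[inside] + extent) / resolution).astype(np.int64)
    cells = np.clip(cells, 0, size - 1)  # guard against floating-point edge cases
    density = np.zeros((size, size), dtype=np.float64)
    # np.add.at accumulates correctly when several points fall into one cell.
    np.add.at(density, (cells[:, 0], cells[:, 1]), 1.0)
    if density.max() > 0:
        density /= density.max()
    return (density * 255).astype(np.uint8)


local_map = np.array([[0.0, 0.0, 1.0], [0.1, 0.1, 2.0], [10.0, 10.0, 0.0], [80.0, 0.0, 0.0]])
image = bev_density_image(local_map)
print(image.shape, image[100, 100], image[120, 120])  # (200, 200) 255 127
```

The height coordinate is discarded, so the image encodes how many points fall above each ground cell rather than the height of the structure.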

Kinematic-ICP: Enhancing LiDAR Odometry with Kinematic Constraints for Wheeled Mobile Robots Moving on Planar Surfaces

Oct 14, 2024

Abstract: LiDAR odometry is essential for many robotics applications, including 3D mapping, navigation, and simultaneous localization and mapping. LiDAR odometry systems are usually based on some form of point cloud registration to compute the ego-motion of a mobile robot. Yet, few of today's LiDAR odometry systems consider the domain-specific knowledge and the kinematic model of the mobile platform during the point cloud alignment. In this paper, we present Kinematic-ICP, a LiDAR odometry system that focuses on wheeled mobile robots equipped with a 3D LiDAR and moving on a planar surface, which is a common assumption for warehouses, offices, hospitals, etc. Our approach introduces kinematic constraints within the optimization of a traditional point-to-point iterative closest point scheme. In this way, the resulting motion follows the kinematic constraints of the platform, effectively exploiting the robot's wheel odometry and the 3D LiDAR observations. We dynamically adjust the influence of LiDAR measurements and wheel odometry in our optimization scheme, allowing the system to handle degenerate scenarios such as feature-poor corridors. We evaluate our approach on robots operating in large-scale warehouse environments and outdoors. The experiments show that our approach achieves top performance and is more accurate than wheel odometry and common LiDAR odometry systems. Kinematic-ICP has recently been deployed in the Dexory fleet of robots operating in warehouses worldwide at their customers' sites, showing that our method can run in the real world alongside a complete navigation stack.
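
One common way to encode the kinematics of a differential-drive robot on a plane is the unicycle model: the robot moves with forward velocity v and yaw rate omega and cannot slide sideways. The sketch below integrates that model exactly over one time step to obtain the relative planar motion. It only illustrates such a constraint; the abstract does not state Kinematic-ICP's exact parametrization, and the function name `unicycle_increment` is hypothetical.

```python
import math


def unicycle_increment(v, omega, dt, eps=1e-9):
    """Relative planar motion (dx, dy, dtheta) in the robot frame over dt seconds.

    Assumes constant forward velocity v and yaw rate omega, with no lateral slip.
    """
    dtheta = omega * dt
    if abs(omega) < eps:
        # Straight-line motion: the arc degenerates to a segment along x.
        return v * dt, 0.0, dtheta
    radius = v / omega  # signed radius of the circular arc
    return radius * math.sin(dtheta), radius * (1.0 - math.cos(dtheta)), dtheta


print(unicycle_increment(1.0, 0.0, 2.0))  # (2.0, 0.0, 0.0)
```

Because the increment depends on only two quantities, an optimizer that estimates v and omega instead of a full 6-DoF pose cannot return motions the platform cannot execute, such as a pure sideways translation or vertical drift.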

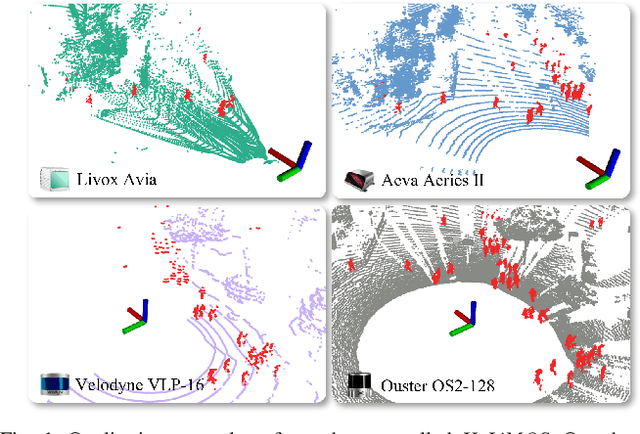

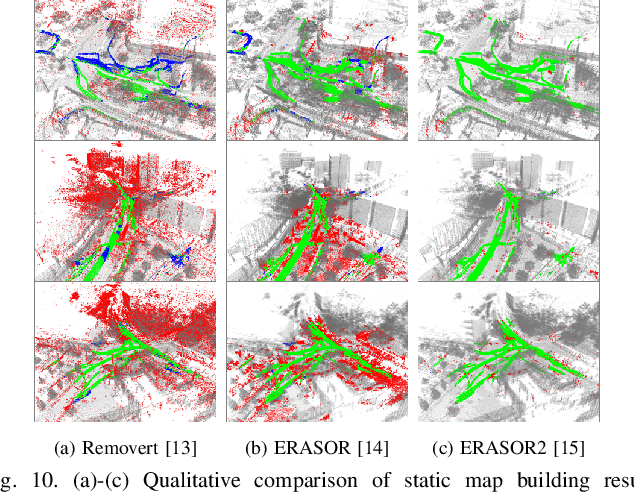

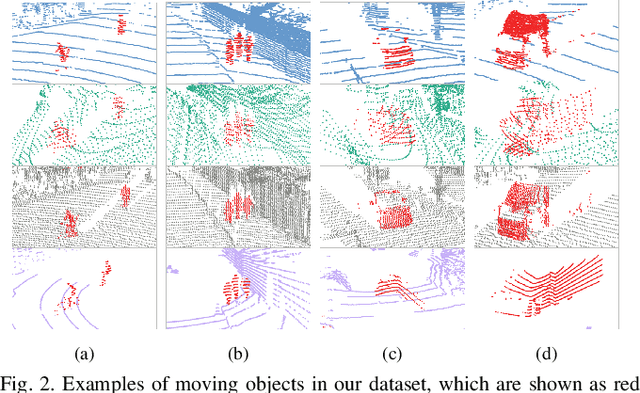

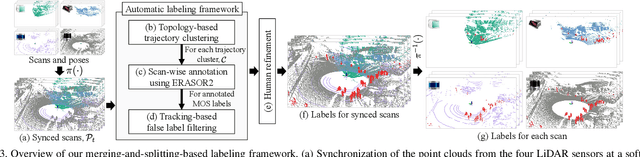

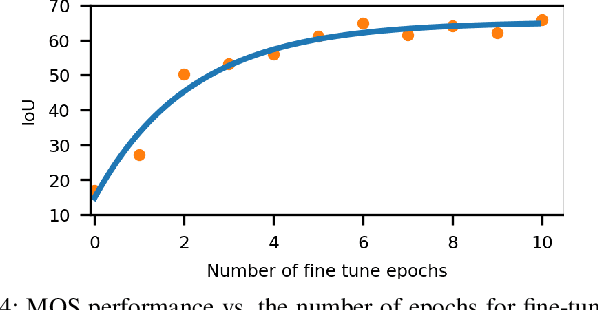

HeLiMOS: A Dataset for Moving Object Segmentation in 3D Point Clouds From Heterogeneous LiDAR Sensors

Aug 12, 2024

Abstract: Moving object segmentation (MOS) using a 3D light detection and ranging (LiDAR) sensor is crucial for scene understanding and identification of moving objects. Despite the availability of various types of 3D LiDAR sensors on the market, MOS research still predominantly focuses on 3D point clouds from mechanically spinning omnidirectional LiDAR sensors. Thus, we lack, for example, a dataset with MOS labels for point clouds from solid-state LiDAR sensors, which have irregular scanning patterns. In this paper, we present a labeled dataset, called HeLiMOS, that enables testing MOS approaches on four heterogeneous LiDAR sensors, including two solid-state LiDAR sensors. Furthermore, we introduce a novel automatic labeling method to substantially reduce the labeling effort required from human annotators. To this end, our framework exploits an instance-aware static map building approach and tracking-based false label filtering. Finally, we provide experimental results regarding the performance of commonly used state-of-the-art MOS approaches on HeLiMOS that suggest a new direction toward sensor-agnostic MOS, which works regardless of the type of LiDAR sensor used to capture 3D point clouds. Our dataset is available at https://sites.google.com/view/helimos.

Scaling Diffusion Models to Real-World 3D LiDAR Scene Completion

Mar 20, 2024

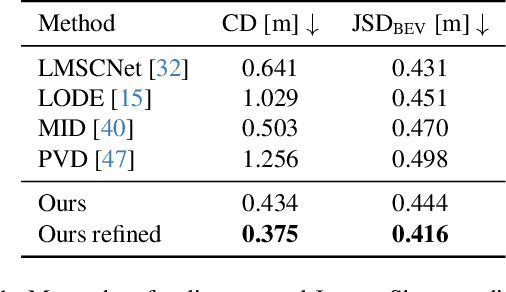

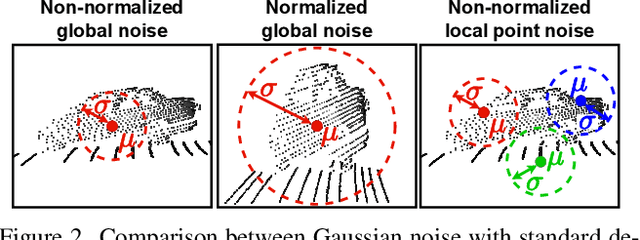

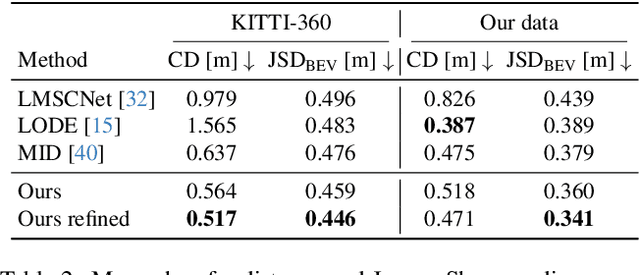

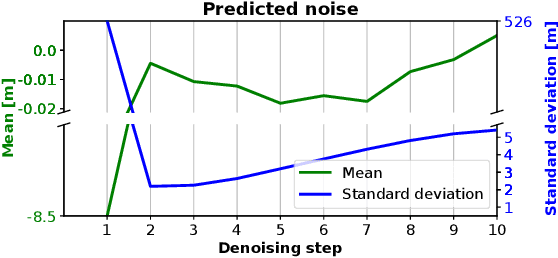

Abstract: Computer vision techniques play a central role in the perception stack of autonomous vehicles. Such methods are employed to perceive the vehicle surroundings given sensor data. 3D LiDAR sensors are commonly used to collect sparse 3D point clouds from the scene. However, compared to human perception, such systems struggle to deduce the unseen parts of the scene from those sparse point clouds. To address this, the scene completion task aims at predicting the gaps in the LiDAR measurements to achieve a more complete scene representation. Given the promising results of recent diffusion models as generative models for images, we propose extending them to achieve scene completion from a single 3D LiDAR scan. Previous works used diffusion models over range images extracted from LiDAR data, directly applying image-based diffusion methods. In contrast, we propose to operate directly on the points, reformulating the noising and denoising diffusion process such that it can efficiently work at scene scale. Additionally, we propose a regularization loss to stabilize the noise predicted during the denoising process. Our experimental evaluation shows that our method can complete the scene given a single LiDAR scan as input, producing a scene with more detail than state-of-the-art scene completion methods. We believe that our proposed diffusion process formulation can support further research in diffusion models applied to scene-scale point cloud data.
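
For reference, the standard DDPM forward (noising) process, which the abstract says the authors reformulate, can be applied directly to point coordinates as x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps with eps drawn from a standard normal. The sketch below applies this textbook form to an N x 3 array. It is not the paper's reformulated process; the linear beta schedule (1e-4 to 0.02 over 1000 steps, the common DDPM default) and the function names are assumptions.

```python
import numpy as np


def linear_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar_t of a linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)


def noise_points(points, t, alpha_bar, rng):
    """Sample x_t from q(x_t | x_0) for an (N, 3) array of point coordinates."""
    eps = rng.standard_normal(points.shape)
    a = alpha_bar[t]
    noisy = np.sqrt(a) * points + np.sqrt(1.0 - a) * eps
    return noisy, eps  # a denoiser would be trained to predict eps from (noisy, t)


rng = np.random.default_rng(0)
alpha_bar = linear_alpha_bar()
scan = rng.uniform(-20.0, 20.0, size=(1000, 3))
noisy, eps = noise_points(scan, t=999, alpha_bar=alpha_bar, rng=rng)
```

At large t the factor sqrt(alpha_bar_t) shrinks every coordinate toward the origin, so a scene tens of meters across ends up as roughly unit-variance noise; this is one property to keep in mind when applying the process at scene scale.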

Radar Instance Transformer: Reliable Moving Instance Segmentation in Sparse Radar Point Clouds

Sep 28, 2023

Abstract: The perception of moving objects is crucial for autonomous robots performing collision avoidance in dynamic environments. LiDARs and cameras tremendously enhance scene interpretation but do not provide direct motion information and face limitations under adverse weather. Radar sensors overcome these limitations and provide Doppler velocities, delivering direct information on dynamic objects. In this paper, we address the problem of moving instance segmentation in radar point clouds to enhance scene interpretation for safety-critical tasks. Our Radar Instance Transformer enriches the current radar scan with temporal information without passing aggregated scans through a neural network. We propose a full-resolution backbone to prevent information loss in sparse point cloud processing. Our instance transformer head not only incorporates essential information to enhance segmentation but also enables reliable, class-agnostic instance assignments. In sum, our approach shows superior performance on the new moving instance segmentation benchmarks, including diverse environments, and provides model-agnostic modules to enhance scene interpretation. The benchmark is based on the RadarScenes dataset and will be made available upon acceptance.

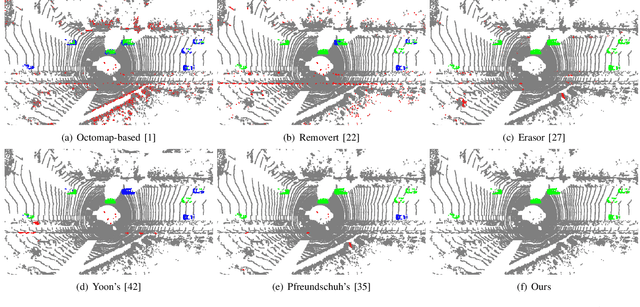

Building Volumetric Beliefs for Dynamic Environments Exploiting Map-Based Moving Object Segmentation

Jul 17, 2023

Abstract: Mobile robots that navigate in unknown environments need to be constantly aware of the dynamic objects in their surroundings for mapping, localization, and planning. It is key to reason about moving objects in the current observation and, at the same time, to update the internal model of the static world to ensure safety. In this paper, we address the problem of jointly estimating moving objects in the current 3D LiDAR scan and a local map of the environment. We use sparse 4D convolutions to extract spatio-temporal features from the scan and the local map and segment all 3D points into moving and non-moving ones. Additionally, we propose to fuse these predictions in a probabilistic representation of the dynamic environment using a Bayes filter. This volumetric belief models which parts of the environment can be occupied by moving objects. Our experiments show that our approach outperforms existing moving object segmentation baselines and even generalizes to different types of LiDAR sensors. We demonstrate that our volumetric belief fusion can increase the precision and recall of moving object segmentation and even retrieve previously missed moving objects in an online mapping scenario.
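
The Bayes filter fusion can be sketched as a per-voxel log-odds update: each new per-point moving probability p adds logit(p) minus the prior's log-odds to the voxel the point falls into. The class below is a hypothetical minimal version that uses a dictionary as sparse voxel storage; the voxel size, the probability clamping, and the name `VolumetricBelief` are assumptions, and the paper's filter may differ in detail. The per-point probabilities would come from the segmentation network.

```python
import math


def logit(p, eps=1e-6):
    """Log-odds of p, clamped away from 0 and 1 to avoid infinities."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))


class VolumetricBelief:
    """Sparse voxel map storing the log-odds that a voxel holds a moving object."""

    def __init__(self, voxel_size=0.25, prior=0.5):
        self.voxel_size = voxel_size
        self.prior_logodds = logit(prior)
        self.logodds = {}  # voxel index tuple -> accumulated log-odds

    def voxel_key(self, point):
        return tuple(int(math.floor(c / self.voxel_size)) for c in point)

    def update(self, points, moving_probs):
        # Binary Bayes filter in log-odds form: l <- l + logit(p) - l_prior.
        for point, p in zip(points, moving_probs):
            key = self.voxel_key(point)
            current = self.logodds.get(key, self.prior_logodds)
            self.logodds[key] = current + logit(p) - self.prior_logodds

    def probability(self, point):
        value = self.logodds.get(self.voxel_key(point), self.prior_logodds)
        return 1.0 / (1.0 + math.exp(-value))


belief = VolumetricBelief(voxel_size=0.5)
belief.update([(0.1, 0.1, 0.1)], [0.8])
belief.update([(0.2, 0.2, 0.2)], [0.8])
print(round(belief.probability((0.3, 0.3, 0.3)), 3))  # 0.941
```

Two agreeing observations of 0.8 in the same voxel give log-odds 2 ln 4 = ln 16, that is a belief of 16/17, while voxels that were never observed stay at the prior.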

KISS-ICP: In Defense of Point-to-Point ICP -- Simple, Accurate, and Robust Registration If Done the Right Way

Sep 30, 2022

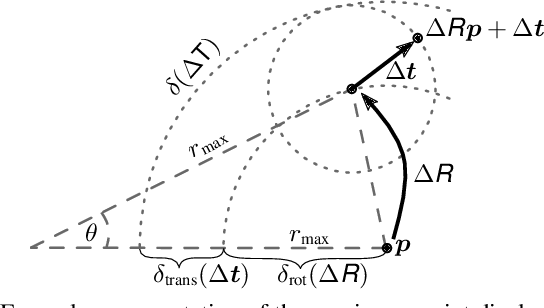

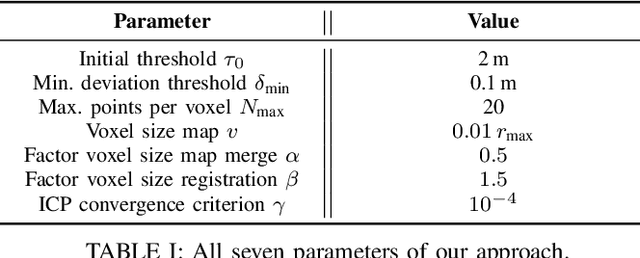

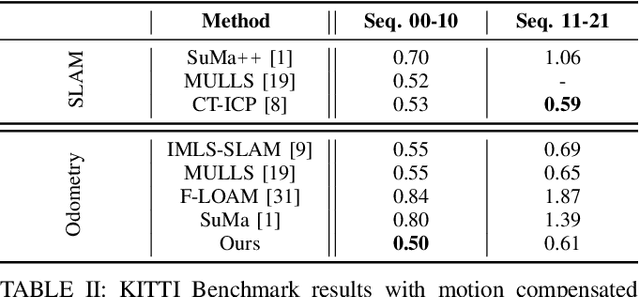

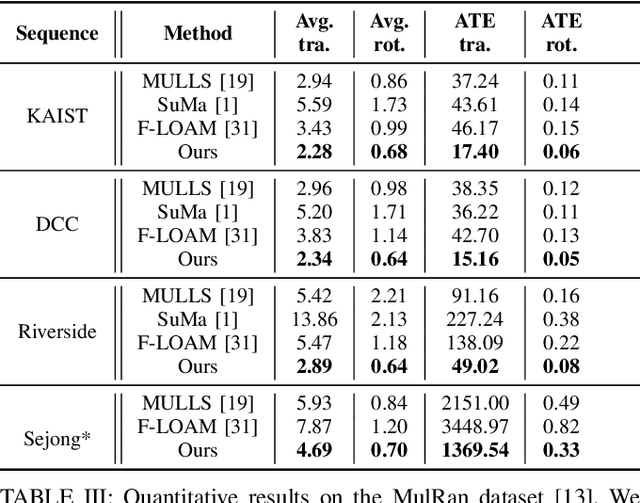

Abstract: Robust and accurate pose estimation of a robotic platform, so-called sensor-based odometry, is an essential part of many robotic applications. While many sensor odometry systems made progress by adding more complexity to the ego-motion estimation process, we move in the opposite direction. By removing a majority of parts and focusing on the core elements, we obtain a surprisingly effective system that is simple to realize and can operate under various environmental conditions using different LiDAR sensors. Our odometry estimation approach relies on point-to-point ICP combined with adaptive thresholding for correspondence matching, a robust kernel, a simple but widely applicable motion compensation approach, and a point cloud subsampling strategy. This yields a system with only a few parameters that in most cases do not even have to be tuned to a specific LiDAR sensor. Using the same parameters, our system performs on par with state-of-the-art methods under various operating conditions on different platforms: automotive platforms, UAV-based operation, vehicles like Segways, or handheld LiDARs. We do not require integrating IMU information and rely solely on 3D point cloud data obtained from a wide range of 3D LiDAR sensors, thus enabling a broad spectrum of applications and operating conditions. Our open-source system operates faster than the sensor frame rate in all presented datasets and is designed for real-world scenarios.
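
The core of such a pipeline is the classic point-to-point ICP loop: find nearest-neighbor correspondences, reject distant pairs, and solve for the rigid transform in closed form with an SVD (the Kabsch solution). The sketch below shows only that loop. A fixed rejection distance `max_dist` stands in for KISS-ICP's adaptive threshold; the robust kernel, motion compensation, and subsampling are omitted; neighbor search is brute force for brevity; and the function names are hypothetical.

```python
import numpy as np


def best_fit_transform(src, dst):
    """Closed-form rigid transform (R, t) minimizing sum ||R @ s + t - d||^2 (Kabsch)."""
    src_mean, dst_mean = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_mean).T @ (dst - dst_mean)  # cross-covariance of the pairs
    U, _, Vt = np.linalg.svd(H)
    R = Vt.T @ U.T
    if np.linalg.det(R) < 0:
        # Flip the last singular direction to get a proper rotation, not a reflection.
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    t = dst_mean - R @ src_mean
    return R, t


def icp_point_to_point(src, dst, max_dist=1.0, iterations=20):
    """Align src to dst; returns (R, t) such that R @ s + t approximates dst."""
    dim = src.shape[1]
    R_total, t_total = np.eye(dim), np.zeros(dim)
    current = src.copy()
    for _ in range(iterations):
        # Brute-force nearest neighbours: O(N * M), fine for a toy example.
        d2 = ((current[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)
        dist = np.sqrt(d2[np.arange(len(current)), nn])
        keep = dist < max_dist  # fixed outlier rejection threshold
        if keep.sum() < dim + 1:
            break  # too few correspondences for a stable estimate
        R, t = best_fit_transform(current[keep], dst[nn[keep]])
        current = current @ R.T + t
        # Compose the increment with the transform accumulated so far.
        R_total, t_total = R @ R_total, R @ t_total + t
    return R_total, t_total


grid = np.arange(-2.0, 3.0)
src = np.array([[x, y, z] for x in grid for y in grid for z in grid])
yaw = np.deg2rad(1.0)
R_true = np.array([[np.cos(yaw), -np.sin(yaw), 0.0],
                   [np.sin(yaw), np.cos(yaw), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([0.05, -0.03, 0.02])
dst = src @ R_true.T + t_true
R_est, t_est = icp_point_to_point(src, dst)  # recovers the 1-degree yaw and the shift
```

On this well-separated grid every nearest neighbor is correct in the first iteration, so the closed-form step recovers the motion at once. On real scans, correspondences are noisy and partly wrong, which is where the adaptive threshold and robust kernel described in the abstract come in.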

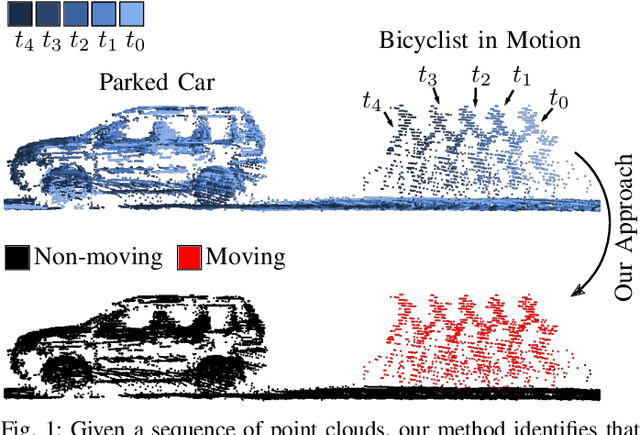

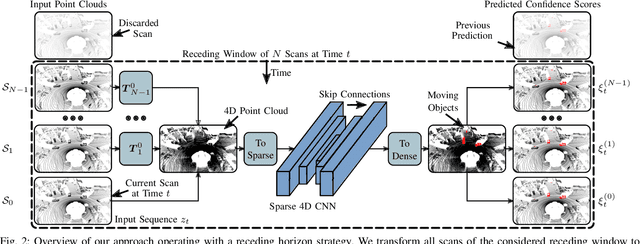

Receding Moving Object Segmentation in 3D LiDAR Data Using Sparse 4D Convolutions

Jun 08, 2022

Abstract: A key challenge for autonomous vehicles is to navigate in unseen dynamic environments. Separating moving objects from static ones is essential for navigation, pose estimation, and understanding how other traffic participants are likely to move in the near future. In this work, we tackle the problem of distinguishing 3D LiDAR points that belong to currently moving objects, like walking pedestrians or driving cars, from points that are obtained from non-moving objects, like walls but also parked cars. Our approach takes a sequence of observed LiDAR scans and turns them into a voxelized sparse 4D point cloud. We apply computationally efficient sparse 4D convolutions to jointly extract spatial and temporal features and predict moving object confidence scores for all points in the sequence. We develop a receding horizon strategy that allows us to predict moving objects online and to refine predictions on the go based on new observations. We use a binary Bayes filter to recursively integrate new predictions of a scan, resulting in a more robust estimate. We evaluate our approach on the SemanticKITTI moving object segmentation challenge and show more accurate predictions than existing methods. Since our approach only operates on the geometric information of point clouds over time, it generalizes well to new, unseen environments, which we evaluate on the Apollo dataset.
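
Turning a sequence of scans into a sparse 4D input can be sketched as quantizing each point's (x, y, z) to a voxel index, appending the scan's time index, and de-duplicating the resulting integer coordinates. The function below is a hypothetical minimal version that assumes the scans are already expressed in a common frame; the voxel size and the use of the scan index as the time coordinate are illustrative choices. The returned `inverse` array maps every input point to its voxel, so per-voxel predictions can be copied back to points.

```python
import numpy as np


def voxelize_4d(scans, voxel_size=0.1):
    """Map a list of (N_k, 3) scans to unique integer (x, y, z, t) voxel coordinates.

    Scan k gets time index k; all scans are assumed to share one reference frame.
    """
    coords = []
    for k, scan in enumerate(scans):
        xyz = np.floor(np.asarray(scan, dtype=np.float64) / voxel_size).astype(np.int64)
        t = np.full((xyz.shape[0], 1), k, dtype=np.int64)
        coords.append(np.hstack([xyz, t]))
    all_coords = np.vstack(coords)
    # Deduplicate rows; `inverse` maps every input point to its voxel row.
    voxels, inverse = np.unique(all_coords, axis=0, return_inverse=True)
    return voxels, inverse.reshape(-1)


scans = [np.array([[0.01, 0.01, 0.01], [0.02, 0.02, 0.02]]),
         np.array([[0.01, 0.01, 0.01]])]
voxels, inverse = voxelize_4d(scans)
print(voxels.tolist(), inverse.tolist())  # [[0, 0, 0, 0], [0, 0, 0, 1]] [0, 0, 1]
```

The same location observed in two scans yields two voxels that differ only in t, which is what allows a 4D convolution to relate a place to itself across time.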

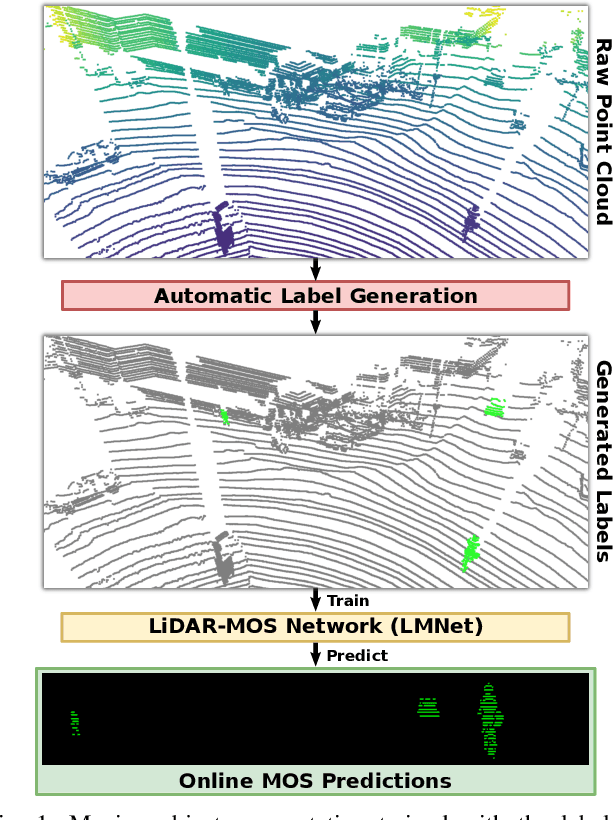

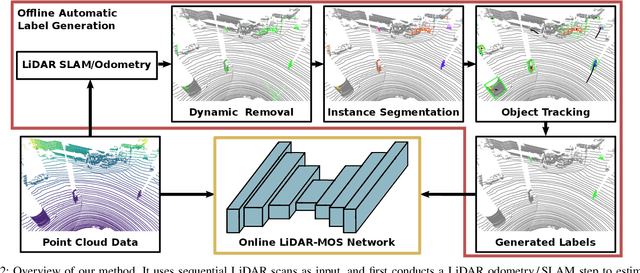

Automatic Labeling to Generate Training Data for Online LiDAR-based Moving Object Segmentation

Jan 12, 2022

Abstract: Understanding the scene is key for autonomously navigating vehicles, and the ability to segment the surroundings online into moving and non-moving objects is a central ingredient for this task. Often, deep learning-based methods are used to perform moving object segmentation (MOS). The performance of these networks, however, strongly depends on the diversity and amount of labeled training data, which may be costly to obtain. In this paper, we propose an automatic data labeling pipeline for 3D LiDAR data to save the extensive manual labeling effort and to improve the performance of existing learning-based MOS systems by automatically generating labeled training data. Our proposed approach achieves this by processing the data offline in batches. First, it exploits an occupancy-based dynamic object removal to coarsely detect possible dynamic objects. Second, it extracts segments among the proposals and tracks them using a Kalman filter. Based on the tracked trajectories, it labels the actually moving objects, such as driving cars and pedestrians, as moving. In contrast, the non-moving objects, e.g., parked cars, lamps, roads, or buildings, are labeled as static. We show that this approach allows us to label LiDAR data highly effectively and compare our results to those of other label generation methods. We also train a deep neural network with our auto-generated labels and achieve performance similar to that of a network trained with manual labels on the same data, and even better performance when using additional datasets with labels generated by our approach. Furthermore, we evaluate our method on multiple datasets using different sensors, and our experiments indicate that our method can generate labels in diverse environments.
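
Tracking segment proposals with a Kalman filter and labeling them by their trajectory can be sketched with a constant-velocity filter on 2D segment centroids and a speed threshold as the decision rule. Both the motion model and the rule are assumptions for illustration, since the abstract does not specify them; the class and function names, noise values, and the 0.5 m/s threshold are hypothetical. Data association between segments across scans is also skipped: each list of centroids is assumed to belong to one track.

```python
import numpy as np


class ConstantVelocityKF:
    """Kalman filter on a 2D centroid with state [x, y, vx, vy]."""

    def __init__(self, xy, dt=0.1, process_var=1.0, meas_var=0.05):
        self.x = np.array([xy[0], xy[1], 0.0, 0.0], dtype=np.float64)
        self.P = np.diag([meas_var, meas_var, 10.0, 10.0])  # velocity unknown at start
        self.F = np.eye(4)
        self.F[0, 2] = self.F[1, 3] = dt  # position += velocity * dt
        self.H = np.eye(2, 4)  # only the centroid position is measured
        # Simplified diagonal process noise (ignores position-velocity cross terms).
        self.Q = process_var * np.diag([dt**4 / 4, dt**4 / 4, dt**2, dt**2])
        self.R = meas_var * np.eye(2)

    def step(self, z):
        # Predict with the constant-velocity motion model.
        self.x = self.F @ self.x
        self.P = self.F @ self.P @ self.F.T + self.Q
        # Correct with the measured centroid z = (x, y).
        innovation = np.asarray(z, dtype=np.float64) - self.H @ self.x
        S = self.H @ self.P @ self.H.T + self.R
        K = self.P @ self.H.T @ np.linalg.inv(S)
        self.x = self.x + K @ innovation
        self.P = (np.eye(4) - K @ self.H) @ self.P

    def speed(self):
        return float(np.hypot(self.x[2], self.x[3]))


def label_track(centroids, dt=0.1, speed_threshold=0.5):
    """Label a tracked segment as moving if its estimated speed exceeds the threshold."""
    kf = ConstantVelocityKF(centroids[0], dt=dt)
    for z in centroids[1:]:
        kf.step(z)
    return "moving" if kf.speed() > speed_threshold else "static"


moving = [(1.0 * k, 0.0) for k in range(50)]  # about 10 m/s along x at 10 Hz
static = [(3.0, 4.0)] * 50
print(label_track(moving), label_track(static))  # moving static
```

A real labeling pipeline would likely inspect the whole trajectory rather than only the final speed estimate, so that objects that stop or start during a batch are handled sensibly.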
