Bastian Wandt

QuaMo: Quaternion Motions for Vision-based 3D Human Kinematics Capture

Jan 27, 2026

Abstract: Vision-based 3D human motion capture from videos remains a challenge in computer vision. Traditional 3D pose estimation approaches often ignore the temporal consistency between frames, which causes implausible and jittery motion. The emerging field of kinematics-based 3D motion capture addresses these issues by estimating the temporal transitions between poses instead. A major drawback of current kinematics approaches is their reliance on Euler angles. Despite their simplicity, Euler angles suffer from discontinuities that lead to unstable motion reconstructions, especially in online settings where trajectory refinement is unavailable. In contrast, quaternions have no discontinuities and can produce continuous transitions between poses. In this paper, we propose QuaMo, a novel Quaternion Motions method using quaternion differential equations (QDE) for human kinematics capture. We use a state-space model, an effective framework for real-time kinematics estimation, with a quaternion state and the QDE describing the quaternion velocity. The corresponding angular acceleration is computed by a meta-PD controller with a novel acceleration enhancement that adaptively regulates the control signals when the human quickly changes to a new pose. Unlike previous work, we solve the QDE under the quaternion unit-sphere constraint, which yields more accurate estimations. Experimental results show that our novel formulation of the QDE with acceleration enhancement accurately estimates 3D human kinematics with no discontinuities and minimal implausibilities. QuaMo outperforms comparable state-of-the-art methods on multiple datasets, namely Human3.6M, Fit3D, SportsPose and AIST. The code is available at https://github.com/cuongle1206/QuaMo
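The ingredients named in this abstract (a quaternion state, the QDE q̇ = ½ q ⊗ (0, ω), and a PD controller producing angular acceleration) can be illustrated with a minimal single-joint sketch. It assumes body-frame angular velocity and a plain PD controller with hand-picked gains `kp` and `kd`; QuaMo's meta-PD controller and its acceleration enhancement are not reproduced, and all function names are hypothetical. Each step uses the exponential map, so the state stays on the unit sphere without renormalization.

```python
import math


def quat_mul(a, b):
    """Hamilton product a ⊗ b of quaternions stored as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def quat_conj(q):
    w, x, y, z = q
    return (w, -x, -y, -z)


def quat_exp_step(omega, dt):
    """exp((0, omega * dt / 2)): exact step of dq/dt = 0.5 * q ⊗ (0, omega)
    for a constant body-frame omega over dt. The result has unit norm."""
    hx, hy, hz = (0.5 * dt * c for c in omega)
    n = math.sqrt(hx * hx + hy * hy + hz * hz)
    s = math.sin(n) / n if n > 1e-12 else 1.0
    return (math.cos(n), s * hx, s * hy, s * hz)


def quat_log(q):
    """Rotation vector (axis * angle) of a unit quaternion, shortest path."""
    w, x, y, z = q
    if w < 0:  # q and -q encode the same rotation; take the short way round
        w, x, y, z = -w, -x, -y, -z
    n = math.sqrt(x * x + y * y + z * z)
    if n < 1e-12:
        return (2.0 * x, 2.0 * y, 2.0 * z)  # small-angle approximation
    angle = 2.0 * math.atan2(n, w)
    return (angle * x / n, angle * y / n, angle * z / n)


def pd_step(q, omega, q_target, dt, kp=40.0, kd=12.0):
    """PD angular acceleration, then an on-manifold quaternion update."""
    err = quat_log(quat_mul(quat_conj(q), q_target))  # body-frame error
    acc = tuple(kp * e - kd * w for e, w in zip(err, omega))
    omega = tuple(w + dt * a for w, a in zip(omega, acc))  # semi-implicit Euler
    q = quat_mul(q, quat_exp_step(omega, dt))
    return q, omega
```

Tracking a 90° rotation about z from the identity for 300 steps of 10 ms converges to the target while the norm stays at 1 to floating-point precision.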

Flow Matching for Probabilistic Monocular 3D Human Pose Estimation

Jan 23, 2026

Abstract: Recovering 3D human poses from a monocular camera view is a highly ill-posed problem due to depth ambiguity. Earlier studies on lifting 3D human poses from 2D often produce incorrect yet overconfident 3D estimations. To mitigate this problem, emerging probabilistic approaches treat the 3D estimations as a distribution, taking the uncertainty of the poses into account. Following this line of work, we propose FMPose, a probabilistic 3D human pose estimation method based on the flow matching generative approach. Conditioned on the 2D cues, the flow matching scheme learns the optimal transport from a simple source distribution to the distribution of plausible 3D human poses via continuous normalizing flows. The 2D lifting condition is modeled with graph convolutional networks, which use the learnable connections between human body joints as the graph structure for feature aggregation. Compared to diffusion-based methods, FMPose with optimal transport generates 3D poses faster and more accurately. Experimental results show major improvements of FMPose over current state-of-the-art methods on three common benchmarks for 3D human pose estimation, namely Human3.6M, MPI-INF-3DHP and 3DPW.
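Flow matching with optimal-transport paths can be sketched in a few lines: training regresses a velocity onto x1 - x0 along the straight path x_t = (1 - t) x0 + t x1, and sampling integrates the learned ODE from noise. This is a generic sketch, not FMPose's implementation. The GCN-based 2D condition is omitted, and `single_target_velocity` is a hypothetical stand-in for a trained network (the exact field when the data is a single pose), used only to show that straight paths can be integrated in very few steps.

```python
import random


def ot_path(x0, x1, t):
    """Straight-line (optimal-transport) interpolant x_t and its velocity target."""
    xt = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
    ut = [b - a for a, b in zip(x0, x1)]
    return xt, ut


def cfm_loss(pred_velocity, ut):
    """Squared-error regression of a predicted velocity onto x1 - x0."""
    return sum((p - u) ** 2 for p, u in zip(pred_velocity, ut))


def euler_sample(velocity, x0, steps=10):
    """Integrate dx/dt = velocity(x, t) from t = 0 to t = 1 with forward Euler."""
    x, dt = list(x0), 1.0 / steps
    for i in range(steps):
        v = velocity(x, i * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


def single_target_velocity(x1):
    """Hypothetical stand-in for a trained network: the exact marginal velocity
    field when the data distribution is the single pose x1."""
    return lambda x, t: [(b - a) / (1.0 - t) for a, b in zip(x, x1)]


rng = random.Random(0)
x1 = [0.1, -0.4, 0.9]  # hypothetical flattened 3D joint coordinates
x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # sample from the source distribution
print(euler_sample(single_target_velocity(x1), x0, steps=1))
```

Because the field along a straight path is constant, even one Euler step lands on `x1` here. This is the intuition behind few-step sampling compared to curved diffusion trajectories.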

On the Role of Rotation Equivariance in Monocular 3D Human Pose Estimation

Jan 20, 2026

Abstract: Estimating 3D from 2D is one of the central tasks in computer vision. In this work, we consider the monocular setting, i.e. single-view input, for 3D human pose estimation (HPE). Here, the task is to predict a 3D point set of human skeletal joints from a single 2D input image. While this is by definition an ill-posed problem, recent work has presented methods that solve it with errors of up to several centimetres. Typically, these methods employ a two-step approach: the first step detects the 2D skeletal joints in the input image, and the second performs 2D-to-3D lifting. We find that common lifting models fail when they encounter rotated input. We argue that learning a single human pose along with its in-plane rotations is considerably easier and more geometrically grounded than directly learning a point-to-point mapping. Furthermore, our intuition is that endowing the model with a notion of rotation equivariance, without explicitly constraining its parameter space, should lead to a more straightforward learning process than building equivariance into the design. Utilising the common HPE benchmarks, we confirm that 2D rotation equivariance per se improves model performance on human poses subject to in-plane rotations, and that it can be learned efficiently and straightforwardly through augmentation, outperforming state-of-the-art equivariant-by-design methods.
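Learning equivariance by augmentation relies on the fact that an in-plane rotation of the 2D input corresponds to a rotation of the 3D target about the camera's optical axis. The following minimal sketch assumes camera-frame 3D joints with z along the optical axis and 2D coordinates measured from the principal point. The function names and the uniform angle range are hypothetical choices, not the paper's exact augmentation protocol.

```python
import math
import random


def project_pinhole(joints_3d, focal=1.0):
    """Pinhole projection of camera-frame joints (z > 0 along the optical axis)."""
    return [(focal * x / z, focal * y / z) for x, y, z in joints_3d]


def rotate_2d(joints_2d, theta):
    """Rotate image-plane joints by theta radians about the principal point."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y, s * x + c * y) for x, y in joints_2d]


def rotate_about_optical_axis(joints_3d, theta):
    """Rotate camera-frame joints by theta about the z (optical) axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in joints_3d]


def augment(joints_2d, joints_3d, rng):
    """Apply the same random in-plane rotation to a 2D input and its 3D target."""
    theta = rng.uniform(-math.pi, math.pi)
    return rotate_2d(joints_2d, theta), rotate_about_optical_axis(joints_3d, theta)
```

Since a rotation about z leaves depth unchanged, projecting the rotated 3D pose gives exactly the rotated 2D pose. The augmented pair is therefore geometrically consistent, which is the property a lifting model is meant to absorb.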

Continuous Normalizing Flows for Uncertainty-Aware Human Pose Estimation

May 04, 2025

Abstract: Human Pose Estimation (HPE) is increasingly important for applications such as virtual reality and motion analysis, yet current methods struggle to balance accuracy, computational efficiency, and reliable uncertainty quantification (UQ). Traditional regression-based methods assume fixed distributions, which can lead to poor UQ. Heatmap-based methods effectively model the output distribution using likelihood heatmaps; however, they demand significant resources. To address this, we propose Continuous Flow Residual Estimation (CFRE), which integrates Continuous Normalizing Flows (CNFs) into regression-based models and thereby allows the output distribution to adapt dynamically. Through extensive experiments, we show that CFRE achieves better accuracy and uncertainty quantification while retaining computational efficiency on both 2D and 3D human pose estimation tasks.
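The core CNF mechanism is the instantaneous change of variables: a sample follows dz/dt = f(z, t) while its log-density changes by d log p / dt = -tr(∂f/∂z). The 1D sketch below integrates both jointly. It is a generic illustration and not CFRE's architecture. The toy field `f(z) = tanh(z)` is hypothetical; in a CNF, f is a learned network.

```python
import math


def standard_normal_logpdf(z):
    return -0.5 * (z * z + math.log(2.0 * math.pi))


def f(z):
    """Toy 1D velocity field (hypothetical stand-in for a learned network)."""
    return math.tanh(z)


def df_dz(z):
    """Its derivative; in 1D the Jacobian trace is just this scalar."""
    return 1.0 - math.tanh(z) ** 2


def cnf_forward(z0, steps=1000):
    """Push z0 through dz/dt = f(z) for t in [0, 1] with forward Euler while
    accumulating d log p / dt = -df/dz, starting from a standard normal base."""
    z, logp, h = z0, standard_normal_logpdf(z0), 1.0 / steps
    for _ in range(steps):
        logp -= h * df_dz(z)  # trace term evaluated before the state update
        z += h * f(z)
    return z, logp
```

The accumulated log-density matches the discrete change-of-variables formula log p0(z0) - log|dz1/dz0| up to the O(h) discretization error, which the check below verifies with a finite-difference Jacobian.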

Utilizing Uncertainty in 2D Pose Detectors for Probabilistic 3D Human Mesh Recovery

Nov 25, 2024

Abstract: Monocular 3D human pose and shape estimation is an inherently ill-posed problem due to depth ambiguities, occlusions, and truncations. Recent probabilistic approaches learn a distribution over plausible 3D human meshes by maximizing the likelihood of the ground-truth pose given an image. We show that this objective function alone is not sufficient to fully capture the distributions. We therefore propose to additionally supervise the learned distributions by minimizing their distance to the distributions encoded in the heatmaps of a 2D pose detector. Moreover, we reveal that current methods often generate incorrect hypotheses for invisible joints, which the evaluation protocols do not detect. We demonstrate that person segmentation masks can be used during training to significantly decrease the number of invalid samples, and we introduce two metrics to evaluate this. Our normalizing flow-based approach predicts plausible 3D human mesh hypotheses that are consistent with the image evidence while maintaining high diversity for ambiguous body parts. Experiments on 3DPW and EMDB show that we outperform other state-of-the-art probabilistic methods. Code is available for research purposes at https://github.com/twehrbein/humr.
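To illustrate how a person segmentation mask can flag invalid hypotheses, here is a sketch of one plausible check: the fraction of projected 2D joint samples that fall outside the mask. This is a hypothetical measure for illustration only. The abstract does not define the paper's two metrics, and `outside_mask_fraction` and its mask layout (a list of rows with values 0 or 1) are assumptions.

```python
def outside_mask_fraction(samples_2d, mask):
    """Fraction of 2D joint hypotheses (x, y in pixels) landing outside a binary
    person mask stored as a list of rows, i.e. mask[y][x] in {0, 1}."""
    h, w = len(mask), len(mask[0])
    outside = 0
    for x, y in samples_2d:
        xi, yi = int(round(x)), int(round(y))  # nearest pixel
        inside = 0 <= xi < w and 0 <= yi < h and mask[yi][xi] == 1
        if not inside:
            outside += 1
    return outside / len(samples_2d)


mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
print(outside_mask_fraction([(1, 1), (2, 2), (0, 0), (10, 10)], mask))  # 0.5
```

Samples that project off the image are counted as outside, since they cannot be explained by the visible person.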

Optimal-State Dynamics Estimation for Physics-based Human Motion Capture from Videos

Oct 10, 2024

Abstract: Human motion capture from monocular videos has made significant progress in recent years. However, modern approaches often produce temporal artifacts, e.g. in the form of jittery motion, and struggle to achieve smooth and physically plausible motions. Explicitly integrating physics, in the form of internal forces and exterior torques, helps alleviate these artifacts. Current state-of-the-art approaches use an automatic PD controller to predict torques and reaction forces in order to re-simulate the input kinematics, i.e. the joint angles of a predefined skeleton. However, due to imperfect physical models, these methods often require simplifying assumptions and extensive preprocessing of the input kinematics to achieve good performance. To this end, we propose a novel method, inspired by neural Kalman filtering, that selectively incorporates the physics models with the kinematics observations in an online setting. We develop a control loop as a meta-PD controller that predicts internal joint torques and external reaction forces, followed by a physics-based motion simulation. A recurrent neural network is introduced to realize a Kalman filter that attentively balances the kinematics input and the simulated motion, resulting in an optimal-state dynamics prediction. We show that this filtering step is crucial for providing online supervision that helps balance the shortcomings of the respective input motions, which makes it important not only for capturing accurate global motion trajectories but also for producing physically plausible human poses. The proposed approach excels in the physics-based human pose estimation task and demonstrates the physical plausibility of the predicted dynamics compared to the state of the art. The code is available at https://github.com/cuongle1206/OSDCap
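The control-then-filter loop described here can be sketched for a single joint with unit inertia: a PD controller turns the kinematic target into a torque, the toy dynamics are simulated one step, and a Kalman update blends the simulated state with the kinematic observation. This is a classic scalar Kalman update with fixed, hypothetical variances (`p_sim`, `r_kin`) and gains. In OSDCap the gain is realized by a recurrent network and the controller is a meta-PD controller, neither of which is reproduced here.

```python
def pd_torque(q, qdot, q_ref, kp, kd):
    """Joint torque from a PD controller tracking the reference angle q_ref."""
    return kp * (q_ref - q) - kd * qdot


def kalman_blend(x_sim, p_sim, x_kin, r_kin):
    """Scalar Kalman update fusing the simulated state (variance p_sim) with the
    kinematic observation (variance r_kin). Returns (estimate, variance)."""
    k = p_sim / (p_sim + r_kin)  # gain in [0, 1]: how much to trust the observation
    return x_sim + k * (x_kin - x_sim), (1.0 - k) * p_sim


def step(q, qdot, q_kin, dt=0.01, kp=100.0, kd=20.0, p_sim=1e-3, r_kin=1e-2):
    """One control-simulate-filter step (toy: unit inertia, no gravity or contact)."""
    tau = pd_torque(q, qdot, q_kin, kp, kd)
    qdot += dt * tau  # unit inertia, so acceleration equals torque
    q_sim = q + dt * qdot
    q_new, _ = kalman_blend(q_sim, p_sim, q_kin, r_kin)
    return q_new, qdot
```

A large `r_kin` (noisy kinematics) keeps the estimate close to the physically simulated motion, while a small one lets the observation dominate.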

Temporally-consistent 3D Reconstruction of Birds

Aug 24, 2024

Abstract: This paper deals with the 3D reconstruction of seabirds, which have recently come into the focus of environmental scientists as valuable bio-indicators for environmental change. Such 3D information is beneficial for analyzing a bird's behavior and physiological shape, for example by tracking changes in motion, shape, and appearance. From a computer vision perspective, birds are especially challenging due to their rapid and often non-rigid motions. We propose an approach to reconstruct the 3D pose and shape from monocular videos of a specific species of seabird: the common murre. Our approach comprises a full pipeline of detection, tracking, segmentation, and temporally consistent 3D reconstruction. Additionally, we propose a temporal loss that extends current single-image 3D bird pose estimators to the temporal domain. Moreover, we provide a real-world dataset of 10,000 video frames that capture nine birds simultaneously on average, covering a large variety of motions and interactions, including a smaller test set with bird-specific keypoint labels. Using our temporal optimization, we achieve state-of-the-art performance on the challenging sequences in our dataset.
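The abstract does not state the form of the temporal loss. A common way to extend a per-frame estimator to video is a first-order smoothness penalty on consecutive frames' parameters, added to the single-image fitting objective. The sketch below assumes that form. `temporal_smoothness_loss` and its `weight` are hypothetical and not taken from the paper.

```python
def temporal_smoothness_loss(params_per_frame, weight=1.0):
    """Mean squared difference between consecutive frames' pose/shape parameter
    vectors; zero for a static sequence, large for jittery ones."""
    if len(params_per_frame) < 2:
        return 0.0
    total = 0.0
    for prev, cur in zip(params_per_frame, params_per_frame[1:]):
        total += sum((c - p) ** 2 for p, c in zip(prev, cur))
    return weight * total / (len(params_per_frame) - 1)


print(temporal_smoothness_loss([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]))  # 1.0
```

Because birds move rapidly, in practice such a term would need a small weight so that it suppresses jitter without flattening genuine fast motions.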

Representing Animatable Avatar via Factorized Neural Fields

Jun 02, 2024

Abstract: For reconstructing high-fidelity 3D human models from monocular videos, it is crucial to maintain consistent large-scale body shapes along with finely matched subtle wrinkles. This paper builds on the observation that the per-frame rendering results can be factorized into a pose-independent component and a corresponding pose-dependent counterpart to facilitate frame consistency. Pose-adaptive textures can be further improved by restricting the frequency bands of these two components. Specifically, pose-independent outputs are expected to be low-frequency, while high-frequency information is linked to pose-dependent factors. We achieve coherent preservation of both coarse body contours across the entire input video and fine-grained, time-variant texture features with a dual-branch network with distinct frequency components. The first branch takes coordinates in canonical space as input, while the second branch additionally considers the features output by the first branch and the pose information of each frame. Our network integrates the information predicted by both branches and uses volume rendering to generate photo-realistic 3D human images. Through experiments, we demonstrate that our network surpasses state-of-the-art methods based on neural radiance fields (NeRF) in preserving high-frequency details and ensuring consistent body contours.
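One common way to restrict a branch's frequency band in NeRF-style networks is to limit the number of sinusoidal frequencies in its positional encoding. The abstract does not say how the bands are restricted, so this is an assumed mechanism. The constants `LOW_FREQS` and `HIGH_FREQS` and both functions are hypothetical.

```python
import math

LOW_FREQS = 4    # hypothetical band limit for the pose-independent branch
HIGH_FREQS = 10  # hypothetical band limit for the pose-dependent branch


def positional_encoding(x, num_freqs):
    """NeRF-style encoding of a scalar: x plus sin/cos at 2^k * pi * x for
    k = 0..num_freqs-1. Fewer frequencies give a smoother (low-pass) input."""
    feats = [x]
    for k in range(num_freqs):
        w = (2.0 ** k) * math.pi
        feats.extend((math.sin(w * x), math.cos(w * x)))
    return feats


def encode_point(p, num_freqs):
    """Concatenate per-axis encodings of a 3D canonical-space coordinate."""
    out = []
    for c in p:
        out.extend(positional_encoding(c, num_freqs))
    return out


low = encode_point((0.1, 0.2, 0.3), LOW_FREQS)    # 27 features
high = encode_point((0.1, 0.2, 0.3), HIGH_FREQS)  # 63 features
```

A multilayer perceptron fed only the low-frequency encoding struggles to represent fine wrinkles, which pushes such detail into the branch that sees the higher frequencies and the per-frame pose.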

CasCalib: Cascaded Calibration for Motion Capture from Sparse Unsynchronized Cameras

May 10, 2024

Abstract: It is now possible to estimate 3D human pose from monocular images with off-the-shelf 3D pose estimators. However, many practical applications require fine-grained absolute pose information, for which multi-view cues and camera calibration are necessary. Such multi-view recordings are laborious because they require manual calibration, and are expensive when using dedicated hardware. Our goal is full automation, which includes temporal synchronization as well as intrinsic and extrinsic camera calibration. This is done by using the persons in the scene as calibration objects. Existing methods either address only synchronization or only calibration, assume one of them as input, or have significant limitations. A common limitation is that they only consider single persons, which eases correspondence finding. We lift this restriction and attain this generality by partitioning the high-dimensional time and calibration space into a cascade of subspaces, and we introduce tailored algorithms to optimize each subspace efficiently and robustly. The outcome is an easy-to-use, flexible, and robust motion capture toolbox that we release to enable scientific applications, which we demonstrate on diverse multi-view benchmarks. Project website: https://github.com/jamestang1998/CasCalib.
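Temporal synchronization of unsynchronized cameras reduces to finding a frame offset between per-camera observations of the same motion. The sketch below uses a plain cross-correlation search over a 1D signal, for example a tracked person's normalized keypoint height per frame. This is a generic technique chosen for illustration and not CasCalib's synchronization algorithm. `best_offset` and the choice of signal are assumptions.

```python
def best_offset(sig_a, sig_b, max_shift):
    """Integer frame shift s that maximizes the mean product of the zero-mean
    signals, comparing sig_b[t + s] against sig_a[t]."""
    def zero_mean(sig):
        m = sum(sig) / len(sig)
        return [v - m for v in sig]

    a, b = zero_mean(sig_a), zero_mean(sig_b)
    best, best_score = 0, float("-inf")
    for s in range(-max_shift, max_shift + 1):
        pairs = [(a[t], b[t + s]) for t in range(len(a)) if 0 <= t + s < len(b)]
        if not pairs:
            continue
        score = sum(x * y for x, y in pairs) / len(pairs)
        if score > best_score:
            best, best_score = s, score
    return best


# A short "event" seen by camera B three frames later than by camera A.
sig_a = [0.0] * 20 + [1.0, 3.0, 5.0, 3.0, 1.0] + [0.0] * 20
sig_b = [0.0] * 3 + sig_a[:-3]
print(best_offset(sig_a, sig_b, max_shift=10))  # 3
```

Bounding `max_shift` keeps the overlap large, which prevents small, spurious overlaps from dominating the score.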

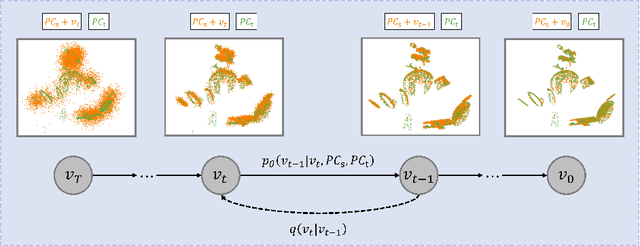

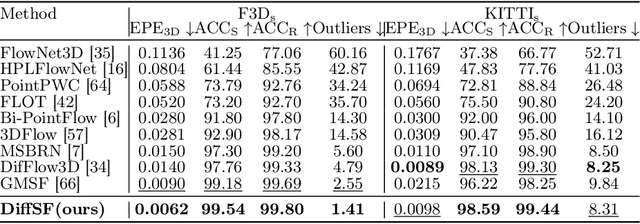

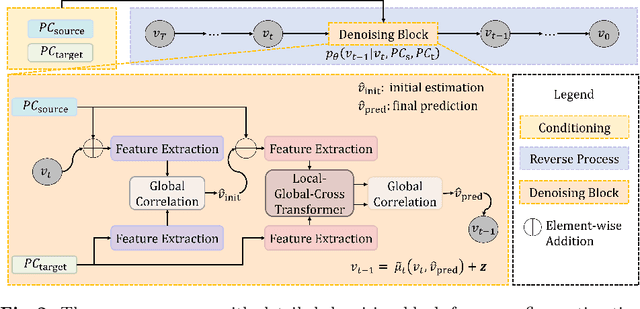

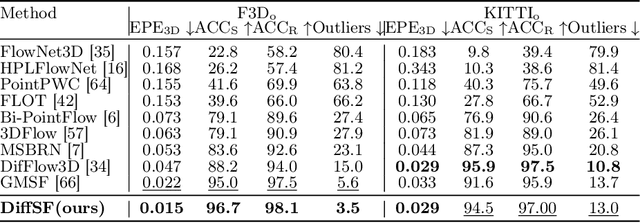

DiffSF: Diffusion Models for Scene Flow Estimation

Mar 14, 2024

Abstract: Scene flow estimation is an essential ingredient for a variety of real-world applications, especially for autonomous agents such as self-driving cars and robots. While recent scene flow estimation approaches achieve reasonable accuracy, their applicability to real-world systems would additionally benefit from a reliability measure. Aiming to improve accuracy while also providing an uncertainty estimate, we propose DiffSF, which combines transformer-based scene flow estimation with denoising diffusion models. In the diffusion process, the ground-truth scene flow vector field is gradually perturbed by adding Gaussian noise. In the reverse process, starting from randomly sampled Gaussian noise, the scene flow vector field prediction is recovered by conditioning on a source and a target point cloud. We show that the diffusion process greatly increases the robustness of predictions compared to prior approaches, resulting in state-of-the-art performance on standard scene flow estimation benchmarks. Moreover, by sampling multiple times with different initial states, the denoising process predicts multiple hypotheses, which enables measuring the output uncertainty and allows our approach to detect a majority of the inaccurate predictions. The code is available at https://github.com/ZhangYushan3/DiffSF.
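The two mechanisms named here can be sketched separately. The first is the forward process x_t = sqrt(ᾱ_t) x_0 + sqrt(1 - ᾱ_t) ε that perturbs a ground-truth flow vector. The second is an uncertainty estimate taken as the spread across several sampled hypotheses. The linear β schedule and its constants are the common DDPM defaults and are assumed here, since the abstract does not give DiffSF's schedule. The transformer-based denoiser is not reproduced, and the function names are hypothetical.

```python
import math
import random


def alpha_bar(t, num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_i) for i < t under a linear beta schedule."""
    prod = 1.0
    for i in range(t):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        prod *= 1.0 - beta
    return prod


def q_sample(x0, t, rng):
    """Forward process: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    ab = alpha_bar(t)
    return [math.sqrt(ab) * v + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0) for v in x0]


def hypothesis_spread(hypotheses):
    """Per-component mean and population std across sampled flow hypotheses;
    a large std flags the prediction as uncertain."""
    n, dims = len(hypotheses), len(hypotheses[0])
    mean = [sum(h[d] for h in hypotheses) / n for d in range(dims)]
    std = [math.sqrt(sum((h[d] - mean[d]) ** 2 for h in hypotheses) / n)
           for d in range(dims)]
    return mean, std


rng = random.Random(0)
flow = [0.3, -0.1, 0.05]  # hypothetical ground-truth flow vector of one point
noisy = q_sample(flow, 500, rng)
```

Running the reverse process several times from different noise seeds yields the hypotheses that `hypothesis_spread` summarizes.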