Anirban Chakraborty

Indian Institute of Science, Bangalore

Minimizing Layerwise Activation Norm Improves Generalization in Federated Learning

Dec 09, 2025Abstract:Federated Learning (FL) is an emerging machine learning framework that enables multiple clients (coordinated by a server) to collaboratively train a global model by aggregating the locally trained models without sharing any client's training data. It has been observed in recent works that learning in a federated manner may lead the aggregated global model to converge to a 'sharp minimum' thereby adversely affecting the generalizability of this FL-trained model. Therefore, in this work, we aim to improve the generalization performance of models trained in a federated setup by introducing a 'flatness' constrained FL optimization problem. This flatness constraint is imposed on the top eigenvalue of the Hessian computed from the training loss. As each client trains a model on its local data, we further re-formulate this complex problem utilizing the client loss functions and propose a new computationally efficient regularization technique, dubbed 'MAN,' which Minimizes Activation's Norm of each layer on client-side models. We also theoretically show that minimizing the activation norm reduces the top eigenvalue of the layer-wise Hessian of the client's loss, which in turn decreases the overall Hessian's top eigenvalue, ensuring convergence to a flat minimum. We apply our proposed flatness-constrained optimization to the existing FL techniques and obtain significant improvements, thereby establishing new state-of-the-art.

O3SLM: Open Weight, Open Data, and Open Vocabulary Sketch-Language Model

Nov 18, 2025Abstract:While Large Vision Language Models (LVLMs) are increasingly deployed in real-world applications, their ability to interpret abstract visual inputs remains limited. Specifically, they struggle to comprehend hand-drawn sketches, a modality that offers an intuitive means of expressing concepts that are difficult to describe textually. We identify the primary bottleneck as the absence of a large-scale dataset that jointly models sketches, photorealistic images, and corresponding natural language instructions. To address this, we present two key contributions: (1) a new, large-scale dataset of image-sketch-instruction triplets designed to facilitate both pretraining and instruction tuning, and (2) O3SLM, an LVLM trained on this dataset. Comprehensive evaluations on multiple sketch-based tasks: (a) object localization, (b) counting, (c) image retrieval i.e., (SBIR and fine-grained SBIR), and (d) visual question answering (VQA); while incorporating the three existing sketch datasets, namely QuickDraw!, Sketchy, and Tu Berlin, along with our generated SketchVCL dataset, show that O3SLM achieves state-of-the-art performance, substantially outperforming existing LVLMs in sketch comprehension and reasoning.

Optimizing Federated Learning for Scalable Power-demand Forecasting in Microgrids

Aug 11, 2025Abstract:Real-time monitoring of power consumption in cities and micro-grids through the Internet of Things (IoT) can help forecast future demand and optimize grid operations. But moving all consumer-level usage data to the cloud for predictions and analysis at fine time scales can expose activity patterns. Federated Learning~(FL) is a privacy-sensitive collaborative DNN training approach that retains data on edge devices, trains the models on private data locally, and aggregates the local models in the cloud. But key challenges exist: (i) clients can have non-independently identically distributed~(non-IID) data, and (ii) the learning should be computationally cheap while scaling to 1000s of (unseen) clients. In this paper, we develop and evaluate several optimizations to FL training across edge and cloud for time-series demand forecasting in micro-grids and city-scale utilities using DNNs to achieve a high prediction accuracy while minimizing the training cost. We showcase the benefit of using exponentially weighted loss while training and show that it further improves the prediction of the final model. Finally, we evaluate these strategies by validating over 1000s of clients for three states in the US from the OpenEIA corpus, and performing FL both in a pseudo-distributed setting and a Pi edge cluster. The results highlight the benefits of the proposed methods over baselines like ARIMA and DNNs trained for individual consumers, which are not scalable.

Pose-Transformation and Radial Distance Clustering for Unsupervised Person Re-identification

Nov 06, 2024

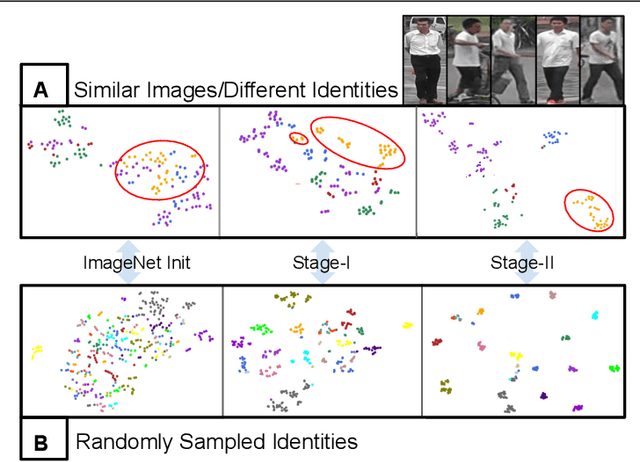

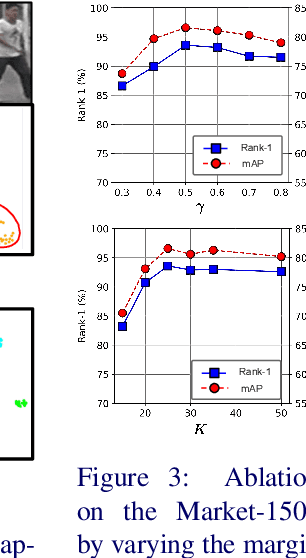

Abstract:Person re-identification (re-ID) aims to tackle the problem of matching identities across non-overlapping cameras. Supervised approaches require identity information that may be difficult to obtain and are inherently biased towards the dataset they are trained on, making them unscalable across domains. To overcome these challenges, we propose an unsupervised approach to the person re-ID setup. Having zero knowledge of true labels, our proposed method enhances the discriminating ability of the learned features via a novel two-stage training strategy. The first stage involves training a deep network on an expertly designed pose-transformed dataset obtained by generating multiple perturbations for each original image in the pose space. Next, the network learns to map similar features closer in the feature space using the proposed discriminative clustering algorithm. We introduce a novel radial distance loss, that attends to the fundamental aspects of feature learning - compact clusters with low intra-cluster and high inter-cluster variation. Extensive experiments on several large-scale re-ID datasets demonstrate the superiority of our method compared to state-of-the-art approaches.

Learning non-Gaussian spatial distributions via Bayesian transport maps with parametric shrinkage

Sep 28, 2024Abstract:Many applications, including climate-model analysis and stochastic weather generators, require learning or emulating the distribution of a high-dimensional and non-Gaussian spatial field based on relatively few training samples. To address this challenge, a recently proposed Bayesian transport map (BTM) approach consists of a triangular transport map with nonparametric Gaussian-process (GP) components, which is trained to transform the distribution of interest distribution to a Gaussian reference distribution. To improve the performance of this existing BTM, we propose to shrink the map components toward a ``base'' parametric Gaussian family combined with a Vecchia approximation for scalability. The resulting ShrinkTM approach is more accurate than the existing BTM, especially for small numbers of training samples. It can even outperform the ``base'' family when trained on a single sample of the spatial field. We demonstrate the advantage of ShrinkTM though numerical experiments on simulated data and on climate-model output.

Improving Domain Adaptation Through Class Aware Frequency Transformation

Jul 28, 2024Abstract:In this work, we explore the usage of the Frequency Transformation for reducing the domain shift between the source and target domain (e.g., synthetic image and real image respectively) towards solving the Domain Adaptation task. Most of the Unsupervised Domain Adaptation (UDA) algorithms focus on reducing the global domain shift between labelled source and unlabelled target domains by matching the marginal distributions under a small domain gap assumption. UDA performance degrades for the cases where the domain gap between source and target distribution is large. In order to bring the source and the target domains closer, we propose a novel approach based on traditional image processing technique Class Aware Frequency Transformation (CAFT) that utilizes pseudo label based class consistent low-frequency swapping for improving the overall performance of the existing UDA algorithms. The proposed approach, when compared with the state-of-the-art deep learning based methods, is computationally more efficient and can easily be plugged into any existing UDA algorithm to improve its performance. Additionally, we introduce a novel approach based on absolute difference of top-2 class prediction probabilities (ADT2P) for filtering target pseudo labels into clean and noisy sets. Samples with clean pseudo labels can be used to improve the performance of unsupervised learning algorithms. We name the overall framework as CAFT++. We evaluate the same on the top of different UDA algorithms across many public domain adaptation datasets. Our extensive experiments indicate that CAFT++ is able to achieve significant performance gains across all the popular benchmarks.

Sketch-guided Image Inpainting with Partial Discrete Diffusion Process

Apr 18, 2024Abstract:In this work, we study the task of sketch-guided image inpainting. Unlike the well-explored natural language-guided image inpainting, which excels in capturing semantic details, the relatively less-studied sketch-guided inpainting offers greater user control in specifying the object's shape and pose to be inpainted. As one of the early solutions to this task, we introduce a novel partial discrete diffusion process (PDDP). The forward pass of the PDDP corrupts the masked regions of the image and the backward pass reconstructs these masked regions conditioned on hand-drawn sketches using our proposed sketch-guided bi-directional transformer. The proposed novel transformer module accepts two inputs -- the image containing the masked region to be inpainted and the query sketch to model the reverse diffusion process. This strategy effectively addresses the domain gap between sketches and natural images, thereby, enhancing the quality of inpainting results. In the absence of a large-scale dataset specific to this task, we synthesize a dataset from the MS-COCO to train and extensively evaluate our proposed framework against various competent approaches in the literature. The qualitative and quantitative results and user studies establish that the proposed method inpaints realistic objects that fit the context in terms of the visual appearance of the provided sketch. To aid further research, we have made our code publicly available at https://github.com/vl2g/Sketch-Inpainting .

Scalable Model-Based Gaussian Process Clustering

Sep 14, 2023

Abstract:Gaussian process is an indispensable tool in clustering functional data, owing to it's flexibility and inherent uncertainty quantification. However, when the functional data is observed over a large grid (say, of length $p$), Gaussian process clustering quickly renders itself infeasible, incurring $O(p^2)$ space complexity and $O(p^3)$ time complexity per iteration; and thus prohibiting it's natural adaptation to large environmental applications. To ensure scalability of Gaussian process clustering in such applications, we propose to embed the popular Vecchia approximation for Gaussian processes at the heart of the clustering task, provide crucial theoretical insights towards algorithmic design, and finally develop a computationally efficient expectation maximization (EM) algorithm. Empirical evidence of the utility of our proposal is provided via simulations and analysis of polar temperature anomaly (\href{https://www.ncei.noaa.gov/access/monitoring/climate-at-a-glance/global/time-series}{noaa.gov}) data-sets.

DAD++: Improved Data-free Test Time Adversarial Defense

Sep 10, 2023Abstract:With the increasing deployment of deep neural networks in safety-critical applications such as self-driving cars, medical imaging, anomaly detection, etc., adversarial robustness has become a crucial concern in the reliability of these networks in real-world scenarios. A plethora of works based on adversarial training and regularization-based techniques have been proposed to make these deep networks robust against adversarial attacks. However, these methods require either retraining models or training them from scratch, making them infeasible to defend pre-trained models when access to training data is restricted. To address this problem, we propose a test time Data-free Adversarial Defense (DAD) containing detection and correction frameworks. Moreover, to further improve the efficacy of the correction framework in cases when the detector is under-confident, we propose a soft-detection scheme (dubbed as "DAD++"). We conduct a wide range of experiments and ablations on several datasets and network architectures to show the efficacy of our proposed approach. Furthermore, we demonstrate the applicability of our approach in imparting adversarial defense at test time under data-free (or data-efficient) applications/setups, such as Data-free Knowledge Distillation and Source-free Unsupervised Domain Adaptation, as well as Semi-supervised classification frameworks. We observe that in all the experiments and applications, our DAD++ gives an impressive performance against various adversarial attacks with a minimal drop in clean accuracy. The source code is available at: https://github.com/vcl-iisc/Improved-Data-free-Test-Time-Adversarial-Defense

Federated Learning on Heterogeneous Data via Adaptive Self-Distillation

May 31, 2023

Abstract:Federated Learning (FL) is a machine learning paradigm that enables clients to jointly train a global model by aggregating the locally trained models without sharing any local training data. In practice, there can often be substantial heterogeneity (e.g., class imbalance) across the local data distributions observed by each of these clients. Under such non-iid data distributions across clients, FL suffers from the 'client-drift' problem where every client converges to its own local optimum. This results in slower convergence and poor performance of the aggregated model. To address this limitation, we propose a novel regularization technique based on adaptive self-distillation (ASD) for training models on the client side. Our regularization scheme adaptively adjusts to the client's training data based on: (1) the closeness of the local model's predictions with that of the global model and (2) the client's label distribution. The proposed regularization can be easily integrated atop existing, state-of-the-art FL algorithms leading to a further boost in the performance of these off-the-shelf methods. We demonstrate the efficacy of our proposed FL approach through extensive experiments on multiple real-world benchmarks (including datasets with common corruptions and perturbations) and show substantial gains in performance over the state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge