Albert Meroño-Peñuela

Hatevolution: What Static Benchmarks Don't Tell Us

Jun 13, 2025

Abstract:Language changes over time, including in the hate speech domain, which evolves quickly following social dynamics and cultural shifts. While NLP research has investigated the impact of language evolution on model training and has proposed several solutions for it, its impact on model benchmarking remains under-explored. Yet, hate speech benchmarks play a crucial role in ensuring model safety. In this paper, we empirically evaluate the robustness of 20 language models across two evolving hate speech experiments, and we show the temporal misalignment between static and time-sensitive evaluations. Our findings call for time-sensitive linguistic benchmarks in order to correctly and reliably evaluate language models in the hate speech domain.

Towards Responsible AI Music: an Investigation of Trustworthy Features for Creative Systems

Mar 24, 2025

Abstract:Generative AI is radically changing the creative arts, by fundamentally transforming the way we create and interact with cultural artefacts. While offering unprecedented opportunities for artistic expression and commercialisation, this technology also raises ethical, societal, and legal concerns. Key among these are the potential displacement of human creativity, copyright infringement stemming from vast training datasets, and the lack of transparency, explainability, and fairness mechanisms. As generative systems become pervasive in this domain, responsible design is crucial. Whilst previous work has tackled isolated aspects of generative systems (e.g., transparency, evaluation, data), we take a comprehensive approach, grounding these efforts within the Ethics Guidelines for Trustworthy Artificial Intelligence produced by the High-Level Expert Group on AI appointed by the European Commission - a framework for designing responsible AI systems across seven macro requirements. Focusing on generative music AI, we illustrate how these requirements can be contextualised for the field, addressing trustworthiness across multiple dimensions and integrating insights from the existing literature. We further propose a roadmap for operationalising these contextualised requirements, emphasising interdisciplinary collaboration and stakeholder engagement. Our work provides a foundation for designing and evaluating responsible music generation systems, calling for collaboration among AI experts, ethicists, legal scholars, and artists. This manuscript is accompanied by a website: https://amresearchlab.github.io/raim-framework/.

PathE: Leveraging Entity-Agnostic Paths for Parameter-Efficient Knowledge Graph Embeddings

Jan 31, 2025

Abstract:Knowledge Graphs (KGs) store human knowledge in the form of entities (nodes) and relations, and are used extensively in various applications. KG embeddings are an effective approach to addressing tasks like knowledge discovery, link prediction, and reasoning. This is often done by allocating and learning embedding tables for all or a subset of the entities. As this scales linearly with the number of entities, learning embedding models in real-world KGs with millions of nodes can be computationally intractable. To address this scalability problem, our model, PathE, only allocates embedding tables for relations (which are typically orders of magnitude fewer than the entities) and requires less than 25% of the parameters of previous parameter efficient methods. Rather than storing entity embeddings, we learn to compute them by leveraging multiple entity-relation paths to contextualise individual entities within triples. Evaluated on four benchmarks, PathE achieves state-of-the-art performance in relation prediction, and remains competitive in link prediction on path-rich KGs while training on consumer-grade hardware. We perform ablation experiments to test our design choices and analyse the sensitivity of the model to key hyper-parameters. PathE is efficient and cost-effective for relationally diverse and well-connected KGs commonly found in real-world applications.

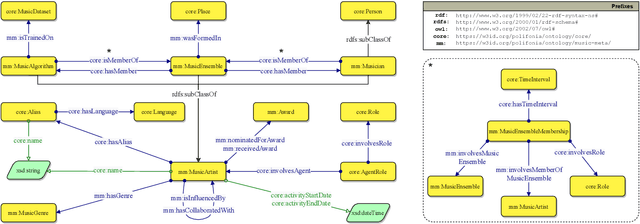

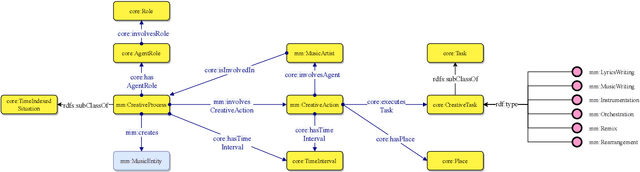

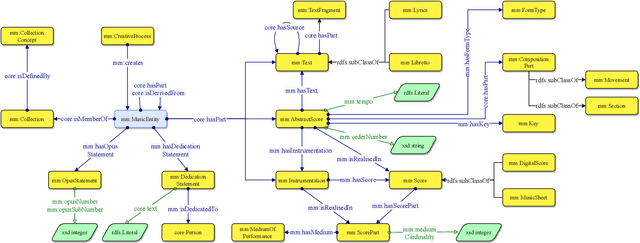

The Music Meta Ontology: a flexible semantic model for the interoperability of music metadata

Nov 07, 2023

Abstract:The semantic description of music metadata is a key requirement for the creation of music datasets that can be aligned, integrated, and accessed for information retrieval and knowledge discovery. It is nonetheless an open challenge due to the complexity of musical concepts arising from different genres, styles, and periods -- standing to benefit from a lingua franca to accommodate various stakeholders (musicologists, librarians, data engineers, etc.). To initiate this transition, we introduce the Music Meta ontology, a rich and flexible semantic model to describe music metadata related to artists, compositions, performances, recordings, and links. We follow eXtreme Design methodologies and best practices for data engineering, to reflect the perspectives and the requirements of various stakeholders into the design of the model, while leveraging ontology design patterns and accounting for provenance at different levels (claims, links). After presenting the main features of Music Meta, we provide a first evaluation of the model, alignments to other schema (Music Ontology, DOREMUS, Wikidata), and support for data transformation.

The Music Annotation Pattern

Mar 30, 2023

Abstract:The annotation of music content is a complex process to represent due to its inherently multifaceted, subjective, and interdisciplinary nature. Numerous systems and conventions for annotating music have been developed as independent standards over the past decades. Little has been done to make them interoperable, which jeopardises cross-corpora studies as it requires users to familiarise themselves with a multitude of conventions. Most of these systems lack the semantic expressiveness needed to represent the complexity of the musical language and cannot model multi-modal annotations originating from audio and symbolic sources. In this article, we introduce the Music Annotation Pattern, an Ontology Design Pattern (ODP) to homogenise different annotation systems and to represent several types of musical objects (e.g. chords, patterns, structures). This ODP preserves the semantics of the object's content at different levels and temporal granularity. Moreover, our ODP accounts for multi-modality upfront, to describe annotations derived from different sources, and it is the first to enable the integration of music datasets at a large scale.

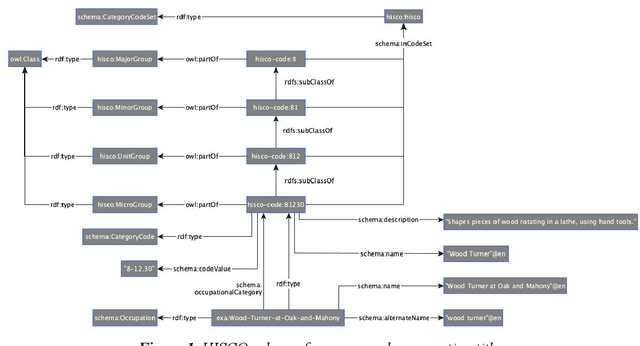

Ontologies in CLARIAH: Towards Interoperability in History, Language and Media

Apr 06, 2020

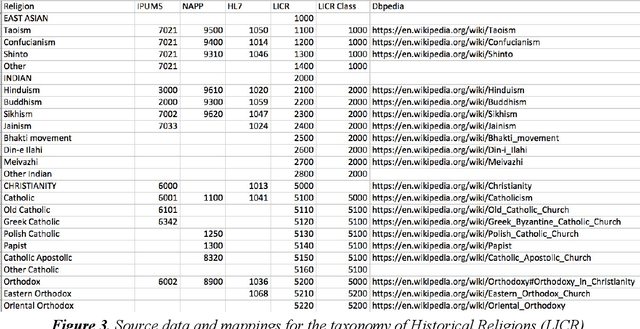

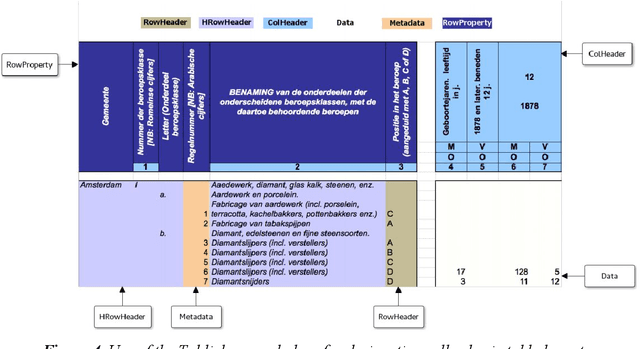

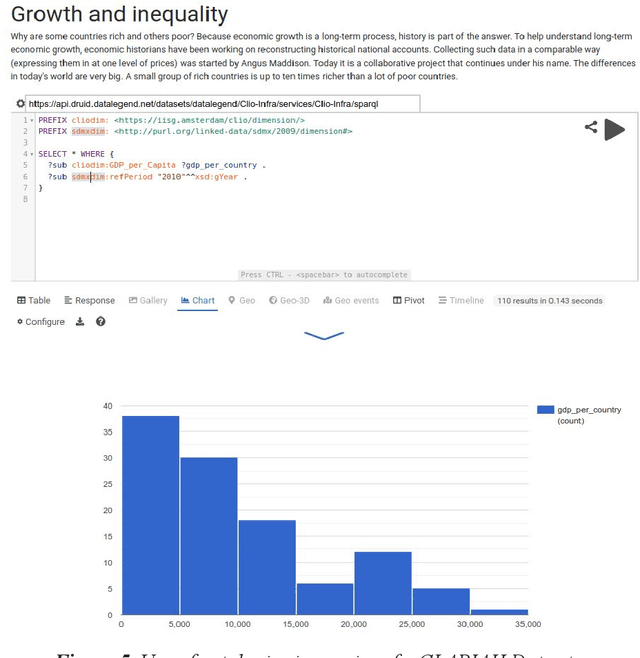

Abstract:One of the most important goals of digital humanities is to provide researchers with data and tools for new research questions, either by increasing the scale of scholarly studies, linking existing databases, or improving the accessibility of data. Here, the FAIR principles provide a useful framework as these state that data needs to be: Findable, as they are often scattered among various sources; Accessible, since some might be offline or behind paywalls; Interoperable, thus using standard knowledge representation formats and shared vocabularies; and Reusable, through adequate licensing and permissions. Integrating data from diverse humanities domains is not trivial: the research questions (such as "was economic wealth equally distributed in the 18th century?", or "what are narratives constructed around disruptive media events?") and preparation phases (e.g. data collection, knowledge organisation, cleaning) of scholars need to be taken into account. In this chapter, we describe the ontologies and tools developed and integrated in the Dutch national project CLARIAH to address these issues across datasets from three fundamental domains or "pillars" of the humanities (linguistics, social and economic history, and media studies) that have paradigmatic data representations (textual corpora, structured data, and multimedia). We summarise the lessons learnt from using such ontologies and tools in these domains from a generalisation and reusability perspective.

Release Early, Release Often: Predicting Change in Versioned Knowledge Organization Systems on the Web

Sep 15, 2015

Abstract:The Semantic Web is built on top of Knowledge Organization Systems (KOS) (vocabularies, ontologies, concept schemes) that provide a structured, interoperable and distributed access to Linked Data on the Web. The maintenance of these KOS over time has produced a number of KOS version chains: subsequent unique version identifiers to unique states of a KOS. However, the release of new KOS versions poses challenges to both KOS publishers and users. For publishers, updating a KOS is a knowledge intensive task that requires a lot of manual effort, often implying deep deliberation on the set of changes to introduce. For users that link their datasets to these KOS, a new version compromises the validity of their links, often creating ramifications. In this paper we describe a method to automatically detect which parts of a Web KOS are likely to change in a next version, using supervised learning on past versions in the KOS version chain. We use a set of ontology change features to model and predict change in arbitrary Web KOS. We apply our method on 139 varied datasets systematically retrieved from the Semantic Web, obtaining robust results at correctly predicting change. To illustrate the accuracy, genericity and domain independence of the method, we study the relationship between its effectiveness and several characterizations of the evaluated datasets, finding that predictors like the number of versions in a chain and their release frequency have a fundamental impact on the predictability of change in Web KOS. Consequently, we argue for adopting a release early, release often philosophy in Web KOS development cycles.
