Aaron Flores

Optimal Downsampling for Imbalanced Classification with Generalized Linear Models

Oct 11, 2024

Abstract:Downsampling or under-sampling is a technique that is utilized in the context of large and highly imbalanced classification models. We study optimal downsampling for imbalanced classification using generalized linear models (GLMs). We propose a pseudo maximum likelihood estimator and study its asymptotic normality in the context of increasingly imbalanced populations relative to an increasingly large sample size. We provide theoretical guarantees for the introduced estimator. Additionally, we compute the optimal downsampling rate using a criterion that balances statistical accuracy and computational efficiency. Our numerical experiments, conducted on both synthetic and empirical data, further validate our theoretical results, and demonstrate that the introduced estimator outperforms commonly available alternatives.
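
As a rough illustration of why downsampling needs a correction step, the sketch below keeps every positive example, keeps each negative with probability `keep_rate`, and then maps a probability fitted on the downsampled data back to the original population with the standard odds correction. This is the textbook adjustment, not the pseudo maximum likelihood estimator proposed in the paper; the function names and `keep_rate` parameter are assumptions made for illustration.

```python
import random


def downsample_negatives(labels, keep_rate, seed=0):
    """Return indices to keep: all positives, each negative with prob. keep_rate."""
    rng = random.Random(seed)
    return [i for i, y in enumerate(labels) if y == 1 or rng.random() < keep_rate]


def correct_probability(p_sampled, keep_rate):
    """Map a probability estimated on downsampled data back to the full population.

    Dropping negatives inflates the odds by a factor of 1 / keep_rate,
    so the original odds are keep_rate times the downsampled odds.
    """
    numerator = keep_rate * p_sampled
    return numerator / (numerator + (1.0 - p_sampled))


# Hypothetical usage: a score of 0.5 after keeping 10% of negatives
# corresponds to 1/11 in the original population.
corrected = correct_probability(0.5, 0.1)
```

The correction only rescales the odds; it says nothing about which `keep_rate` is best, which is the question the abstract addresses.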

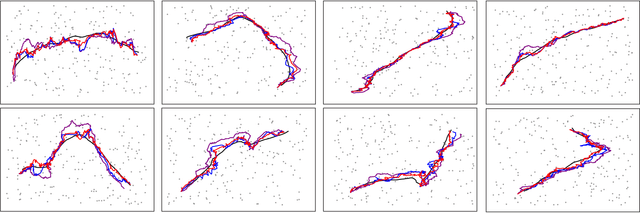

Nonlinear Kalman Filtering with Reparametrization Gradients

Mar 08, 2023

Abstract:We introduce a novel nonlinear Kalman filter that utilizes reparametrization gradients. The widely used parametric approximation is based on a jointly Gaussian assumption of the state-space model, which is in turn equivalent to minimizing an approximation to the Kullback-Leibler divergence. It is possible to obtain better approximations using the alpha divergence, but the resulting problem is substantially more complex. In this paper, we introduce an alternate formulation based on an energy function, which can be optimized instead of the alpha divergence. The optimization can be carried out using reparametrization gradients, a technique that has recently been utilized in a number of deep learning models.

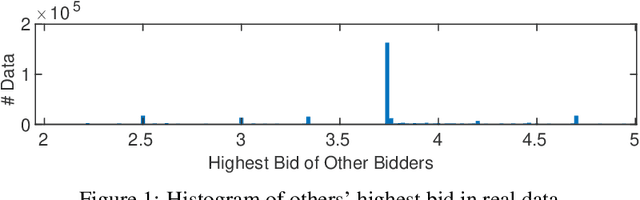

Leveraging the Hints: Adaptive Bidding in Repeated First-Price Auctions

Nov 05, 2022

Abstract:With the advent and increasing consolidation of e-commerce, digital advertising has very recently replaced traditional advertising as the main marketing force in the economy. In the past four years, a particularly important development in the digital advertising industry is the shift from second-price auctions to first-price auctions for online display ads. This shift immediately motivated the intellectually challenging question of how to bid in first-price auctions, because unlike in second-price auctions, bidding one's private value truthfully is no longer optimal. Following a series of recent works in this area, we consider a differentiated setup: we do not make any assumption about other bidders' maximum bid (i.e. it can be adversarial over time), and instead assume that we have access to a hint that serves as a prediction of other bidders' maximum bid, where the prediction is learned through some blackbox machine learning model. We consider two types of hints: one where a single point-prediction is available, and the other where a hint interval (representing a type of confidence region into which others' maximum bid falls) is available. We establish minimax optimal regret bounds for both cases and highlight the quantitatively different behavior between the two settings. We also provide improved regret bounds when the others' maximum bid exhibits the further structure of sparsity. Finally, we complement the theoretical results with demonstrations using real bidding data.

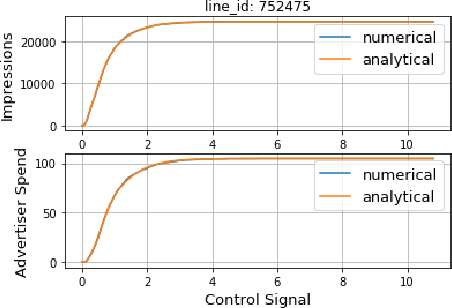

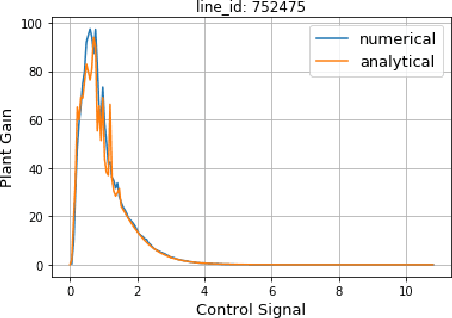

Mid-flight Forecasting for CPA Lines in Online Advertising

Jul 15, 2021

Abstract:For the Verizon Media Demand Side Platform (DSP), forecasting of ad campaign performance not only feeds key information to the optimization server to allow the system to operate in a high-performance mode, but also produces actionable insights for advertisers. In this paper, the forecasting problem for CPA lines in the middle of the flight is investigated by taking the bidding mechanism into account. The proposed methodology generates relationships between various key performance metrics and optimization signals. It can also be used to estimate the sensitivity of ad campaign performance metrics to adjustments of the optimization signal, which is important to the design of a campaign management system. The relationship between advertiser spend and effective Cost Per Action (eCPA) is also characterized, which serves as guidance to advertisers for mid-flight line adjustment. Several practical issues in implementation, such as downsampling of the dataset, are also discussed in the paper. Finally, the forecasting results are validated against actual deliveries and demonstrate promising accuracy.

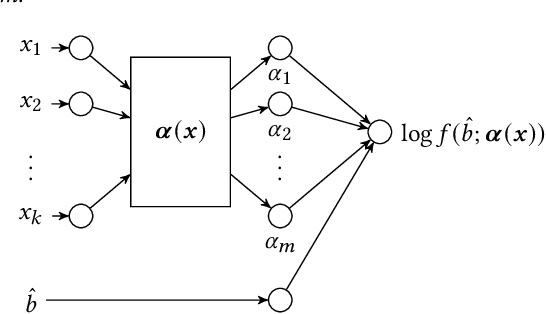

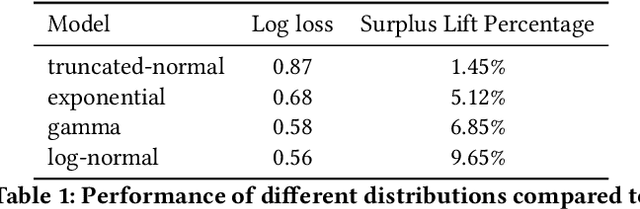

An Efficient Deep Distribution Network for Bid Shading in First-Price Auctions

Jul 15, 2021

Abstract:Since 2019, most ad exchanges and sell-side platforms (SSPs) in the online advertising industry have shifted from second-price to first-price auctions. Due to the fundamental difference between these auctions, demand-side platforms (DSPs) have had to update their bidding strategies to avoid bidding unnecessarily high and hence overpaying. Bid shading was proposed to adjust the bid price intended for second-price auctions, in order to balance cost and winning probability in a first-price auction setup. In this study, we introduce a novel deep distribution network for optimal bidding in both open (non-censored) and closed (censored) online first-price auctions. Offline and online A/B testing results show that our algorithm outperforms previous state-of-the-art algorithms in terms of both surplus and effective cost per action (eCPX) metrics. Furthermore, the algorithm is optimized in run-time and has been deployed into the Verizon Media DSP as a production algorithm, serving hundreds of billions of bid requests per day. Online A/B tests show that advertisers' ROI is improved by +2.4%, +2.4%, and +8.6% for impression-based (CPM), click-based (CPC), and conversion-based (CPA) campaigns respectively.

$FM^2$: Field-matrixed Factorization Machines for Recommender Systems

Feb 20, 2021

Abstract:Click-through rate (CTR) prediction plays a critical role in recommender systems and online advertising. The data used in these applications are multi-field categorical data, where each feature belongs to one field. Field information has proved to be important, and several works consider fields in their models. In this paper, we propose a novel approach to model the field information effectively and efficiently. The proposed approach is a direct improvement of FwFM, and is named Field-matrixed Factorization Machines (FmFM, or $FM^2$). We also propose a new explanation of FM and FwFM within the FmFM framework, and compare it with the FFM. Besides pruning the cross terms, our model supports field-specific variable dimensions of embedding vectors, which acts as soft pruning. We also propose an efficient way to minimize the dimension while keeping the model performance. The FmFM model can also be optimized further by caching the intermediate vectors, and it only takes thousands of floating-point operations (FLOPs) to make a prediction. Our experimental results show that it can outperform the FFM, which is more complex. The FmFM model's performance is also comparable to DNN models, which require many more FLOPs at runtime.
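
The field-matrixed interaction can be sketched for a single feature pair as follows. This is a minimal illustration, assuming the pairwise term has the form $\langle v_i M_{F(i),F(j)}, v_j \rangle$, where $M_{F(i),F(j)}$ is a matrix attached to the pair of fields; the function name and the plain-list representation are assumptions, and nothing here reproduces the authors' implementation. Under this reading, an identity matrix gives the plain FM dot product, a scaled identity behaves like a scalar field-pair weight in the spirit of FwFM, and a rectangular matrix lets the two fields use different embedding dimensions.

```python
def fmfm_pair(v_i, v_j, field_matrix):
    """Interaction of two embeddings through a field-pair matrix.

    v_i has length d_i, v_j has length d_j, and field_matrix is d_i x d_j
    (a list of rows), so the two fields may use different dimensions.
    """
    # Project v_i into the embedding space of the second field.
    projected = [
        sum(v_i[a] * field_matrix[a][b] for a in range(len(v_i)))
        for b in range(len(v_j))
    ]
    # Dot product of the projected vector with v_j.
    return sum(p * q for p, q in zip(projected, v_j))


identity = [[1.0, 0.0], [0.0, 1.0]]
fm_like = fmfm_pair([1.0, 2.0], [3.0, 4.0], identity)          # plain dot product
scaled = [[0.5, 0.0], [0.0, 0.5]]
fwfm_like = fmfm_pair([1.0, 2.0], [3.0, 4.0], scaled)          # scalar-weighted
rectangular = [[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
mixed_dims = fmfm_pair([1.0, 2.0], [1.0, 1.0, 1.0], rectangular)  # d_i=2, d_j=3
```

Because the projection of `v_i` depends only on the feature and the target field, it can be computed once and reused, which is one way to read the abstract's remark about caching intermediate vectors.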

Bid Shading by Win-Rate Estimation and Surplus Maximization

Sep 19, 2020

Abstract:This paper describes a new win-rate based bid shading algorithm (WR) that does not rely on the minimum-bid-to-win feedback from a Sell-Side Platform (SSP). The method uses a modified logistic regression to predict the profit from each possible shaded bid price. The functional form allows fast maximization at run-time, a key requirement for Real-Time Bidding (RTB) systems. We report production results from this method along with several other algorithms. We found that bid shading, in general, can deliver significant value to advertisers, reducing price per impression to about 55% of the unshaded cost. Further, the particular approach described in this paper captures 7% more profit for advertisers than benchmark methods that simply bid the most probable winning price. We also report 4.3% higher surplus than an industry Sell-Side Platform shading service. Furthermore, we observed 3% - 7% lower eCPM, eCPC and eCPA when the algorithm was integrated with budget controllers. We attribute these gains mainly to the explicit maximization of the surplus function, and note that other algorithms can take advantage of this same approach.
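
The idea of explicit surplus maximization can be sketched as below. The sketch assumes the expected surplus of a bid is (value - bid) times the probability of winning at that bid, models the win probability with an ordinary logistic curve, and searches a grid of candidate bids. The plain logistic form, the parameter names `intercept` and `slope`, and the grid search are all assumptions for illustration; the paper describes a modified logistic regression whose details are not given in the abstract.

```python
import math


def win_probability(bid, intercept, slope):
    """Assumed logistic win-rate curve: higher bids win more often."""
    return 1.0 / (1.0 + math.exp(-(intercept + slope * bid)))


def best_shaded_bid(value, intercept, slope, steps=1000):
    """Grid-search the bid in [0, value] that maximizes expected surplus."""
    best_bid, best_surplus = 0.0, 0.0
    for k in range(steps + 1):
        bid = value * k / steps
        # Expected surplus: margin kept if we win, times chance of winning.
        surplus = (value - bid) * win_probability(bid, intercept, slope)
        if surplus > best_surplus:
            best_bid, best_surplus = bid, surplus
    return best_bid, best_surplus


# Hypothetical numbers: an impression worth 10 with an assumed win-rate curve.
bid, surplus = best_shaded_bid(value=10.0, intercept=-3.0, slope=1.0)
```

The grid search is only for clarity. A closed-form or one-dimensional root-finding step would be the natural choice under real-time latency constraints.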

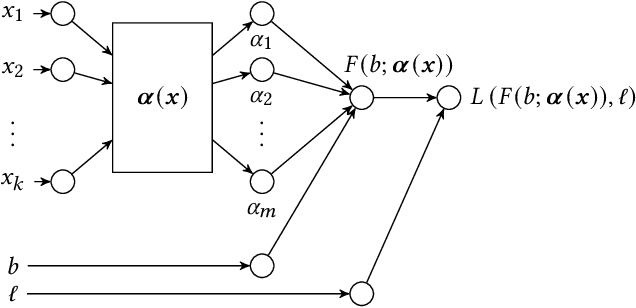

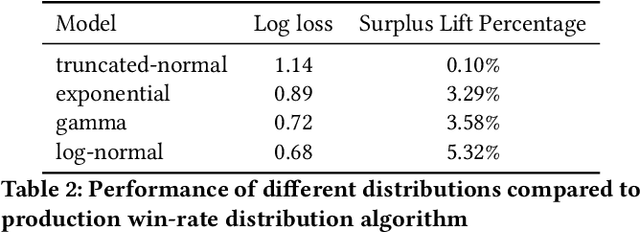

Bid Shading in The Brave New World of First-Price Auctions

Sep 02, 2020

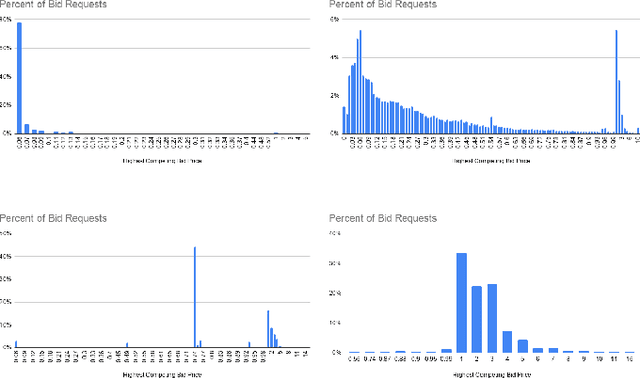

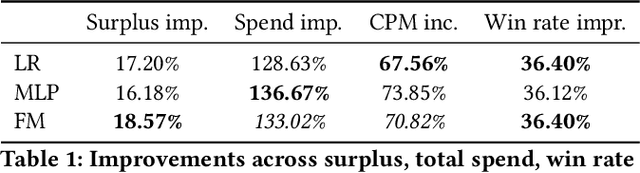

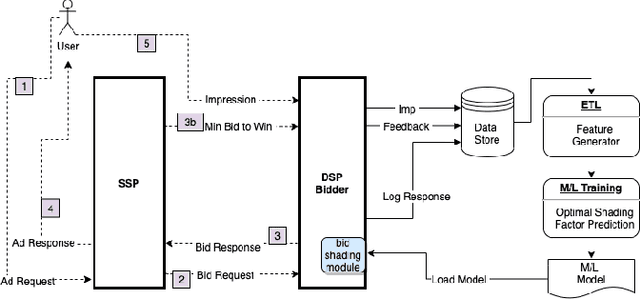

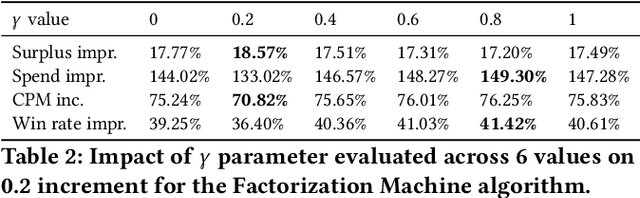

Abstract:Online auctions play a central role in online advertising, and are one of the main reasons for the industry's scalability and growth. With great changes in how auctions are being organized, such as the switch from second-price to first-price auctions, advertisers and demand platforms are compelled to adapt to a new volatile environment. Bid shading is a known technique for preventing overpaying in auction systems that can help maintain the strategy equilibrium in first-price auctions, tackling one of their greatest drawbacks. In this study, we propose a machine learning approach to modeling optimal bid shading for non-censored online first-price ad auctions. We clearly motivate the approach and extensively evaluate it in both offline and online settings on a major demand side platform. The results demonstrate the superiority and robustness of the new approach as compared to the existing approaches across a range of performance metrics.

Learning to Bid Optimally and Efficiently in Adversarial First-price Auctions

Jul 09, 2020

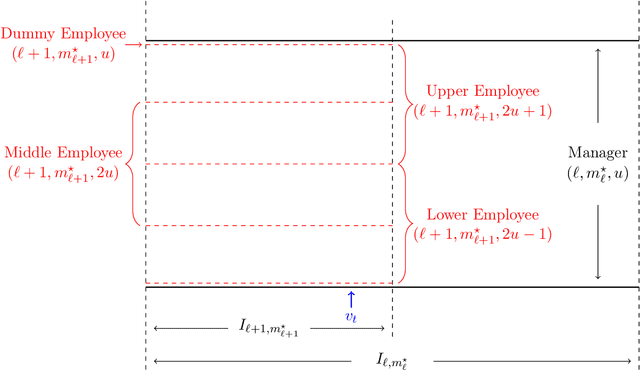

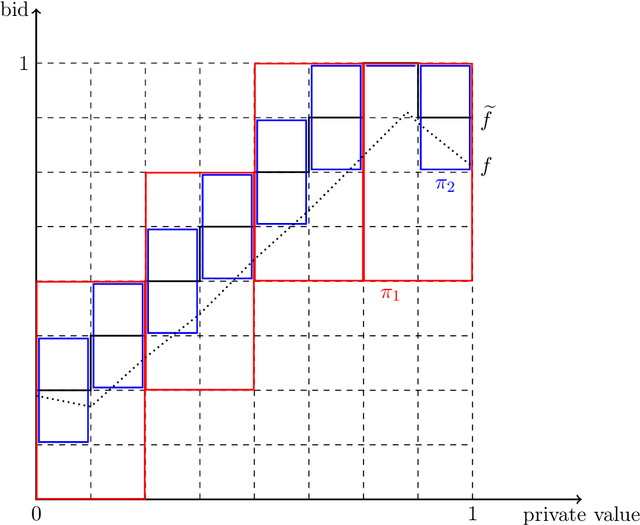

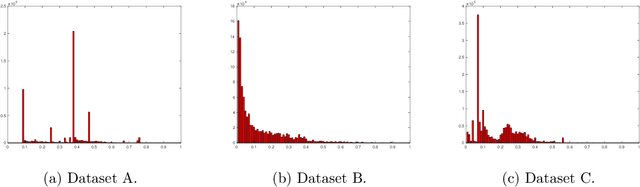

Abstract:First-price auctions have very recently swept the online advertising industry, replacing second-price auctions as the predominant auction mechanism on many platforms. This shift has brought forth important challenges for a bidder: how should one bid in a first-price auction, where unlike in second-price auctions, it is no longer optimal to bid one's private value truthfully and hard to know the others' bidding behaviors? In this paper, we take an online learning angle and address the fundamental problem of learning to bid in repeated first-price auctions, where both the bidder's private valuations and other bidders' bids can be arbitrary. We develop the first minimax optimal online bidding algorithm that achieves an $\widetilde{O}(\sqrt{T})$ regret when competing with the set of all Lipschitz bidding policies, a strong oracle that contains a rich set of bidding strategies. This novel algorithm is built on the insight that the presence of a good expert can be leveraged to improve performance, as well as an original hierarchical expert-chaining structure, both of which could be of independent interest in online learning. Further, by exploiting the product structure that exists in the problem, we modify this algorithm--in its vanilla form statistically optimal but computationally infeasible--to a computationally efficient and space efficient algorithm that also retains the same $\widetilde{O}(\sqrt{T})$ minimax optimal regret guarantee. Additionally, through an impossibility result, we highlight that one is unlikely to compete this favorably with a stronger oracle (than the considered Lipschitz bidding policies). Finally, we test our algorithm on three real-world first-price auction datasets obtained from Verizon Media and demonstrate our algorithm's superior performance compared to several existing bidding algorithms.

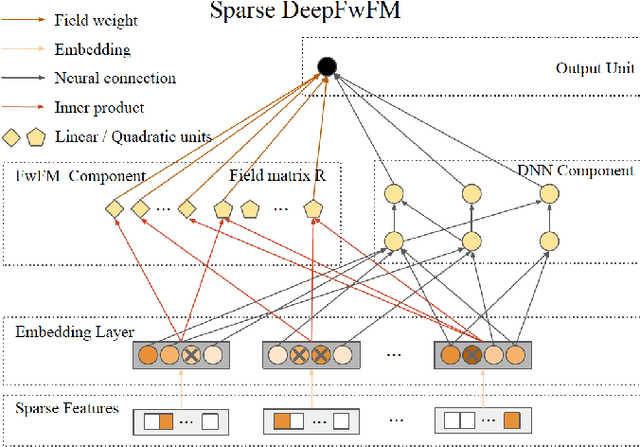

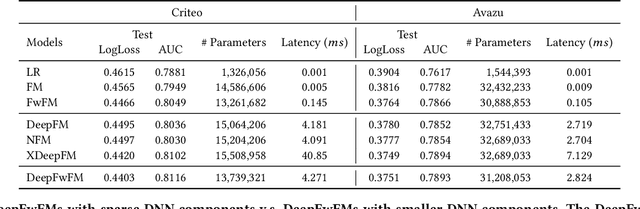

A Sparse Deep Factorization Machine for Efficient CTR prediction

Feb 17, 2020

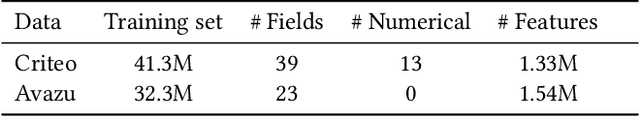

Abstract:Click-through rate (CTR) prediction is a crucial task in online display advertising, and the key part is to learn important feature interactions. The mainstream models are embedding-based neural networks that provide end-to-end training by incorporating hybrid components to model both low-order and high-order feature interactions. These models, however, slow down prediction inference by at least hundreds of times due to the deep neural network (DNN) component. Considering the challenge of deploying embedding-based neural networks for online advertising, we propose, for the first time, to prune the redundant parameters to accelerate inference and reduce run-time memory usage. Most notably, we can accelerate inference by 46X on the Criteo dataset and 27X on the Avazu dataset without loss of prediction accuracy. In addition, the deep model acceleration makes an efficient model ensemble possible, with low latency and significant performance gains.
