Zibo Zhang

AI-Driven Smart Sportswear for Real-Time Fitness Monitoring Using Textile Strain Sensors

Apr 11, 2025

Abstract: Wearable biosensors have revolutionized human performance monitoring by enabling real-time assessment of physiological and biomechanical parameters. However, existing solutions cannot simultaneously capture breath-force coordination and muscle activation symmetry in a seamless, non-invasive manner, which limits their applicability in strength training and rehabilitation. This work presents a smart sportswear system that integrates screen-printed graphene-based strain sensors with a wireless deep learning framework for real-time classification of exercise execution quality. Using a 1D ResNet-18 for feature extraction, the system achieves 92.3% classification accuracy across six exercise conditions and distinguishes breathing irregularities from asymmetric muscle exertion. In addition, t-SNE analysis and Grad-CAM-based explainability visualizations confirm that the network captures biomechanically relevant features, ensuring robust interpretability. The proposed system establishes a foundation for next-generation AI-powered sportswear, with applications in fitness optimization, injury prevention, and adaptive rehabilitation training.
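The abstract names a 1D ResNet-18 as the feature extractor but gives no layer details. As a rough illustration of the residual idea only (not the authors' network), the sketch below implements one single-channel 1D residual block in plain Python: a same-padded convolution, a ReLU, a second convolution, and an identity shortcut added before the final ReLU. The single-channel simplification, the kernel values, and the function names are assumptions; a real ResNet-18 uses multi-channel convolutions, batch normalization, and learned weights.

```python
from typing import List


def conv1d_same(x: List[float], kernel: List[float]) -> List[float]:
    """1D cross-correlation with zero padding so the output length equals len(x).

    Assumes an odd-length kernel (hypothetical simplification).
    """
    k = len(kernel)
    pad = k // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(padded[i + j] * kernel[j] for j in range(k)) for i in range(len(x))]


def relu(x: List[float]) -> List[float]:
    return [max(0.0, v) for v in x]


def residual_block(x: List[float], k1: List[float], k2: List[float]) -> List[float]:
    """Basic residual block: relu(conv2(relu(conv1(x))) + x)."""
    h = relu(conv1d_same(x, k1))
    h = conv1d_same(h, k2)
    # Identity shortcut: add the block input back before the final activation.
    return relu([a + b for a, b in zip(h, x)])


# Hypothetical strain-like signal and kernels.
signal = [0.0, 1.0, 2.0, 1.0, 0.0]
out = residual_block(signal, k1=[0.25, 0.5, 0.25], k2=[0.0, 0.0, 0.0])
print(out)  # → [0.0, 1.0, 2.0, 1.0, 0.0]
```

With an all-zero second kernel the block passes a non-negative input through unchanged; the usual motivation for residual connections is exactly this, since each block then only needs to learn a correction to its input rather than a full mapping.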

A Unified Platform for At-Home Post-Stroke Rehabilitation Enabled by Wearable Technologies and Artificial Intelligence

Nov 28, 2024

Abstract: At-home rehabilitation for post-stroke patients presents significant challenges, as continuous, personalized care is often limited outside clinical settings. Moreover, the absence of comprehensive solutions addressing diverse rehabilitation needs in home environments complicates recovery efforts. Here, we introduce a smart home platform that integrates wearable sensors, ambient monitoring, and large language model (LLM)-powered assistance to provide seamless health monitoring and intelligent support. The system uses machine-learning-enabled plantar pressure arrays for motor recovery assessment (94% classification accuracy), a wearable eye-tracking module for cognitive evaluation, and ambient sensors for precise smart home control (100% operational success, <1 s latency). In addition, the LLM-powered agent, Auto-Care, offers real-time interventions, such as health reminders and environmental adjustments, enhancing user satisfaction by 29%. This work establishes a fully integrated platform for long-term, personalized rehabilitation, offering new possibilities for managing chronic conditions and supporting aging populations.
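The abstract does not say which features the plantar pressure classifier relies on. One common, easily computed descriptor of a pressure array is the center of pressure, i.e. the pressure-weighted mean sensor position. The sketch below computes it for a small grid; the grid layout, the arbitrary units, and the idea that such a feature would feed the classifier are assumptions for illustration, not details from the paper.

```python
from typing import List, Optional, Tuple


def center_of_pressure(grid: List[List[float]]) -> Optional[Tuple[float, float]]:
    """Return the pressure-weighted mean (row, col) position of a 2D sensor grid.

    Positions are in sensor-index units; returns None when no load is applied.
    """
    total = sum(sum(row) for row in grid)
    if total == 0:
        return None
    r = sum(i * v for i, row in enumerate(grid) for v in row) / total
    c = sum(j * v for row in grid for j, v in enumerate(row)) / total
    return (r, c)


# Hypothetical 3x3 insole reading (arbitrary units), load concentrated toward row 2.
reading = [
    [0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 2.0, 0.0],
]
print(center_of_pressure(reading))  # → (1.25, 1.0)
```

Tracked over time, or compared between left and right insoles, a point like this is the kind of compact signal a downstream motor-recovery classifier could use.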

Wearable intelligent throat enables natural speech in stroke patients with dysarthria

Nov 28, 2024

Abstract: Wearable silent speech systems hold significant potential for restoring communication in patients with speech impairments. However, seamless, coherent speech remains elusive, and clinical efficacy is still unproven. Here, we present an AI-driven intelligent throat (IT) system that integrates sensors for throat muscle vibrations and carotid pulse signals with large language model (LLM) processing to enable fluent, emotionally expressive communication. The system uses ultrasensitive textile strain sensors to capture high-quality signals from the neck area and supports token-level processing for real-time, continuous speech decoding, enabling seamless, delay-free communication. In tests with five stroke patients with dysarthria, IT's LLM agents intelligently corrected token errors and enriched sentence-level emotional and logical coherence, achieving low error rates (4.2% word error rate, 2.9% sentence error rate) and a 55% increase in user satisfaction. This work establishes a portable, intuitive communication platform for patients with dysarthria, with the potential to be applied broadly across different neurological conditions and in multi-language support systems.
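The reported 4.2% word error rate follows the standard definition: the word-level edit distance (substitutions, deletions, and insertions) between the decoded sentence and the reference, divided by the number of reference words. Sentence error rate is the fraction of sentences containing at least one error. A minimal sketch of both metrics follows; the example sentences are hypothetical, and this is not the authors' evaluation code.

```python
from typing import List, Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance over tokens (substitution, deletion, insertion each cost 1)."""
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[-1]


def word_error_rate(refs: List[str], hyps: List[str]) -> float:
    """Total word edits divided by total reference words across a test set."""
    errors = sum(edit_distance(r.split(), h.split()) for r, h in zip(refs, hyps))
    words = sum(len(r.split()) for r in refs)
    return errors / words


def sentence_error_rate(refs: List[str], hyps: List[str]) -> float:
    """Fraction of sentences whose hypothesis differs from the reference."""
    wrong = sum(1 for r, h in zip(refs, hyps) if r.split() != h.split())
    return wrong / len(refs)


refs = ["i want some water", "please open the window"]
hyps = ["i want water", "please open the window"]
print(word_error_rate(refs, hyps), sentence_error_rate(refs, hyps))  # → 0.125 0.5
```

Word error rate is pooled over the whole test set rather than averaged per sentence, so longer sentences carry proportionally more weight.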

HABD: a Houma Alliance Book ancient handwritten character recognition database

Aug 26, 2024

Abstract: The Houma Alliance Book, one of history's earliest calligraphic examples, was unearthed in the 1970s. These artifacts were meticulously organized, reproduced, and copied by the Shanxi Provincial Institute of Cultural Relics. However, because of their ancient origins and severe ink erosion, identifying characters in the Houma Alliance Book is challenging, necessitating the use of digital technology. In this paper, we propose a new ancient handwritten character recognition database for the Houma Alliance Book, along with a novel benchmark based on deep learning architectures. More specifically, a collection of 26,732 character samples from the Houma Alliance Book was gathered through iterative annotation, encompassing 327 different types of ancient characters. Furthermore, benchmark algorithms were proposed by combining four deep neural network classifiers with two data augmentation methods. This research provides valuable resources and technical support for further studies on the Houma Alliance Book and other ancient characters, contributing to our understanding of ancient culture and history and to the preservation and inheritance of humanity's cultural heritage.
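The abstract does not name the two data augmentation methods used in the benchmark. For eroded handwritten characters, two simple and widely used options are small random translations and random erasing of a rectangular patch, which loosely mimics ink loss. The sketch below implements both on a grayscale image stored as a list of rows; the choice of these two methods, the parameter values, the background value of 0.0, and the function names are all assumptions, not the benchmark's actual configuration.

```python
import random
from typing import List

Image = List[List[float]]


def random_shift(img: Image, max_shift: int, rng: random.Random, fill: float = 0.0) -> Image:
    """Translate the image by up to max_shift pixels on each axis, padding with `fill`."""
    h, w = len(img), len(img[0])
    dy = rng.randint(-max_shift, max_shift)
    dx = rng.randint(-max_shift, max_shift)
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = img[sy][sx]
    return out


def random_erase(img: Image, size: int, rng: random.Random, fill: float = 0.0) -> Image:
    """Overwrite a random size x size patch with `fill` (a crude stand-in for ink loss)."""
    h, w = len(img), len(img[0])
    top = rng.randint(0, h - size)
    left = rng.randint(0, w - size)
    out = [row[:] for row in img]  # copy so the input image is not modified
    for y in range(top, top + size):
        for x in range(left, left + size):
            out[y][x] = fill
    return out


rng = random.Random(0)
char = [[1.0] * 8 for _ in range(8)]  # hypothetical 8x8 patch, fully inked
augmented = random_erase(random_shift(char, 2, rng), 3, rng)
```

Seeding a dedicated `random.Random` instance keeps the augmentation reproducible, which matters when several classifiers are compared on the same augmented data.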

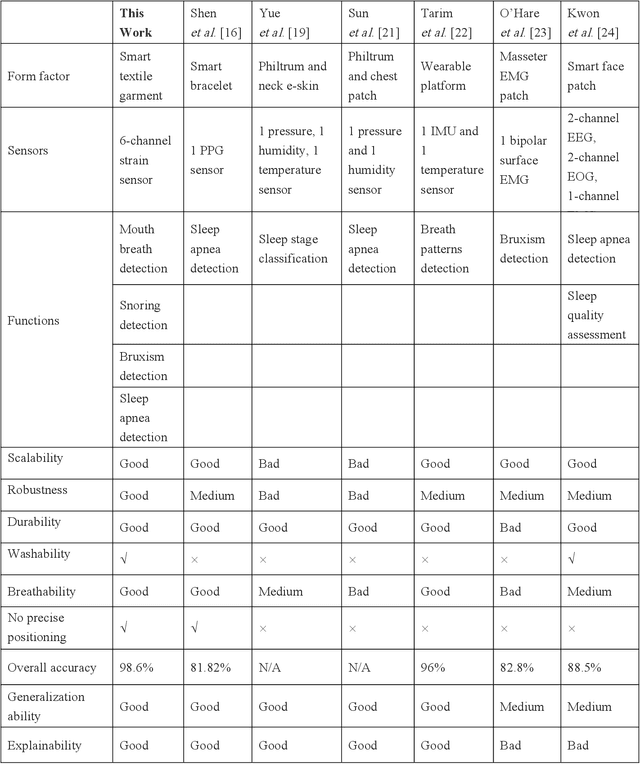

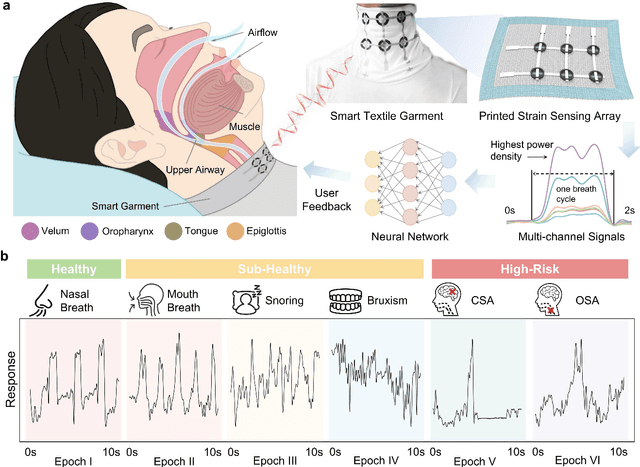

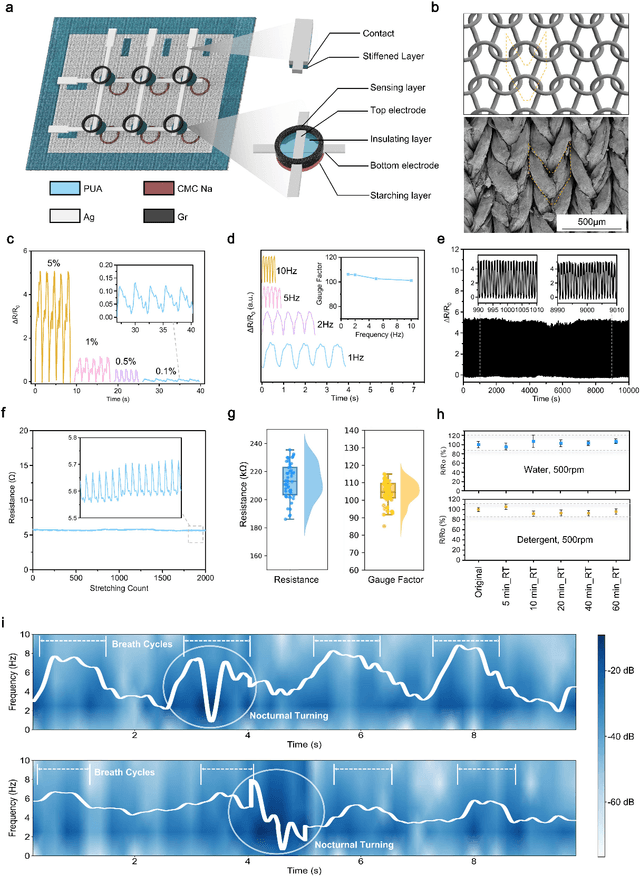

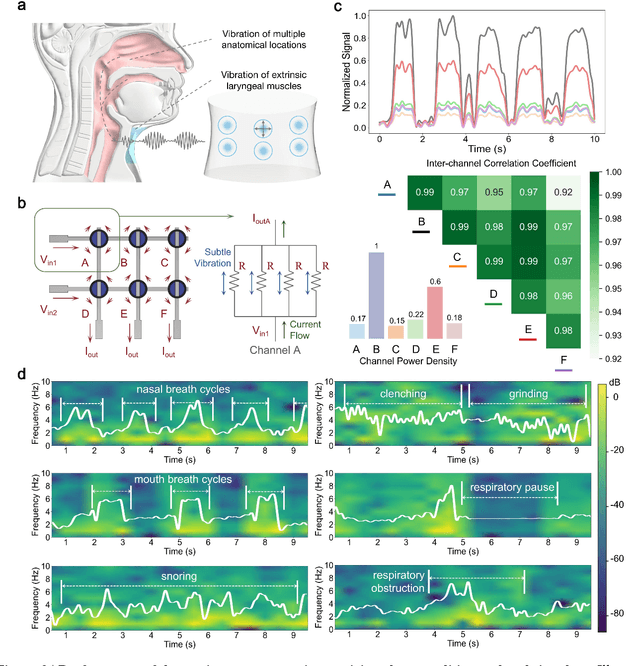

A deep learning-enabled smart garment for versatile sleep behaviour monitoring

Aug 01, 2024

Abstract: Continuous monitoring and accurate detection of complex sleep patterns associated with different sleep-related conditions are essential, not only for enhancing sleep quality but also for reducing the risk of developing chronic illnesses associated with unhealthy sleep. Despite significant advances in research, versatile recognition of various unhealthy and sub-healthy sleep patterns with simple wearable devices at home remains a significant challenge. Here, we report a robust, durable, ultrasensitive strain sensor array printed on the collar region of a smart garment. This solution detects subtle vibrations associated with multiple sleep patterns at the extrinsic laryngeal muscles. Equipped with a deep learning neural network, it identifies six sleep states with an accuracy of 98.6%, without requiring specific positioning: nasal breathing, mouth breathing, snoring, bruxism, central sleep apnea (CSA), and obstructive sleep apnea (OSA). We further demonstrate its explainability and generalization capabilities in practical applications. Explainable artificial intelligence (XAI) visualizations reflect comprehensive signal pattern analysis with low bias. Transfer learning tests show that the system can achieve high accuracy (95% overall) on new users with few-shot learning (fewer than 15 samples per class). The scalable manufacturing process, robustness, high accuracy, and excellent generalization of the smart garment make it a promising tool for next-generation continuous sleep monitoring.
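The abstract reports adapting to new users with fewer than 15 samples per class through transfer learning but does not describe the adaptation procedure. One simple way to illustrate few-shot adaptation is a nearest-class-mean (prototype) classifier on top of a frozen feature extractor: average the few labeled embeddings per class, then assign a new sample to the closest mean. The sketch below swaps in that technique purely for illustration; the 2D embeddings and the `class_prototypes` and `predict` helpers are hypothetical, and the paper's actual transfer learning method may differ.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def class_prototypes(support: Dict[str, List[Vector]]) -> Dict[str, List[float]]:
    """Average the few labeled embeddings available for each class."""
    protos = {}
    for label, vecs in support.items():
        dim = len(vecs[0])
        protos[label] = [sum(v[d] for v in vecs) / len(vecs) for d in range(dim)]
    return protos


def predict(embedding: Vector, protos: Dict[str, List[float]]) -> str:
    """Return the label of the nearest prototype (Euclidean distance)."""
    return min(protos, key=lambda label: math.dist(embedding, protos[label]))


# Hypothetical 2D embeddings from a frozen network, three shots per class.
support = {
    "snoring": [[1.0, 0.0], [0.9, 0.1], [1.1, -0.1]],
    "bruxism": [[0.0, 1.0], [0.1, 0.9], [-0.1, 1.1]],
}
protos = class_prototypes(support)
print(predict([0.8, 0.2], protos))  # → snoring
```

Because only class means are computed, adding a new user or a new class needs no gradient updates, which is one reason prototype-style heads are a common baseline for few-shot personalization.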

EmoWear: Exploring Emotional Teasers for Voice Message Interaction on Smartwatches

Feb 11, 2024

Abstract: Voice messages, by nature, prevent users from gauging the emotional tone without fully diving into the audio content. This hinders the shared emotional experience at the pre-retrieval stage. Research has scarcely explored "Emotional Teasers": pre-retrieval cues offering a glimpse into an awaiting message's emotional tone without disclosing its content. We introduce EmoWear, a smartwatch voice messaging system that enables users to apply 30 animation teasers on message bubbles to reflect emotions. EmoWear eases senders' choice by prioritizing emotions based on semantic and acoustic processing. EmoWear was evaluated against a mirroring system that uses color-coded message bubbles as emotional cues (N=24). Results showed that EmoWear significantly enhanced the emotional communication experience in both receiving and sending messages. The animated teasers were considered intuitive and valued for their diverse expressions. We distill desirable interaction qualities and practical implications for future design. We thereby contribute both a novel system and empirical knowledge concerning emotional teasers for voice messaging.
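EmoWear prioritizes candidate emotions for the sender using semantic and acoustic processing, but the abstract does not specify how the two sources are combined. A minimal sketch, assuming each channel yields a probability-like score per emotion and the two are fused by a weighted average, is shown below; the emotion labels, the default weight of 0.5, and the `rank_emotions` function are hypothetical.

```python
from typing import Dict, List


def rank_emotions(
    semantic: Dict[str, float],
    acoustic: Dict[str, float],
    semantic_weight: float = 0.5,
) -> List[str]:
    """Order emotions by a weighted average of text-based and audio-based scores.

    Emotions missing from one channel are treated as scoring 0 there.
    """
    labels = set(semantic) | set(acoustic)
    fused = {
        e: semantic_weight * semantic.get(e, 0.0)
        + (1.0 - semantic_weight) * acoustic.get(e, 0.0)
        for e in labels
    }
    # Highest fused score first; ties broken alphabetically for a stable order.
    return sorted(labels, key=lambda e: (-fused[e], e))


semantic_scores = {"joy": 0.6, "sadness": 0.1, "anger": 0.3}
acoustic_scores = {"joy": 0.2, "sadness": 0.1, "anger": 0.7}
print(rank_emotions(semantic_scores, acoustic_scores))  # → ['anger', 'joy', 'sadness']
```

In this toy case the transcript alone would rank joy first, while the fused ranking puts anger first because the acoustic channel is confident; the weight controls how much the tone of voice can override the words.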

Ultrasensitive Textile Strain Sensors Redefine Wearable Silent Speech Interfaces with High Machine Learning Efficiency

Dec 07, 2023

Abstract: Our research presents a wearable Silent Speech Interface (SSI) technology that excels in device comfort, time-energy efficiency, and speech decoding accuracy for real-world use. We developed a biocompatible, durable textile choker with an embedded graphene-based strain sensor capable of accurately detecting subtle throat movements. This sensor, which exceeds the sensitivity of other strain sensors by 420%, simplifies signal processing compared with traditional voice recognition methods. Our system uses a computationally efficient neural network, specifically a one-dimensional convolutional neural network with residual structures, to decode speech signals. This network is energy- and time-efficient, reducing computational load by 90% while achieving 95.25% accuracy on a 20-word lexicon and adapting quickly to new users and words with minimal samples. This innovation demonstrates a practical, sensitive, and precise wearable SSI suitable for daily communication applications.
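The abstract credits the 1D convolutional network with a 90% reduction in computational load without detailing the architecture. A useful back-of-the-envelope tool for comparisons of this kind is the multiply-accumulate (MAC) count of a dense 1D convolution layer: output length × output channels × input channels × kernel size. The sketch below applies that formula, together with the standard output-length formula, to two hypothetical layer configurations; the layer sizes are invented for illustration and do not reproduce the paper's figure.

```python
def conv1d_output_length(length_in: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Standard output-length formula for a 1D convolution (dilation 1)."""
    return (length_in + 2 * padding - kernel) // stride + 1


def conv1d_macs(length_out: int, c_in: int, c_out: int, kernel: int) -> int:
    """Multiply-accumulates for a dense 1D convolution (bias and activation ignored)."""
    return length_out * c_out * c_in * kernel


# Hypothetical layers applied to a 1,000-sample strain signal.
wide = conv1d_macs(conv1d_output_length(1000, 7, stride=1, padding=3), c_in=64, c_out=64, kernel=7)
lean = conv1d_macs(conv1d_output_length(1000, 7, stride=2, padding=3), c_in=32, c_out=32, kernel=7)
print(wide, lean, f"{1 - lean / wide:.1%} fewer MACs")  # → 28672000 3584000 87.5% fewer MACs
```

Halving both channel counts cuts the MACs by a factor of four and a stride of 2 halves the output length, so the second layer needs one eighth of the work; summing the same arithmetic over all layers gives a first-order comparison of whole networks.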
