Zhilu Wang

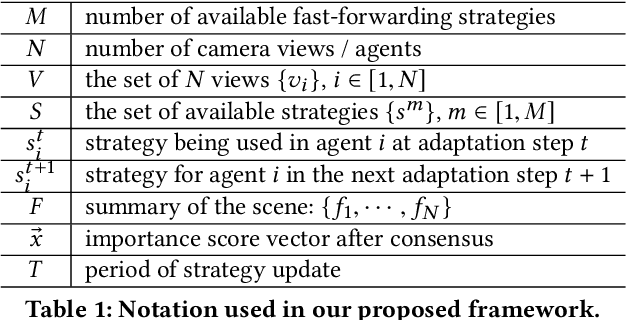

Collaborative Multi-Agent Video Fast-Forwarding

May 27, 2023Abstract:Multi-agent applications have recently gained significant popularity. In many computer vision tasks, a network of agents, such as a team of robots with cameras, could work collaboratively to perceive the environment for efficient and accurate situation awareness. However, these agents often have limited computation, communication, and storage resources. Thus, reducing resource consumption while still providing an accurate perception of the environment becomes an important goal when deploying multi-agent systems. To achieve this goal, we identify and leverage the overlap among different camera views in multi-agent systems for reducing the processing, transmission and storage of redundant/unimportant video frames. Specifically, we have developed two collaborative multi-agent video fast-forwarding frameworks in distributed and centralized settings, respectively. In these frameworks, each individual agent can selectively process or skip video frames at adjustable paces based on multiple strategies via reinforcement learning. Multiple agents then collaboratively sense the environment via either 1) a consensus-based distributed framework called DMVF that periodically updates the fast-forwarding strategies of agents by establishing communication and consensus among connected neighbors, or 2) a centralized framework called MFFNet that utilizes a central controller to decide the fast-forwarding strategies for agents based on collected data. We demonstrate the efficacy and efficiency of our proposed frameworks on a real-world surveillance video dataset VideoWeb and a new simulated driving dataset CarlaSim, through extensive simulations and deployment on an embedded platform with TCP communication. We show that compared with other approaches in the literature, our frameworks achieve better coverage of important frames, while significantly reducing the number of frames processed at each agent.

POLAR-Express: Efficient and Precise Formal Reachability Analysis of Neural-Network Controlled Systems

Apr 06, 2023

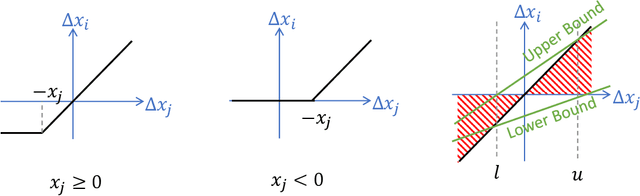

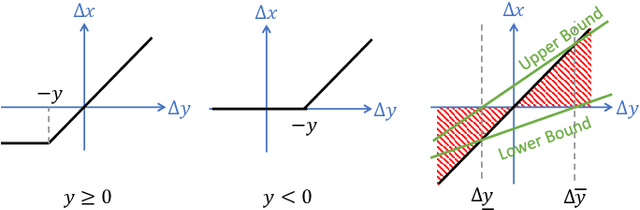

Abstract:Neural networks (NNs) playing the role of controllers have demonstrated impressive empirical performances on challenging control problems. However, the potential adoption of NN controllers in real-life applications also gives rise to a growing concern over the safety of these neural-network controlled systems (NNCSs), especially when used in safety-critical applications. In this work, we present POLAR-Express, an efficient and precise formal reachability analysis tool for verifying the safety of NNCSs. POLAR-Express uses Taylor model arithmetic to propagate Taylor models (TMs) across a neural network layer-by-layer to compute an overapproximation of the neural-network function. It can be applied to analyze any feed-forward neural network with continuous activation functions. We also present a novel approach to propagate TMs more efficiently and precisely across ReLU activation functions. In addition, POLAR-Express provides parallel computation support for the layer-by-layer propagation of TMs, thus significantly improving the efficiency and scalability over its earlier prototype POLAR. Across the comparison with six other state-of-the-art tools on a diverse set of benchmarks, POLAR-Express achieves the best verification efficiency and tightness in the reachable set analysis.

Enforcing Hard Constraints with Soft Barriers: Safe Reinforcement Learning in Unknown Stochastic Environments

Sep 29, 2022

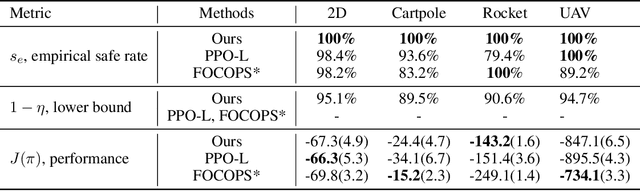

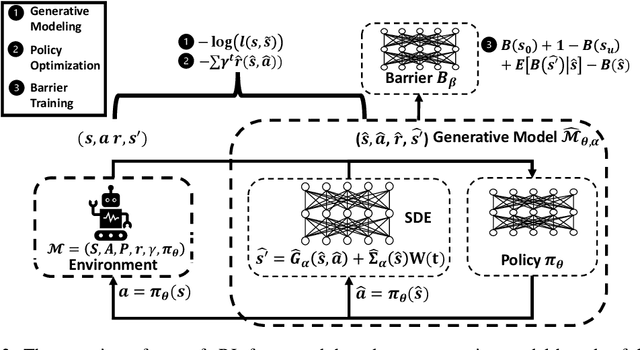

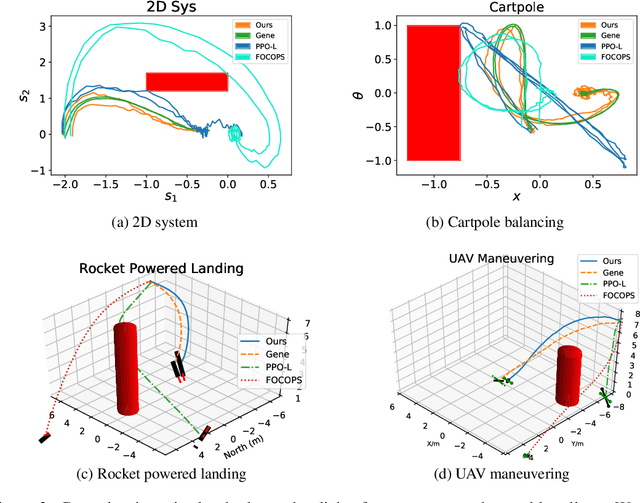

Abstract:It is quite challenging to ensure the safety of reinforcement learning (RL) agents in an unknown and stochastic environment under hard constraints that require the system state not to reach certain specified unsafe regions. Many popular safe RL methods such as those based on the Constrained Markov Decision Process (CMDP) paradigm formulate safety violations in a cost function and try to constrain the expectation of cumulative cost under a threshold. However, it is often difficult to effectively capture and enforce hard reachability-based safety constraints indirectly with such constraints on safety violation costs. In this work, we leverage the notion of barrier function to explicitly encode the hard safety constraints, and given that the environment is unknown, relax them to our design of \emph{generative-model-based soft barrier functions}. Based on such soft barriers, we propose a safe RL approach that can jointly learn the environment and optimize the control policy, while effectively avoiding unsafe regions with safety probability optimization. Experiments on a set of examples demonstrate that our approach can effectively enforce hard safety constraints and significantly outperform CMDP-based baseline methods in system safe rate measured via simulations.

A Tool for Neural Network Global Robustness Certification and Training

Aug 15, 2022

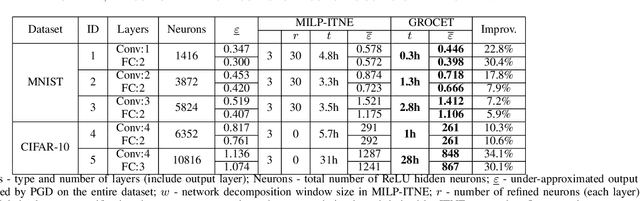

Abstract:With the increment of interest in leveraging machine learning technology in safety-critical systems, the robustness of neural networks under external disturbance receives more and more concerns. Global robustness is a robustness property defined on the entire input domain. And a certified globally robust network can ensure its robustness on any possible network input. However, the state-of-the-art global robustness certification algorithm can only certify networks with at most several thousand neurons. In this paper, we propose the GPU-supported global robustness certification framework GROCET, which is more efficient than the previous optimization-based certification approach. Moreover, GROCET provides differentiable global robustness, which is leveraged in the training of globally robust neural networks.

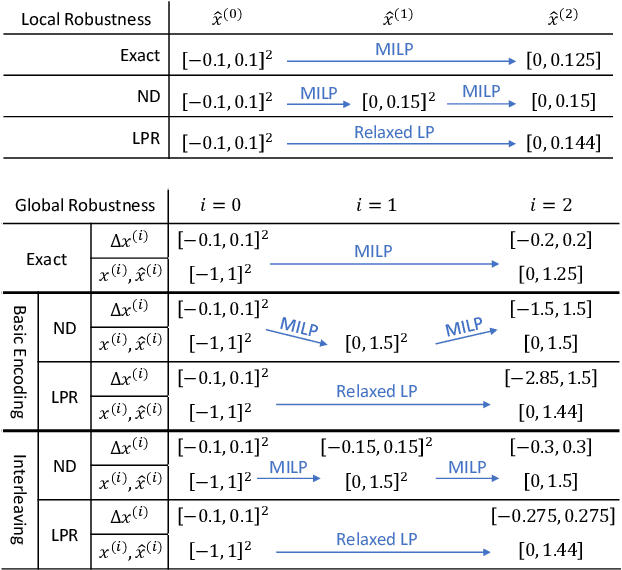

Efficient Global Robustness Certification of Neural Networks via Interleaving Twin-Network Encoding

Mar 26, 2022

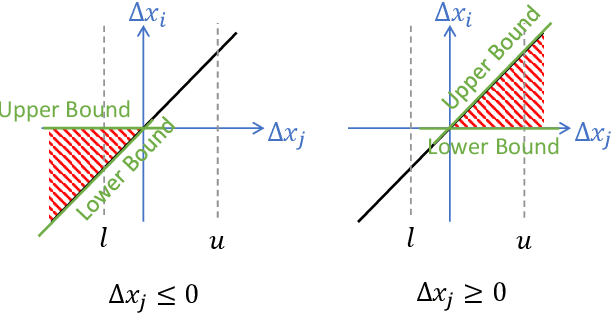

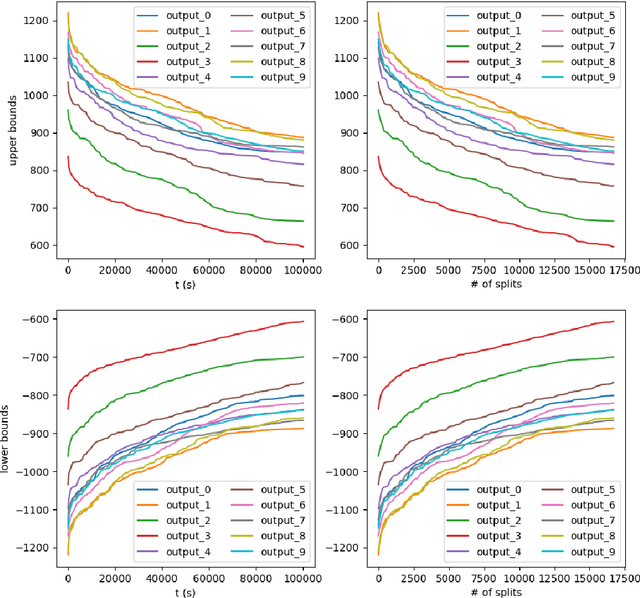

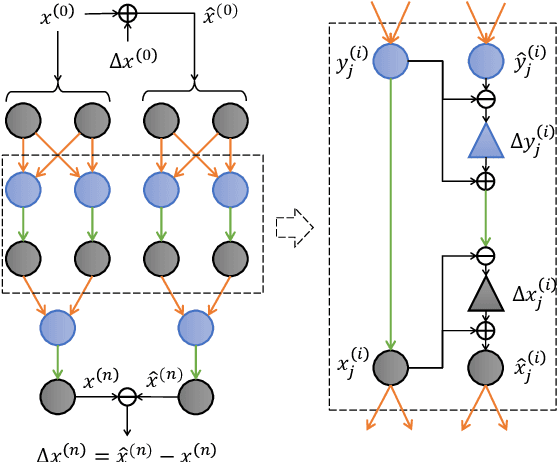

Abstract:The robustness of deep neural networks has received significant interest recently, especially when being deployed in safety-critical systems, as it is important to analyze how sensitive the model output is under input perturbations. While most previous works focused on the local robustness property around an input sample, the studies of the global robustness property, which bounds the maximum output change under perturbations over the entire input space, are still lacking. In this work, we formulate the global robustness certification for neural networks with ReLU activation functions as a mixed-integer linear programming (MILP) problem, and present an efficient approach to address it. Our approach includes a novel interleaving twin-network encoding scheme, where two copies of the neural network are encoded side-by-side with extra interleaving dependencies added between them, and an over-approximation algorithm leveraging relaxation and refinement techniques to reduce complexity. Experiments demonstrate the timing efficiency of our work when compared with previous global robustness certification methods and the tightness of our over-approximation. A case study of closed-loop control safety verification is conducted, and demonstrates the importance and practicality of our approach for certifying the global robustness of neural networks in safety-critical systems.

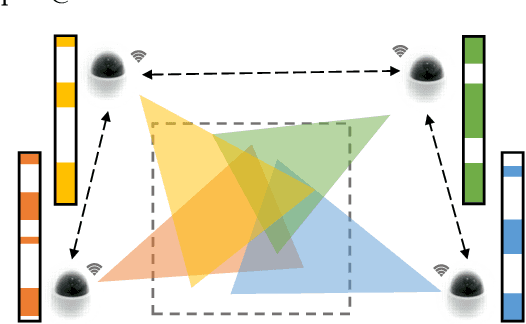

Distributed Multi-agent Video Fast-forwarding

Aug 10, 2020

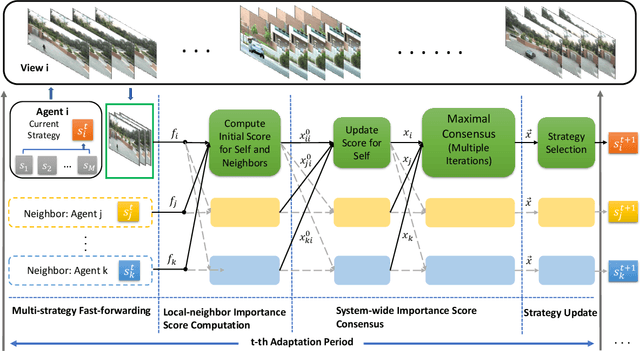

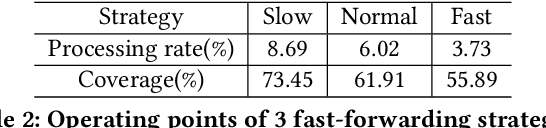

Abstract:In many intelligent systems, a network of agents collaboratively perceives the environment for better and more efficient situation awareness. As these agents often have limited resources, it could be greatly beneficial to identify the content overlapping among camera views from different agents and leverage it for reducing the processing, transmission and storage of redundant/unimportant video frames. This paper presents a consensus-based distributed multi-agent video fast-forwarding framework, named DMVF, that fast-forwards multi-view video streams collaboratively and adaptively. In our framework, each camera view is addressed by a reinforcement learning based fast-forwarding agent, which periodically chooses from multiple strategies to selectively process video frames and transmits the selected frames at adjustable paces. During every adaptation period, each agent communicates with a number of neighboring agents, evaluates the importance of the selected frames from itself and those from its neighbors, refines such evaluation together with other agents via a system-wide consensus algorithm, and uses such evaluation to decide their strategy for the next period. Compared with approaches in the literature on a real-world surveillance video dataset VideoWeb, our method significantly improves the coverage of important frames and also reduces the number of frames processed in the system.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge