Yassine Himeur

Extracting Actionable Insights from Building Energy Data using Vision LLMs on Wavelet and 3D Recurrence Representations

Sep 26, 2025

Abstract:The analysis of complex building time-series for actionable insights and recommendations remains challenging due to the nonlinear and multi-scale characteristics of energy data. To address this, we propose a framework that fine-tunes visual language large models (VLLMs) on 3D graphical representations of the data. The approach converts 1D time-series into 3D representations using continuous wavelet transforms (CWTs) and recurrence plots (RPs), which capture temporal dynamics and localize frequency anomalies. These 3D encodings enable VLLMs to visually interpret energy-consumption patterns, detect anomalies, and provide recommendations for energy efficiency. We demonstrate the framework on real-world building-energy datasets, where fine-tuned VLLMs successfully monitor building states, identify recurring anomalies, and generate optimization recommendations. Quantitatively, the Idefics-7B VLLM achieves validation losses of 0.0952 with CWTs and 0.1064 with RPs on the University of Sharjah energy dataset, outperforming direct fine-tuning on raw time-series data (0.1176) for anomaly detection. This work bridges time-series analysis and visualization, providing a scalable and interpretable framework for energy analytics.
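As a rough illustration of the recurrence-plot idea mentioned in the abstract, the sketch below builds a plain thresholded recurrence matrix from a 1D series. It is not the paper's pipeline: the function name `recurrence_plot`, the threshold `eps`, and the toy sinusoidal "load profile" are all assumptions chosen for illustration, and no time-delay embedding or 3D rendering is attempted.

```python
import math

def recurrence_plot(series, eps):
    """Binary recurrence matrix: R[i][j] = 1 when |x_i - x_j| <= eps.

    Assumption: a scalar series compared point by point, with no
    time-delay embedding.
    """
    n = len(series)
    return [
        [1 if abs(series[i] - series[j]) <= eps else 0 for j in range(n)]
        for i in range(n)
    ]

# Hypothetical toy signal with a period of 8 samples (not real energy data).
signal = [math.sin(2 * math.pi * t / 8) for t in range(16)]
rp = recurrence_plot(signal, eps=0.1)

# Samples one period apart recur, so rp[0][8] is set; samples a quarter
# period apart differ by about 1.0, so rp[0][2] is not.
print(rp[0][8], rp[0][2])
```

Periodic behaviour shows up as diagonal lines parallel to the main diagonal, which is the kind of visual structure an image model could plausibly pick up on.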

Interpretable Deep Transfer Learning for Breast Ultrasound Cancer Detection: A Multi-Dataset Study

Sep 05, 2025

Abstract:Breast cancer remains a leading cause of cancer-related mortality among women worldwide. Ultrasound imaging, widely used due to its safety and cost-effectiveness, plays a key role in early detection, especially in patients with dense breast tissue. This paper presents a comprehensive study on the application of machine learning and deep learning techniques for breast cancer classification using ultrasound images. Using datasets such as BUSI, BUS-BRA, and BrEaST-Lesions USG, we evaluate classical machine learning models (SVM, KNN) and deep convolutional neural networks (ResNet-18, EfficientNet-B0, GoogLeNet). Experimental results show that ResNet-18 achieves the highest accuracy (99.7%) and perfect sensitivity for malignant lesions. Classical ML models, though outperformed by CNNs, achieve competitive performance when enhanced with deep feature extraction. Grad-CAM visualizations further improve model transparency by highlighting diagnostically relevant image regions. These findings support the integration of AI-based diagnostic tools into clinical workflows and demonstrate the feasibility of deploying high-performing, interpretable systems for ultrasound-based breast cancer detection.

Leveraging Transfer Learning and Mobile-enabled Convolutional Neural Networks for Improved Arabic Handwritten Character Recognition

Sep 05, 2025

Abstract:The study explores the integration of transfer learning (TL) with mobile-enabled convolutional neural networks (MbNets) to enhance Arabic Handwritten Character Recognition (AHCR). Addressing challenges like extensive computational requirements and dataset scarcity, this research evaluates three TL strategies--full fine-tuning, partial fine-tuning, and training from scratch--using four lightweight MbNets: MobileNet, SqueezeNet, MnasNet, and ShuffleNet. Experiments were conducted on three benchmark datasets: AHCD, HIJJA, and IFHCDB. MobileNet emerged as the top-performing model, consistently achieving superior accuracy, robustness, and efficiency, with ShuffleNet excelling in generalization, particularly under full fine-tuning. The IFHCDB dataset yielded the highest results, with 99% accuracy using MnasNet under full fine-tuning, highlighting its suitability for robust character recognition. The AHCD dataset achieved competitive accuracy (97%) with ShuffleNet, while HIJJA posed significant challenges due to its variability, achieving a peak accuracy of 92% with ShuffleNet. Notably, full fine-tuning demonstrated the best overall performance, balancing accuracy and convergence speed, while partial fine-tuning underperformed across metrics. These findings underscore the potential of combining TL and MbNets for resource-efficient AHCR, paving the way for further optimizations and broader applications. Future work will explore architectural modifications, in-depth dataset feature analysis, data augmentation, and advanced sensitivity analysis to enhance model robustness and generalizability.

Exploring Image Transforms derived from Eye Gaze Variables for Progressive Autism Diagnosis

Jun 07, 2025

Abstract:The prevalence of Autism Spectrum Disorder (ASD) has surged rapidly over the past decade, posing significant challenges in communication, behavior, and focus for affected individuals. Current diagnostic techniques, though effective, are time-intensive, leading to high social and economic costs. This work introduces an AI-powered assistive technology designed to streamline ASD diagnosis and management, enhancing convenience for individuals with ASD and efficiency for caregivers and therapists. The system integrates transfer learning with image transforms derived from eye gaze variables to diagnose ASD. This opens opportunities for periodic in-home diagnosis, reducing stress for individuals and caregivers, while also preserving user privacy through the use of image transforms. The accessibility of the proposed method also offers opportunities for improved communication between guardians and therapists, ensuring regular updates on progress and evolving support needs. Overall, the approach proposed in this work ensures timely, accessible diagnosis while protecting the subjects' privacy, improving outcomes for individuals with ASD.

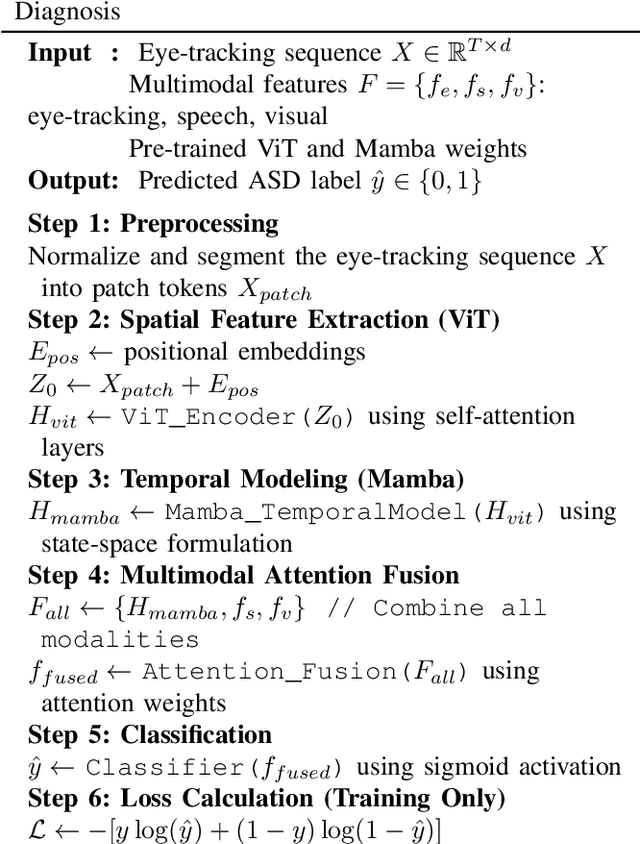

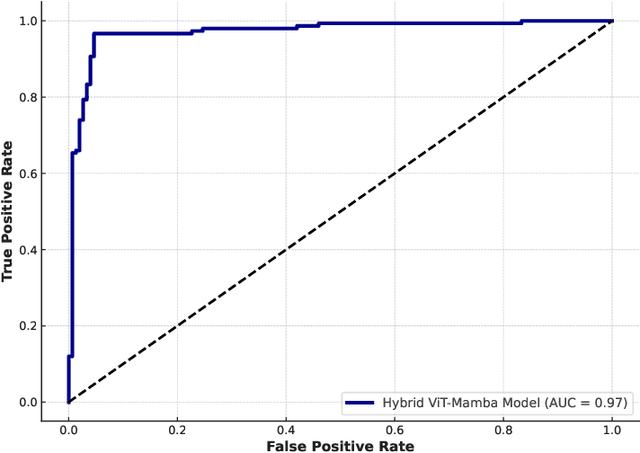

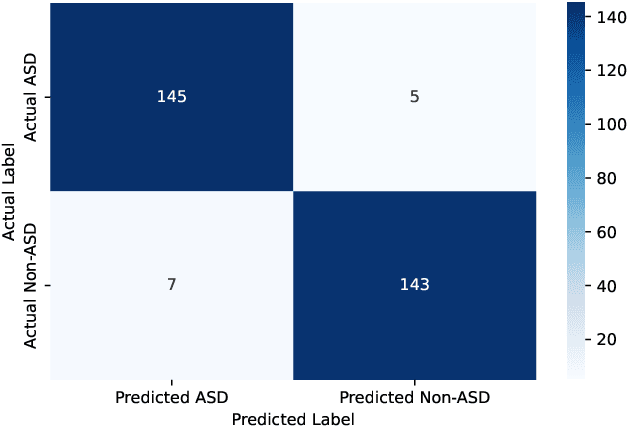

Hybrid Vision Transformer-Mamba Framework for Autism Diagnosis via Eye-Tracking Analysis

Jun 07, 2025

Abstract:Accurate Autism Spectrum Disorder (ASD) diagnosis is vital for early intervention. This study presents a hybrid deep learning framework combining Vision Transformers (ViT) and Vision Mamba to detect ASD using eye-tracking data. The model uses attention-based fusion to integrate visual, speech, and facial cues, capturing both spatial and temporal dynamics. Unlike traditional handcrafted methods, it applies state-of-the-art deep learning and explainable AI techniques to enhance diagnostic accuracy and transparency. Tested on the Saliency4ASD dataset, the proposed ViT-Mamba model outperformed existing methods, achieving 0.96 accuracy, 0.95 F1-score, 0.97 sensitivity, and 0.94 specificity. These findings show the model's promise for scalable, interpretable ASD screening, especially in resource-constrained or remote clinical settings where access to expert diagnosis is limited.

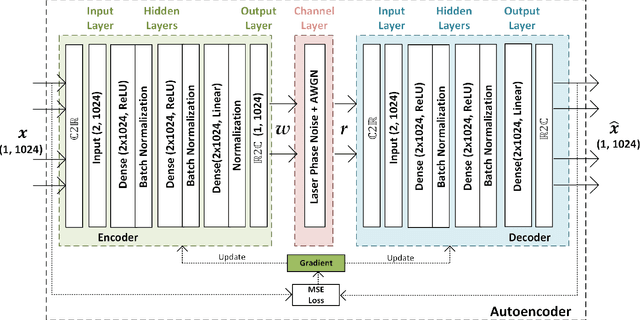

End-to-End Deep Learning in Phase Noisy Coherent Optical Link

Feb 28, 2025

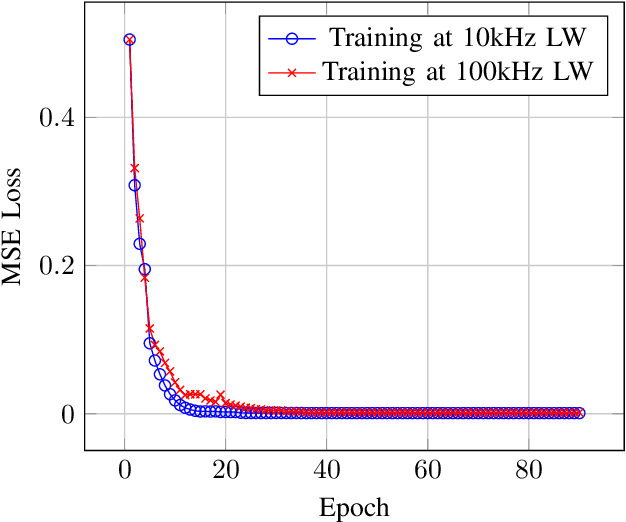

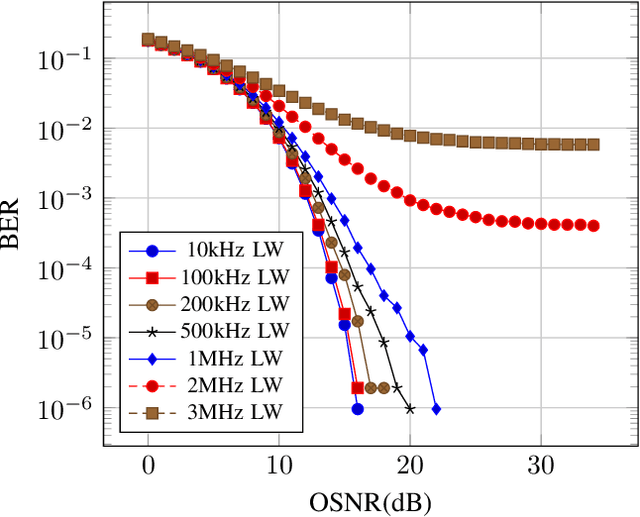

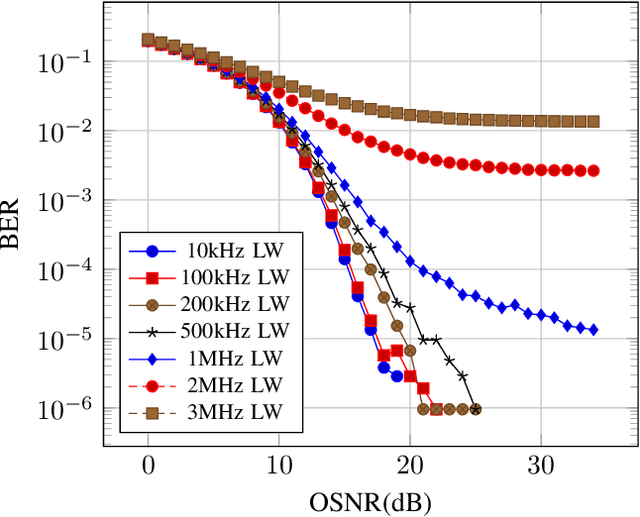

Abstract:In coherent optical orthogonal frequency-division multiplexing (CO-OFDM) fiber communications, a novel end-to-end learning framework to mitigate Laser Phase Noise (LPN) impairments is proposed in this paper. Inspired by Autoencoder (AE) principles, the proposed approach trains a model to learn robust symbol sequences capable of combating LPN, even from low-cost distributed feedback (DFB) lasers with linewidths up to 2 MHz. This allows for the use of high-level modulation formats and large-scale Fast Fourier Transform (FFT) processing, maximizing spectral efficiency in CO-OFDM systems. By eliminating the need for complex traditional techniques, this approach offers a potentially more efficient and streamlined solution for CO-OFDM systems. The most significant achievement of this study is the demonstration that the proposed AE-based model can enhance system performance by reducing the bit error rate (BER) to below the threshold of forward error correction (FEC), even under severe phase noise conditions, thus proving its effectiveness and efficiency in practical deployment scenarios.

A Review on Deep Learning Autoencoder in the Design of Next-Generation Communication Systems

Dec 18, 2024

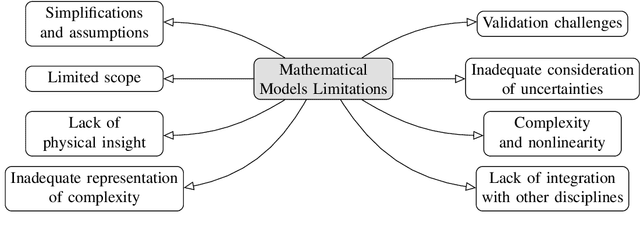

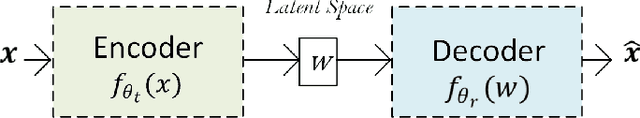

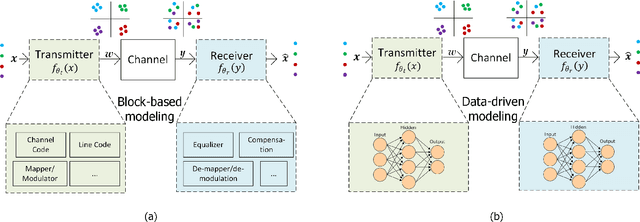

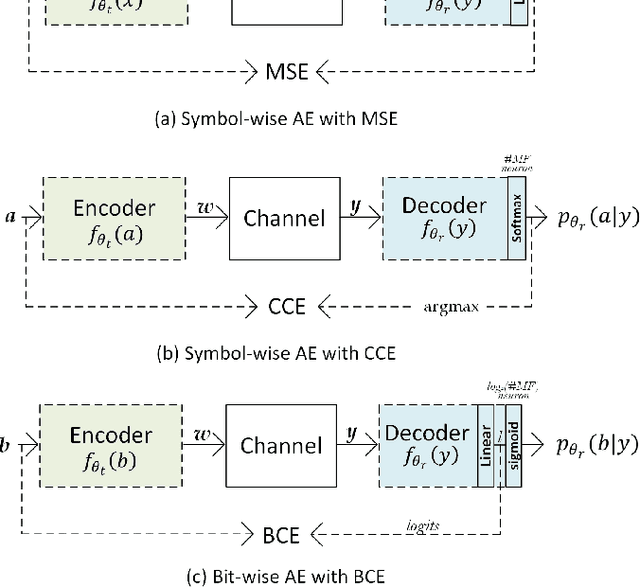

Abstract:Traditional mathematical models used in designing next-generation communication systems often fall short due to inherent simplifications, narrow scope, and computational limitations. In recent years, the incorporation of deep learning (DL) methodologies into communication systems has made significant progress in system design and performance optimisation. Autoencoders (AEs) have become essential, enabling end-to-end learning that allows for the combined optimisation of transmitters and receivers. Consequently, AEs offer a data-driven methodology capable of bridging the gap between theoretical models and real-world complexities. The paper presents a comprehensive survey of the application of AEs within communication systems, with a particular focus on their architectures, associated challenges, and future directions. We examine 120 recent studies across wireless, optical, semantic, and quantum communication fields, categorising them according to transceiver design, channel modelling, digital signal processing, and computational complexity. This paper further examines the challenges encountered in the implementation of AEs, including the need for extensive training data, the risk of overfitting, and the requirement for differentiable channel models. Through data-driven approaches, AEs provide robust solutions for end-to-end system optimisation, surpassing traditional mathematical models confined by simplifying assumptions. This paper also summarises the computational complexity associated with AE-based systems by conducting an in-depth analysis employing the metric of floating-point operations per second (FLOPS). This analysis encompasses the evaluation of matrix multiplications, bias additions, and activation functions. This survey aims to establish a roadmap for future research, emphasising the transformative potential of AEs in the formulation of next-generation communication systems.
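To make the operation-counting idea in the abstract concrete, here is a minimal sketch that tallies floating-point operations for a stack of dense layers, split into matrix multiplications, bias additions, and activation functions. The counting convention (two operations per weight, one per bias, one per activation output), the helper names, and the layer sizes are all assumptions for illustration; the survey's own accounting may differ.

```python
def dense_layer_flops(n_in, n_out, activation_cost=1):
    """Approximate operation count for one dense layer on one input vector.

    Assumed convention: each weight contributes one multiply and one add,
    each output adds one bias, and the activation costs `activation_cost`
    operations per output.
    """
    matmul = 2 * n_in * n_out
    bias = n_out
    activation = activation_cost * n_out
    return matmul + bias + activation

def autoencoder_flops(layer_sizes):
    """Sum the per-layer counts over consecutive layer widths."""
    return sum(
        dense_layer_flops(a, b) for a, b in zip(layer_sizes, layer_sizes[1:])
    )

# Hypothetical autoencoder: 16 -> 32 -> 8 (bottleneck) -> 32 -> 16.
total = autoencoder_flops([16, 32, 8, 32, 16])
print(total)  # 3248
```

A count like this depends only on the architecture, so it can be compared across designs independently of the hardware they run on.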

Automatic Speech Recognition with BERT and CTC Transformers: A Review

Oct 12, 2024

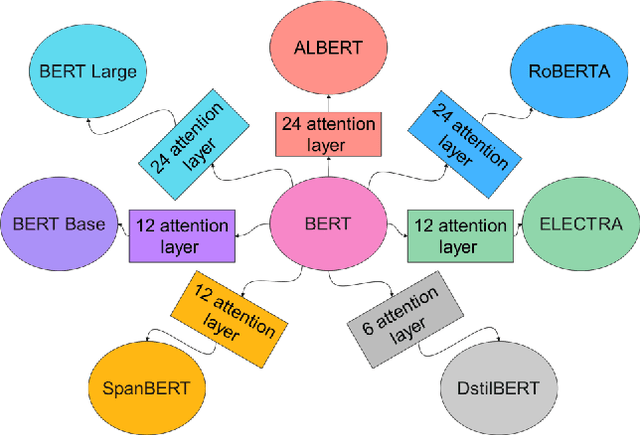

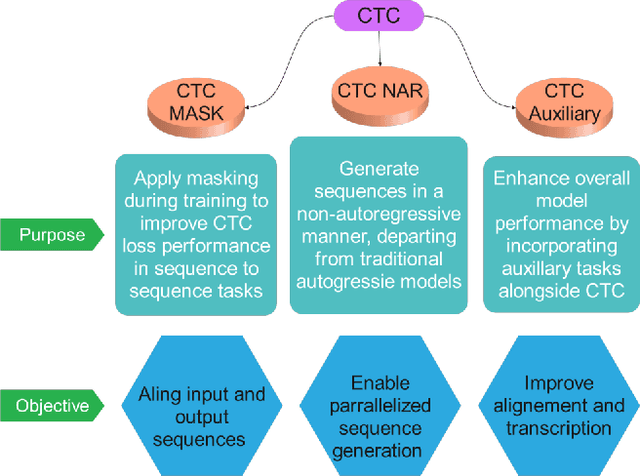

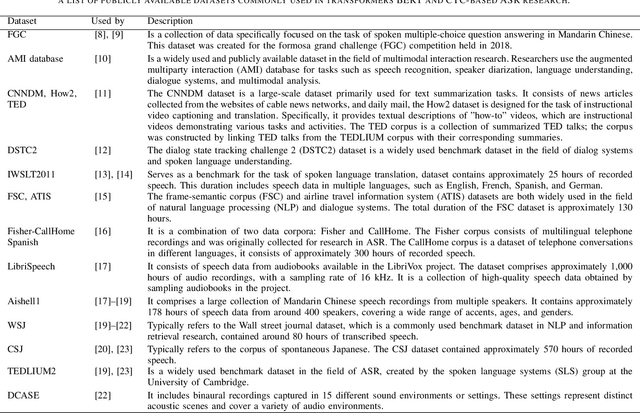

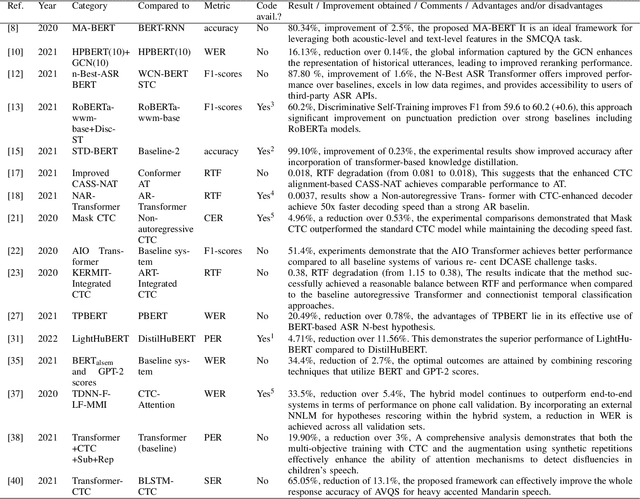

Abstract:This review paper provides a comprehensive analysis of recent advances in automatic speech recognition (ASR) with bidirectional encoder representations from transformers (BERT) and connectionist temporal classification (CTC) transformers. The paper first introduces the fundamental concepts of ASR and discusses the challenges associated with it. It then explains the architecture of BERT and CTC transformers and their potential applications in ASR. The paper reviews several studies that have used these models for speech recognition tasks and discusses the results obtained. Additionally, the paper highlights the limitations of these models and outlines potential areas for further research. All in all, this review provides valuable insights for researchers and practitioners who are interested in ASR with BERT and CTC transformers.

Applications of Knowledge Distillation in Remote Sensing: A Survey

Sep 18, 2024

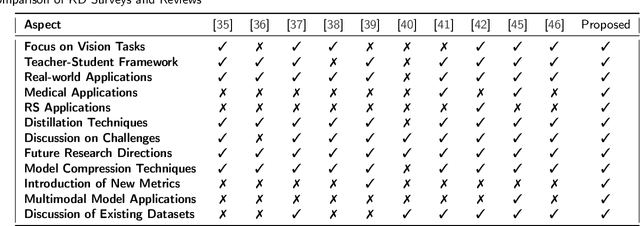

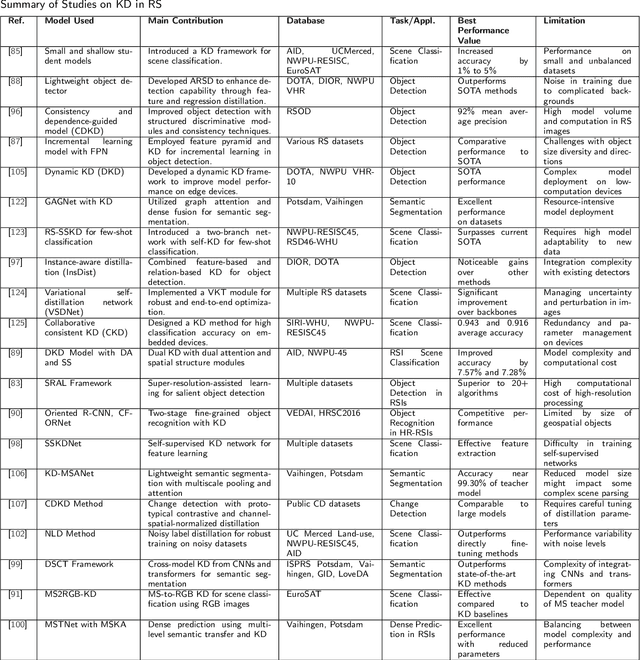

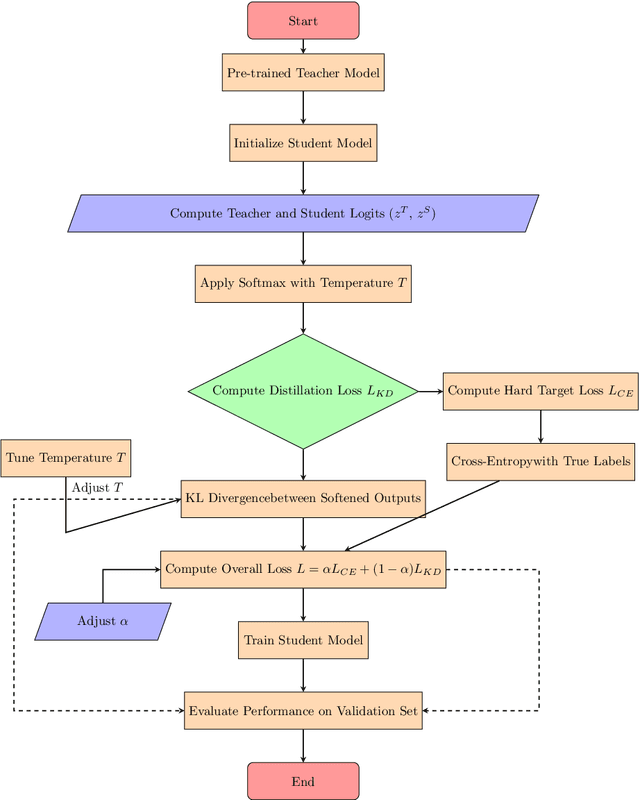

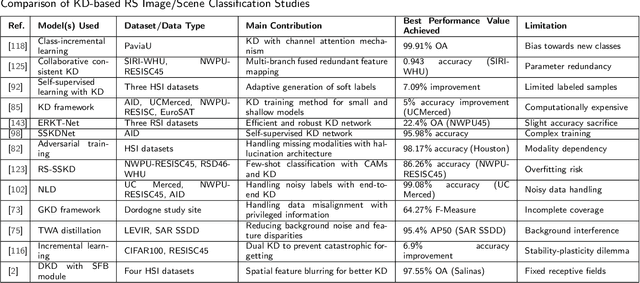

Abstract:With the ever-growing complexity of models in the field of remote sensing (RS), there is an increasing demand for solutions that balance model accuracy with computational efficiency. Knowledge distillation (KD) has emerged as a powerful tool to meet this need, enabling the transfer of knowledge from large, complex models to smaller, more efficient ones without significant loss in performance. This review article provides an extensive examination of KD and its innovative applications in RS. KD, a technique developed to transfer knowledge from a complex, often cumbersome model (teacher) to a more compact and efficient model (student), has seen significant evolution and application across various domains. Initially, we introduce the fundamental concepts and historical progression of KD methods. The advantages of employing KD are highlighted, particularly in terms of model compression, enhanced computational efficiency, and improved performance, which are pivotal for practical deployments in RS scenarios. The article provides a comprehensive taxonomy of KD techniques, where each category is critically analyzed to demonstrate the breadth and depth of the alternative options, and illustrates specific case studies that showcase the practical implementation of KD methods in RS tasks, such as instance segmentation and object detection. Further, the review discusses the challenges and limitations of KD in RS, including practical constraints and prospective future directions, providing a comprehensive overview for researchers and practitioners in the field of RS. Through this organization, the paper not only elucidates the current state of research in KD but also sets the stage for future research opportunities, thereby contributing significantly to both academic research and real-world applications.
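The teacher-to-student transfer described in the abstract is often introduced through the classic soft-target loss, sketched below in plain Python. This is only one common formulation and is not taken from the survey: the function names, the temperature of 2.0, the weighting `alpha`, and the example logits are assumptions for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """Weighted sum of a soft-target KL term and a hard-label cross-entropy.

    The KL term is scaled by temperature**2, a common choice that keeps
    its gradient magnitude comparable across temperatures.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))
    ce = -math.log(softmax(student_logits)[label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce

# Hypothetical logits for a 3-class problem.
matched = distillation_loss([2.0, 1.0, 0.0], [2.0, 1.0, 0.0], label=0)
mismatched = distillation_loss([2.0, 1.0, 0.0], [0.0, 1.0, 2.0], label=0)
print(matched < mismatched)  # True: disagreeing with the teacher costs more
```

When student and teacher logits agree, the KL term vanishes and only the hard-label term remains.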

PAPR Reduction based on Deep Learning Autoencoder in Coherent Optical OFDM Systems

Aug 26, 2024

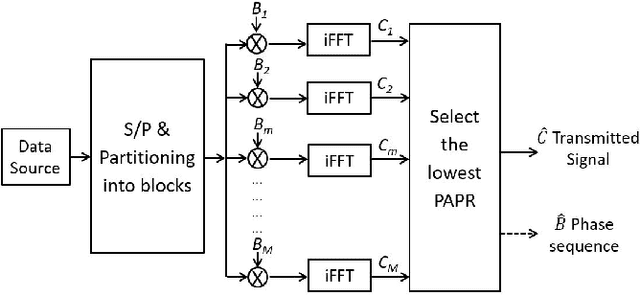

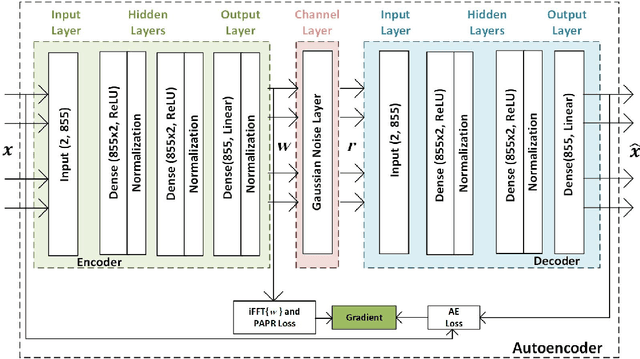

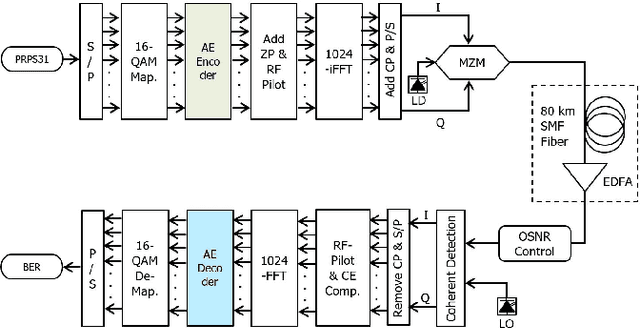

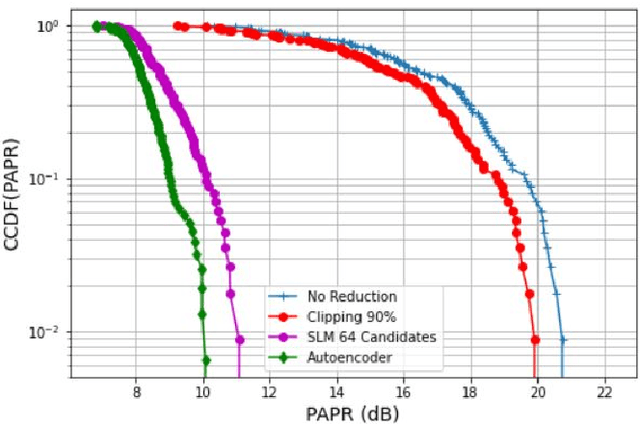

Abstract:This paper presents an innovative approach to reducing Peak-to-Average Power Ratio (PAPR) in Coherent Optical Orthogonal Frequency Division Multiplexing (CO-OFDM) systems. The proposed deep learning autoencoder-based model eliminates the computational complexity of existing PAPR reduction techniques, such as Selective Mapping (SLM), by leveraging a novel decoder architecture at the receiver. In addition, no side information is needed in our approach, unlike SLM, which requires knowledge of the PAPR distribution. Simulation results demonstrate significant improvements in both PAPR reduction and Bit Error Rate (BER) performance compared to traditional techniques. It achieves error-free transmission with over 10 dB PAPR reduction compared to the unmitigated case and a 1 dB gain over the SLM technique. Furthermore, our approach exhibits robustness against noise and nonlinearity effects, enabling reliable transmission over optical channels with varying levels of impairment. The proposed technique has far-reaching implications for next-generation optical communication systems, where efficient PAPR reduction is crucial for ensuring reliable data transfer.
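For readers unfamiliar with the quantity being reduced, the sketch below computes the PAPR of a single OFDM symbol from its frequency-domain subcarrier values. It illustrates the definition only, not the paper's autoencoder: the helper names `idft` and `papr_db`, the 8-subcarrier size, and the absence of oversampling are all assumptions.

```python
import cmath
import math

def idft(symbols):
    """Naive inverse DFT mapping subcarrier symbols to time-domain samples."""
    n = len(symbols)
    return [
        sum(s * cmath.exp(2j * cmath.pi * k * t / n)
            for k, s in enumerate(symbols)) / n
        for t in range(n)
    ]

def papr_db(samples):
    """Peak-to-average power ratio of the time-domain samples, in dB."""
    powers = [abs(x) ** 2 for x in samples]
    return 10 * math.log10(max(powers) / (sum(powers) / len(powers)))

# Identical symbols on all 8 subcarriers add up coherently into one sample,
# giving the worst case of 10*log10(8) dB for this symbol size.
worst = papr_db(idft([1 + 0j] * 8))

# A single active subcarrier yields a constant-envelope signal (about 0 dB).
flat = papr_db(idft([1 + 0j] + [0j] * 7))

print(round(worst, 2), round(abs(flat), 2))
```

High peaks like the worst case above are what drive amplifiers and modulators into their nonlinear region, which is why PAPR reduction matters for OFDM links.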
