Xu Wu

North Carolina State University

Prediction of Critical Heat Flux in Rod Bundles Using Tube-Based Hybrid Machine Learning Models in CTF

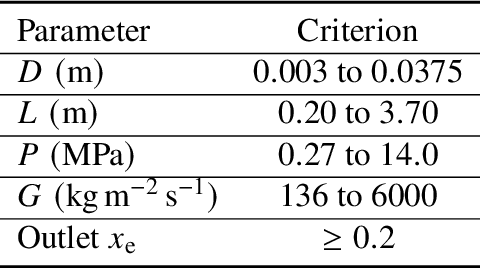

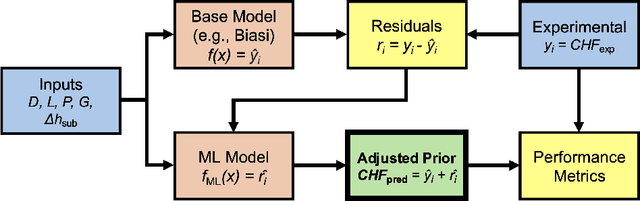

Feb 03, 2026Abstract:The prediction of critical heat flux (CHF) using machine learning (ML) approaches has become a highly active research activity in recent years, the goal of which is to build models more accurate than current conventional approaches such as empirical correlations or lookup tables (LUTs). Previous work developed and deployed tube-based pure and hybrid ML models in the CTF subchannel code, however, full-scale reactor core simulations require the use of rod bundle geometries. Unlike isolated subchannels, rod bundles experience complex thermal hydraulic phenomena such as channel crossflow, spacer grid losses, and effects from unheated conductors. This study investigates the generalization of ML-based CHF prediction models in rod bundles after being trained on tube-based CHF data. A purely data-driven DNN and two hybrid bias-correction models were implemented in the CTF subchannel code and used to predict CHF location and magnitude in the Combustion Engineering 5-by-5 bundle CHF test series. The W-3 correlation, Bowring correlation, and Groeneveld LUT were used as baseline comparators. On average, all three ML-based approaches produced magnitude and location predictions more accurate than the baseline models, with the hybrid LUT model exhibiting the most favorable performance metrics.

Vision KAN: Towards an Attention-Free Backbone for Vision with Kolmogorov-Arnold Networks

Jan 29, 2026Abstract:Attention mechanisms have become a key module in modern vision backbones due to their ability to model long-range dependencies. However, their quadratic complexity in sequence length and the difficulty of interpreting attention weights limit both scalability and clarity. Recent attention-free architectures demonstrate that strong performance can be achieved without pairwise attention, motivating the search for alternatives. In this work, we introduce Vision KAN (ViK), an attention-free backbone inspired by the Kolmogorov-Arnold Networks. At its core lies MultiPatch-RBFKAN, a unified token mixer that combines (a) patch-wise nonlinear transform with Radial Basis Function-based KANs, (b) axis-wise separable mixing for efficient local propagation, and (c) low-rank global mapping for long-range interaction. Employing as a drop-in replacement for attention modules, this formulation tackles the prohibitive cost of full KANs on high-resolution features by adopting a patch-wise grouping strategy with lightweight operators to restore cross-patch dependencies. Experiments on ImageNet-1K show that ViK achieves competitive accuracy with linear complexity, demonstrating the potential of KAN-based token mixing as an efficient and theoretically grounded alternative to attention.

FaNe: Towards Fine-Grained Cross-Modal Contrast with False-Negative Reduction and Text-Conditioned Sparse Attention

Nov 15, 2025Abstract:Medical vision-language pre-training (VLP) offers significant potential for advancing medical image understanding by leveraging paired image-report data. However, existing methods are limited by Fa}lse Negatives (FaNe) induced by semantically similar texts and insufficient fine-grained cross-modal alignment. To address these limitations, we propose FaNe, a semantic-enhanced VLP framework. To mitigate false negatives, we introduce a semantic-aware positive pair mining strategy based on text-text similarity with adaptive normalization. Furthermore, we design a text-conditioned sparse attention pooling module to enable fine-grained image-text alignment through localized visual representations guided by textual cues. To strengthen intra-modal discrimination, we develop a hard-negative aware contrastive loss that adaptively reweights semantically similar negatives. Extensive experiments on five downstream medical imaging benchmarks demonstrate that FaNe achieves state-of-the-art performance across image classification, object detection, and semantic segmentation, validating the effectiveness of our framework.

A Three-Stage Bayesian Transfer Learning Framework to Improve Predictions in Data-Scarce Domains

Oct 30, 2025Abstract:The use of ML in engineering has grown steadily to support a wide array of applications. Among these methods, deep neural networks have been widely adopted due to their performance and accessibility, but they require large, high-quality datasets. Experimental data are often sparse, noisy, or insufficient to build resilient data-driven models. Transfer learning, which leverages relevant data-abundant source domains to assist learning in data-scarce target domains, has shown efficacy. Parameter transfer, where pretrained weights are reused, is common but degrades under large domain shifts. Domain-adversarial neural networks (DANNs) help address this issue by learning domain-invariant representations, thereby improving transfer under greater domain shifts in a semi-supervised setting. However, DANNs can be unstable during training and lack a native means for uncertainty quantification. This study introduces a fully-supervised three-stage framework, the staged Bayesian domain-adversarial neural network (staged B-DANN), that combines parameter transfer and shared latent space adaptation. In Stage 1, a deterministic feature extractor is trained on the source domain. This feature extractor is then adversarially refined using a DANN in Stage 2. In Stage 3, a Bayesian neural network is built on the adapted feature extractor for fine-tuning on the target domain to handle conditional shifts and yield calibrated uncertainty estimates. This staged B-DANN approach was first validated on a synthetic benchmark, where it was shown to significantly outperform standard transfer techniques. It was then applied to the task of predicting critical heat flux in rectangular channels, leveraging data from tube experiments as the source domain. The results of this study show that the staged B-DANN method can improve predictive accuracy and generalization, potentially assisting other domains in nuclear engineering.

Generalist vs Specialist Time Series Foundation Models: Investigating Potential Emergent Behaviors in Assessing Human Health Using PPG Signals

Oct 16, 2025

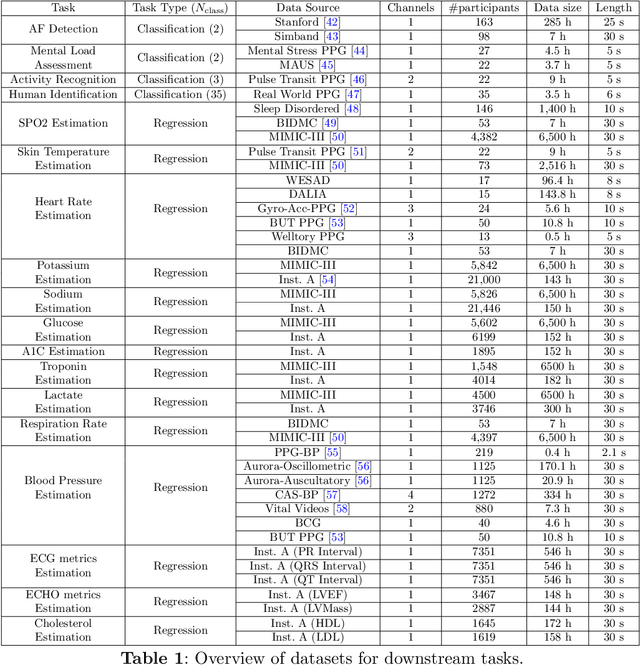

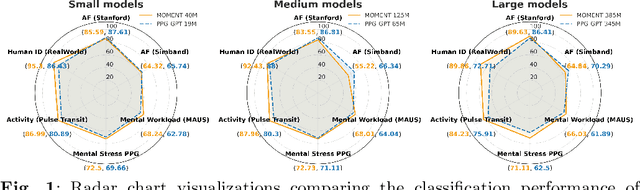

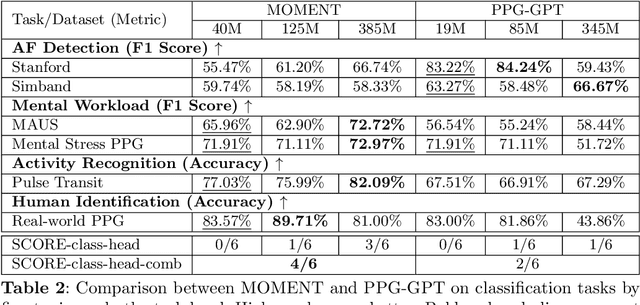

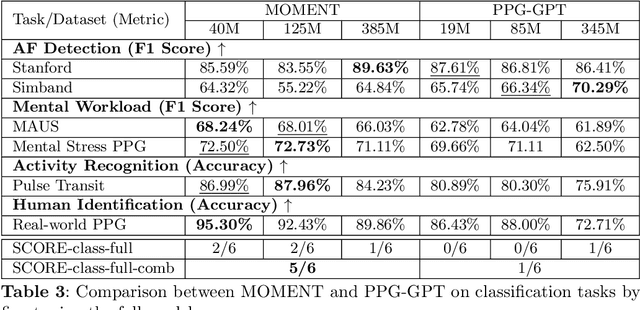

Abstract:Foundation models are large-scale machine learning models that are pre-trained on massive amounts of data and can be adapted for various downstream tasks. They have been extensively applied to tasks in Natural Language Processing and Computer Vision with models such as GPT, BERT, and CLIP. They are now also increasingly gaining attention in time-series analysis, particularly for physiological sensing. However, most time series foundation models are specialist models - with data in pre-training and testing of the same type, such as Electrocardiogram, Electroencephalogram, and Photoplethysmogram (PPG). Recent works, such as MOMENT, train a generalist time series foundation model with data from multiple domains, such as weather, traffic, and electricity. This paper aims to conduct a comprehensive benchmarking study to compare the performance of generalist and specialist models, with a focus on PPG signals. Through an extensive suite of total 51 tasks covering cardiac state assessment, laboratory value estimation, and cross-modal inference, we comprehensively evaluate both models across seven dimensions, including win score, average performance, feature quality, tuning gain, performance variance, transferability, and scalability. These metrics jointly capture not only the models' capability but also their adaptability, robustness, and efficiency under different fine-tuning strategies, providing a holistic understanding of their strengths and limitations for diverse downstream scenarios. In a full-tuning scenario, we demonstrate that the specialist model achieves a 27% higher win score. Finally, we provide further analysis on generalization, fairness, attention visualizations, and the importance of training data choice.

Nuclear Data Adjustment for Nonlinear Applications in the OECD/NEA WPNCS SG14 Benchmark -- A Bayesian Inverse UQ-based Approach for Data Assimilation

Sep 09, 2025Abstract:The Organization for Economic Cooperation and Development (OECD) Working Party on Nuclear Criticality Safety (WPNCS) proposed a benchmark exercise to assess the performance of current nuclear data adjustment techniques applied to nonlinear applications and experiments with low correlation to applications. This work introduces Bayesian Inverse Uncertainty Quantification (IUQ) as a method for nuclear data adjustments in this benchmark, and compares IUQ to the more traditional methods of Generalized Linear Least Squares (GLLS) and Monte Carlo Bayes (MOCABA). Posterior predictions from IUQ showed agreement with GLLS and MOCABA for linear applications. When comparing GLLS, MOCABA, and IUQ posterior predictions to computed model responses using adjusted parameters, we observe that GLLS predictions fail to replicate computed response distributions for nonlinear applications, while MOCABA shows near agreement, and IUQ uses computed model responses directly. We also discuss observations on why experiments with low correlation to applications can be informative to nuclear data adjustments and identify some properties useful in selecting experiments for inclusion in nuclear data adjustment. Performance in this benchmark indicates potential for Bayesian IUQ in nuclear data adjustments.

Seeing Sound, Hearing Sight: Uncovering Modality Bias and Conflict of AI models in Sound Localization

May 16, 2025Abstract:Imagine hearing a dog bark and turning toward the sound only to see a parked car, while the real, silent dog sits elsewhere. Such sensory conflicts test perception, yet humans reliably resolve them by prioritizing sound over misleading visuals. Despite advances in multimodal AI integrating vision and audio, little is known about how these systems handle cross-modal conflicts or whether they favor one modality. In this study, we systematically examine modality bias and conflict resolution in AI sound localization. We assess leading multimodal models and benchmark them against human performance in psychophysics experiments across six audiovisual conditions, including congruent, conflicting, and absent cues. Humans consistently outperform AI, demonstrating superior resilience to conflicting or missing visuals by relying on auditory information. In contrast, AI models often default to visual input, degrading performance to near chance levels. To address this, we finetune a state-of-the-art model using a stereo audio-image dataset generated via 3D simulations. Even with limited training data, the refined model surpasses existing benchmarks. Notably, it also mirrors human-like horizontal localization bias favoring left-right precision-likely due to the stereo audio structure reflecting human ear placement. These findings underscore how sensory input quality and system architecture shape multimodal representation accuracy.

Physics-Based Hybrid Machine Learning for Critical Heat Flux Prediction with Uncertainty Quantification

Feb 26, 2025

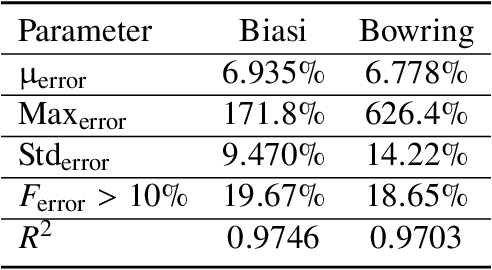

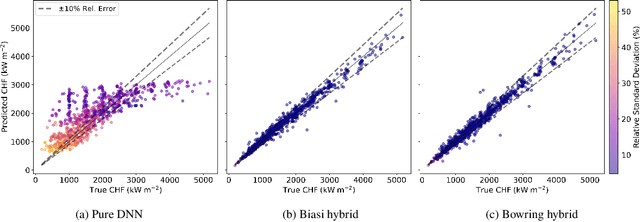

Abstract:Critical heat flux is a key quantity in boiling system modeling due to its impact on heat transfer and component temperature and performance. This study investigates the development and validation of an uncertainty-aware hybrid modeling approach that combines machine learning with physics-based models in the prediction of critical heat flux in nuclear reactors for cases of dryout. Two empirical correlations, Biasi and Bowring, were employed with three machine learning uncertainty quantification techniques: deep neural network ensembles, Bayesian neural networks, and deep Gaussian processes. A pure machine learning model without a base model served as a baseline for comparison. This study examines the performance and uncertainty of the models under both plentiful and limited training data scenarios using parity plots, uncertainty distributions, and calibration curves. The results indicate that the Biasi hybrid deep neural network ensemble achieved the most favorable performance (with a mean absolute relative error of 1.846% and stable uncertainty estimates), particularly in the plentiful data scenario. The Bayesian neural network models showed slightly higher error and uncertainty but superior calibration. By contrast, deep Gaussian process models underperformed by most metrics. All hybrid models outperformed pure machine learning configurations, demonstrating resistance against data scarcity.

Native Fortran Implementation of TensorFlow-Trained Deep and Bayesian Neural Networks

Feb 07, 2025Abstract:Over the past decade, the investigation of machine learning (ML) within the field of nuclear engineering has grown significantly. With many approaches reaching maturity, the next phase of investigation will determine the feasibility and usefulness of ML model implementation in a production setting. Several of the codes used for reactor design and assessment are primarily written in the Fortran language, which is not immediately compatible with TensorFlow-trained ML models. This study presents a framework for implementing deep neural networks (DNNs) and Bayesian neural networks (BNNs) in Fortran, allowing for native execution without TensorFlow's C API, Python runtime, or ONNX conversion. Designed for ease of use and computational efficiency, the framework can be implemented in any Fortran code, supporting iterative solvers and UQ via ensembles or BNNs. Verification was performed using a two-input, one-output test case composed of a noisy sinusoid to compare Fortran-based predictions to those from TensorFlow. The DNN predictions showed negligible differences and achieved a 19.6x speedup, whereas the BNN predictions exhibited minor disagreement, plausibly due to differences in random number generation. An 8.0x speedup was noted for BNN inference. The approach was then further verified on a nuclear-relevant problem predicting critical heat flux (CHF), which demonstrated similar behavior along with significant computational gains. Discussion regarding the framework's successful integration into the CTF thermal-hydraulics code is also included, outlining its practical usefulness. Overall, this framework was shown to be effective at implementing both DNN and BNN model inference within Fortran, allowing for the continued study of ML-based methods in real-world nuclear applications.

An Investigation on Machine Learning Predictive Accuracy Improvement and Uncertainty Reduction using VAE-based Data Augmentation

Oct 24, 2024

Abstract:The confluence of ultrafast computers with large memory, rapid progress in Machine Learning (ML) algorithms, and the availability of large datasets place multiple engineering fields at the threshold of dramatic progress. However, a unique challenge in nuclear engineering is data scarcity because experimentation on nuclear systems is usually more expensive and time-consuming than most other disciplines. One potential way to resolve the data scarcity issue is deep generative learning, which uses certain ML models to learn the underlying distribution of existing data and generate synthetic samples that resemble the real data. In this way, one can significantly expand the dataset to train more accurate predictive ML models. In this study, our objective is to evaluate the effectiveness of data augmentation using variational autoencoder (VAE)-based deep generative models. We investigated whether the data augmentation leads to improved accuracy in the predictions of a deep neural network (DNN) model trained using the augmented data. Additionally, the DNN prediction uncertainties are quantified using Bayesian Neural Networks (BNN) and conformal prediction (CP) to assess the impact on predictive uncertainty reduction. To test the proposed methodology, we used TRACE simulations of steady-state void fraction data based on the NUPEC Boiling Water Reactor Full-size Fine-mesh Bundle Test (BFBT) benchmark. We found that augmenting the training dataset using VAEs has improved the DNN model's predictive accuracy, improved the prediction confidence intervals, and reduced the prediction uncertainties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge