Tian-Ao Ren

SLAP: Slapband-based Autonomous Perching Drone with Failure Recovery for Vertical Tree Trunks

Jan 01, 2026Abstract:Perching allows unmanned aerial vehicles (UAVs) to reduce energy consumption, remain anchored for surface sampling operations, or stably survey their surroundings. Previous efforts for perching on vertical surfaces have predominantly focused on lightweight mechanical design solutions with relatively scant system-level integration. Furthermore, perching strategies for vertical surfaces commonly require high-speed, aggressive landing operations that are dangerous for a surveyor drone with sensitive electronics onboard. This work presents the preliminary investigation of a perching approach suitable for larger drones that both gently perches on vertical tree trunks and reacts and recovers from perch failures. The system in this work, called SLAP, consists of vision-based perch site detector, an IMU (inertial-measurement-unit)-based perch failure detector, an attitude controller for soft perching, an optical close-range detection system, and a fast active elastic gripper with microspines made from commercially-available slapbands. We validated this approach on a modified 1.2 kg commercial quadrotor with component and system analysis. Initial human-in-the-loop autonomous indoor flight experiments achieved a 75% perch success rate on a real oak tree segment across 20 flights, and 100% perch failure recovery across 2 flights with induced failures.

Multimodal Sensing for Robot-Assisted Sub-Tissue Feature Detection in Physiotherapy Palpation

Dec 24, 2025Abstract:Robotic palpation relies on force sensing, but force signals in soft-tissue environments are variable and cannot reliably reveal subtle subsurface features. We present a compact multimodal sensor that integrates high-resolution vision-based tactile imaging with a 6-axis force-torque sensor. In experiments on silicone phantoms with diverse subsurface tendon geometries, force signals alone frequently produce ambiguous responses, while tactile images reveal clear structural differences in presence, diameter, depth, crossings, and multiplicity. Yet accurate force tracking remains essential for maintaining safe, consistent contact during physiotherapeutic interaction. Preliminary results show that combining tactile and force modalities enables robust subsurface feature detection and controlled robotic palpation.

TacCap: A Wearable FBG-Based Tactile Sensor for Seamless Human-to-Robot Skill Transfer

Mar 03, 2025Abstract:Tactile sensing is essential for dexterous manipulation, yet large-scale human demonstration datasets lack tactile feedback, limiting their effectiveness in skill transfer to robots. To address this, we introduce TacCap, a wearable Fiber Bragg Grating (FBG)-based tactile sensor designed for seamless human-to-robot transfer. TacCap is lightweight, durable, and immune to electromagnetic interference, making it ideal for real-world data collection. We detail its design and fabrication, evaluate its sensitivity, repeatability, and cross-sensor consistency, and assess its effectiveness through grasp stability prediction and ablation studies. Our results demonstrate that TacCap enables transferable tactile data collection, bridging the gap between human demonstrations and robotic execution. To support further research and development, we open-source our hardware design and software.

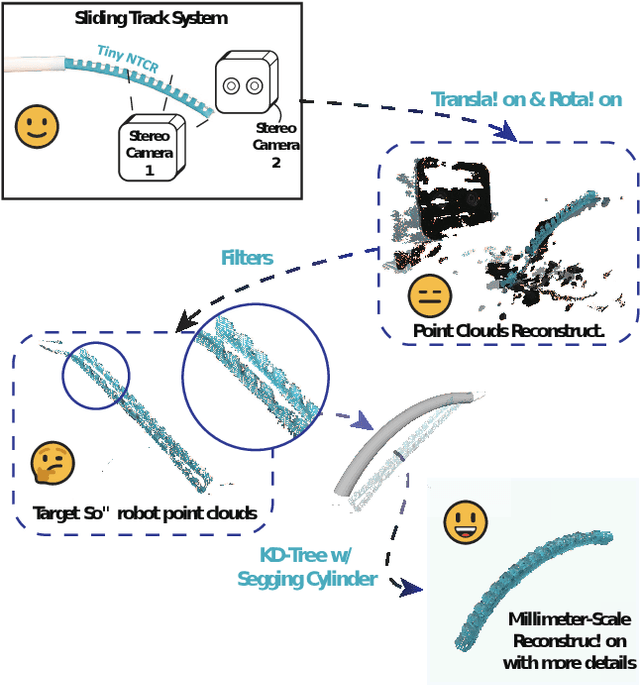

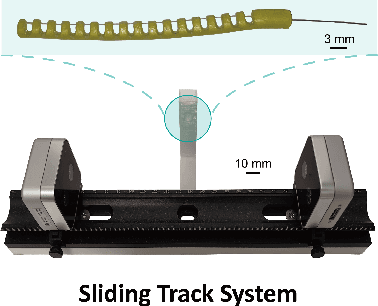

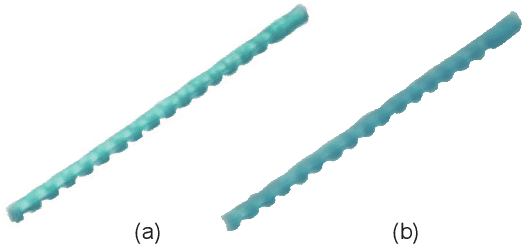

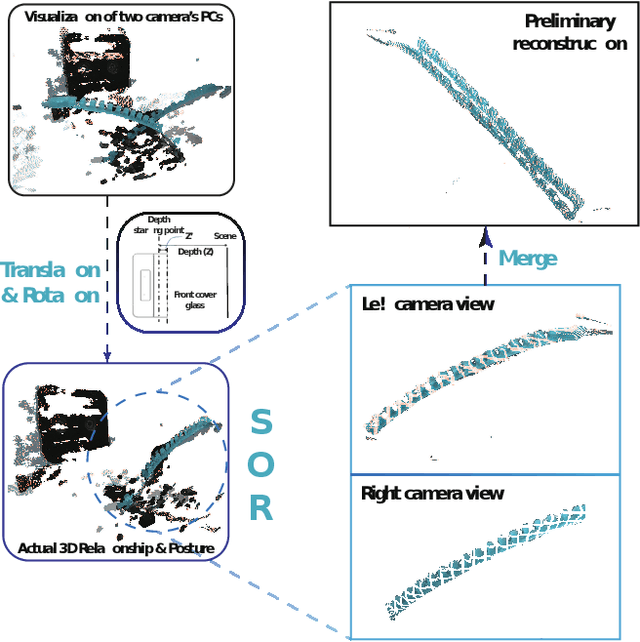

Three-dimensional Morphological Reconstruction of Millimeter-Scale Soft Continuum Robots based on Dual-Stereo-Vision

Aug 06, 2024

Abstract:Continuum robots can be miniaturized to just a few millimeters in diameter. Among these, notched tubular continuum robots (NTCR) show great potential in many delicate applications. Existing works in robotic modeling focus on kinematics and dynamics but still face challenges in reproducing the robot's morphology -- a significant factor that can expand the research landscape of continuum robots, especially for those with asymmetric continuum structures. This paper proposes a dual stereo vision-based method for the three-dimensional morphological reconstruction of millimeter-scale NTCRs. The method employs two oppositely located stationary binocular cameras to capture the point cloud of the NTCR, then utilizes predefined geometry as a reference for the KD tree method to relocate the capture point clouds, resulting in a morphologically correct NTCR despite the low-quality raw point cloud collection. The method has been proved feasible for an NTCR with a 3.5 mm diameter, capturing 14 out of 16 notch features, with the measurements generally centered around the standard of 1.5 mm, demonstrating the capability of revealing morphological details. Our proposed method paves the way for 3D morphological reconstruction of millimeter-scale soft robots for further self-modeling study.

Sim-to-Real Segmentation in Robot-assisted Transoral Tracheal Intubation

May 19, 2023Abstract:Robotic-assisted tracheal intubation requires the robot to distinguish anatomical features like an experienced physician using deep-learning techniques. However, real datasets of oropharyngeal organs are limited due to patient privacy issues, making it challenging to train deep-learning models for accurate image segmentation. We hereby consider generating a new data modality through a virtual environment to assist the training process. Specifically, this work introduces a virtual dataset generated by the Simulation Open Framework Architecture (SOFA) framework to overcome the limited availability of actual endoscopic images. We also propose a domain adaptive Sim-to-Real method for oropharyngeal organ image segmentation, which employs an image blending strategy called IoU-Ranking Blend (IRB) and style-transfer techniques to address discrepancies between datasets. Experimental results demonstrate the superior performance of the proposed approach with domain adaptive models, improving segmentation accuracy and training stability. In the practical application, the trained segmentation model holds great promise for robot-assisted intubation surgery and intelligent surgical navigation.

Domain Adaptive Sim-to-Real Segmentation of Oropharyngeal Organs

May 18, 2023Abstract:Video-assisted transoral tracheal intubation (TI) necessitates using an endoscope that helps the physician insert a tracheal tube into the glottis instead of the esophagus. The growing trend of robotic-assisted TI would require a medical robot to distinguish anatomical features like an experienced physician which can be imitated by utilizing supervised deep-learning techniques. However, the real datasets of oropharyngeal organs are often inaccessible due to limited open-source data and patient privacy. In this work, we propose a domain adaptive Sim-to-Real framework called IoU-Ranking Blend-ArtFlow (IRB-AF) for image segmentation of oropharyngeal organs. The framework includes an image blending strategy called IoU-Ranking Blend (IRB) and style-transfer method ArtFlow. Here, IRB alleviates the problem of poor segmentation performance caused by significant datasets domain differences; while ArtFlow is introduced to reduce the discrepancies between datasets further. A virtual oropharynx image dataset generated by the SOFA framework is used as the learning subject for semantic segmentation to deal with the limited availability of actual endoscopic images. We adapted IRB-AF with the state-of-the-art domain adaptive segmentation models. The results demonstrate the superior performance of our approach in further improving the segmentation accuracy and training stability.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge