Theodore Tsesmelis

Re-assembling the past: The RePAIR dataset and benchmark for real world 2D and 3D puzzle solving

Oct 31, 2024

Abstract: This paper proposes the RePAIR dataset, a challenging benchmark for testing modern computational and data-driven methods for puzzle-solving and reassembly tasks. Our dataset has unique properties that are uncommon in current benchmarks for 2D and 3D puzzle solving. The fragments and fractures are realistic, caused by the collapse of a fresco during a World War II bombing at the Pompeii archaeological park. The fragments are also eroded and have missing pieces with irregular shapes and different dimensions, further challenging reassembly algorithms. The dataset is multi-modal, providing high-resolution images with characteristic pictorial elements, detailed 3D scans of the fragments, and metadata annotated by archaeologists. Ground truth was generated through several years of continuous fieldwork, including the excavation and cleaning of each fragment, followed by manual puzzle solving by archaeologists of a subset of approximately 1,000 pieces out of the 16,000 available. After all fragments were digitized in 3D, a benchmark was prepared to challenge current reassembly and puzzle-solving methods, which often solve simpler synthetic scenarios. The tested baselines show that there is clearly a gap to fill in solving this computationally complex problem.

6DGS: 6D Pose Estimation from a Single Image and a 3D Gaussian Splatting Model

Jul 22, 2024

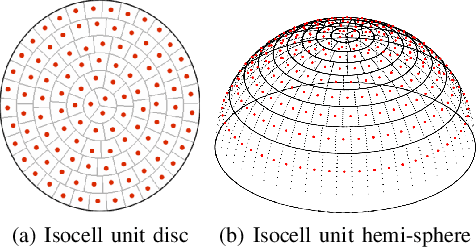

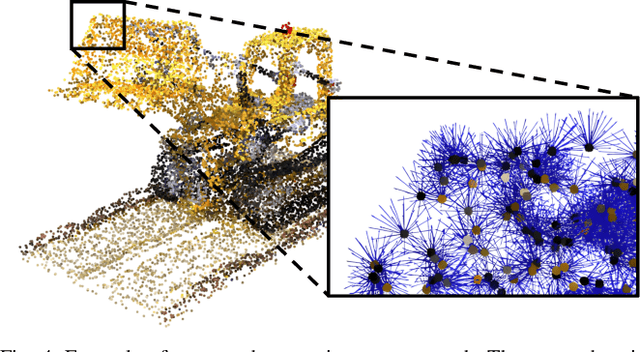

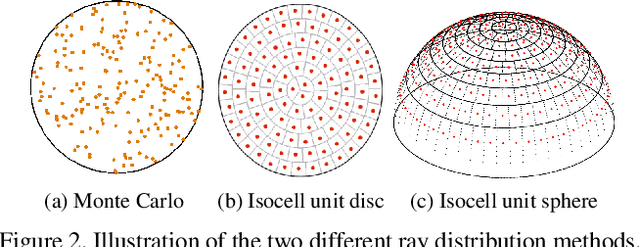

Abstract: We propose 6DGS to estimate the camera pose of a target RGB image given a 3D Gaussian Splatting (3DGS) model representing the scene. 6DGS avoids the iterative process typical of analysis-by-synthesis methods (e.g., iNeRF), which also require an initial camera pose in order to converge. Instead, our method estimates a 6DoF pose by inverting the 3DGS rendering process. Starting from the object surface, we define a radiant Ellicell that uniformly generates rays departing from each of the ellipsoids that parameterize the 3DGS model. Each Ellicell ray is associated with the rendering parameters of its ellipsoid, which are in turn used to obtain the best bindings between the target image pixels and the cast rays. These pixel-ray bindings are then ranked to select the best-scoring bundle of rays, whose intersection provides the camera center and, in turn, the camera rotation. The proposed solution removes the need for an a priori pose for initialization, and it solves 6DoF pose estimation in closed form, without iterations. Moreover, compared to existing Novel View Synthesis (NVS) baselines for pose estimation, 6DGS improves the overall average rotational accuracy by 12% and the translation accuracy by 22% on real scenes, despite not requiring an initial pose. At the same time, our method runs in near real time, reaching 15 fps on consumer hardware.
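
The step where the camera center is taken as the intersection of a bundle of rays can be illustrated with a generic least-squares ray intersection. This is a minimal sketch, not the 6DGS implementation: it assumes the selected rays are already given as origins (points on the ellipsoids) and directions, treats each ray as an infinite line, and returns the point with the smallest summed squared distance to all of them. The function name `intersect_rays` is hypothetical.

```python
import numpy as np

def intersect_rays(origins: np.ndarray, directions: np.ndarray) -> np.ndarray:
    """Return the 3D point minimizing the summed squared distance to a bundle of rays.

    origins:    (N, 3) ray start points
    directions: (N, 3) ray directions (need not be unit length)
    Rays are treated as infinite lines; at least two must be non-parallel.
    """
    d = directions / np.linalg.norm(directions, axis=1, keepdims=True)
    A = np.zeros((3, 3))
    b = np.zeros(3)
    for o, u in zip(origins, d):
        # Projector onto the plane orthogonal to the ray direction:
        # the distance from x to the ray's line is ||P (x - o)||.
        P = np.eye(3) - np.outer(u, u)
        A += P
        b += P @ o
    # Normal equations of sum_i ||P_i (x - o_i)||^2 = min, solved in closed form.
    return np.linalg.solve(A, b)
```

Because the solution comes from one 3x3 linear solve, no initial guess or iteration is needed, which mirrors the closed-form property the abstract emphasizes. With noisy bindings, the ranking step described above would matter: outlier rays pull this least-squares estimate directly.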

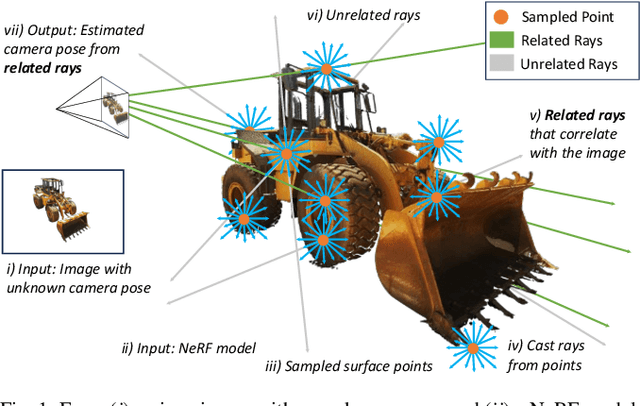

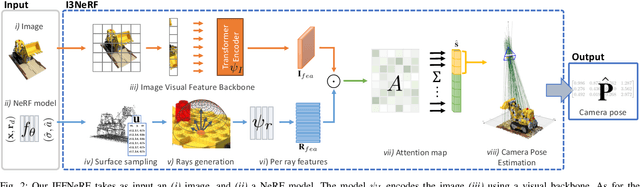

IFFNeRF: Initialisation Free and Fast 6DoF pose estimation from a single image and a NeRF model

Mar 19, 2024

Abstract: We introduce IFFNeRF to estimate the six-degrees-of-freedom (6DoF) camera pose of a given image, building on the Neural Radiance Fields (NeRF) formulation. IFFNeRF is specifically designed to operate in real time and eliminates the need for an initial pose guess close to the sought solution. IFFNeRF uses the Metropolis-Hastings algorithm to sample surface points from within the NeRF model. From these sampled points, we cast rays and deduce the color of each ray through pixel-level view synthesis. The camera pose can then be estimated as the solution to a least-squares problem by selecting correspondences between the query image and the resulting bundle of rays. We facilitate this process with a learned attention mechanism that bridges the query image embedding and the embeddings of the parameterized rays, thereby matching the rays pertinent to the image. In synthetic and real evaluation settings, we show that our method improves angular and translation accuracy by 80.1% and 67.3%, respectively, compared to iNeRF, while running at 34 fps on consumer hardware and not requiring an initial pose guess.
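
To make the surface-point sampling step concrete, here is a minimal random-walk Metropolis-Hastings sketch. It assumes a hypothetical `surface_density` that stands in for a NeRF volume density and simply peaks on the unit sphere; the proposal distribution, step size, and starting point are illustrative choices, not details taken from IFFNeRF.

```python
import numpy as np

def surface_density(x: np.ndarray) -> float:
    """Hypothetical stand-in for a NeRF volume density: peaks on the unit sphere."""
    r = np.linalg.norm(x)
    return float(np.exp(-((r - 1.0) ** 2) / (2 * 0.05 ** 2)))

def metropolis_hastings(density, x0, n_samples, step=0.1, seed=0):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal.

    x0 must have nonzero density so the acceptance ratio is well defined.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    p = density(x)
    samples = []
    for _ in range(n_samples):
        proposal = x + step * rng.normal(size=x.shape)
        p_new = density(proposal)
        # The proposal is symmetric, so the acceptance ratio reduces to p_new / p.
        if rng.random() < p_new / p:
            x, p = proposal, p_new
        samples.append(x.copy())
    return np.array(samples)
```

For example, `metropolis_hastings(surface_density, [1.0, 0.0, 0.0], 5000)` returns a (5000, 3) chain whose points cluster around radius 1. Only density ratios are needed, so an unnormalized density (as a NeRF provides) is sufficient; the sampled points would then serve as ray origins for the subsequent view-synthesis and matching steps.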

LIGHTS: LIGHT Specularity Dataset for specular detection in Multi-view

Jan 26, 2021

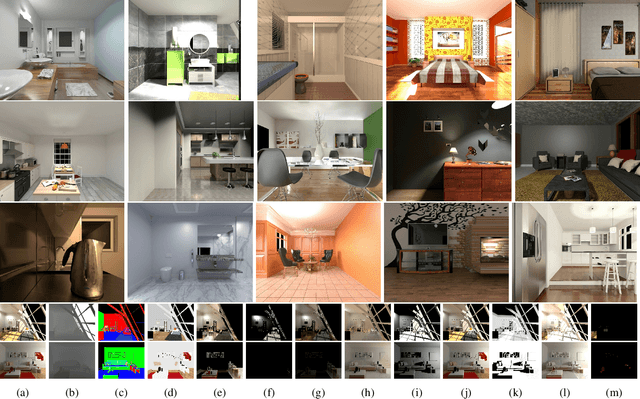

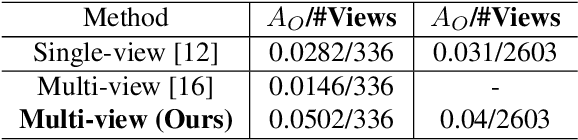

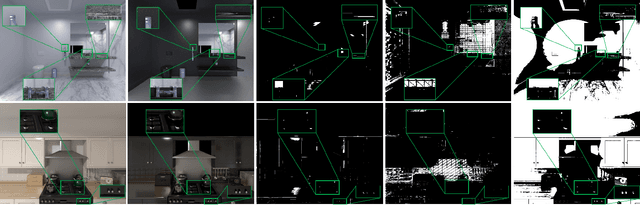

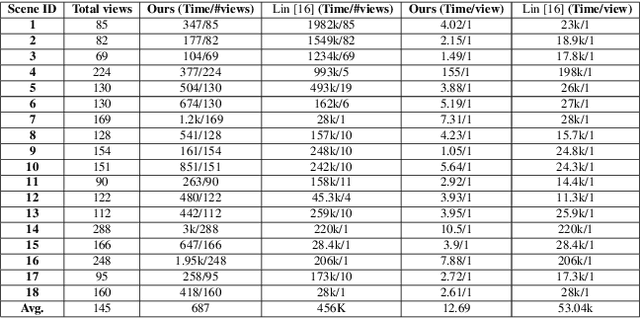

Abstract: Specular highlights are commonplace in images; however, detecting them, and in turn removing them, is particularly challenging. One reason is the difficulty of creating a dataset for training or evaluation, since in the real world we lack the necessary control over the environment. Therefore, we propose a novel physically based rendered LIGHT Specularity (LIGHTS) dataset for evaluating the specular highlight detection task. Our dataset consists of 18 high-quality architectural scenes, each rendered from multiple views. In total there are 2,603 views, an average of 145 views per scene. Additionally, we propose a simple aggregation-based method for specular highlight detection that outperforms prior work by 3.6% on our dataset while requiring two orders of magnitude less time.
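
The intuition behind aggregating over many views is that specular highlights are view-dependent while diffuse appearance is not. The sketch below illustrates that idea only; it is not the LIGHTS method. It assumes pixel correspondences across views are already known (so each column is one surface point) and flags observations much brighter than the point's median brightness; the `ratio` threshold is a hypothetical parameter.

```python
import numpy as np

def detect_specular(intensities: np.ndarray, ratio: float = 1.5) -> np.ndarray:
    """Flag view-dependent highlights by aggregating observations across views.

    intensities: (K, N) brightness of N surface points seen in K views,
                 assuming pixel correspondences between views are already known.
    Returns a boolean (K, N) mask that is True where an observation is much
    brighter than the point's median appearance over all views.
    """
    # The median is robust to the few views in which a point is specular,
    # so it approximates the view-independent (diffuse) brightness.
    diffuse = np.median(intensities, axis=0, keepdims=True)
    return intensities > ratio * diffuse
```

A per-point median is a single vectorized pass over the data, which hints at why an aggregation-based detector can be much cheaper than learned or optimization-based alternatives, although the actual speed-up reported above refers to the authors' method.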

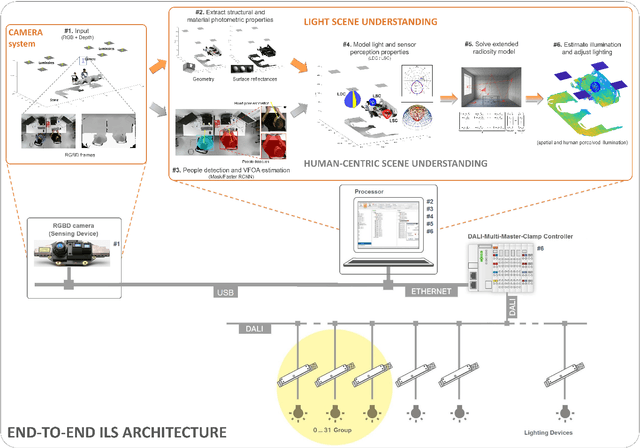

An integrated light management system with real-time light measurement and human perception

Apr 17, 2020

Abstract: Illumination is important for well-being, productivity and safety in many environments, including offices, retail shops and industrial warehouses. Current techniques for setting up lighting require extensive expert support and must be repeated whenever the scene changes. Here we propose the first fully automated light management system (LMS), which measures lighting in real time by leveraging an RGBD sensor and a radiosity-based light propagation model. Thanks to the integration of light distribution and perception curves into the radiosity model, we outperform a commercial software package (Relux) on a newly introduced dataset. Furthermore, our proposed LMS is the first to estimate both the presence and the attention of the people in the environment, as well as their light perception. Our LMS therefore adapts lighting to the scene and to human activity, and, as we experimentally quantify, it can save up to 66% of energy without compromising lighting quality.
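
For readers unfamiliar with radiosity, the textbook formulation for diffuse patches is $B_i = E_i + \rho_i \sum_j F_{ij} B_j$, where $B$ is radiosity, $E$ emission, $\rho$ reflectance and $F$ the form factors. The sketch below solves it by simple iteration. It is a generic illustration under the assumption that patches, reflectances and form factors are already available (in an LMS they would presumably come from the RGBD geometry); it does not model the light distribution and perception curves mentioned in the abstract.

```python
import numpy as np

def solve_radiosity(emission, reflectance, form_factors, n_iter=100):
    """Iteratively solve the classic radiosity equation B = E + rho * (F @ B).

    emission:     (N,) light emitted by each patch (nonzero for luminaires)
    reflectance:  (N,) diffuse reflectance rho of each patch, in [0, 1)
    form_factors: (N, N) F[i, j] = fraction of energy leaving patch i that reaches patch j
    """
    emission = np.asarray(emission, dtype=float)
    B = emission.copy()
    for _ in range(n_iter):
        # Each pass adds one more bounce of inter-reflected light.
        B = emission + reflectance * (form_factors @ B)
    return B
```

The iteration converges because each row of `form_factors` sums to at most 1 and every reflectance is below 1; for small systems the same answer can be obtained directly with `np.linalg.solve`.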

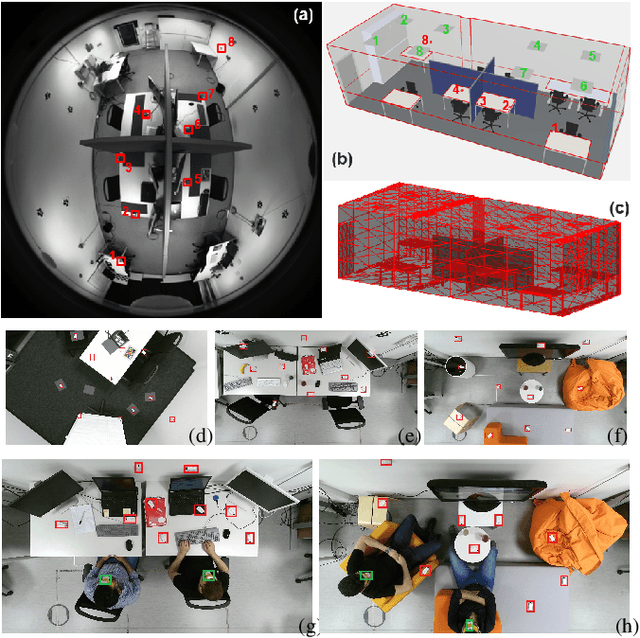

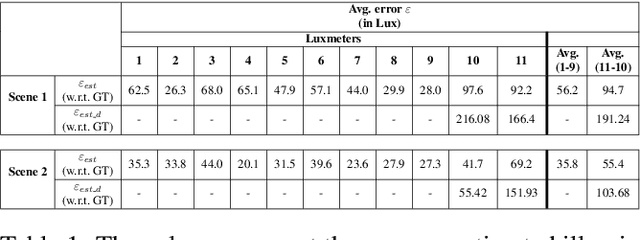

Human-centric light sensing and estimation from RGBD images: The invisible light switch

Jan 30, 2019

Abstract: Lighting design in indoor environments is of primary importance for at least two reasons: 1) people should perceive adequate light; 2) effective lighting design means consistent energy saving. We present the Invisible Light Switch (ILS) to address both aspects. ILS dynamically adjusts the room illumination level to save energy while keeping the users' perceived light level constant, so the energy saving is invisible to them. Our proposed ILS leverages a radiosity model to estimate the light level perceived by a person within an indoor environment, taking into account the person's position and her/his viewing frustum (head pose). ILS can therefore dim the luminaires that are not seen by the user, resulting in effective energy saving, especially in large open offices (where lights might otherwise be on everywhere for a single person). To quantify the system performance, we collected a new dataset in which people wear luxmeter devices while working in office rooms. The luxmeters measure the amount of light (in lux) reaching the people's gaze, which we consider a proxy for their perceived illumination level. Our initial results are promising: in a room with 8 LED luminaires, the daily energy consumption may be reduced from 18585 to 6206 watts with ILS (which currently needs 1560 watts for its own operation). Meanwhile, perceived lighting drops by just 200 lux, a value considered negligible when the original illumination level is above 1200 lux, as is normally the case in offices.
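
The idea of dimming luminaires that fall outside the user's viewing frustum can be sketched with a simple cone test. This is a hypothetical simplification, not the ILS implementation: the frustum is approximated by a cone around the gaze direction, and the 60-degree half-angle and the 0.2 dimming floor are illustrative assumptions. The radiosity-based check that perceived light stays constant is not modeled here.

```python
import numpy as np

def visible_luminaires(head_pos, gaze_dir, luminaire_pos, half_fov_deg=60.0):
    """Return a boolean mask of luminaires inside a cone-shaped viewing frustum.

    The cone approximation and the 60-degree half-angle are illustrative assumptions.
    """
    g = np.asarray(gaze_dir, dtype=float)
    g = g / np.linalg.norm(g)
    to_lum = np.asarray(luminaire_pos, dtype=float) - np.asarray(head_pos, dtype=float)
    to_lum /= np.linalg.norm(to_lum, axis=1, keepdims=True)
    # A luminaire counts as "seen" if the angle between the gaze and the
    # direction to it is within the half field of view.
    return to_lum @ g >= np.cos(np.radians(half_fov_deg))

def dimming_levels(visible, dim_level=0.2):
    """Keep seen luminaires at full power; dim the others to a hypothetical floor."""
    return np.where(visible, 1.0, dim_level)
```

For a person at the origin looking along +x, a luminaire at (2, 0, 0.5) is kept at full power, while ones at (-2, 0, 0.5) or (0, 2, 0.5) are dimmed. A real system would then verify, with the light propagation model, that the illuminance reaching the gaze stays close to its original level.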

Forecasting People Trajectories and Head Poses by Jointly Reasoning on Tracklets and Vislets

Jan 07, 2019

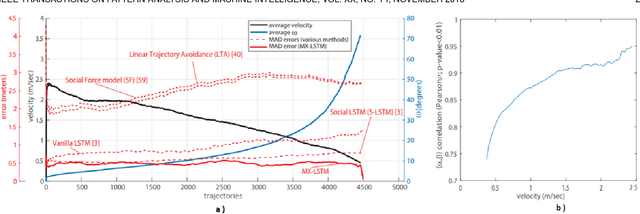

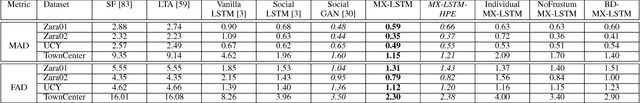

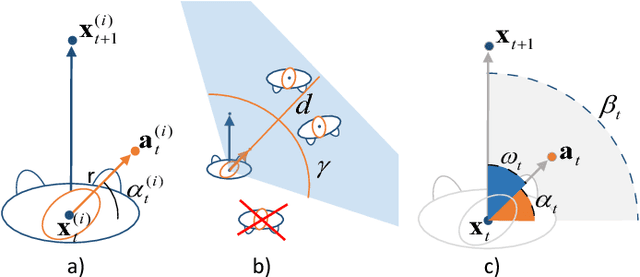

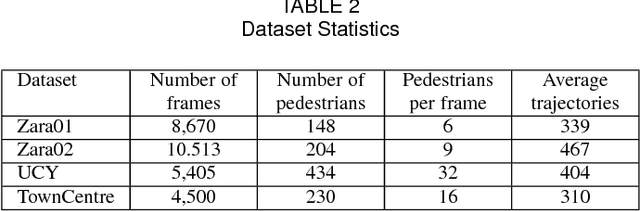

Abstract: In this work, we explore the correlation between people's trajectories and their head orientations. We argue that trajectory and head pose forecasting can be modelled as a joint problem. Recent approaches to trajectory forecasting leverage short-term trajectories (aka tracklets) of pedestrians to predict their future paths. In addition, sociological cues, such as the expected destination or pedestrian interaction, are often combined with tracklets. In this paper, we propose MiXing-LSTM (MX-LSTM) to capture the interplay between positions and head orientations (vislets) through a joint unconstrained optimization of full covariance matrices during LSTM backpropagation. We additionally exploit head orientations as a proxy for visual attention when modeling social interactions. MX-LSTM predicts pedestrians' future locations and head poses, extending the standard capabilities of current approaches to long-term trajectory forecasting. Compared to the state of the art, our approach shows better performance on an extensive set of public benchmarks. MX-LSTM is particularly effective when people move slowly, i.e., the most challenging scenario for all other models. The proposed approach also allows accurate predictions over a longer time horizon.
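
"Unconstrained optimization of full covariance matrices" means the network can output any real numbers while the resulting covariance stays symmetric positive definite. A common way to achieve this, shown here as an assumption rather than MX-LSTM's exact parameterization, is to build a Cholesky factor with an exponentiated diagonal. The sketch computes the mapping and the Gaussian negative log-likelihood in NumPy; in a training framework, automatic differentiation would backpropagate through the same operations. Using `dim = 4` for a joint position and head-orientation output is likewise an illustrative assumption.

```python
import numpy as np

def covariance_from_unconstrained(params: np.ndarray, dim: int) -> np.ndarray:
    """Map an unconstrained vector to a symmetric positive-definite covariance.

    params has dim + dim * (dim - 1) // 2 entries: the first `dim` are the log of
    the Cholesky diagonal, the rest fill the strictly lower triangle.
    """
    L = np.zeros((dim, dim))
    L[np.diag_indices(dim)] = np.exp(params[:dim])
    L[np.tril_indices(dim, k=-1)] = params[dim:]
    return L @ L.T

def gaussian_nll(x, mean, cov):
    """Negative log-likelihood of x under a multivariate normal N(mean, cov)."""
    diff = x - mean
    _, logdet = np.linalg.slogdet(cov)
    maha = diff @ np.linalg.solve(cov, diff)
    return 0.5 * (maha + logdet + len(x) * np.log(2 * np.pi))
```

Because the exponential keeps every diagonal entry of `L` positive, any parameter vector yields a valid covariance, so no projection or constraint handling is needed during gradient descent.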

RGBD2lux: Dense light intensity estimation with an RGBD sensor

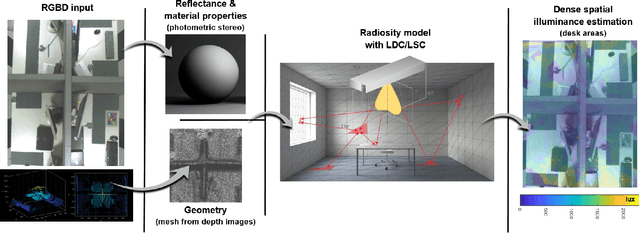

Oct 22, 2018

Abstract: Lighting design and modelling (the efficient and aesthetic placement of luminaires in a virtual or real scene), as well as industrial applications like luminaire planning and commissioning (the luminaires' installation and evaluation with respect to the scene's geometry and structure), rely heavily on physically correct simulations. The current typical approaches are based on CAD modeling simulations and offline rendering, with long processing times and therefore inflexible workflows. Thus, in this paper we propose a computer-vision-based system to measure lighting with just a single RGBD camera. The proposed method uses both depth data and images from the sensor to provide a dense measure of light intensity in the camera's field of view.
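
One ingredient that depth data makes possible is recovering scene geometry, on which physically based light computations can operate. The sketch below is a hypothetical illustration, not the RGBD2lux method: it back-projects a depth map with a pinhole camera model and evaluates the inverse-square cosine law $E = I\cos\theta / d^2$ (lux, for an intensity in candela) from a single isotropic point light whose position and intensity are assumed known. Surface normals are assumed to be unit length.

```python
import numpy as np

def backproject(depth, fx, fy, cx, cy):
    """Lift a depth map (H, W), in meters, to camera-frame 3D points (H, W, 3) with a pinhole model."""
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return np.stack([x, y, depth], axis=-1)

def point_source_illuminance(points, normals, light_pos, intensity_cd):
    """Illuminance in lux, E = I * cos(theta) / d^2, from one isotropic point light.

    normals are assumed to be unit vectors; surfaces facing away receive zero.
    """
    to_light = np.asarray(light_pos, dtype=float) - points
    d2 = np.sum(to_light ** 2, axis=-1)
    cos_theta = np.sum(normals * to_light, axis=-1) / np.sqrt(d2)
    return intensity_cd * np.clip(cos_theta, 0.0, None) / d2
```

For instance, a surface point facing a 100 cd source 2 m away head-on receives 100 / 4 = 25 lux. Since both functions are vectorized over all pixels, they produce a dense per-pixel map, which is the kind of output the abstract describes; the real system additionally relies on the color images, which this sketch ignores.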

MX-LSTM: mixing tracklets and vislets to jointly forecast trajectories and head poses

May 02, 2018

Abstract: Recent approaches to trajectory forecasting use tracklets to predict the future positions of pedestrians by exploiting Long Short-Term Memory (LSTM) architectures. This paper shows that adding vislets, that is, short sequences of head pose estimations, significantly increases trajectory forecasting performance. We then propose to use vislets in a novel framework called MX-LSTM, which captures the interplay between tracklets and vislets through a joint unconstrained optimization of full covariance matrices during LSTM backpropagation. At the same time, MX-LSTM predicts future head poses, extending the standard capabilities of long-term trajectory forecasting approaches. With standard head pose estimators and an attention-based social pooling, MX-LSTM sets a new state of the art in trajectory forecasting on all the considered datasets (Zara01, Zara02, UCY, and TownCentre), with a dramatic margin when pedestrians slow down, a case in which most forecasting approaches struggle to provide an accurate solution.
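
Using vislets for attention-based social pooling suggests that a pedestrian's neighbors should be weighted by whether they fall within that person's view. The sketch below shows only the neighbor-selection part of that idea, under stated assumptions: the view region is a 2D cone around the head angle, and the 60-degree half-angle and 5 m radius are hypothetical values, not parameters from the paper.

```python
import numpy as np

def neighbors_in_view(pos, head_angle, others, half_fov_deg=60.0, max_dist=5.0):
    """Indices of pedestrians inside a 2D view cone defined by a vislet.

    pos:        (2,) position of the pedestrian of interest
    head_angle: head orientation in radians (0 = +x axis)
    others:     (M, 2) positions of the other pedestrians
    The cone shape, half-angle and radius are illustrative assumptions.
    """
    pos = np.asarray(pos, dtype=float)
    offsets = np.asarray(others, dtype=float) - pos
    dist = np.linalg.norm(offsets, axis=1)
    gaze = np.array([np.cos(head_angle), np.sin(head_angle)])
    # Cosine of the angle between the head direction and each neighbor.
    cos_angle = (offsets @ gaze) / np.maximum(dist, 1e-9)
    inside = (cos_angle >= np.cos(np.radians(half_fov_deg))) & (dist <= max_dist)
    return np.flatnonzero(inside)
```

For a pedestrian at the origin facing +x, neighbors at (2, 0) and (3, 3) are selected, while one behind at (-2, 0) or far ahead at (10, 0) is ignored. Only the selected neighbors' hidden states would then be pooled, so people outside the field of view do not influence the forecast.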
