Takashi Morie

Techniques for Enhancing Memory Capacity of Reservoir Computing

Feb 25, 2025

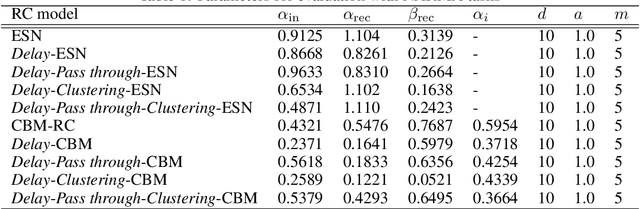

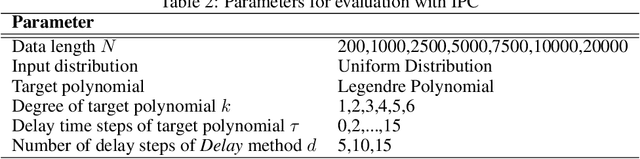

Abstract: Reservoir computing (RC) is a bio-inspired machine learning framework for which various models have been proposed. RC is well suited to time-series data processing, but it involves a trade-off between memory capacity and nonlinearity. In this study, we propose methods that improve the memory capacity of reservoir models by modifying the network configuration outside the reservoir itself. The Delay method retains past inputs by adding chains of delay nodes, with a specified number of delay steps, to the input layer. To suppress the increase in input magnitude caused by the Delay method, we divide the input weights by the number of added delay steps. The Pass through method feeds input values directly to the output layer. The Clustering method divides the input and reservoir nodes into multiple parts and integrates them at the output layer. We applied these methods to an echo state network (ESN), a typical RC model, and to the chaotic Boltzmann machine (CBM)-RC, which can be efficiently implemented in integrated circuits. We evaluated their performance on the NARMA task and measured the information processing capacity (IPC) to assess the trade-off between memory capacity and nonlinearity.
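The Delay and Pass through methods described in this abstract can be sketched on a small echo state network as follows. This is a minimal illustration, not the authors' implementation: the function names (`delay_embed`, `esn_features`, `fit_readout`), the reservoir size, the spectral radius, the zero padding of missing past inputs, and the ridge-regression readout are all assumptions made for the example. The Clustering method is not shown.

```python
import numpy as np

def delay_embed(u, n_delay):
    """Stack u(t), u(t-1), ..., u(t-n_delay) as columns (zeros where no past exists)."""
    T = len(u)
    cols = [np.concatenate([np.zeros(k), u[:T - k]]) for k in range(n_delay + 1)]
    return np.stack(cols, axis=1)  # shape (T, n_delay + 1)

def esn_features(u, n_res=50, n_delay=3, spectral_radius=0.9, seed=0):
    """Run a tanh ESN on a 1-D input and return readout features."""
    rng = np.random.default_rng(seed)
    U = delay_embed(u, n_delay)
    # Delay method: input weights are divided by the number of added delay steps
    # (guarded so that n_delay = 0 leaves the weights unscaled; this guard is an assumption).
    w_in = rng.uniform(-1.0, 1.0, size=(n_res, n_delay + 1)) / max(n_delay, 1)
    # Random recurrent weights rescaled to the requested spectral radius.
    w = rng.normal(size=(n_res, n_res))
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    x = np.zeros(n_res)
    states = np.empty((len(u), n_res))
    for t in range(len(u)):
        x = np.tanh(w @ x + w_in @ U[t])
        states[t] = x
    # Pass through method: the raw input is appended to the readout features.
    return np.hstack([states, u[:, None]])

def fit_readout(features, target, ridge=1e-6):
    """Ridge-regression readout weights (only this layer is trained)."""
    A = features.T @ features + ridge * np.eye(features.shape[1])
    return np.linalg.solve(A, features.T @ target)
```

With these definitions, `fit_readout(esn_features(u), y)` returns one weight per reservoir node plus one for the passed-through input, and the reservoir weights themselves are never trained.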

Training Physical Neural Networks for Analog In-Memory Computing

Dec 12, 2024

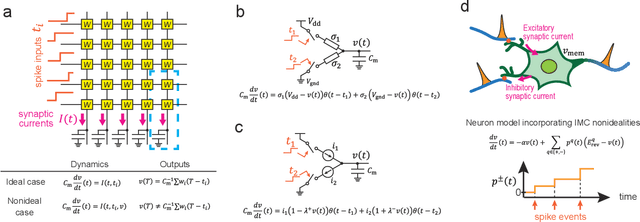

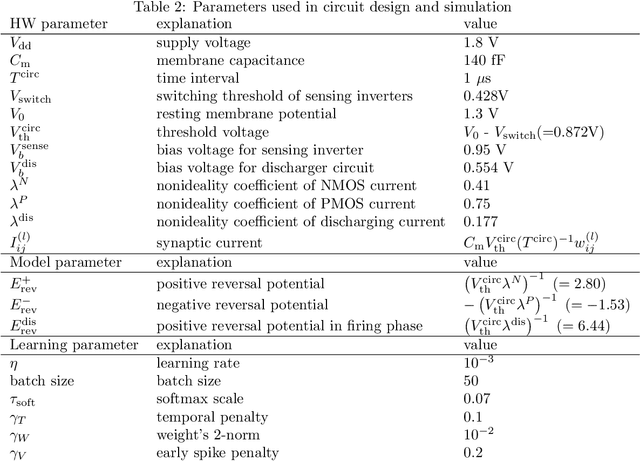

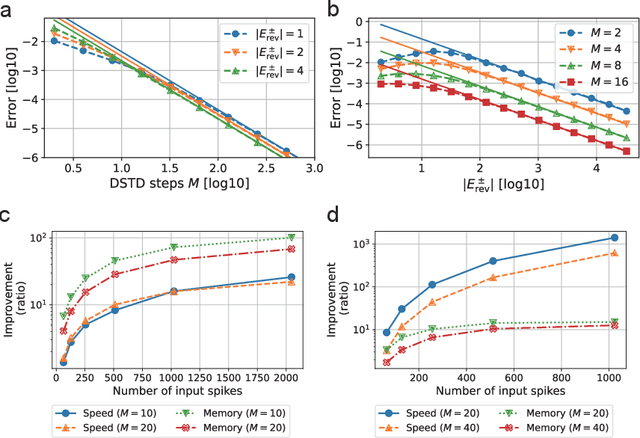

Abstract: In-memory computing (IMC) architectures mitigate the von Neumann bottleneck encountered in traditional deep learning accelerators. Their energy efficiency can enable deep-learning-based edge applications. However, because IMC is implemented using analog circuits, inherent non-idealities in the hardware pose significant challenges. This paper presents physical neural networks (PNNs) for constructing physical models of IMC. PNNs can address the synaptic current's dependence on membrane potential, a challenge in charge-domain IMC systems. The proposed model is mathematically equivalent to spiking neural networks with reversal potentials. The PNNs are trained efficiently with a novel technique called differentiable spike-time discretization. We show that hardware non-idealities traditionally viewed as detrimental can enhance the model's learning performance. This bottom-up methodology was validated by designing an IMC circuit with non-ideal characteristics using the sky130 process. With this bottom-up approach, the modeling error in post-layout simulations was reduced by an order of magnitude compared with conventional top-down methods.

Hibikino-Musashi@Home 2024 Team Description Paper

Oct 08, 2024

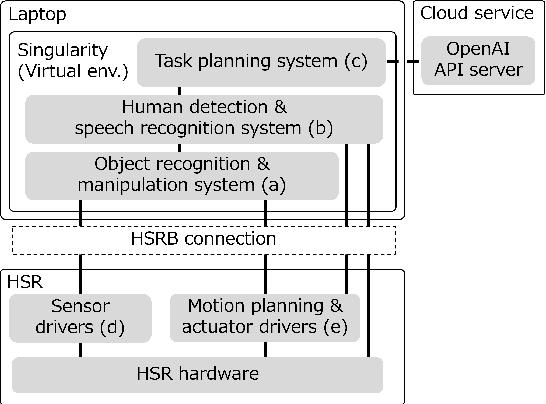

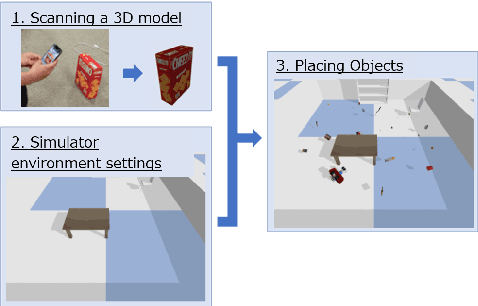

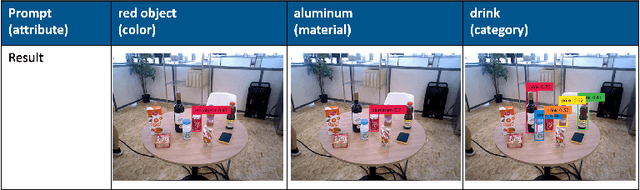

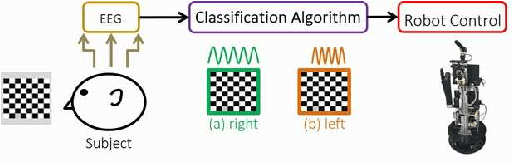

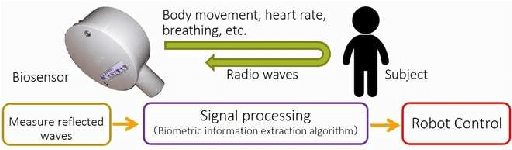

Abstract: This paper provides an overview of the techniques employed by Hibikino-Musashi@Home, which intends to participate in the domestic standard platform league. The team has developed a dataset generator for training a robot vision system and an open-source development environment running on a Human Support Robot simulator. A task planner powered by a large language model selects appropriate primitive skills to perform the task requested by users. The team aims to design a home service robot that can assist humans in their homes and continuously attends competitions to evaluate and improve the developed system.

Hibikino-Musashi@Home 2023 Team Description Paper

Oct 19, 2023

Abstract: This paper gives an overview of the techniques of Hibikino-Musashi@Home, which intends to participate in the domestic standard platform league. The team has developed a dataset generator for training a robot vision system and an open-source development environment running on a Human Support Robot simulator. The robot system comprises self-developed libraries, including those for motion synthesis, and open-source software that runs on the Robot Operating System. The team aims to realize a home service robot that assists humans at home, and continuously attends competitions to evaluate the developed system. A brain-inspired artificial intelligence system is also proposed for service robots that are expected to work in real home environments.

Learning Reservoir Dynamics with Temporal Self-Modulation

Jan 23, 2023

Abstract: Reservoir computing (RC) can efficiently process time-series data by feeding the input signal into a randomly connected recurrent neural network (RNN), referred to as a reservoir. The high-dimensional representation of time-series data in the reservoir significantly simplifies subsequent learning tasks. Although this simple architecture allows fast learning and facile physical implementation, the learning performance is inferior to that of other state-of-the-art RNN models. In this paper, to improve the learning ability of RC, we propose self-modulated RC (SM-RC), which extends RC by adding a self-modulation mechanism. The self-modulation mechanism is realized with two gating variables: an input gate and a reservoir gate. The input gate modulates the input signal, and the reservoir gate modulates the dynamical properties of the reservoir. We demonstrated that SM-RC can perform attention tasks where input information is retained or discarded depending on the input signal. We also found that a chaotic state emerged as a result of learning in SM-RC. This indicates that self-modulation mechanisms provide RC with qualitatively different information-processing capabilities. Furthermore, SM-RC outperformed RC in NARMA and Lorenz model tasks. In particular, SM-RC achieved a higher prediction accuracy than RC with a reservoir 10 times larger in the Lorenz model tasks. Because the SM-RC architecture only requires two additional gates, it is as physically implementable as RC, providing a new direction for realizing edge AI.
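One way to picture the two gating variables is the single update step sketched below. The abstract does not give the SM-RC equations, so everything here is an assumption for illustration: the sigmoid gate form, the choice to compute both gates from the current reservoir state, the scalar gates, and the names `sm_rc_step`, `v_in`, `v_res`, `b_in`, and `b_res`.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sm_rc_step(x, u, w, w_in, v_in, v_res, b_in=0.0, b_res=0.0):
    """One reservoir update with a hypothetical input gate and reservoir gate.

    x: reservoir state (n,), u: scalar input,
    w: recurrent weights (n, n), w_in: input weights (n,),
    v_in, v_res: gate weights (n,) reading the current state.
    """
    g_in = sigmoid(v_in @ x + b_in)     # input gate in (0, 1): scales the input signal
    g_res = sigmoid(v_res @ x + b_res)  # reservoir gate in (0, 1): scales the recurrent drive
    x_next = np.tanh(g_res * (w @ x) + g_in * (w_in * u))
    return x_next, g_in, g_res
```

In this sketch, driving the input gate toward zero (for example with a strongly negative `b_in`) makes the next state nearly independent of `u`, which is the kind of "retain or discard" behavior the abstract attributes to the attention tasks. Scaling the recurrent term with `g_res` changes the effective gain of the reservoir, which is one plausible reading of "modulates the dynamical properties".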

Hibikino-Musashi@Home 2022 Team Description Paper

Nov 12, 2022

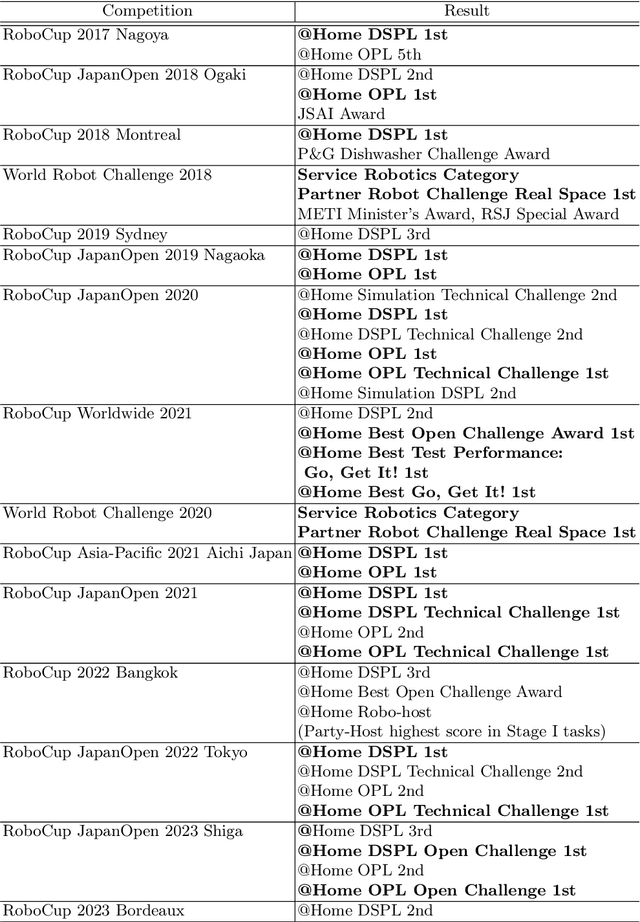

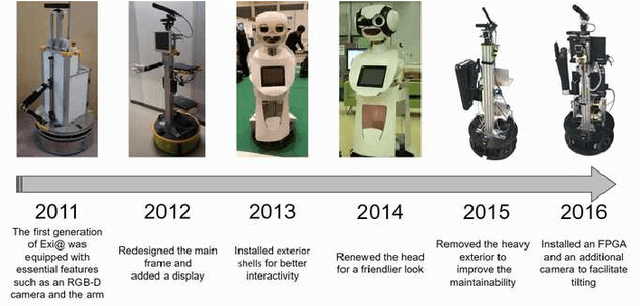

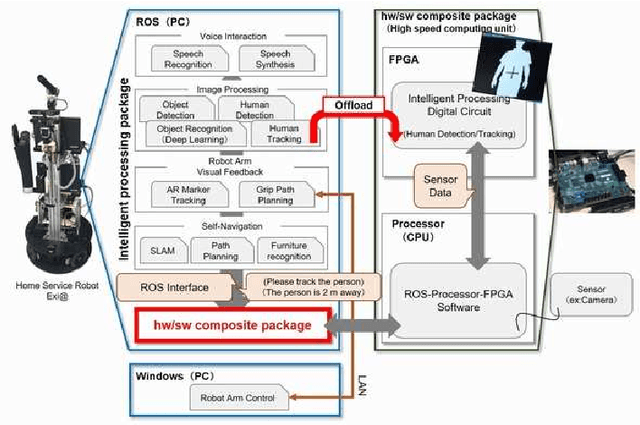

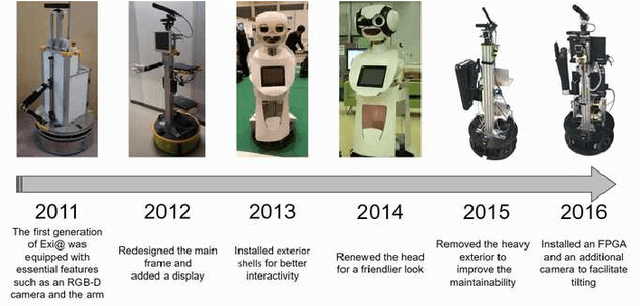

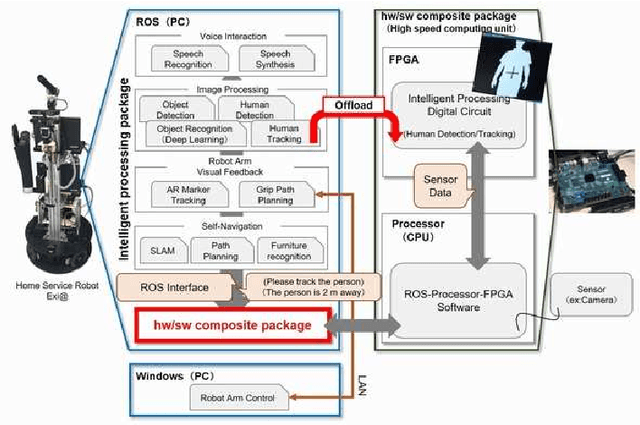

Abstract: Our team, Hibikino-Musashi@Home (HMA), was founded in 2010. It is based in Japan in the Kitakyushu Science and Research Park. Since 2010, we have annually participated in the RoboCup@Home Japan Open competition in the open platform league (OPL). We participated as an open platform league team in the 2017 Nagoya RoboCup competition and as a domestic standard platform league (DSPL) team in the 2017 Nagoya, 2018 Montreal, 2019 Sydney, and 2021 Worldwide RoboCup competitions. We also participated in the World Robot Challenge (WRC) 2018 in the service-robotics category of the partner-robot challenge (real space) and won first place. Currently, we have 27 members from nine different laboratories within the Kyushu Institute of Technology and the University of Kitakyushu. In this paper, we introduce the activities that have been performed by our team and the technologies that we use.

Hibikino-Musashi@Home 2018 Team Description Paper

Nov 09, 2022

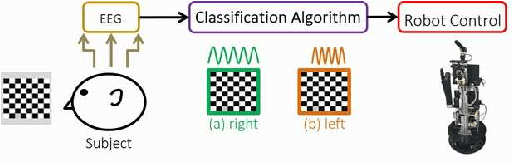

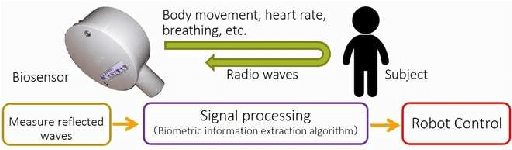

Abstract: Our team, Hibikino-Musashi@Home (HMA), was founded in 2010. It is based in the Kitakyushu Science and Research Park, Japan. We have participated in the RoboCup@Home Japan Open competition in the open platform league every year since 2010. Moreover, we participated in RoboCup 2017 Nagoya as both an open platform league team and a domestic standard platform league team. Currently, the Hibikino-Musashi@Home team has 20 members from seven different laboratories based in the Kyushu Institute of Technology. In this paper, we introduce the activities of our team and the technologies that we use.

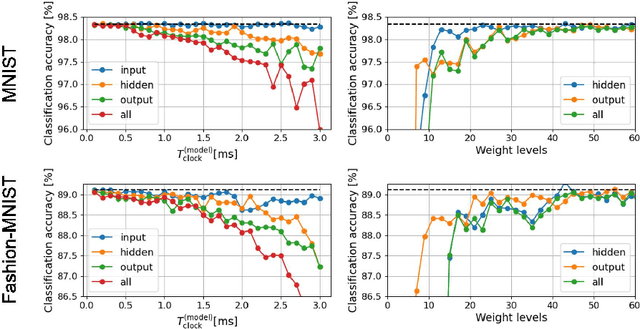

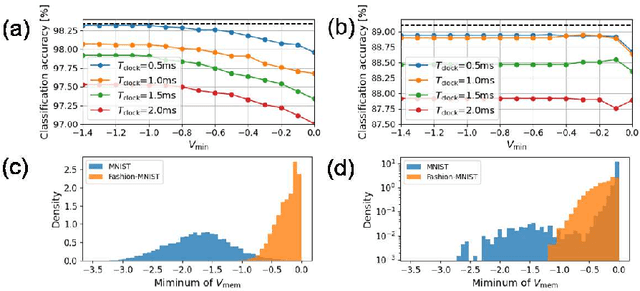

Effects of VLSI Circuit Constraints on Temporal-Coding Multilayer Spiking Neural Networks

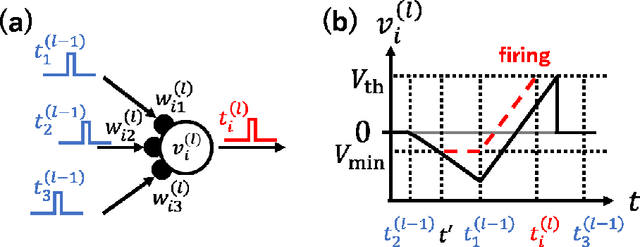

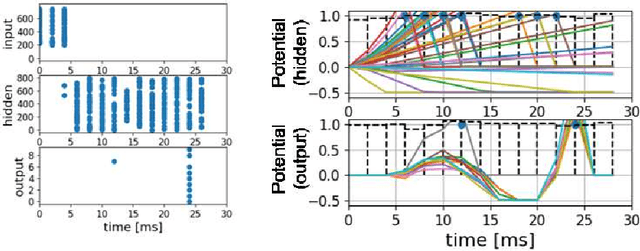

Jun 25, 2021

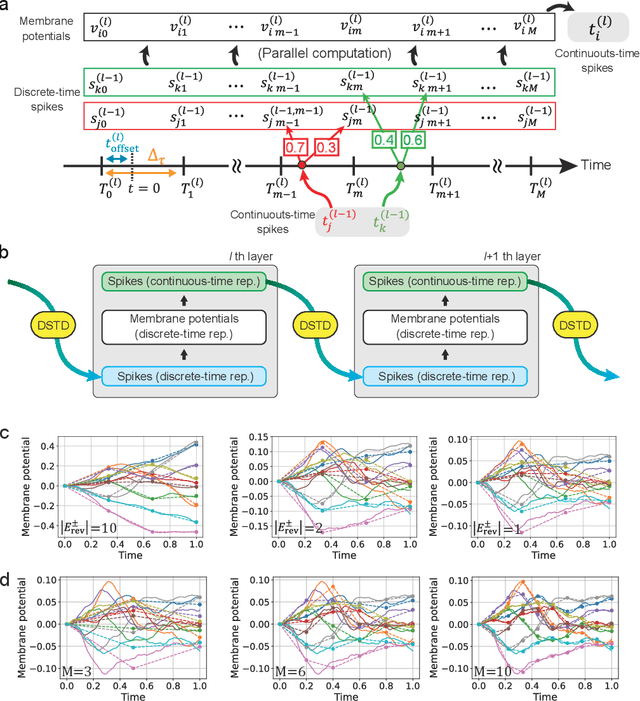

Abstract: The spiking neural network (SNN) has been attracting considerable attention not only as a mathematical model for the brain, but also as an energy-efficient information processing model for real-world applications. In particular, SNNs based on temporal coding are expected to be much more efficient than those based on rate coding, because the former require substantially fewer spikes to carry out tasks. As SNNs are continuous-state and continuous-time models, it is favorable to implement them with analog VLSI circuits. However, the construction of the entire system with continuous-time analog circuits would be infeasible when the system size is very large. Therefore, mixed-signal circuits must be employed, and the time discretization and quantization of the synaptic weights are necessary. Moreover, the analog VLSI implementation of SNNs exhibits non-idealities, such as the effects of noise and device mismatches, as well as other constraints arising from the analog circuit operation. In this study, we investigated the effects of the time discretization and/or weight quantization on the performance of SNNs. Furthermore, we elucidated the effects of the lower bound of the membrane potentials and the temporal fluctuation of the firing threshold. Finally, we propose an optimal approach for the mapping of mathematical SNN models to analog circuits with discretized time.
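The two constraints named in this abstract, weight quantization and time discretization, can be illustrated with the short sketch below. It assumes a symmetric uniform quantizer and rounding of spike times to the nearest time step. These choices, and the names `quantize_weights` and `discretize_times`, are illustrative only and are not taken from the paper.

```python
import numpy as np

def quantize_weights(w, n_bits, w_max=None):
    """Symmetric uniform quantization of weights to n_bits (n_bits >= 2, signed).

    w_max defaults to the largest absolute weight and must be nonzero.
    """
    w_max = np.max(np.abs(w)) if w_max is None else w_max
    levels = 2 ** (n_bits - 1) - 1          # number of positive levels
    step = w_max / levels                   # quantization step size
    return np.clip(np.round(w / step), -levels, levels) * step

def discretize_times(t, dt):
    """Round continuous spike times to the nearest multiple of the time step dt."""
    return np.round(np.asarray(t) / dt) * dt
```

For instance, with `n_bits=3` the weights are restricted to seven values evenly spaced between `-w_max` and `w_max`, so small weights collapse to zero. Comparing a network's accuracy before and after applying both functions is one simple way to probe how sensitive it is to these constraints.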

Hibikino-Musashi@Home 2019 Team Description Paper

May 29, 2020

Abstract: Our team, Hibikino-Musashi@Home (HMA), was founded in 2010. It is based in the Kitakyushu Science and Research Park, Japan. Since 2010, we have participated annually in the RoboCup@Home Japan Open competition in the open platform league. We have also participated in RoboCup 2017 Nagoya as both an open platform league team and a domestic standard platform league team, and in RoboCup 2018 Montreal as a domestic standard platform league team. Currently, we have 23 members from seven different laboratories based in the Kyushu Institute of Technology. This paper introduces the activities that are performed by our team and the technologies that we use.

Hibikino-Musashi@Home 2020 Team Description Paper

May 29, 2020

Abstract: Our team, Hibikino-Musashi@Home (HMA), was founded in 2010. It is based in Japan in the Kitakyushu Science and Research Park. Since 2010, we have annually participated in the RoboCup@Home Japan Open competition in the open platform league (OPL). We participated as an open platform league team in the 2017 Nagoya RoboCup competition and as a domestic standard platform league (DSPL) team in the 2017 Nagoya, 2018 Montreal, and 2019 Sydney RoboCup competitions. We also participated in the World Robot Challenge (WRC) 2018 in the service-robotics category of the partner-robot challenge (real space) and won first place. Currently, we have 20 members from eight different laboratories within the Kyushu Institute of Technology. In this paper, we introduce the activities that have been performed by our team and the technologies that we use.
