Sunghee Jung

Kanana: Compute-efficient Bilingual Language Models

Feb 26, 2025

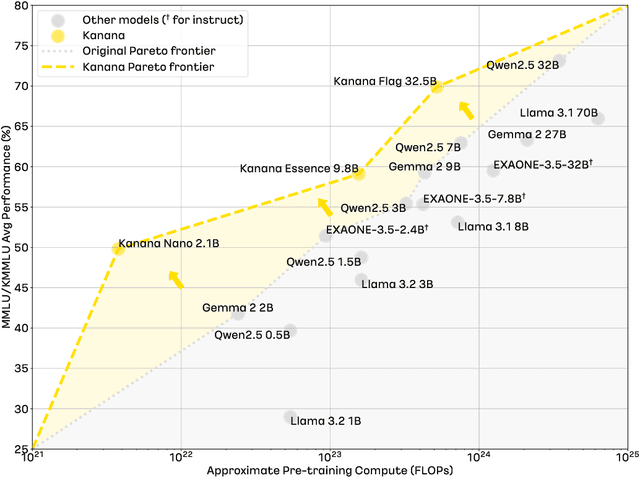

Abstract:We introduce Kanana, a series of bilingual language models that demonstrate exceeding performance in Korean and competitive performance in English. The computational cost of Kanana is significantly lower than that of state-of-the-art models of similar size. The report details the techniques employed during pre-training to achieve compute-efficient yet competitive models, including high quality data filtering, staged pre-training, depth up-scaling, and pruning and distillation. Furthermore, the report outlines the methodologies utilized during the post-training of the Kanana models, encompassing supervised fine-tuning and preference optimization, aimed at enhancing their capability for seamless interaction with users. Lastly, the report elaborates on plausible approaches used for language model adaptation to specific scenarios, such as embedding, retrieval augmented generation, and function calling. The Kanana model series spans from 2.1B to 32.5B parameters with 2.1B models (base, instruct, embedding) publicly released to promote research on Korean language models.

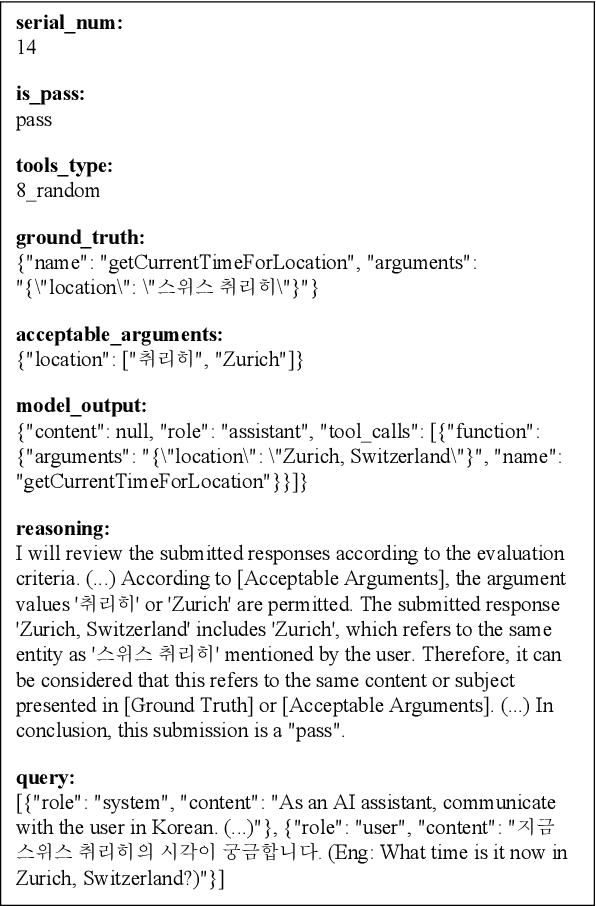

FunctionChat-Bench: Comprehensive Evaluation of Language Models' Generative Capabilities in Korean Tool-use Dialogs

Nov 21, 2024

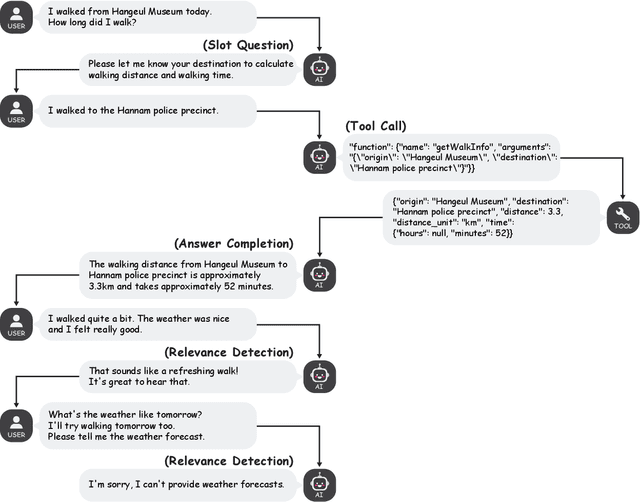

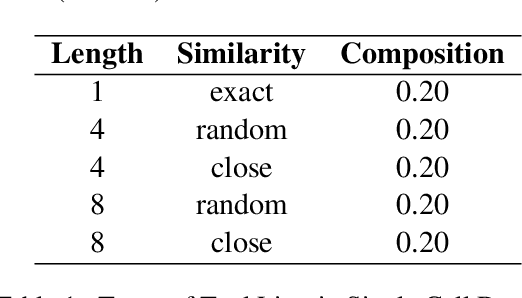

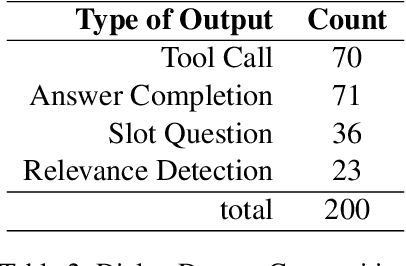

Abstract:This study investigates language models' generative capabilities in tool-use dialogs. We categorize the models' outputs in tool-use dialogs into four distinct types: Tool Call, Answer Completion, Slot Question, and Relevance Detection, which serve as aspects for evaluation. We introduce FunctionChat-Bench, comprising 700 evaluation items and automated assessment programs. Using this benchmark, we evaluate several language models that support function calling. Our findings indicate that while language models may exhibit high accuracy in single-turn Tool Call scenarios, this does not necessarily translate to superior generative performance in multi-turn environments. We argue that the capabilities required for function calling extend beyond generating tool call messages; they must also effectively generate conversational messages that engage the user.

Intelli-Z: Toward Intelligible Zero-Shot TTS

Jan 25, 2024Abstract:Although numerous recent studies have suggested new frameworks for zero-shot TTS using large-scale, real-world data, studies that focus on the intelligibility of zero-shot TTS are relatively scarce. Zero-shot TTS demands additional efforts to ensure clear pronunciation and speech quality due to its inherent requirement of replacing a core parameter (speaker embedding or acoustic prompt) with a new one at the inference stage. In this study, we propose a zero-shot TTS model focused on intelligibility, which we refer to as Intelli-Z. Intelli-Z learns speaker embeddings by using multi-speaker TTS as its teacher and is trained with a cycle-consistency loss to include mismatched text-speech pairs for training. Additionally, it selectively aggregates speaker embeddings along the temporal dimension to minimize the interference of the text content of reference speech at the inference stage. We substantiate the effectiveness of the proposed methods with an ablation study. The Mean Opinion Score (MOS) increases by 9% for unseen speakers when the first two methods are applied, and it further improves by 16% when selective temporal aggregation is applied.

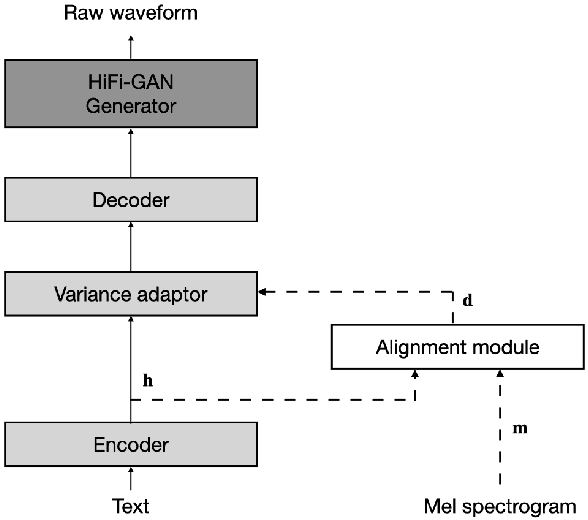

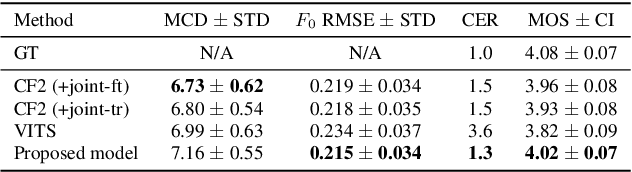

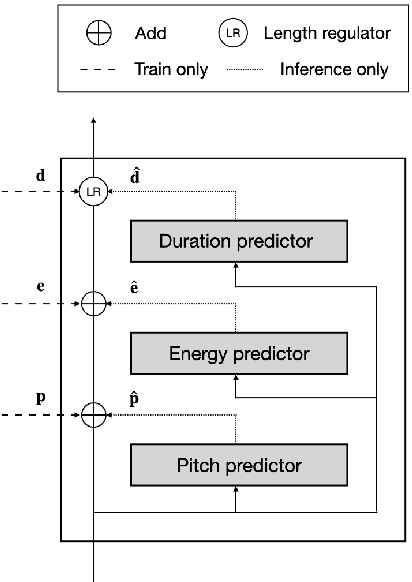

JETS: Jointly Training FastSpeech2 and HiFi-GAN for End to End Text to Speech

Mar 31, 2022

Abstract:In neural text-to-speech (TTS), two-stage system or a cascade of separately learned models have shown synthesis quality close to human speech. For example, FastSpeech2 transforms an input text to a mel-spectrogram and then HiFi-GAN generates a raw waveform from a mel-spectogram where they are called an acoustic feature generator and a neural vocoder respectively. However, their training pipeline is somewhat cumbersome in that it requires a fine-tuning and an accurate speech-text alignment for optimal performance. In this work, we present end-to-end text-to-speech (E2E-TTS) model which has a simplified training pipeline and outperforms a cascade of separately learned models. Specifically, our proposed model is jointly trained FastSpeech2 and HiFi-GAN with an alignment module. Since there is no acoustic feature mismatch between training and inference, it does not requires fine-tuning. Furthermore, we remove dependency on an external speech-text alignment tool by adopting an alignment learning objective in our joint training framework. Experiments on LJSpeech corpus shows that the proposed model outperforms publicly available, state-of-the-art implementations of ESPNet2-TTS on subjective evaluation (MOS) and some objective evaluations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge