Sheng Ge

Graph Edge Representation via Tensor Product Graph Convolutional Representation

Jun 21, 2024

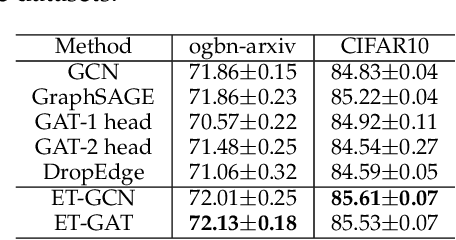

Abstract: Graph Convolutional Networks (GCNs) have been widely studied. The core of a GCN is the definition of a convolution operator on graphs. However, existing Graph Convolution (GC) operators are mainly defined on the adjacency matrix and node features, and generally focus on obtaining effective node embeddings, so they cannot be applied to graphs with (high-dimensional) edge features. To address this problem, this paper leverages tensor contraction representation and tensor product graph diffusion theories to define, by analogy, an effective convolution operator on graphs with edge features, named Tensor Product Graph Convolution (TPGC). The proposed TPGC aims to obtain effective edge embeddings. It provides a complementary model to traditional GCs for more general graph data analysis with both node and edge features. Experimental results on several graph learning tasks demonstrate the effectiveness of the proposed TPGC.

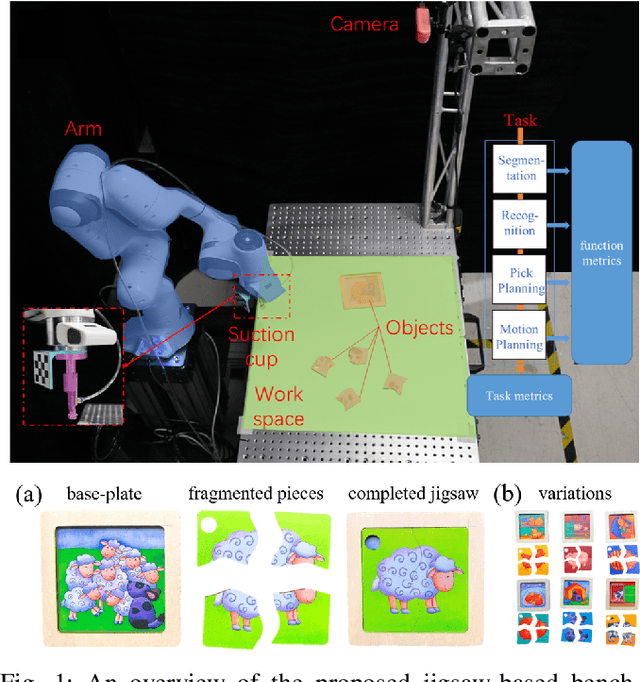

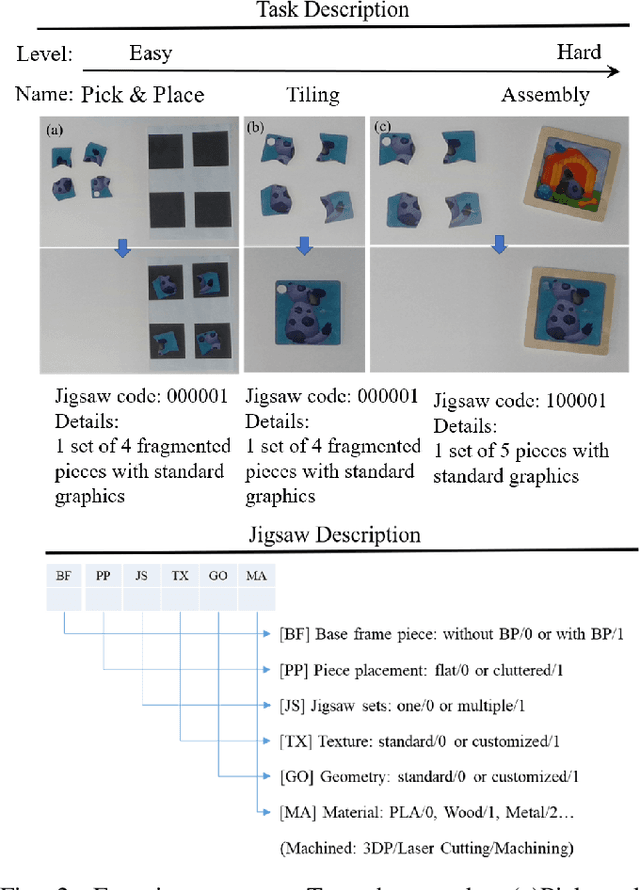

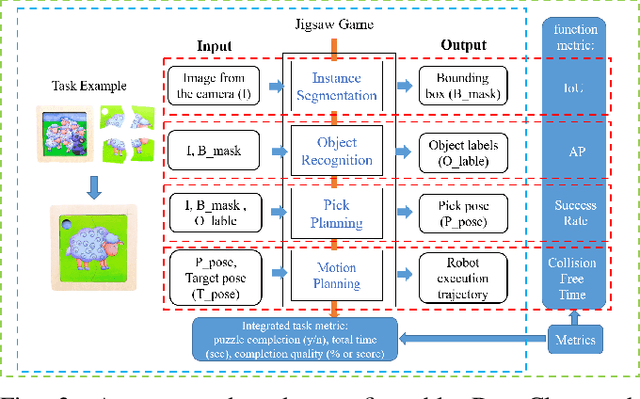

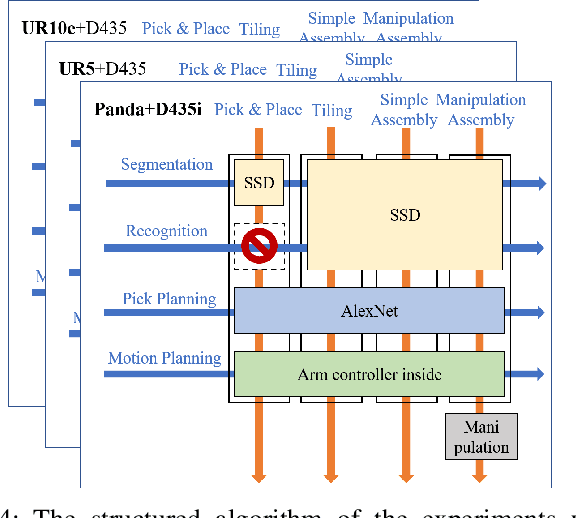

Jigsaw-based Benchmarking for Learning Robotic Manipulation

Jun 08, 2023

Abstract: Benchmarking provides experimental evidence of the scientific baseline to advance fundamental research, and this also applies to robotics. In this paper, we propose a method to benchmark metrics of robotic manipulation that addresses the spatial-temporal reasoning skills for robot learning with the jigsaw game. In particular, our approach exploits a simple set of jigsaw pieces by designing a structured protocol, which can be customized to a wide range of task specifications. Researchers can selectively adopt the proposed protocol to benchmark their research outputs on a comparable scale at the functional, task, and system levels of detail. The purpose is to provide a potential look-up table for learning-based robot manipulation, of the kind commonly available in other engineering disciplines, to facilitate the adoption of robotics through calculated, empirical, and systematic experimental evidence.

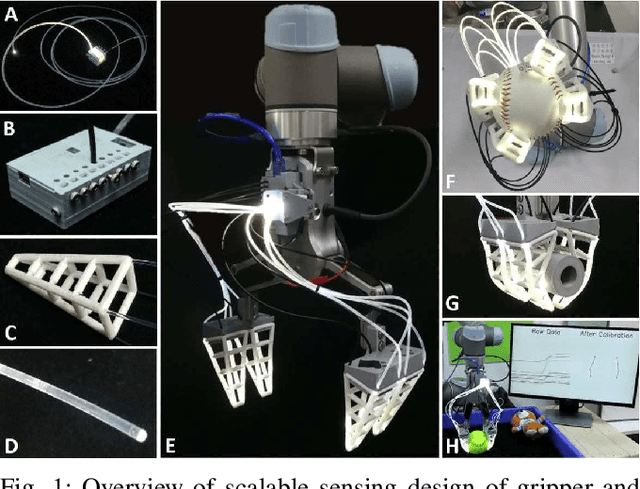

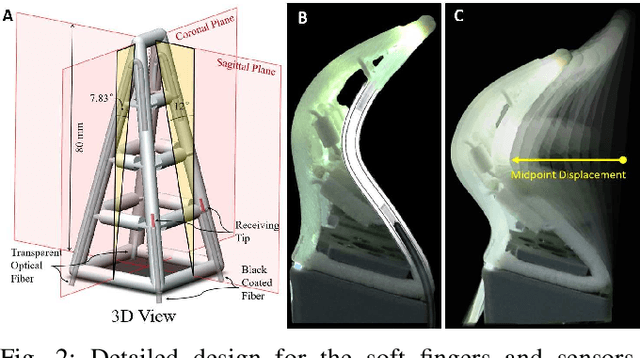

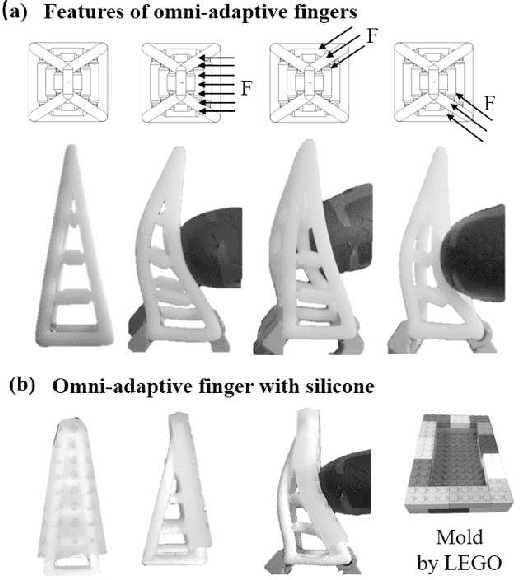

Scalable Tactile Sensing for an Omni-adaptive Soft Robot Finger

Feb 29, 2020

Abstract: Robotic fingers made of soft material and compliant structures usually adapt better when interacting with the unstructured physical environment. In this paper, we present an embedded sensing solution using optical fibers for an omni-adaptive soft robotic finger with exceptional adaptation in all directions. In particular, we managed to insert a pair of optical fibers inside the finger's structural cavity without interfering with its adaptive performance. The resultant integration is scalable as a versatile, low-cost, and moisture-proof solution for physically safe human-robot interaction. In addition, we experimented with our finger design for an object sorting task, identifying the sectional diameters of 94% of objects within a ±6 mm error and measuring 80% of the structural strains within a ±0.1 mm/mm error. The proposed sensor design opens many doors in future applications of soft robotics for scalable and adaptive physical interactions in the unstructured environment.

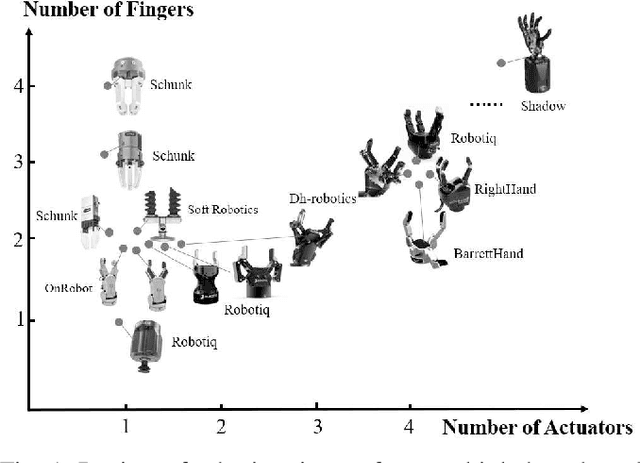

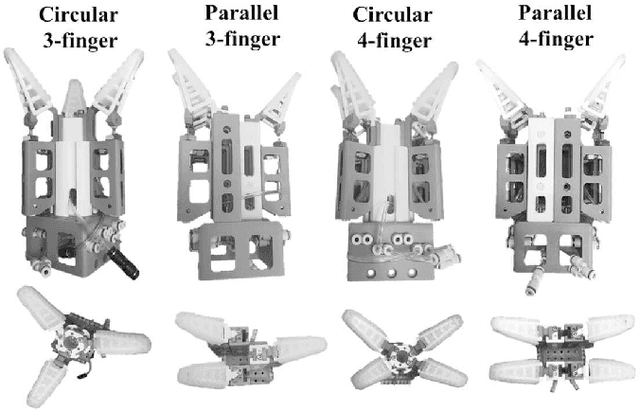

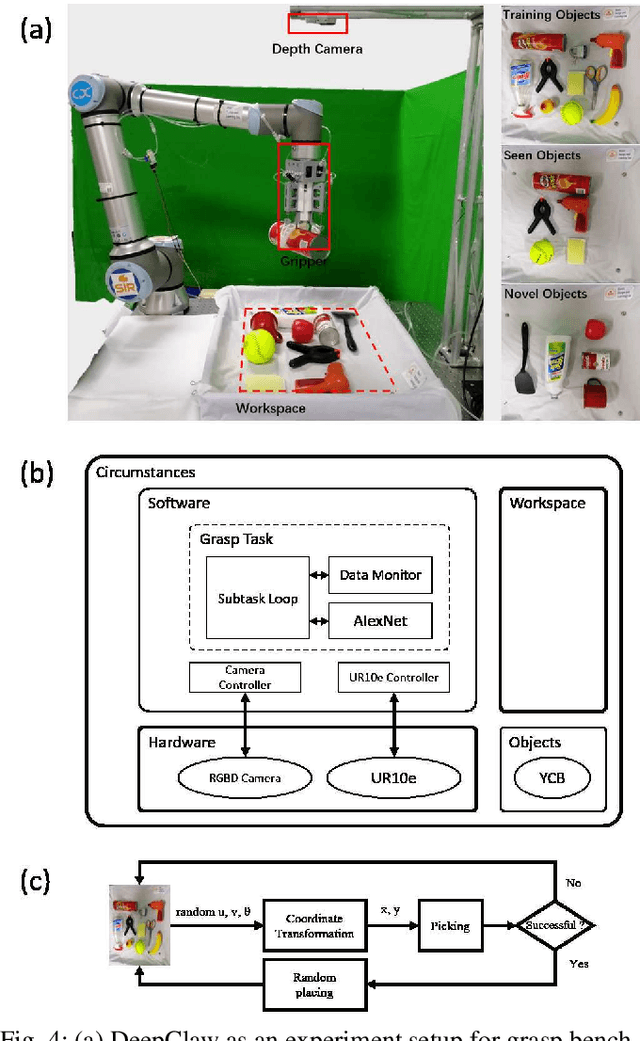

Reconfigurable Design for Omni-adaptive Grasp Learning

Feb 29, 2020

Abstract: The engineering design of robotic grippers presents an ample design space for optimization towards robust grasping. In this paper, we adopt a reconfigurable gripper design using a novel soft finger structure with omni-directional adaptation, which generates a large number of possible gripper configurations by rearranging the fingers. This reconfigurable design with omni-adaptive fingers enables us to systematically investigate the optimal arrangement of the fingers towards robust grasping. Furthermore, we adopt a learning-based method as the baseline to benchmark the effectiveness of each design configuration. As a result, we found that the 3-finger and 4-finger radial configurations are the most effective, achieving an average 96% grasp success rate on seen and novel objects selected from the YCB dataset. We also discuss the influence of the frictional surface on the finger in improving grasp robustness.
