Sebastiano Battiato

Department of Mathematics and Computer Science, University of Catania, Italy

Reinforcing the Weakest Links: Modernizing SIENA with Targeted Deep Learning Integration

Mar 13, 2026Abstract:Percentage Brain Volume Change (PBVC) derived from Magnetic Resonance Imaging (MRI) is a widely used biomarker of brain atrophy, with SIENA among the most established methods for its estimation. However, SIENA relies on classical image processing steps, particularly skull stripping and tissue segmentation, whose failures can propagate through the pipeline and bias atrophy estimates. In this work, we examine whether targeted deep learning substitutions can improve SIENA while preserving its established and interpretable framework. To this end, we integrate SynthStrip and SynthSeg into SIENA and evaluate three pipeline variants on the ADNI and PPMI longitudinal cohorts. Performance is assessed using three complementary criteria: correlation with longitudinal clinical and structural decline, scan-order consistency, and end-to-end runtime. Replacing the skull-stripping module yields the most consistent gains: in ADNI, it substantially strengthens associations between PBVC and multiple measures of disease progression relative to the standard SIENA pipeline, while across both datasets it markedly improves robustness under scan reversal. The fully integrated pipeline achieves the strongest scan-order consistency, reducing the error by up to 99.1%. In addition, GPU-enabled variants reduce execution time by up to 46% while maintaining CPU runtimes comparable to standard SIENA. Overall, these findings show that deep learning can meaningfully strengthen established longitudinal atrophy pipelines when used to reinforce their weakest image processing steps. More broadly, this study highlights the value of modularly modernizing clinically trusted neuroimaging tools without sacrificing their interpretability. Code is publicly available at https://github.com/Raciti/Enhanced-SIENA.git.

Proto-LeakNet: Towards Signal-Leak Aware Attribution in Synthetic Human Face Imagery

Nov 15, 2025

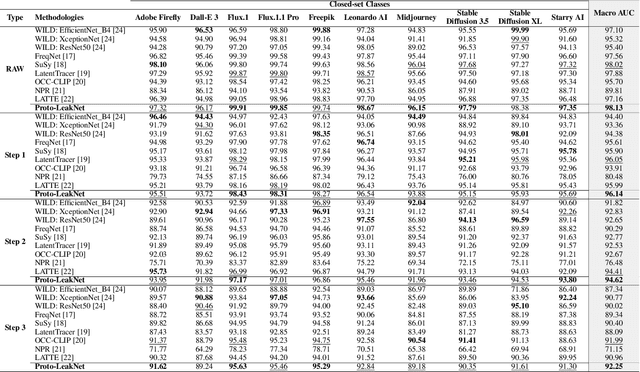

Abstract:The growing sophistication of synthetic image and deepfake generation models has turned source attribution and authenticity verification into a critical challenge for modern computer vision systems. Recent studies suggest that diffusion pipelines unintentionally imprint persistent statistical traces, known as signal-leaks, within their outputs, particularly in latent representations. Building on this observation, we propose Proto-LeakNet, a signal-leak-aware and interpretable attribution framework that integrates closed-set classification with a density-based open-set evaluation on the learned embeddings, enabling analysis of unseen generators without retraining. Acting in the latent domain of diffusion models, our method re-simulates partial forward diffusion to expose residual generator-specific cues. A temporal attention encoder aggregates multi-step latent features, while a feature-weighted prototype head structures the embedding space and enables transparent attribution. Trained solely on closed data and achieving a Macro AUC of 98.13%, Proto-LeakNet learns a latent geometry that remains robust under post-processing, surpassing state-of-the-art methods, and achieves strong separability both between real images and known generators, and between known and unseen ones. The codebase will be available after acceptance.

TRUST: Leveraging Text Robustness for Unsupervised Domain Adaptation

Aug 08, 2025Abstract:Recent unsupervised domain adaptation (UDA) methods have shown great success in addressing classical domain shifts (e.g., synthetic-to-real), but they still suffer under complex shifts (e.g. geographical shift), where both the background and object appearances differ significantly across domains. Prior works showed that the language modality can help in the adaptation process, exhibiting more robustness to such complex shifts. In this paper, we introduce TRUST, a novel UDA approach that exploits the robustness of the language modality to guide the adaptation of a vision model. TRUST generates pseudo-labels for target samples from their captions and introduces a novel uncertainty estimation strategy that uses normalised CLIP similarity scores to estimate the uncertainty of the generated pseudo-labels. Such estimated uncertainty is then used to reweight the classification loss, mitigating the adverse effects of wrong pseudo-labels obtained from low-quality captions. To further increase the robustness of the vision model, we propose a multimodal soft-contrastive learning loss that aligns the vision and language feature spaces, by leveraging captions to guide the contrastive training of the vision model on target images. In our contrastive loss, each pair of images acts as both a positive and a negative pair and their feature representations are attracted and repulsed with a strength proportional to the similarity of their captions. This solution avoids the need for hardly determining positive and negative pairs, which is critical in the UDA setting. Our approach outperforms previous methods, setting the new state-of-the-art on classical (DomainNet) and complex (GeoNet) domain shifts. The code will be available upon acceptance.

Deepfake Forensic Analysis: Source Dataset Attribution and Legal Implications of Synthetic Media Manipulation

May 16, 2025Abstract:Synthetic media generated by Generative Adversarial Networks (GANs) pose significant challenges in verifying authenticity and tracing dataset origins, raising critical concerns in copyright enforcement, privacy protection, and legal compliance. This paper introduces a novel forensic framework for identifying the training dataset (e.g., CelebA or FFHQ) of GAN-generated images through interpretable feature analysis. By integrating spectral transforms (Fourier/DCT), color distribution metrics, and local feature descriptors (SIFT), our pipeline extracts discriminative statistical signatures embedded in synthetic outputs. Supervised classifiers (Random Forest, SVM, XGBoost) achieve 98-99% accuracy in binary classification (real vs. synthetic) and multi-class dataset attribution across diverse GAN architectures (StyleGAN, AttGAN, GDWCT, StarGAN, and StyleGAN2). Experimental results highlight the dominance of frequency-domain features (DCT/FFT) in capturing dataset-specific artifacts, such as upsampling patterns and spectral irregularities, while color histograms reveal implicit regularization strategies in GAN training. We further examine legal and ethical implications, showing how dataset attribution can address copyright infringement, unauthorized use of personal data, and regulatory compliance under frameworks like GDPR and California's AB 602. Our framework advances accountability and governance in generative modeling, with applications in digital forensics, content moderation, and intellectual property litigation.

End-to-end Audio Deepfake Detection from RAW Waveforms: a RawNet-Based Approach with Cross-Dataset Evaluation

Apr 30, 2025Abstract:Audio deepfakes represent a growing threat to digital security and trust, leveraging advanced generative models to produce synthetic speech that closely mimics real human voices. Detecting such manipulations is especially challenging under open-world conditions, where spoofing methods encountered during testing may differ from those seen during training. In this work, we propose an end-to-end deep learning framework for audio deepfake detection that operates directly on raw waveforms. Our model, RawNetLite, is a lightweight convolutional-recurrent architecture designed to capture both spectral and temporal features without handcrafted preprocessing. To enhance robustness, we introduce a training strategy that combines data from multiple domains and adopts Focal Loss to emphasize difficult or ambiguous samples. We further demonstrate that incorporating codec-based manipulations and applying waveform-level audio augmentations (e.g., pitch shifting, noise, and time stretching) leads to significant generalization improvements under realistic acoustic conditions. The proposed model achieves over 99.7% F1 and 0.25% EER on in-domain data (FakeOrReal), and up to 83.4% F1 with 16.4% EER on a challenging out-of-distribution test set (AVSpoof2021 + CodecFake). These findings highlight the importance of diverse training data, tailored objective functions and audio augmentations in building resilient and generalizable audio forgery detectors. Code and pretrained models are available at https://iplab.dmi.unict.it/mfs/Deepfakes/PaperRawNet2025/.

WILD: a new in-the-Wild Image Linkage Dataset for synthetic image attribution

Apr 29, 2025Abstract:Synthetic image source attribution is an open challenge, with an increasing number of image generators being released yearly. The complexity and the sheer number of available generative techniques, as well as the scarcity of high-quality open source datasets of diverse nature for this task, make training and benchmarking synthetic image source attribution models very challenging. WILD is a new in-the-Wild Image Linkage Dataset designed to provide a powerful training and benchmarking tool for synthetic image attribution models. The dataset is built out of a closed set of 10 popular commercial generators, which constitutes the training base of attribution models, and an open set of 10 additional generators, simulating a real-world in-the-wild scenario. Each generator is represented by 1,000 images, for a total of 10,000 images in the closed set and 10,000 images in the open set. Half of the images are post-processed with a wide range of operators. WILD allows benchmarking attribution models in a wide range of tasks, including closed and open set identification and verification, and robust attribution with respect to post-processing and adversarial attacks. Models trained on WILD are expected to benefit from the challenging scenario represented by the dataset itself. Moreover, an assessment of seven baseline methodologies on closed and open set attribution is presented, including robustness tests with respect to post-processing.

ICPR 2024 Competition on Multiple Sclerosis Lesion Segmentation -- Methods and Results

Oct 10, 2024

Abstract:This report summarizes the outcomes of the ICPR 2024 Competition on Multiple Sclerosis Lesion Segmentation (MSLesSeg). The competition aimed to develop methods capable of automatically segmenting multiple sclerosis lesions in MRI scans. Participants were provided with a novel annotated dataset comprising a heterogeneous cohort of MS patients, featuring both baseline and follow-up MRI scans acquired at different hospitals. MSLesSeg focuses on developing algorithms that can independently segment multiple sclerosis lesions of an unexamined cohort of patients. This segmentation approach aims to overcome current benchmarks by eliminating user interaction and ensuring robust lesion detection at different timepoints, encouraging innovation and promoting methodological advances.

Enhancing Crime Scene Investigations through Virtual Reality and Deep Learning Techniques

Sep 27, 2024Abstract:The analysis of a crime scene is a pivotal activity in forensic investigations. Crime Scene Investigators and forensic science practitioners rely on best practices, standard operating procedures, and critical thinking, to produce rigorous scientific reports to document the scenes of interest and meet the quality standards expected in the courts. However, crime scene examination is a complex and multifaceted task often performed in environments susceptible to deterioration, contamination, and alteration, despite the use of contact-free and non-destructive methods of analysis. In this context, the documentation of the sites, and the identification and isolation of traces of evidential value remain challenging endeavours. In this paper, we propose a photogrammetric reconstruction of the crime scene for inspection in virtual reality (VR) and focus on fully automatic object recognition with deep learning (DL) algorithms through a client-server architecture. A pre-trained Faster-RCNN model was chosen as the best method that can best categorize relevant objects at the scene, selected by experts in the VR environment. These operations can considerably improve and accelerate crime scene analysis and help the forensic expert in extracting measurements and analysing in detail the objects under analysis. Experimental results on a simulated crime scene have shown that the proposed method can be effective in finding and recognizing objects with potential evidentiary value, enabling timely analyses of crime scenes, particularly those with health and safety risks (e.g. fires, explosions, chemicals, etc.), while minimizing subjective bias and contamination of the scene.

Deepfake Media Forensics: State of the Art and Challenges Ahead

Aug 01, 2024Abstract:AI-generated synthetic media, also called Deepfakes, have significantly influenced so many domains, from entertainment to cybersecurity. Generative Adversarial Networks (GANs) and Diffusion Models (DMs) are the main frameworks used to create Deepfakes, producing highly realistic yet fabricated content. While these technologies open up new creative possibilities, they also bring substantial ethical and security risks due to their potential misuse. The rise of such advanced media has led to the development of a cognitive bias known as Impostor Bias, where individuals doubt the authenticity of multimedia due to the awareness of AI's capabilities. As a result, Deepfake detection has become a vital area of research, focusing on identifying subtle inconsistencies and artifacts with machine learning techniques, especially Convolutional Neural Networks (CNNs). Research in forensic Deepfake technology encompasses five main areas: detection, attribution and recognition, passive authentication, detection in realistic scenarios, and active authentication. Each area tackles specific challenges, from tracing the origins of synthetic media and examining its inherent characteristics for authenticity. This paper reviews the primary algorithms that address these challenges, examining their advantages, limitations, and future prospects.

TADM: Temporally-Aware Diffusion Model for Neurodegenerative Progression on Brain MRI

Jun 18, 2024

Abstract:Generating realistic images to accurately predict changes in the structure of brain MRI is a crucial tool for clinicians. Such applications help assess patients' outcomes and analyze how diseases progress at the individual level. However, existing methods for this task present some limitations. Some approaches attempt to model the distribution of MRI scans directly by conditioning the model on patients' ages, but they fail to explicitly capture the relationship between structural changes in the brain and time intervals, especially on age-unbalanced datasets. Other approaches simply rely on interpolation between scans, which limits their clinical application as they do not predict future MRIs. To address these challenges, we propose a Temporally-Aware Diffusion Model (TADM), which introduces a novel approach to accurately infer progression in brain MRIs. TADM learns the distribution of structural changes in terms of intensity differences between scans and combines the prediction of these changes with the initial baseline scans to generate future MRIs. Furthermore, during training, we propose to leverage a pre-trained Brain-Age Estimator (BAE) to refine the model's training process, enhancing its ability to produce accurate MRIs that match the expected age gap between baseline and generated scans. Our assessment, conducted on the OASIS-3 dataset, uses similarity metrics and region sizes computed by comparing predicted and real follow-up scans on 3 relevant brain regions. TADM achieves large improvements over existing approaches, with an average decrease of 24% in region size error and an improvement of 4% in similarity metrics. These evaluations demonstrate the improvement of our model in mimicking temporal brain neurodegenerative progression compared to existing methods. Our approach will benefit applications, such as predicting patient outcomes or improving treatments for patients.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge