Sean Trott

Department of Cognitive Science, University of California San Diego

How Open Must Language Models be to Enable Reliable Scientific Inference?

Mar 27, 2026Abstract:How does the extent to which a model is open or closed impact the scientific inferences that can be drawn from research that involves it? In this paper, we analyze how restrictions on information about model construction and deployment threaten reliable inference. We argue that current closed models are generally ill-suited for scientific purposes, with some notable exceptions, and discuss ways in which the issues they present to reliable inference can be resolved or mitigated. We recommend that when models are used in research, potential threats to inference should be systematically identified along with the steps taken to mitigate them, and that specific justifications for model selection should be provided.

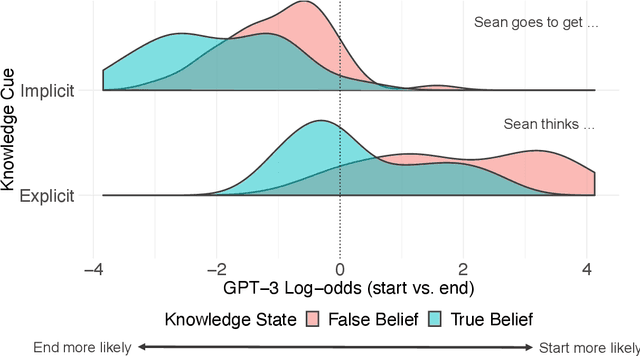

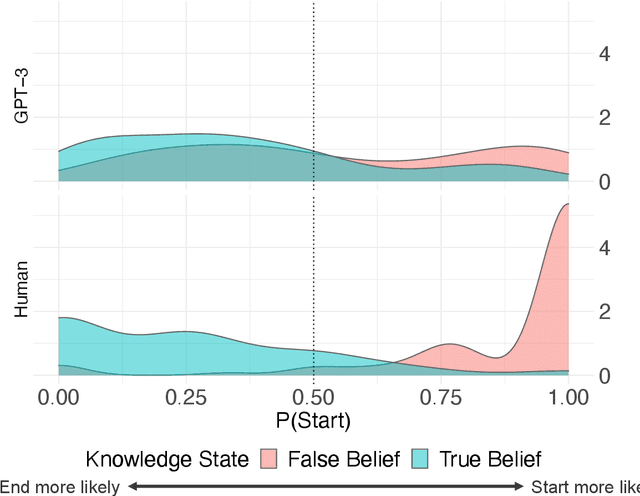

Language Statistics and False Belief Reasoning: Evidence from 41 Open-Weight LMs

Feb 17, 2026Abstract:Research on mental state reasoning in language models (LMs) has the potential to inform theories of human social cognition--such as the theory that mental state reasoning emerges in part from language exposure--and our understanding of LMs themselves. Yet much published work on LMs relies on a relatively small sample of closed-source LMs, limiting our ability to rigorously test psychological theories and evaluate LM capacities. Here, we replicate and extend published work on the false belief task by assessing LM mental state reasoning behavior across 41 open-weight models (from distinct model families). We find sensitivity to implied knowledge states in 34% of the LMs tested; however, consistent with prior work, none fully ``explain away'' the effect in humans. Larger LMs show increased sensitivity and also exhibit higher psychometric predictive power. Finally, we use LM behavior to generate and test a novel hypothesis about human cognition: both humans and LMs show a bias towards attributing false beliefs when knowledge states are cued using a non-factive verb (``John thinks...'') than when cued indirectly (``John looks in the...''). Unlike the primary effect of knowledge states, where human sensitivity exceeds that of LMs, the magnitude of the human knowledge cue effect falls squarely within the distribution of LM effect sizes-suggesting that distributional statistics of language can in principle account for the latter but not the former in humans. These results demonstrate the value of using larger samples of open-weight LMs to test theories of human cognition and evaluate LM capacities.

Capacity Constraints and the Multilingual Penalty for Lexical Disambiguation

Feb 04, 2026Abstract:Multilingual language models (LMs) sometimes under-perform their monolingual counterparts, possibly due to capacity limitations. We quantify this ``multilingual penalty'' for lexical disambiguation--a task requiring precise semantic representations and contextualization mechanisms--using controlled datasets of human relatedness judgments for ambiguous words in both English and Spanish. Comparing monolingual and multilingual LMs from the same families, we find consistently reduced performance in multilingual LMs. We then explore three potential capacity constraints: representational (reduced embedding isotropy), attentional (reduced attention to disambiguating cues), and vocabulary-related (increased multi-token segmentation). Multilingual LMs show some evidence of all three limitations; moreover, these factors statistically account for the variance formerly attributed to a model's multilingual status. These findings suggest both that multilingual LMs do suffer from multiple capacity constraints, and that these constraints correlate with reduced disambiguation performance.

Adding LLMs to the psycholinguistic norming toolbox: A practical guide to getting the most out of human ratings

Sep 17, 2025

Abstract:Word-level psycholinguistic norms lend empirical support to theories of language processing. However, obtaining such human-based measures is not always feasible or straightforward. One promising approach is to augment human norming datasets by using Large Language Models (LLMs) to predict these characteristics directly, a practice that is rapidly gaining popularity in psycholinguistics and cognitive science. However, the novelty of this approach (and the relative inscrutability of LLMs) necessitates the adoption of rigorous methodologies that guide researchers through this process, present the range of possible approaches, and clarify limitations that are not immediately apparent, but may, in some cases, render the use of LLMs impractical. In this work, we present a comprehensive methodology for estimating word characteristics with LLMs, enriched with practical advice and lessons learned from our own experience. Our approach covers both the direct use of base LLMs and the fine-tuning of models, an alternative that can yield substantial performance gains in certain scenarios. A major emphasis in the guide is the validation of LLM-generated data with human "gold standard" norms. We also present a software framework that implements our methodology and supports both commercial and open-weight models. We illustrate the proposed approach with a case study on estimating word familiarity in English. Using base models, we achieved a Spearman correlation of 0.8 with human ratings, which increased to 0.9 when employing fine-tuned models. This methodology, framework, and set of best practices aim to serve as a reference for future research on leveraging LLMs for psycholinguistic and lexical studies.

Measuring and Modifying the Readability of English Texts with GPT-4

Oct 17, 2024

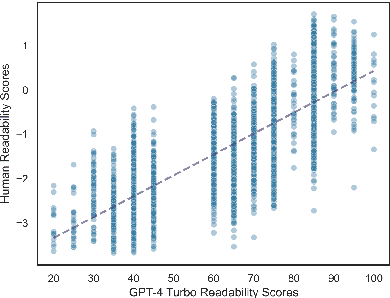

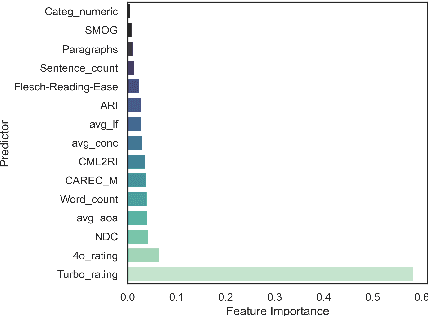

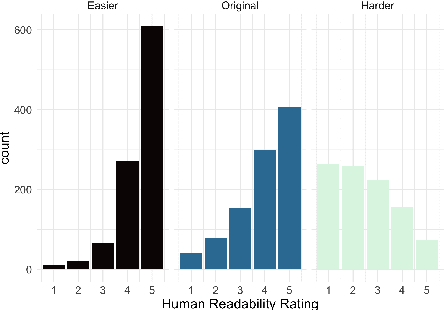

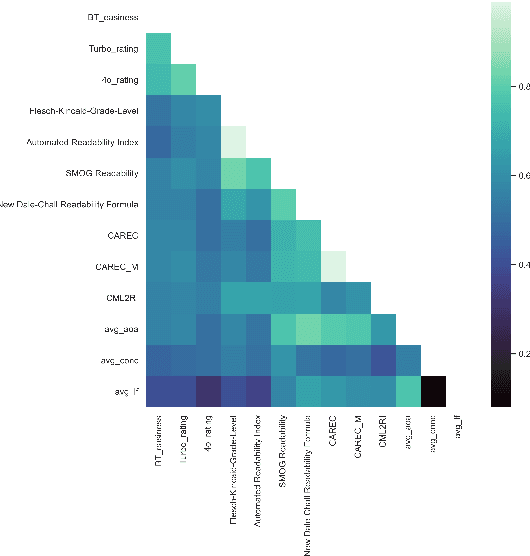

Abstract:The success of Large Language Models (LLMs) in other domains has raised the question of whether LLMs can reliably assess and manipulate the readability of text. We approach this question empirically. First, using a published corpus of 4,724 English text excerpts, we find that readability estimates produced ``zero-shot'' from GPT-4 Turbo and GPT-4o mini exhibit relatively high correlation with human judgments (r = 0.76 and r = 0.74, respectively), out-performing estimates derived from traditional readability formulas and various psycholinguistic indices. Then, in a pre-registered human experiment (N = 59), we ask whether Turbo can reliably make text easier or harder to read. We find evidence to support this hypothesis, though considerable variance in human judgments remains unexplained. We conclude by discussing the limitations of this approach, including limited scope, as well as the validity of the ``readability'' construct and its dependence on context, audience, and goal.

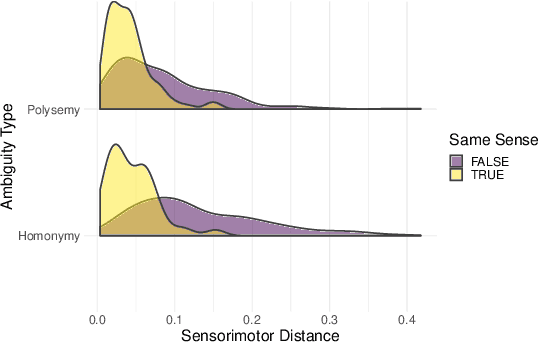

Bidirectional Transformer Representations of (Spanish) Ambiguous Words in Context: A New Lexical Resource and Empirical Analysis

Jun 20, 2024Abstract:Lexical ambiguity -- where a single wordform takes on distinct, context-dependent meanings -- serves as a useful tool to compare across different large language models' (LLMs') ability to form distinct, contextualized representations of the same stimulus. Few studies have systematically compared LLMs' contextualized word embeddings for languages beyond English. Here, we evaluate multiple bidirectional transformers' (BERTs') semantic representations of Spanish ambiguous nouns in context. We develop a novel dataset of minimal-pair sentences evoking the same or different sense for a target ambiguous noun. In a pre-registered study, we collect contextualized human relatedness judgments for each sentence pair. We find that various BERT-based LLMs' contextualized semantic representations capture some variance in human judgments but fall short of the human benchmark, and for Spanish -- unlike English -- model scale is uncorrelated with performance. We also identify stereotyped trajectories of target noun disambiguation as a proportion of traversal through a given LLM family's architecture, which we partially replicate in English. We contribute (1) a dataset of controlled, Spanish sentence stimuli with human relatedness norms, and (2) to our evolving understanding of the impact that LLM specification (architectures, training protocols) exerts on contextualized embeddings.

Do language models capture implied discourse meanings? An investigation with exhaustivity implicatures of Korean morphology

May 15, 2024Abstract:Markedness in natural language is often associated with non-literal meanings in discourse. Differential Object Marking (DOM) in Korean is one instance of this phenomenon, where post-positional markers are selected based on both the semantic features of the noun phrases and the discourse features that are orthogonal to the semantic features. Previous work has shown that distributional models of language recover certain semantic features of words -- do these models capture implied discourse-level meanings as well? We evaluate whether a set of large language models are capable of associating discourse meanings with different object markings in Korean. Results suggest that discourse meanings of a grammatical marker can be more challenging to encode than that of a discourse marker.

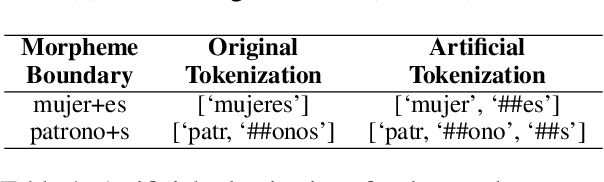

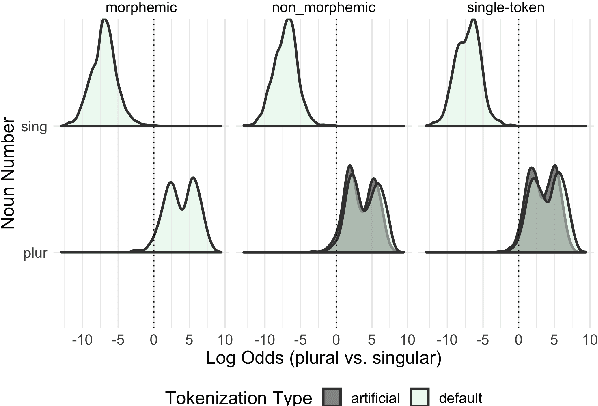

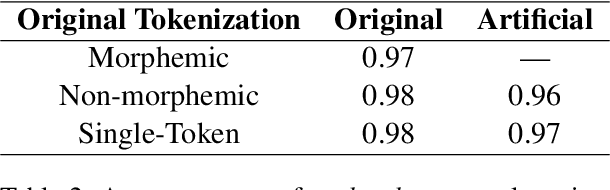

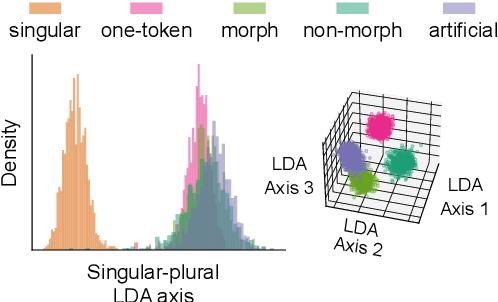

Different Tokenization Schemes Lead to Comparable Performance in Spanish Number Agreement

Mar 20, 2024

Abstract:The relationship between language model tokenization and performance is an open area of research. Here, we investigate how different tokenization schemes impact number agreement in Spanish plurals. We find that morphologically-aligned tokenization performs similarly to other tokenization schemes, even when induced artificially for words that would not be tokenized that way during training. We then present exploratory analyses demonstrating that language model embeddings for different plural tokenizations have similar distributions along the embedding space axis that maximally distinguishes singular and plural nouns. Our results suggest that morphologically-aligned tokenization is a viable tokenization approach, and existing models already generalize some morphological patterns to new items. However, our results indicate that morphological tokenization is not strictly required for performance.

Do Large Language Models know what humans know?

Sep 04, 2022

Abstract:Humans can attribute mental states to others, a capacity known as Theory of Mind. However, it is unknown to what extent this ability results from an innate biological endowment or from experience accrued through child development, particularly exposure to language describing others' mental states. We test the viability of the language exposure hypothesis by assessing whether models exposed to large quantities of human language develop evidence of Theory of Mind. In a pre-registered analysis, we present a linguistic version of the False Belief Task, widely used to assess Theory of Mind, to both human participants and a state-of-the-art Large Language Model, GPT-3. Both are sensitive to others' beliefs, but the language model does not perform as well as the humans, nor does it explain the full extent of their behavior, despite being exposed to more language than a human would in a lifetime. This suggests that while language exposure may in part explain how humans develop Theory of Mind, other mechanisms are also responsible.

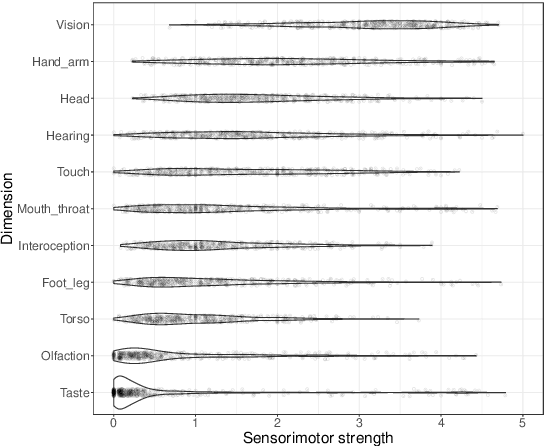

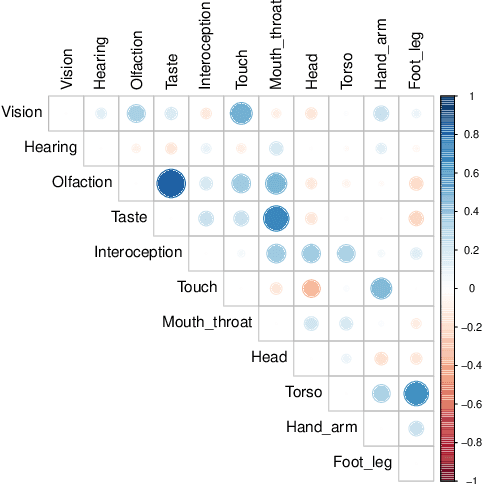

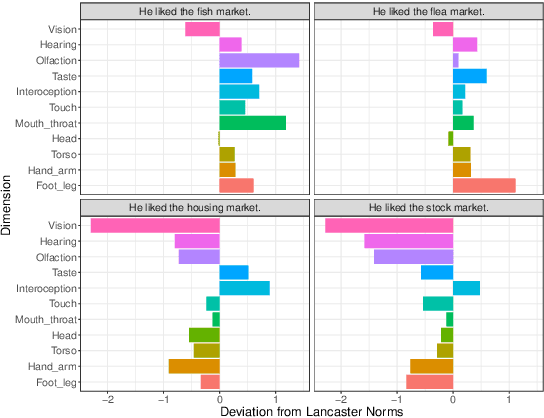

Contextualized Sensorimotor Norms: multi-dimensional measures of sensorimotor strength for ambiguous English words, in context

Mar 10, 2022

Abstract:Most large language models are trained on linguistic input alone, yet humans appear to ground their understanding of words in sensorimotor experience. A natural solution is to augment LM representations with human judgments of a word's sensorimotor associations (e.g., the Lancaster Sensorimotor Norms), but this raises another challenge: most words are ambiguous, and judgments of words in isolation fail to account for this multiplicity of meaning (e.g., "wooden table" vs. "data table"). We attempted to address this problem by building a new lexical resource of contextualized sensorimotor judgments for 112 English words, each rated in four different contexts (448 sentences total). We show that these ratings encode overlapping but distinct information from the Lancaster Sensorimotor Norms, and that they also predict other measures of interest (e.g., relatedness), above and beyond measures derived from BERT. Beyond shedding light on theoretical questions, we suggest that these ratings could be of use as a "challenge set" for researchers building grounded language models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge