Sahar Salimpour

Seamless Outdoor-Indoor Pedestrian Positioning System with GNSS/UWB/IMU Fusion: A Comparison of EKF, FGO, and PF

Dec 11, 2025

Abstract:Accurate and continuous pedestrian positioning across outdoor-indoor environments remains challenging because GNSS, UWB, and inertial PDR are complementary yet individually fragile under signal blockage, multipath, and drift. This paper presents a unified GNSS/UWB/IMU fusion framework for seamless pedestrian localization and provides a controlled comparison of three probabilistic back-ends: an error-state extended Kalman filter, sliding-window factor graph optimization, and a particle filter. The system uses chest-mounted IMU-based PDR as the motion backbone and integrates absolute updates from GNSS outdoors and UWB indoors. To enhance transition robustness and mitigate urban GNSS degradation, we introduce a lightweight map-based feasibility constraint derived from OpenStreetMap building footprints, treating most building interiors as non-navigable while allowing motion inside a designated UWB-instrumented building. The framework is implemented in ROS 2 and runs in real time on a wearable platform, with visualization in Foxglove. We evaluate three scenarios: indoor (UWB+PDR), outdoor (GNSS+PDR), and seamless outdoor-indoor (GNSS+UWB+PDR). Results show that the ESKF provides the most consistent overall performance in our implementation.
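The map-based feasibility constraint described in this abstract can be illustrated with a small sketch. The abstract does not say how the constraint enters each back-end, so the code below is only one plausible reading: it assumes a particle-filter setting in which particles that fall inside a non-navigable footprint receive zero weight. All function names, the polygon representation, and the example footprints are hypothetical and not taken from the paper.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Polygon = Sequence[Point]


def point_in_polygon(p: Point, poly: Polygon) -> bool:
    """Ray-casting test: True if p lies inside poly (points on the boundary are not handled specially)."""
    x, y = p
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def is_feasible(p: Point, blocked: Sequence[Polygon], allowed: Sequence[Polygon]) -> bool:
    """A position is feasible if it is inside an allowed building or outside every blocked one."""
    if any(point_in_polygon(p, poly) for poly in allowed):
        return True
    return not any(point_in_polygon(p, poly) for poly in blocked)


def apply_map_constraint(
    particles: Sequence[Point],
    weights: Sequence[float],
    blocked: Sequence[Polygon],
    allowed: Sequence[Polygon],
) -> List[float]:
    """Zero the weight of infeasible particles and renormalize (assumed usage, not the paper's)."""
    masked = [w if is_feasible(p, blocked, allowed) else 0.0 for p, w in zip(particles, weights)]
    total = sum(masked)
    if total == 0.0:
        # Every particle is infeasible: keep the previous weights instead of dividing by zero.
        return list(weights)
    return [w / total for w in masked]


# Hypothetical footprints in a local metric frame (not real OpenStreetMap data).
blocked_buildings = [[(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]]
uwb_building = [[(20.0, 0.0), (30.0, 0.0), (30.0, 10.0), (20.0, 10.0)]]
particles = [(5.0, 5.0), (15.0, 5.0), (25.0, 5.0)]
new_weights = apply_map_constraint(particles, [0.5, 0.25, 0.25], blocked_buildings, uwb_building)
```

In this toy layout the first particle sits inside a blocked building and loses its weight, while the particle on the street and the one inside the UWB-instrumented building share the remaining probability mass.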

Follow-Me in Micro-Mobility with End-to-End Imitation Learning

Nov 07, 2025

Abstract:Autonomous micro-mobility platforms face challenges from the perspective of the typical deployment environment: large indoor spaces or urban areas that are potentially crowded and highly dynamic. While social navigation algorithms have progressed significantly, optimizing user comfort and overall user experience over other typical metrics in robotics (e.g., time or distance traveled) is understudied. Specifically, these metrics are critical in commercial applications. In this paper, we show how imitation learning delivers smoother and overall better controllers, versus previously used manually-tuned controllers. We demonstrate how DAAV's autonomous wheelchair achieves state-of-the-art comfort in follow-me mode, in which it follows a human operator assisting persons with reduced mobility (PRM). This paper analyzes different neural network architectures for end-to-end control and demonstrates their usability in real-world production-level deployments.

ROSBag MCP Server: Analyzing Robot Data with LLMs for Agentic Embodied AI Applications

Nov 05, 2025

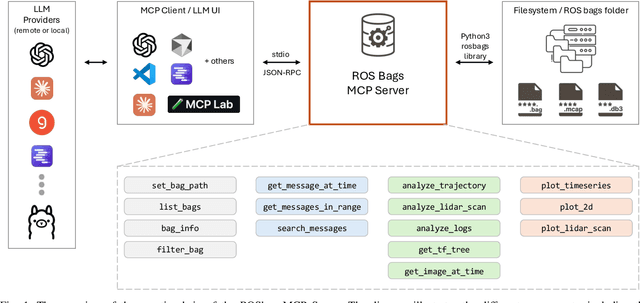

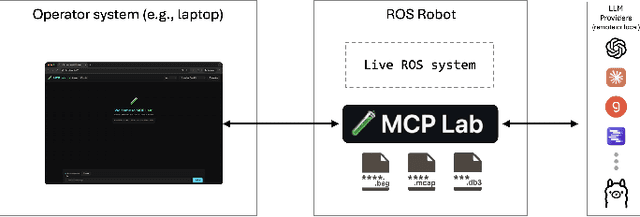

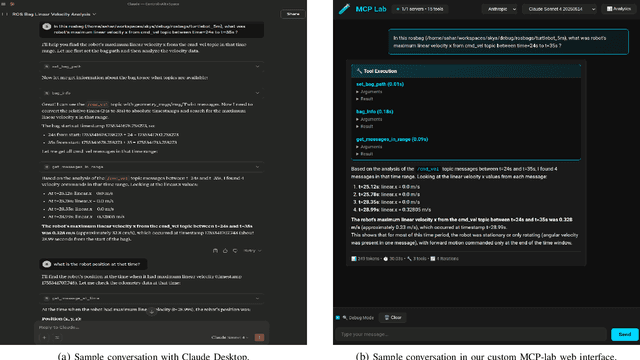

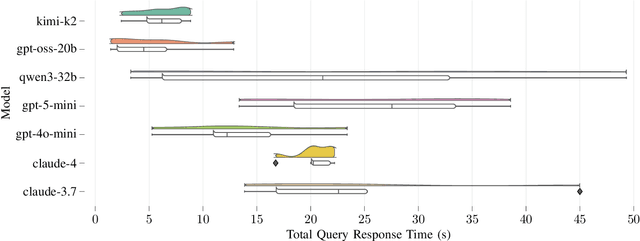

Abstract:Agentic AI systems and Physical or Embodied AI systems have been two key research verticals at the forefront of Artificial Intelligence and Robotics, with Model Context Protocol (MCP) increasingly becoming a key component and enabler of agentic applications. However, the literature at the intersection of these verticals, i.e., Agentic Embodied AI, remains scarce. This paper introduces an MCP server for analyzing ROS and ROS 2 bags, allowing for analyzing, visualizing and processing robot data with natural language through LLMs and VLMs. We describe specific tooling built with robotics domain knowledge, with our initial release focused on mobile robotics and supporting natively the analysis of trajectories, laser scan data, transforms, or time series data. This is in addition to providing an interface to standard ROS 2 CLI tools ("ros2 bag list" or "ros2 bag info"), as well as the ability to filter bags with a subset of topics or trimmed in time. Coupled with the MCP server, we provide a lightweight UI that allows the benchmarking of the tooling with different LLMs, both proprietary (Anthropic, OpenAI) and open-source (through Groq). Our experimental results include the analysis of tool calling capabilities of eight different state-of-the-art LLM/VLM models, both proprietary and open-source, large and small. Our experiments indicate that there is a large divide in tool calling capabilities, with Kimi K2 and Claude Sonnet 4 demonstrating clearly superior performance. We also conclude that there are multiple factors affecting the success rates, from the tool description schema to the number of arguments, as well as the number of tools available to the models. The code is available with a permissive license at https://github.com/binabik-ai/mcp-rosbags.

Towards Embodied Agentic AI: Review and Classification of LLM- and VLM-Driven Robot Autonomy and Interaction

Aug 07, 2025

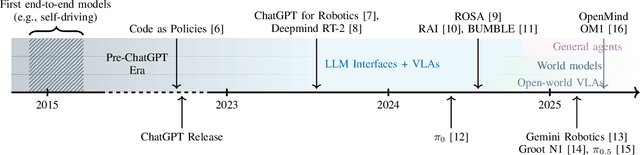

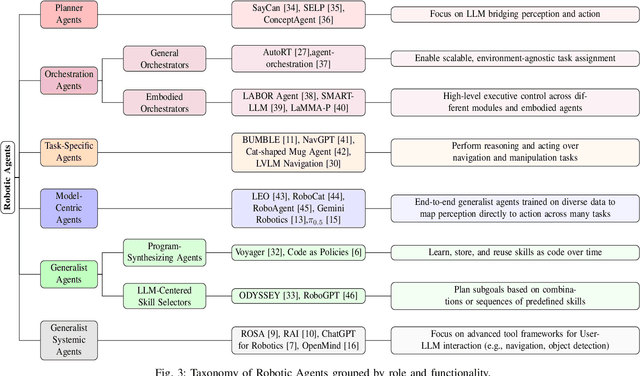

Abstract:Foundation models, including large language models (LLMs) and vision-language models (VLMs), have recently enabled novel approaches to robot autonomy and human-robot interfaces. In parallel, vision-language-action models (VLAs) or large behavior models (LBMs) are increasing the dexterity and capabilities of robotic systems. This survey paper focuses on those works advancing towards agentic applications and architectures. This includes initial efforts exploring GPT-style interfaces to tooling, as well as more complex systems where AI agents are coordinators, planners, perception actors, or generalist interfaces. Such agentic architectures allow robots to reason over natural language instructions, invoke APIs, plan task sequences, or assist in operations and diagnostics. In addition to peer-reviewed research, due to the fast-evolving nature of the field, we highlight and include community-driven projects, ROS packages, and industrial frameworks that show emerging trends. We propose a taxonomy for classifying model integration approaches and present a comparative analysis of the role that agents play in different solutions in today's literature.

Sim-to-Real Transfer for Mobile Robots with Reinforcement Learning: from NVIDIA Isaac Sim to Gazebo and Real ROS 2 Robots

Jan 06, 2025

Abstract:Unprecedented agility and dexterous manipulation have been demonstrated with controllers based on deep reinforcement learning (RL), with a significant impact on legged and humanoid robots. Modern tooling and simulation platforms, such as NVIDIA Isaac Sim, have been enabling such advances. This article focuses on demonstrating the applications of Isaac in local planning and obstacle avoidance as one of the most fundamental ways in which a mobile robot interacts with its environment. Although there is extensive research on proprioception-based RL policies, the article highlights less standardized and reproducible approaches to exteroception. At the same time, the article aims to provide a base framework for end-to-end local navigation policies and to show how a custom robot can be trained in such a simulation environment. We benchmark end-to-end policies against the state-of-the-art Nav2 navigation stack in the Robot Operating System (ROS). We also cover the sim-to-real transfer process by demonstrating zero-shot transferability of policies trained in the Isaac simulator to real-world robots. This is further evidenced by the tests with different simulated robots, which show the generalization of the learned policy. Finally, the benchmarks demonstrate comparable performance to Nav2, opening the door to quick deployment of state-of-the-art end-to-end local planners for custom robot platforms, but importantly furthering the possibilities by expanding the state and action spaces or task definitions for more complex missions. Overall, with this article we introduce the most important steps, and aspects to consider, in deploying RL policies for local path planning and obstacle avoidance with Isaac Sim training, Gazebo testing, and ROS 2 for real-time inference in real robots. The code is available at https://github.com/sahars93/RL-Navigation.

Benchmarking ML Approaches to UWB-Based Range-Only Posture Recognition for Human Robot-Interaction

Aug 28, 2024

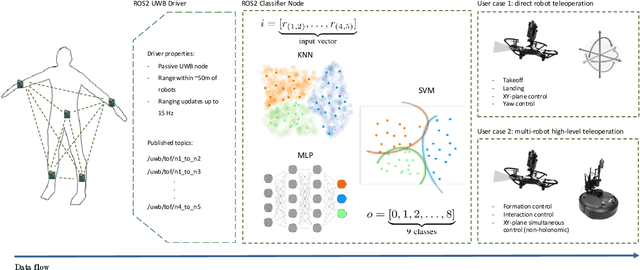

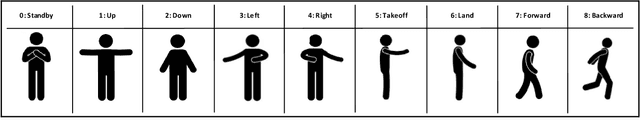

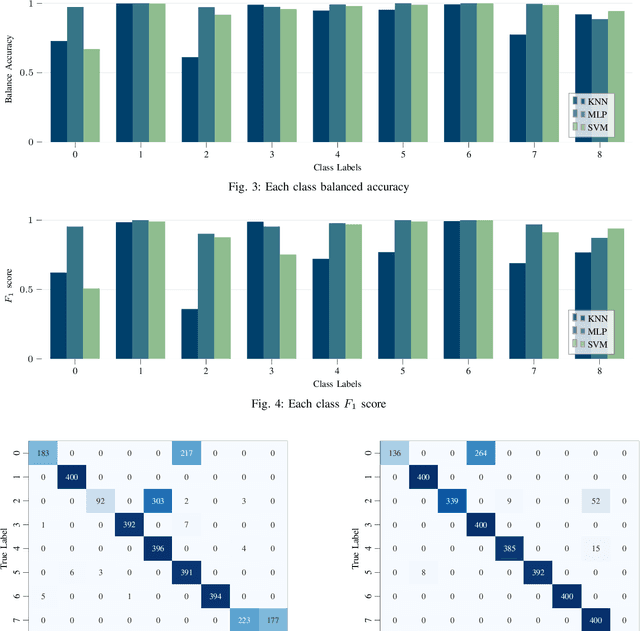

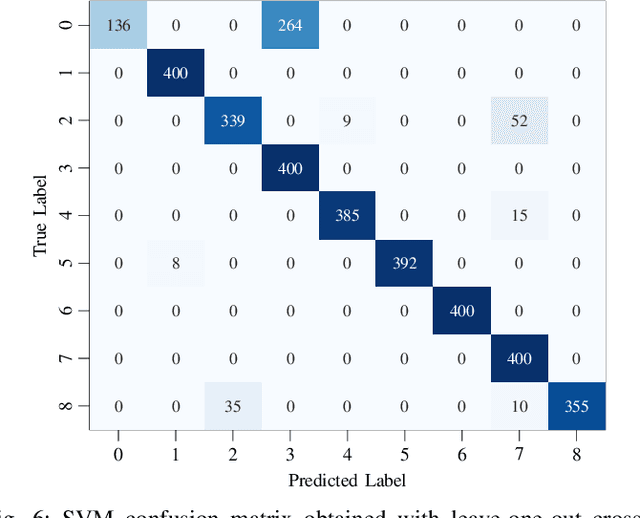

Abstract:Human pose estimation involves detecting and tracking the positions of various body parts using input data from sources such as images, videos, or motion and inertial sensors. This paper presents a novel approach to human pose estimation that uses machine learning algorithms to predict human postures from ultra-wideband (UWB) nodes, as an alternative to motion sensors, and translates them into robot motion commands. The study utilizes five UWB sensors placed on the human body to enable the classification of still poses and more robust posture recognition. This approach ensures effective posture recognition across a variety of subjects. The resulting range measurements serve as input features for posture prediction models, which are implemented and compared for accuracy. For this purpose, machine learning algorithms including K-Nearest Neighbors (KNN), Support Vector Machine (SVM), and a deep Multi-Layer Perceptron (MLP) neural network are employed and compared in predicting corresponding postures. We demonstrate the proposed approach for real-time control of different mobile/aerial robots with inference implemented in a ROS 2 node. Experimental results demonstrate the efficacy of the approach, showcasing successful prediction of human posture and corresponding robot movements with high accuracy.
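As a rough illustration of range-only posture classification, the sketch below runs a plain K-Nearest Neighbors vote over vectors of UWB ranges. It is not the paper's pipeline: the feature layout (four ranges per sample, in metres), the posture labels, the training values, and the function name `knn_predict` are all made up for the example.

```python
import math
from collections import Counter
from typing import List, Sequence


def knn_predict(
    train_x: Sequence[Sequence[float]],
    train_y: Sequence[str],
    query: Sequence[float],
    k: int = 3,
) -> str:
    """Return the majority label among the k training samples closest to query (Euclidean distance)."""
    by_distance = sorted(
        (math.dist(x, query), label) for x, label in zip(train_x, train_y)
    )
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Hypothetical range vectors in metres; real data would come from the body-worn UWB nodes.
train_x: List[List[float]] = [
    [0.45, 0.50, 1.30, 1.32],
    [0.47, 0.48, 1.28, 1.35],
    [0.44, 0.52, 1.31, 1.30],
    [0.95, 0.98, 1.85, 1.88],
    [0.92, 1.00, 1.83, 1.90],
    [0.97, 0.96, 1.87, 1.86],
]
train_y = ["standing", "standing", "standing", "arms_up", "arms_up", "arms_up"]

posture = knn_predict(train_x, train_y, [0.93, 0.97, 1.84, 1.87])
```

A predicted label such as `posture` could then be mapped to a robot motion command; that mapping is application-specific and left out here.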

Benchmarking UWB-Based Infrastructure-Free Positioning and Multi-Robot Relative Localization: Dataset and Characterization

May 15, 2023

Abstract:Ultra-wideband (UWB) positioning has emerged as a low-cost and dependable localization solution for multiple use cases, from mobile robots to asset tracking within the Industrial IoT. The technology is mature and the scientific literature contains multiple datasets and methods for localization based on fixed UWB nodes. At the same time, research in UWB-based relative localization and infrastructure-free localization is gaining traction, but tools and datasets in this domain are scarce. Therefore, we introduce in this paper a novel dataset for benchmarking infrastructure-free relative localization targeting the domain of multi-robot systems. Compared to previous datasets, we analyze the performance of different relative localization approaches for a much wider variety of scenarios with varying numbers of fixed and mobile nodes. A motion capture system provides ground truth data, and the recordings are multi-modal and include inertial or odometry measurements for benchmarking sensor fusion methods. Additionally, the dataset contains measurements of ranging accuracy based on the relative orientation of antennas and a comprehensive set of measurements for ranging between a single pair of nodes. Our experimental analysis shows that high localization accuracy can be achieved, but the variability of the ranging error is significant across different settings and setups.

Loosely Coupled Odometry, UWB Ranging, and Cooperative Spatial Detection for Relative Monte-Carlo Multi-Robot Localization

Apr 13, 2023

Abstract:As mobile robots become more ubiquitous, their deployments grow across use cases where GNSS positioning is either unavailable or unreliable. This has led to increased interest in multi-modal relative localization methods. Complementing onboard odometry, ranging allows for relative state estimation, with ultra-wideband (UWB) ranging having gained widespread recognition due to its low cost and centimeter-level out-of-box accuracy. Infrastructure-free localization methods allow for more dynamic, ad-hoc, and flexible deployments, yet they have received less attention from the research community. In this work, we propose a cooperative relative multi-robot localization approach in which we leverage inter-robot ranging and simultaneous spatial detections of objects in the environment. To achieve this, we equip robots with a single UWB transceiver and a stereo camera. We propose a novel Monte-Carlo approach to estimate relative states by either employing only UWB ranges or dynamically integrating simultaneous spatial detections from the stereo cameras. We also address the challenges of UWB ranging error mitigation, especially in non-line-of-sight conditions, with a study on different LSTM networks to estimate the ranging error. The proposed approach has multiple benefits. First, we show that a single range is enough to estimate the accurate relative states of two robots when fusing odometry measurements. Second, our experiments also demonstrate that our approach surpasses traditional methods such as multilateration in terms of accuracy. Third, to increase accuracy even further, we allow for the integration of cooperative spatial detections. Finally, we show how ROS 2 and Zenoh can be integrated to build a scalable wireless communication solution for multi-robot systems. The experimental validation includes real-time deployment and autonomous navigation based on the relative positioning method.

Exploiting Redundancy for UWB Anomaly Detection in Infrastructure-Free Multi-Robot Relative Localization

Mar 30, 2023

Abstract:Ultra-wideband (UWB) localization methods have emerged as a cost-effective and accurate solution for GNSS-denied environments. There is a significant amount of previous research in terms of resilience of UWB ranging, with non-line-of-sight and multipath detection methods. However, little attention has been paid to resilience against disturbances in relative localization systems involving multiple nodes. This paper presents an approach to detecting range anomalies in UWB ranging measurements from the perspective of multi-robot cooperative localization. We introduce an approach to exploiting redundancy for relative localization in multi-robot systems, where the position of each node is calculated using different subsets of available data. This enables us to effectively identify nodes that present ranging anomalies and eliminate their effect within the cooperative localization scheme. We analyze anomalies created by timing errors in the ranging process, e.g., owing to malfunctioning hardware. However, our method is generic and can be extended to other types of ranging anomalies. Our approach results in a more resilient cooperative localization framework with a negligible impact in terms of the computational workload.
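The idea of computing a position from different subsets of the available ranges can be sketched in a deliberately simplified setting. The example below is not the paper's algorithm: it assumes a single 2D target, reference nodes with known positions, and at most one anomalous range, and it flags the node whose exclusion makes the remaining ranges most self-consistent. The function names, node layout, and injected bias are all illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def multilaterate(nodes: Sequence[Point], ranges: Sequence[float]) -> Point:
    """Linearized least-squares 2D multilateration using the first node as the reference."""
    (x0, y0), r0 = nodes[0], ranges[0]
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (xi, yi), ri in zip(nodes[1:], ranges[1:]):
        # Subtracting the reference circle equation gives one linear equation per node.
        ax, ay = 2.0 * (xi - x0), 2.0 * (yi - y0)
        rhs = r0**2 - ri**2 + xi**2 + yi**2 - x0**2 - y0**2
        a11 += ax * ax
        a12 += ax * ay
        a22 += ay * ay
        b1 += ax * rhs
        b2 += ay * rhs
    det = a11 * a22 - a12 * a12  # assumes the nodes are not collinear
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)


def rms_residual(est: Point, nodes: Sequence[Point], ranges: Sequence[float]) -> float:
    """Root-mean-square mismatch between measured ranges and distances to the estimate."""
    errs = [(math.dist(est, n) - r) ** 2 for n, r in zip(nodes, ranges)]
    return math.sqrt(sum(errs) / len(errs))


def leave_one_out_residuals(nodes: Sequence[Point], ranges: Sequence[float]) -> List[float]:
    """Residual of the solution computed without node j, for every j."""
    out = []
    for j in range(len(nodes)):
        sub_nodes = [n for i, n in enumerate(nodes) if i != j]
        sub_ranges = [r for i, r in enumerate(ranges) if i != j]
        out.append(rms_residual(multilaterate(sub_nodes, sub_ranges), sub_nodes, sub_ranges))
    return out


# Hypothetical layout: five reference nodes, one target, and a 1.5 m bias injected on node 2.
nodes = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0), (5.0, 12.0)]
target = (3.0, 4.0)
ranges = [math.dist(target, n) for n in nodes]
ranges[2] += 1.5

residuals = leave_one_out_residuals(nodes, ranges)
suspect = min(range(len(nodes)), key=residuals.__getitem__)
```

With noisy real measurements a threshold on the residual drop would be needed before declaring a node anomalous; this noise-free toy case skips that step.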

Decentralized Vision-Based Byzantine Agent Detection in Multi-Robot Systems with IOTA Smart Contracts

Oct 07, 2022

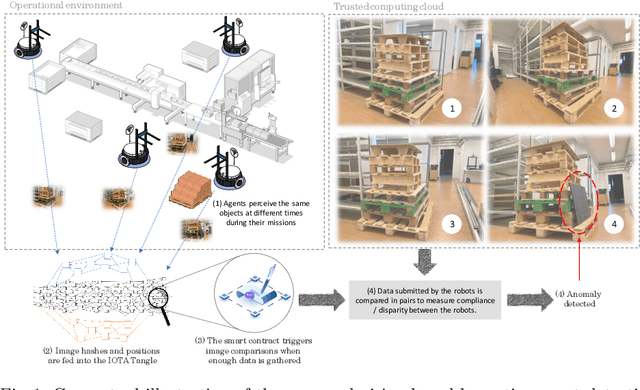

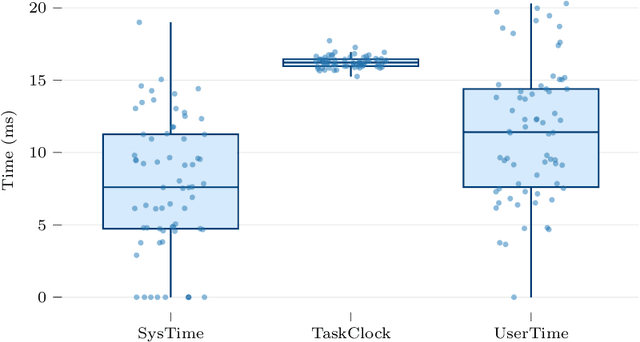

Abstract:Multiple opportunities lie at the intersection of multi-robot systems and distributed ledger technologies (DLTs). In this work, we investigate the potential of new DLT solutions such as IOTA for detecting anomalies and byzantine agents in multi-robot systems in a decentralized manner. Traditional blockchain approaches are not applicable to real-world networked and decentralized robotic systems where connectivity conditions are not ideal. To address this, we leverage recent advances in partition-tolerant and byzantine-tolerant collaborative decision-making processes with IOTA smart contracts. We show how our work in vision-based anomaly and change detection can be applied to detecting byzantine agents within multiple robots operating in the same environment. We show that IOTA smart contracts add a low computational overhead while allowing trust to be built within the multi-robot system. The proposed approach effectively enables byzantine robot detection based on the comparison of images submitted by the different robots and detection of anomalies and changes between them.
