Pranay Reddy

PyPose: A Library for Robot Learning with Physics-based Optimization

Sep 30, 2022

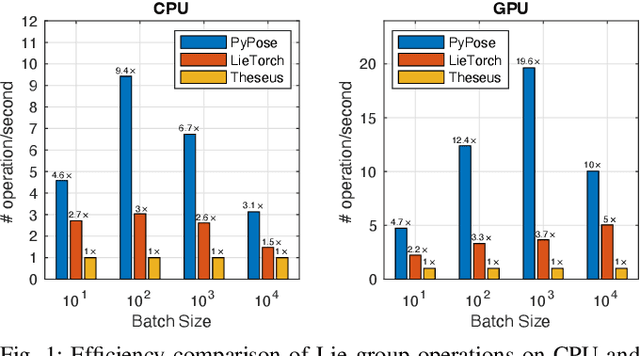

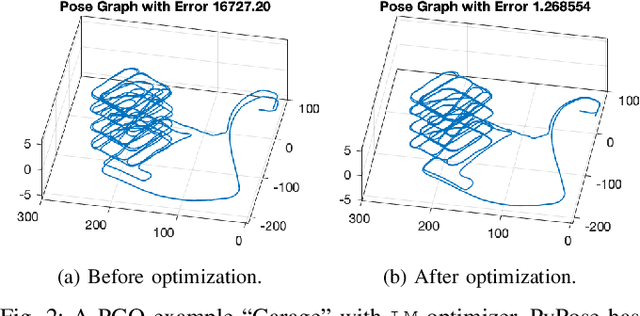

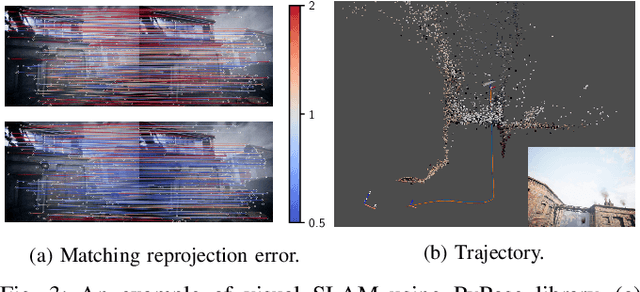

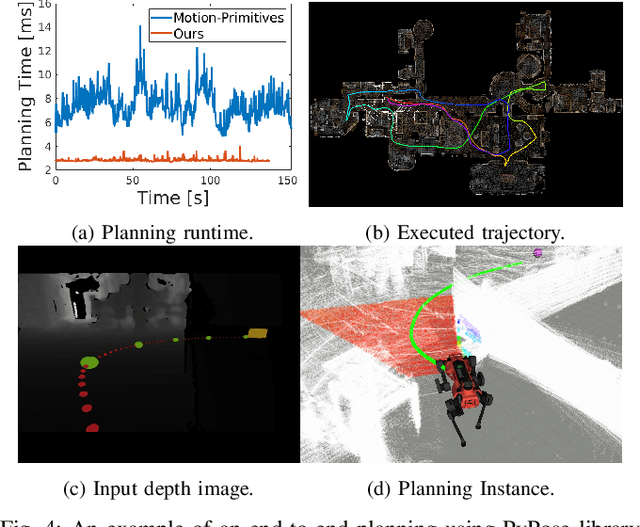

Abstract: Deep learning has had remarkable success in robotic perception, but its data-centric nature makes it hard to generalize to ever-changing environments. By contrast, physics-based optimization generalizes better, but it does not perform as well in complicated tasks due to the lack of high-level semantic information and the reliance on manual parametric tuning. To take advantage of these two complementary worlds, we present PyPose: a robotics-oriented, PyTorch-based library that combines deep perceptual models with physics-based optimization techniques. Our design goal for PyPose is to make it user-friendly, efficient, and interpretable with a tidy and well-organized architecture. Using an imperative-style interface, it can be easily integrated into real-world robotic applications. In addition, it supports parallel computation of arbitrary-order gradients of Lie groups and Lie algebras, as well as $2^{\text{nd}}$-order optimizers such as trust region methods. Experiments show that PyPose achieves a 3-20$\times$ speedup in computation compared to state-of-the-art libraries. To boost future research, we provide concrete examples across several fields of robotics, including SLAM, inertial navigation, planning, and control.
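To make the phrase "$2^{\text{nd}}$-order optimizers, such as trust region methods" concrete, here is a minimal plain-Python sketch, not PyPose code and not its API. It assumes a toy problem: recovering a planar rotation angle (an element of SO(2)) that aligns two point sets, using a damped Gauss-Newton step in the Levenberg-Marquardt style. The function names `rotate`, `cost`, and `lm_align`, the damping schedule, and the sample points are all hypothetical choices made for illustration.

```python
import math


def rotate(theta, p):
    """Apply the planar rotation R(theta) to the 2D point p."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1], s * p[0] + c * p[1])


def cost(theta, src, dst):
    """Sum of squared residuals ||R(theta) p_i - q_i||^2."""
    total = 0.0
    for p, q in zip(src, dst):
        x, y = rotate(theta, p)
        total += (x - q[0]) ** 2 + (y - q[1]) ** 2
    return total


def lm_align(src, dst, theta=0.0, damping=1e-2, iters=50, tol=1e-12):
    """Estimate the rotation angle with damped Gauss-Newton steps.

    The damping term plays the role of a trust region: it shrinks after
    a successful step and grows after a rejected one (assumed schedule).
    """
    current = cost(theta, src, dst)
    for _ in range(iters):
        grad = 0.0  # J^T r
        hess = 0.0  # J^T J (Gauss-Newton approximation of the Hessian)
        for p, q in zip(src, dst):
            x, y = rotate(theta, p)
            rx, ry = x - q[0], y - q[1]
            jx, jy = -y, x  # derivative of the rotated point w.r.t. theta
            grad += jx * rx + jy * ry
            hess += jx * jx + jy * jy
        step = -grad / (hess + damping)
        trial = cost(theta + step, src, dst)
        if trial < current:
            # Accept the step and trust the local model more.
            theta += step
            current = trial
            damping *= 0.5
            if abs(step) < tol:
                break
        else:
            # Reject the step and trust the local model less.
            damping *= 10.0
    return theta


if __name__ == "__main__":
    source = [(1.0, 0.0), (0.0, 2.0), (-1.5, 0.5)]
    target = [rotate(0.7, p) for p in source]
    print(lm_align(source, target))
```

In this sketch the gradient and Gauss-Newton Hessian are written out by hand for a single scalar parameter. A library like the one described would instead obtain derivatives on Lie groups through automatic differentiation and batch the computation.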

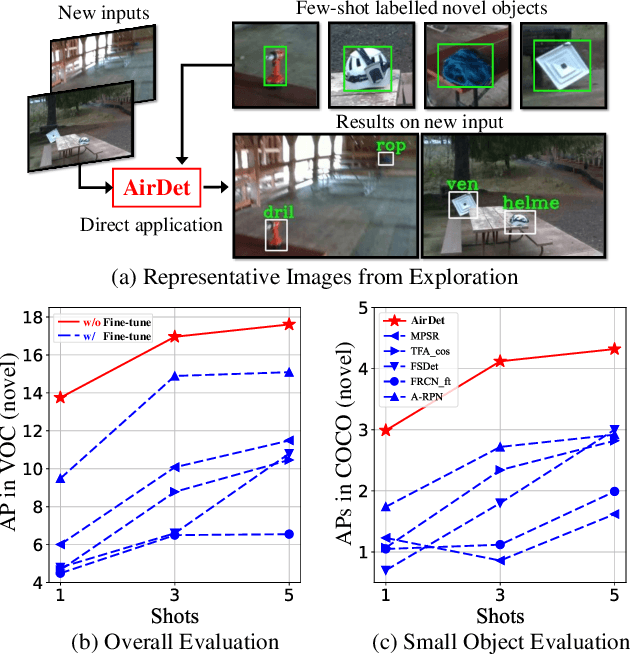

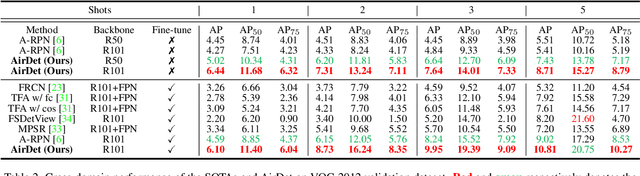

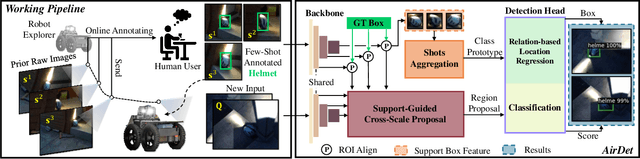

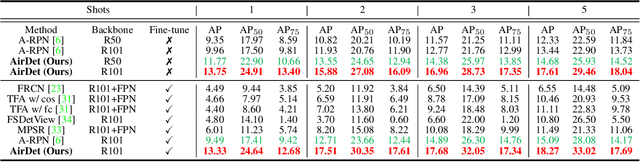

AirDet: Few-Shot Detection without Fine-tuning for Autonomous Exploration

Dec 03, 2021

Abstract: Few-shot object detection has rapidly progressed owing to the success of meta-learning strategies. However, the requirement of a fine-tuning stage in existing methods is time-consuming and significantly hinders their usage in real-time applications such as autonomous exploration of low-power robots. To solve this problem, we present a brand new architecture, AirDet, which is free of fine-tuning by learning class-agnostic relations with support images. Specifically, we propose a support-guided cross-scale (SCS) feature fusion network to generate object proposals, a global-local relation network (GLR) for shot aggregation, and a relation-based prototype embedding network (R-PEN) for precise localization. Exhaustive experiments are conducted on the COCO and PASCAL VOC datasets, where, surprisingly, AirDet achieves comparable or even better results than the exhaustively fine-tuned methods, reaching improvements of up to 40-60% over the baseline. To our excitement, AirDet obtains favorable performance on multi-scale objects, especially the small ones. Furthermore, we present evaluation results on real-world exploration tests from the DARPA Subterranean Challenge, which strongly validate the feasibility of AirDet in robotics. The source code, pre-trained models, and the real-world data for exploration will be made public.
