Nico Hoffmann

Ensuring Topological Data-Structure Preservation under Autoencoder Compression due to Latent Space Regularization in Gauss--Legendre nodes

Sep 21, 2023

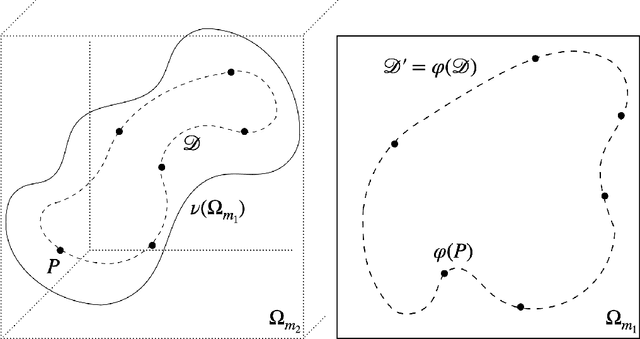

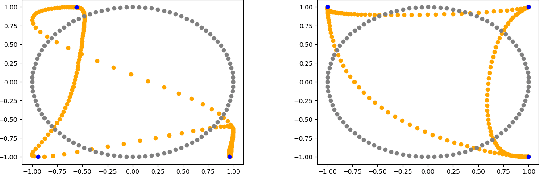

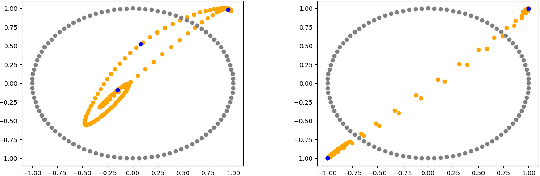

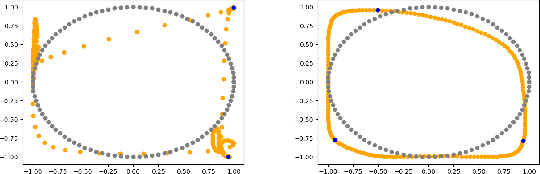

Abstract: We formulate a data-independent latent space regularisation constraint for general unsupervised autoencoders. The regularisation rests on sampling the autoencoder Jacobian in Legendre nodes, being the centre of the Gauss-Legendre quadrature. Revisiting this classic enables us to prove that regularised autoencoders ensure a one-to-one re-embedding of the initial data manifold to its latent representation. Demonstrations show that previously proposed regularisation strategies, such as contractive autoencoding, cause topological defects even for simple examples, and so do convolution-based (variational) autoencoders. In contrast, topological preservation is ensured even by standard multilayer perceptron neural networks when they are regularised according to our contribution. This observation extends from the classic FashionMNIST dataset up to real-world encoding problems for MRI brain scans, suggesting that, across disciplines, reliable low-dimensional representations of complex high-dimensional datasets can be delivered by this regularisation technique.
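As a rough illustration of the sampling points mentioned in this abstract, the sketch below builds a tensor-product grid of Gauss-Legendre nodes with NumPy and checks the quadrature rule on a polynomial. It is not the authors' implementation: the helper name `legendre_grid`, the choice of the cube $[-1, 1]^d$ as the domain, and the node counts are assumptions made only for this example.

```python
import numpy as np


def legendre_grid(n_nodes, dim):
    """Tensor-product grid of Gauss-Legendre nodes on [-1, 1]^dim (hypothetical helper)."""
    # leggauss returns the nodes and weights of the n-point Gauss-Legendre rule.
    nodes, _ = np.polynomial.legendre.leggauss(n_nodes)
    mesh = np.meshgrid(*([nodes] * dim), indexing="ij")
    # One row per grid point, one column per coordinate.
    return np.stack([m.ravel() for m in mesh], axis=-1)


# 5 nodes per axis in 2 dimensions gives 25 candidate sampling points.
grid = legendre_grid(n_nodes=5, dim=2)

# An n-point rule integrates polynomials up to degree 2n - 1 exactly,
# so 3 nodes suffice for x^4 on [-1, 1], whose integral is 2/5.
nodes, weights = np.polynomial.legendre.leggauss(3)
integral_x4 = float(np.sum(weights * nodes**4))
```

In a regulariser of the kind the abstract describes, points such as the rows of `grid` would be where a Jacobian is evaluated; how that evaluation enters the loss is not specified here.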

Learning Electron Bunch Distribution along a FEL Beamline by Normalising Flows

Feb 27, 2023

Abstract: Understanding and control of Laser-driven Free Electron Lasers remain difficult problems that require highly intensive experimental and theoretical research. The gap between simulated and experimentally collected data might complicate studies and the interpretation of obtained results. In this work we developed a deep learning based surrogate that could help to fill this gap. We introduce a surrogate model based on normalising flows for conditional phase-space representation of electron clouds in a FEL beamline. The achieved results let us discuss further benefits and limitations in the exploitability of the models to gain a deeper understanding of fundamental processes within a beamline.

* 7 pages, 5 figures

LiFe-net: Data-driven Modelling of Time-dependent Temperatures and Charging Statistics Of Tesla's LiFePo4 EV Battery

Dec 16, 2022

Abstract: Modelling the temperature of Electric Vehicle (EV) batteries is a fundamental task of EV manufacturing. Extreme temperatures in the battery packs can affect their longevity and power output. Although theoretical models exist for describing heat transfer in battery packs, they are computationally expensive to simulate. Furthermore, it is difficult to acquire data measurements from within the battery cell. In this work, we propose a data-driven surrogate model (LiFe-net) that uses readily accessible driving diagnostics for battery temperature estimation to overcome these limitations. This model incorporates Neural Operators with a traditional numerical integration scheme to estimate the temperature evolution. Moreover, we propose two further variations of the baseline model: LiFe-net trained with a regulariser and LiFe-net trained with time stability loss. We compared these models in terms of generalization error on test data. The results showed that LiFe-net trained with time stability loss outperforms the other two models and can estimate the temperature evolution on unseen data with a relative error of 2.77 % on average.
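The combination of a learned rate model with a classical integrator can be pictured with the minimal sketch below. The abstract does not say which integration scheme is used, so explicit Euler is an assumption here, and `toy_rate` (Newton cooling) is only a hypothetical stand-in for a trained network; all names and constants are illustrative.

```python
import math


def integrate_temperature(rate_fn, initial_temp, inputs, dt):
    """Explicit Euler rollout: T[k+1] = T[k] + dt * rate_fn(T[k], u[k])."""
    temps = [initial_temp]
    for u in inputs:
        temps.append(temps[-1] + dt * rate_fn(temps[-1], u))
    return temps


def mean_relative_error(predicted, reference):
    """Average of |p - r| / |r| over all time steps."""
    return sum(abs(p - r) / abs(r) for p, r in zip(predicted, reference)) / len(reference)


def toy_rate(temp, ambient, k=0.1):
    """Hypothetical stand-in for a learned model: Newton cooling toward ambient."""
    return -k * (temp - ambient)


dt, steps = 0.1, 100
ambient = [25.0] * steps  # driving input u[k], held constant for this toy case
pred = integrate_temperature(toy_rate, 40.0, ambient, dt)

# Closed-form solution of dT/dt = -k (T - 25) with T(0) = 40, for comparison.
exact = [25.0 + 15.0 * math.exp(-0.1 * dt * i) for i in range(steps + 1)]
err = mean_relative_error(pred, exact)
```

Replacing `toy_rate` with a trained model leaves the rollout loop unchanged, which is the appeal of keeping the integrator separate from the learned component.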

Implicit Neural Convolutional Kernels for Steerable CNNs

Dec 12, 2022

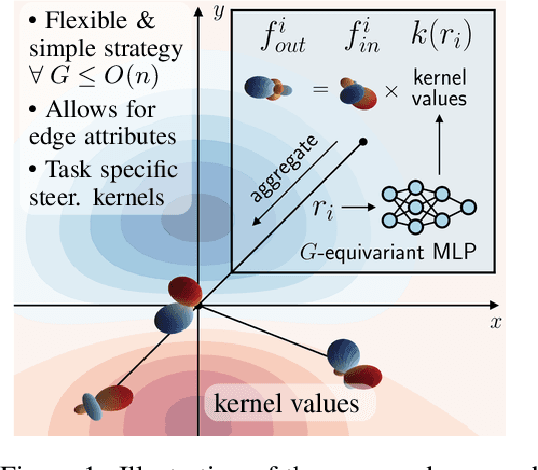

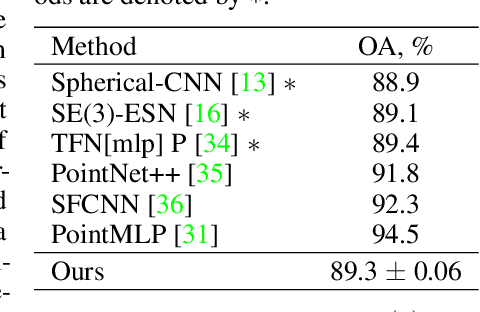

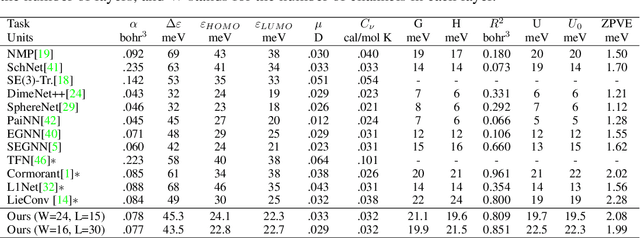

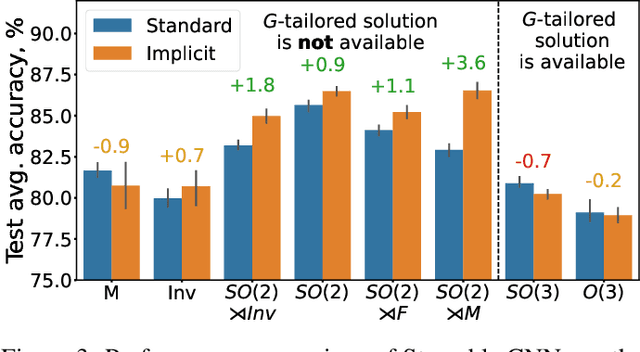

Abstract: Steerable convolutional neural networks (CNNs) provide a general framework for building neural networks equivariant to translations and other transformations belonging to an origin-preserving group $G$, such as reflections and rotations. They rely on standard convolutions with $G$-steerable kernels obtained by analytically solving the group-specific equivariance constraint imposed onto the kernel space. As the solution is tailored to a particular group $G$, the implementation of a kernel basis does not generalize to other symmetry transformations, which complicates the development of group equivariant models. We propose using implicit neural representation via multi-layer perceptrons (MLPs) to parameterize $G$-steerable kernels. The resulting framework offers a simple and flexible way to implement Steerable CNNs and generalizes to any group $G$ for which a $G$-equivariant MLP can be built. We apply our method to point cloud (ModelNet-40) and molecular data (QM9) and demonstrate a significant improvement in performance compared to standard Steerable CNNs.

Acceptance Rates of Invertible Neural Networks on Electron Spectra from Near-Critical Laser-Plasmas: A Comparison

Dec 12, 2022

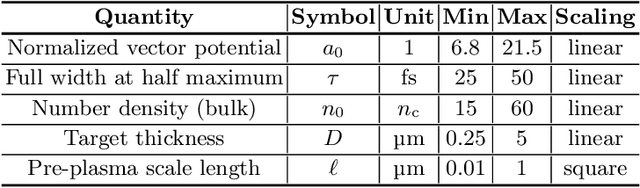

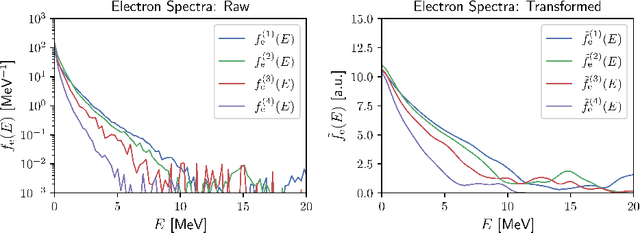

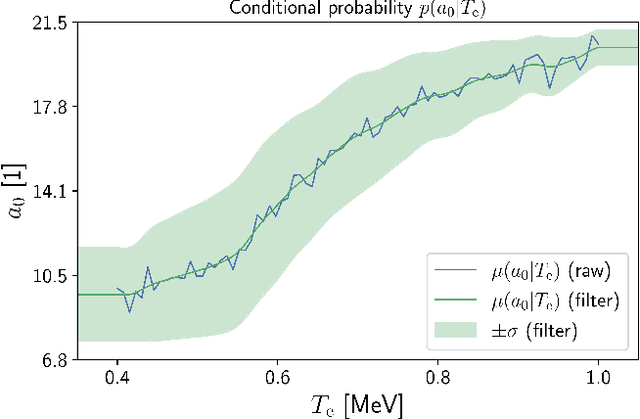

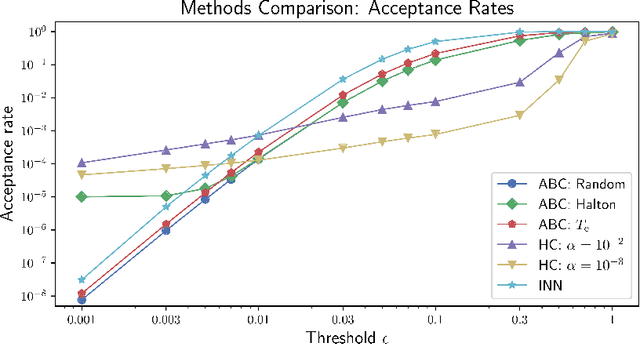

Abstract: While the interaction of ultra-intense ultra-short laser pulses with near- and overcritical plasmas cannot be directly observed, experimentally accessible quantities (observables) often only indirectly give information about the underlying plasma dynamics. Furthermore, the information provided by observables is incomplete, making the inverse problem highly ambiguous. Therefore, in order to infer plasma dynamics as well as experimental parameters, the full distribution over parameters given an observation needs to be considered, requiring that models are flexible and account for the information lost in the forward process. Invertible Neural Networks (INNs) have been designed to efficiently model both the forward and inverse process, providing the full conditional posterior given a specific measurement. In this work, we benchmark INNs and standard statistical methods on synthetic electron spectra. First, we provide experimental results with respect to the acceptance rate, where our results show increases in acceptance rates up to a factor of 10. Additionally, we show that this increased acceptance rate also results in an increased speed-up for INNs to the same extent. Lastly, we propose a composite algorithm that utilizes INNs and promises low runtimes while preserving high accuracy.
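To make the notion of an acceptance rate concrete, the sketch below runs a plain rejection sampler on a toy inverse problem. It assumes that "acceptance rate" means the fraction of proposed parameters whose simulated observable lands within a tolerance of the measurement; the abstract does not spell out its exact definition. The quadratic forward model, the uniform prior, and the function name `rejection_sample` are hypothetical and chosen only because they make the inverse problem ambiguous.

```python
import numpy as np


def rejection_sample(observed, simulate, prior_sample, tolerance, n_proposals, rng):
    """Keep proposals whose simulated observable lies within `tolerance` of `observed`."""
    proposals = prior_sample(n_proposals, rng)
    simulated = simulate(proposals, rng)
    accepted = proposals[np.abs(simulated - observed) <= tolerance]
    # Acceptance rate: accepted proposals divided by all proposals.
    return accepted, accepted.size / n_proposals


def prior(n, rng):
    # Hypothetical uniform prior over a single parameter.
    return rng.uniform(-5.0, 5.0, size=n)


def forward(theta, rng):
    # Toy forward process: squaring loses the sign, so +2 and -2 both explain 4.
    return theta**2 + rng.normal(0.0, 0.1, size=theta.shape)


rng = np.random.default_rng(0)
accepted, rate = rejection_sample(4.0, forward, prior, 0.5, 10_000, rng)
```

The accepted samples cluster around both +2 and -2, a small-scale picture of the ambiguity the abstract describes. A proposal distribution concentrated near those two modes would waste fewer simulations than the broad prior used here, which is the sense in which a better conditional model can raise the acceptance rate.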

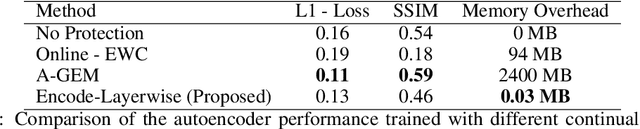

Continual learning autoencoder training for a particle-in-cell simulation via streaming

Nov 09, 2022

Abstract: The upcoming exascale era will provide a new generation of physics simulations. These simulations will have a high spatiotemporal resolution, which will impact the training of machine learning models since storing large amounts of simulation data on disk is nearly impossible. Therefore, we need to rethink the training of machine learning models for simulations in the upcoming exascale era. This work presents an approach that trains a neural network concurrently with a running simulation without storing data on disk. The training pipeline accesses the training data by in-memory streaming. Furthermore, we apply methods from the domain of continual learning to enhance the generalization of the model. We tested our pipeline on the training of a 3D autoencoder trained concurrently with a laser wakefield acceleration particle-in-cell simulation. Furthermore, we experimented with various continual learning methods and their effect on the generalization.

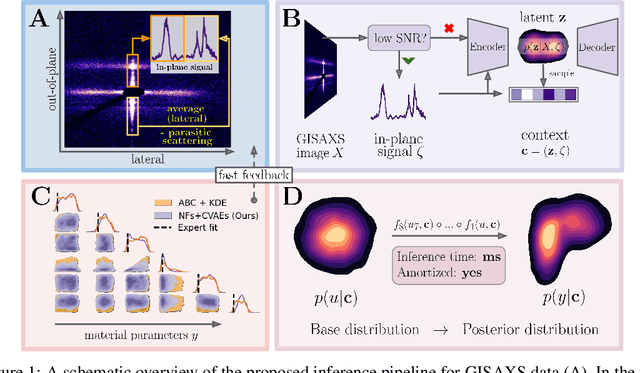

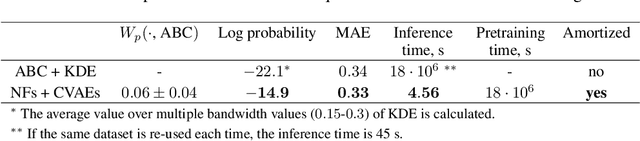

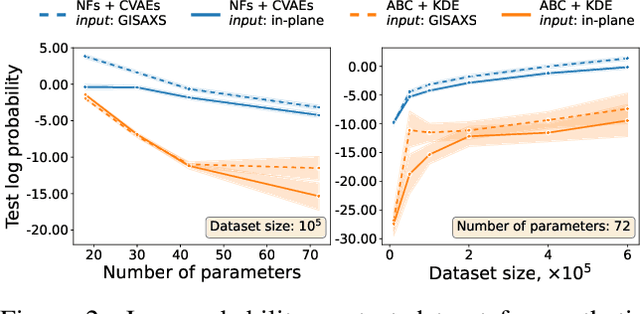

Amortized Bayesian Inference of GISAXS Data with Normalizing Flows

Oct 04, 2022

Abstract: Grazing-Incidence Small-Angle X-ray Scattering (GISAXS) is a modern imaging technique used in material research to study nanoscale materials. Reconstruction of the parameters of an imaged object imposes an ill-posed inverse problem that is further complicated when only an in-plane GISAXS signal is available. Traditionally used inference algorithms such as Approximate Bayesian Computation (ABC) rely on computationally expensive scattering simulation software, rendering analysis highly time-consuming. We propose a simulation-based framework that combines variational auto-encoders and normalizing flows to estimate the posterior distribution of object parameters given its GISAXS data. We apply the inference pipeline to experimental data and demonstrate that our method reduces the inference cost by orders of magnitude while producing consistent results with ABC.

Learning Generative Factors of Neuroimaging Data with Variational auto-encoders

Jun 04, 2022

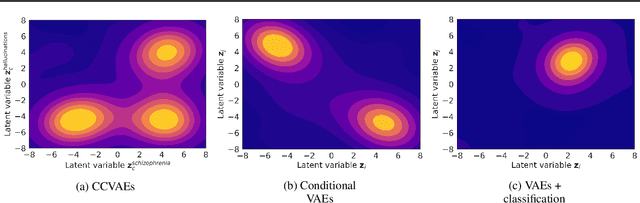

Abstract: Neuroimaging techniques produce high-dimensional, stochastic data from which it might be challenging to extract high-level knowledge about the phenomena of interest. We address this challenge by applying the framework of generative modelling to 1) classify multiple pathologies, 2) recover neurological mechanisms of those pathologies in a data-driven manner and 3) learn robust representations of neuroimaging data. We illustrate the applicability of the proposed approach to identifying schizophrenia, either followed or not by auditory verbal hallucinations. We further demonstrate the ability of the framework to learn disease-related mechanisms that are consistent with current domain knowledge. We also compare the proposed framework with several benchmark approaches and indicate its advantages.

Investigating Brain Connectivity with Graph Neural Networks and GNNExplainer

Jun 04, 2022

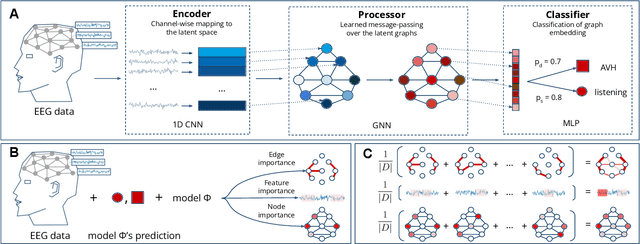

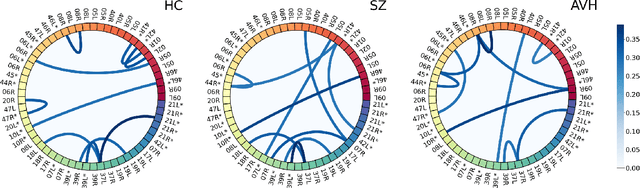

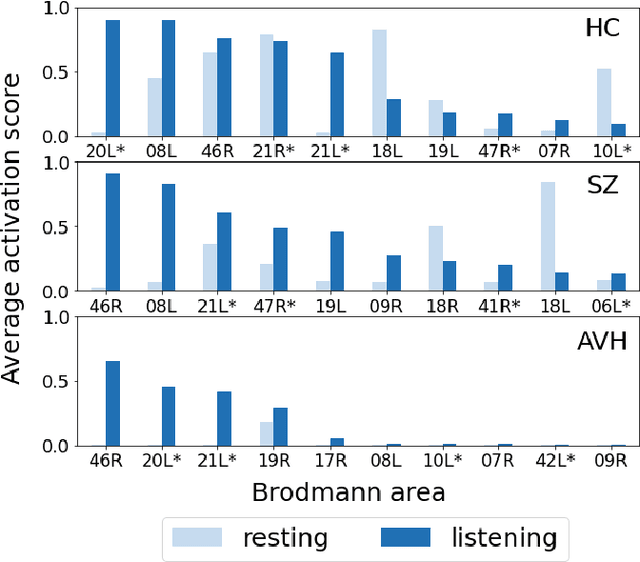

Abstract: Functional connectivity plays an essential role in modern neuroscience. The modality sheds light on the brain's functional and structural aspects, including mechanisms behind multiple pathologies. One such pathology is schizophrenia, which is often followed by auditory verbal hallucinations. The latter is commonly studied by observing functional connectivity during speech processing. In this work, we have made a step toward an in-depth examination of functional connectivity during a dichotic listening task via deep learning for three groups of people: schizophrenia patients with and without auditory verbal hallucinations and healthy controls. We propose a graph neural network-based framework within which we represent EEG data as signals in the graph domain. The framework allows one to 1) predict a brain mental disorder based on EEG recording, 2) differentiate the listening state from the resting state for each group and 3) recognize characteristic task-dependent connectivity. Experimental results show that the proposed model can differentiate between the above groups with state-of-the-art performance. In addition, it provides a researcher with meaningful information regarding each group's functional connectivity, which we validated against current domain knowledge.

Global Attention Mechanism: Retain Information to Enhance Channel-Spatial Interactions

Dec 10, 2021

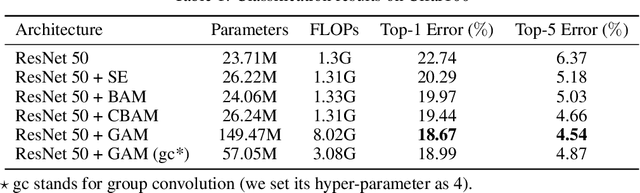

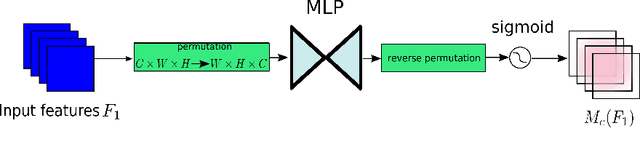

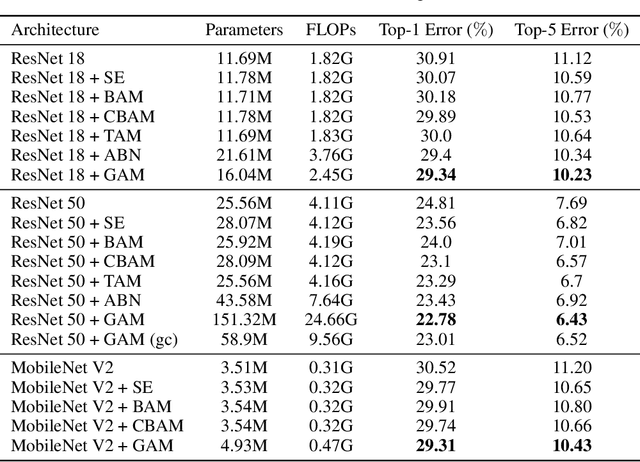

Abstract: A variety of attention mechanisms have been studied to improve the performance of various computer vision tasks. However, the prior methods overlooked the significance of retaining the information on both channel and spatial aspects to enhance the cross-dimension interactions. Therefore, we propose a global attention mechanism that boosts the performance of deep neural networks by reducing information loss and magnifying the global interactive representations. We introduce 3D-permutation with multilayer-perceptron for channel attention alongside a convolutional spatial attention submodule. The evaluation of the proposed mechanism for the image classification task on CIFAR-100 and ImageNet-1K indicates that our method stably outperforms several recent attention mechanisms with both ResNet and lightweight MobileNet.
