Richard Pausch

The Artificial Scientist -- in-transit Machine Learning of Plasma Simulations

Jan 06, 2025

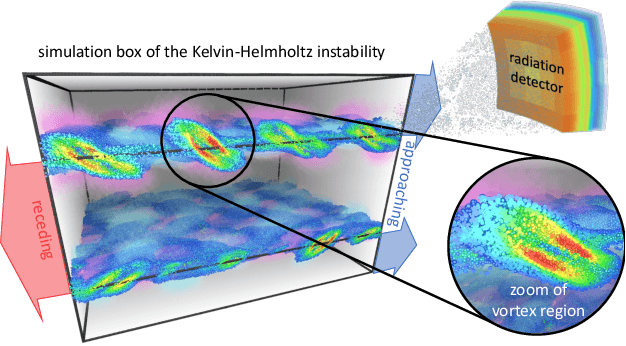

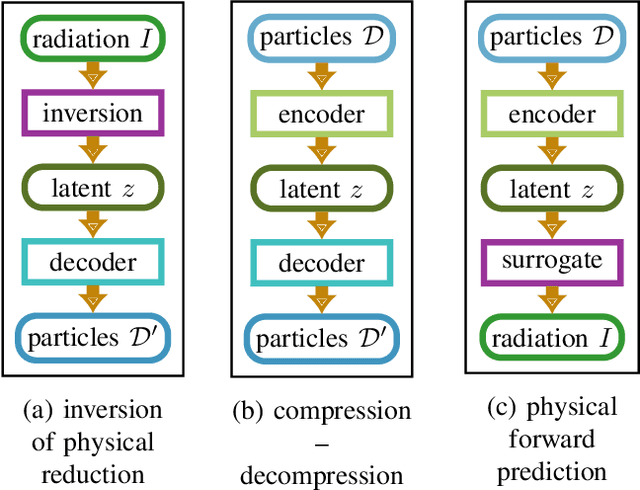

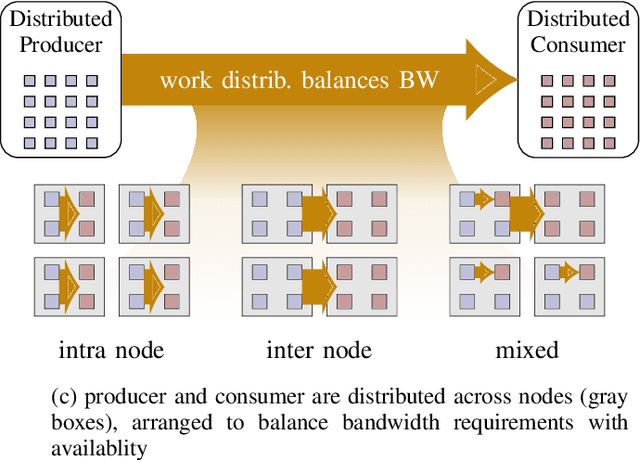

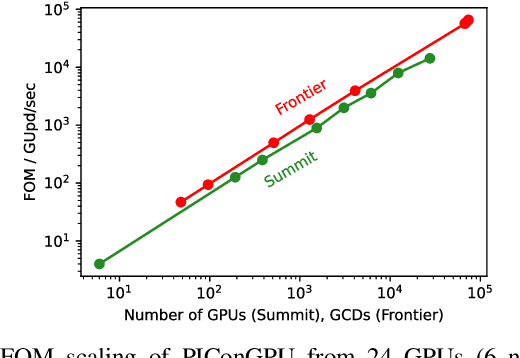

Abstract:Increasing HPC cluster sizes and large-scale simulations that produce petabytes of data per run, create massive IO and storage challenges for analysis. Deep learning-based techniques, in particular, make use of these amounts of domain data to extract patterns that help build scientific understanding. Here, we demonstrate a streaming workflow in which simulation data is streamed directly to a machine-learning (ML) framework, circumventing the file system bottleneck. Data is transformed in transit, asynchronously to the simulation and the training of the model. With the presented workflow, data operations can be performed in common and easy-to-use programming languages, freeing the application user from adapting the application output routines. As a proof-of-concept we consider a GPU accelerated particle-in-cell (PIConGPU) simulation of the Kelvin- Helmholtz instability (KHI). We employ experience replay to avoid catastrophic forgetting in learning from this non-steady process in a continual manner. We detail challenges addressed while porting and scaling to Frontier exascale system.

Learning Electron Bunch Distribution along a FEL Beamline by Normalising Flows

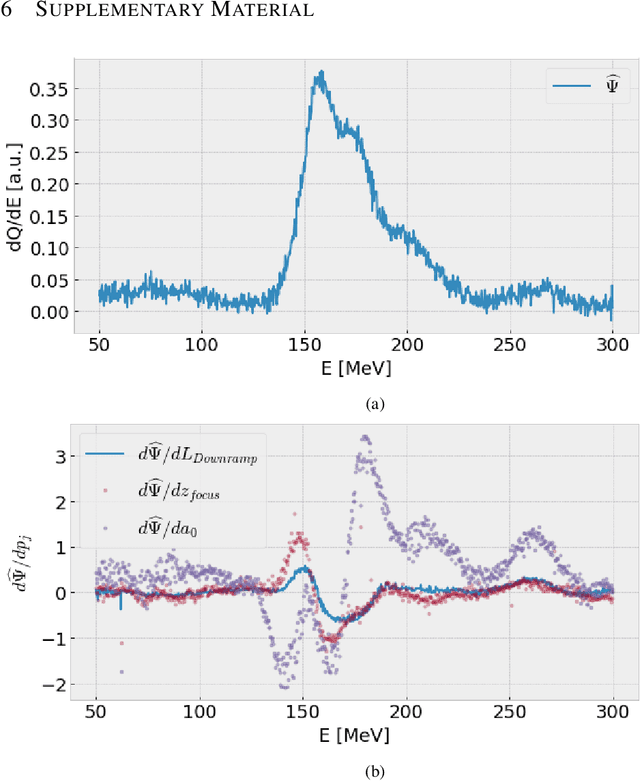

Feb 27, 2023Abstract:Understanding and control of Laser-driven Free Electron Lasers remain to be difficult problems that require highly intensive experimental and theoretical research. The gap between simulated and experimentally collected data might complicate studies and interpretation of obtained results. In this work we developed a deep learning based surrogate that could help to fill in this gap. We introduce a surrogate model based on normalising flows for conditional phase-space representation of electron clouds in a FEL beamline. Achieved results let us discuss further benefits and limitations in exploitability of the models to gain deeper understanding of fundamental processes within a beamline.

* 7 pages, 5 figures

Continual learning autoencoder training for a particle-in-cell simulation via streaming

Nov 09, 2022

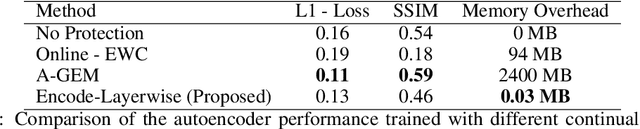

Abstract:The upcoming exascale era will provide a new generation of physics simulations. These simulations will have a high spatiotemporal resolution, which will impact the training of machine learning models since storing a high amount of simulation data on disk is nearly impossible. Therefore, we need to rethink the training of machine learning models for simulations for the upcoming exascale era. This work presents an approach that trains a neural network concurrently to a running simulation without storing data on a disk. The training pipeline accesses the training data by in-memory streaming. Furthermore, we apply methods from the domain of continual learning to enhance the generalization of the model. We tested our pipeline on the training of a 3d autoencoder trained concurrently to laser wakefield acceleration particle-in-cell simulation. Furthermore, we experimented with various continual learning methods and their effect on the generalization.

Invertible Surrogate Models: Joint surrogate modelling and reconstruction of Laser-Wakefield Acceleration by invertible neural networks

Jun 01, 2021

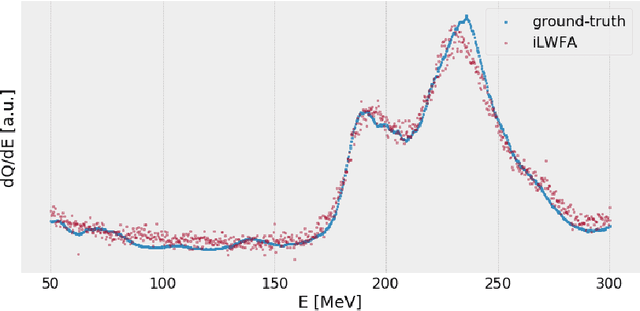

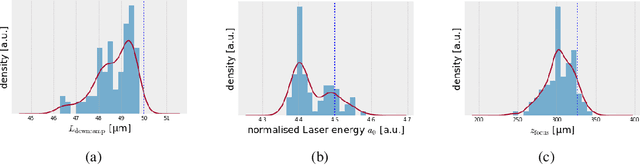

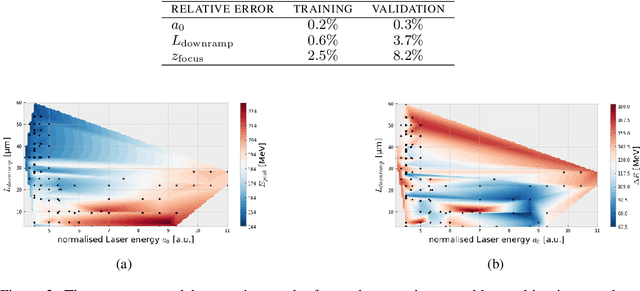

Abstract:Invertible neural networks are a recent technique in machine learning promising neural network architectures that can be run in forward and reverse mode. In this paper, we will be introducing invertible surrogate models that approximate complex forward simulation of the physics involved in laser plasma accelerators: iLWFA. The bijective design of the surrogate model also provides all means for reconstruction of experimentally acquired diagnostics. The quality of our invertible laser wakefield acceleration network will be verified on a large set of numerical LWFA simulations.

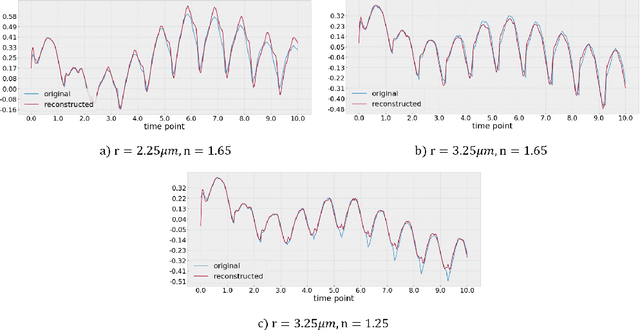

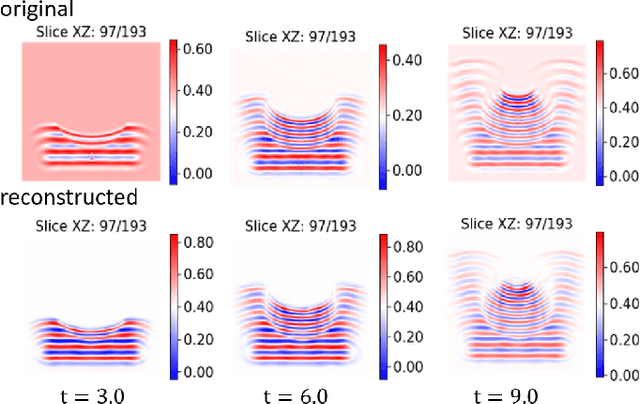

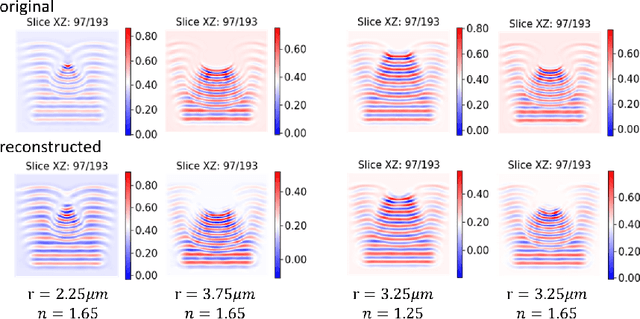

Data-Driven Shadowgraph Simulation of a 3D Object

Jun 01, 2021

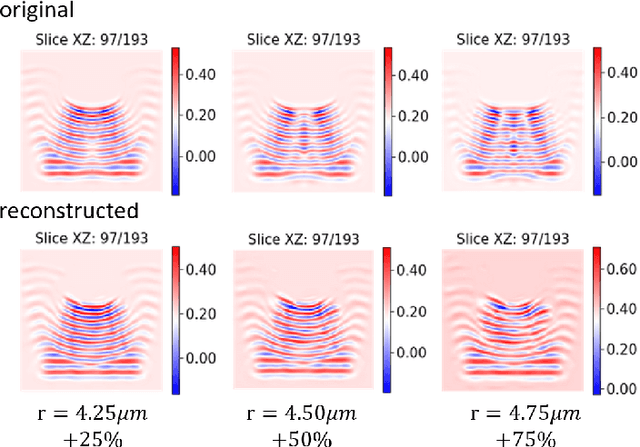

Abstract:In this work we propose a deep neural network based surrogate model for a plasma shadowgraph - a technique for visualization of perturbations in a transparent medium. We are substituting the numerical code by a computationally cheaper projection based surrogate model that is able to approximate the electric fields at a given time without computing all preceding electric fields as required by numerical methods. This means that the projection based surrogate model allows to recover the solution of the governing 3D partial differential equation, 3D wave equation, at any point of a given compute domain and configuration without the need to run a full simulation. This model has shown a good quality of reconstruction in a problem of interpolation of data within a narrow range of simulation parameters and can be used for input data of large size.

Large-scale Neural Solvers for Partial Differential Equations

Sep 08, 2020

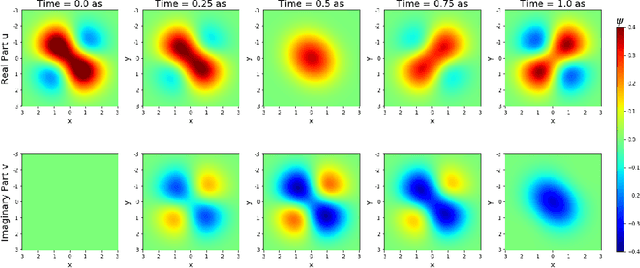

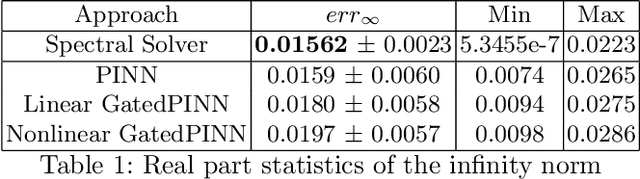

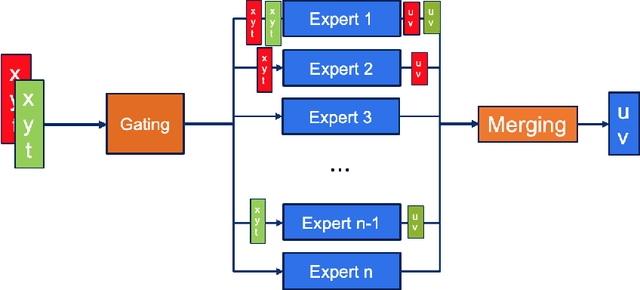

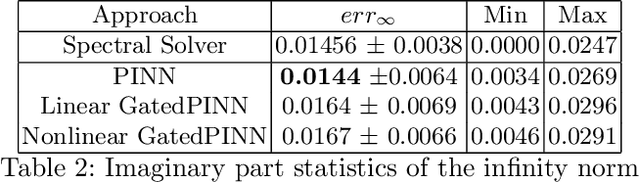

Abstract:Solving partial differential equations (PDE) is an indispensable part of many branches of science as many processes can be modelled in terms of PDEs. However, recent numerical solvers require manual discretization of the underlying equation as well as sophisticated, tailored code for distributed computing. Scanning the parameters of the underlying model significantly increases the runtime as the simulations have to be cold-started for each parameter configuration. Machine Learning based surrogate models denote promising ways for learning complex relationship among input, parameter and solution. However, recent generative neural networks require lots of training data, i.e. full simulation runs making them costly. In contrast, we examine the applicability of continuous, mesh-free neural solvers for partial differential equations, physics-informed neural networks (PINNs) solely requiring initial/boundary values and validation points for training but no simulation data. The induced curse of dimensionality is approached by learning a domain decomposition that steers the number of neurons per unit volume and significantly improves runtime. Distributed training on large-scale cluster systems also promises great utilization of large quantities of GPUs which we assess by a comprehensive evaluation study. Finally, we discuss the accuracy of GatedPINN with respect to analytical solutions -- as well as state-of-the-art numerical solvers, such as spectral solvers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge