Narjes Torabi

Jack

The Llama 3 Herd of Models

Jul 31, 2024Abstract:Modern artificial intelligence (AI) systems are powered by foundation models. This paper presents a new set of foundation models, called Llama 3. It is a herd of language models that natively support multilinguality, coding, reasoning, and tool usage. Our largest model is a dense Transformer with 405B parameters and a context window of up to 128K tokens. This paper presents an extensive empirical evaluation of Llama 3. We find that Llama 3 delivers comparable quality to leading language models such as GPT-4 on a plethora of tasks. We publicly release Llama 3, including pre-trained and post-trained versions of the 405B parameter language model and our Llama Guard 3 model for input and output safety. The paper also presents the results of experiments in which we integrate image, video, and speech capabilities into Llama 3 via a compositional approach. We observe this approach performs competitively with the state-of-the-art on image, video, and speech recognition tasks. The resulting models are not yet being broadly released as they are still under development.

PPL Bench: Evaluation Framework For Probabilistic Programming Languages

Oct 17, 2020

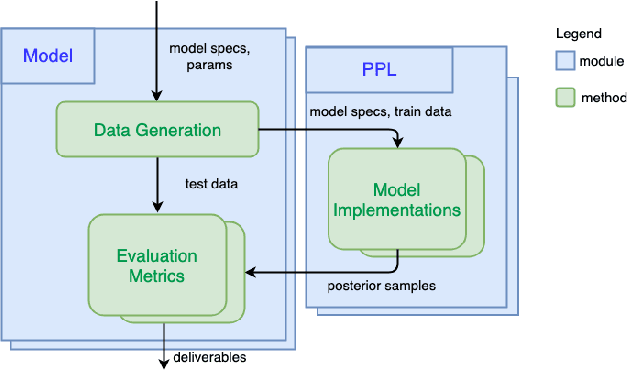

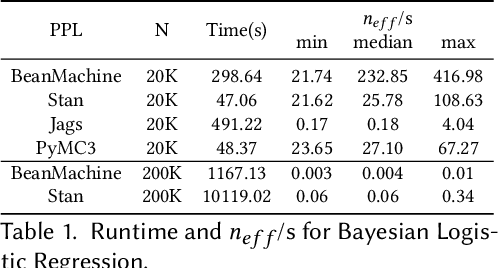

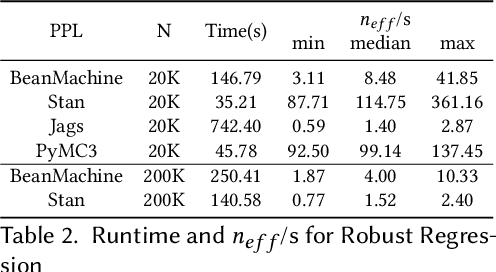

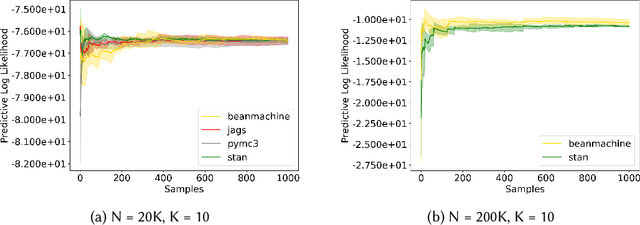

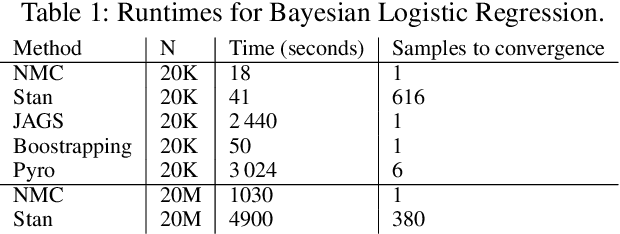

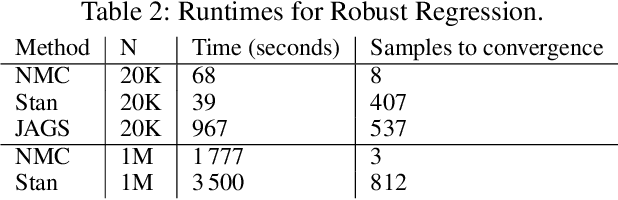

Abstract:We introduce PPL Bench, a new benchmark for evaluating Probabilistic Programming Languages (PPLs) on a variety of statistical models. The benchmark includes data generation and evaluation code for a number of models as well as implementations in some common PPLs. All of the benchmark code and PPL implementations are available on Github. We welcome contributions of new models and PPLs and as well as improvements in existing PPL implementations. The purpose of the benchmark is two-fold. First, we want researchers as well as conference reviewers to be able to evaluate improvements in PPLs in a standardized setting. Second, we want end users to be able to pick the PPL that is most suited for their modeling application. In particular, we are interested in evaluating the accuracy and speed of convergence of the inferred posterior. Each PPL only needs to provide posterior samples given a model and observation data. The framework automatically computes and plots growth in predictive log-likelihood on held out data in addition to reporting other common metrics such as effective sample size and $\hat{r}$.

Uncertainty Estimation For Community Standards Violation In Online Social Networks

Sep 30, 2020

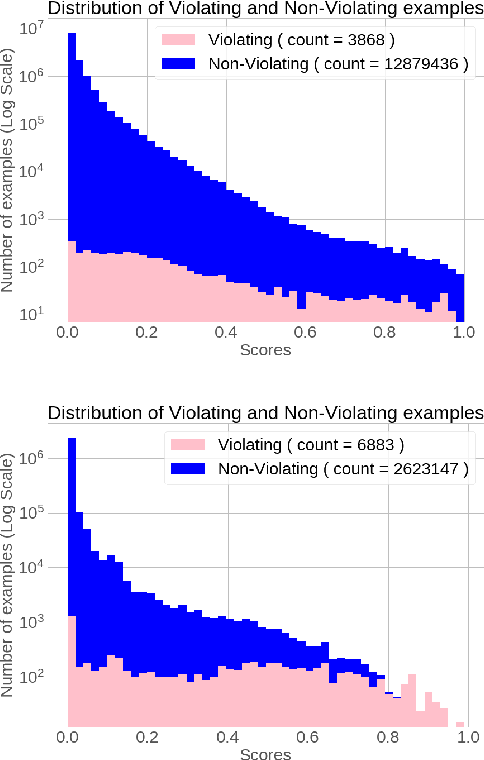

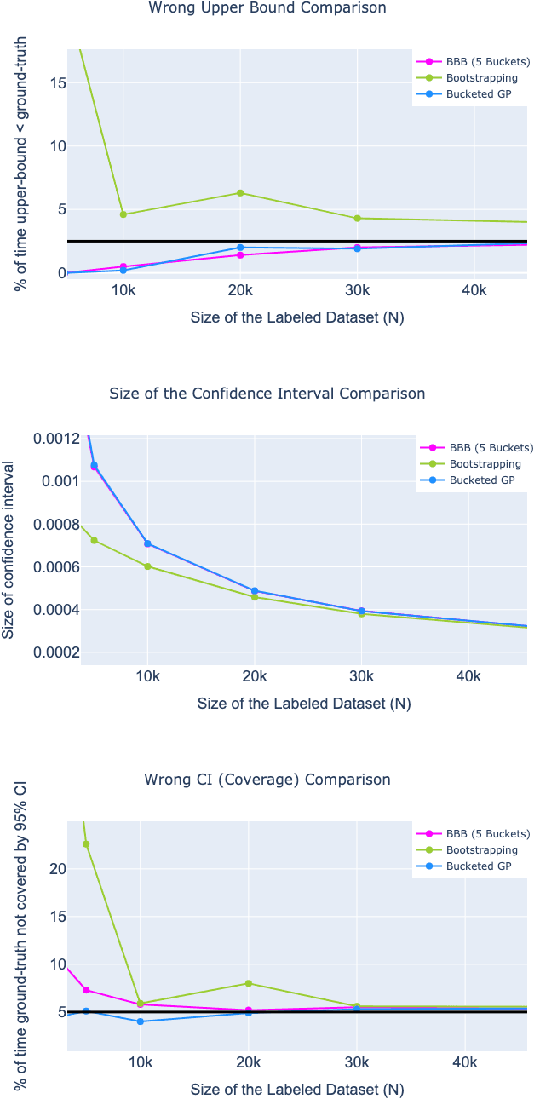

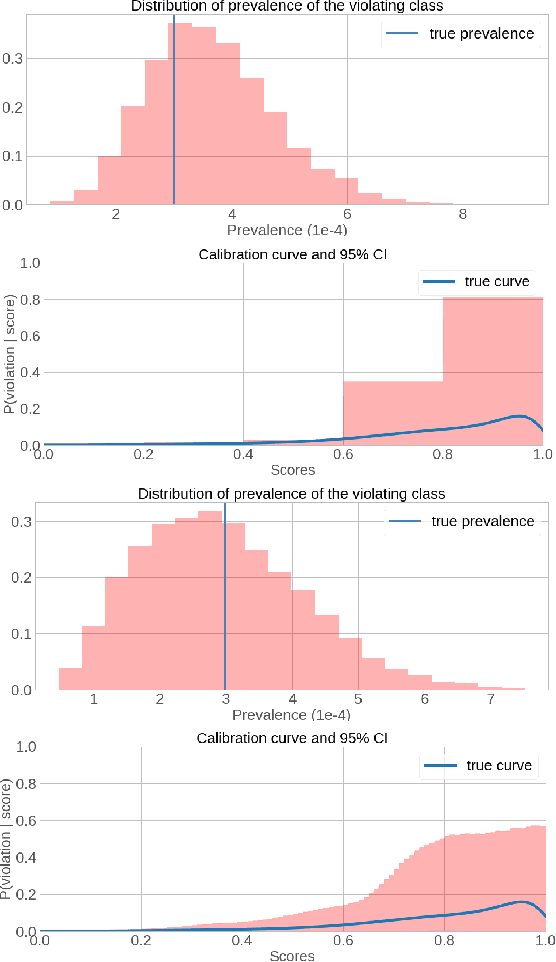

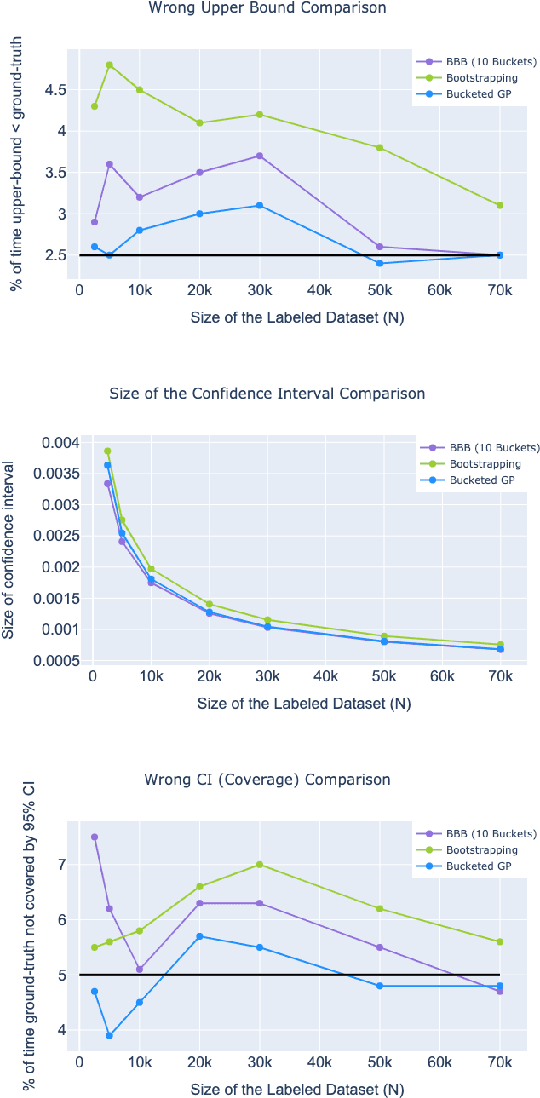

Abstract:Online Social Networks (OSNs) provide a platform for users to share their thoughts and opinions with their community of friends or to the general public. In order to keep the platform safe for all users, as well as to keep it compliant with local laws, OSNs typically create a set of community standards organized into policy groups, and use Machine Learning (ML) models to identify and remove content that violates any of the policies. However, out of the billions of content that is uploaded on a daily basis only a small fraction is so unambiguously violating that it can be removed by the automated models. Prevalence estimation is the task of estimating the fraction of violating content in the residual items by sending a small sample of these items to human labelers to get ground truth labels. This task is exceedingly hard because even though we can easily get the ML scores or features for all of the billions of items we can only get ground truth labels on a few thousands of these items due to practical considerations. Indeed the prevalence can be so low that even after a judicious choice of items to be labeled there can be many days in which not even a single item is labeled violating. A pragmatic choice for such low prevalence, $10^{-4}$ to $10^{-5}$, regimes is to report the upper bound, or $97.5\%$ confidence interval, prevalence (UBP) that takes the uncertainties of the sampling and labeling processes into account and gives a smoothed estimate. In this work we present two novel techniques Bucketed-Beta-Binomial and a Bucketed-Gaussian Process for this UBP task and demonstrate on real and simulated data that it has much better coverage than the commonly used bootstrapping technique.

Newtonian Monte Carlo: single-site MCMC meets second-order gradient methods

Jan 15, 2020

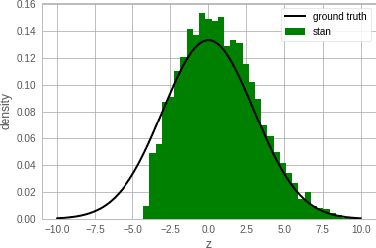

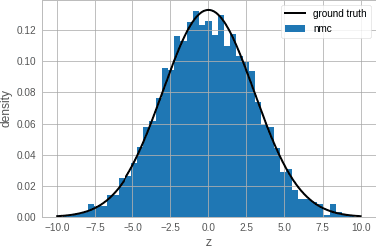

Abstract:Single-site Markov Chain Monte Carlo (MCMC) is a variant of MCMC in which a single coordinate in the state space is modified in each step. Structured relational models are a good candidate for this style of inference. In the single-site context, second order methods become feasible because the typical cubic costs associated with these methods is now restricted to the dimension of each coordinate. Our work, which we call Newtonian Monte Carlo (NMC), is a method to improve MCMC convergence by analyzing the first and second order gradients of the target density to determine a suitable proposal density at each point. Existing first order gradient-based methods suffer from the problem of determining an appropriate step size. Too small a step size and it will take a large number of steps to converge, while a very large step size will cause it to overshoot the high density region. NMC is similar to the Newton-Raphson update in optimization where the second order gradient is used to automatically scale the step size in each dimension. However, our objective is to find a parameterized proposal density rather than the maxima. As a further improvement on existing first and second order methods, we show that random variables with constrained supports don't need to be transformed before taking a gradient step. We demonstrate the efficiency of NMC on a number of different domains. For statistical models where the prior is conjugate to the likelihood, our method recovers the posterior quite trivially in one step. However, we also show results on fairly large non-conjugate models, where NMC performs better than adaptive first order methods such as NUTS or other inexact scalable inference methods such as Stochastic Variational Inference or bootstrapping.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge