Nalini Singh

Computationally Efficient Information-Driven Optical Design with Interchanging Optimization

Jul 10, 2025Abstract:Recent work has demonstrated that imaging systems can be evaluated through the information content of their measurements alone, enabling application-agnostic optical design that avoids computational decoding challenges. Information-Driven Encoder Analysis Learning (IDEAL) was proposed to automate this process through gradient-based. In this work, we study IDEAL across diverse imaging systems and find that it suffers from high memory usage, long runtimes, and a potentially mismatched objective function due to end-to-end differentiability requirements. We introduce IDEAL with Interchanging Optimization (IDEAL-IO), a method that decouples density estimation from optical parameter optimization by alternating between fitting models to current measurements and updating optical parameters using fixed models for information estimation. This approach reduces runtime and memory usage by up to 6x while enabling more expressive density models that guide optimization toward superior designs. We validate our method on diffractive optics, lensless imaging, and snapshot 3D microscopy applications, establishing information-theoretic optimization as a practical, scalable strategy for real-world imaging system design.

Deep-ER: Deep Learning ECCENTRIC Reconstruction for fast high-resolution neurometabolic imaging

Sep 26, 2024

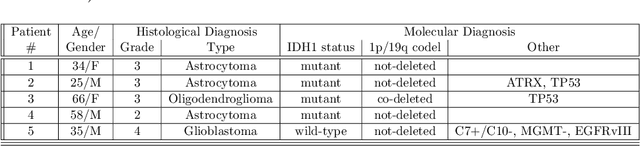

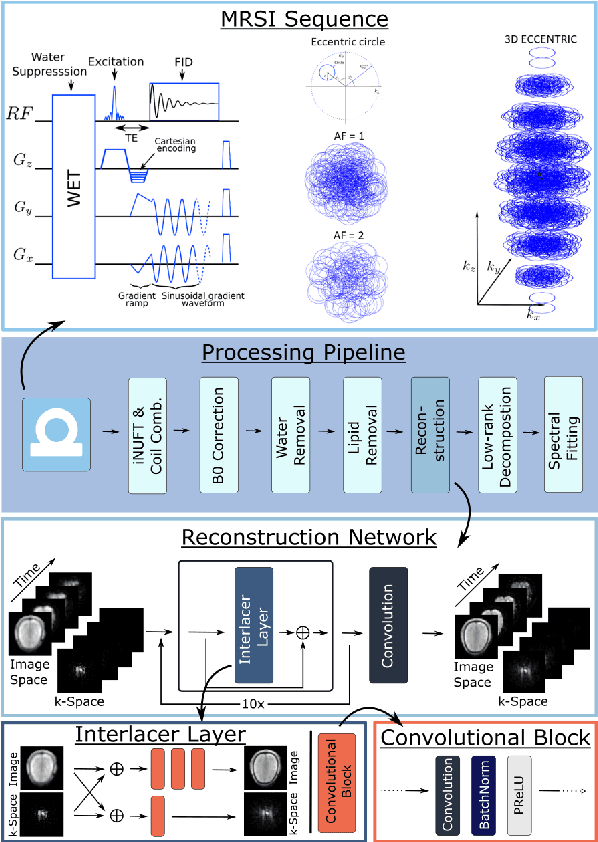

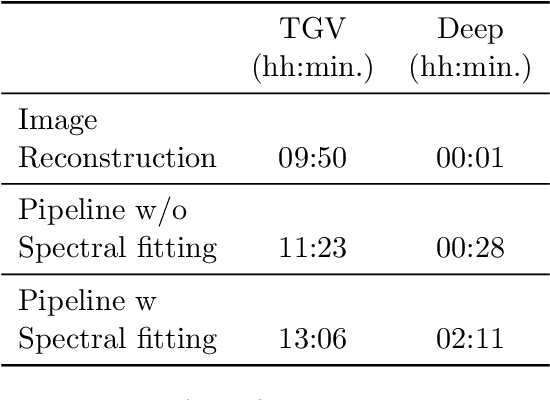

Abstract:Introduction: Altered neurometabolism is an important pathological mechanism in many neurological diseases and brain cancer, which can be mapped non-invasively by Magnetic Resonance Spectroscopic Imaging (MRSI). Advanced MRSI using non-cartesian compressed-sense acquisition enables fast high-resolution metabolic imaging but has lengthy reconstruction times that limits throughput and needs expert user interaction. Here, we present a robust and efficient Deep Learning reconstruction to obtain high-quality metabolic maps. Methods: Fast high-resolution whole-brain metabolic imaging was performed at 3.4 mm$^3$ isotropic resolution with acquisition times between 4:11-9:21 min:s using ECCENTRIC pulse sequence on a 7T MRI scanner. Data were acquired in a high-resolution phantom and 27 human participants, including 22 healthy volunteers and 5 glioma patients. A deep neural network using recurring interlaced convolutional layers with joint dual-space feature representation was developed for deep learning ECCENTRIC reconstruction (Deep-ER). 21 subjects were used for training and 6 subjects for testing. Deep-ER performance was compared to conventional iterative Total Generalized Variation reconstruction using image and spectral quality metrics. Results: Deep-ER demonstrated 600-fold faster reconstruction than conventional methods, providing improved spatial-spectral quality and metabolite quantification with 12%-45% (P<0.05) higher signal-to-noise and 8%-50% (P<0.05) smaller Cramer-Rao lower bounds. Metabolic images clearly visualize glioma tumor heterogeneity and boundary. Conclusion: Deep-ER provides efficient and robust reconstruction for sparse-sampled MRSI. The accelerated acquisition-reconstruction MRSI is compatible with high-throughput imaging workflow. It is expected that such improved performance will facilitate basic and clinical MRSI applications.

Every Answer Matters: Evaluating Commonsense with Probabilistic Measures

Jun 06, 2024

Abstract:Large language models have demonstrated impressive performance on commonsense tasks; however, these tasks are often posed as multiple-choice questions, allowing models to exploit systematic biases. Commonsense is also inherently probabilistic with multiple correct answers. The purpose of "boiling water" could be making tea and cooking, but it also could be killing germs. Existing tasks do not capture the probabilistic nature of common sense. To this end, we present commonsense frame completion (CFC), a new generative task that evaluates common sense via multiple open-ended generations. We also propose a method of probabilistic evaluation that strongly correlates with human judgments. Humans drastically outperform strong language model baselines on our dataset, indicating this approach is both a challenging and useful evaluation of machine common sense.

UncertaINR: Uncertainty Quantification of End-to-End Implicit Neural Representations for Computed Tomography

Feb 22, 2022

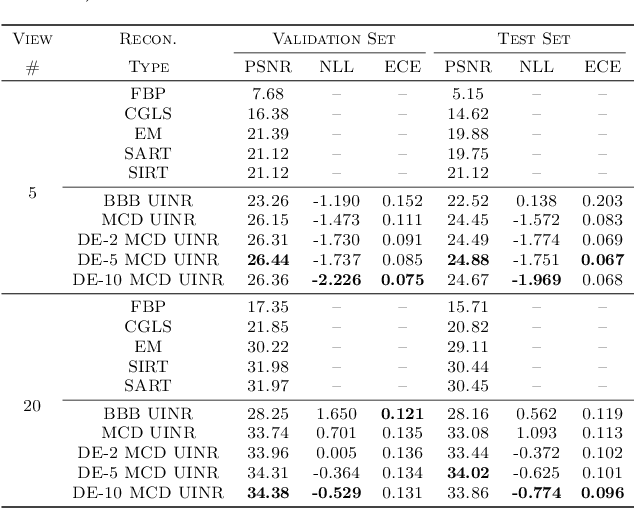

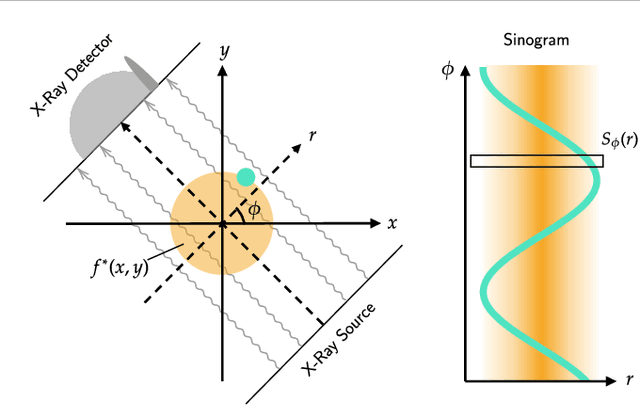

Abstract:Implicit neural representations (INRs) have achieved impressive results for scene reconstruction and computer graphics, where their performance has primarily been assessed on reconstruction accuracy. However, in medical imaging, where the reconstruction problem is underdetermined and model predictions inform high-stakes diagnoses, uncertainty quantification of INR inference is critical. To that end, we study UncertaINR: a Bayesian reformulation of INR-based image reconstruction, for computed tomography (CT). We test several Bayesian deep learning implementations of UncertaINR and find that they achieve well-calibrated uncertainty, while retaining accuracy competitive with other classical, INR-based, and CNN-based reconstruction techniques. In contrast to the best-performing prior approaches, UncertaINR does not require a large training dataset, but only a handful of validation images.

A deep learning system for differential diagnosis of skin diseases

Sep 11, 2019

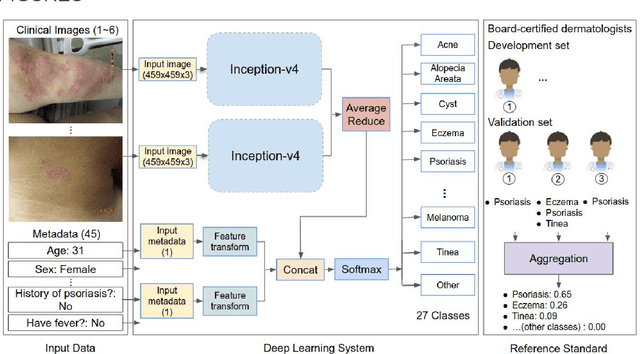

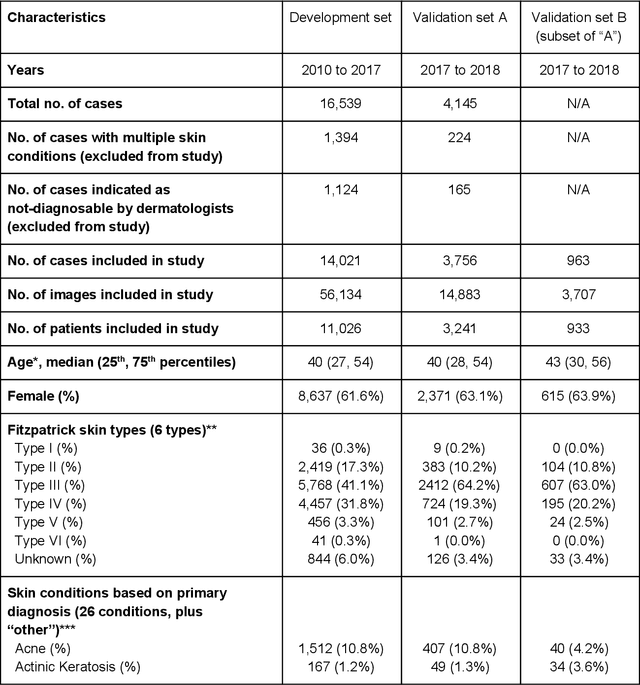

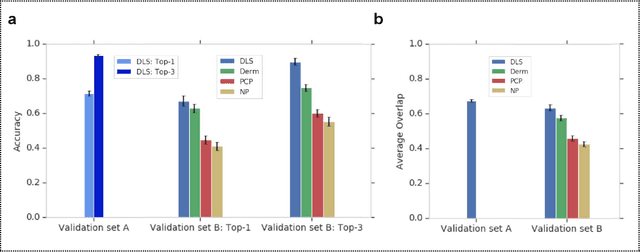

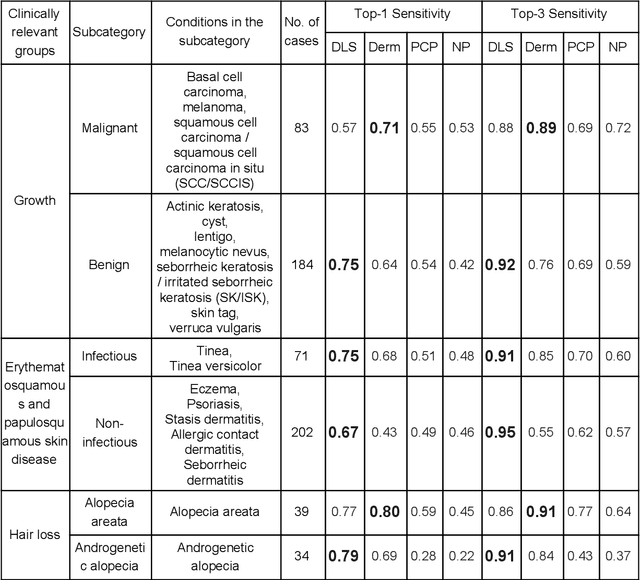

Abstract:Skin conditions affect an estimated 1.9 billion people worldwide. A shortage of dermatologists causes long wait times and leads patients to seek dermatologic care from general practitioners. However, the diagnostic accuracy of general practitioners has been reported to be only 0.24-0.70 (compared to 0.77-0.96 for dermatologists), resulting in referral errors, delays in care, and errors in diagnosis and treatment. In this paper, we developed a deep learning system (DLS) to provide a differential diagnosis of skin conditions for clinical cases (skin photographs and associated medical histories). The DLS distinguishes between 26 skin conditions that represent roughly 80% of the volume of skin conditions seen in primary care. The DLS was developed and validated using de-identified cases from a teledermatology practice serving 17 clinical sites via a temporal split: the first 14,021 cases for development and the last 3,756 cases for validation. On the validation set, where a panel of three board-certified dermatologists defined the reference standard for every case, the DLS achieved 0.71 and 0.93 top-1 and top-3 accuracies respectively. For a random subset of the validation set (n=963 cases), 18 clinicians reviewed the cases for comparison. On this subset, the DLS achieved a 0.67 top-1 accuracy, non-inferior to board-certified dermatologists (0.63, p<0.001), and higher than primary care physicians (PCPs, 0.45) and nurse practitioners (NPs, 0.41). The top-3 accuracy showed a similar trend: 0.90 DLS, 0.75 dermatologists, 0.60 PCPs, and 0.55 NPs. These results highlight the potential of the DLS to augment general practitioners to accurately diagnose skin conditions by suggesting differential diagnoses that may not have been considered. Future work will be needed to prospectively assess the clinical impact of using this tool in actual clinical workflows.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge