Mischa Dohler

Telecom Foundation Models: Applications, Challenges, and Future Trends

Aug 02, 2024Abstract:Telecom networks are becoming increasingly complex, with diversified deployment scenarios, multi-standards, and multi-vendor support. The intricate nature of the telecom network ecosystem presents challenges to effectively manage, operate, and optimize networks. To address these hurdles, Artificial Intelligence (AI) has been widely adopted to solve different tasks in telecom networks. However, these conventional AI models are often designed for specific tasks, rely on extensive and costly-to-collect labeled data that require specialized telecom expertise for development and maintenance. The AI models usually fail to generalize and support diverse deployment scenarios and applications. In contrast, Foundation Models (FMs) show effective generalization capabilities in various domains in language, vision, and decision-making tasks. FMs can be trained on multiple data modalities generated from the telecom ecosystem and leverage specialized domain knowledge. Moreover, FMs can be fine-tuned to solve numerous specialized tasks with minimal task-specific labeled data and, in some instances, are able to leverage context to solve previously unseen problems. At the dawn of 6G, this paper investigates the potential opportunities of using FMs to shape the future of telecom technologies and standards. In particular, the paper outlines a conceptual process for developing Telecom FMs (TFMs) and discusses emerging opportunities for orchestrating specialized TFMs for network configuration, operation, and maintenance. Finally, the paper discusses the limitations and challenges of developing and deploying TFMs.

Empowering the 6G Cellular Architecture with Open RAN

Dec 05, 2023

Abstract:Innovation and standardization in 5G have brought advancements to every facet of the cellular architecture. This ranges from the introduction of new frequency bands and signaling technologies for the radio access network (RAN), to a core network underpinned by micro-services and network function virtualization (NFV). However, like any emerging technology, the pace of real-world deployments does not instantly match the pace of innovation. To address this discrepancy, one of the key aspects under continuous development is the RAN with the aim of making it more open, adaptive, functional, and easy to manage. In this paper, we highlight the transformative potential of embracing novel cellular architectures by transitioning from conventional systems to the progressive principles of Open RAN. This promises to make 6G networks more agile, cost-effective, energy-efficient, and resilient. It opens up a plethora of novel use cases, ranging from ubiquitous support for autonomous devices to cost-effective expansions in regions previously underserved. The principles of Open RAN encompass: (i) a disaggregated architecture with modular and standardized interfaces; (ii) cloudification, programmability and orchestration; and (iii) AI-enabled data-centric closed-loop control and automation. We first discuss the transformative role Open RAN principles have played in the 5G era. Then, we adopt a system-level approach and describe how these Open RAN principles will support 6G RAN and architecture innovation. We qualitatively discuss potential performance gains that Open RAN principles yield for specific 6G use cases. For each principle, we outline the steps that research, development and standardization communities ought to take to make Open RAN principles central to next-generation cellular network designs.

* This paper is part of the IEEE JSAC SI on Open RAN. Please cite as: M. Polese, M. Dohler, F. Dressler, M. Erol-Kantarci, R. Jana, R. Knopp, T. Melodia, "Empowering the 6G Cellular Architecture with Open RAN," in IEEE Journal on Selected Areas in Communications, doi: 10.1109/JSAC.2023.3334610

Task-Oriented Metaverse Design in the 6G Era

Jun 05, 2023

Abstract:As an emerging concept, the Metaverse has the potential to revolutionize the social interaction in the post-pandemic era by establishing a digital world for online education, remote healthcare, immersive business, intelligent transportation, and advanced manufacturing. The goal is ambitious, yet the methodologies and technologies to achieve the full vision of the Metaverse remain unclear. In this paper, we first introduce the three infrastructure pillars that lay the foundation of the Metaverse, i.e., human-computer interfaces, sensing and communication systems, and network architectures. Then, we depict the roadmap towards the Metaverse that consists of four stages with different applications. To support diverse applications in the Metaverse, we put forward a novel design methodology: task-oriented design, and further review the challenges and the potential solutions. In the case study, we develop a prototype to illustrate how to synchronize a real-world device and its digital model in the Metaverse by task-oriented design, where a deep reinforcement learning algorithm is adopted to minimize the required communication throughput by optimizing the sampling and prediction systems subject to a synchronization error constraint.

Energy-Efficient Cellular-Connected UAV Swarm Control Optimization

Mar 18, 2023

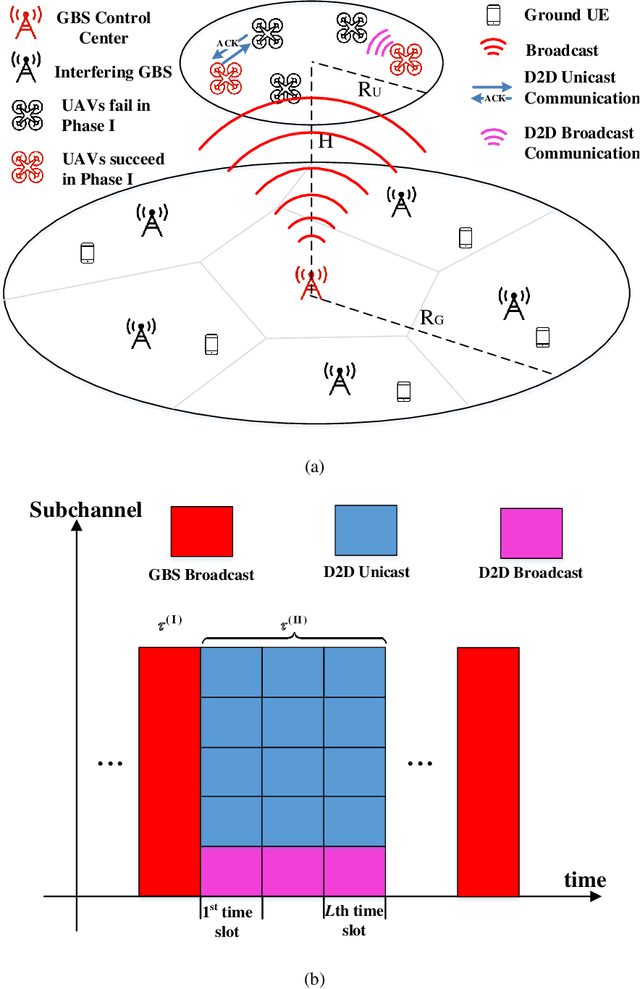

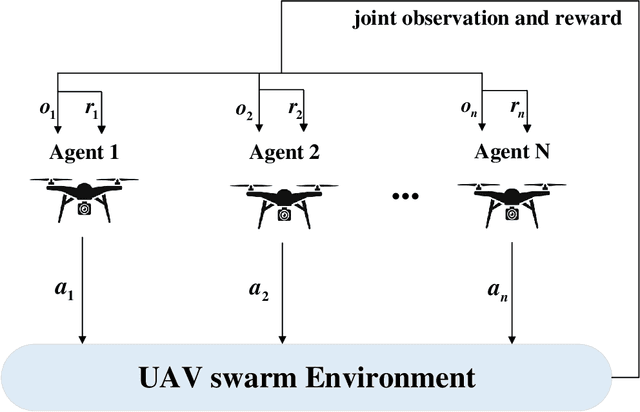

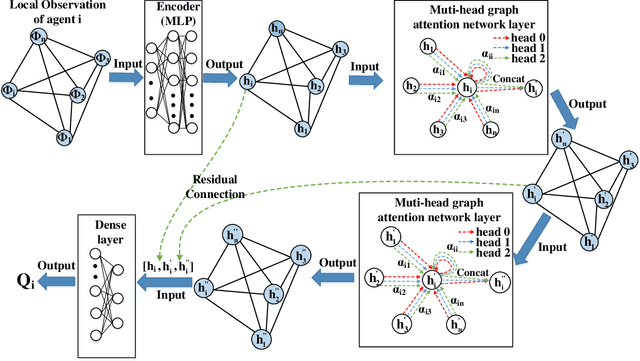

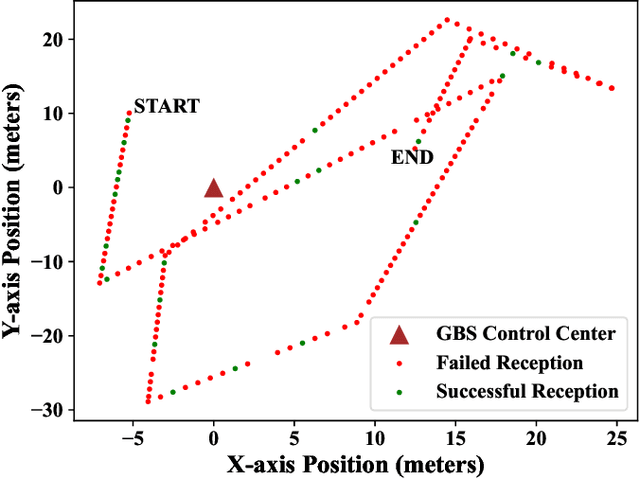

Abstract:Cellular-connected unmanned aerial vehicle (UAV) swarm is a promising solution for diverse applications, including cargo delivery and traffic control. However, it is still challenging to communicate with and control the UAV swarm with high reliability, low latency, and high energy efficiency. In this paper, we propose a two-phase command and control (C&C) transmission scheme in a cellular-connected UAV swarm network, where the ground base station (GBS) broadcasts the common C&C message in Phase I. In Phase II, the UAVs that have successfully decoded the C&C message will relay the message to the rest of UAVs via device-to-device (D2D) communications in either broadcast or unicast mode, under latency and energy constraints. To maximize the number of UAVs that receive the message successfully within the latency and energy constraints, we formulate the problem as a Constrained Markov Decision Process to find the optimal policy. To address this problem, we propose a decentralized constrained graph attention multi-agent Deep-Q-network (DCGA-MADQN) algorithm based on Lagrangian primal-dual policy optimization, where a PID-controller algorithm is utilized to update the Lagrange Multiplier. Simulation results show that our algorithm could maximize the number of UAVs that successfully receive the common C&C under energy constraints.

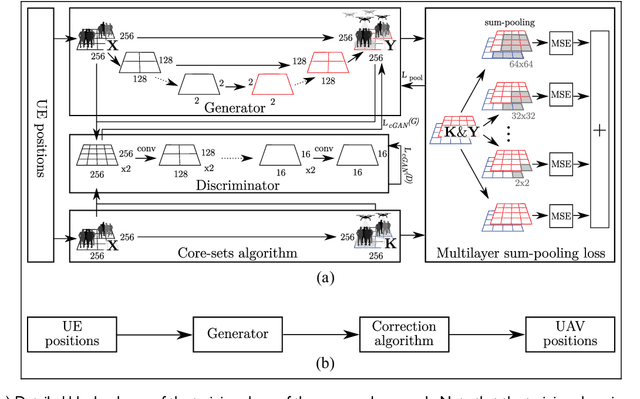

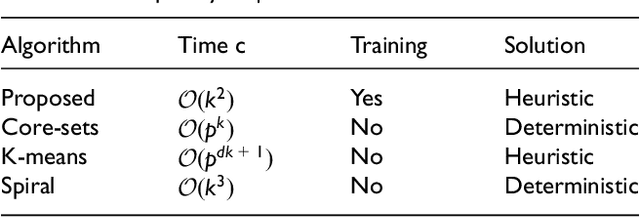

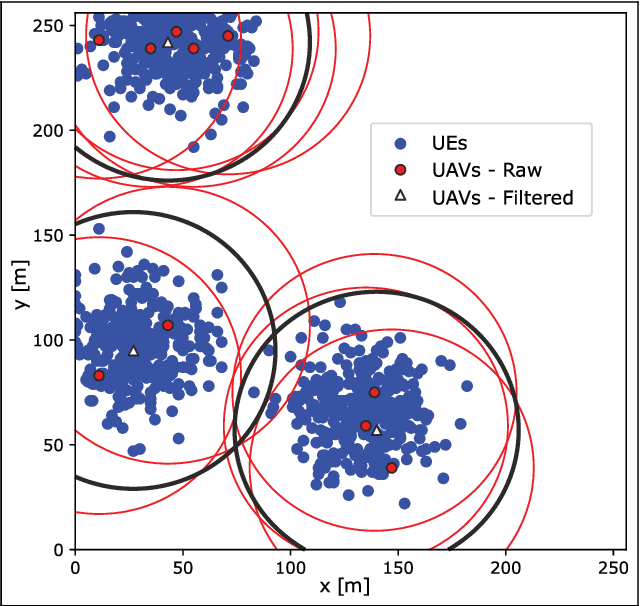

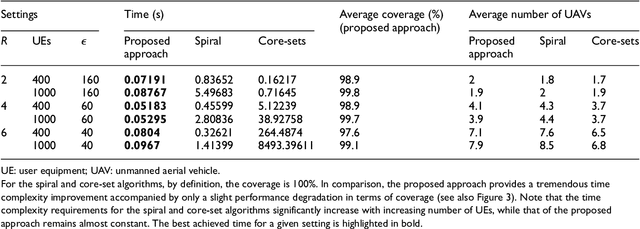

Fast and computationally efficient generative adversarial network algorithm for unmanned aerial vehicle-based network coverage optimization

Mar 25, 2022

Abstract:The challenge of dynamic traffic demand in mobile networks is tackled by moving cells based on unmanned aerial vehicles. Considering the tremendous potential of unmanned aerial vehicles in the future, we propose a new heuristic algorithm for coverage optimization. The proposed algorithm is implemented based on a conditional generative adversarial neural network, with a unique multilayer sum-pooling loss function. To assess the performance of the proposed approach, we compare it with the optimal core-set algorithm and quasi-optimal spiral algorithm. Simulation results show that the proposed approach converges to the quasi-optimal solution with a negligible difference from the global optimum while maintaining a quadratic complexity regardless of the number of users.

Machine Learning for Massive Industrial Internet of Things

Mar 10, 2021

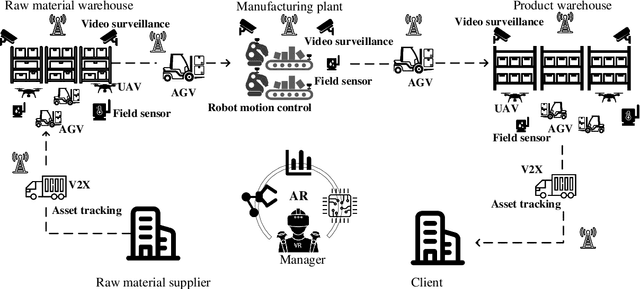

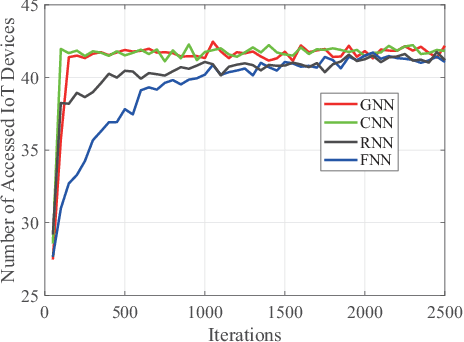

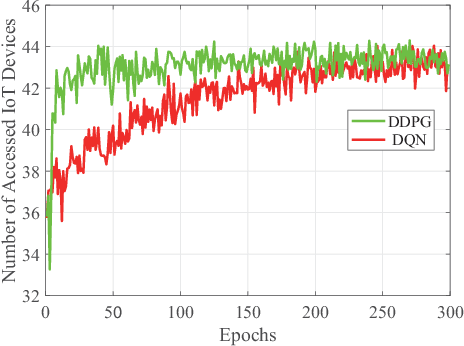

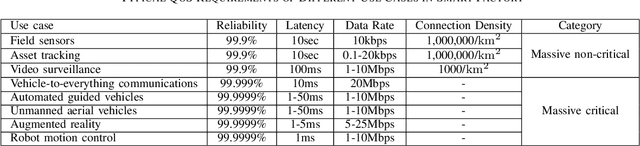

Abstract:Industrial Internet of Things (IIoT) revolutionizes the future manufacturing facilities by integrating the Internet of Things technologies into industrial settings. With the deployment of massive IIoT devices, it is difficult for the wireless network to support the ubiquitous connections with diverse quality-of-service (QoS) requirements. Although machine learning is regarded as a powerful data-driven tool to optimize wireless network, how to apply machine learning to deal with the massive IIoT problems with unique characteristics remains unsolved. In this paper, we first summarize the QoS requirements of the typical massive non-critical and critical IIoT use cases. We then identify unique characteristics in the massive IIoT scenario, and the corresponding machine learning solutions with its limitations and potential research directions. We further present the existing machine learning solutions for individual layer and cross-layer problems in massive IIoT. Last but not the least, we present a case study of massive access problem based on deep neural network and deep reinforcement learning techniques, respectively, to validate the effectiveness of machine learning in massive IIoT scenario.

A Learning Theoretic Approach to Energy Harvesting Communication System Optimization

Dec 06, 2012

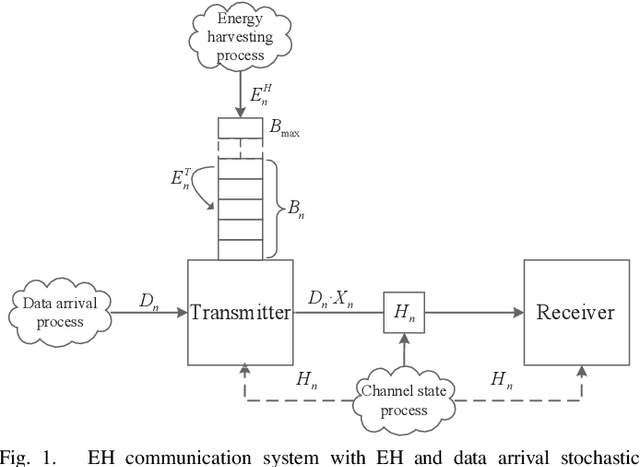

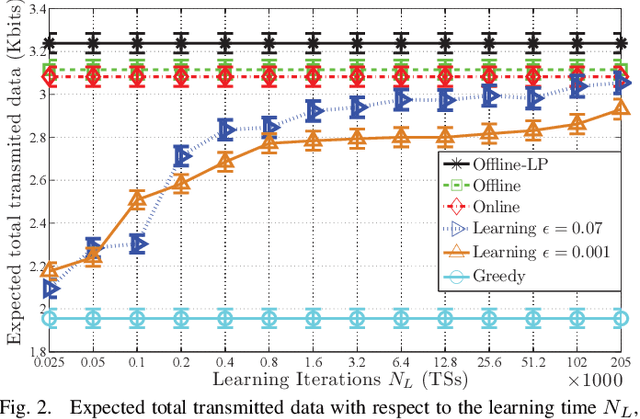

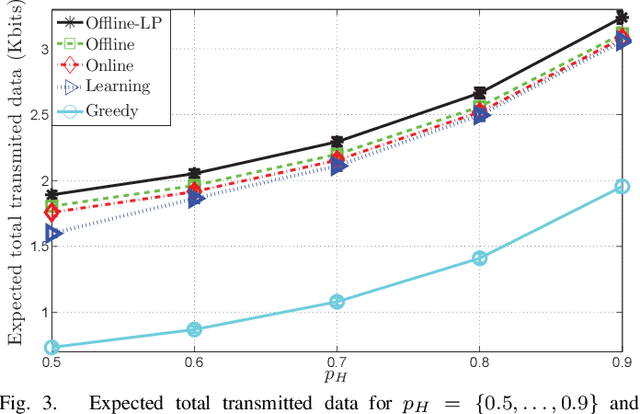

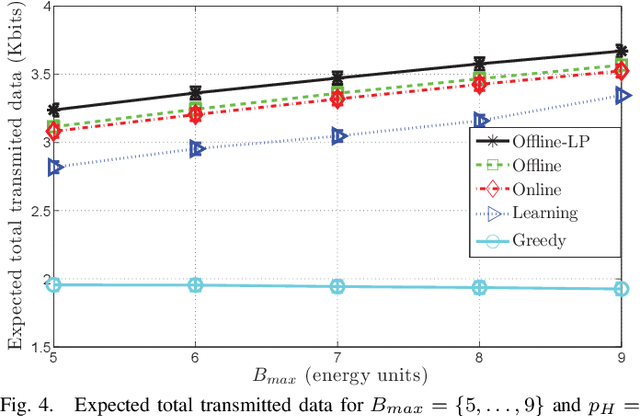

Abstract:A point-to-point wireless communication system in which the transmitter is equipped with an energy harvesting device and a rechargeable battery, is studied. Both the energy and the data arrivals at the transmitter are modeled as Markov processes. Delay-limited communication is considered assuming that the underlying channel is block fading with memory, and the instantaneous channel state information is available at both the transmitter and the receiver. The expected total transmitted data during the transmitter's activation time is maximized under three different sets of assumptions regarding the information available at the transmitter about the underlying stochastic processes. A learning theoretic approach is introduced, which does not assume any a priori information on the Markov processes governing the communication system. In addition, online and offline optimization problems are studied for the same setting. Full statistical knowledge and causal information on the realizations of the underlying stochastic processes are assumed in the online optimization problem, while the offline optimization problem assumes non-causal knowledge of the realizations in advance. Comparing the optimal solutions in all three frameworks, the performance loss due to the lack of the transmitter's information regarding the behaviors of the underlying Markov processes is quantified.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge