Md. Abrar Istiak

Department of Electrical and Electronic Engineering, Bangladesh University of Engineering and Technology

MIBINET: Real-time Proctoring of Cardiovascular Inter-Beat-Intervals using a Multifaceted CNN from mm-Wave Ballistocardiography Signal

Nov 14, 2022

Abstract: The recent pandemic has refocused the medical world's attention on the diagnostic techniques associated with cardiovascular disease. Heart rate provides a real-time snapshot of cardiovascular health. A more precise heart rate reading provides a better understanding of cardiac muscle activity. Although many existing diagnostic techniques are approaching the limits of perfection, there remains potential for further development. In this paper, we propose MIBINET, a convolutional neural network for real-time proctoring of heart rate via inter-beat-interval (IBI) from millimeter wave (mm-wave) radar ballistocardiography signals. This network can be used in hospitals, homes, and passenger vehicles due to its lightweight and contactless properties. It employs classical signal processing prior to fitting the data into the network. Although MIBINET is primarily designed to work on mm-wave signals, it is found equally effective on signals of various modalities such as PCG, ECG, and PPG. Extensive experimental results and a thorough comparison with the current state-of-the-art on mm-wave signals demonstrate the viability and versatility of the proposed methodology.

Keywords: Cardiovascular disease, contactless measurement, heart rate, IBI, mm-wave radar, neural network
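As a rough illustration of how heart rate follows from inter-beat intervals, the sketch below converts beat timestamps into IBIs and then into beats per minute using the standard relation HR = 60 / IBI. It is not the MIBINET pipeline: the beat times are hypothetical values chosen for the example, and the function names `ibi_from_beat_times` and `heart_rate_bpm` are assumptions introduced here.

```python
from statistics import mean


def ibi_from_beat_times(beat_times_s):
    """Return successive inter-beat intervals (seconds) from beat timestamps."""
    return [t1 - t0 for t0, t1 in zip(beat_times_s, beat_times_s[1:])]


def heart_rate_bpm(ibis_s):
    """Instantaneous heart rate in beats per minute for each IBI (60 / IBI)."""
    return [60.0 / ibi for ibi in ibis_s]


# Hypothetical beat timestamps in seconds (not taken from the paper).
beat_times = [0.0, 0.8, 1.6, 2.5, 3.3]

ibis = ibi_from_beat_times(beat_times)   # four intervals from five beats
rates = heart_rate_bpm(ibis)             # one rate estimate per interval
mean_rate = 60.0 / mean(ibis)            # average rate over the window
```

Computing the average rate from the mean IBI, rather than averaging the per-beat rates, is one common choice; either could be appropriate depending on the application.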

A Sequence Agnostic Multimodal Preprocessing for Clogged Blood Vessel Detection in Alzheimer's Diagnosis

Nov 06, 2022

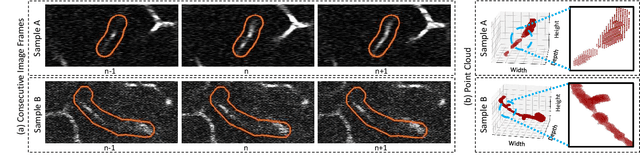

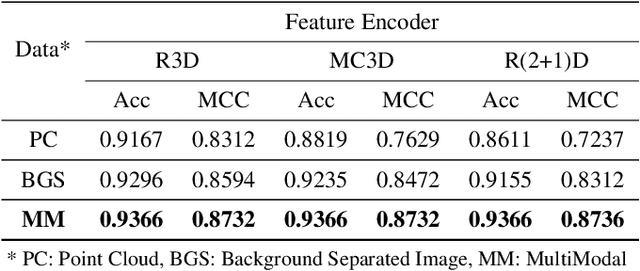

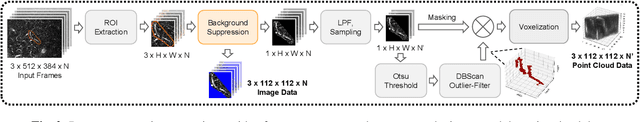

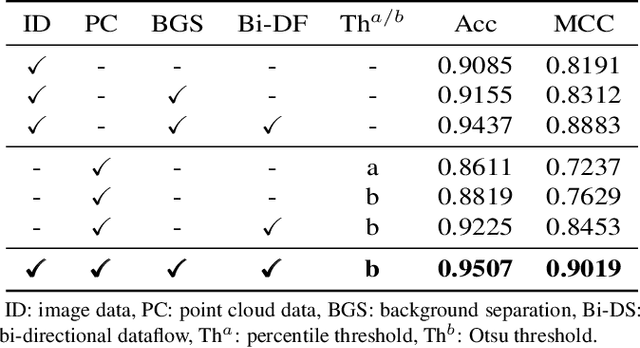

Abstract: Successful identification of blood vessel blockage is a crucial step for Alzheimer's disease diagnosis. These blockages can be identified from the spatial and time-depth variable Two-Photon Excitation Microscopy (TPEF) images of the brain blood vessels using machine learning methods. In this study, we propose several preprocessing schemes to improve the performance of these methods. Our method includes 3D point cloud data extraction from the image modality and feature-space fusion of the two to leverage the complementary information inherent in different modalities. We also enforce the learned representation to be sequence-order invariant by utilizing bidirectional dataflow. Experimental results on the Clog Loss dataset show that our proposed method consistently outperforms the state-of-the-art preprocessing methods in stalled and non-stalled vessel classification.

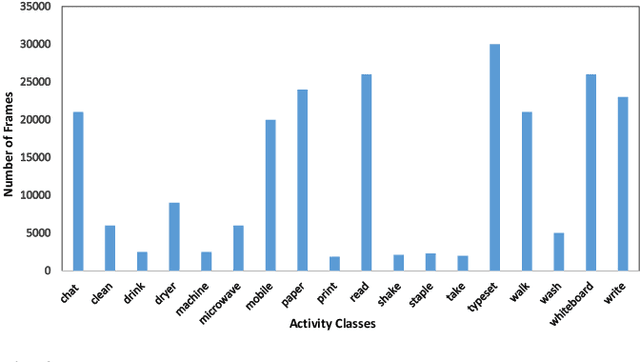

BON: An extended public domain dataset for human activity recognition

Sep 12, 2022

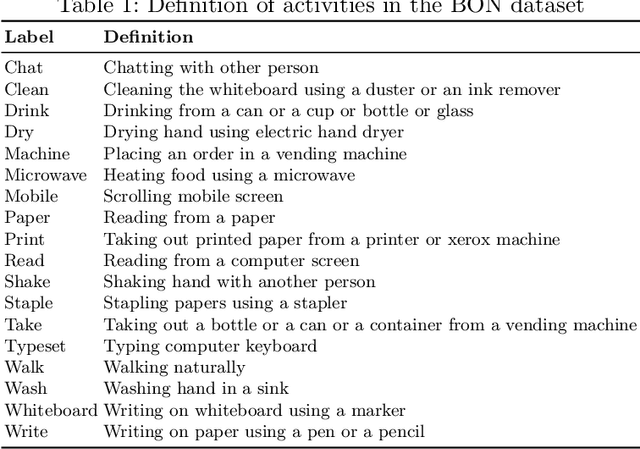

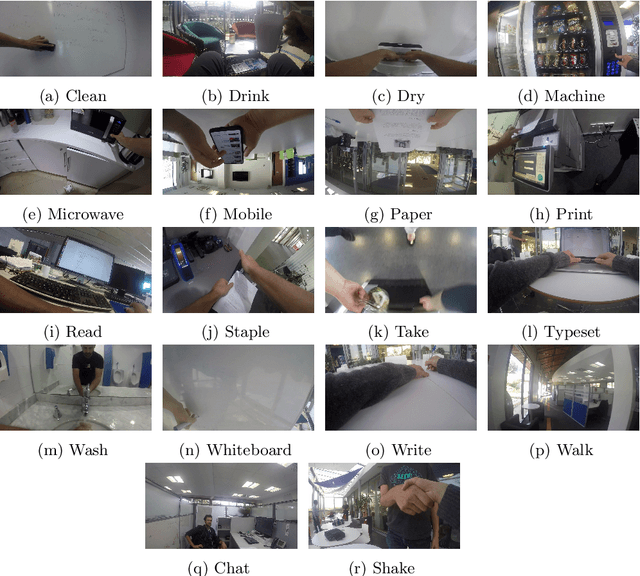

Abstract: A body-worn first-person vision (FPV) camera makes it possible to extract a rich source of information on the environment from the subject's viewpoint. However, research progress in wearable camera-based egocentric office activity understanding is slow compared to other activity environments (e.g., kitchen and outdoor ambulatory), mainly due to the lack of adequate datasets to train more sophisticated (e.g., deep learning) models for human activity recognition in office environments. This paper provides details of a large and publicly available office activity dataset (BON) collected in different office settings across three geographical locations: Barcelona (Spain), Oxford (UK) and Nairobi (Kenya), using a chest-mounted GoPro Hero camera. The BON dataset contains eighteen common office activities that can be categorised into person-to-person interactions (e.g., Chat with colleagues), person-to-object (e.g., Writing on a whiteboard), and proprioceptive (e.g., Walking). Annotation is provided for each 5-second video segment. In total, BON contains 25 subjects and 2639 segments. In order to facilitate further research in the sub-domain, we have also provided results that could be used as baselines for future studies.

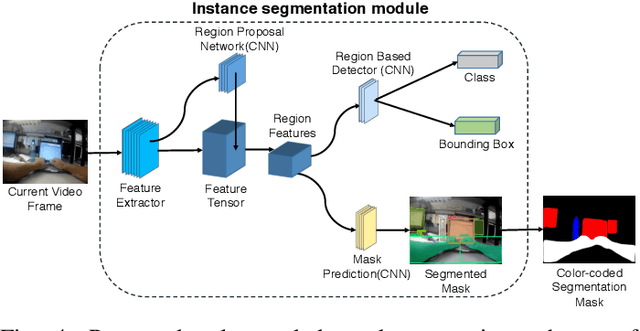

SegCodeNet: Color-Coded Segmentation Masks for Activity Detection from Wearable Cameras

Aug 19, 2020

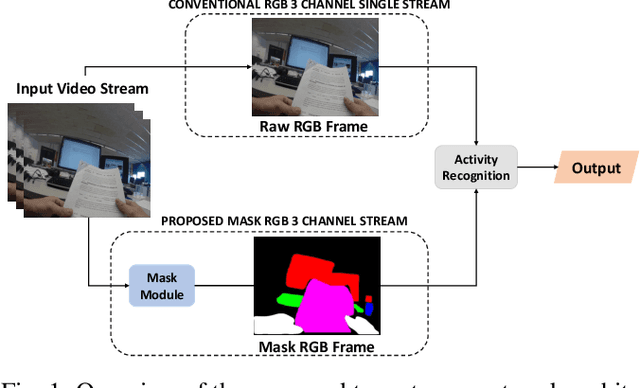

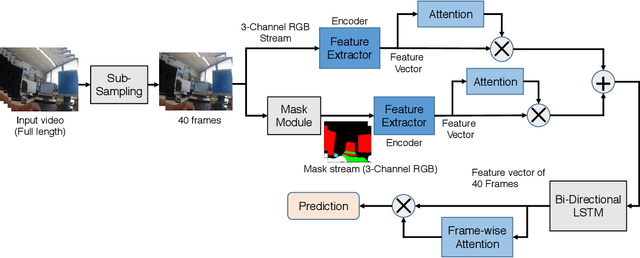

Abstract: Activity detection from first-person videos (FPV) captured using a wearable camera is an active research field with potential applications in many sectors, including healthcare, law enforcement, and rehabilitation. State-of-the-art methods use optical flow-based hybrid techniques that rely on features derived from the motion of objects from consecutive frames. In this work, we developed a two-stream network, the SegCodeNet, that uses a network branch containing video-streams with color-coded semantic segmentation masks of relevant objects in addition to the original RGB video-stream. We also include a stream-wise attention gating that prioritizes between the two streams and a frame-wise attention module that prioritizes the video frames that contain relevant features. Experiments are conducted on an FPV dataset containing 18 activity classes in office environments. In comparison to a single-stream network, the proposed two-stream method achieves an absolute improvement of 14.366% and 10.324% for averaged F1 score and accuracy, respectively, when average results are compared for three different frame sizes 224×224, 112×112, and 64×64. The proposed method provides significant performance gains for lower-resolution images with absolute improvements of 17% and 26% in F1 score for input dimensions of 112×112 and 64×64, respectively. The best performance is achieved for a frame size of 224×224 yielding an F1 score and accuracy of 90.176% and 90.799% which outperforms the state-of-the-art Inflated 3D ConvNet (I3D) (Carreira and Zisserman, 2017) method by an absolute margin of 4.529% and 2.419%, respectively.
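The idea of a stream-wise gate that prioritizes between two streams can be sketched generically as a softmax over one relevance score per stream, followed by a weighted sum of the stream features. This is only an illustrative assumption about how such a gate might look, not the SegCodeNet architecture: the function names, the scalar scores, and the toy feature vectors are all hypothetical, and in a real network the scores would be produced by learned layers.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scalar scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gate_streams(rgb_feat, mask_feat, rgb_score, mask_score):
    """Fuse two equal-length feature vectors with softmax gate weights.

    The scores are hypothetical stand-ins for learned relevance values.
    """
    w_rgb, w_mask = softmax([rgb_score, mask_score])
    fused = [w_rgb * r + w_mask * m for r, m in zip(rgb_feat, mask_feat)]
    return fused, (w_rgb, w_mask)


# Toy features for the RGB stream and the segmentation-mask stream.
rgb_features = [1.0, 2.0, 3.0]
mask_features = [3.0, 2.0, 1.0]

# Equal scores give each stream the same weight.
fused_equal, weights_equal = gate_streams(rgb_features, mask_features, 0.0, 0.0)

# A higher mask score shifts the fused output toward the mask stream.
fused_mask, weights_mask = gate_streams(rgb_features, mask_features, 0.0, 2.0)
```

Because the weights sum to one, the fused vector stays on the same scale as the inputs regardless of which stream dominates.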

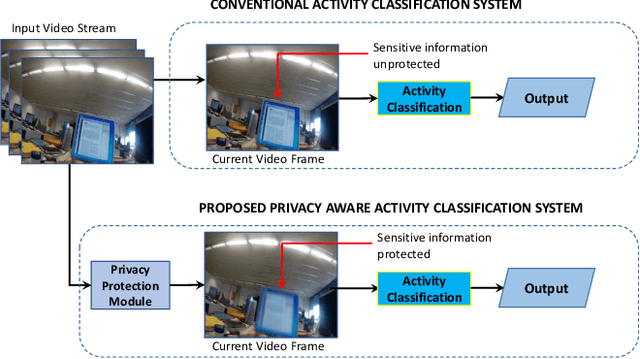

Privacy-Aware Activity Classification from First Person Office Videos

Jun 11, 2020

Abstract: With the advent of wearable body-cameras, human activity classification from First-Person Videos (FPV) has become a topic of increasing importance for various applications, including life-logging, law enforcement, sports, workplace, and healthcare. One of the challenging aspects of FPV is its exposure to potentially sensitive objects within the user's field of view. In this work, we developed a privacy-aware activity classification system focusing on office videos. We utilized a Mask-RCNN with an Inception-ResNet hybrid as a feature extractor for detecting, and then blurring out, sensitive objects (e.g., digital screens, human faces, paper) in the videos. For activity classification, we incorporate an ensemble of Recurrent Neural Networks (RNNs) with ResNet, ResNext, and DenseNet based feature extractors. The proposed system was trained and evaluated on the 18-class FPV office video dataset made available through the IEEE Video and Image Processing (VIP) Cup 2019 competition. On the original unprotected FPVs, the proposed activity classifier ensemble reached an accuracy of 85.078% with precision, recall, and F1 scores of 0.88, 0.85, and 0.86, respectively. On privacy-protected videos, performance degraded, with accuracy, precision, recall, and F1 scores of 73.68%, 0.79, 0.75, and 0.74, respectively. The presented system won the 3rd prize in the IEEE VIP Cup 2019 competition.
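For readers less familiar with multi-class precision, recall, and F1, the sketch below computes them from a confusion matrix using macro averaging (the unweighted mean of per-class values). The abstract does not state which averaging scheme was used, so macro averaging is an assumption here, and the small confusion matrix is hypothetical rather than taken from the paper. One consequence worth noting is that a macro-averaged F1 is not, in general, the harmonic mean of the macro-averaged precision and recall.

```python
def macro_metrics(confusion):
    """Return (precision, recall, f1), macro-averaged over classes.

    confusion[i][j] is the number of samples of true class i
    that were predicted as class j.
    """
    n = len(confusion)
    precisions, recalls, f1s = [], [], []
    for k in range(n):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp  # predicted k, wrong
        fn = sum(confusion[k]) - tp                        # true k, missed
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n


# Hypothetical two-class confusion matrix (not data from the paper).
example = [[1, 1],
           [0, 2]]
macro_p, macro_r, macro_f1 = macro_metrics(example)

# Harmonic mean of the macro precision and recall, for comparison.
harmonic = 2 * macro_p * macro_r / (macro_p + macro_r)
```

In this toy case the macro F1 and the harmonic mean of macro precision and recall differ, which is why reported F1 values cannot always be reproduced from the reported precision and recall alone.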
