Luxi Yang

Near-Field Wideband Channel Estimation for XL-MIMO Systems via Denoising Diffusion Model

Apr 22, 2026

Abstract:Extremely large-scale multiple-input multiple-output (XL-MIMO) is a key enabling technology for sixth-generation (6G) communication systems. Nevertheless, the increase in array aperture and signal bandwidth brings new challenges to wideband channel estimation in XL-MIMO systems. Motivated by recent advances in deep generative modeling, we propose a diffusion model-based method for near-field wideband channel estimation in XL-MIMO systems. We first analyze the statistical correlation of the wideband channel and show that the near-field wideband channel exhibits both spatial non-stationarity and beam split effects. Based on these observations, the channel estimation problem is formulated as a Bayesian posterior inference task, in which a diffusion model is employed to learn the prior distribution of the channel. To further enhance the representation of complex spatial-frequency channel structures, we design a denoising network with a multi-scale attention mechanism. In particular, the network extracts multi-scale spatial-frequency features via parallel convolutional branches with different receptive fields, and combines feature attention and spatial attention modules to adaptively emphasize critical channel features. This design enables more accurate modeling of near-field wideband channel distributions and consequently improves channel estimation performance. Experimental results demonstrate that the proposed method is more robust than existing baseline schemes for XL-MIMO wideband channel estimation under different experimental settings.

Adaptive Structured Sparse Bayesian Learning for Near-Field Non-Stationary Channel Estimation in XL-MIMO Systems

Apr 13, 2026

Abstract:Extremely large-scale multiple-input multiple-output (XL-MIMO) is a key enabler for sixth-generation (6G) communications. However, near-field channel estimation is particularly challenging due to spherical-wave propagation and spatial non-stationarity. To tackle this challenge, we propose a structured sparse Bayesian learning framework with adaptive dictionary updating for near-field non-stationary channel estimation. Specifically, the proposed method iteratively updates the distance parameters within an adaptive dictionary, thereby enhancing the representation capability without increasing the dictionary size. Moreover, we develop a hierarchical prior model that jointly captures polar-domain sparsity and structured dependency, enabling efficient Bayesian inference. Simulation results demonstrate that the proposed approach outperforms existing polar-domain dictionary-based methods while achieving low dictionary overhead.

JSSAnet: Theory-Guided Subchannel Partitioning and Joint Spatial Attention for Near-Field Channel Estimation

Mar 25, 2026

Abstract:The deployment of extremely large-scale antenna arrays (ELAAs) in sixth-generation (6G) communication systems introduces unique challenges for efficient near-field channel estimation. To tackle these issues, this paper presents a theory-guided approach that incorporates angular information into an attention-based estimation framework. A piecewise Fourier representation is proposed to implicitly encode the near-field channel's inherent nonlinearity, enabling the entire channel to be segmented into multiple subchannels, each mapped to the angular domain via the discrete Fourier transform (DFT). Then, we develop a joint subchannel-spatial-attention network (JSSAnet) to extract both intra- and inter-subchannel spatial features. To theoretically guide the design of the joint attention mechanism, we derive upper and lower bounds based on approximation criteria and DFT quantization loss mitigation, respectively. Following both bounds, a JSSA layer of an attention block is constructed to assign independent and adaptive spatial attention weights to each subchannel in parallel. Subsequently, a feed-forward network (FFN) of an attention block further captures and refines the residual nonlinear dependencies across subchannels. Moreover, the proposed JSSA map is linearly computed via element-wise product combining large-kernel convolutions (DLKC), maintaining strong contextual learning capability. Numerical results verify the effectiveness of embedding sparsity information into the attention network and demonstrate that JSSAnet achieves superior estimation performance compared with existing methods.
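The segmentation step described in this abstract can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the single-path spherical-wave channel model, the half-wavelength uniform linear array, the function names `near_field_channel` and `piecewise_dft`, and all numeric parameters are assumptions chosen only for illustration. The sketch splits the channel vector into equal-length subchannels and maps each one to the angular domain with a unitary DFT.

```python
import numpy as np

def near_field_channel(n_antennas, wavelength, distance, angle):
    """Single-path spherical-wave response of a half-wavelength ULA (assumed model)."""
    spacing = wavelength / 2
    # Element positions along the array, centred on the origin.
    positions = (np.arange(n_antennas) - (n_antennas - 1) / 2) * spacing
    # Exact element-to-source distance (law of cosines); this makes the phase
    # a nonlinear function of the element index in the near field.
    r = np.sqrt(distance**2 + positions**2 - 2 * distance * positions * np.sin(angle))
    return np.exp(-2j * np.pi * r / wavelength) / np.sqrt(n_antennas)

def piecewise_dft(channel, n_subchannels):
    """Split the channel into equal subchannels and apply a unitary DFT to each."""
    segments = channel.reshape(n_subchannels, -1)
    return np.fft.fft(segments, axis=1, norm="ortho")

# Hypothetical parameters: 256 antennas, 1 cm wavelength, source at 5 m and 30 degrees.
h = near_field_channel(n_antennas=256, wavelength=0.01, distance=5.0, angle=np.pi / 6)
H_sub = piecewise_dft(h, n_subchannels=8)  # one angular-domain row per subchannel
```

Because the DFT is unitary, the total energy of `H_sub` equals that of `h`; only the representation changes, with each short subchannel behaving more like a far-field (linear-phase) segment than the full array does.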

A Multi-Scale Spatial Attention Network for Near-field MIMO Channel Estimation

Jul 30, 2025

Abstract:The deployment of extremely large-scale arrays (ELAAs) brings higher spectral efficiency and more spatial degrees of freedom, but raises issues for near-field channel estimation. Existing near-field channel estimation schemes primarily exploit sparsity in the transform domain. However, these schemes are sensitive to the choice of transform matrix and to the stopping criteria. Inspired by the success of deep learning (DL) in far-field channel estimation, this paper proposes a novel spatial-attention-based method for reconstructing extremely large-scale MIMO (XL-MIMO) channels. First, the spatial antenna correlations of near-field channels are analyzed as an expectation over the angle-distance space, which demonstrates that the correlation range of an antenna element varies with its position. Due to the strong correlation between adjacent antenna elements, inter-subchannel interactions, rather than inter-element interactions, are used to describe the inherent correlation of near-field channels. Subsequently, a multi-scale spatial attention network (MsSAN) with inter-subchannel correlation learning capabilities is proposed, tailored to near-field MIMO channel estimation. We employ the multi-scale architecture to refine the subchannel size in MsSAN. Specifically, we introduce the sum of dot products as spatial attention (SA), instead of cross-covariance, to weight subchannel features at different scales in the SA module. Simulation results validate that the proposed MsSAN achieves remarkable inter-subchannel correlation learning capabilities and outperforms other methods in terms of near-field channel reconstruction.

Element-Grouping Strategy for Intelligent Reflecting Surface: Performance Analysis and Algorithm Optimization

Apr 22, 2025

Abstract:As a revolutionary paradigm for intelligently controlling wireless channels, intelligent reflecting surface (IRS) has emerged as a promising technology for future sixth-generation (6G) wireless communications. While IRS-aided communication systems can achieve attractively high channel gains, existing schemes require a large number of IRS elements to mitigate the "multiplicative fading" effect in cascaded channels, leading to high complexity for real-time beamforming and high signaling overhead for channel estimation. In this paper, the concept of sustainable intelligent element-grouping IRS (IEG-IRS) is proposed to overcome those fundamental bottlenecks. Specifically, based on the statistical channel state information (S-CSI), the proposed grouping strategy pre-divides the IEG-IRS elements into multiple groups using the beam-domain grouping method, with each group sharing a common reflection coefficient that is optimized in real time using the instantaneous channel state information (I-CSI). Then, we further analyze the asymptotic performance of the IEG-IRS to reveal the substantial capacity gain in an extremely large-scale IRS (XL-IRS) aided single-user single-input single-output (SU-SISO) system. In particular, when a line-of-sight (LoS) component exists, the analysis demonstrates that the combined cascaded link can be considered as a "deterministic virtual LoS" channel, resulting in a sustainable squared array gain achieved by the IEG-IRS. Finally, we formulate a weighted-sum-rate (WSR) maximization problem for an IEG-IRS-aided multiuser multiple-input single-output (MU-MISO) system and propose a two-stage algorithm for optimizing the beam-domain grouping strategy and the multi-user active-passive beamforming.

An Efficient Network with Novel Quantization Designed for Massive MIMO CSI Feedback

May 30, 2024

Abstract:The efficacy of massive multiple-input multiple-output (MIMO) techniques heavily relies on the accuracy of channel state information (CSI) in frequency division duplexing (FDD) systems. Many works focus on CSI compression and quantization methods to enhance CSI reconstruction accuracy with lower feedback overhead. In this letter, we propose CsiConformer, a novel CSI feedback network that combines convolutional operations and self-attention mechanisms to improve CSI feedback accuracy. Additionally, a new quantization module is developed to improve encoding efficiency. Experimental results show that CsiConformer outperforms previous state-of-the-art networks, achieving an average accuracy improvement of 17.67% with lower computational overhead.

Intelligent Reflecting Surface Aided Target Localization With Unknown Transceiver-IRS Channel State Information

Apr 08, 2024

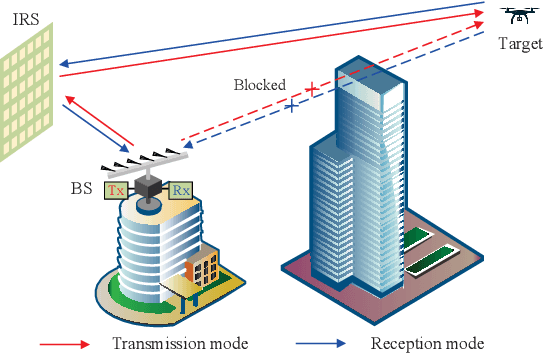

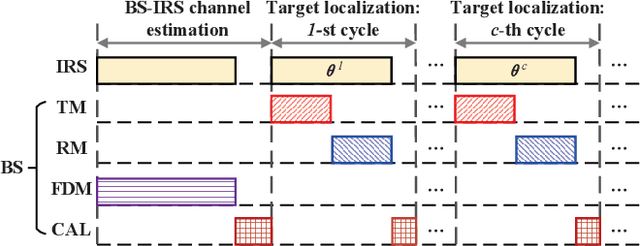

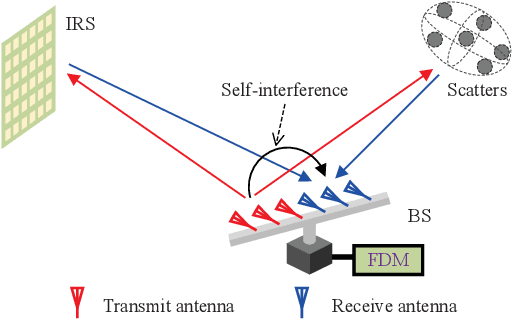

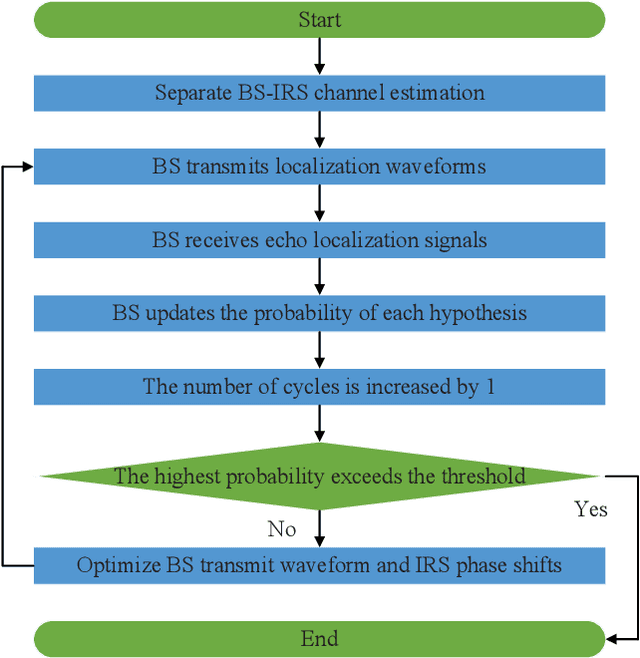

Abstract:Integrating wireless sensing capabilities into base stations (BSs) has become a widespread trend in future beyond fifth-generation (B5G)/sixth-generation (6G) wireless networks. In this paper, we investigate intelligent reflecting surface (IRS) enabled wireless localization, in which an IRS is deployed to assist a BS in locating a target in its non-line-of-sight (NLoS) region. In particular, we consider the case where the BS-IRS channel state information (CSI) is unknown. Specifically, we first propose a separate BS-IRS channel estimation scheme in which the BS operates in full-duplex mode (FDM), i.e., a portion of the BS antennas send downlink pilot signals to the IRS, while the remaining BS antennas receive the uplink pilot signals reflected by the IRS. However, due to the "sign ambiguity issue", our iterative coordinate descent-based channel estimation algorithm yields only an incomplete BS-IRS channel matrix. Then, we employ the multiple hypotheses testing framework to perform target localization based on the incomplete estimated channel, in which the probability of each hypothesis is updated using Bayesian inference at each cycle. Moreover, we formulate a joint BS transmit waveform and IRS phase shifts optimization problem to improve the target localization performance by maximizing the weighted sum distance between each pair of hypotheses. However, the objective function is essentially a quartic function of the IRS phase shift vector, which motivates us to resort to the penalty-based method to tackle this challenge. Simulation results validate the effectiveness of our proposed target localization scheme and show that its performance can be further improved by carefully designing the BS transmit waveform and IRS phase shifts to maximize the weighted sum distance between different hypotheses.
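The per-cycle hypothesis update mentioned in this abstract follows the usual Bayes rule, in which the posterior is proportional to the prior times the likelihood. The sketch below is only a toy illustration of that rule and not the paper's signal model: the scalar observation, the Gaussian likelihood, the per-hypothesis means in `hypothesis_means`, and the function name `update_posterior` are all assumptions.

```python
import numpy as np

def update_posterior(prior, observation, hypothesis_means, noise_std):
    """One Bayesian update: posterior is proportional to prior times likelihood."""
    # Gaussian log-likelihood of the observation under each hypothesis (assumed model).
    log_lik = -0.5 * ((observation - hypothesis_means) / noise_std) ** 2
    # Clip the prior to guard against log(0) after many updates.
    log_post = np.log(np.clip(prior, 1e-300, None)) + log_lik
    log_post -= log_post.max()  # subtract the maximum for numerical stability
    post = np.exp(log_post)
    return post / post.sum()

rng = np.random.default_rng(0)
hypothesis_means = np.array([0.0, 1.0, 2.0, 3.0])  # hypothetical per-location signatures
true_index = 2
noise_std = 0.5

belief = np.full(4, 0.25)  # uniform prior over the candidate locations
for _ in range(50):        # one noisy observation per cycle
    y = hypothesis_means[true_index] + noise_std * rng.standard_normal()
    belief = update_posterior(belief, y, hypothesis_means, noise_std)
```

In this toy setting, hypotheses whose means are further apart are separated after fewer cycles, which gives some intuition for why the abstract's waveform and phase-shift design maximizes the distance between hypotheses.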

Computationally Efficient Unsupervised Deep Learning for Robust Joint AP Clustering and Beamforming Design in Cell-Free Systems

Apr 03, 2024

Abstract:In this paper, we consider robust joint access point (AP) clustering and beamforming design with imperfect channel state information (CSI) in cell-free systems. Specifically, we jointly optimize AP clustering and beamforming with imperfect CSI to simultaneously maximize the worst-case sum rate and minimize the number of AP clustering under a power constraint and a sparsity constraint on AP clustering. Through transformations, the semi-infinite constraints caused by the imperfect CSI are converted into more tractable forms that facilitate a computationally efficient unsupervised deep learning algorithm. In addition, to further reduce the computational complexity, a computationally effective unsupervised deep learning algorithm is proposed to implement robust joint AP clustering and beamforming design with imperfect CSI in cell-free systems. Numerical results demonstrate that the proposed unsupervised deep learning algorithm achieves a higher worst-case sum rate under a smaller number of AP clustering with computational efficiency.

Digital Twin-Enhanced Deep Reinforcement Learning for Resource Management in Networks Slicing

Nov 28, 2023

Abstract:Network slicing-based communication systems can dynamically and efficiently allocate resources for diversified services. However, due to the limitation of the network interface on channel access and the complexity of the resource allocation, it is challenging to achieve an acceptable solution in a practical system without precise prior knowledge of the probabilistic dynamics model of the service requests. Existing work attempts to solve this problem using deep reinforcement learning (DRL); however, such methods usually require extensive interaction with the real environment to achieve good results. In this paper, a framework consisting of a digital twin and reinforcement learning agents is presented to address this issue. Specifically, we propose to use historical data and neural networks to build a digital twin model that simulates the state variation law of the real environment. Then, we use the data generated by the network slicing environment to calibrate the digital twin so that it stays in sync with the real environment. Finally, the DRL agent for slice optimization improves its own performance in this virtual pre-verification environment. We conducted an exhaustive verification of the proposed digital twin framework to confirm its scalability. Specifically, we propose to use loss landscapes to visualize the generalization of DRL solutions. We explore a distillation-based optimization scheme for lightweight slicing strategies. In addition, we extend the framework to offline reinforcement learning, where solutions can be used to obtain intelligent decisions based solely on historical data. Numerical simulation experiments show that the proposed digital twin can significantly improve the performance of the slice optimization strategy.

Channel Estimation with Dynamic Metasurface Antennas via Model-Based Learning

Nov 14, 2023

Abstract:Dynamic Metasurface Antenna (DMA) is a cutting-edge antenna technology offering scalable and sustainable solutions for large antenna arrays. The effectiveness of DMAs stems from their inherent configurable analog signal processing capabilities, which facilitate cost-limited implementations. However, when DMAs are used in multiple-input multiple-output (MIMO) communication systems, they pose challenges in channel estimation due to their analog compression. In this paper, we propose two model-based learning methods to overcome this challenge. Our approach starts by casting channel estimation as a compressed sensing problem. Here, the sensing matrix is formed using a random DMA weighting matrix combined with a spatial gridding dictionary. We then employ the learned iterative shrinkage and thresholding algorithm (LISTA) to recover the sparse channel parameters. LISTA unfolds the iterative shrinkage and thresholding algorithm into a neural network and trains the neural network into a highly efficient channel estimator fitting with the previous channel. As the sensing matrix is crucial to the accuracy of LISTA recovery, we introduce another data-aided method, LISTA-sensing matrix optimization (LISTA-SMO), to jointly optimize the sensing matrix. LISTA-SMO takes LISTA as a backbone and embeds the sensing matrix optimization layers in LISTA's neural network, allowing for the optimization of the sensing matrix along with the training of LISTA. Furthermore, we propose a self-supervised learning technique to tackle the difficulty of acquiring noise-free data. Our numerical results demonstrate that LISTA outperforms traditional sparse recovery methods in terms of channel estimation accuracy and efficiency. In addition, LISTA-SMO achieves better channel accuracy than LISTA, demonstrating the effectiveness of optimizing the sensing matrix.
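For readers unfamiliar with the algorithm that LISTA unfolds, the following is a minimal sketch of plain (non-learned) ISTA for the sparse recovery problem min 0.5‖Ax − y‖² + λ‖x‖₁. It is not the paper's estimator: the problem is real-valued for simplicity, the sensing matrix is a random Gaussian stand-in rather than a DMA weighting matrix combined with a gridding dictionary, and the names `soft_threshold`, `ista`, and `lasso_objective` as well as all sizes and the value of `lam` are assumptions.

```python
import numpy as np

def soft_threshold(x, threshold):
    """Shrinkage operator: move every entry toward zero by `threshold`."""
    return np.sign(x) * np.maximum(np.abs(x) - threshold, 0.0)

def ista(A, y, lam, n_iters=200):
    """Plain ISTA: a gradient step on the data term, then soft thresholding."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2  # 1 / Lipschitz constant of the gradient
    x = np.zeros(A.shape[1])
    for _ in range(n_iters):
        grad = A.T @ (A @ x - y)
        x = soft_threshold(x - step * grad, step * lam)
    return x

def lasso_objective(A, y, x, lam):
    """Objective that ISTA decreases at every iteration."""
    return 0.5 * np.sum((A @ x - y) ** 2) + lam * np.sum(np.abs(x))

rng = np.random.default_rng(0)
A = rng.standard_normal((20, 50)) / np.sqrt(20)  # stand-in sensing matrix (assumed)
x_true = np.zeros(50)
x_true[[3, 17, 41]] = [1.0, -1.0, 0.5]           # hypothetical sparse parameters
y = A @ x_true                                   # noise-free measurements
x_hat = ista(A, y, lam=0.05)
```

LISTA, as summarized in the abstract, treats a fixed number of these iterations as network layers and learns their parameters from data, which is why it can reach a given accuracy in far fewer iterations than the fixed-step loop above.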
