Jonas Gonzalez-Billandon

ROS-LLM: A ROS framework for embodied AI with task feedback and structured reasoning

Jun 28, 2024

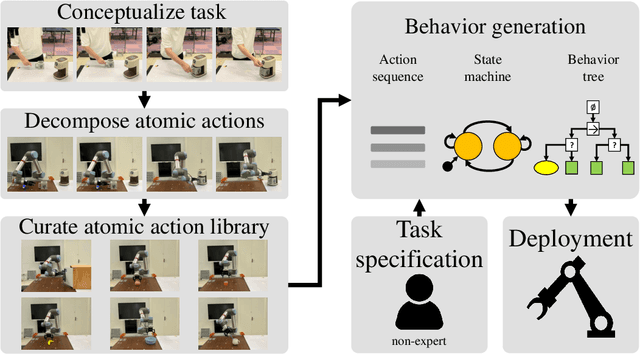

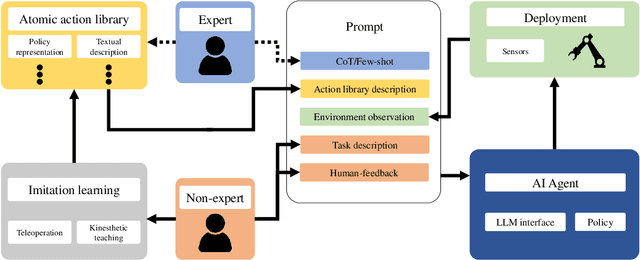

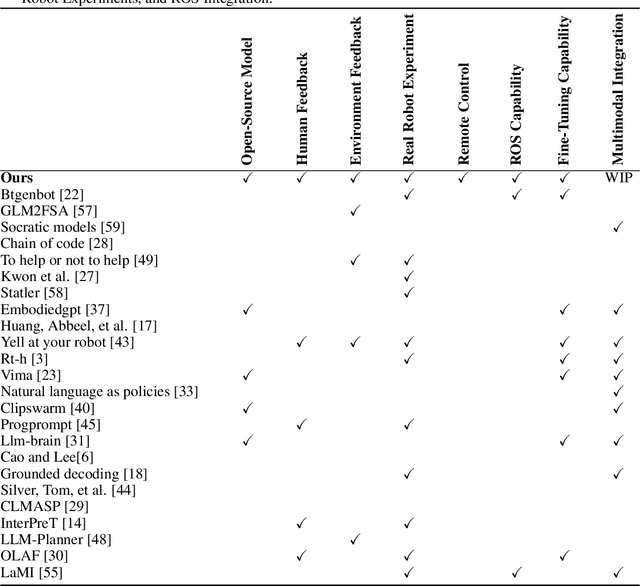

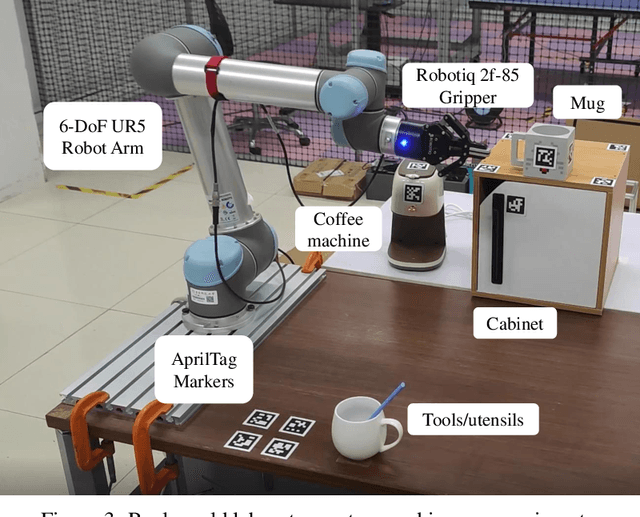

Abstract:We present a framework for intuitive robot programming by non-experts, leveraging natural language prompts and contextual information from the Robot Operating System (ROS). Our system integrates large language models (LLMs), enabling non-experts to articulate task requirements to the system through a chat interface. Key features of the framework include: integration of ROS with an AI agent connected to a plethora of open-source and commercial LLMs, automatic extraction of a behavior from the LLM output and execution of ROS actions/services, support for three behavior modes (sequence, behavior tree, state machine), imitation learning for adding new robot actions to the library of possible actions, and LLM reflection via human and environment feedback. Extensive experiments validate the framework, showcasing robustness, scalability, and versatility in diverse scenarios, including long-horizon tasks, tabletop rearrangements, and remote supervisory control. To facilitate the adoption of our framework and support the reproduction of our results, we have made our code open-source. You can access it at: https://github.com/huawei-noah/HEBO/tree/master/ROSLLM.

A call for embodied AI

Feb 06, 2024Abstract:We propose Embodied AI as the next fundamental step in the pursuit of Artificial General Intelligence, juxtaposing it against current AI advancements, particularly Large Language Models. We traverse the evolution of the embodiment concept across diverse fields - philosophy, psychology, neuroscience, and robotics - to highlight how EAI distinguishes itself from the classical paradigm of static learning. By broadening the scope of Embodied AI, we introduce a theoretical framework based on cognitive architectures, emphasizing perception, action, memory, and learning as essential components of an embodied agent. This framework is aligned with Friston's active inference principle, offering a comprehensive approach to EAI development. Despite the progress made in the field of AI, substantial challenges, such as the formulation of a novel AI learning theory and the innovation of advanced hardware, persist. Our discussion lays down a foundational guideline for future Embodied AI research. Highlighting the importance of creating Embodied AI agents capable of seamless communication, collaboration, and coexistence with humans and other intelligent entities within real-world environments, we aim to steer the AI community towards addressing the multifaceted challenges and seizing the opportunities that lie ahead in the quest for AGI.

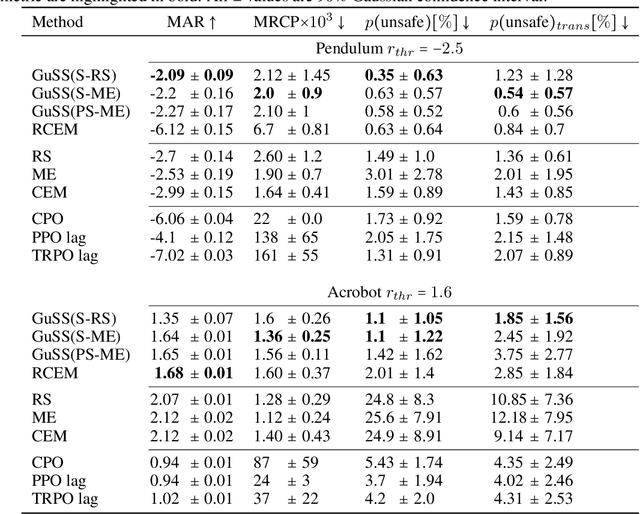

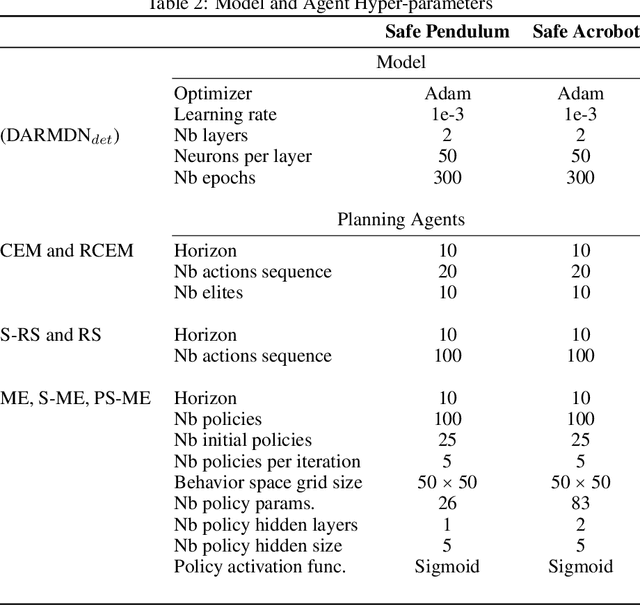

Guided Safe Shooting: model based reinforcement learning with safety constraints

Jun 20, 2022

Abstract:In the last decade, reinforcement learning successfully solved complex control tasks and decision-making problems, like the Go board game. Yet, there are few success stories when it comes to deploying those algorithms to real-world scenarios. One of the reasons is the lack of guarantees when dealing with and avoiding unsafe states, a fundamental requirement in critical control engineering systems. In this paper, we introduce Guided Safe Shooting (GuSS), a model-based RL approach that can learn to control systems with minimal violations of the safety constraints. The model is learned on the data collected during the operation of the system in an iterated batch fashion, and is then used to plan for the best action to perform at each time step. We propose three different safe planners, one based on a simple random shooting strategy and two based on MAP-Elites, a more advanced divergent-search algorithm. Experiments show that these planners help the learning agent avoid unsafe situations while maximally exploring the state space, a necessary aspect when learning an accurate model of the system. Furthermore, compared to model-free approaches, learning a model allows GuSS reducing the number of interactions with the real-system while still reaching high rewards, a fundamental requirement when handling engineering systems.

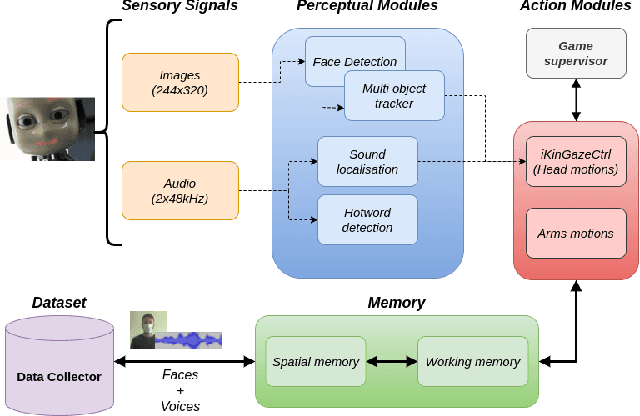

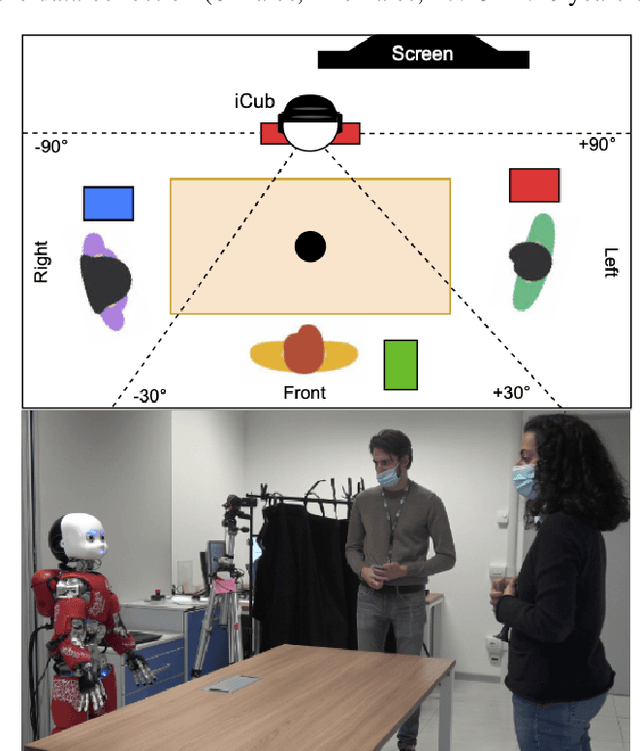

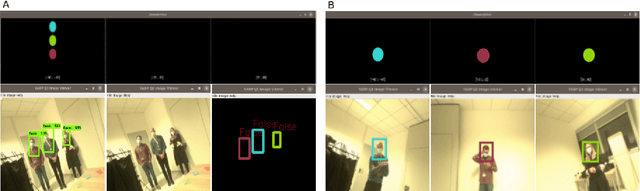

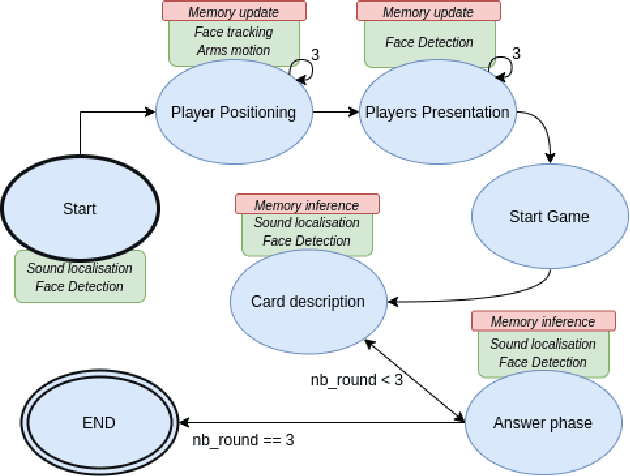

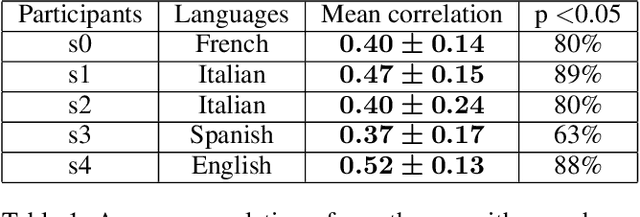

Cognitive architecture aided by working-memory for self-supervised multi-modal humans recognition

Mar 16, 2021

Abstract:The ability to recognize human partners is an important social skill to build personalized and long-term human-robot interactions, especially in scenarios like education, care-giving, and rehabilitation. Faces and voices constitute two important sources of information to enable artificial systems to reliably recognize individuals. Deep learning networks have achieved state-of-the-art results and demonstrated to be suitable tools to address such a task. However, when those networks are applied to different and unprecedented scenarios not included in the training set, they can suffer a drop in performance. For example, with robotic platforms in ever-changing and realistic environments, where always new sensory evidence is acquired, the performance of those models degrades. One solution is to make robots learn from their first-hand sensory data with self-supervision. This allows coping with the inherent variability of the data gathered in realistic and interactive contexts. To this aim, we propose a cognitive architecture integrating low-level perceptual processes with a spatial working memory mechanism. The architecture autonomously organizes the robot's sensory experience into a structured dataset suitable for human recognition. Our results demonstrate the effectiveness of our architecture and show that it is a promising solution in the quest of making robots more autonomous in their learning process.

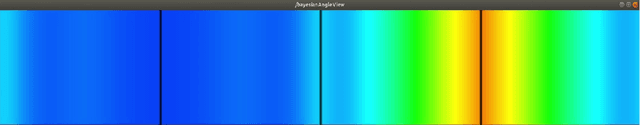

Self-supervised reinforcement learning for speaker localisation with the iCub humanoid robot

Nov 12, 2020

Abstract:In the future robots will interact more and more with humans and will have to communicate naturally and efficiently. Automatic speech recognition systems (ASR) will play an important role in creating natural interactions and making robots better companions. Humans excel in speech recognition in noisy environments and are able to filter out noise. Looking at a person's face is one of the mechanisms that humans rely on when it comes to filtering speech in such noisy environments. Having a robot that can look toward a speaker could benefit ASR performance in challenging environments. To this aims, we propose a self-supervised reinforcement learning-based framework inspired by the early development of humans to allow the robot to autonomously create a dataset that is later used to learn to localize speakers with a deep learning network.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge