Jibo Wei

Theoretical Analysis of Deep Neural Networks in Physical Layer Communication

Feb 21, 2022

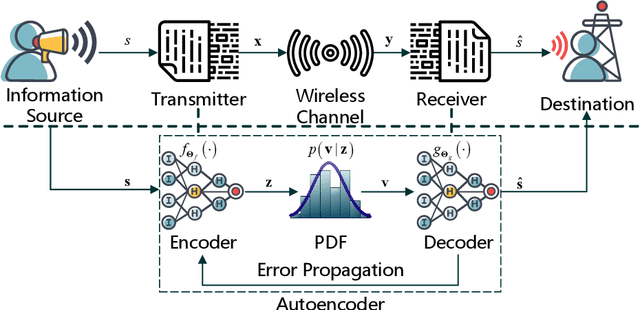

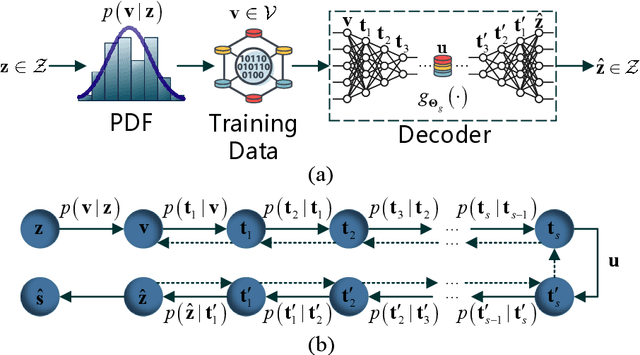

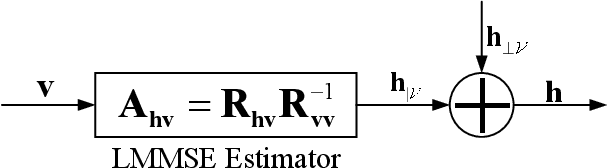

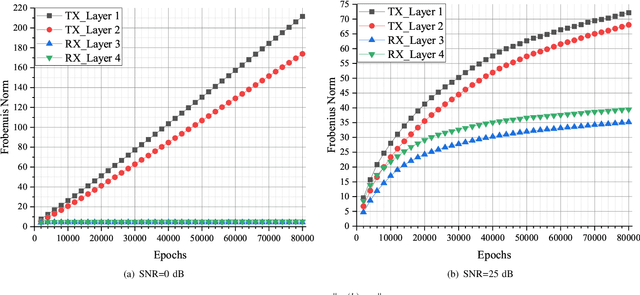

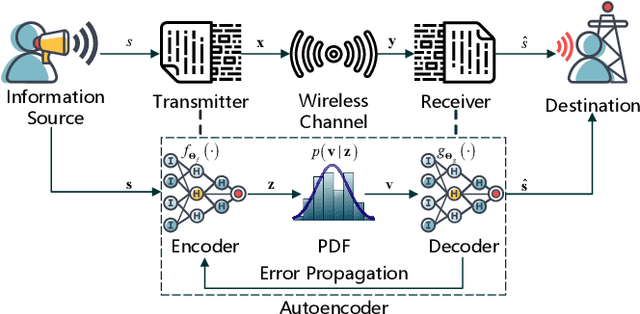

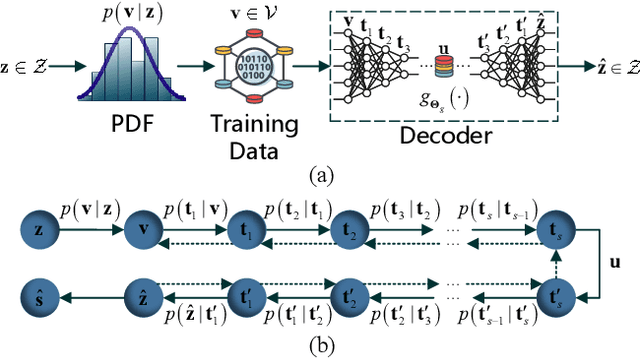

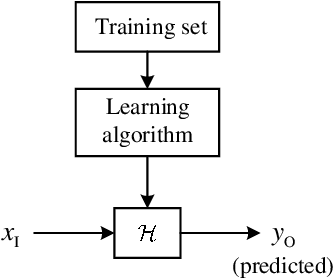

Abstract: Recently, deep neural network (DNN)-based physical layer communication techniques have attracted considerable interest. Although their potential to enhance communication systems and their superb performance have been validated by simulation experiments, little attention has been paid to theoretical analysis. Specifically, most studies in the physical layer have focused on applying DNN models to wireless communication problems rather than on theoretically understanding how a DNN works in a communication system. In this paper, we aim to quantitatively analyze why DNNs can achieve performance comparable to traditional techniques in the physical layer, and also derive their cost in terms of computational complexity. To achieve this goal, we first analyze the encoding performance of a DNN-based transmitter and compare it to a traditional one. Second, we theoretically analyze the performance of a DNN-based estimator and compare it with traditional estimators. Third, we investigate and validate how information flows in a DNN-based communication system under information-theoretic concepts. Our analysis develops a concise way to open the "black box" of DNNs in physical layer communication, which can be applied to support the design of DNN-based intelligent communication techniques and help to provide explainable performance assessment.

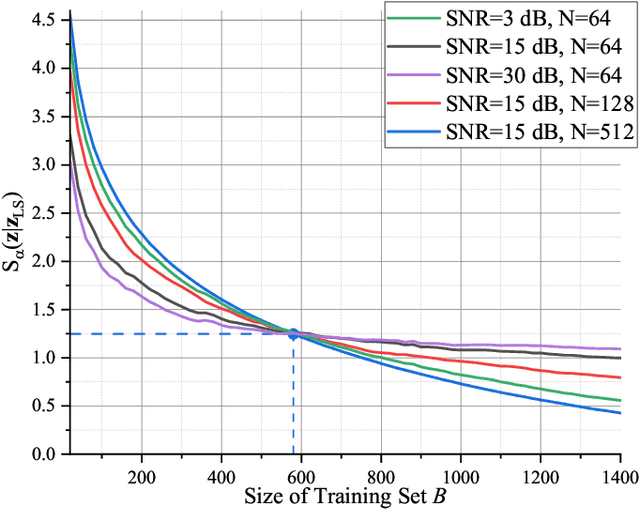

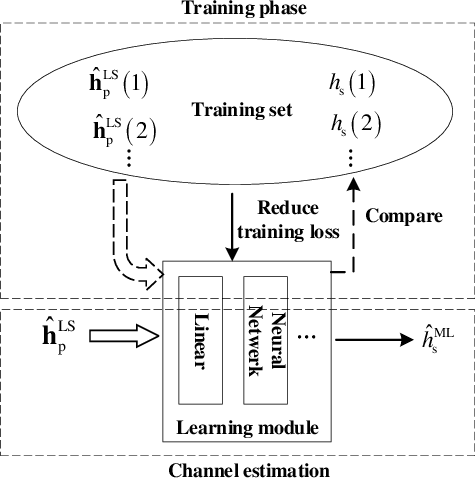

A Low Complexity Learning-based Channel Estimation for OFDM Systems with Online Training

Jul 14, 2021

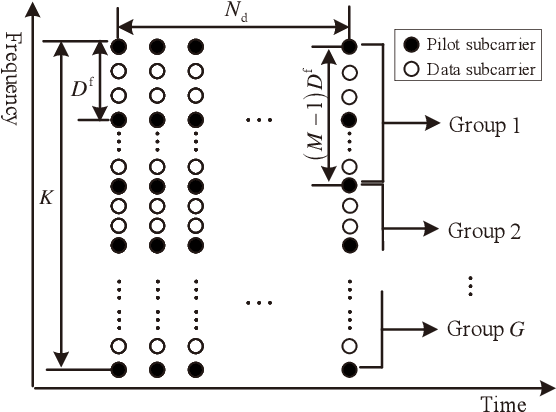

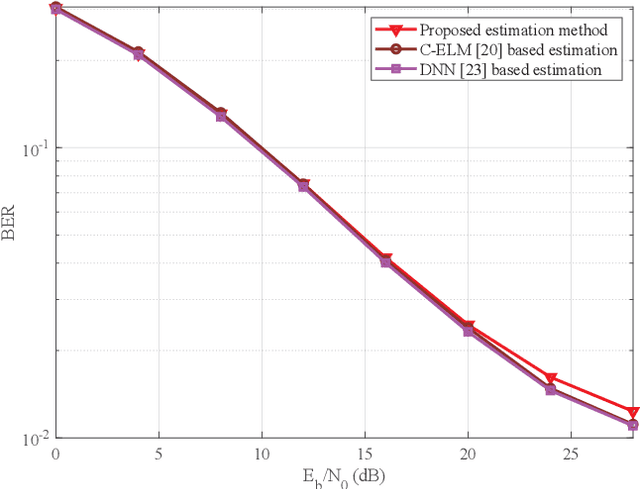

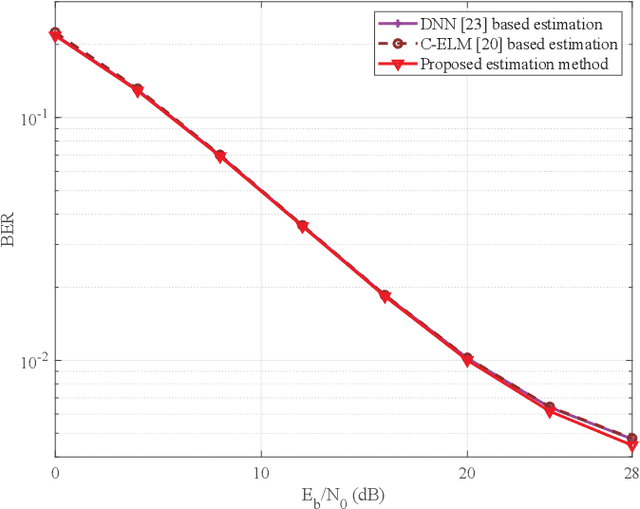

Abstract: In this paper, we devise a highly efficient machine learning-based channel estimation method for orthogonal frequency division multiplexing (OFDM) systems, in which the training of the estimator is performed online. A simple learning module is employed for the proposed learning-based estimator. The training process is thus much faster and the required amount of training data is reduced significantly. In addition, a training data construction approach utilizing least-squares (LS) estimation results is proposed so that the training data can be collected during data transmission. The feasibility of this novel construction approach is verified by theoretical analysis and simulations. Based on this construction approach, two alternative training data generation schemes are proposed. One scheme transmits additional block pilot symbols to create training data, while the other adopts a decision-directed method and does not require extra pilot overhead. Simulation results show the robustness of the proposed channel estimation method. Furthermore, the proposed method adapts better to practical imperfections than conventional minimum mean-square error (MMSE) channel estimation. It also outperforms existing machine learning-based channel estimation techniques under varying channel conditions.
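As a rough illustration of the LS estimation results that the abstract uses as raw material for training data, the sketch below computes a per-subcarrier LS estimate from known pilots. It is a minimal sketch, not the paper's construction approach: the QPSK pilot set, the eight-subcarrier block, the Gaussian channel taps, and the noise level are all assumptions chosen for illustration.

```python
import cmath
import random


def ls_channel_estimate(received_pilots, sent_pilots):
    """Per-subcarrier least-squares estimate: H_ls[k] = Y[k] / X[k]."""
    return [y / x for y, x in zip(received_pilots, sent_pilots)]


random.seed(0)
num_subcarriers = 8  # hypothetical block size

# Hypothetical unit-power QPSK pilot symbols.
qpsk = [cmath.exp(1j * cmath.pi * (2 * m + 1) / 4) for m in range(4)]
pilots = [random.choice(qpsk) for _ in range(num_subcarriers)]

# Hypothetical frequency-domain channel coefficients (not a fading model from the paper).
true_channel = [
    complex(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(num_subcarriers)
]

# Received pilots: Y[k] = H[k] * X[k] + noise.
noise_std = 0.01
received = [
    h * x + complex(random.gauss(0, noise_std), random.gauss(0, noise_std))
    for h, x in zip(true_channel, pilots)
]

estimate = ls_channel_estimate(received, pilots)
max_error = max(abs(e - h) for e, h in zip(estimate, true_channel))
print("largest LS estimation error:", max_error)
```

Because the pilots here have unit magnitude, the LS error on each subcarrier equals the noise magnitude on that subcarrier, which is why LS estimates are cheap but noisy inputs for any later learning stage.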

Cooperative Multi-Agent Reinforcement Learning Based Distributed Dynamic Spectrum Access in Cognitive Radio Networks

Jun 17, 2021

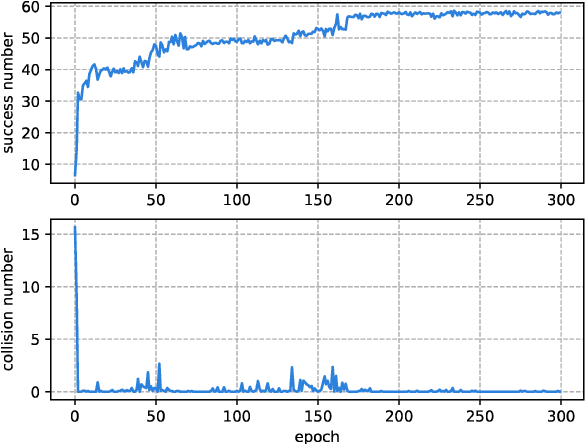

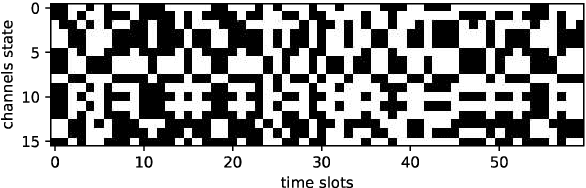

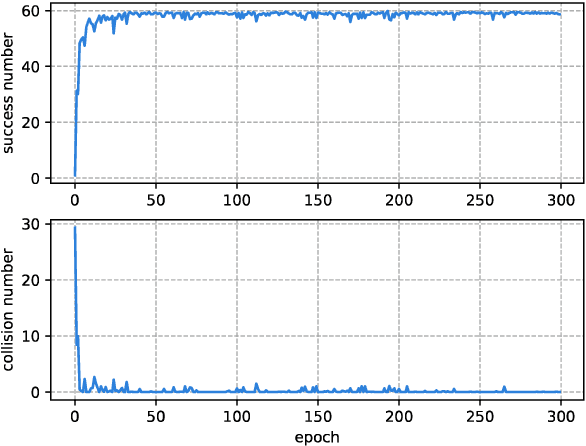

Abstract: With the development of 5G and the Internet of Things, large numbers of wireless devices need to share limited spectrum resources. Dynamic spectrum access (DSA) is a promising paradigm to remedy the inefficient spectrum utilization brought about by the historical command-and-control approach to spectrum allocation. In this paper, we investigate the distributed DSA problem for multiple users in a typical multi-channel cognitive radio network. The problem is formulated as a decentralized partially observable Markov decision process (Dec-POMDP), and we propose a centralized off-line training and distributed on-line execution framework based on cooperative multi-agent reinforcement learning (MARL). We employ the deep recurrent Q-network (DRQN) to address the partial observability of the state for each cognitive user. The ultimate goal is to learn a cooperative strategy that maximizes the sum throughput of the cognitive radio network in a distributed fashion, without exchanging coordination information between cognitive users. Finally, we validate the proposed algorithm in various settings through extensive experiments. The simulation results show that the proposed algorithm converges quickly and achieves near-optimal performance.
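The cooperative objective in this abstract, the sum throughput of the cognitive radio network, can be sketched as a shared reward function. The collision model below is an assumption made for illustration and is not taken from the paper: a cognitive user succeeds only if it is the sole cognitive user on its channel and that channel is not occupied by a primary user, and each success counts as one unit of throughput. The function name and arguments are hypothetical.

```python
from collections import Counter


def sum_throughput(actions, busy_channels):
    """Count successful transmissions in one time slot.

    actions: one entry per cognitive user, a channel index or None (stay idle).
    busy_channels: set of channel indices occupied by primary users.
    Assumed rule: a transmission succeeds only without collision of any kind.
    """
    counts = Counter(a for a in actions if a is not None)
    return sum(
        1
        for a in actions
        if a is not None and counts[a] == 1 and a not in busy_channels
    )


# Users 1 and 2 collide on channel 1; user 3 stays idle; channel 2 is busy.
print(sum_throughput([0, 1, 1, None], {2}))
# User 2 picks the busy channel 2, so only users 0 and 1 succeed.
print(sum_throughput([0, 1, 2], {2}))
```

Giving every agent this same scalar as its reward is one simple way to make the learning problem cooperative, since no agent gains by causing a collision.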

Opening the Black Box of Deep Neural Networks in Physical Layer Communication

Jun 06, 2021

Abstract: Deep neural network (DNN)-based physical layer techniques are attracting considerable interest due to their potential to enhance communication systems. However, most studies in the physical layer have focused on applying DNN models to wireless communication problems rather than on theoretically understanding how a DNN works in a communication system. In this letter, we aim to quantitatively analyse why DNNs can achieve performance comparable to traditional techniques in the physical layer, and their cost in terms of computational complexity. We further investigate and experimentally validate how information flows in a DNN-based communication system under information-theoretic concepts.

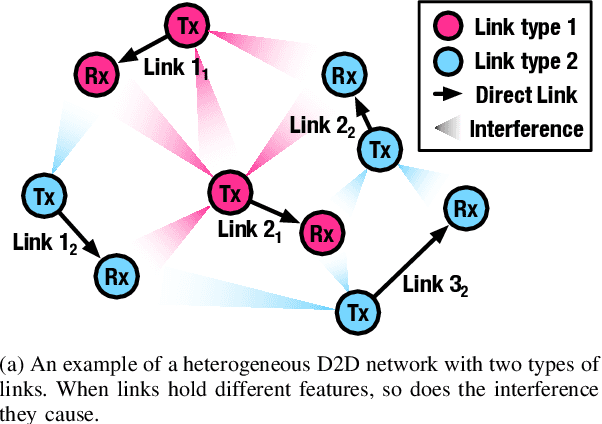

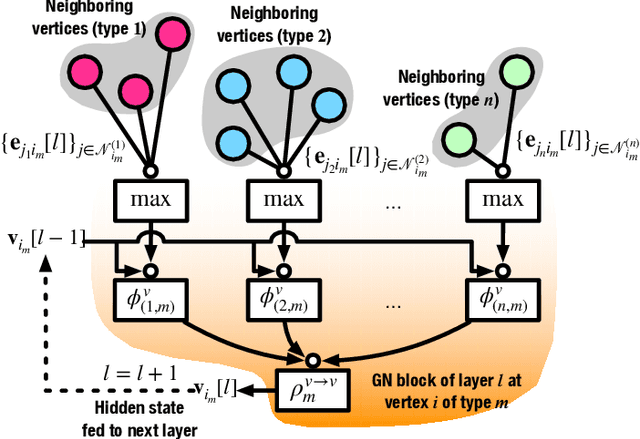

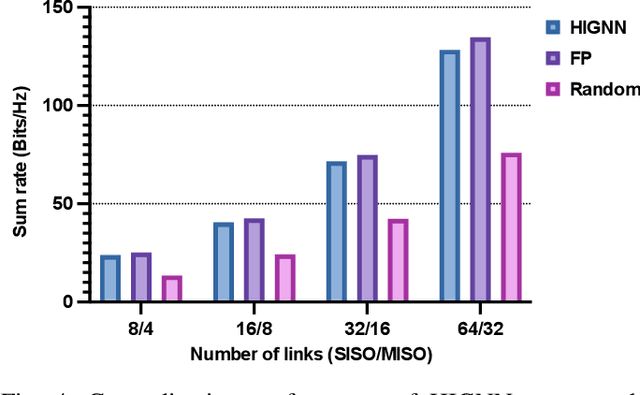

Scalable Power Control/Beamforming in Heterogeneous Wireless Networks with Graph Neural Networks

Apr 12, 2021

Abstract: Machine learning (ML) has been widely used for efficient resource allocation (RA) in wireless networks. Although superb performance is achieved on small and simple networks, most existing ML-based approaches run into difficulties when the network is heterogeneous and its size grows. In this paper, focusing specifically on power control/beamforming (PC/BF) in heterogeneous device-to-device (D2D) networks, we propose a novel unsupervised learning-based framework named heterogeneous interference graph neural network (HIGNN) to handle these challenges. First, we characterize diversified link features and interference relations with heterogeneous graphs. Then, HIGNN is proposed to enable each link to obtain its individual transmission scheme after limited information exchange with neighboring links. Notably, after being trained on small-sized networks, HIGNN scales to wireless networks of growing size with robust performance. Numerical results show that, compared with state-of-the-art benchmarks, HIGNN achieves much higher execution efficiency while providing strong performance.

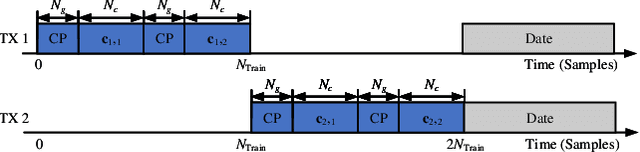

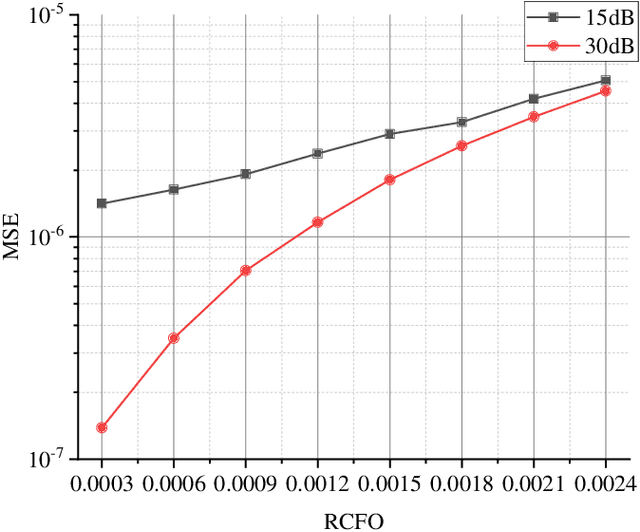

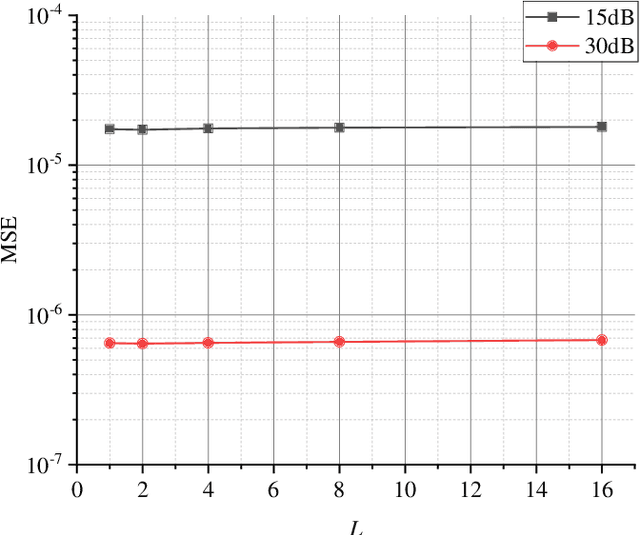

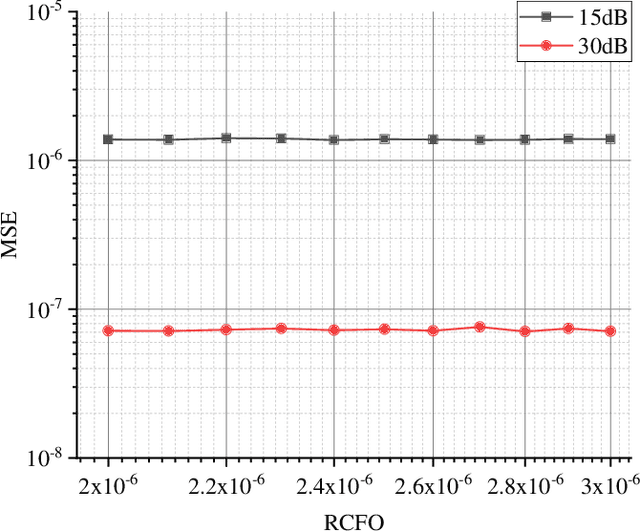

Fine Timing and Frequency Synchronization for MIMO-OFDM: An Extreme Learning Approach

Jul 17, 2020

Abstract: Multiple-input multiple-output orthogonal frequency-division multiplexing (MIMO-OFDM) is a key technology component in the evolution towards next-generation communication, in which the accuracy of timing and frequency synchronization significantly impacts overall system performance. In this paper, we propose a novel scheme leveraging the extreme learning machine (ELM) to achieve high-precision timing and frequency synchronization. Specifically, two ELMs are incorporated into a traditional MIMO-OFDM system to estimate both the residual symbol timing offset (RSTO) and the residual carrier frequency offset (RCFO). The simulation results show that the ELM-based synchronization scheme outperforms the traditional method under both additive white Gaussian noise (AWGN) and frequency-selective fading channels. Finally, the proposed method is robust to the choice of channel parameters (e.g., number of paths) and also in terms of "generalization ability" from a machine learning standpoint.
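For readers unfamiliar with the ELM named in this abstract, the sketch below shows the generic ELM recipe: a hidden layer with fixed random weights, followed by output weights solved in closed form by least squares. It is a minimal sketch under stated assumptions, not the paper's synchronizer: the one-dimensional sine regression target stands in for the RSTO/RCFO estimation task, and the hidden size, the tanh activation, and the helper names `train_elm` and `predict_elm` are all hypothetical choices.

```python
import numpy as np


def train_elm(inputs, targets, num_hidden, rng):
    """Fit an ELM: random hidden layer, least-squares output layer."""
    # Hidden-layer parameters are drawn once and never trained.
    input_weights = rng.standard_normal((inputs.shape[1], num_hidden))
    biases = rng.standard_normal(num_hidden)
    hidden = np.tanh(inputs @ input_weights + biases)
    # Output weights via the Moore-Penrose pseudo-inverse of the hidden outputs.
    output_weights = np.linalg.pinv(hidden) @ targets
    return input_weights, biases, output_weights


def predict_elm(model, inputs):
    """Apply a trained ELM to new inputs."""
    input_weights, biases, output_weights = model
    return np.tanh(inputs @ input_weights + biases) @ output_weights


rng = np.random.default_rng(0)

# Toy stand-in task (assumption): learn y = sin(pi * x) on [-1, 1].
x_train = rng.uniform(-1, 1, size=(200, 1))
y_train = np.sin(np.pi * x_train)
model = train_elm(x_train, y_train, num_hidden=30, rng=rng)

x_test = np.linspace(-0.9, 0.9, 50).reshape(-1, 1)
test_mse = float(np.mean((predict_elm(model, x_test) - np.sin(np.pi * x_test)) ** 2))
print("test MSE:", test_mse)
```

Training reduces to a single pseudo-inverse, with no iterative gradient descent, which is the property that makes ELMs attractive when training time matters.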

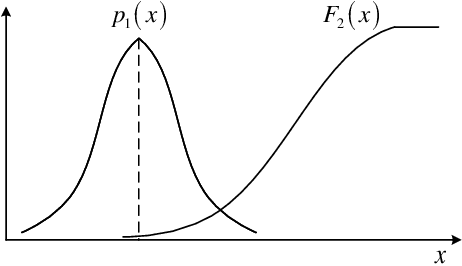

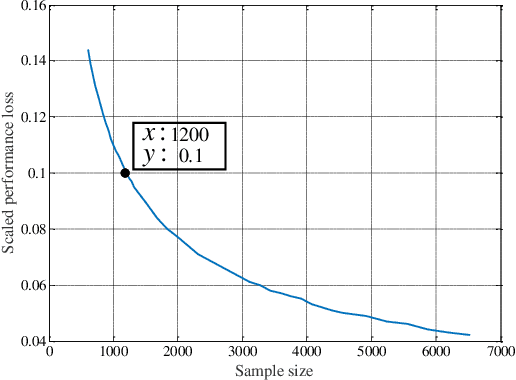

Machine Learning Based Channel Estimation: A Computational Approach for Universal Channel Conditions

Nov 10, 2019

Abstract: Recently, machine learning has been introduced in communications to deal with channel estimation. Under non-linear system models, the superiority of machine learning based estimation has been demonstrated by simulation experiments, but the theoretical analysis is insufficient, since the performance of machine learning, especially deep learning, is hard to analyze. This paper focuses on some theoretical problems in machine learning based channel estimation. As a data-driven method, machine learning based estimation requires a certain amount of training data to be workable, and this requirement is analyzed qualitatively from a statistical viewpoint in this paper. To deduce the exact sample size, we build a statistical model that ignores the exact structure of the learning module, and then derive the relationship between sample size and learning performance. To verify our analysis, we employ machine learning based channel estimation in an OFDM system and apply two typical neural networks as the learning module: a single-layer (linear) structure and a three-layer structure. The simulation results show that the analytical sample size is correct when the input dimension and the complexity of the learning module are low; otherwise, the true required sample size is larger than the analytical result, since the influence of these two factors is not considered in the sample size analysis. We also simulate the performance of machine learning based channel estimation under a quasi-stationary channel condition, where the explicit form of MMSE estimation is hard to obtain, and the simulation results demonstrate the effectiveness and convenience of machine learning based channel estimation under complex channel models.

Deep Neural Network Aided Scenario Identification in Wireless Multi-path Fading Channels

Nov 23, 2018

Abstract: This letter presents our preliminary work on deep neural networks (DNNs) for wireless communication scenario identification in wireless multi-path fading channels. Six kinds of channel scenarios based on the COST 207 channel model are considered. An identification accuracy of 100% is observed for signal-to-noise ratios (SNRs) above 20 dB, whereas an average accuracy of 88.4% is obtained for SNRs ranging from 0 dB to 40 dB. The proposed method has been tested under fast time-varying conditions similar to real-world wireless multi-path fading channels, which indicates that it is feasible for practical scenario identification.
