Jianing Wei

Simulating Financial Market via Large Language Model based Agents

Jun 28, 2024

Abstract: Most economic theories assume that financial market participants are fully rational individuals and use mathematical models to simulate human behavior in financial markets. However, human behavior is often not entirely rational and is challenging to predict accurately with mathematical models. In this paper, we propose the Agent-based Simulated Financial Market (ASFM), which first constructs a simulated stock market with a real order matching system. We then propose a large language model based agent as the stock trader, which contains a profile module, an observation module, and a tool-learning based action module. The trading agent can comprehensively understand current market dynamics and financial policy information, and make decisions that align with its trading strategy. In the experiments, we first verify that the reactions of ASFM are consistent with the real stock market in two controllable scenarios. We also conduct experiments in two popular economics research directions, and find that the conclusions drawn in ASFM align with the preliminary findings in economics research. Based on these observations, we believe ASFM provides a new paradigm for economic research.
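The abstract mentions an order matching system but gives no details of it. As a rough illustration only, the sketch below assumes a conventional price-time priority limit order book; the `OrderBook` class, its `submit` method, and the rule that trades execute at the resting order's price are hypothetical choices, not the ASFM implementation.

```python
import heapq
from itertools import count


class OrderBook:
    """Hypothetical price-time priority limit order book (not the ASFM code)."""

    def __init__(self):
        self._bids = []  # heap of [-price, arrival, qty]: best bid = highest price
        self._asks = []  # heap of [price, arrival, qty]: best ask = lowest price
        self._arrival = count()  # tie-breaker giving earlier orders priority

    def submit(self, side, price, qty):
        """Match an incoming limit order; return the trades as (price, qty) pairs."""
        if side not in ("buy", "sell"):
            raise ValueError("side must be 'buy' or 'sell'")
        own, opposite = (
            (self._bids, self._asks) if side == "buy" else (self._asks, self._bids)
        )
        trades = []
        while qty > 0 and opposite:
            key, _, resting_qty = opposite[0]
            resting_price = key if side == "buy" else -key
            crossed = (
                price >= resting_price if side == "buy" else price <= resting_price
            )
            if not crossed:
                break
            fill = min(qty, resting_qty)
            trades.append((resting_price, fill))  # assumed: trade at resting price
            qty -= fill
            if fill == resting_qty:
                heapq.heappop(opposite)
            else:
                opposite[0][2] -= fill  # partial fill keeps its queue position
        if qty > 0:
            # Any unmatched remainder rests in the book on its own side.
            own_key = -price if side == "buy" else price
            heapq.heappush(own, [own_key, next(self._arrival), qty])
        return trades


book = OrderBook()
book.submit("sell", 10.0, 5)
book.submit("sell", 10.5, 5)
fills = book.submit("buy", 10.5, 7)  # sweeps the 10.0 ask, then part of the 10.5 ask
```

In a setup like this, each trading agent's decision would reduce to a `submit` call, and the resulting trades would define the simulated price series.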

Enhanced Semantic Segmentation Pipeline for WeatherProof Dataset Challenge

Jun 07, 2024

Abstract: This report describes the winning solution to the WeatherProof Dataset Challenge (CVPR 2024 UG2+ Track 3). Details regarding the challenge are available at https://cvpr2024ug2challenge.github.io/track3.html. We propose an enhanced semantic segmentation pipeline for this challenge. First, we improve the semantic segmentation models: we use a backbone pretrained with Depth Anything to improve the UperNet and SETRMLA models, and add language guidance based on both weather and category information to the InternImage model. Second, we introduce a new dataset, WeatherProofExtra, with a wider viewing angle, and employ data augmentation methods including adverse weather and super-resolution. Finally, effective training strategies and an ensemble method are applied to further improve final performance. Our solution is ranked 1st on the final leaderboard. Code will be available at https://github.com/KaneiGi/WeatherProofChallenge.

The Third Monocular Depth Estimation Challenge

Apr 27, 2024

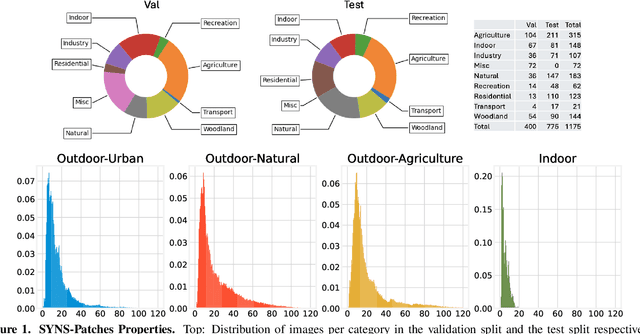

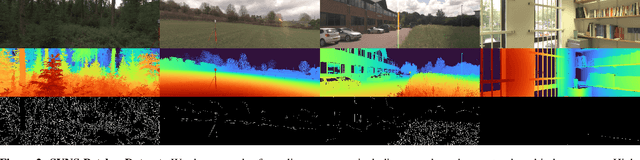

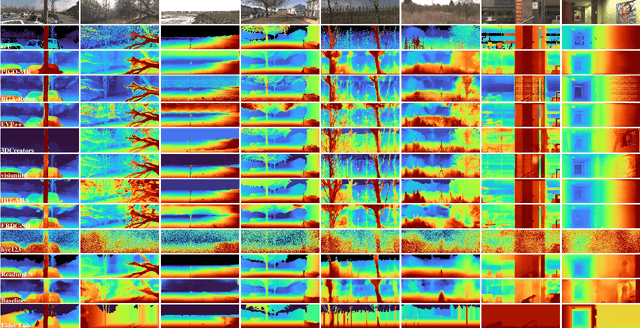

Abstract: This paper discusses the results of the third edition of the Monocular Depth Estimation Challenge (MDEC). The challenge focuses on zero-shot generalization to the challenging SYNS-Patches dataset, featuring complex scenes in natural and indoor settings. As with the previous edition, methods can use any form of supervision, i.e. supervised or self-supervised. The challenge received a total of 19 submissions outperforming the baseline on the test set; 10 of them submitted a report describing their approach, highlighting the widespread use of foundation models such as Depth Anything at the core of their methods. The challenge winners drastically improved 3D F-Score performance, from 17.51% to 23.72%.
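The abstract reports a 3D F-Score without defining it. A common point-cloud formulation, assumed here, is the harmonic mean of precision (the share of predicted points within a distance threshold of some ground-truth point) and recall (the same in the other direction). The function name `f_score_3d` and the threshold value are hypothetical, and the brute-force search is only practical for small point sets.

```python
import math


def f_score_3d(pred_points, gt_points, threshold):
    """Harmonic mean of point-cloud precision and recall at a distance threshold."""
    if not pred_points or not gt_points:
        return 0.0

    def fraction_within(source, target):
        # Share of source points whose nearest target point is within the threshold.
        hits = sum(
            1
            for p in source
            if min(math.dist(p, q) for q in target) <= threshold
        )
        return hits / len(source)

    precision = fraction_within(pred_points, gt_points)
    recall = fraction_within(gt_points, pred_points)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


pred = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
gt = [(0.0, 0.0, 0.05), (5.0, 5.0, 5.0)]
score = f_score_3d(pred, gt, threshold=0.1)  # one of two points matches each way
```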

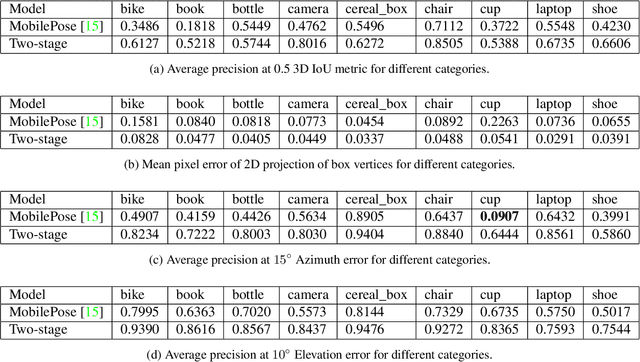

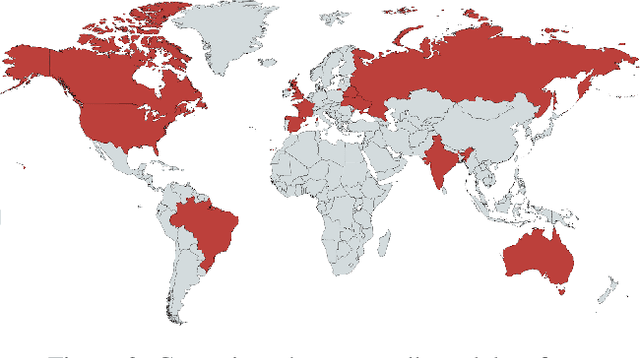

Objectron: A Large Scale Dataset of Object-Centric Videos in the Wild with Pose Annotations

Dec 18, 2020

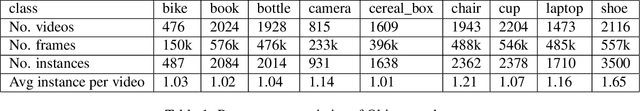

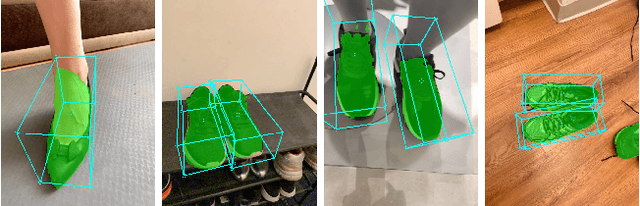

Abstract: 3D object detection has recently become popular due to many applications in robotics, augmented reality, autonomy, and image retrieval. We introduce the Objectron dataset to advance the state of the art in 3D object detection and foster new research and applications, such as 3D object tracking, view synthesis, and improved 3D shape representation. The dataset contains object-centric short videos with pose annotations for nine categories and includes 4 million annotated images in 14,819 annotated videos. We also propose a new evaluation metric, 3D Intersection over Union, for 3D object detection. We demonstrate the usefulness of our dataset in 3D object detection tasks by providing baseline models trained on this dataset. Our dataset and evaluation source code are available online at http://www.objectron.dev
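To make the idea of 3D Intersection over Union concrete, here is a minimal sketch for the simplified case of axis-aligned boxes. The abstract does not say how the metric is computed, and boxes with arbitrary rotation would need a more general intersection routine than this one. The function name and the `(xmin, ymin, zmin, xmax, ymax, zmax)` box layout are assumptions made for illustration.

```python
def iou_3d_axis_aligned(box_a, box_b):
    """3D IoU of two axis-aligned boxes given as (xmin, ymin, zmin, xmax, ymax, zmax)."""

    def volume(box):
        return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])

    intersection = 1.0
    for axis in range(3):
        low = max(box_a[axis], box_b[axis])
        high = min(box_a[axis + 3], box_b[axis + 3])
        if high <= low:
            return 0.0  # no overlap along this axis means no shared volume
        intersection *= high - low

    union = volume(box_a) + volume(box_b) - intersection
    return intersection / union


unit_cube = (0.0, 0.0, 0.0, 1.0, 1.0, 1.0)
shifted_cube = (0.5, 0.0, 0.0, 1.5, 1.0, 1.0)
overlap = iou_3d_axis_aligned(unit_cube, shifted_cube)  # 0.5 shared / 1.5 union
```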

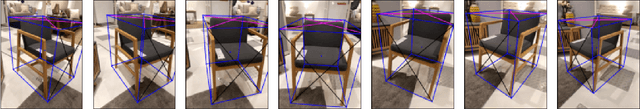

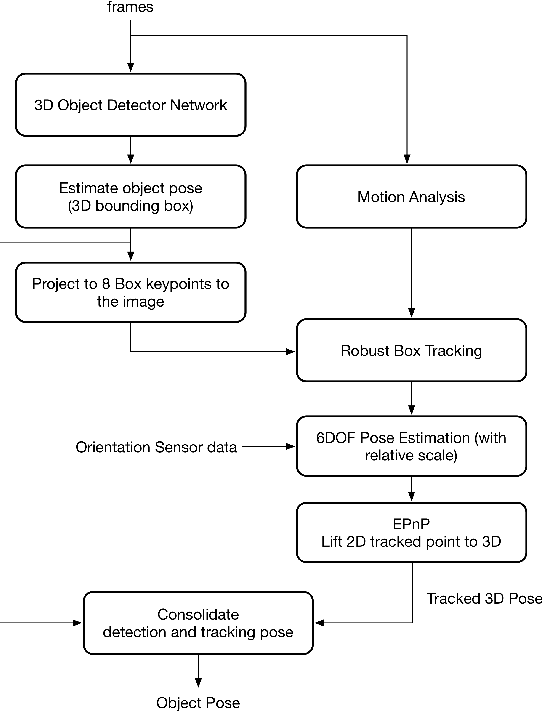

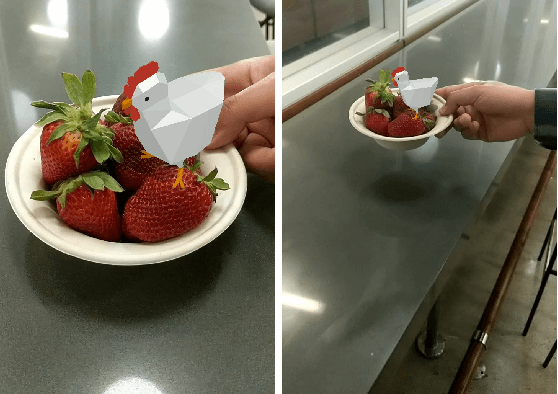

Instant 3D Object Tracking with Applications in Augmented Reality

Jun 23, 2020

Abstract: Tracking object poses in 3D is a crucial building block for Augmented Reality applications. We propose an instant motion tracking system that tracks an object's pose in space (represented by its 3D bounding box) in real-time on mobile devices. Our system does not require any prior sensory calibration or initialization to function. We employ a deep neural network to detect objects and estimate their initial 3D pose. The estimated pose is then tracked using a robust planar tracker. Our tracker is capable of performing relative-scale 9-DoF tracking in real-time on mobile devices. By efficiently combining the use of CPU and GPU, we achieve 26-FPS+ performance on mobile devices.

MobilePose: Real-Time Pose Estimation for Unseen Objects with Weak Shape Supervision

Mar 07, 2020

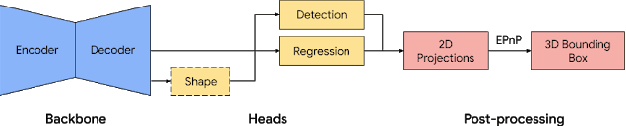

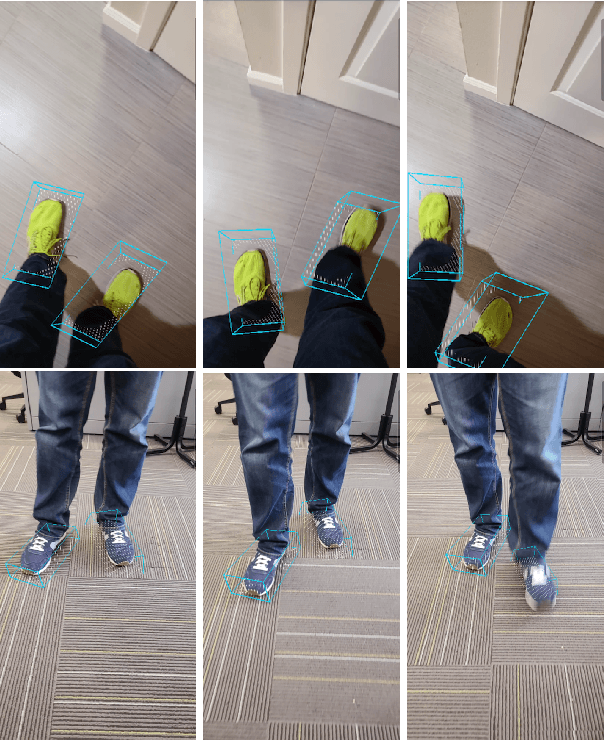

Abstract: In this paper, we address the problem of detecting unseen objects from RGB images and estimating their poses in 3D. We propose two mobile-friendly networks: MobilePose-Base and MobilePose-Shape. The former is used when there is only pose supervision, and the latter is for the case when shape supervision is available, even a weak one. We revisit shape features used in previous methods, including segmentation and coordinate maps. We explain when and why pixel-level shape supervision can improve pose estimation. Consequently, we add shape prediction as an intermediate layer in MobilePose-Shape, and let the network learn pose from shape. Our models are trained on mixed real and synthetic data, with weak and noisy shape supervision. They are ultra-lightweight and can run in real-time on modern mobile devices (e.g. 36 FPS on Galaxy S20). Compared with previous single-shot solutions, our method has higher accuracy while using a significantly smaller model (2~3% in model size or number of parameters).

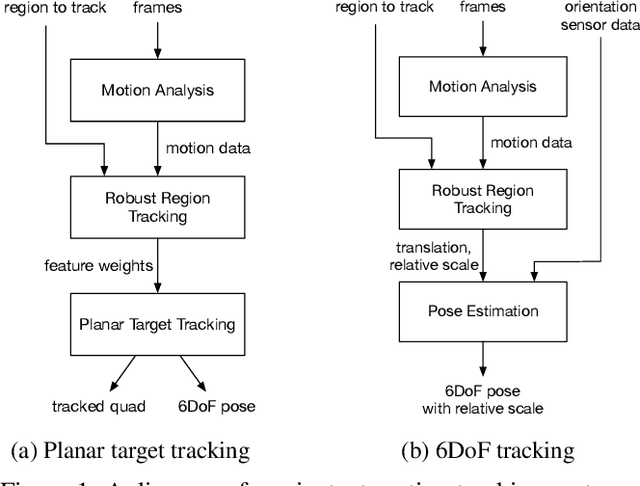

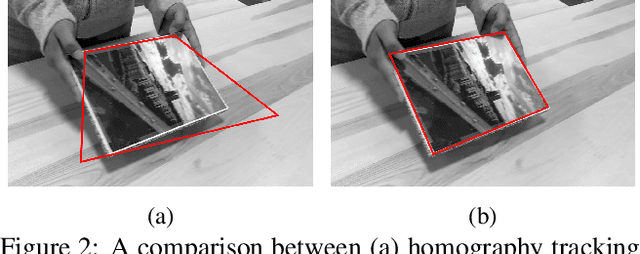

Instant Motion Tracking and Its Applications to Augmented Reality

Jul 16, 2019

Abstract: Augmented Reality (AR) brings immersive experiences to users. With recent advances in computer vision and mobile computing, AR has scaled across platforms and has seen increased adoption in major products. One of the key challenges in enabling AR features is proper anchoring of the virtual content to the real world, a process referred to as tracking. In this paper, we present a system for motion tracking, which is capable of robustly tracking planar targets and performing relative-scale 6DoF tracking without calibration. Our system runs in real-time on mobile phones and has been deployed in multiple major products on hundreds of millions of devices.

* CVPR Workshop on Computer Vision for Augmented and Virtual Reality, Long Beach, CA, 2019
