Jean Anne C. Incorvia

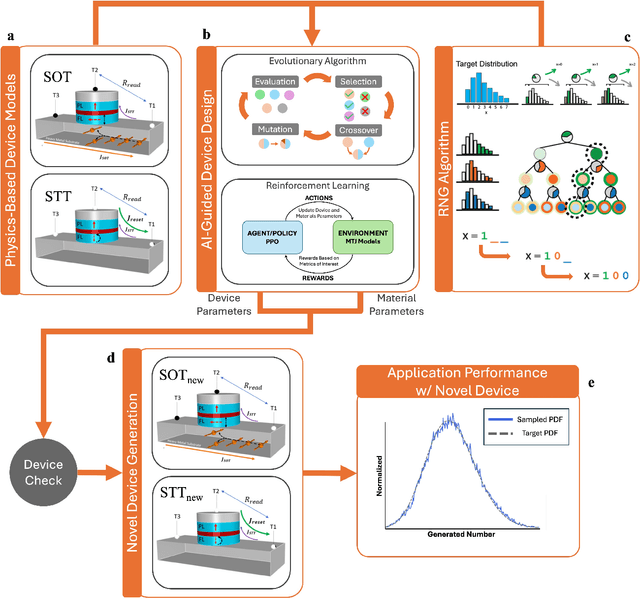

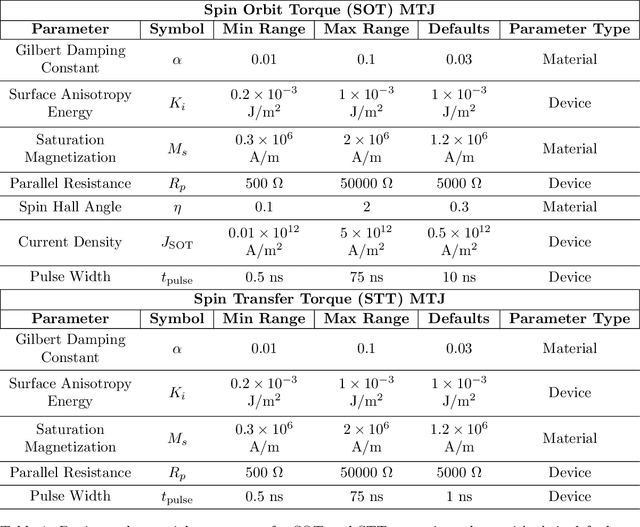

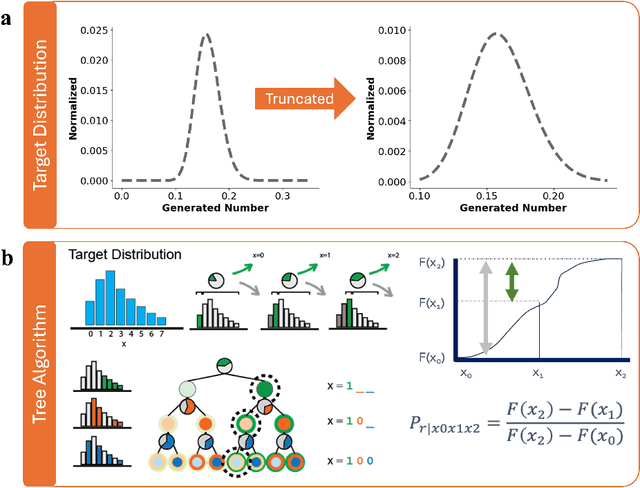

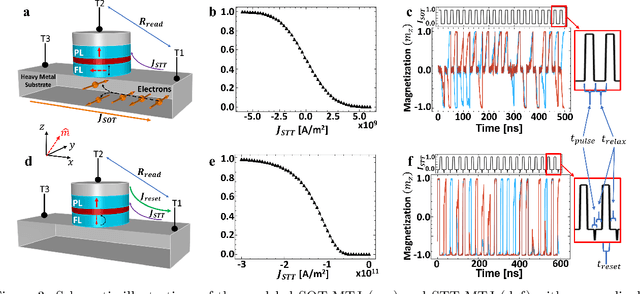

AI-Guided Codesign Framework for Novel Material and Device Design applied to MTJ-based True Random Number Generators

Nov 01, 2024

Abstract:Novel devices and novel computing paradigms are key for energy efficient, performant future computing systems. However, designing devices for new applications is often time consuming and tedious. Here, we investigate the design and optimization of spin orbit torque and spin transfer torque magnetic tunnel junction models as the probabilistic devices for true random number generation. We leverage reinforcement learning and evolutionary optimization to vary key device and material properties of the various device models for stochastic operation. Our AI guided codesign methods generated different candidate devices capable of generating stochastic samples for a desired probability distribution, while also minimizing energy usage for the devices.

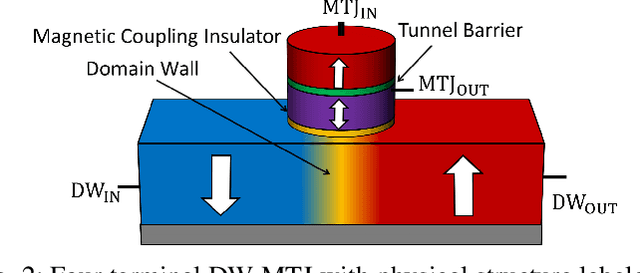

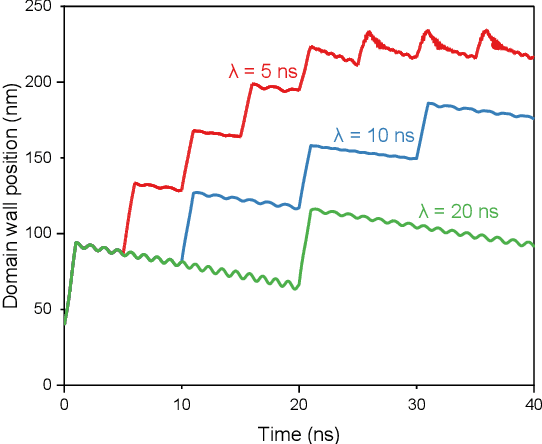

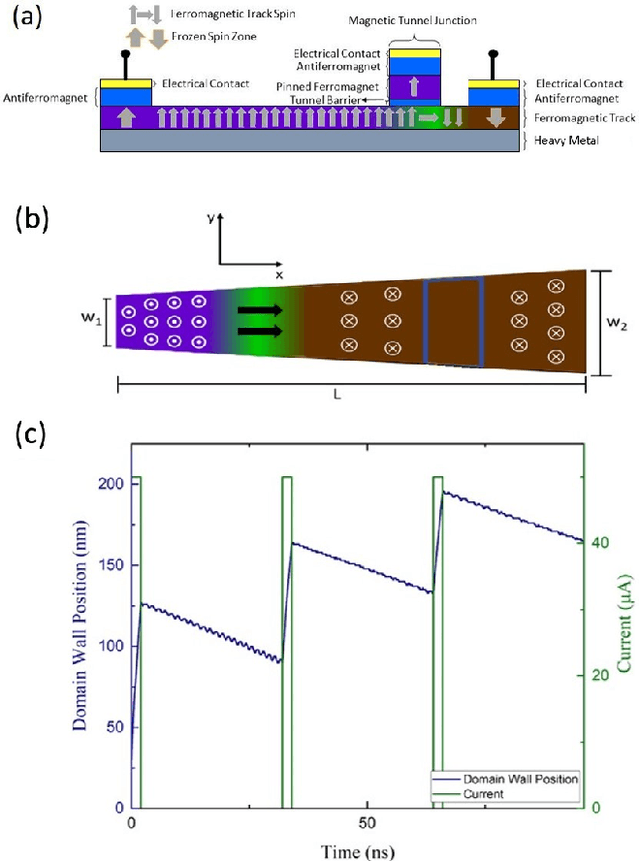

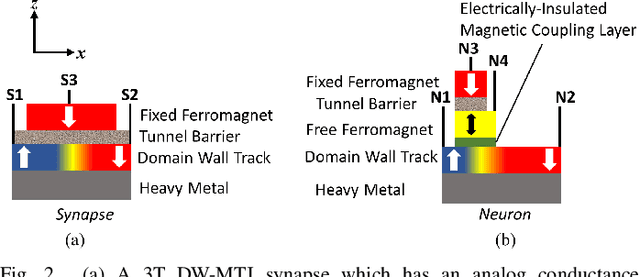

Domain Wall Magnetic Tunnel Junction Reliable Integrate and Fire Neuron

May 23, 2024

Abstract:In spiking neural networks, neuron dynamics are described by the biologically realistic integrate-and-fire model that captures membrane potential accumulation and above-threshold firing behaviors. Among the hardware implementations of integrate-and-fire neuron devices, one important feature, reset, has been largely ignored. Here, we present the design and fabrication of a magnetic domain wall and magnetic tunnel junction based artificial integrate-and-fire neuron device that achieves reliable reset at the end of the integrate-fire cycle. We demonstrate the domain propagation in the domain wall racetrack (integration), reading using a magnetic tunnel junction (fire), and reset as the domain is ejected from the racetrack, showing the artificial neuron can be operated continuously over 100 integrate-fire-reset cycles. Both pulse amplitude and pulse number encoding is demonstrated. The device data is applied on an image classification task using a spiking neural network and shown to have comparable performance to an ideal leaky, integrate-and-fire neural network. These results achieve the first demonstration of reliable integrate-fire-reset in domain wall-magnetic tunnel junction-based neuron devices and shows the promise of spintronics for neuromorphic computing.

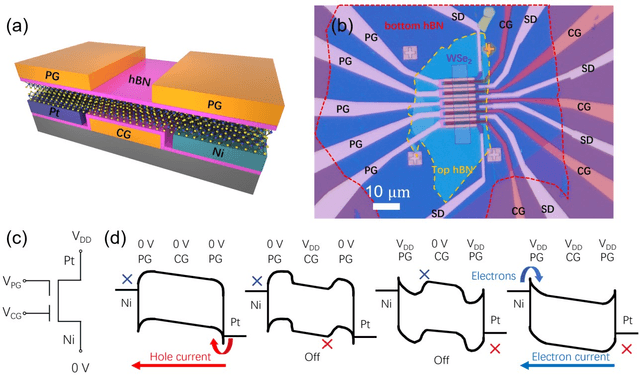

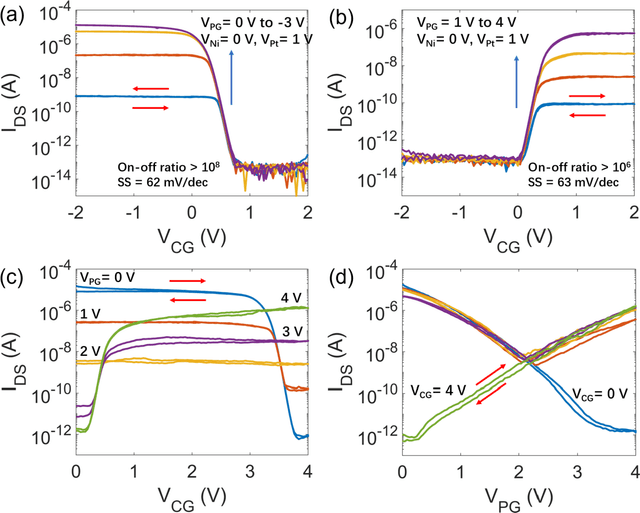

Cascaded Logic Gates Based on High-Performance Ambipolar Dual-Gate WSe2 Thin Film Transistors

May 02, 2023

Abstract:Ambipolar dual-gate transistors based on two-dimensional (2D) materials, such as graphene, carbon nanotubes, black phosphorus, and certain transition metal dichalcogenides (TMDs), enable reconfigurable logic circuits with suppressed off-state current. These circuits achieve the same logical output as CMOS with fewer transistors and offer greater flexibility in design. The primary challenge lies in the cascadability and power consumption of these logic gates with static CMOS-like connections. In this article, high-performance ambipolar dual-gate transistors based on tungsten diselenide (WSe2) are fabricated. A high on-off ratio of 10^8 and 10^6, a low off-state current of 100 to 300 fA, a negligible hysteresis, and an ideal subthreshold swing of 62 and 63 mV/dec are measured in the p- and n-type transport, respectively. For the first time, we demonstrate cascadable and cascaded logic gates using ambipolar TMD transistors with minimal static power consumption, including inverters, XOR, NAND, NOR, and buffers made by cascaded inverters. A thorough study of both the control gate and polarity gate behavior is conducted, which has previously been lacking. The noise margin of the logic gates is measured and analyzed. The large noise margin enables the implementation of VT-drop circuits, a type of logic with reduced transistor number and simplified circuit design. Finally, the speed performance of the VT-drop and other circuits built by dual-gate devices are qualitatively analyzed. This work lays the foundation for future developments in the field of ambipolar dual-gate TMD transistors, showing their potential for low-power, high-speed and more flexible logic circuits.

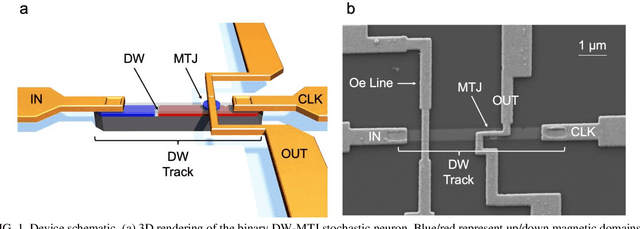

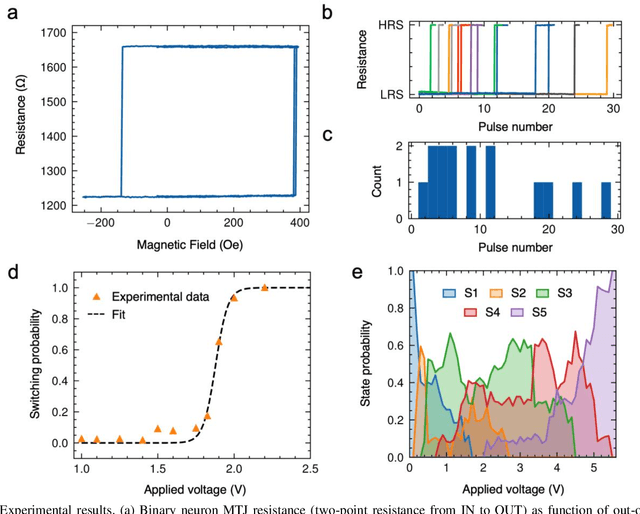

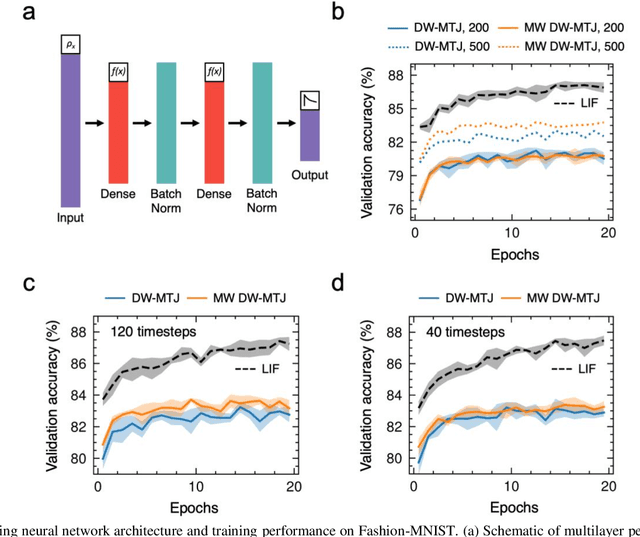

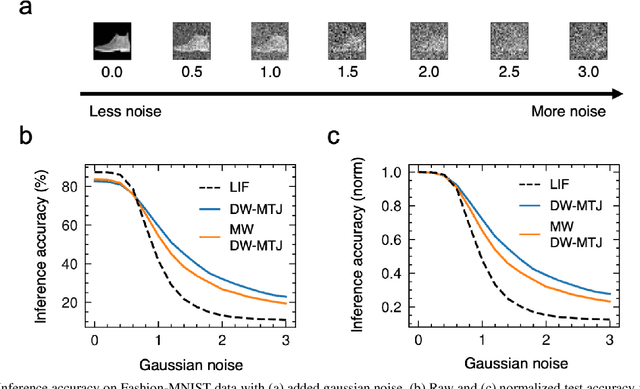

Stochastic Domain Wall-Magnetic Tunnel Junction Artificial Neurons for Noise-Resilient Spiking Neural Networks

Apr 10, 2023

Abstract:The spatiotemporal nature of neuronal behavior in spiking neural networks (SNNs) make SNNs promising for edge applications that require high energy efficiency. To realize SNNs in hardware, spintronic neuron implementations can bring advantages of scalability and energy efficiency. Domain wall (DW) based magnetic tunnel junction (MTJ) devices are well suited for probabilistic neural networks given their intrinsic integrate-and-fire behavior with tunable stochasticity. Here, we present a scaled DW-MTJ neuron with voltage-dependent firing probability. The measured behavior was used to simulate a SNN that attains accuracy during learning compared to an equivalent, but more complicated, multi-weight (MW) DW-MTJ device. The validation accuracy during training was also shown to be comparable to an ideal leaky integrate and fire (LIF) device. However, during inference, the binary DW-MTJ neuron outperformed the other devices after gaussian noise was introduced to the Fashion-MNIST classification task. This work shows that DW-MTJ devices can be used to construct noise-resilient networks suitable for neuromorphic computing on the edge.

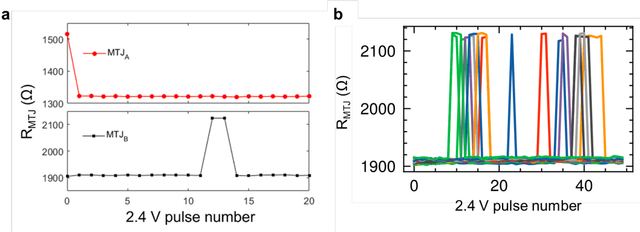

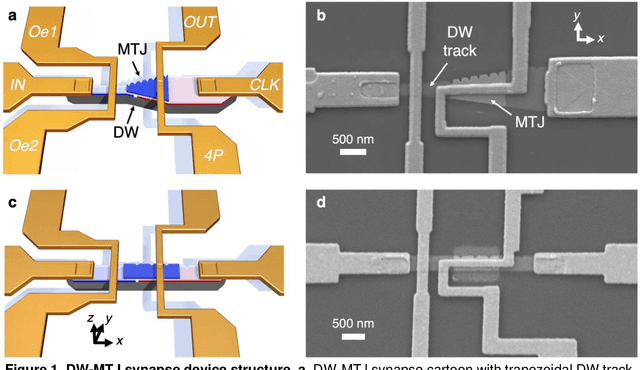

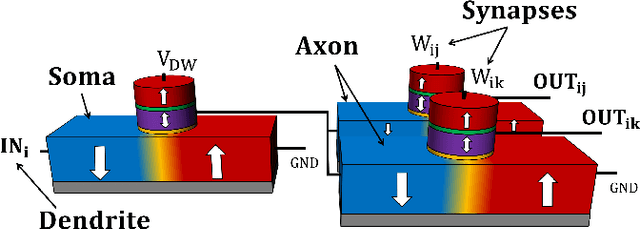

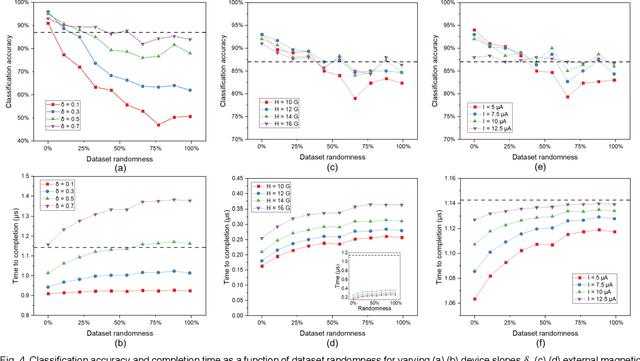

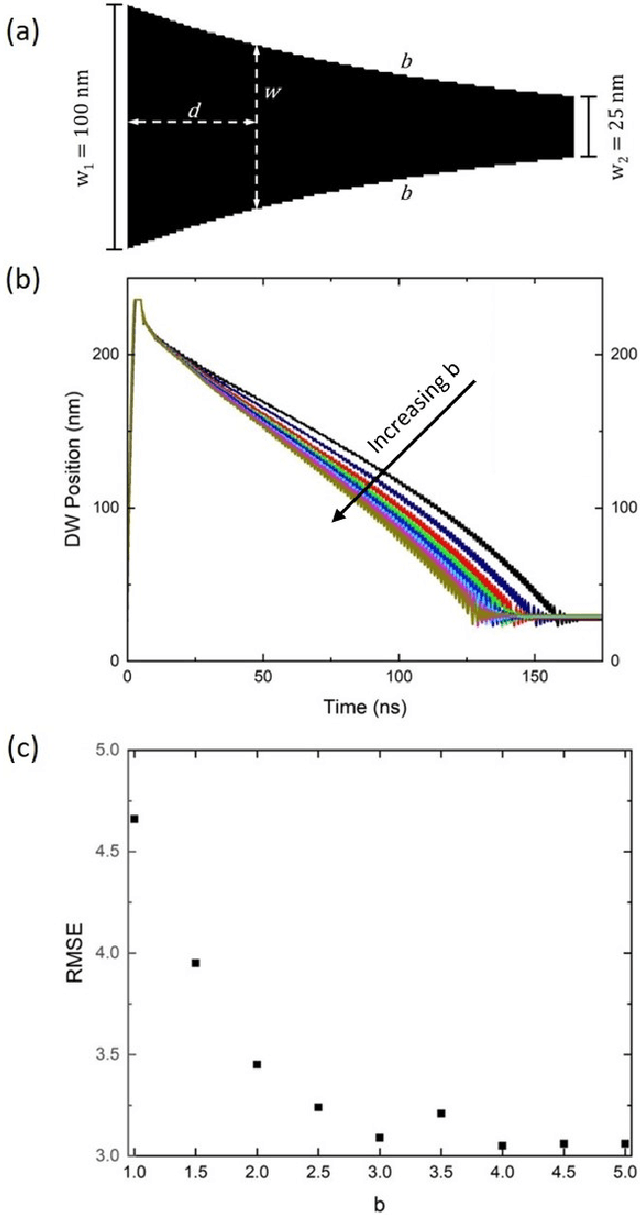

Shape-Dependent Multi-Weight Magnetic Artificial Synapses for Neuromorphic Computing

Nov 22, 2021

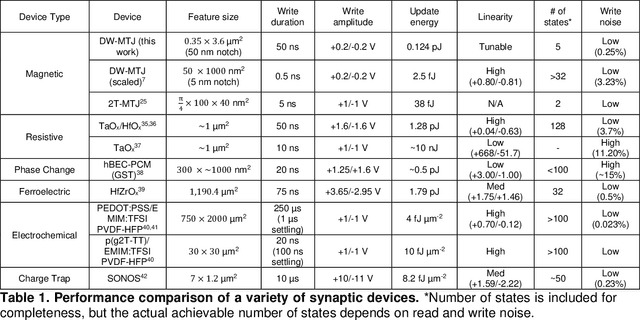

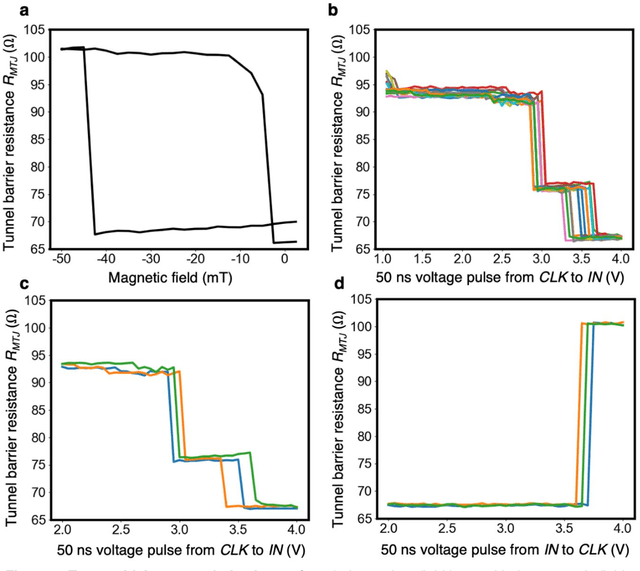

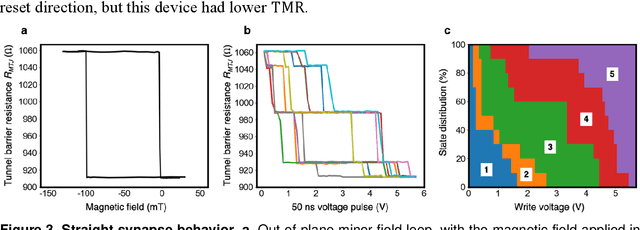

Abstract:In neuromorphic computing, artificial synapses provide a multi-weight conductance state that is set based on inputs from neurons, analogous to the brain. Additional properties of the synapse beyond multiple weights can be needed, and can depend on the application, requiring the need for generating different synapse behaviors from the same materials. Here, we measure artificial synapses based on magnetic materials that use a magnetic tunnel junction and a magnetic domain wall. By fabricating lithographic notches in a domain wall track underneath a single magnetic tunnel junction, we achieve 4-5 stable resistance states that can be repeatably controlled electrically using spin orbit torque. We analyze the effect of geometry on the synapse behavior, showing that a trapezoidal device has asymmetric weight updates with high controllability, while a straight device has higher stochasticity, but with stable resistance levels. The device data is input into neuromorphic computing simulators to show the usefulness of application-specific synaptic functions. Implementing an artificial neural network applied on streamed Fashion-MNIST data, we show that the trapezoidal magnetic synapse can be used as a metaplastic function for efficient online learning. Implementing a convolutional neural network for CIFAR-100 image recognition, we show that the straight magnetic synapse achieves near-ideal inference accuracy, due to the stability of its resistance levels. This work shows multi-weight magnetic synapses are a feasible technology for neuromorphic computing and provides design guidelines for emerging artificial synapse technologies.

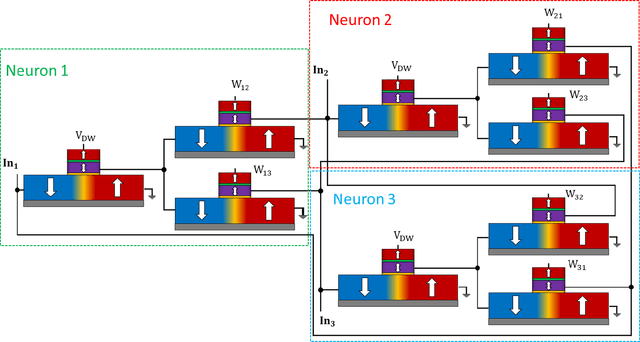

High-Speed CMOS-Free Purely Spintronic Asynchronous Recurrent Neural Network

Jul 05, 2021

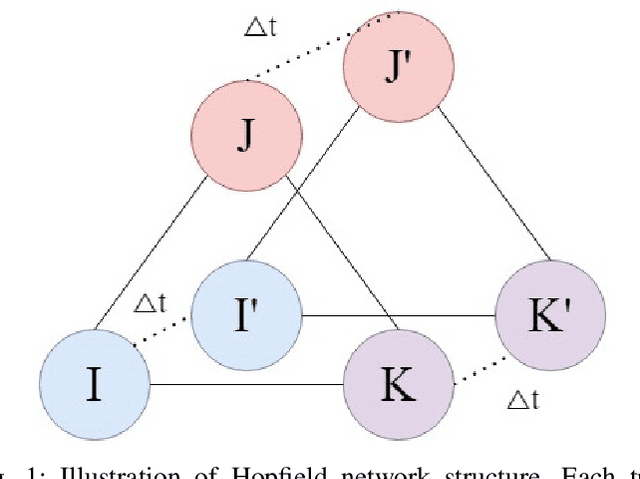

Abstract:Neuromorphic computing systems overcome the limitations of traditional von Neumann computing architectures. These computing systems can be further improved upon by using emerging technologies that are more efficient than CMOS for neural computation. Recent research has demonstrated memristors and spintronic devices in various neural network designs boost efficiency and speed. This paper presents a biologically inspired fully spintronic neuron used in a fully spintronic Hopfield RNN. The network is used to solve tasks, and the results are compared against those of current Hopfield neuromorphic architectures which use emerging technologies.

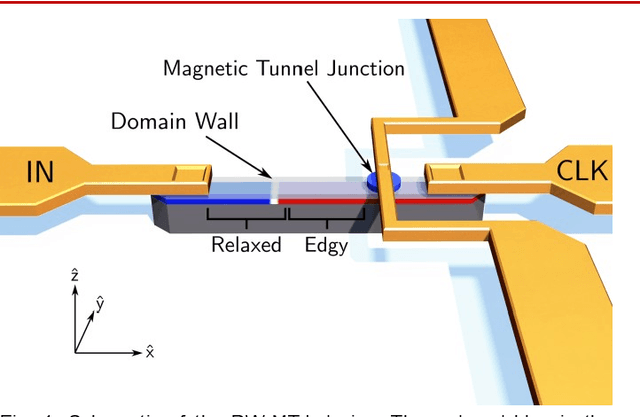

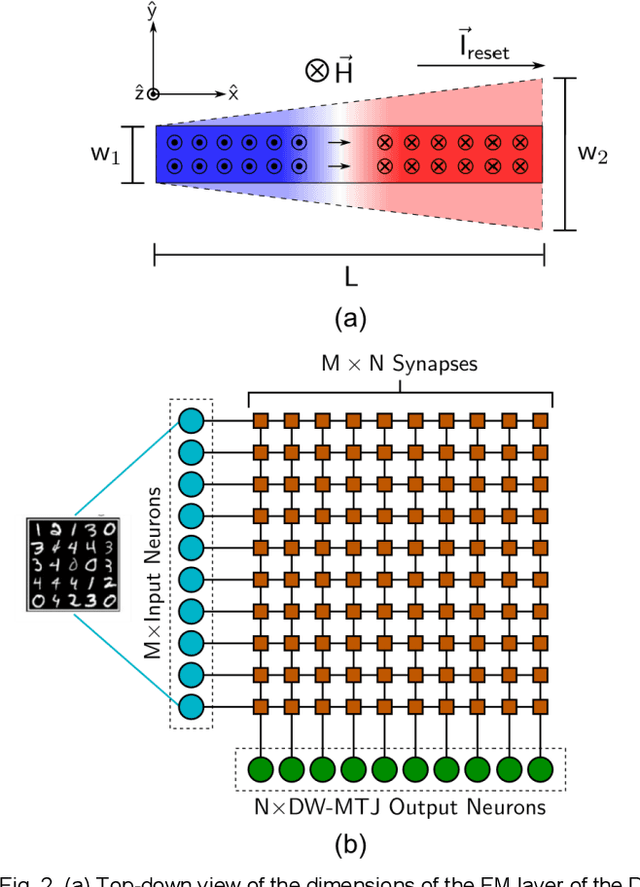

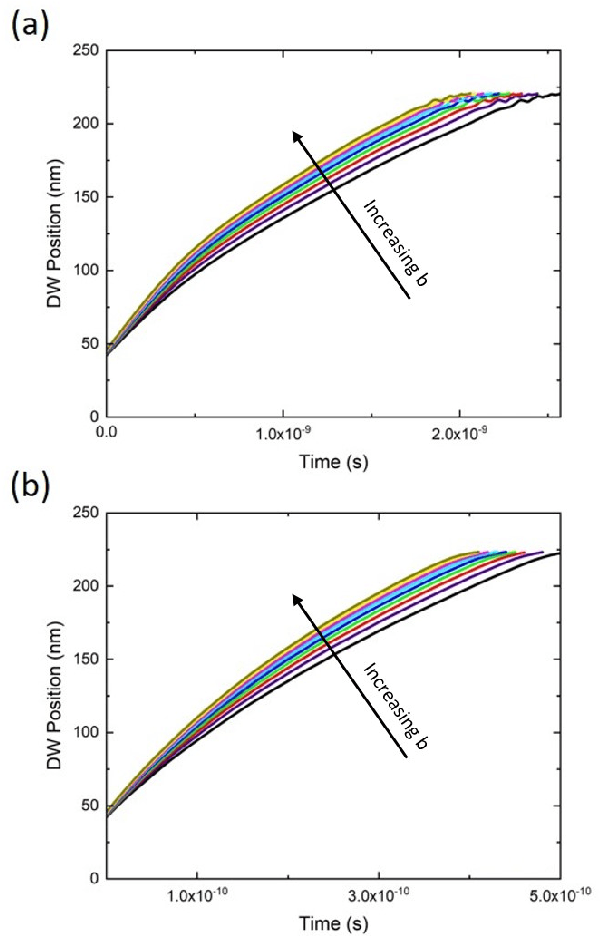

Controllable reset behavior in domain wall-magnetic tunnel junction artificial neurons for task-adaptable computation

Jan 08, 2021

Abstract:Neuromorphic computing with spintronic devices has been of interest due to the limitations of CMOS-driven von Neumann computing. Domain wall-magnetic tunnel junction (DW-MTJ) devices have been shown to be able to intrinsically capture biological neuron behavior. Edgy-relaxed behavior, where a frequently firing neuron experiences a lower action potential threshold, may provide additional artificial neuronal functionality when executing repeated tasks. In this study, we demonstrate that this behavior can be implemented in DW-MTJ artificial neurons via three alternative mechanisms: shape anisotropy, magnetic field, and current-driven soft reset. Using micromagnetics and analytical device modeling to classify the Optdigits handwritten digit dataset, we show that edgy-relaxed behavior improves both classification accuracy and classification rate for ordered datasets while sacrificing little to no accuracy for a randomized dataset. This work establishes methods by which artificial spintronic neurons can be flexibly adapted to datasets.

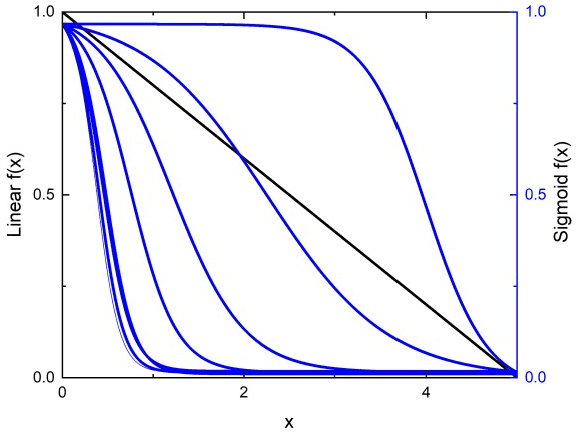

Domain Wall Leaky Integrate-and-Fire Neurons with Shape-Based Configurable Activation Functions

Nov 11, 2020

Abstract:Complementary metal oxide semiconductor (CMOS) devices display volatile characteristics, and are not well suited for analog applications such as neuromorphic computing. Spintronic devices, on the other hand, exhibit both non-volatile and analog features, which are well-suited to neuromorphic computing. Consequently, these novel devices are at the forefront of beyond-CMOS artificial intelligence applications. However, a large quantity of these artificial neuromorphic devices still require the use of CMOS, which decreases the efficiency of the system. To resolve this, we have previously proposed a number of artificial neurons and synapses that do not require CMOS for operation. Although these devices are a significant improvement over previous renditions, their ability to enable neural network learning and recognition is limited by their intrinsic activation functions. This work proposes modifications to these spintronic neurons that enable configuration of the activation functions through control of the shape of a magnetic domain wall track. Linear and sigmoidal activation functions are demonstrated in this work, which can be extended through a similar approach to enable a wide variety of activation functions.

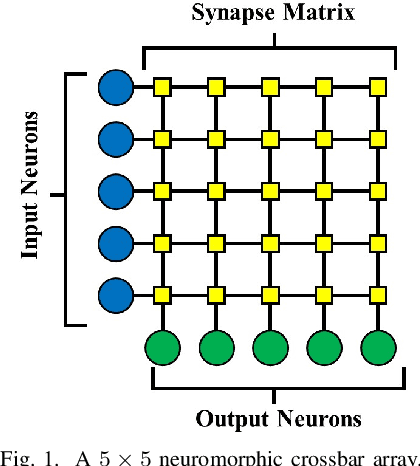

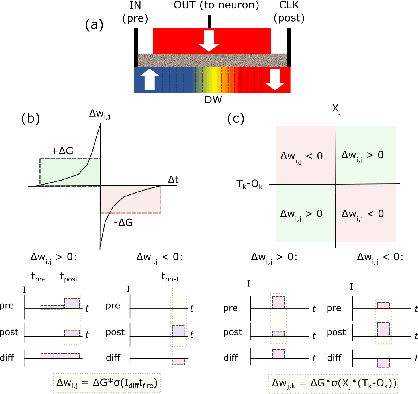

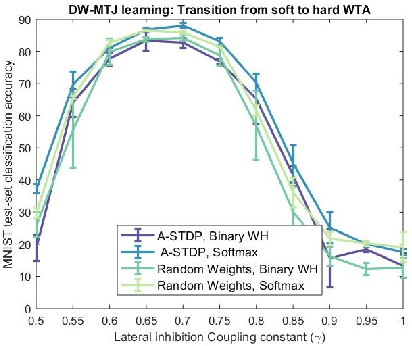

Unsupervised Competitive Hardware Learning Rule for Spintronic Clustering Architecture

Mar 24, 2020

Abstract:We propose a hardware learning rule for unsupervised clustering within a novel spintronic computing architecture. The proposed approach leverages the three-terminal structure of domain-wall magnetic tunnel junction devices to establish a feedback loop that serves to train such devices when they are used as synapses in a neuromorphic computing architecture.

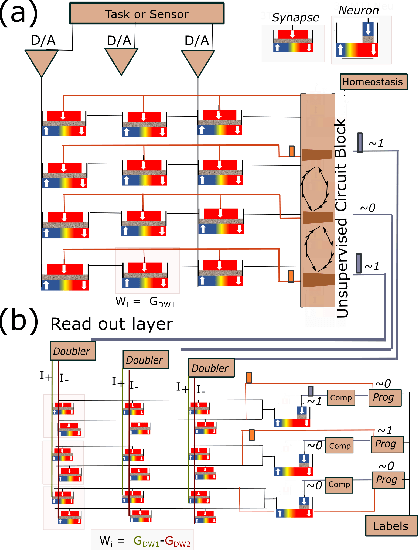

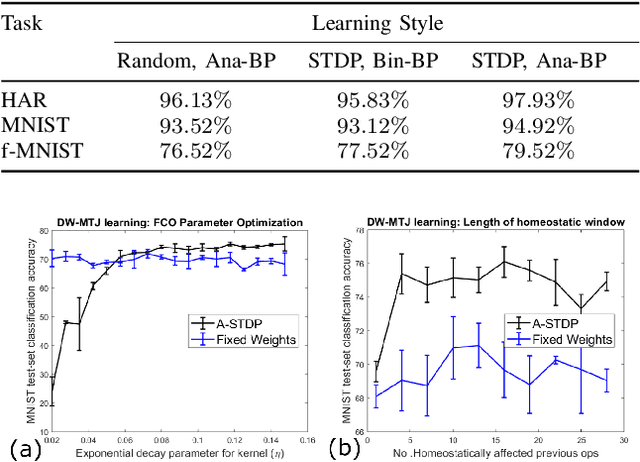

Plasticity-Enhanced Domain-Wall MTJ Neural Networks for Energy-Efficient Online Learning

Mar 04, 2020

Abstract:Machine learning implements backpropagation via abundant training samples. We demonstrate a multi-stage learning system realized by a promising non-volatile memory device, the domain-wall magnetic tunnel junction (DW-MTJ). The system consists of unsupervised (clustering) as well as supervised sub-systems, and generalizes quickly (with few samples). We demonstrate interactions between physical properties of this device and optimal implementation of neuroscience-inspired plasticity learning rules, and highlight performance on a suite of tasks. Our energy analysis confirms the value of the approach, as the learning budget stays below 20 $\mu J$ even for large tasks used typically in machine learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge