Israa Jaradat

On Detecting Cherry-picking in News Coverage Using Large Language Models

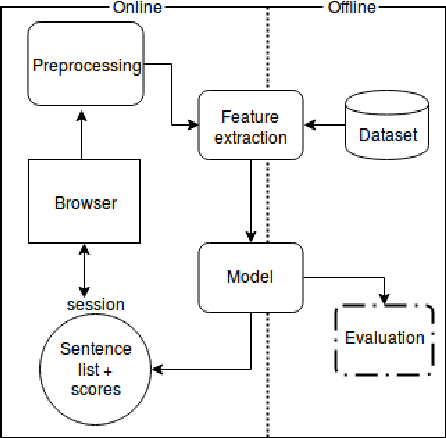

Jan 11, 2024Abstract:Cherry-picking refers to the deliberate selection of evidence or facts that favor a particular viewpoint while ignoring or distorting evidence that supports an opposing perspective. Manually identifying instances of cherry-picked statements in news stories can be challenging, particularly when the opposing viewpoint's story is absent. This study introduces Cherry, an innovative approach for automatically detecting cherry-picked statements in news articles by finding missing important statements in the target news story. Cherry utilizes the analysis of news coverage from multiple sources to identify instances of cherry-picking. Our approach relies on language models that consider contextual information from other news sources to classify statements based on their importance to the event covered in the target news story. Furthermore, this research introduces a novel dataset specifically designed for cherry-picking detection, which was used to train and evaluate the performance of the models. Our best performing model achieves an F-1 score of about %89 in detecting important statements when tested on unseen set of news stories. Moreover, results show the importance incorporating external knowledge from alternative unbiased narratives when assessing a statement's importance.

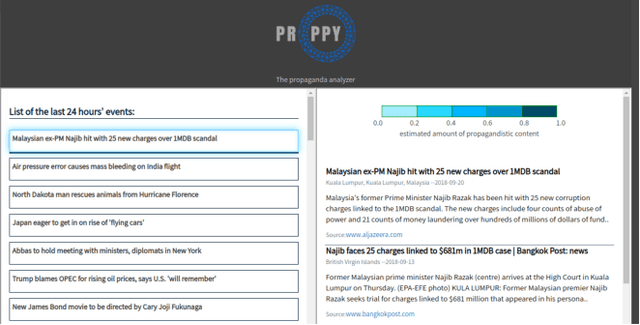

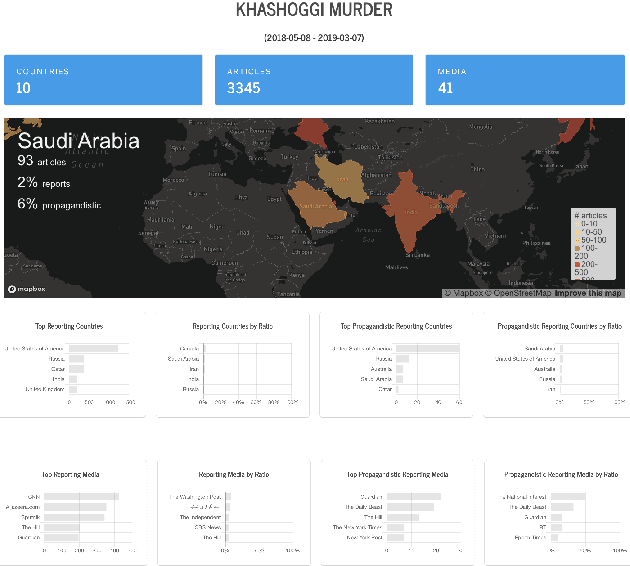

Proppy: A System to Unmask Propaganda in Online News

Dec 14, 2019

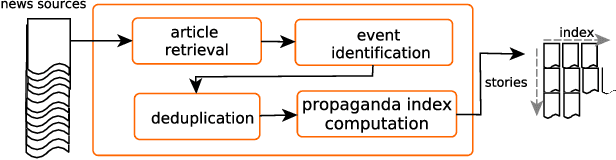

Abstract:We present proppy, the first publicly available real-world, real-time propaganda detection system for online news, which aims at raising awareness, thus potentially limiting the impact of propaganda and helping fight disinformation. The system constantly monitors a number of news sources, deduplicates and clusters the news into events, and organizes the articles about an event on the basis of the likelihood that they contain propagandistic content. The system is trained on known propaganda sources using a variety of stylistic features. The evaluation results on a standard dataset show state-of-the-art results for propaganda detection.

* propaganda, disinformation, fake news

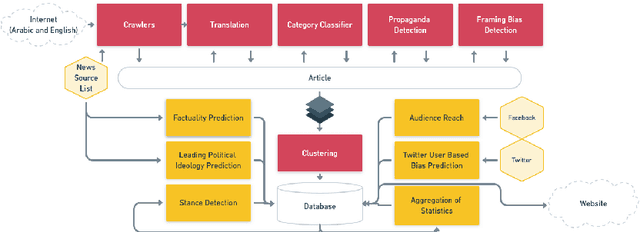

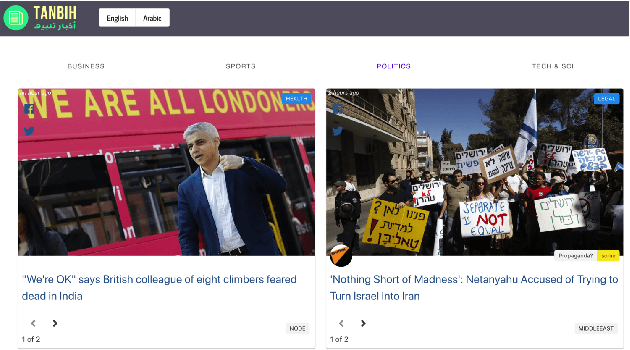

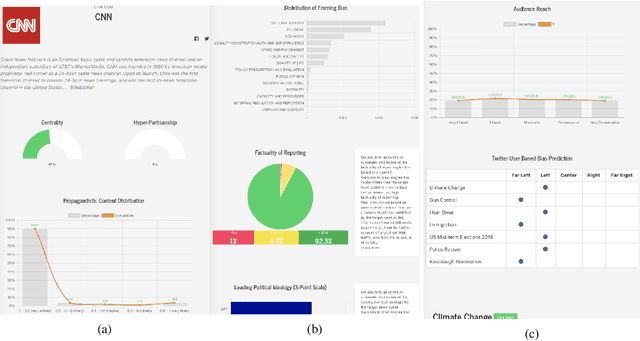

Tanbih: Get To Know What You Are Reading

Oct 04, 2019

Abstract:We introduce Tanbih, a news aggregator with intelligent analysis tools to help readers understanding what's behind a news story. Our system displays news grouped into events and generates media profiles that show the general factuality of reporting, the degree of propagandistic content, hyper-partisanship, leading political ideology, general frame of reporting, and stance with respect to various claims and topics of a news outlet. In addition, we automatically analyse each article to detect whether it is propagandistic and to determine its stance with respect to a number of controversial topics.

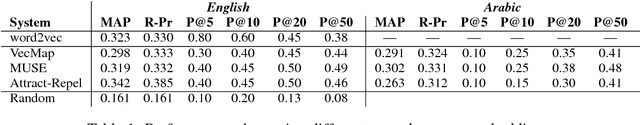

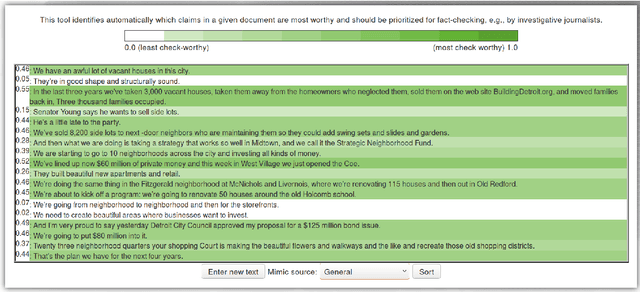

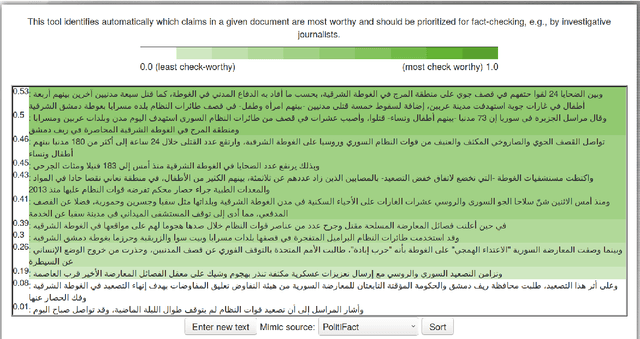

ClaimRank: Detecting Check-Worthy Claims in Arabic and English

Apr 20, 2018

Abstract:We present ClaimRank, an online system for detecting check-worthy claims. While originally trained on political debates, the system can work for any kind of text, e.g., interviews or regular news articles. Its aim is to facilitate manual fact-checking efforts by prioritizing the claims that fact-checkers should consider first. ClaimRank supports both Arabic and English, it is trained on actual annotations from nine reputable fact-checking organizations (PolitiFact, FactCheck, ABC, CNN, NPR, NYT, Chicago Tribune, The Guardian, and Washington Post), and thus it can mimic the claim selection strategies for each and any of them, as well as for the union of them all.

* Check-worthiness; Fact-Checking; Veracity; Community-Question Answering; Neural Networks; Arabic; English

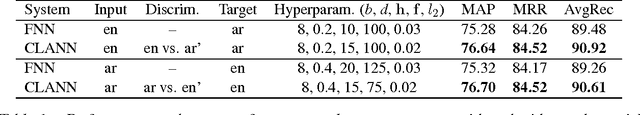

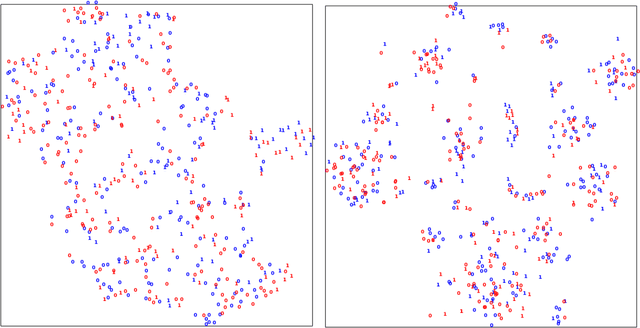

Cross-language Learning with Adversarial Neural Networks: Application to Community Question Answering

Jun 21, 2017

Abstract:We address the problem of cross-language adaptation for question-question similarity reranking in community question answering, with the objective to port a system trained on one input language to another input language given labeled training data for the first language and only unlabeled data for the second language. In particular, we propose to use adversarial training of neural networks to learn high-level features that are discriminative for the main learning task, and at the same time are invariant across the input languages. The evaluation results show sizable improvements for our cross-language adversarial neural network (CLANN) model over a strong non-adversarial system.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge