Diana Acosta-Navas

AI and the Future of Digital Public Squares

Dec 13, 2024

Abstract:Two substantial technological advances have reshaped the public square in recent decades: first with the advent of the internet and second with the recent introduction of large language models (LLMs). LLMs offer opportunities for a paradigm shift towards more decentralized, participatory online spaces that can be used to facilitate deliberative dialogues at scale, but also create risks of exacerbating societal schisms. Here, we explore four applications of LLMs to improve digital public squares: collective dialogue systems, bridging systems, community moderation, and proof-of-humanity systems. Building on the input from over 70 civil society experts and technologists, we argue that LLMs both afford promising opportunities to shift the paradigm for conversations at scale and pose distinct risks for digital public squares. We lay out an agenda for future research and investments in AI that will strengthen digital public squares and safeguard against potential misuses of AI.

Holistic Evaluation of Language Models

Nov 16, 2022

Abstract:Language models (LMs) are becoming the foundation for almost all major language technologies, but their capabilities, limitations, and risks are not well understood. We present Holistic Evaluation of Language Models (HELM) to improve the transparency of language models. First, we taxonomize the vast space of potential scenarios (i.e. use cases) and metrics (i.e. desiderata) that are of interest for LMs. Then we select a broad subset based on coverage and feasibility, noting what's missing or underrepresented (e.g. question answering for neglected English dialects, metrics for trustworthiness). Second, we adopt a multi-metric approach: We measure 7 metrics (accuracy, calibration, robustness, fairness, bias, toxicity, and efficiency) for each of 16 core scenarios when possible (87.5% of the time). This ensures metrics beyond accuracy don't fall to the wayside, and that trade-offs are clearly exposed. We also perform 7 targeted evaluations, based on 26 targeted scenarios, to analyze specific aspects (e.g. reasoning, disinformation). Third, we conduct a large-scale evaluation of 30 prominent language models (spanning open, limited-access, and closed models) on all 42 scenarios, 21 of which were not previously used in mainstream LM evaluation. Prior to HELM, models on average were evaluated on just 17.9% of the core HELM scenarios, with some prominent models not sharing a single scenario in common. We improve this to 96.0%: now all 30 models have been densely benchmarked on the same core scenarios and metrics under standardized conditions. Our evaluation surfaces 25 top-level findings. For full transparency, we release all raw model prompts and completions publicly for further analysis, as well as a general modular toolkit. We intend for HELM to be a living benchmark for the community, continuously updated with new scenarios, metrics, and models.

Envisioning Communities: A Participatory Approach Towards AI for Social Good

May 04, 2021

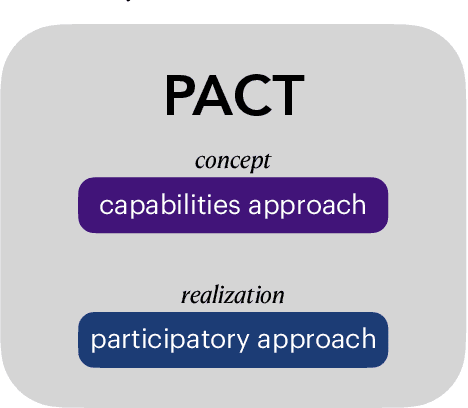

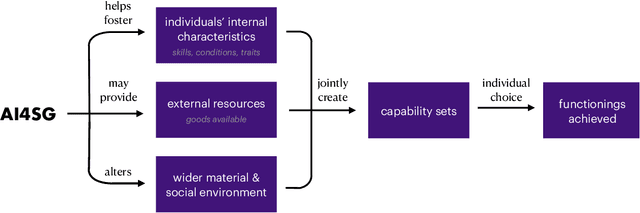

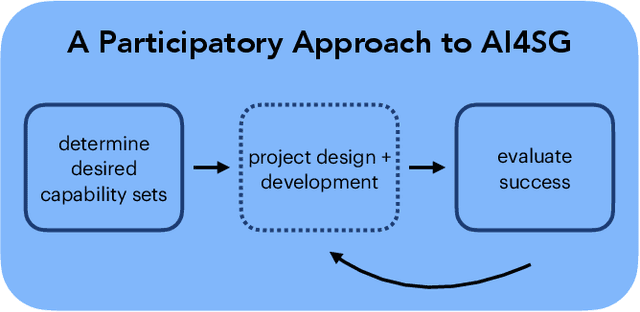

Abstract:Research in artificial intelligence (AI) for social good presupposes some definition of social good, but potential definitions have been seldom suggested and never agreed upon. The normative question of what AI for social good research should be "for" is not thoughtfully elaborated, or is frequently addressed with a utilitarian outlook that prioritizes the needs of the majority over those who have been historically marginalized, brushing aside realities of injustice and inequity. We argue that AI for social good ought to be assessed by the communities that the AI system will impact, using as a guide the capabilities approach, a framework to measure the ability of different policies to improve human welfare equity. Furthermore, we lay out how AI research has the potential to catalyze social progress by expanding and equalizing capabilities. We show how the capabilities approach aligns with a participatory approach for the design and implementation of AI for social good research in a framework we introduce called PACT, in which community members affected should be brought in as partners and their input prioritized throughout the project. We conclude by providing an incomplete set of guiding questions for carrying out such participatory AI research in a way that elicits and respects a community's own definition of social good.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge