Ciro Potena

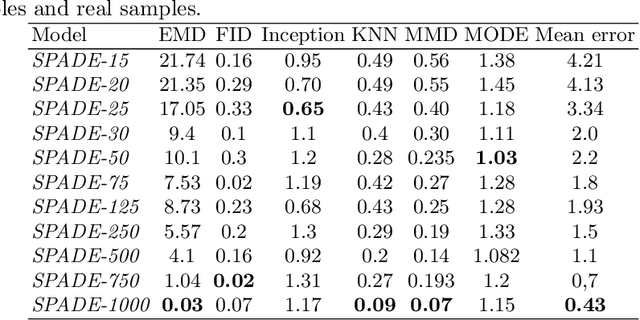

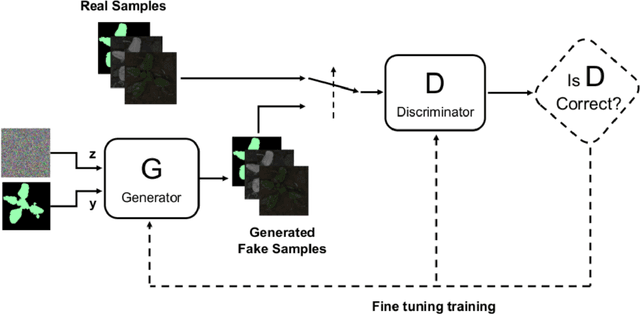

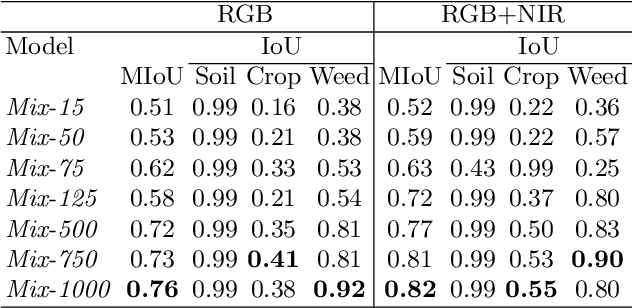

Multi-Spectral Image Synthesis for Crop/Weed Segmentation in Precision Farming

Sep 12, 2020

Abstract: An effective perception system is a fundamental component of farming robots, as it enables them to properly perceive the surrounding environment and to carry out targeted operations. The most recent approaches use state-of-the-art machine learning techniques to learn an effective model for the target task. However, those methods need a large amount of labelled data for training. A recent approach to this issue is data augmentation through Generative Adversarial Networks (GANs), where entire synthetic scenes are added to the training data, thus enlarging and diversifying their informative content. In this work, we propose an alternative to the common data augmentation techniques and apply it to the fundamental problem of crop/weed segmentation in precision farming. Starting from real images, we create semi-artificial samples by replacing the most relevant object classes (i.e., crop and weeds) with their synthesized counterparts. To do that, we employ a conditional GAN (cGAN), where the generative model is trained by conditioning on the shape of the generated object. Moreover, in addition to RGB data, we also take near-infrared (NIR) information into account, generating four-channel multi-spectral synthetic images. Quantitative experiments, carried out on three publicly available datasets, show that (i) our model is capable of generating realistic multi-spectral images of plants and (ii) the use of such synthetic images in the training process improves the segmentation performance of state-of-the-art semantic segmentation Convolutional Networks.
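The core data-augmentation step described above (replacing crop or weed pixels of a real image with synthesized ones while keeping the labels) can be sketched as a masked copy over a four-channel image. This is only a minimal illustration, assuming the cGAN has already produced a synthetic image aligned with the real one; the class ids (0 soil, 1 crop, 2 weed) and the nested-list image layout are hypothetical, not the authors' data format.

```python
from typing import List

Pixel = List[float]        # one pixel: [R, G, B, NIR]
Image = List[List[Pixel]]  # rows x columns x 4 channels
Mask = List[List[int]]     # per-pixel class id (hypothetical ids: 0 soil, 1 crop, 2 weed)


def replace_class(real: Image, synthetic: Image, mask: Mask, target_class: int) -> Image:
    """Return a new image where pixels labelled `target_class` come from `synthetic`.

    All other pixels are copied from `real`. The label mask can be reused as-is,
    because the synthetic object is assumed to be generated with the same shape.
    """
    if not (len(real) == len(synthetic) == len(mask)):
        raise ValueError("real image, synthetic image and mask must have the same height")
    out: Image = []
    for real_row, synth_row, mask_row in zip(real, synthetic, mask):
        if not (len(real_row) == len(synth_row) == len(mask_row)):
            raise ValueError("rows must have the same width")
        out.append([
            list(s) if m == target_class else list(p)  # copy channels, never alias inputs
            for p, s, m in zip(real_row, synth_row, mask_row)
        ])
    return out


# Usage: a 1x2 image whose second pixel is crop (class 1).
real = [[[0.1, 0.1, 0.1, 0.2], [0.2, 0.5, 0.2, 0.8]]]
synthetic = [[[0.0, 0.0, 0.0, 0.0], [0.3, 0.6, 0.1, 0.9]]]
print(replace_class(real, synthetic, [[0, 1]], target_class=1))
# [[[0.1, 0.1, 0.1, 0.2], [0.3, 0.6, 0.1, 0.9]]]
```

Because the generator is conditioned on the object shape, the original segmentation mask remains a valid label for the semi-artificial sample, which is what makes this kind of augmentation cheap.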

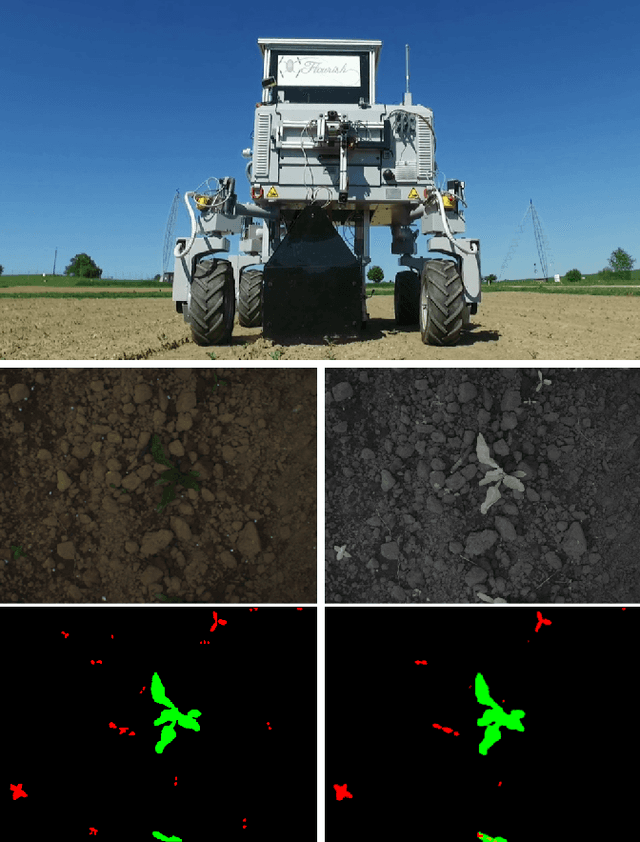

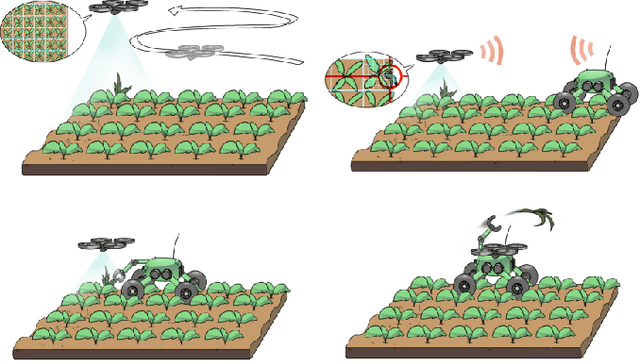

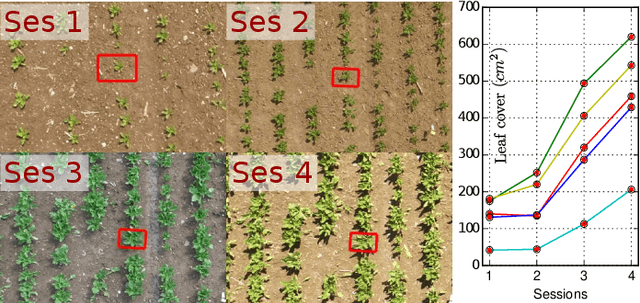

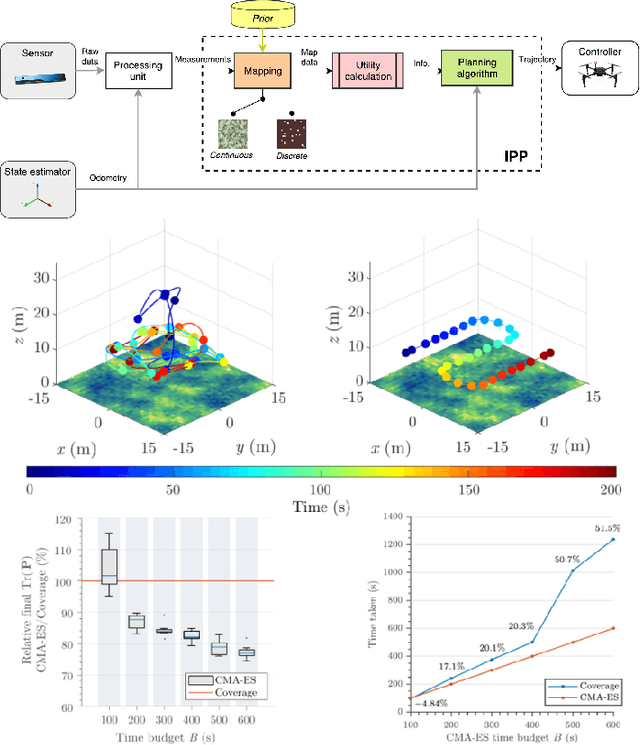

Building an Aerial-Ground Robotics System for Precision Farming

Nov 08, 2019

Abstract: The application of autonomous robots in agriculture is gaining popularity thanks to the high impact it may have on food security, sustainability, resource use efficiency, reduction of chemical treatments, minimization of human effort and maximization of yield. The Flourish research project addressed this challenge by developing an adaptable robotic solution for precision farming that combines the aerial survey capabilities of small autonomous unmanned aerial vehicles (UAVs) with flexible targeted intervention performed by multi-purpose agricultural unmanned ground vehicles (UGVs). This paper presents an exhaustive overview of the scientific and technological advances and outcomes obtained in the Flourish project. We introduce multi-spectral perception algorithms and aerial and ground-based systems developed to monitor crop density, weed pressure and crop nitrogen nutrition status, and to accurately classify and locate weeds. We then introduce the navigation and mapping systems that deal with the specificities of the employed robots and of the agricultural environment, highlighting the collaborative modules that enable the UAVs and UGVs to collect and share information in a unified environment model. We finally present the ground intervention hardware, software solutions, and interfaces we implemented and tested in different field conditions and with different crops. We also describe a real use case in which a UAV collaborates with a UGV to monitor the field and to perform selective spraying treatments fully autonomously.

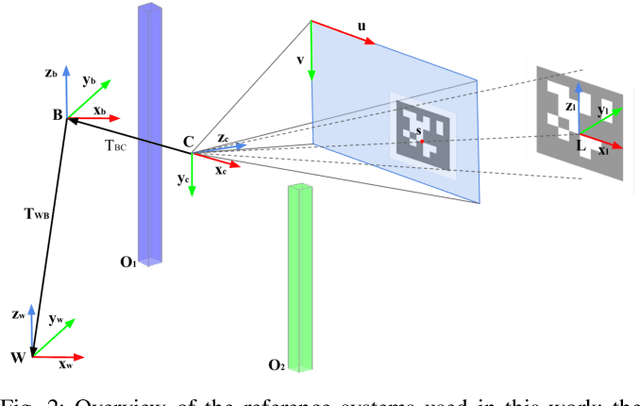

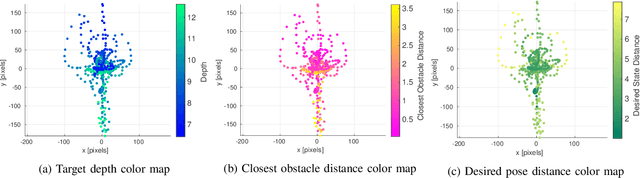

Joint Vision-Based Navigation, Control and Obstacle Avoidance for UAVs in Dynamic Environments

May 03, 2019

Abstract: This work addresses the problem of coupling vision-based navigation systems for Unmanned Aerial Vehicles (UAVs) with robust obstacle avoidance capabilities. The former is formulated as a maximization of the visibility of the point of interest, while the latter is modeled by ellipsoidal repulsive areas. The whole problem is transcribed into an Optimal Control Problem (OCP) and solved in a few milliseconds by leveraging state-of-the-art numerical optimization. The resulting trajectories are well suited to reaching the specified goal location while avoiding obstacles by a safety margin and minimizing the probability of losing track of the target of interest. Combining this technique with proper ellipsoid shaping (e.g., augmenting the shape with the obstacle velocity or the obstacle detection uncertainties) results in robust obstacle avoidance behaviour. We validate our approach with extensive simulated experiments demonstrating (i) the capability to satisfy all the constraints, and (ii) the avoidance reactivity even in challenging situations. We release the open-source implementation with this paper.
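To make "ellipsoidal repulsive areas" and "ellipsoid shaping" concrete, the sketch below evaluates an axis-aligned ellipsoid around an obstacle and a penalty that is zero outside it. It is a minimal illustration, not the paper's formulation: the velocity-based shaping rule (`horizon_s`, `margin`) and the quadratic penalty are hypothetical choices, and the OCP solver itself is not shown.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def shaped_semi_axes(base: Vec3, obstacle_velocity: Vec3, horizon_s: float, margin: float) -> Vec3:
    """Hypothetical shaping rule: stretch each axis by the distance the obstacle
    can travel along it within `horizon_s` seconds, plus a fixed safety margin."""
    ax, ay, az = (a + abs(v) * horizon_s + margin for a, v in zip(base, obstacle_velocity))
    return (ax, ay, az)


def ellipsoid_value(p: Vec3, center: Vec3, semi_axes: Vec3) -> float:
    """h(p) = sum_i ((p_i - c_i) / a_i)^2: h < 1 inside, h == 1 on the surface, h > 1 outside."""
    return sum(((pi - ci) / ai) ** 2 for pi, ci, ai in zip(p, center, semi_axes))


def repulsive_cost(p: Vec3, center: Vec3, semi_axes: Vec3, weight: float = 10.0) -> float:
    """Zero outside the ellipsoid, growing quadratically as p moves toward its center."""
    h = ellipsoid_value(p, center, semi_axes)
    return weight * max(0.0, 1.0 - h) ** 2
```

In a transcribed OCP, `ellipsoid_value(p_k, ...) >= 1` could be imposed as a hard constraint at every trajectory node, or `repulsive_cost` added to the objective; stretching the axes along the obstacle velocity makes the vehicle keep more clearance in the direction the obstacle is moving.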

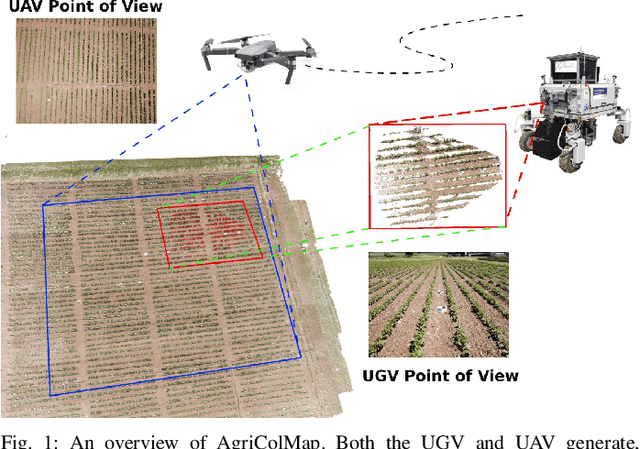

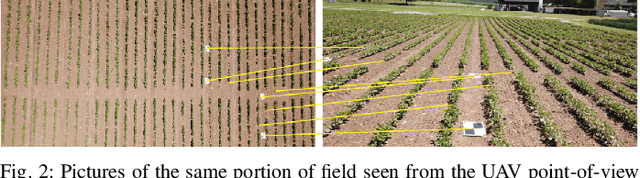

AgriColMap: Aerial-Ground Collaborative 3D Mapping for Precision Farming

Mar 14, 2019

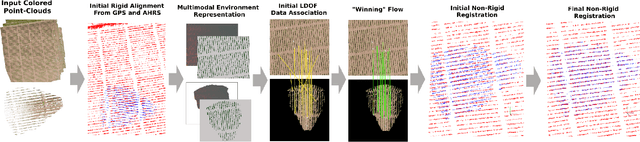

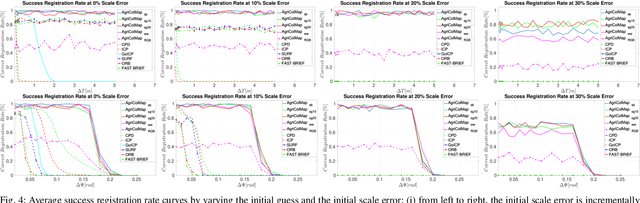

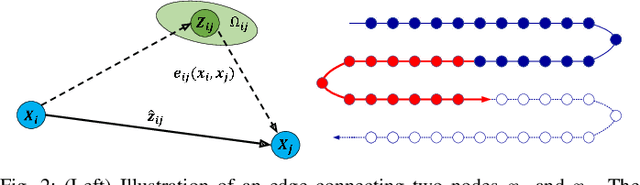

Abstract: The combination of the aerial survey capabilities of Unmanned Aerial Vehicles with the targeted intervention abilities of agricultural Unmanned Ground Vehicles can significantly improve the effectiveness of robotic systems applied to precision agriculture. In this context, building and updating a common map of the field is an essential but challenging task. The maps built using robots of different types show differences in size, resolution and scale, the associated geolocation data may be inaccurate and biased, and the repetitiveness of both visual appearance and geometric structures found within agricultural contexts renders classical map merging techniques ineffective. In this paper we propose AgriColMap, a novel map registration pipeline that leverages a grid-based multimodal environment representation which includes a vegetation index map and a Digital Surface Model. We cast the data association problem between maps built from UAVs and UGVs as a multimodal, large-displacement dense optical flow estimation. The dominant, coherent flows, selected using a voting scheme, are used as point-to-point correspondences to infer a preliminary non-rigid alignment between the maps. A final refinement is then performed by exploiting only meaningful parts of the registered maps. We evaluate our system using real-world data for three fields with different crop species. The results show that our method outperforms several state-of-the-art map registration and matching techniques by a large margin, and has a higher tolerance to large initial misalignments. We release an implementation of the proposed approach along with the acquired datasets with this paper.
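One simple way to picture "dominant, coherent flows, selected using a voting scheme" is a 2D histogram vote over flow vectors: each grid cell's displacement votes for a quantized bin, and only flows in the winning bin survive as correspondences. This is a minimal sketch of that general idea under stated assumptions; the paper's actual voting scheme may differ (for example, it may vote locally rather than over the whole map), and `bin_size` is a hypothetical parameter.

```python
import math
from collections import Counter
from typing import List, Tuple

Flow = Tuple[float, float]  # (dx, dy) displacement of one map cell, in grid cells


def dominant_flow_inliers(flows: List[Flow], bin_size: float = 1.0) -> List[int]:
    """Return indices of the flows that fall in the most-voted displacement bin.

    Each flow votes for the 2D bin (floor(dx / bin_size), floor(dy / bin_size)).
    Flows in the winning bin are kept as mutually coherent correspondences;
    all others are discarded as outliers.
    """
    if bin_size <= 0:
        raise ValueError("bin_size must be positive")
    if not flows:
        return []
    bins = [(math.floor(dx / bin_size), math.floor(dy / bin_size)) for dx, dy in flows]
    winner, _votes = Counter(bins).most_common(1)[0]
    return [i for i, b in enumerate(bins) if b == winner]


flows = [(5.2, 1.1), (5.4, 1.3), (5.1, 1.0), (-3.0, 7.0), (0.2, 0.1)]
print(dominant_flow_inliers(flows))  # [0, 1, 2]
```

Fixed bins can split a coherent cluster that straddles a bin boundary; overlapping bins or a tolerance around the winning bin center are common remedies. The surviving indices would then feed the non-rigid alignment step.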

* Published in IEEE Robotics and Automation Letters, 2019

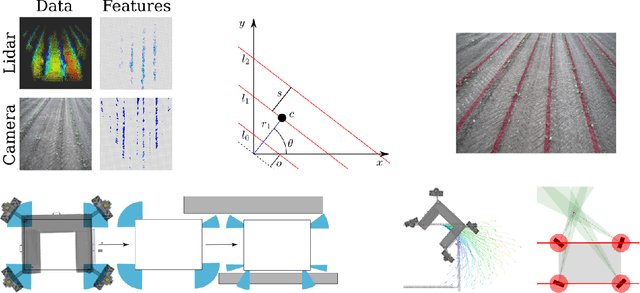

An Effective Multi-Cue Positioning System for Agricultural Robotics

Sep 11, 2018

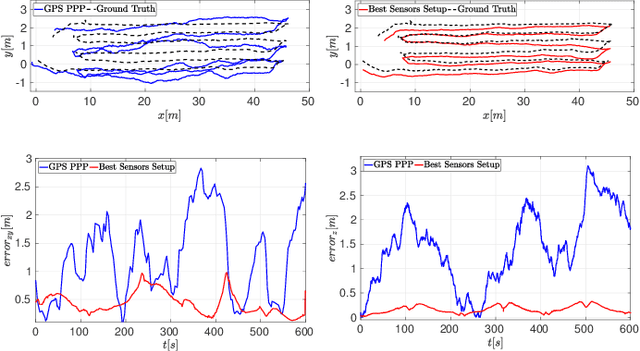

Abstract: Self-localization is a crucial capability for Unmanned Ground Vehicles (UGVs) in farming applications. Approaches based solely on visual cues or on low-cost GPS are prone to failure in such scenarios. In this paper, we present a robust and accurate 3D global pose estimation framework, designed to take full advantage of heterogeneous sensory data. By modeling the pose estimation problem as a pose graph optimization, our approach simultaneously mitigates the cumulative drift introduced by motion estimation systems (wheel odometry, visual odometry, ...) and the noise introduced by raw GPS readings. Along with a suitable motion model, our system also integrates two additional types of constraints: (i) a Digital Elevation Model and (ii) a Markov Random Field assumption. We demonstrate how using these additional cues substantially reduces the error along the altitude axis and, moreover, how this benefit spreads to the other components of the state. We report exhaustive experiments combining several sensor setups, showing accuracy improvements ranging from 37% to 76% with respect to the exclusive use of a GPS sensor. We show that our approach provides accurate results even if the GPS unexpectedly changes positioning mode. The code of our system, along with the acquired datasets, is released with this paper.
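The key idea of fusing drifting relative measurements (odometry) with noisy absolute ones (GPS) in a pose graph can be shown on a toy 1D problem, where the optimization reduces to linear weighted least squares solved through its normal equations. This is a minimal sketch, not the authors' 3D framework: it omits the motion model, the Digital Elevation Model and the Markov Random Field constraints, and the weights `w_odom` and `w_gps` are hypothetical.

```python
from typing import List, Optional


def solve_linear(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("singular system (is every pose anchored?)")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def optimize_1d_pose_graph(odometry: List[float], gps: List[Optional[float]],
                           w_odom: float = 1.0, w_gps: float = 1.0) -> List[float]:
    """Weighted least-squares fusion of relative (odometry) and absolute (GPS) 1D measurements.

    odometry[i] measures x[i+1] - x[i]; gps[i] measures x[i] (None if missing).
    Minimizes sum w_odom*(x[i+1]-x[i]-u_i)^2 + sum w_gps*(x[i]-z_i)^2.
    """
    n = len(gps)
    if len(odometry) != n - 1:
        raise ValueError("need exactly one odometry edge between consecutive poses")
    H = [[0.0] * n for _ in range(n)]  # information matrix J^T W J
    b = [0.0] * n                      # right-hand side J^T W (measurements)
    for i, u in enumerate(odometry):   # relative edge between pose i and pose i+1
        H[i][i] += w_odom
        H[i + 1][i + 1] += w_odom
        H[i][i + 1] -= w_odom
        H[i + 1][i] -= w_odom
        b[i] -= w_odom * u
        b[i + 1] += w_odom * u
    for i, z in enumerate(gps):        # unary (absolute) edge on pose i
        if z is not None:
            H[i][i] += w_gps
            b[i] += w_gps * z
    return solve_linear(H, b)
```

With odometry `[1.0]` and GPS `[0.0, 0.0]`, the conflicting measurements are balanced and the result is approximately `[-1/3, 1/3]`. Without any absolute measurement the graph has no anchor and the system is singular, which mirrors why pure odometry drifts.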

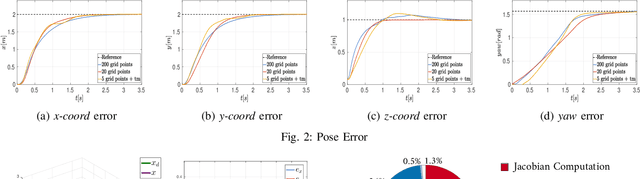

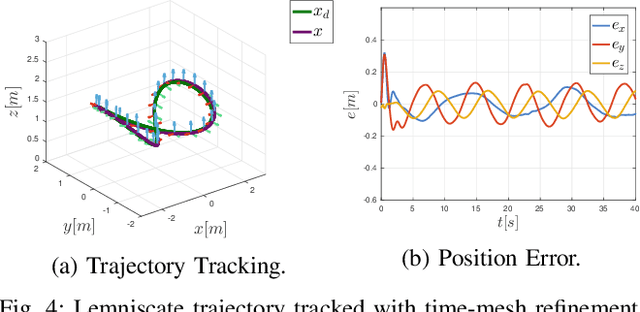

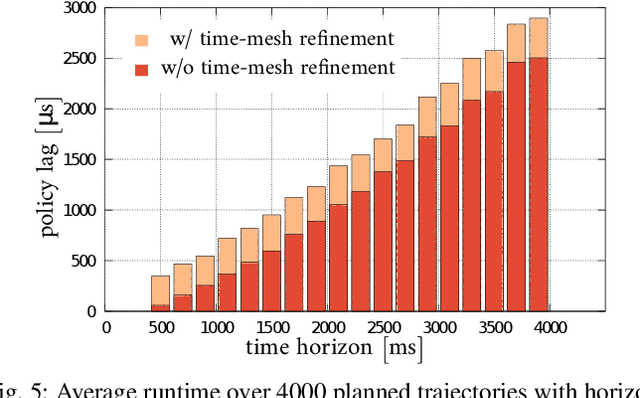

Non-Linear Model Predictive Control with Adaptive Time-Mesh Refinement

Mar 28, 2018

Abstract: In this paper, we present a novel solution for real-time Non-Linear Model Predictive Control (NMPC) exploiting a time-mesh refinement strategy. The proposed controller formulates the Optimal Control Problem (OCP) in terms of flat outputs over an adaptive lattice. In common approximate OCP solutions, the number of discretization points composing the lattice represents a critical upper bound for real-time applications. The proposed NMPC-based technique refines the initially uniform time horizon by adding time steps with a sampling criterion that aims to reduce the discretization error. This enables higher accuracy in the initial part of the receding horizon, which is more relevant to NMPC, while keeping the number of discretization points bounded. By combining this feature with an efficient Least Squares formulation, our solver is also extremely time-efficient, generating trajectories of multiple seconds within only a few milliseconds. The performance of the proposed approach has been validated in a high-fidelity simulation environment using a UAV platform. We also released our implementation as open-source C++ code.
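The refinement idea (start from a uniform grid, add time steps where the discretization error is largest, stop at a fixed point budget) can be sketched as a greedy bisection. This is a generic illustration, not the paper's sampling criterion: the error estimate (midpoint interpolation error of a reference trajectory `f`) and the `exp(-decay * t)` weight used to favour the early horizon are hypothetical choices.

```python
import math
from typing import Callable, List


def refine_mesh(f: Callable[[float], float], horizon: float, n_initial: int,
                max_points: int, decay: float = 1.0) -> List[float]:
    """Greedy time-mesh refinement with a hypothetical error criterion.

    Starts from a uniform grid with `n_initial` intervals and repeatedly bisects
    the interval whose weighted midpoint interpolation error is largest, until
    the mesh holds `max_points` nodes. The weight exp(-decay * t) favours the
    early part of the horizon.
    """
    if n_initial < 1:
        raise ValueError("n_initial must be at least 1")
    mesh = [horizon * k / n_initial for k in range(n_initial + 1)]
    while len(mesh) < max_points:
        best_i, best_err = 0, -1.0
        for i in range(len(mesh) - 1):
            a, b = mesh[i], mesh[i + 1]
            m = 0.5 * (a + b)
            err = abs(f(m) - 0.5 * (f(a) + f(b))) * math.exp(-decay * a)
            if err > best_err:
                best_i, best_err = i, err
        a, b = mesh[best_i], mesh[best_i + 1]
        mesh.insert(best_i + 1, 0.5 * (a + b))  # bisect the worst interval
    return mesh


print(refine_mesh(lambda t: t * t, horizon=1.0, n_initial=2, max_points=5, decay=10.0))
# [0.0, 0.125, 0.25, 0.5, 1.0]
```

`max_points` plays the role of the bounded number of discretization points: accuracy is spent where the weighted error is largest (here, near the start of the horizon) instead of uniformly.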

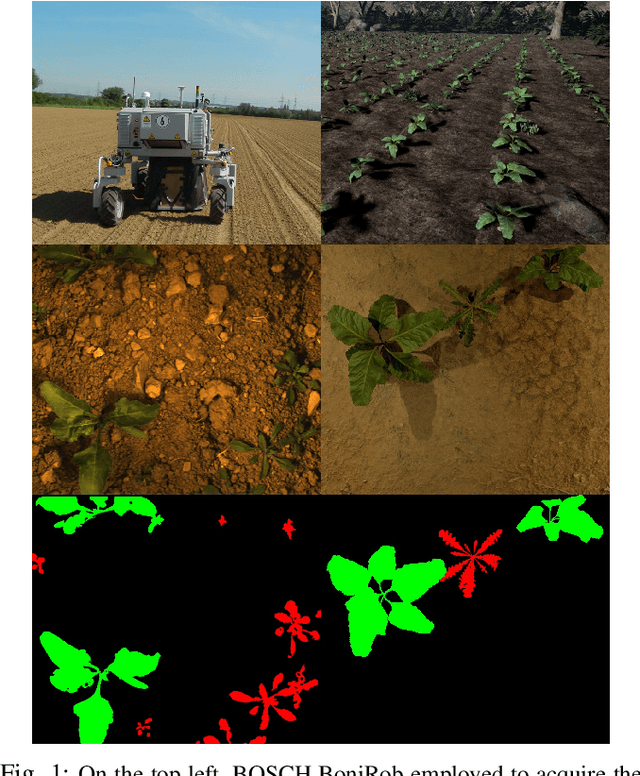

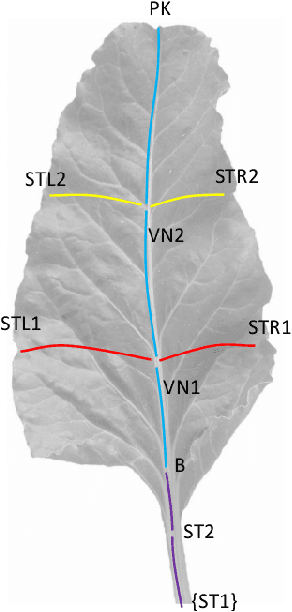

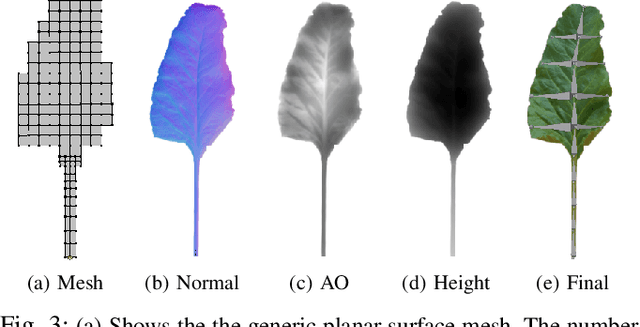

Automatic Model Based Dataset Generation for Fast and Accurate Crop and Weeds Detection

Aug 06, 2017

Abstract: Selective weeding is one of the key challenges in the field of agricultural robotics. To accomplish this task, a farm robot should be able to accurately detect plants and to classify them as crop or weeds. Most of the promising state-of-the-art approaches make use of appearance-based models trained on large annotated datasets. Unfortunately, creating large agricultural datasets with pixel-level annotations is an extremely time-consuming task, which hinders the use of data-driven techniques. In this paper, we address this problem by proposing a novel and effective approach that aims to dramatically reduce the human intervention needed to train the detection and classification algorithms. The idea is to procedurally generate large synthetic training datasets by randomizing the key features of the target environment (i.e., crop and weed species, type of soil, light conditions). More specifically, by tuning these model parameters and exploiting a few real-world textures, it is possible to render a large number of realistic views of an artificial agricultural scenario with no effort. The generated data can be used directly to train the model or to supplement real-world images. We validate the proposed methodology by using a modern deep-learning-based image segmentation architecture as a testbed. We compare the classification results obtained using both real and synthetic images as training data. The reported results confirm the effectiveness and the potential of our approach.
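The randomization step can be pictured as sampling one parameter set per rendered scene from a seeded random generator, so that a dataset is reproducible. This is a minimal sketch under assumptions: the option lists, value ranges and field names below are hypothetical placeholders (the abstract names the randomized features but not their values), and the renderer that would consume each `SceneParams` and emit pixel-level labels is not shown.

```python
import random
from dataclasses import dataclass
from typing import List

# Hypothetical option lists; the real species and soil textures are not given in the abstract.
CROP_SPECIES = ["crop_a", "crop_b"]
WEED_SPECIES = ["weed_a", "weed_b", "weed_c"]
SOIL_TYPES = ["dry", "wet", "clay"]


@dataclass
class SceneParams:
    crop_species: str
    weed_species: List[str]
    soil_type: str
    sun_elevation_deg: float  # hypothetical lighting parameter
    light_intensity: float    # hypothetical relative intensity multiplier


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one randomized scene configuration."""
    n_weeds = rng.randint(1, len(WEED_SPECIES))  # at least one weed species per scene
    return SceneParams(
        crop_species=rng.choice(CROP_SPECIES),
        weed_species=rng.sample(WEED_SPECIES, n_weeds),  # distinct species
        soil_type=rng.choice(SOIL_TYPES),
        sun_elevation_deg=rng.uniform(15.0, 75.0),
        light_intensity=rng.uniform(0.5, 1.5),
    )


def sample_dataset(n: int, seed: int = 0) -> List[SceneParams]:
    """Reproducible list of scene configurations: same seed, same dataset."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


for scene in sample_dataset(3, seed=42):
    print(scene)
```

Since the renderer places every plant itself, pixel-level labels come for free with each rendered view, which is where the reduction in manual annotation comes from.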

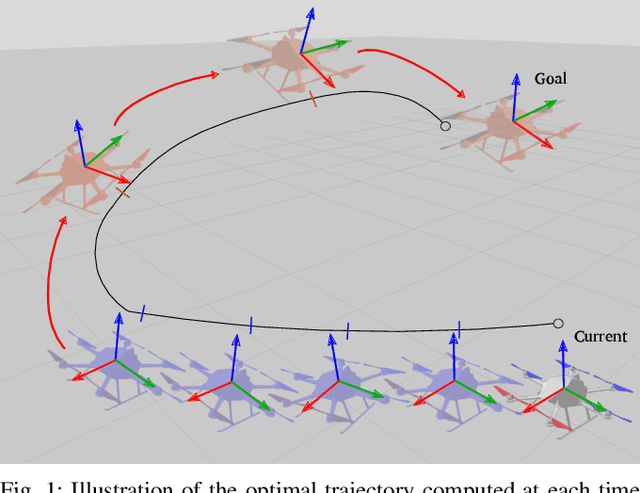

Effective Target Aware Visual Navigation for UAVs

Aug 02, 2017

Abstract: In this paper we propose an effective vision-based navigation method that allows a multirotor vehicle to simultaneously reach a desired goal pose in the environment while constantly facing a target object or landmark. Standard techniques such as Position-Based Visual Servoing (PBVS) and Image-Based Visual Servoing (IBVS) in some cases (e.g., while the multirotor is performing fast maneuvers) do not allow the vehicle to constantly maintain the line of sight with a target of interest. Instead, we compute the optimal trajectory by solving a non-linear optimization problem that minimizes the target re-projection error while meeting the UAV's dynamic constraints. The desired trajectory is then tracked by means of a real-time Non-linear Model Predictive Controller (NMPC): this implicitly allows the multirotor to satisfy both required constraints. We successfully evaluated the proposed approach in many real and simulated experiments, making an exhaustive comparison with a standard approach.
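The "target re-projection error" term can be illustrated with a standard pinhole camera model: transform the target into the camera frame, project it, and measure its squared pixel distance from a desired image location (the principal point by default). This is a minimal sketch, assuming a pinhole model with hypothetical intrinsics `fx, fy, cx, cy` and a camera-to-world rotation `R_wc`; it shows only the cost for one pose, not the trajectory optimization or the NMPC.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Sequence[float]
Mat3 = Sequence[Sequence[float]]


def world_to_camera(p_w: Vec3, cam_pos: Vec3, R_wc: Mat3) -> List[float]:
    """Express world point p_w in the camera frame: p_c = R_wc^T (p_w - cam_pos).

    R_wc is the 3x3 rotation from the camera frame to the world frame (row lists)."""
    d = [p_w[i] - cam_pos[i] for i in range(3)]
    return [sum(R_wc[r][c] * d[r] for r in range(3)) for c in range(3)]


def reprojection_error(p_c: Vec3, fx: float, fy: float, cx: float, cy: float,
                       desired_uv: Optional[Tuple[float, float]] = None) -> float:
    """Squared pixel distance between the projected target and the desired image point."""
    x, y, z = p_c
    if z <= 0.0:
        # Target behind the camera: not visible. A gradient-based solver would
        # need a smooth formulation here instead of an infinite cost.
        return float("inf")
    u = fx * x / z + cx
    v = fy * y / z + cy
    ud, vd = desired_uv if desired_uv is not None else (cx, cy)
    return (u - ud) ** 2 + (v - vd) ** 2


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
p_c = world_to_camera((1.0, 0.0, 5.0), (0.0, 0.0, 0.0), identity)
print(reprojection_error(p_c, fx=500.0, fy=500.0, cx=320.0, cy=240.0))  # 10000.0
```

Minimizing this quantity along the whole trajectory, subject to the vehicle dynamics, keeps the target near the image center even during aggressive maneuvers.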
