Chuan He

Bias-Variance Trade-off for Clipped Stochastic First-Order Methods: From Bounded Variance to Infinite Mean

Dec 16, 2025

Abstract:Stochastic optimization is fundamental to modern machine learning. Recent research has extended the study of stochastic first-order methods (SFOMs) from light-tailed to heavy-tailed noise, which frequently arises in practice, with clipping emerging as a key technique for controlling heavy-tailed gradients. Extensive theoretical advances have further shown that the oracle complexity of SFOMs depends on the tail index $\alpha$ of the noise. Nonetheless, existing complexity results often cover only the case $\alpha\in (1,2]$, that is, the regime where the noise has a finite mean, while the complexity bounds tend to infinity as $\alpha$ approaches $1$. This paper tackles the general case of noise with tail index $\alpha\in(0,2]$, covering regimes ranging from noise with bounded variance to noise with an infinite mean, the latter of which has been scarcely studied. Through a novel analysis of the bias-variance trade-off in gradient clipping, we show that when a symmetry measure of the noise tail is controlled, clipped SFOMs achieve improved complexity guarantees in the presence of heavy-tailed noise for any tail index $\alpha\in (0,2]$. Our analysis of the bias-variance trade-off not only yields new unified complexity guarantees for clipped SFOMs across this full range of tail indices, but is also straightforward to apply and can be combined with classical analyses under light-tailed noise to establish oracle complexity guarantees under heavy-tailed noise. Finally, numerical experiments validate our theoretical findings.
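
A minimal sketch of clipped SGD, assuming the usual norm-clipping operator $\mathrm{clip}_\tau(g)=\min\{1,\tau/\|g\|\}\,g$, a constant step size and threshold, and independent standard Cauchy noise in each coordinate (tail index $\alpha=1$, so the noise has no mean). The helper names, the toy objective $f(x)=\frac12\|x\|^2$, and all parameter values are hypothetical and are not the schedules analyzed in the paper. Clipping caps every step at length `lr * tau`, which bounds the variance of the update at the cost of a biased gradient estimate; because the noise here is symmetric, the clipped step should still point toward the minimizer on average in this toy setting.

```python
import math
import random


def clip(g, tau):
    """Scale g onto the Euclidean ball of radius tau (no-op if already inside)."""
    norm = math.sqrt(sum(gi * gi for gi in g))
    if norm <= tau:
        return list(g)
    return [gi * (tau / norm) for gi in g]


def cauchy_noise(rng):
    """Standard Cauchy sample: tail index alpha = 1, so the mean is undefined."""
    return math.tan(math.pi * (rng.random() - 0.5))


def clipped_sgd(grad, x0, steps, lr, tau, seed=0):
    """Run x <- x - lr * clip(grad(x) + noise, tau) with heavy-tailed (Cauchy) noise."""
    rng = random.Random(seed)
    x = list(x0)
    for _ in range(steps):
        g = [gi + cauchy_noise(rng) for gi in grad(x)]
        x = [xi - lr * ci for xi, ci in zip(x, clip(g, tau))]
    return x


# Toy problem: f(x) = 0.5 * ||x||^2, so grad f(x) = x and the minimizer is 0.
x_final = clipped_sgd(lambda v: list(v), [5.0, -3.0], steps=3000, lr=0.01, tau=1.0)
print(x_final)
```

Raising `tau` reduces the clipping bias but lets more of the heavy tail through; lowering it does the opposite, which is the trade-off the abstract refers to.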

DeMuon: A Decentralized Muon for Matrix Optimization over Graphs

Oct 01, 2025

Abstract:In this paper, we propose DeMuon, a method for decentralized matrix optimization over a given communication topology. DeMuon incorporates matrix orthogonalization via Newton-Schulz iterations (a technique inherited from its centralized predecessor, Muon) and employs gradient tracking to mitigate heterogeneity among local functions. Under heavy-tailed noise and additional mild assumptions, we establish the iteration complexity of DeMuon for reaching an approximate stochastic stationary point. This complexity result matches the best-known complexity bounds of centralized algorithms in terms of dependence on the target tolerance. To the best of our knowledge, DeMuon is the first direct extension of Muon to decentralized optimization over graphs with provable complexity guarantees. We conduct preliminary numerical experiments on decentralized transformer pretraining over graphs with varying degrees of connectivity. Our numerical results show that DeMuon clearly outperforms other popular decentralized algorithms across different network topologies.
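
The orthogonalization step that DeMuon inherits from Muon replaces a gradient matrix $G=U\Sigma V^\top$ by an approximation of its polar factor $UV^\top$ using only matrix products. The sketch below uses the classic cubic Newton-Schulz iteration $X\leftarrow \tfrac32 X-\tfrac12 XX^\top X$ after Frobenius normalization; public Muon implementations use a tuned higher-degree polynomial instead, and DeMuon's gradient-tracking and communication steps are not shown. Function names are hypothetical, and plain Python lists keep the example dependency-free.

```python
import math


def matmul(a, b):
    """Product of two dense matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def newton_schulz_orthogonalize(g, steps=30):
    """Approximate the polar factor U V^T of a full-rank matrix g = U S V^T.

    Each iteration maps every singular value s to 1.5 s - 0.5 s^3, which drives
    s toward 1 whenever 0 < s < sqrt(3); Frobenius normalization ensures s <= 1.
    """
    fro = math.sqrt(sum(x * x for row in g for x in row))
    x = [[v / fro for v in row] for row in g]
    for _ in range(steps):
        xxtx = matmul(matmul(x, transpose(x)), x)
        x = [[1.5 * u - 0.5 * w for u, w in zip(rx, rw)] for rx, rw in zip(x, xxtx)]
    return x


q = newton_schulz_orthogonalize([[1.0, 2.0], [3.0, 4.0]])
print(matmul(q, transpose(q)))  # close to the 2x2 identity
```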

Complexity of normalized stochastic first-order methods with momentum under heavy-tailed noise

Jun 12, 2025

Abstract:In this paper, we propose practical normalized stochastic first-order methods with Polyak momentum, multi-extrapolated momentum, and recursive momentum for solving unconstrained optimization problems. These methods employ dynamically updated algorithmic parameters and do not require explicit knowledge of problem-dependent quantities such as the Lipschitz constant or noise bound. We establish first-order oracle complexity results for finding approximate stochastic stationary points under heavy-tailed noise and weak average smoothness conditions, both of which are weaker than the commonly used bounded variance and mean-squared smoothness assumptions. Our complexity bounds either improve upon or match the best-known results in the literature. Numerical experiments are presented to demonstrate the practical effectiveness of the proposed methods.
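
A minimal sketch of normalized SGD with Polyak momentum, $m_t=(1-\beta)m_{t-1}+\beta g_t$ and $x_{t+1}=x_t-\eta_t m_t/\|m_t\|$, run on the toy objective $f(x)=\frac12\|x\|^2$. The step-size rule $\eta_t=\eta_0/\sqrt{t+1}$, the fixed $\beta$, and the symmetric Pareto-type noise with tail index 1.5 (finite mean, infinite variance) are hypothetical choices; the paper's dynamically updated parameters and its multi-extrapolated and recursive momentum variants are not reproduced. Normalization makes every step exactly $\eta_t$ long, so a single huge noise sample cannot throw the iterate arbitrarily far.

```python
import math
import random


def heavy_tailed_noise(rng, alpha=1.5):
    """Symmetric noise with P(|xi| > s) = (1 + s)^(-alpha).

    For 1 < alpha < 2 the mean is zero but the variance is infinite.
    """
    sign = 1.0 if rng.random() < 0.5 else -1.0
    return sign * (rng.paretovariate(alpha) - 1.0)


def normalized_sgd_polyak(grad, x0, steps, eta0=0.5, beta=0.1, seed=0):
    """Normalized SGD with Polyak momentum; step size eta0 / sqrt(t + 1) (hypothetical schedule)."""
    rng = random.Random(seed)
    x = list(x0)
    m = [0.0] * len(x)
    for t in range(steps):
        g = [gi + heavy_tailed_noise(rng) for gi in grad(x)]
        m = [(1.0 - beta) * mi + beta * gi for mi, gi in zip(m, g)]  # Polyak momentum
        norm = math.sqrt(sum(mi * mi for mi in m))
        if norm > 0.0:
            eta = eta0 / math.sqrt(t + 1)
            x = [xi - eta * mi / norm for xi, mi in zip(x, m)]  # unit-length direction
    return x


# Toy problem: f(x) = 0.5 * ||x||^2 with minimizer 0.
x_final = normalized_sgd_polyak(lambda v: list(v), [5.0, -3.0], steps=3000)
print(x_final)
```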

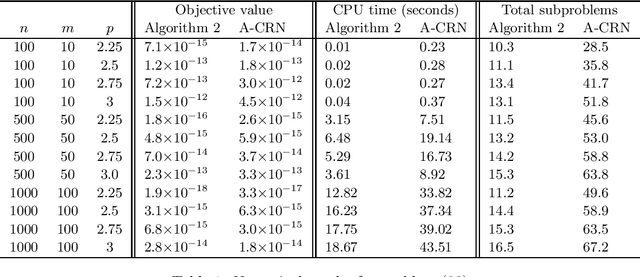

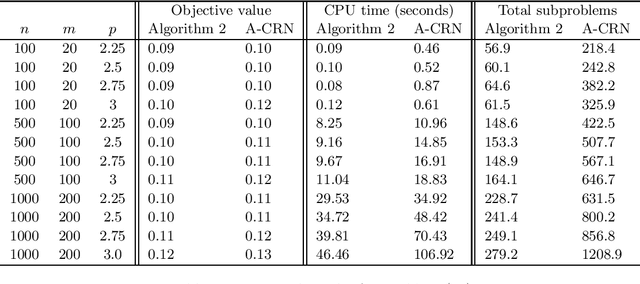

Stochastic first-order methods with multi-extrapolated momentum for highly smooth unconstrained optimization

Dec 19, 2024

Abstract:In this paper we consider an unconstrained stochastic optimization problem where the objective function exhibits a high order of smoothness. In particular, we propose a stochastic first-order method (SFOM) with multi-extrapolated momentum, in which multiple extrapolations are performed in each iteration, followed by a momentum step based on these extrapolations. We show that our proposed SFOM with multi-extrapolated momentum can accelerate optimization by exploiting the high-order smoothness of the objective function $f$. Specifically, assuming that the gradient and the $p$th-order derivative of $f$ are Lipschitz continuous for some $p\ge2$, and under some additional mild assumptions, we establish that our method achieves a sample complexity of $\widetilde{\mathcal{O}}(\epsilon^{-(3p+1)/p})$ for finding a point $x$ satisfying $\mathbb{E}[\|\nabla f(x)\|]\le\epsilon$. To the best of our knowledge, our method is the first SFOM to leverage arbitrary order smoothness of the objective function for acceleration, resulting in a sample complexity that strictly improves upon the best-known results without assuming the average smoothness condition. Finally, preliminary numerical experiments validate the practical performance of our method and corroborate our theoretical findings.
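
The exponent in the stated bound can be rewritten as $(3p+1)/p = 3 + 1/p$, so it equals $7/2$ at $p=2$ and decreases toward 3 as the smoothness order $p$ grows. The small helper below (a hypothetical name, using exact rational arithmetic) simply tabulates that formula.

```python
from fractions import Fraction


def sample_complexity_exponent(p):
    """Exponent e in the O~(eps^-e) bound from the abstract, e = (3p + 1) / p, for integer p >= 2."""
    if p < 2:
        raise ValueError("the bound is stated for p >= 2")
    return Fraction(3 * p + 1, p)


for p in (2, 3, 4, 10):
    print(p, sample_complexity_exponent(p))  # 7/2, 10/3, 13/4, 31/10
```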

Stochastic interior-point methods for smooth conic optimization with applications

Dec 17, 2024

Abstract:Conic optimization plays a crucial role in many machine learning (ML) problems. However, practical algorithms for conic constrained ML problems with large datasets are often limited to specific use cases, as stochastic algorithms for general conic optimization remain underdeveloped. To fill this gap, we introduce a stochastic interior-point method (SIPM) framework for general conic optimization, along with four novel SIPM variants leveraging distinct stochastic gradient estimators. Under mild assumptions, we establish the global convergence rates of our proposed SIPMs, which, up to a logarithmic factor, match the best-known rates in stochastic unconstrained optimization. Finally, our numerical experiments on robust linear regression, multi-task relationship learning, and clustering data streams demonstrate the effectiveness and efficiency of our approach.
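
A generic illustration of the interior-point idea, assuming the simplest cone (the nonnegative orthant $\mathbb{R}^n_+$) with the log-barrier $-\mu\sum_i\log x_i$: each stochastic step follows the barrier-scaled direction $d=-X^2(g-\mu/x)$ with $X=\mathrm{diag}(x)$, a fraction-to-the-boundary rule keeps the iterate strictly interior, and $\mu$ is shrunk geometrically. This is not one of the paper's four SIPM variants, which use more refined stochastic gradient estimators and handle general cones; the function name, noise model, and parameter values are hypothetical.

```python
import random


def stochastic_barrier_orthant(grad_sample, x0, mu0=1.0, shrink=0.7,
                               outer=30, inner=200, lr=0.05, seed=0):
    """Stochastic log-barrier sketch for min E[f(x)] subject to x >= 0 (hypothetical)."""
    rng = random.Random(seed)
    x = list(x0)  # must lie strictly inside the cone: every entry > 0
    mu = mu0
    for _ in range(outer):
        for _ in range(inner):
            g = grad_sample(x, rng)  # noisy gradient of f at x
            # Barrier-scaled direction d = -X^2 (g - mu / x) with X = diag(x).
            d = [-(xi * xi) * gi + mu * xi for xi, gi in zip(x, g)]
            # Fraction-to-the-boundary rule: each x_i keeps at least 10% of its value.
            t = lr
            for xi, di in zip(x, d):
                if di < 0.0:
                    t = min(t, 0.9 * xi / -di)
            x = [xi + t * di for xi, di in zip(x, d)]
        mu *= shrink  # drive the barrier parameter toward zero
    return x


# Toy problem: minimize E[0.5 * ||x - c||^2] over x >= 0; the solution is (1, 0).
c = [1.0, -1.0]


def noisy_grad(x, rng):
    return [xi - ci + rng.gauss(0.0, 0.1) for xi, ci in zip(x, c)]


x_final = stochastic_barrier_orthant(noisy_grad, [1.0, 1.0])
print(x_final)
```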

Multi-Grained Preference Enhanced Transformer for Multi-Behavior Sequential Recommendation

Nov 19, 2024

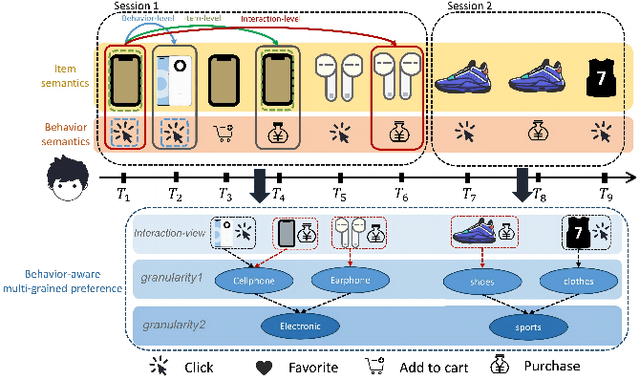

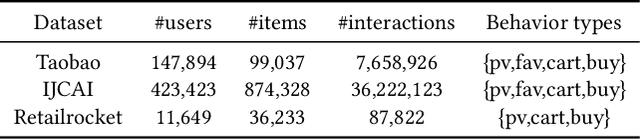

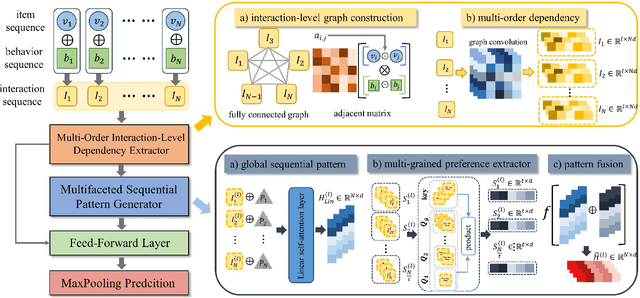

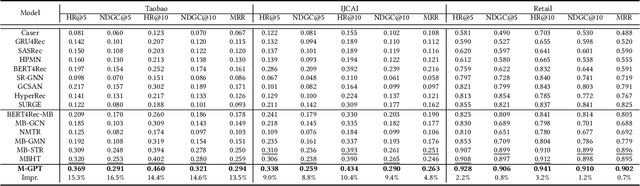

Abstract:Sequential recommendation (SR) aims to predict the next item a user will purchase based on the user's dynamic preferences, learned from historical user-item interactions. To improve recommendation performance, learning dynamic, heterogeneous cross-type behavior dependencies is indispensable for recommender systems. However, several challenges remain in Multi-Behavior Sequential Recommendation (MBSR). On the one hand, existing methods model heterogeneous multi-behavior dependencies only at the behavior level or item level, and modelling interaction-level dependencies remains a challenge. On the other hand, dynamic multi-grained behavior-aware preferences, which reflect interaction-aware sequential patterns, are hard to capture in interaction sequences. To tackle these challenges, we propose a Multi-Grained Preference enhanced Transformer framework (M-GPT). First, M-GPT constructs an interaction-level graph of historical cross-typed interactions in a sequence. Graph convolution is then performed repeatedly on this graph to derive interaction-level multi-behavior dependency representations, so that the complex correlations between historical cross-typed interactions at specific orders can be learned. Second, a novel multi-scale transformer architecture equipped with multi-grained user preference extraction is proposed to encode the interaction-aware sequential pattern, enhanced by capturing temporal behavior-aware multi-grained preferences. Experiments on real-world datasets indicate that M-GPT consistently outperforms various state-of-the-art recommendation methods.
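
The abstract does not say how the edges of the interaction-level graph are weighted, so the sketch below takes the adjacency matrix as a given (hypothetical) input and shows only the repeated graph-convolution step: features are propagated with the symmetric normalization $\hat A=D^{-1/2}(A+I)D^{-1/2}$ common in graph convolutional networks, and the representation after each order $k=1,\dots,K$ is kept. Learned weight matrices and nonlinearities, which a real model would include, are omitted, and the function names are hypothetical.

```python
import math


def normalized_adjacency(adj):
    """Symmetric normalization D^{-1/2} (A + I) D^{-1/2} used by graph convolutional networks."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def propagate(adj, feats, order):
    """Return [A_norm^1 H, ..., A_norm^order H]: one representation per propagation order."""
    a_norm = normalized_adjacency(adj)
    outputs, h = [], feats
    for _ in range(order):
        h = [[sum(a_norm[i][k] * h[k][d] for k in range(len(h))) for d in range(len(h[0]))]
             for i in range(len(h))]
        outputs.append(h)
    return outputs


# Hypothetical sequence of three interactions linked as a path (0 - 1 - 2), one-hot features.
adj = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
feats = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
for k, h in enumerate(propagate(adj, feats, order=2), start=1):
    print(k, h)
```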

Newton-CG methods for nonconvex unconstrained optimization with Hölder continuous Hessian

Nov 22, 2023

Abstract:In this paper we consider a nonconvex unconstrained optimization problem minimizing a twice differentiable objective function with Hölder continuous Hessian. Specifically, we first propose a Newton-conjugate gradient (Newton-CG) method for finding an approximate first-order stationary point (FOSP) of this problem, assuming the associated Hölder parameters are explicitly known. We then develop a parameter-free Newton-CG method that requires no prior knowledge of these parameters. To the best of our knowledge, this method is the first parameter-free second-order method achieving the best-known iteration and operation complexity for finding an approximate FOSP of this problem. Furthermore, we propose a Newton-CG method for finding an approximate second-order stationary point (SOSP) of the considered problem with high probability and establish its iteration and operation complexity. Finally, we present preliminary numerical results to demonstrate the superior practical performance of our parameter-free Newton-CG method over a well-known regularized Newton method.
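
Newton-CG methods compute a search direction by running conjugate gradient on the Newton system $\nabla^2 f(x)\,d=-\nabla f(x)$ and stopping early if CG encounters a direction of nonpositive curvature, which can then be used to move away from saddle regions. The sketch below is plain matrix-free CG (via a Hessian-vector product) with such a negative-curvature exit; the paper's capped CG subroutine, regularization, and parameter-free updates for the Hölder constants are not reproduced, and the function name is hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cg_with_negative_curvature(hess_vec, g, tol=1e-10, max_iter=100):
    """Run CG on H d = -g; return ("solution", d) or ("negative_curvature", p) if p^T H p <= 0."""
    d = [0.0] * len(g)
    r = [-gi for gi in g]  # residual -g - H d at d = 0
    p = list(r)
    rr = dot(r, r)
    for _ in range(max_iter):
        if math.sqrt(rr) <= tol:
            break
        hp = hess_vec(p)
        curv = dot(p, hp)
        if curv <= 0.0:
            return "negative_curvature", p  # H is not positive definite along p
        alpha = rr / curv
        d = [di + alpha * pi for di, pi in zip(d, p)]
        r = [ri - alpha * hpi for ri, hpi in zip(r, hp)]
        rr_new = dot(r, r)
        p = [ri + (rr_new / rr) * pi for ri, pi in zip(r, p)]
        rr = rr_new
    return "solution", d


# Positive definite Hessian: CG returns the Newton direction -H^{-1} g = (-1/11, -7/11).
print(cg_with_negative_curvature(lambda v: [4 * v[0] + v[1], v[0] + 3 * v[1]], [1.0, 2.0]))
# Indefinite Hessian diag(1, -2): the first CG direction already has negative curvature.
print(cg_with_negative_curvature(lambda v: [v[0], -2 * v[1]], [1.0, 1.0]))
```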

A proximal augmented Lagrangian based algorithm for federated learning with global and local convex conic constraints

Oct 16, 2023

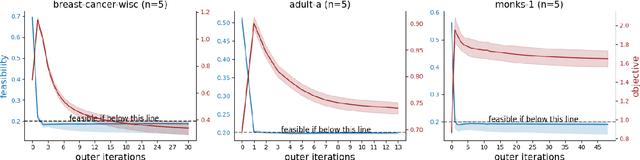

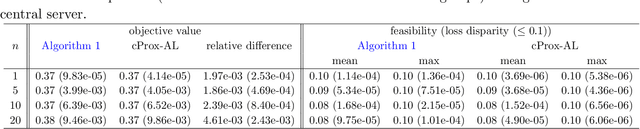

Abstract:This paper considers federated learning (FL) with constraints, where the central server and all local clients collectively minimize a sum of convex local objective functions subject to global and local convex conic constraints. To train the model without moving local data from clients to the central server, we propose an FL framework in which each local client performs multiple updates using the local objective and local constraint, while the central server handles the global constraint and performs aggregation based on the updated local models. In particular, we develop a proximal augmented Lagrangian (AL) based algorithm for FL with global and local convex conic constraints. The subproblems arising in this algorithm are solved by an inexact alternating direction method of multipliers (ADMM) in a federated fashion. Under a local Lipschitz condition and mild assumptions, we establish the worst-case complexity bounds of the proposed algorithm for finding an approximate KKT solution. To the best of our knowledge, this work proposes the first algorithm for FL with global and local constraints. Our numerical experiments demonstrate the practical advantages of our algorithm in performing Neyman-Pearson classification and enhancing model fairness in the context of FL.
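
To illustrate the proximal augmented Lagrangian mechanism in its simplest centralized form, the sketch below handles a single linear inequality constraint $a^\top x\le b$ (the cone $\mathbb{R}_+$). Each outer iteration approximately minimizes $f(x)+\frac{1}{2\rho}\big(\max\{0,\lambda+\rho(a^\top x-b)\}^2-\lambda^2\big)+\frac{1}{2\beta}\|x-x^k\|^2$ by gradient descent and then updates $\lambda\leftarrow\max\{0,\lambda+\rho(a^\top x-b)\}$. The federated split across clients and the paper's inexact ADMM subproblem solver are omitted; names and parameter values are hypothetical.

```python
def proximal_al(grad_f, a, b, x0, rho=10.0, beta=1.0, outer=50, inner=500, step=0.04):
    """Proximal augmented Lagrangian sketch for min f(x) s.t. a^T x <= b (hypothetical parameters)."""
    x, lam = list(x0), 0.0
    for _ in range(outer):
        center = list(x)  # proximal center x^k
        for _ in range(inner):  # gradient descent on the proximal AL subproblem
            viol = sum(ai * xi for ai, xi in zip(a, x)) - b
            shifted = max(0.0, lam + rho * viol)
            gf = grad_f(x)
            x = [xi - step * (gi + shifted * ai + (xi - ci) / beta)
                 for xi, gi, ai, ci in zip(x, gf, a, center)]
        viol = sum(ai * xi for ai, xi in zip(a, x)) - b
        lam = max(0.0, lam + rho * viol)  # multiplier update, projected onto R_+
    return x, lam


# Toy problem: min 0.5 * ||x - (2, 2)||^2 s.t. x1 + x2 <= 2; solution x = (1, 1), lambda = 1.
x_opt, lam_opt = proximal_al(lambda x: [xi - 2.0 for xi in x], [1.0, 1.0], 2.0, [0.0, 0.0])
print(x_opt, lam_opt)
```

The step size 0.04 is chosen below $2/L$ with $L = 1 + 1/\beta + \rho\|a\|^2 = 22$ for this toy problem, so the inner gradient descent is stable.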

A Newton-CG based barrier-augmented Lagrangian method for general nonconvex conic optimization

Jan 10, 2023

Abstract:In this paper we consider finding an approximate second-order stationary point (SOSP) of general nonconvex conic optimization that minimizes a twice differentiable function subject to nonlinear equality constraints and also a convex conic constraint. In particular, we propose a Newton-conjugate gradient (Newton-CG) based barrier-augmented Lagrangian method for finding an approximate SOSP of this problem. Under some mild assumptions, we show that our method enjoys a total inner iteration complexity of $\widetilde{\cal O}(\epsilon^{-11/2})$ and an operation complexity of $\widetilde{\cal O}(\epsilon^{-11/2}\min\{n,\epsilon^{-5/4}\})$ for finding an $(\epsilon,\sqrt{\epsilon})$-SOSP of general nonconvex conic optimization with high probability. Moreover, under a constraint qualification, these complexity bounds are improved to $\widetilde{\cal O}(\epsilon^{-7/2})$ and $\widetilde{\cal O}(\epsilon^{-7/2}\min\{n,\epsilon^{-3/4}\})$, respectively. To the best of our knowledge, this is the first study on the complexity of finding an approximate SOSP of general nonconvex conic optimization. Preliminary numerical results are presented to demonstrate the superiority of the proposed method over first-order methods in terms of solution quality.
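
Taking the larger branch of the minimum (large $n$) makes the stated operation complexities explicit; this is only arithmetic on the exponents quoted above, not an additional result. For smaller $n$ the bounds read $\widetilde{\cal O}(n\,\epsilon^{-11/2})$ and $\widetilde{\cal O}(n\,\epsilon^{-7/2})$, respectively.

```latex
\widetilde{\cal O}\big(\epsilon^{-11/2}\min\{n,\epsilon^{-5/4}\}\big)
  = \widetilde{\cal O}\big(\epsilon^{-11/2-5/4}\big)
  = \widetilde{\cal O}\big(\epsilon^{-27/4}\big)
  \quad\text{if } n \ge \epsilon^{-5/4},
\qquad
\widetilde{\cal O}\big(\epsilon^{-7/2}\min\{n,\epsilon^{-3/4}\}\big)
  = \widetilde{\cal O}\big(\epsilon^{-7/2-3/4}\big)
  = \widetilde{\cal O}\big(\epsilon^{-17/4}\big)
  \quad\text{if } n \ge \epsilon^{-3/4}.
```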

A Newton-CG based augmented Lagrangian method for finding a second-order stationary point of nonconvex equality constrained optimization with complexity guarantees

Jan 09, 2023

Abstract:In this paper we consider finding a second-order stationary point (SOSP) of nonconvex equality constrained optimization when a nearly feasible point is known. In particular, we first propose a new Newton-CG method for finding an approximate SOSP of unconstrained optimization and show that it enjoys a substantially better complexity than the Newton-CG method [56]. We then propose a Newton-CG based augmented Lagrangian (AL) method for finding an approximate SOSP of nonconvex equality constrained optimization, in which the proposed Newton-CG method is used as a subproblem solver. We show that under a generalized linear independence constraint qualification (GLICQ), our AL method enjoys a total inner iteration complexity of $\widetilde{\cal O}(\epsilon^{-7/2})$ and an operation complexity of $\widetilde{\cal O}(\epsilon^{-7/2}\min\{n,\epsilon^{-3/4}\})$ for finding an $(\epsilon,\sqrt{\epsilon})$-SOSP of nonconvex equality constrained optimization with high probability, which are significantly better than those achieved by the proximal AL method [60]. Moreover, we show that it has a total inner iteration complexity of $\widetilde{\cal O}(\epsilon^{-11/2})$ and an operation complexity of $\widetilde{\cal O}(\epsilon^{-11/2}\min\{n,\epsilon^{-5/4}\})$ when the GLICQ does not hold. To the best of our knowledge, all the complexity results obtained in this paper are new for finding an approximate SOSP of nonconvex equality constrained optimization with high probability. Preliminary numerical results also demonstrate the superiority of our proposed methods over the ones in [56,60].
