Chien-Ju Ho

Adaptive Recruitment Resource Allocation to Improve Cohort Representativeness in Participatory Biomedical Datasets

Aug 02, 2024Abstract:Large participatory biomedical studies, studies that recruit individuals to join a dataset, are gaining popularity and investment, especially for analysis by modern AI methods. Because they purposively recruit participants, these studies are uniquely able to address a lack of historical representation, an issue that has affected many biomedical datasets. In this work, we define representativeness as the similarity to a target population distribution of a set of attributes and our goal is to mirror the U.S. population across distributions of age, gender, race, and ethnicity. Many participatory studies recruit at several institutions, so we introduce a computational approach to adaptively allocate recruitment resources among sites to improve representativeness. In simulated recruitment of 10,000-participant cohorts from medical centers in the STAR Clinical Research Network, we show that our approach yields a more representative cohort than existing baselines. Thus, we highlight the value of computational modeling in guiding recruitment efforts.

Dataset Representativeness and Downstream Task Fairness

Jun 28, 2024

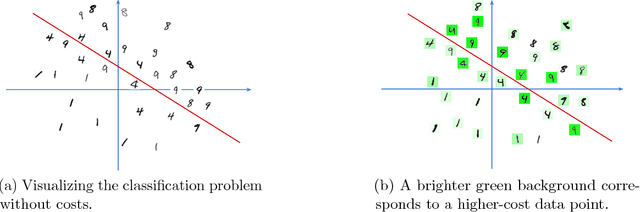

Abstract:Our society collects data on people for a wide range of applications, from building a census for policy evaluation to running meaningful clinical trials. To collect data, we typically sample individuals with the goal of accurately representing a population of interest. However, current sampling processes often collect data opportunistically from data sources, which can lead to datasets that are biased and not representative, i.e., the collected dataset does not accurately reflect the distribution of demographics of the true population. This is a concern because subgroups within the population can be under- or over-represented in a dataset, which may harm generalizability and lead to an unequal distribution of benefits and harms from downstream tasks that use such datasets (e.g., algorithmic bias in medical decision-making algorithms). In this paper, we assess the relationship between dataset representativeness and group-fairness of classifiers trained on that dataset. We demonstrate that there is a natural tension between dataset representativeness and classifier fairness; empirically we observe that training datasets with better representativeness can frequently result in classifiers with higher rates of unfairness. We provide some intuition as to why this occurs via a set of theoretical results in the case of univariate classifiers. We also find that over-sampling underrepresented groups can result in classifiers which exhibit greater bias to those groups. Lastly, we observe that fairness-aware sampling strategies (i.e., those which are specifically designed to select data with high downstream fairness) will often over-sample members of majority groups. These results demonstrate that the relationship between dataset representativeness and downstream classifier fairness is complex; balancing these two quantities requires special care from both model- and dataset-designers.

On the Utility of Accounting for Human Beliefs about AI Behavior in Human-AI Collaboration

Jun 10, 2024Abstract:To enable effective human-AI collaboration, merely optimizing AI performance while ignoring humans is not sufficient. Recent research has demonstrated that designing AI agents to account for human behavior leads to improved performance in human-AI collaboration. However, a limitation of most existing approaches is their assumption that human behavior is static, irrespective of AI behavior. In reality, humans may adjust their action plans based on their observations of AI behavior. In this paper, we address this limitation by enabling a collaborative AI agent to consider the beliefs of its human partner, i.e., what the human partner thinks the AI agent is doing, and design its action plan to facilitate easier collaboration with its human partner. Specifically, we developed a model of human beliefs that accounts for how humans reason about the behavior of their AI partners. Based on this belief model, we then developed an AI agent that considers both human behavior and human beliefs in devising its strategy for working with humans. Through extensive real-world human-subject experiments, we demonstrated that our belief model more accurately predicts humans' beliefs about AI behavior. Moreover, we showed that our design of AI agents that accounts for human beliefs enhances performance in human-AI collaboration.

Data-Driven Goal Recognition Design for General Behavioral Agents

Apr 03, 2024Abstract:Goal recognition design aims to make limited modifications to decision-making environments with the goal of making it easier to infer the goals of agents acting within those environments. Although various research efforts have been made in goal recognition design, existing approaches are computationally demanding and often assume that agents are (near-)optimal in their decision-making. To address these limitations, we introduce a data-driven approach to goal recognition design that can account for agents with general behavioral models. Following existing literature, we use worst-case distinctiveness ($\textit{wcd}$) as a measure of the difficulty in inferring the goal of an agent in a decision-making environment. Our approach begins by training a machine learning model to predict the $\textit{wcd}$ for a given environment and the agent behavior model. We then propose a gradient-based optimization framework that accommodates various constraints to optimize decision-making environments for enhanced goal recognition. Through extensive simulations, we demonstrate that our approach outperforms existing methods in reducing $\textit{wcd}$ and enhancing runtime efficiency in conventional setups, and it also adapts to scenarios not previously covered in the literature, such as those involving flexible budget constraints, more complex environments, and suboptimal agent behavior. Moreover, we have conducted human-subject experiments which confirm that our method can create environments that facilitate efficient goal recognition from real-world human decision-makers.

Performative Prediction with Bandit Feedback: Learning through Reparameterization

May 08, 2023

Abstract:Performative prediction, as introduced by Perdomo et al. (2020), is a framework for studying social prediction in which the data distribution itself changes in response to the deployment of a model. Existing work on optimizing accuracy in this setting hinges on two assumptions that are easily violated in practice: that the performative risk is convex over the deployed model, and that the mapping from the model to the data distribution is known to the model designer in advance. In this paper, we initiate the study of tractable performative prediction problems that do not require these assumptions. To tackle this more challenging setting, we develop a two-level zeroth-order optimization algorithm, where one level aims to compute the distribution map, and the other level reparameterizes the performative prediction objective as a function of the induced data distribution. Under mild conditions, this reparameterization allows us to transform the non-convex objective into a convex one and achieve provable regret guarantees. In particular, we provide a regret bound that is sublinear in the total number of performative samples taken and only polynomial in the dimension of the model parameter.

Optimal Query Complexity of Secure Stochastic Convex Optimization

Apr 05, 2021

Abstract:We study the secure stochastic convex optimization problem. A learner aims to learn the optimal point of a convex function through sequentially querying a (stochastic) gradient oracle. In the meantime, there exists an adversary who aims to free-ride and infer the learning outcome of the learner from observing the learner's queries. The adversary observes only the points of the queries but not the feedback from the oracle. The goal of the learner is to optimize the accuracy, i.e., obtaining an accurate estimate of the optimal point, while securing her privacy, i.e., making it difficult for the adversary to infer the optimal point. We formally quantify this tradeoff between learner's accuracy and privacy and characterize the lower and upper bounds on the learner's query complexity as a function of desired levels of accuracy and privacy. For the analysis of lower bounds, we provide a general template based on information theoretical analysis and then tailor the template to several families of problems, including stochastic convex optimization and (noisy) binary search. We also present a generic secure learning protocol that achieves the matching upper bound up to logarithmic factors.

Fair Bandit Learning with Delayed Impact of Actions

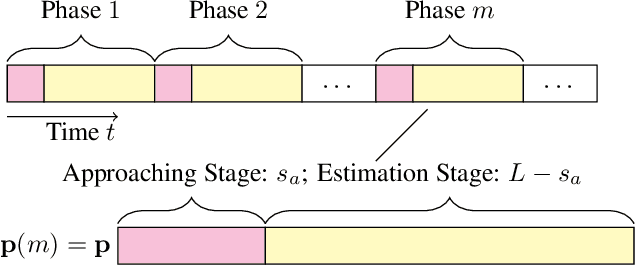

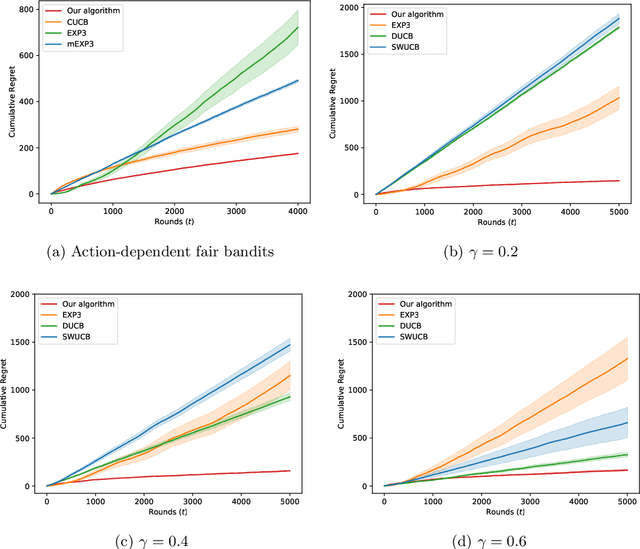

Feb 24, 2020

Abstract:Algorithmic fairness has been studied mostly in a static setting where the implicit assumptions are that the frequencies of historically made decisions do not impact the problem structure in subsequent future. However, for example, the capability to pay back a loan for people in a certain group might depend on historically how frequently that group has been approved loan applications. If banks keep rejecting loan applications to people in a disadvantaged group, it could create a feedback loop and further damage the chance of getting loans for people in that group. This challenge has been noted in several recent works but is under-explored in a more generic sequential learning setting. In this paper, we formulate this delayed and long-term impact of actions within the context of multi-armed bandits (MAB). We generalize the classical bandit setting to encode the dependency of this action "bias" due to the history of the learning. Our goal is to learn to maximize the collected utilities over time while satisfying fairness constraints imposed over arms' utilities, which again depend on the decision they have received. We propose an algorithm that achieves a regret of $\tilde{\mathcal{O}}(KT^{2/3})$ and show a matching regret lower bound of $\Omega(KT^{2/3})$, where $K$ is the number of arms and $T$ denotes the learning horizon. Our results complement the bandit literature by adding techniques to deal with actions with long-term impacts and have implications in designing fair algorithms.

Adaptive Contract Design for Crowdsourcing Markets: Bandit Algorithms for Repeated Principal-Agent Problems

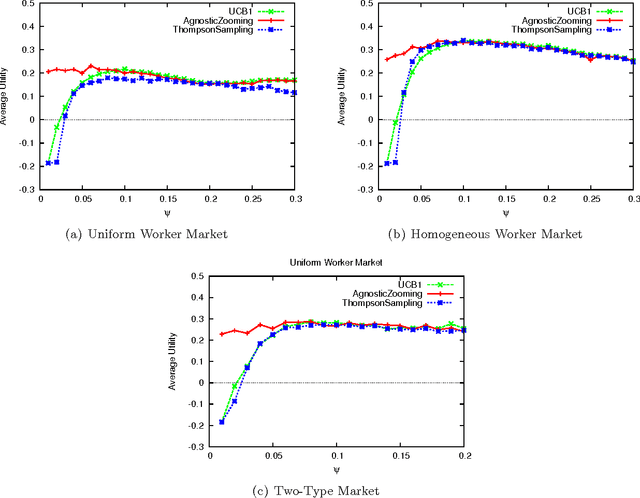

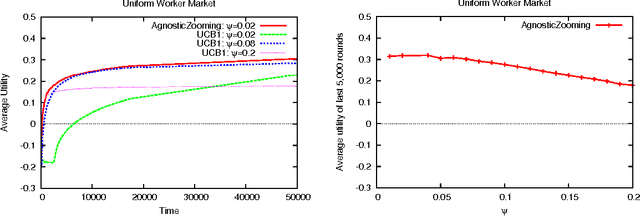

Sep 02, 2015

Abstract:Crowdsourcing markets have emerged as a popular platform for matching available workers with tasks to complete. The payment for a particular task is typically set by the task's requester, and may be adjusted based on the quality of the completed work, for example, through the use of "bonus" payments. In this paper, we study the requester's problem of dynamically adjusting quality-contingent payments for tasks. We consider a multi-round version of the well-known principal-agent model, whereby in each round a worker makes a strategic choice of the effort level which is not directly observable by the requester. In particular, our formulation significantly generalizes the budget-free online task pricing problems studied in prior work. We treat this problem as a multi-armed bandit problem, with each "arm" representing a potential contract. To cope with the large (and in fact, infinite) number of arms, we propose a new algorithm, AgnosticZooming, which discretizes the contract space into a finite number of regions, effectively treating each region as a single arm. This discretization is adaptively refined, so that more promising regions of the contract space are eventually discretized more finely. We analyze this algorithm, showing that it achieves regret sublinear in the time horizon and substantially improves over non-adaptive discretization (which is the only competing approach in the literature). Our results advance the state of art on several different topics: the theory of crowdsourcing markets, principal-agent problems, multi-armed bandits, and dynamic pricing.

Low-Cost Learning via Active Data Procurement

Jun 06, 2015

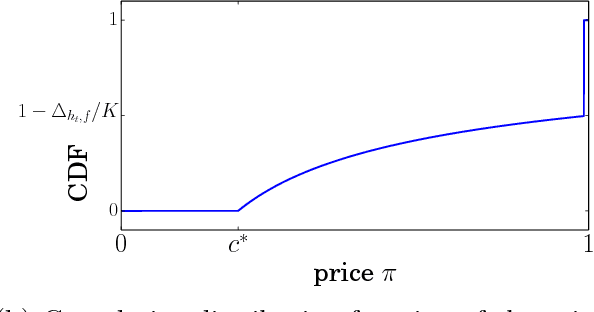

Abstract:We design mechanisms for online procurement of data held by strategic agents for machine learning tasks. The challenge is to use past data to actively price future data and give learning guarantees even when an agent's cost for revealing her data may depend arbitrarily on the data itself. We achieve this goal by showing how to convert a large class of no-regret algorithms into online posted-price and learning mechanisms. Our results in a sense parallel classic sample complexity guarantees, but with the key resource being money rather than quantity of data: With a budget constraint $B$, we give robust risk (predictive error) bounds on the order of $1/\sqrt{B}$. Because we use an active approach, we can often guarantee to do significantly better by leveraging correlations between costs and data. Our algorithms and analysis go through a model of no-regret learning with $T$ arriving pairs (cost, data) and a budget constraint of $B$. Our regret bounds for this model are on the order of $T/\sqrt{B}$ and we give lower bounds on the same order.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge