Brett Levac

DeepInverse: A Python package for solving imaging inverse problems with deep learning

May 26, 2025

Abstract:DeepInverse is an open-source PyTorch-based library for solving imaging inverse problems. The library covers all crucial steps in image reconstruction from the efficient implementation of forward operators (e.g., optics, MRI, tomography), to the definition and resolution of variational problems and the design and training of advanced neural network architectures. In this paper, we describe the main functionality of the library and discuss the main design choices.

Diffusion Probabilistic Generative Models for Accelerated, in-NICU Permanent Magnet Neonatal MRI

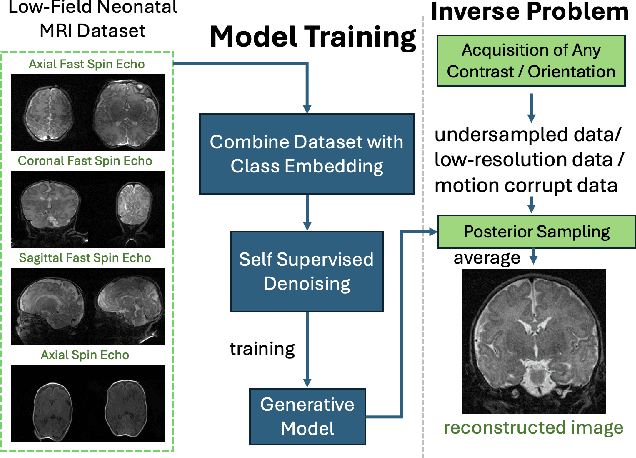

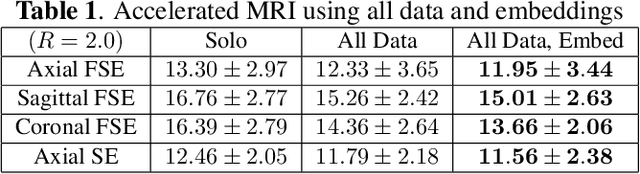

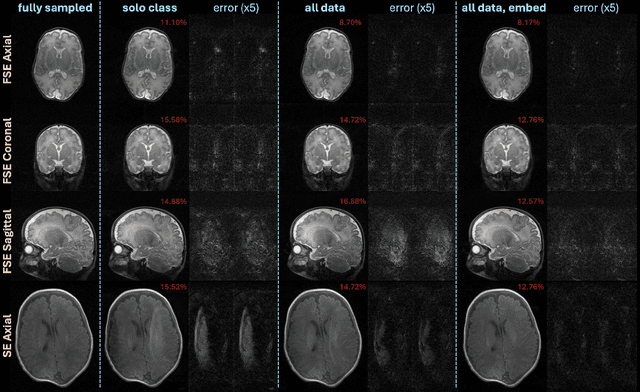

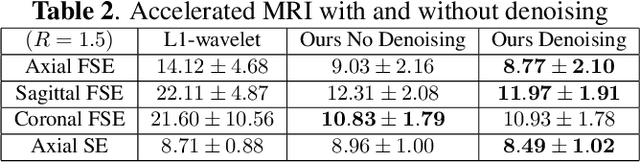

May 21, 2025

Abstract:Purpose: Magnetic Resonance Imaging (MRI) enables non-invasive assessment of brain abnormalities during early life development. Permanent magnet scanners operating in the neonatal intensive care unit (NICU) facilitate MRI of sick infants, but have long scan times due to lower signal-to-noise ratios (SNR) and limited receive coils. This work accelerates in-NICU MRI with diffusion probabilistic generative models by developing a training pipeline accounting for these challenges. Methods: We establish a novel training dataset of clinical, 1 Tesla neonatal MR images in collaboration with Aspect Imaging and Sha'are Zedek Medical Center. We propose a pipeline to handle the low quantity and SNR of our real-world dataset by (1) modifying existing network architectures to support varying resolutions; (2) training a single model on all data with learned class embedding vectors; (3) applying self-supervised denoising before training; and (4) reconstructing by averaging posterior samples. Retrospective under-sampling experiments, accounting for signal decay, evaluated each item of our proposed methodology. A clinical reader study with practicing pediatric neuroradiologists evaluated our proposed images reconstructed from 1.5x under-sampled data. Results: Combining all data, denoising pre-training, and averaging posterior samples yields quantitative improvements in reconstruction. The generative model decouples the learned prior from the measurement model and functions at two acceleration rates without re-training. The reader study suggests that proposed images reconstructed from approximately 1.5x under-sampled data are adequate for clinical use. Conclusion: Diffusion probabilistic generative models applied with the proposed pipeline to handle challenging real-world datasets could reduce scan time of in-NICU neonatal MRI.
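
Step (4) of the pipeline in this abstract, reconstructing by averaging posterior samples, can be illustrated with a minimal sketch. The function name `average_posterior_samples` and the toy data are hypothetical and not taken from the paper; each "sample" stands in for one reconstruction drawn from a diffusion posterior sampler. Averaging several samples approximates the posterior mean and smooths out sample-to-sample variation.

```python
from statistics import fmean


def average_posterior_samples(samples):
    """Pixel-wise mean of equally shaped 2D images (lists of rows)."""
    if not samples:
        raise ValueError("need at least one sample")
    rows, cols = len(samples[0]), len(samples[0][0])
    for s in samples:
        if len(s) != rows or any(len(r) != cols for r in s):
            raise ValueError("all samples must share one shape")
    return [
        [fmean(s[i][j] for s in samples) for j in range(cols)]
        for i in range(rows)
    ]


# Toy stand-ins for three posterior samples of a 2x2 image (hypothetical values).
samples = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[2.0, 2.0], [3.0, 6.0]],
    [[3.0, 2.0], [3.0, 5.0]],
]
mean_image = average_posterior_samples(samples)
```

In practice the samples would be complex-valued or magnitude images produced by the sampler; the sketch only shows the averaging step.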

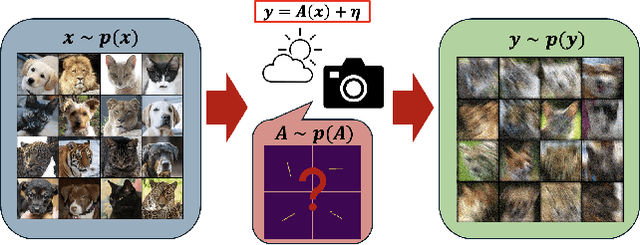

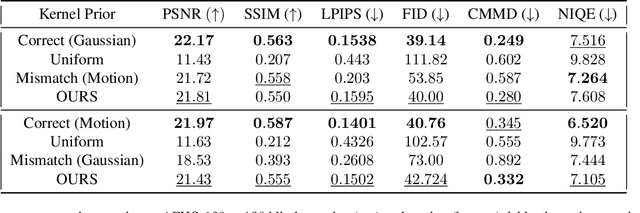

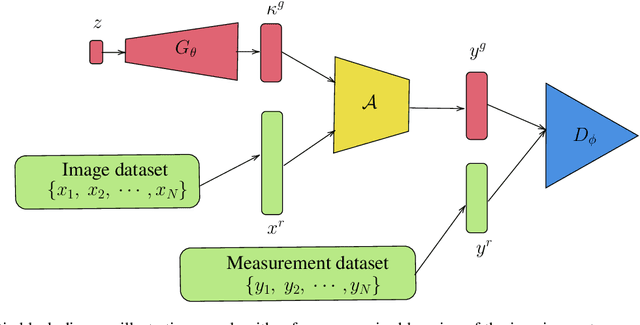

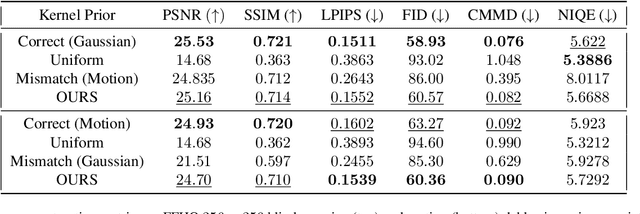

Double Blind Imaging with Generative Modeling

Mar 27, 2025

Abstract:Blind inverse problems in imaging arise from uncertainties in the system used to collect (noisy) measurements of images. Recovering clean images from these measurements typically requires identifying the imaging system, either implicitly or explicitly. A common solution leverages generative models as priors for both the images and the imaging system parameters (e.g., a class of point spread functions). To learn these priors in a straightforward manner requires access to a dataset of clean images as well as samples of the imaging system. We propose an AmbientGAN-based generative technique to identify the distribution of parameters in unknown imaging systems, using only unpaired clean images and corrupted measurements. This learned distribution can then be used in model-based recovery algorithms to solve blind inverse problems such as blind deconvolution. We successfully demonstrate our technique for learning Gaussian blur and motion blur priors from noisy measurements and show their utility in solving blind deconvolution with diffusion posterior sampling.

Accelerated, Robust Lower-Field Neonatal MRI with Generative Models

Oct 28, 2024

Abstract:Neonatal Magnetic Resonance Imaging (MRI) enables non-invasive assessment of potential brain abnormalities during the critical phase of early life development. Recently, interest has developed in lower-field (i.e., below 1.5 Tesla) MRI systems that trade off magnetic field strength for portability and access in the neonatal intensive care unit (NICU). Unfortunately, lower-field neonatal MRI still suffers from long scan times and motion artifacts that can limit its clinical utility for neonates. This work improves motion robustness and accelerates lower-field neonatal MRI through diffusion-based generative modeling and signal-processing-based motion modeling. We first gather a training dataset of clinical neonatal MRI images. Then we train a diffusion-based generative model to learn the statistical distribution of fully-sampled images by applying several signal processing methods to handle the lower signal-to-noise ratio and lower quality of our MRI images. Finally, we present experiments demonstrating the utility of our generative model to improve reconstruction performance across two tasks: accelerated MRI and motion correction.

INFusion: Diffusion Regularized Implicit Neural Representations for 2D and 3D accelerated MRI reconstruction

Jun 19, 2024

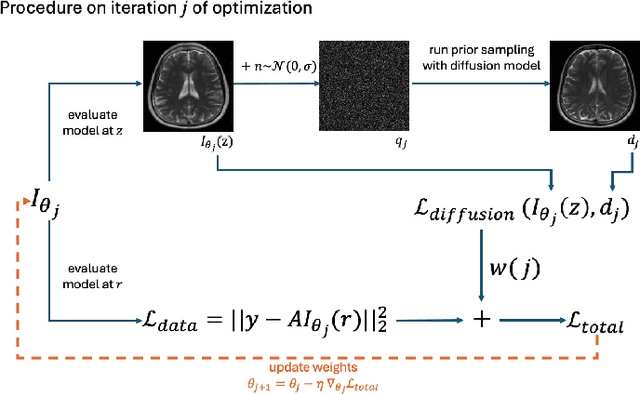

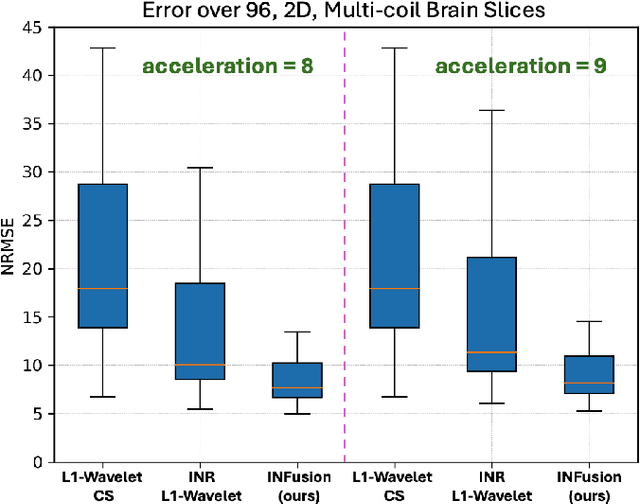

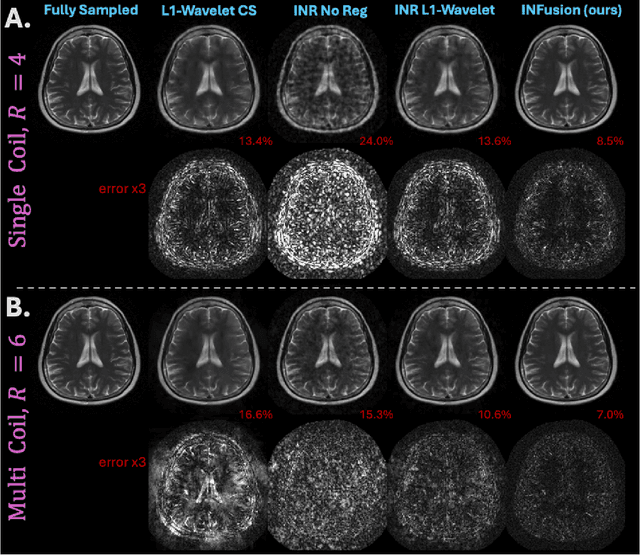

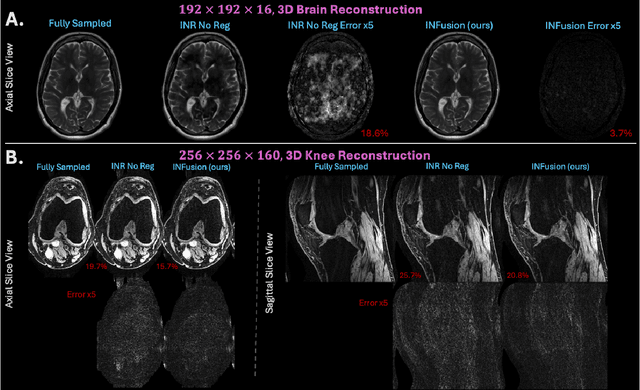

Abstract:Implicit Neural Representations (INRs) are a learning-based approach to accelerate Magnetic Resonance Imaging (MRI) acquisitions, particularly in scan-specific settings when only data from the under-sampled scan itself are available. Previous work demonstrates that INRs improve rapid MRI through inherent regularization imposed by neural network architectures. Typically parameterized by fully-connected neural networks, INRs support continuous image representations by taking a physical coordinate location as input and outputting the intensity at that coordinate. Previous work has applied unlearned regularization priors during INR training and has been limited to 2D or low-resolution 3D acquisitions. Meanwhile, diffusion-based generative models have received recent attention as they learn powerful image priors decoupled from the measurement model. This work proposes INFusion, a technique that regularizes the optimization of INRs from under-sampled MR measurements with pre-trained diffusion models for improved image reconstruction. In addition, we propose a hybrid 3D approach with our diffusion regularization that enables INR application on large-scale 3D MR datasets. 2D experiments demonstrate improved INR training with our proposed diffusion regularization, and 3D experiments demonstrate feasibility of INR training with diffusion regularization on 3D matrix sizes of 256 by 256 by 80.

Ambient Diffusion Posterior Sampling: Solving Inverse Problems with Diffusion Models trained on Corrupted Data

Mar 13, 2024

Abstract:We provide a framework for solving inverse problems with diffusion models learned from linearly corrupted data. Our method, Ambient Diffusion Posterior Sampling (A-DPS), leverages a generative model pre-trained on one type of corruption (e.g. image inpainting) to perform posterior sampling conditioned on measurements from a potentially different forward process (e.g. image blurring). We test the efficacy of our approach on standard natural image datasets (CelebA, FFHQ, and AFHQ) and we show that A-DPS can sometimes outperform models trained on clean data for several image restoration tasks in both speed and performance. We further extend the Ambient Diffusion framework to train MRI models with access only to Fourier subsampled multi-coil MRI measurements at various acceleration factors (R=2, 4, 6, 8). We again observe that models trained on highly subsampled data are better priors for solving inverse problems in the high acceleration regime than models trained on fully sampled data. We open-source our code and the trained Ambient Diffusion MRI models: https://github.com/utcsilab/ambient-diffusion-mri .

Optimizing Sampling Patterns for Compressed Sensing MRI with Diffusion Generative Models

Jun 05, 2023

Abstract:Diffusion-based generative models have been used as powerful priors for magnetic resonance imaging (MRI) reconstruction. We present a learning method to optimize sub-sampling patterns for compressed sensing multi-coil MRI that leverages pre-trained diffusion generative models. Crucially, during training we use a single-step reconstruction based on the posterior mean estimate given by the diffusion model and the MRI measurement process. Experiments across varying anatomies, acceleration factors, and pattern types show that sampling operators learned with our method lead to competitive, and in the case of 2D patterns, improved reconstructions compared to baseline patterns. Our method requires as few as five training images to learn effective sampling patterns.
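
One common way to obtain a single-step posterior mean estimate from a score-based diffusion model is Tweedie's formula, E[x0 | x_t] = x_t + sigma^2 * score(x_t), for additive Gaussian noise of variance sigma^2. Whether the paper uses exactly this form is an assumption, and the sketch below leaves out the MRI measurement process entirely. It uses a scalar Gaussian prior (hypothetical values) so that the analytic score can stand in for a trained network and the result can be checked against the closed-form posterior mean.

```python
import math


def gaussian_score(x_t, mu, prior_var, noise_var):
    """Score of the noisy marginal N(mu, prior_var + noise_var) at x_t."""
    return -(x_t - mu) / (prior_var + noise_var)


def tweedie_denoise(x_t, score, noise_var):
    """One-step posterior mean estimate: x_t + noise_var * score."""
    return x_t + noise_var * score


# Hypothetical toy setting: prior x0 ~ N(1, 4), noise variance 1, observed x_t = 3.
mu, prior_var, noise_var = 1.0, 4.0, 1.0
x_t = 3.0

x0_hat = tweedie_denoise(x_t, gaussian_score(x_t, mu, prior_var, noise_var), noise_var)

# Closed-form posterior mean for the Gaussian case, used as a check.
closed_form = (prior_var * x_t + noise_var * mu) / (prior_var + noise_var)
assert math.isclose(x0_hat, closed_form)
```

Because the estimate is a differentiable function of the noisy input, a one-step reconstruction of this kind can sit inside a training loop without running a full reverse diffusion.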

Conditional Score-Based Reconstructions for Multi-contrast MRI

Mar 26, 2023

Abstract:Magnetic resonance imaging (MRI) exam protocols consist of multiple contrast-weighted images of the same anatomy to emphasize different tissue properties. Due to the long acquisition times required to collect fully sampled k-space measurements, it is common to only collect a fraction of k-space for some, or all, of the scans and subsequently solve an inverse problem for each contrast to recover the desired image from sub-sampled measurements. Recently, there has been a push to further accelerate MRI exams using data-driven priors, and generative models in particular, to regularize the ill-posed inverse problem of image reconstruction. These methods have shown promising improvements over classical methods. However, many of the approaches neglect the multi-contrast nature of clinical MRI exams and treat each scan as an independent reconstruction. In this work we show that by learning a joint Bayesian prior over multi-contrast data with a score-based generative model we are able to leverage the underlying structure between multi-contrast images and thus improve image reconstruction fidelity over generative models that only reconstruct images of a single contrast.

Accelerated Motion Correction for MRI using Score-Based Generative Models

Nov 01, 2022

Abstract:Magnetic Resonance Imaging (MRI) is a powerful medical imaging modality, but unfortunately suffers from long scan times which, aside from increasing operational costs, can lead to image artifacts due to patient motion. Motion during the acquisition leads to inconsistencies in measured data that manifest as blurring and ghosting if unaccounted for in the image reconstruction process. Various deep learning based reconstruction techniques have been proposed which decrease scan time by reducing the number of measurements needed for a high fidelity reconstructed image. Additionally, deep learning has been used to correct motion using end-to-end techniques. This, however, increases susceptibility to distribution shifts at test time (sampling pattern, motion level). In this work we propose a framework for jointly reconstructing highly sub-sampled MRI data while estimating patient motion using score-based generative models. Our method does not make specific assumptions on the sampling trajectory or motion pattern at training time and thus can be flexibly applied to various types of measurement models and patient motion. We demonstrate our framework on retrospectively accelerated 2D brain MRI corrupted by rigid motion.

FSE Compensated Motion Correction for MRI Using Data Driven Methods

Jul 01, 2022

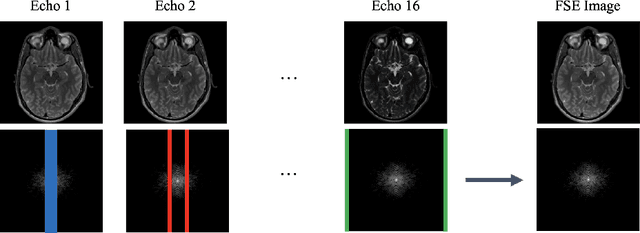

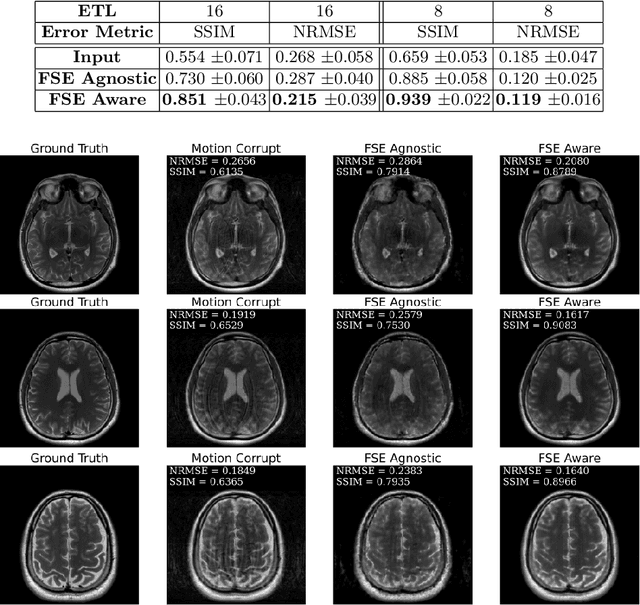

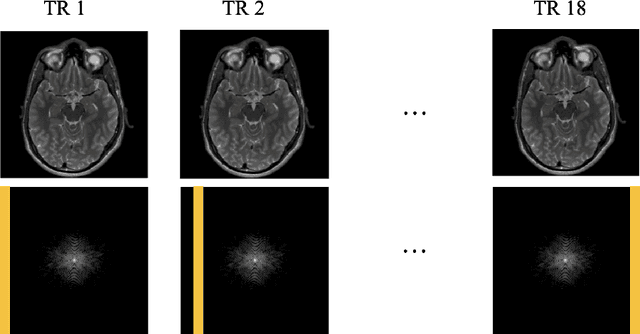

Abstract:Magnetic Resonance Imaging (MRI) is a widely used medical imaging modality boasting great soft tissue contrast without ionizing radiation, but unfortunately suffers from long acquisition times. Long scan times can lead to motion artifacts, for example due to bulk patient motion such as head movement and periodic motion produced by the heart or lungs. Motion artifacts can degrade image quality and in some cases render the scans nondiagnostic. To combat this problem, prospective and retrospective motion correction techniques have been introduced. More recently, data-driven methods using deep neural networks have been proposed. As a large number of publicly available MRI datasets are based on Fast Spin Echo (FSE) sequences, methods that use them for training should incorporate the correct FSE acquisition dynamics. Unfortunately, when simulating training data, many approaches fail to generate accurate motion-corrupted images because they neglect the temporal ordering of the k-space lines as well as the signal decay throughout the FSE echo train. In this work, we highlight this consequence and demonstrate a training method which simulates the data acquisition process of FSE sequences with higher fidelity by including sample ordering and signal decay dynamics. Through numerical experiments, we show that accounting for the FSE acquisition leads to better motion correction performance during inference.
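
The two effects named in this abstract, the temporal ordering of k-space lines and the signal decay along the echo train, can be sketched as a per-line acquisition schedule. Everything specific here is an assumption for illustration: the function name `fse_line_schedule`, the interleaved ordering rule, the mono-exponential T2 decay model, and the parameter values are hypothetical and not taken from the paper.

```python
import math


def fse_line_schedule(num_lines, etl, echo_spacing_ms, t2_ms):
    """Map each phase-encode line to (shot, echo, decay weight).

    Assumes a simple interleaved ordering (hypothetical): echo e of
    shot s acquires line e * num_shots + s. The weight models
    mono-exponential T2 decay at the echo time of that line.
    """
    if num_lines % etl != 0:
        raise ValueError("num_lines must be a multiple of etl")
    num_shots = num_lines // etl
    schedule = {}
    for shot in range(num_shots):
        for echo in range(etl):
            line = echo * num_shots + shot
            echo_time_ms = (echo + 1) * echo_spacing_ms
            schedule[line] = (shot, echo, math.exp(-echo_time_ms / t2_ms))
    return schedule


# Hypothetical toy protocol: 8 lines, echo train length 4, 10 ms spacing, T2 = 100 ms.
schedule = fse_line_schedule(num_lines=8, etl=4, echo_spacing_ms=10.0, t2_ms=100.0)
```

In a simulation along these lines, each k-space line would be scaled by its decay weight, and lines sharing a shot index would share one motion state, since they are acquired within the same echo train.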
