Bassem Ouni

PatchBlock: A Lightweight Defense Against Adversarial Patches for Embedded EdgeAI Devices

Jan 01, 2026Abstract:Adversarial attacks pose a significant challenge to the reliable deployment of machine learning models in EdgeAI applications, such as autonomous driving and surveillance, which rely on resource-constrained devices for real-time inference. Among these, patch-based adversarial attacks, where small malicious patches (e.g., stickers) are applied to objects, can deceive neural networks into making incorrect predictions with potentially severe consequences. In this paper, we present PatchBlock, a lightweight framework designed to detect and neutralize adversarial patches in images. Leveraging outlier detection and dimensionality reduction, PatchBlock identifies regions affected by adversarial noise and suppresses their impact. It operates as a pre-processing module at the sensor level, efficiently running on CPUs in parallel with GPU inference, thus preserving system throughput while avoiding additional GPU overhead. The framework follows a three-stage pipeline: splitting the input into chunks (Chunking), detecting anomalous regions via a redesigned isolation forest with targeted cuts for faster convergence (Separating), and applying dimensionality reduction on the identified outliers (Mitigating). PatchBlock is both model- and patch-agnostic, can be retrofitted to existing pipelines, and integrates seamlessly between sensor inputs and downstream models. Evaluations across multiple neural architectures, benchmark datasets, attack types, and diverse edge devices demonstrate that PatchBlock consistently improves robustness, recovering up to 77% of model accuracy under strong patch attacks such as the Google Adversarial Patch, while maintaining high portability and minimal clean accuracy loss. Additionally, PatchBlock outperforms the state-of-the-art defenses in efficiency, in terms of computation time and energy consumption per sample, making it suitable for EdgeAI applications.

TESSER: Transfer-Enhancing Adversarial Attacks from Vision Transformers via Spectral and Semantic Regularization

May 26, 2025

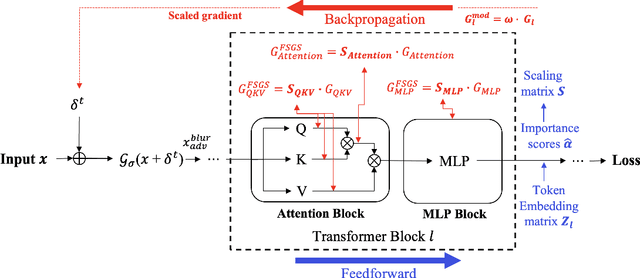

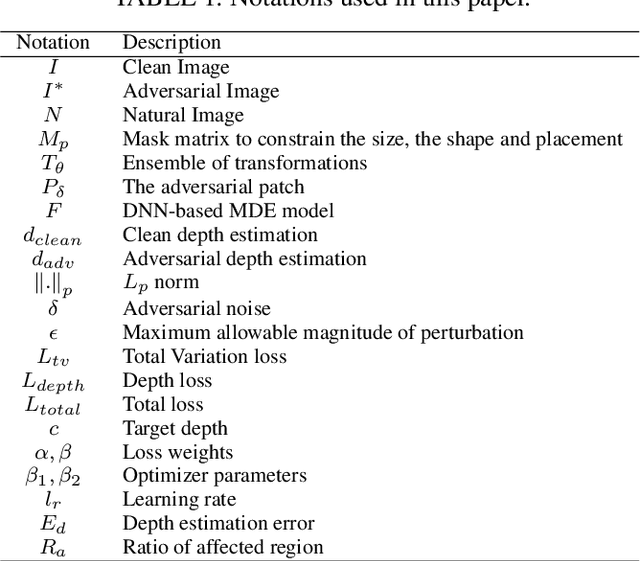

Abstract:Adversarial transferability remains a critical challenge in evaluating the robustness of deep neural networks. In security-critical applications, transferability enables black-box attacks without access to model internals, making it a key concern for real-world adversarial threat assessment. While Vision Transformers (ViTs) have demonstrated strong adversarial performance, existing attacks often fail to transfer effectively across architectures, especially from ViTs to Convolutional Neural Networks (CNNs) or hybrid models. In this paper, we introduce \textbf{TESSER} -- a novel adversarial attack framework that enhances transferability via two key strategies: (1) \textit{Feature-Sensitive Gradient Scaling (FSGS)}, which modulates gradients based on token-wise importance derived from intermediate feature activations, and (2) \textit{Spectral Smoothness Regularization (SSR)}, which suppresses high-frequency noise in perturbations using a differentiable Gaussian prior. These components work in tandem to generate perturbations that are both semantically meaningful and spectrally smooth. Extensive experiments on ImageNet across 12 diverse architectures demonstrate that TESSER achieves +10.9\% higher attack succes rate (ASR) on CNNs and +7.2\% on ViTs compared to the state-of-the-art Adaptive Token Tuning (ATT) method. Moreover, TESSER significantly improves robustness against defended models, achieving 53.55\% ASR on adversarially trained CNNs. Qualitative analysis shows strong alignment between TESSER's perturbations and salient visual regions identified via Grad-CAM, while frequency-domain analysis reveals a 12\% reduction in high-frequency energy, confirming the effectiveness of spectral regularization.

Breaking the Limits of Quantization-Aware Defenses: QADT-R for Robustness Against Patch-Based Adversarial Attacks in QNNs

Mar 10, 2025

Abstract:Quantized Neural Networks (QNNs) have emerged as a promising solution for reducing model size and computational costs, making them well-suited for deployment in edge and resource-constrained environments. While quantization is known to disrupt gradient propagation and enhance robustness against pixel-level adversarial attacks, its effectiveness against patch-based adversarial attacks remains largely unexplored. In this work, we demonstrate that adversarial patches remain highly transferable across quantized models, achieving over 70\% attack success rates (ASR) even at extreme bit-width reductions (e.g., 2-bit). This challenges the common assumption that quantization inherently mitigates adversarial threats. To address this, we propose Quantization-Aware Defense Training with Randomization (QADT-R), a novel defense strategy that integrates Adaptive Quantization-Aware Patch Generation (A-QAPA), Dynamic Bit-Width Training (DBWT), and Gradient-Inconsistent Regularization (GIR) to enhance resilience against highly transferable patch-based attacks. A-QAPA generates adversarial patches within quantized models, ensuring robustness across different bit-widths. DBWT introduces bit-width cycling during training to prevent overfitting to a specific quantization setting, while GIR injects controlled gradient perturbations to disrupt adversarial optimization. Extensive evaluations on CIFAR-10 and ImageNet show that QADT-R reduces ASR by up to 25\% compared to prior defenses such as PBAT and DWQ. Our findings further reveal that PBAT-trained models, while effective against seen patch configurations, fail to generalize to unseen patches due to quantization shift. Additionally, our empirical analysis of gradient alignment, spatial sensitivity, and patch visibility provides insights into the mechanisms that contribute to the high transferability of patch-based attacks in QNNs.

Enhancing Mutual Trustworthiness in Federated Learning for Data-Rich Smart Cities

May 01, 2024

Abstract:Federated learning is a promising collaborative and privacy-preserving machine learning approach in data-rich smart cities. Nevertheless, the inherent heterogeneity of these urban environments presents a significant challenge in selecting trustworthy clients for collaborative model training. The usage of traditional approaches, such as the random client selection technique, poses several threats to the system's integrity due to the possibility of malicious client selection. Primarily, the existing literature focuses on assessing the trustworthiness of clients, neglecting the crucial aspect of trust in federated servers. To bridge this gap, in this work, we propose a novel framework that addresses the mutual trustworthiness in federated learning by considering the trust needs of both the client and the server. Our approach entails: (1) Creating preference functions for servers and clients, allowing them to rank each other based on trust scores, (2) Establishing a reputation-based recommendation system leveraging multiple clients to assess newly connected servers, (3) Assigning credibility scores to recommending devices for better server trustworthiness measurement, (4) Developing a trust assessment mechanism for smart devices using a statistical Interquartile Range (IQR) method, (5) Designing intelligent matching algorithms considering the preferences of both parties. Based on simulation and experimental results, our approach outperforms baseline methods by increasing trust levels, global model accuracy, and reducing non-trustworthy clients in the system.

SSAP: A Shape-Sensitive Adversarial Patch for Comprehensive Disruption of Monocular Depth Estimation in Autonomous Navigation Applications

Mar 18, 2024

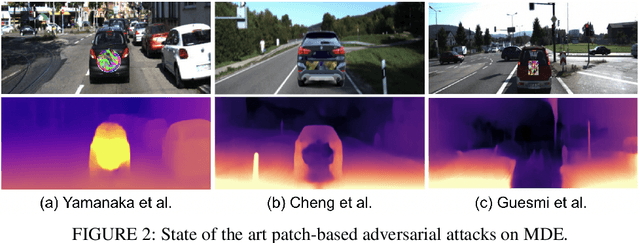

Abstract:Monocular depth estimation (MDE) has advanced significantly, primarily through the integration of convolutional neural networks (CNNs) and more recently, Transformers. However, concerns about their susceptibility to adversarial attacks have emerged, especially in safety-critical domains like autonomous driving and robotic navigation. Existing approaches for assessing CNN-based depth prediction methods have fallen short in inducing comprehensive disruptions to the vision system, often limited to specific local areas. In this paper, we introduce SSAP (Shape-Sensitive Adversarial Patch), a novel approach designed to comprehensively disrupt monocular depth estimation (MDE) in autonomous navigation applications. Our patch is crafted to selectively undermine MDE in two distinct ways: by distorting estimated distances or by creating the illusion of an object disappearing from the system's perspective. Notably, our patch is shape-sensitive, meaning it considers the specific shape and scale of the target object, thereby extending its influence beyond immediate proximity. Furthermore, our patch is trained to effectively address different scales and distances from the camera. Experimental results demonstrate that our approach induces a mean depth estimation error surpassing 0.5, impacting up to 99% of the targeted region for CNN-based MDE models. Additionally, we investigate the vulnerability of Transformer-based MDE models to patch-based attacks, revealing that SSAP yields a significant error of 0.59 and exerts substantial influence over 99% of the target region on these models.

ODDR: Outlier Detection & Dimension Reduction Based Defense Against Adversarial Patches

Nov 20, 2023

Abstract:Adversarial attacks are a major deterrent towards the reliable use of machine learning models. A powerful type of adversarial attacks is the patch-based attack, wherein the adversarial perturbations modify localized patches or specific areas within the images to deceive the trained machine learning model. In this paper, we introduce Outlier Detection and Dimension Reduction (ODDR), a holistic defense mechanism designed to effectively mitigate patch-based adversarial attacks. In our approach, we posit that input features corresponding to adversarial patches, whether naturalistic or otherwise, deviate from the inherent distribution of the remaining image sample and can be identified as outliers or anomalies. ODDR employs a three-stage pipeline: Fragmentation, Segregation, and Neutralization, providing a model-agnostic solution applicable to both image classification and object detection tasks. The Fragmentation stage parses the samples into chunks for the subsequent Segregation process. Here, outlier detection techniques identify and segregate the anomalous features associated with adversarial perturbations. The Neutralization stage utilizes dimension reduction methods on the outliers to mitigate the impact of adversarial perturbations without sacrificing pertinent information necessary for the machine learning task. Extensive testing on benchmark datasets and state-of-the-art adversarial patches demonstrates the effectiveness of ODDR. Results indicate robust accuracies matching and lying within a small range of clean accuracies (1%-3% for classification and 3%-5% for object detection), with only a marginal compromise of 1%-2% in performance on clean samples, thereby significantly outperforming other defenses.

Enhancing IoT Security via Automatic Network Traffic Analysis: The Transition from Machine Learning to Deep Learning

Nov 20, 2023

Abstract:This work provides a comparative analysis illustrating how Deep Learning (DL) surpasses Machine Learning (ML) in addressing tasks within Internet of Things (IoT), such as attack classification and device-type identification. Our approach involves training and evaluating a DL model using a range of diverse IoT-related datasets, allowing us to gain valuable insights into how adaptable and practical these models can be when confronted with various IoT configurations. We initially convert the unstructured network traffic data from IoT networks, stored in PCAP files, into images by processing the packet data. This conversion process adapts the data to meet the criteria of DL classification methods. The experiments showcase the ability of DL to surpass the constraints tied to manually engineered features, achieving superior results in attack detection and maintaining comparable outcomes in device-type identification. Additionally, a notable feature extraction time difference becomes evident in the experiments: traditional methods require around 29 milliseconds per data packet, while DL accomplishes the same task in just 2.9 milliseconds. The significant time gap, DL's superior performance, and the recognized limitations of manually engineered features, presents a compelling call to action within the IoT community. This encourages us to shift from exploring new IoT features for each dataset to addressing the challenges of integrating DL into IoT, making it a more efficient solution for real-world IoT scenarios.

Physical Adversarial Attacks For Camera-based Smart Systems: Current Trends, Categorization, Applications, Research Challenges, and Future Outlook

Aug 11, 2023

Abstract:In this paper, we present a comprehensive survey of the current trends focusing specifically on physical adversarial attacks. We aim to provide a thorough understanding of the concept of physical adversarial attacks, analyzing their key characteristics and distinguishing features. Furthermore, we explore the specific requirements and challenges associated with executing attacks in the physical world. Our article delves into various physical adversarial attack methods, categorized according to their target tasks in different applications, including classification, detection, face recognition, semantic segmentation and depth estimation. We assess the performance of these attack methods in terms of their effectiveness, stealthiness, and robustness. We examine how each technique strives to ensure the successful manipulation of DNNs while mitigating the risk of detection and withstanding real-world distortions. Lastly, we discuss the current challenges and outline potential future research directions in the field of physical adversarial attacks. We highlight the need for enhanced defense mechanisms, the exploration of novel attack strategies, the evaluation of attacks in different application domains, and the establishment of standardized benchmarks and evaluation criteria for physical adversarial attacks. Through this comprehensive survey, we aim to provide a valuable resource for researchers, practitioners, and policymakers to gain a holistic understanding of physical adversarial attacks in computer vision and facilitate the development of robust and secure DNN-based systems.

SAAM: Stealthy Adversarial Attack on Monoculor Depth Estimation

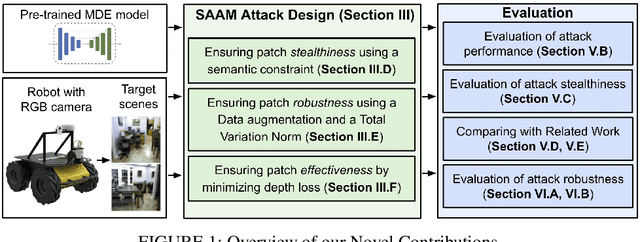

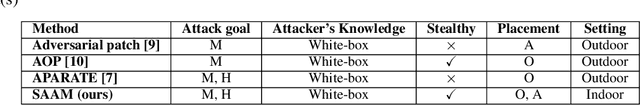

Aug 06, 2023

Abstract:In this paper, we investigate the vulnerability of MDE to adversarial patches. We propose a novel \underline{S}tealthy \underline{A}dversarial \underline{A}ttacks on \underline{M}DE (SAAM) that compromises MDE by either corrupting the estimated distance or causing an object to seamlessly blend into its surroundings. Our experiments, demonstrate that the designed stealthy patch successfully causes a DNN-based MDE to misestimate the depth of objects. In fact, our proposed adversarial patch achieves a significant 60\% depth error with 99\% ratio of the affected region. Importantly, despite its adversarial nature, the patch maintains a naturalistic appearance, making it inconspicuous to human observers. We believe that this work sheds light on the threat of adversarial attacks in the context of MDE on edge devices. We hope it raises awareness within the community about the potential real-life harm of such attacks and encourages further research into developing more robust and adaptive defense mechanisms.

Harris Hawks Feature Selection in Distributed Machine Learning for Secure IoT Environments

Feb 20, 2023

Abstract:The development of the Internet of Things (IoT) has dramatically expanded our daily lives, playing a pivotal role in the enablement of smart cities, healthcare, and buildings. Emerging technologies, such as IoT, seek to improve the quality of service in cognitive cities. Although IoT applications are helpful in smart building applications, they present a real risk as the large number of interconnected devices in those buildings, using heterogeneous networks, increases the number of potential IoT attacks. IoT applications can collect and transfer sensitive data. Therefore, it is necessary to develop new methods to detect hacked IoT devices. This paper proposes a Feature Selection (FS) model based on Harris Hawks Optimization (HHO) and Random Weight Network (RWN) to detect IoT botnet attacks launched from compromised IoT devices. Distributed Machine Learning (DML) aims to train models locally on edge devices without sharing data to a central server. Therefore, we apply the proposed approach using centralized and distributed ML models. Both learning models are evaluated under two benchmark datasets for IoT botnet attacks and compared with other well-known classification techniques using different evaluation indicators. The experimental results show an improvement in terms of accuracy, precision, recall, and F-measure in most cases. The proposed method achieves an average F-measure up to 99.9\%. The results show that the DML model achieves competitive performance against centralized ML while maintaining the data locally.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge