Andrew Thompson

Uncertainty propagation through trained multi-layer perceptrons: Exact analytical results

Jan 23, 2026Abstract:We give analytical results for propagation of uncertainty through trained multi-layer perceptrons (MLPs) with a single hidden layer and ReLU activation functions. More precisely, we give expressions for the mean and variance of the output when the input is multivariate Gaussian. In contrast to previous results, we obtain exact expressions without resort to a series expansion.

Uncertainty quantification with approximate variational learning for wearable photoplethysmography prediction tasks

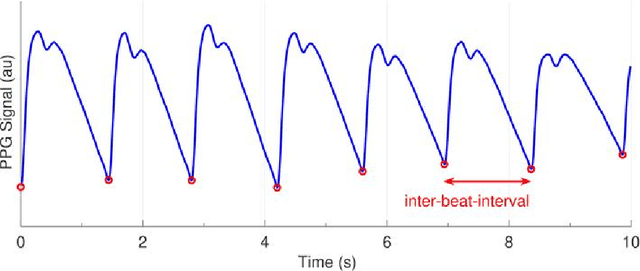

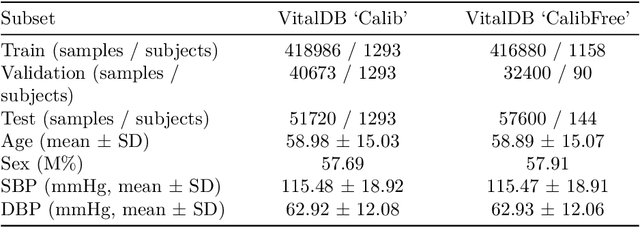

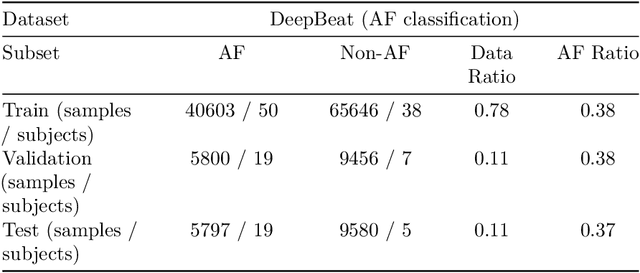

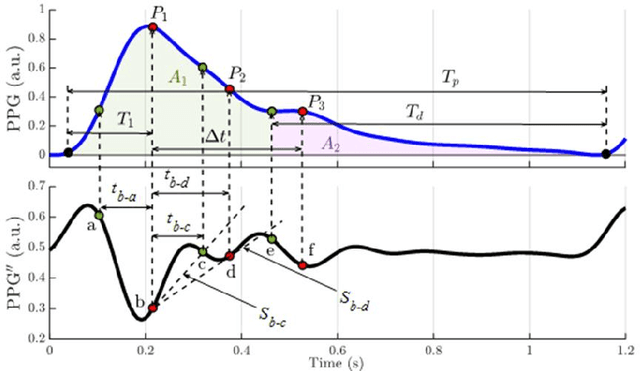

May 16, 2025Abstract:Photoplethysmography (PPG) signals encode information about relative changes in blood volume that can be used to assess various aspects of cardiac health non-invasively, e.g.\ to detect atrial fibrillation (AF) or predict blood pressure (BP). Deep networks are well-equipped to handle the large quantities of data acquired from wearable measurement devices. However, they lack interpretability and are prone to overfitting, leaving considerable risk for poor performance on unseen data and misdiagnosis. Here, we describe the use of two scalable uncertainty quantification techniques: Monte Carlo Dropout and the recently proposed Improved Variational Online Newton. These techniques are used to assess the trustworthiness of models trained to perform AF classification and BP regression from raw PPG time series. We find that the choice of hyperparameters has a considerable effect on the predictive performance of the models and on the quality and composition of predicted uncertainties. E.g. the stochasticity of the model parameter sampling determines the proportion of the total uncertainty that is aleatoric, and has varying effects on predictive performance and calibration quality dependent on the chosen uncertainty quantification technique and the chosen expression of uncertainty. We find significant discrepancy in the quality of uncertainties over the predicted classes, emphasising the need for a thorough evaluation protocol that assesses local and adaptive calibration. This work suggests that the choice of hyperparameters must be carefully tuned to balance predictive performance and calibration quality, and that the optimal parameterisation may vary depending on the chosen expression of uncertainty.

A metrological framework for uncertainty evaluation in machine learning classification models

Apr 04, 2025Abstract:Machine learning (ML) classification models are increasingly being used in a wide range of applications where it is important that predictions are accompanied by uncertainties, including in climate and earth observation, medical diagnosis and bioaerosol monitoring. The output of an ML classification model is a type of categorical variable known as a nominal property in the International Vocabulary of Metrology (VIM). However, concepts related to uncertainty evaluation for nominal properties are not defined in the VIM, nor is such evaluation addressed by the Guide to the Expression of Uncertainty in Measurement (GUM). In this paper we propose a metrological conceptual uncertainty evaluation framework for ML classification, and illustrate its use in the context of two applications that exemplify the issues and have significant societal impact, namely, climate and earth observation and medical diagnosis. Our framework would enable an extension of the VIM and GUM to uncertainty for nominal properties, which would make both applicable to ML classification models.

Machine-learning for photoplethysmography analysis: Benchmarking feature, image, and signal-based approaches

Feb 27, 2025

Abstract:Photoplethysmography (PPG) is a widely used non-invasive physiological sensing technique, suitable for various clinical applications. Such clinical applications are increasingly supported by machine learning methods, raising the question of the most appropriate input representation and model choice. Comprehensive comparisons, in particular across different input representations, are scarce. We address this gap in the research landscape by a comprehensive benchmarking study covering three kinds of input representations, interpretable features, image representations and raw waveforms, across prototypical regression and classification use cases: blood pressure and atrial fibrillation prediction. In both cases, the best results are achieved by deep neural networks operating on raw time series as input representations. Within this model class, best results are achieved by modern convolutional neural networks (CNNs). but depending on the task setup, shallow CNNs are often also very competitive. We envision that these results will be insightful for researchers to guide their choice on machine learning tasks for PPG data, even beyond the use cases presented in this work.

AAD-LLM: Adaptive Anomaly Detection Using Large Language Models

Nov 01, 2024

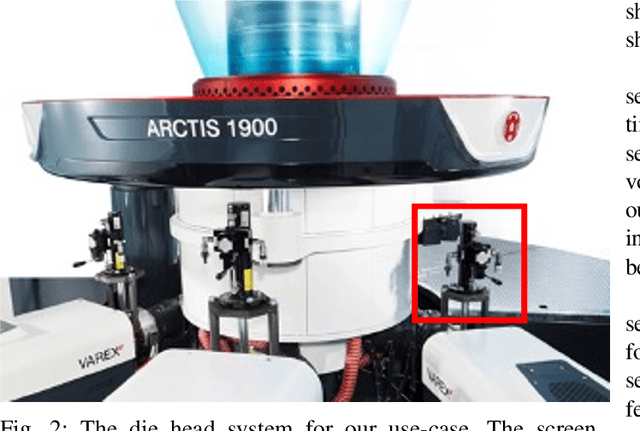

Abstract:For data-constrained, complex and dynamic industrial environments, there is a critical need for transferable and multimodal methodologies to enhance anomaly detection and therefore, prevent costs associated with system failures. Typically, traditional PdM approaches are not transferable or multimodal. This work examines the use of Large Language Models (LLMs) for anomaly detection in complex and dynamic manufacturing systems. The research aims to improve the transferability of anomaly detection models by leveraging Large Language Models (LLMs) and seeks to validate the enhanced effectiveness of the proposed approach in data-sparse industrial applications. The research also seeks to enable more collaborative decision-making between the model and plant operators by allowing for the enriching of input series data with semantics. Additionally, the research aims to address the issue of concept drift in dynamic industrial settings by integrating an adaptability mechanism. The literature review examines the latest developments in LLM time series tasks alongside associated adaptive anomaly detection methods to establish a robust theoretical framework for the proposed architecture. This paper presents a novel model framework (AAD-LLM) that doesn't require any training or finetuning on the dataset it is applied to and is multimodal. Results suggest that anomaly detection can be converted into a "language" task to deliver effective, context-aware detection in data-constrained industrial applications. This work, therefore, contributes significantly to advancements in anomaly detection methodologies.

Trustworthy Artificial Intelligence in the Context of Metrology

Jun 14, 2024Abstract:We review research at the National Physical Laboratory (NPL) in the area of trustworthy artificial intelligence (TAI), and more specifically trustworthy machine learning (TML), in the context of metrology, the science of measurement. We describe three broad themes of TAI: technical, socio-technical and social, which play key roles in ensuring that the developed models are trustworthy and can be relied upon to make responsible decisions. From a metrology perspective we emphasise uncertainty quantification (UQ), and its importance within the framework of TAI to enhance transparency and trust in the outputs of AI systems. We then discuss three research areas within TAI that we are working on at NPL, and examine the certification of AI systems in terms of adherence to the characteristics of TAI.

Analytical results for uncertainty propagation through trained machine learning regression models

Apr 17, 2024Abstract:Machine learning (ML) models are increasingly being used in metrology applications. However, for ML models to be credible in a metrology context they should be accompanied by principled uncertainty quantification. This paper addresses the challenge of uncertainty propagation through trained/fixed machine learning (ML) regression models. Analytical expressions for the mean and variance of the model output are obtained/presented for certain input data distributions and for a variety of ML models. Our results cover several popular ML models including linear regression, penalised linear regression, kernel ridge regression, Gaussian Processes (GPs), support vector machines (SVMs) and relevance vector machines (RVMs). We present numerical experiments in which we validate our methods and compare them with a Monte Carlo approach from a computational efficiency point of view. We also illustrate our methods in the context of a metrology application, namely modelling the state-of-health of lithium-ion cells based upon Electrical Impedance Spectroscopy (EIS) data

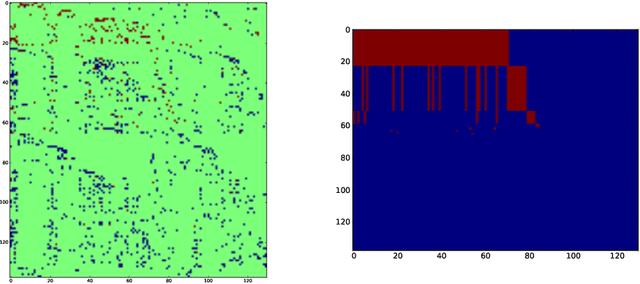

A divide-and-conquer algorithm for binary matrix completion

Jul 09, 2019

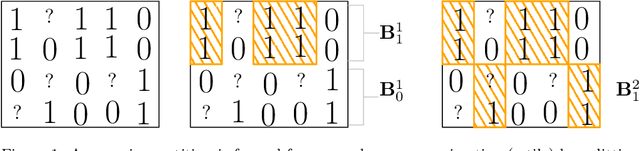

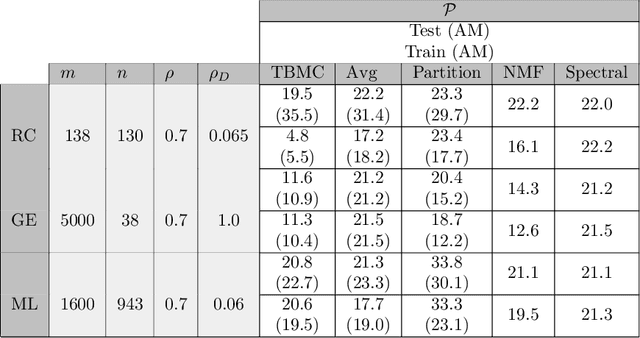

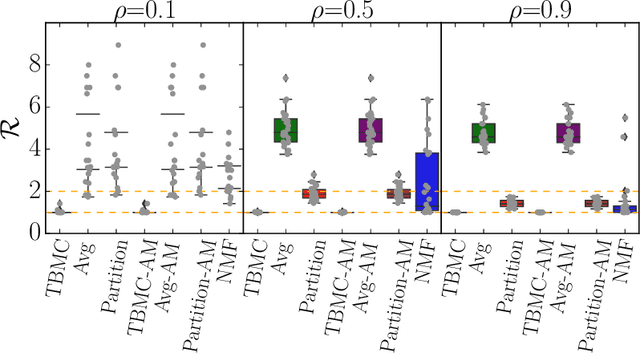

Abstract:We propose an algorithm for low rank matrix completion for matrices with binary entries which obtains explicit binary factors. Our algorithm, which we call TBMC (\emph{Tiling for Binary Matrix Completion}), gives interpretable output in the form of binary factors which represent a decomposition of the matrix into tiles. Our approach is inspired by a popular algorithm from the data mining community called PROXIMUS: it adopts the same recursive partitioning approach while extending to missing data. The algorithm relies upon rank-one approximations of incomplete binary matrices, and we propose a linear programming (LP) approach for solving this subproblem. We also prove a $2$-approximation result for the LP approach which holds for any level of subsampling and for any subsampling pattern. Our numerical experiments show that TBMC outperforms existing methods on recommender systems arising in the context of real datasets.

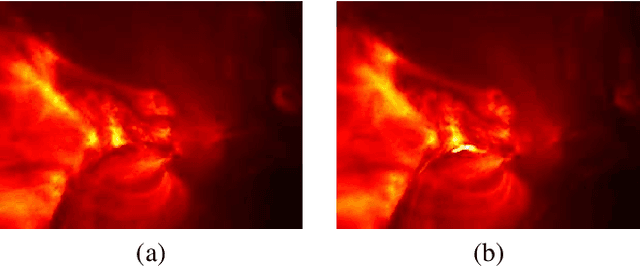

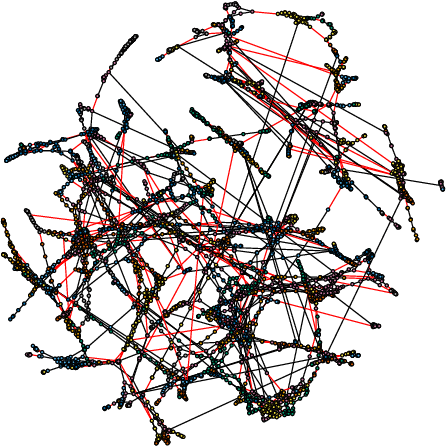

Sketching for Sequential Change-Point Detection

Apr 30, 2018

Abstract:We study sequential change-point detection procedures based on linear sketches of high-dimensional signal vectors using generalized likelihood ratio (GLR) statistics. The GLR statistics allow for an unknown post-change mean that represents an anomaly or novelty. We consider both fixed and time-varying projections, derive theoretical approximations to two fundamental performance metrics: the average run length (ARL) and the expected detection delay (EDD); these approximations are shown to be highly accurate by numerical simulations. We further characterize the relative performance measure of the sketching procedure compared to that without sketching and show that there can be little performance loss when the signal strength is sufficiently large, and enough number of sketches are used. Finally, we demonstrate the good performance of sketching procedures using simulation and real-data examples on solar flare detection and failure detection in power networks.

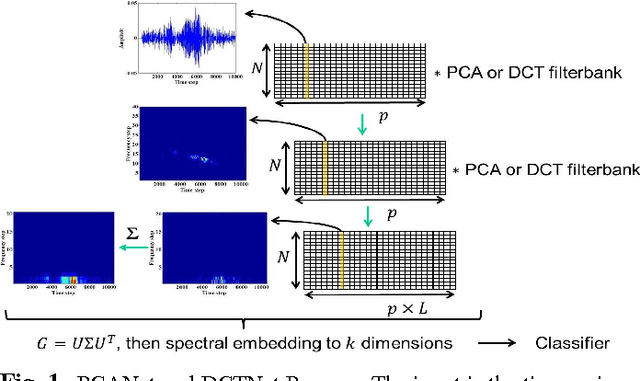

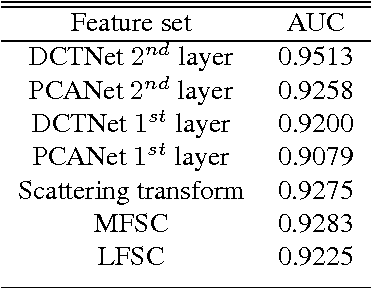

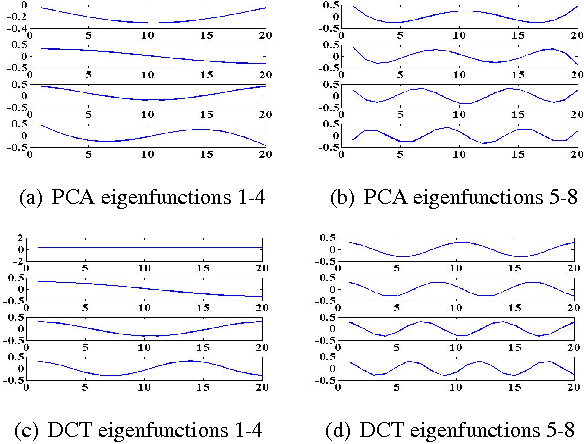

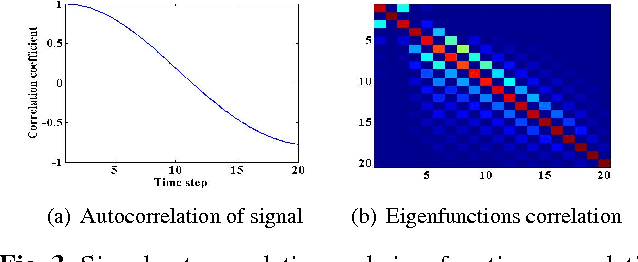

DCTNet and PCANet for acoustic signal feature extraction

Apr 28, 2016

Abstract:We introduce the use of DCTNet, an efficient approximation and alternative to PCANet, for acoustic signal classification. In PCANet, the eigenfunctions of the local sample covariance matrix (PCA) are used as filterbanks for convolution and feature extraction. When the eigenfunctions are well approximated by the Discrete Cosine Transform (DCT) functions, each layer of of PCANet and DCTNet is essentially a time-frequency representation. We relate DCTNet to spectral feature representation methods, such as the the short time Fourier transform (STFT), spectrogram and linear frequency spectral coefficients (LFSC). Experimental results on whale vocalization data show that DCTNet improves classification rate, demonstrating DCTNet's applicability to signal processing problems such as underwater acoustics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge